The all-rounder of time series analysis! Tsinghua University proposes TimesNet: leading in forecasting, imputation, classification, and anomaly detection

Achieving task generality is a core problem in research on deep foundation models, and it is also one of the main focuses of recent work on large models.

However, in the time series field, analysis tasks differ greatly: forecasting requires fine-grained modeling, while classification requires extracting high-level semantic information. Until now there has been no established way to build a unified deep foundation model that handles all of these time series analysis tasks efficiently.

To this end, a team from the School of Software at Tsinghua University studied the fundamental problem of temporal variation modeling and proposed TimesNet, a task-general foundation model for time series analysis. The paper was accepted at ICLR 2023.

Authors: Wu Haixu*, Hu Tengge*, Liu Yong*, Zhou Hang, Wang Jianmin, Long Mingsheng

Link: https://openreview.net/pdf?id=ju_Uqw384Oq

Code: https://github.com/thuml/TimesNet

Time series algorithm library: https://github.com/thuml/Time-Series-Library

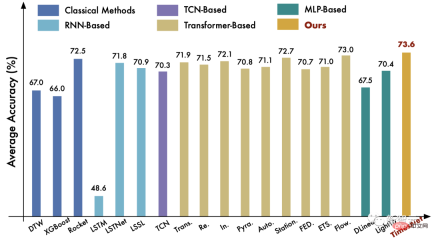

TimesNet achieves leading results across five major tasks: long-term forecasting, short-term forecasting, imputation (missing value filling), anomaly detection, and classification.

Unlike sequence data such as natural language and video, a single time point in a time series holds only a few scalars, so the key information lies mainly in the temporal variation.

Therefore, modeling temporal variation is the core problem shared by all time series analysis tasks.

In recent years, deep models such as recurrent neural networks (RNNs), temporal convolutional networks (TCNs), and Transformers have been widely applied to time series analysis.

However, the first two mainly capture variation between nearby time points and are weak at modeling long-term dependencies.

Although Transformers have a natural advantage in modeling long-term dependencies, temporal variations in the real world are extremely complex, and it is difficult to uncover reliable temporal dependencies from attention between scattered time points alone.

To this end, the paper analyzes temporal variation from a new multi-periodicity perspective, as shown in the figure. We observe that:

- Time series are naturally multi-periodic.

Real-world time series are often a superposition of several periodic processes. Traffic data, for example, varies on a daily cycle in the short term and on a weekly cycle in the long term. These periods overlap and interfere with one another, which makes time series analysis very challenging.

- Time series exhibit two kinds of temporal variation: intra-period and inter-period.

Specifically, within the process of a given period, the variation at each time point is related not only to adjacent time points but also, strongly, to similar processes in adjacent periods. Intra-period variation corresponds to short-term processes, while inter-period variation reflects long-term trends across consecutive periods. Note: if a time series has no obvious periodicity, this is equivalent to the period being infinitely long.
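The multi-periodicity observation can be illustrated with a small sketch. The hourly "traffic" series below is synthetic and purely hypothetical (the amplitudes, noise level, and 8-week length are assumptions chosen for illustration); it superimposes a daily and a weekly cycle and then looks for the strongest components in the amplitude spectrum.

```python
import numpy as np

# Hypothetical hourly "traffic" series covering 8 weeks: a daily cycle (24 h)
# superimposed on a weekly cycle (168 h), plus a little noise.
rng = np.random.default_rng(0)
hours = np.arange(24 * 7 * 8)                       # T = 1344 time points
daily = 1.0 * np.sin(2 * np.pi * hours / 24)
weekly = 0.6 * np.sin(2 * np.pi * hours / 168)
series = daily + weekly + 0.1 * rng.standard_normal(hours.size)

# Amplitude spectrum of the real FFT; index f means "f cycles over the series".
amplitude = np.abs(np.fft.rfft(series))
amplitude[0] = 0.0                                  # ignore the constant term

# The two strongest frequencies and the period lengths they imply.
top_freqs = np.argsort(amplitude)[-2:][::-1]
top_periods = hours.size // top_freqs
print(top_freqs, top_periods)
```

In this toy setting the two dominant components should sit at 56 and 8 cycles over the series, that is, at periods of 24 and 168 hours, which is the kind of overlapping structure the observation describes.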

2 Design Ideas

Based on the above two observations, we designed the structure of TimesNet as follows:

- The multi-periodicity of time series naturally suggests a modular design, in which each module captures the temporal variation dominated by one specific period. This modular design decouples complex temporal variations, which benefits subsequent modeling.

- For the intra-period and inter-period variations of a time series, the paper proposes extending one-dimensional time series data into two-dimensional space for analysis. As shown in the figure above, folding a one-dimensional time series according to multiple periods yields multiple two-dimensional tensors (2D tensors). The columns and rows of each 2D tensor reflect the intra-period and inter-period variations respectively, which gives the temporal 2D-variations.

Therefore, after folding the time series data, we can directly apply advanced vision backbone networks, such as Swin Transformer, ResNeXt, and ConvNeXt, to extract features from it. This design also lets time series analysis benefit directly from the fast-moving computer vision field.

3 TimesNet

Based on the above ideas, we propose the TimesNet model, which decomposes complex temporal variations into different periods through a modular structure and converts the original one-dimensional time series into two-dimensional space, so that intra-period and inter-period variations are modeled in a unified way. In this section, we first introduce how time series data is extended into two-dimensional space, and then introduce the overall architecture of the model.

3.1 Temporal variation: 1D->2D

The folding process is shown in the figure above and consists of the following two steps:

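(1) Period extraction: For a one-dimensional time series $\mathbf{X}_{\text{1D}} \in \mathbb{R}^{T \times C}$ with time length $T$ and channel dimension $C$, the period information can be extracted directly with the fast Fourier transform (FFT) along the time dimension, that is:

$$
\mathbf{A} = \operatorname{Avg}\big(\operatorname{Amp}(\operatorname{FFT}(\mathbf{X}_{\text{1D}}))\big), \quad
\{f_1, \dots, f_k\} = \underset{f_* \in \{1, \dots, \lfloor T/2 \rfloor\}}{\operatorname{arg\,Topk}}(\mathbf{A}), \quad
p_i = \left\lceil \frac{T}{f_i} \right\rceil, \; i \in \{1, \dots, k\}
$$

where $\mathbf{A} \in \mathbb{R}^{T}$ represents the intensity of each frequency component, and the $k$ frequencies with the greatest intensity, $\{f_1, \dots, f_k\}$, correspond to the $k$ most significant period lengths $\{p_1, \dots, p_k\}$.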

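(2) Sequence folding 1D->2D: For each of the $k$ selected periods $p_i$ (with corresponding frequency $f_i$), the original one-dimensional time series $\mathbf{X}_{\text{1D}}$ is folded separately. The process can be formalized as:

$$
\mathbf{X}^{i}_{\text{2D}} = \operatorname{Reshape}_{p_i, f_i}\big(\operatorname{Padding}(\mathbf{X}_{\text{1D}})\big), \quad i \in \{1, \dots, k\}
$$

where $\operatorname{Padding}(\cdot)$ appends zeros to the end of the sequence so that its length is divisible by $p_i$.

Through the above operations, we obtain a set of two-dimensional tensors $\{\mathbf{X}^{1}_{\text{2D}}, \dots, \mathbf{X}^{k}_{\text{2D}}\}$, where $\mathbf{X}^{i}_{\text{2D}}$ corresponds to the two-dimensional temporal variations with period $p_i$.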
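The two steps above can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `extract_periods` and `fold_1d_to_2d` are hypothetical, the period is taken as the ceiling of T divided by the frequency, and the folded tensor is laid out so that each column holds one period and each row holds the same phase across periods.

```python
import numpy as np

def extract_periods(x, k=2):
    """Top-k frequencies and period lengths of x with shape [T, C].

    Hypothetical helper: amplitudes are averaged over channels, and the
    constant (zero-frequency) term is excluded from the selection.
    """
    T = x.shape[0]
    amp = np.abs(np.fft.rfft(x, axis=0)).mean(axis=1)   # one value per frequency
    amp[0] = 0.0
    freqs = np.argsort(amp)[-k:][::-1]                  # k strongest frequencies
    periods = np.ceil(T / freqs).astype(int)            # period = ceil(T / freq)
    return freqs, periods, amp

def fold_1d_to_2d(x, period, freq):
    """Fold [T, C] into [period, freq, C] after zero-padding the tail."""
    T, C = x.shape
    pad = period * freq - T                             # zeros needed at the end
    padded = np.concatenate([x, np.zeros((pad, C))], axis=0)
    # Each consecutive chunk of `period` points becomes one column.
    return padded.reshape(freq, period, C).transpose(1, 0, 2)

# Usage on a toy two-channel series with a period of 24 steps.
t = np.arange(96)
x = np.stack([np.sin(2 * np.pi * t / 24), np.cos(2 * np.pi * t / 24)], axis=1)
freqs, periods, _ = extract_periods(x, k=1)
x_2d = fold_1d_to_2d(x, periods[0], freqs[0])
print(freqs, periods, x_2d.shape)
```

Because the toy series repeats exactly every 24 steps, all columns of the folded tensor are identical here; on real data, differences between columns are the inter-period variation and differences along a column are the intra-period variation.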

3.2 Model Design

The overall architecture of TimesNet is shown in the figure:

Specifically, as shown in the figure below, each TimesBlock contains the following sub-processes:

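(1) Folding time series (1D->2D): TimesBlock first extracts the periods from its input one-dimensional time series features $\mathbf{X}^{l-1}_{\text{1D}}$ (where $l$ indexes the TimesBlock), and then converts them into two-dimensional temporal variations, as covered in the previous section:

$$
\mathbf{A}^{l-1}, \{f_1, \dots, f_k\}, \{p_1, \dots, p_k\} = \operatorname{Period}(\mathbf{X}^{l-1}_{\text{1D}}), \quad
\mathbf{X}^{l,i}_{\text{2D}} = \operatorname{Reshape}_{p_i, f_i}\big(\operatorname{Padding}(\mathbf{X}^{l-1}_{\text{1D}})\big), \; i \in \{1, \dots, k\}
$$

where $\mathbf{A}^{l-1}$ holds the frequency intensities, and $f_i$ and $p_i$ are the $k$ most significant frequencies and their period lengths.

(2) Extracting 2D temporal variation representations (2D Representation): As analyzed previously, the converted two-dimensional temporal variations have 2D locality, so 2D convolution can be used directly to extract features. Here, we chose the classic Inception model, namely:

$$
\hat{\mathbf{X}}^{l,i}_{\text{2D}} = \operatorname{Inception}(\mathbf{X}^{l,i}_{\text{2D}}), \quad i \in \{1, \dots, k\}
$$

It is worth noting that, because the 1D temporal features have been converted into 2D space, we can also make use of many cutting-edge models from computer vision, such as ResNeXt, ConvNeXt, and the attention-based Swin Transformer. This lets time series analysis advance hand in hand with vision backbone networks.

(3) Unfolding time series (2D->1D): For the subsequent multi-period fusion, we unfold the two-dimensional representations back into one-dimensional space:

$$
\hat{\mathbf{X}}^{l,i}_{\text{1D}} = \operatorname{Trunc}\big(\operatorname{Reshape}_{1, (p_i \times f_i)}(\hat{\mathbf{X}}^{l,i}_{\text{2D}})\big), \quad i \in \{1, \dots, k\}
$$

where $\operatorname{Trunc}(\cdot)$ removes the zeros added by the $\operatorname{Padding}(\cdot)$ operation in step (1).

(4) Adaptive fusion (1D Aggregation): To fuse the multi-period information, we compute a weighted sum of the extracted representations, where the weight of each period is the corresponding frequency intensity obtained in step (1), normalized with Softmax:

$$
\hat{\mathbf{A}}^{l-1}_{f_1}, \dots, \hat{\mathbf{A}}^{l-1}_{f_k} = \operatorname{Softmax}\big(\mathbf{A}^{l-1}_{f_1}, \dots, \mathbf{A}^{l-1}_{f_k}\big), \quad
\mathbf{X}^{l}_{\text{1D}} = \sum_{i=1}^{k} \hat{\mathbf{A}}^{l-1}_{f_i} \times \hat{\mathbf{X}}^{l,i}_{\text{1D}}
$$

By converting 1D time series into 2D space, TimesNet implements a temporal variation modeling process of "extracting two-dimensional temporal variations at multiple periods and then fusing them adaptively."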
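The four sub-processes can be strung together in a short NumPy sketch. It is an illustration under several assumptions rather than the TimesNet implementation: `times_block_sketch` is a hypothetical name, `smooth_2d` is a parameter-free 3x3 mean filter standing in for the learned Inception block, the fusion weights are a softmax over the selected frequency intensities, and nothing is learned.

```python
import numpy as np

def softmax(v):
    e = np.exp(v - v.max())
    return e / e.sum()

def smooth_2d(z):
    """Hypothetical stand-in for the 2D backbone: 3x3 mean filter on [p, f, C]."""
    p, f, _ = z.shape
    padded = np.pad(z, ((1, 1), (1, 1), (0, 0)), mode="edge")
    out = np.zeros_like(z)
    for di in range(3):
        for dj in range(3):
            out += padded[di:di + p, dj:dj + f]
    return out / 9.0

def times_block_sketch(x, k=2, backbone=smooth_2d):
    """One TimesBlock-style pass over x of shape [T, C]; returns shape [T, C]."""
    T, C = x.shape
    # (1) Period extraction: channel-averaged amplitudes, constant term dropped.
    amp = np.abs(np.fft.rfft(x, axis=0)).mean(axis=1)
    amp[0] = 0.0
    freqs = np.argsort(amp)[-k:][::-1]
    outputs = []
    for f in freqs:
        p = int(np.ceil(T / f))
        # (1) Fold 1D -> 2D: zero-pad the tail, one period per column.
        padded = np.concatenate([x, np.zeros((p * f - T, C))], axis=0)
        x_2d = padded.reshape(f, p, C).transpose(1, 0, 2)       # [p, f, C]
        # (2) 2D representation from the (stand-in) backbone.
        h_2d = backbone(x_2d)
        # (3) Unfold 2D -> 1D and truncate the padded tail.
        outputs.append(h_2d.transpose(1, 0, 2).reshape(p * f, C)[:T])
    # (4) Adaptive fusion: softmax-normalized intensities as weights.
    weights = softmax(amp[freqs])
    return sum(w * h for w, h in zip(weights, outputs))

# Usage on random features of shape [T=96, C=3].
x = np.random.default_rng(0).standard_normal((96, 3))
y = times_block_sketch(x, k=2)
print(y.shape)
```

A useful sanity check on the fold and unfold steps is to pass an identity backbone (`backbone=lambda z: z`): since the weights sum to one, the block should then return its input unchanged.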

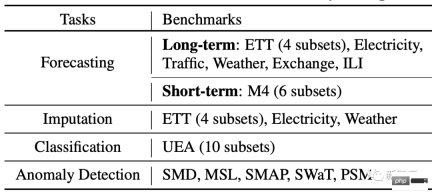

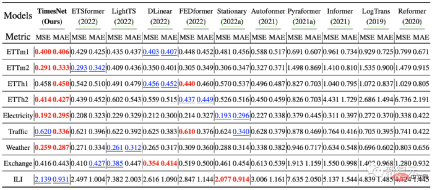

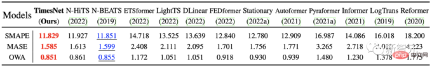

4 Experiment

We conducted experiments on five major tasks: long-term forecasting, short-term forecasting, imputation, anomaly detection, and classification, covering 36 datasets and 81 different experimental settings.

We also compared against 19 different deep methods, including the latest RNN-, CNN-, MLP-, and Transformer-based models, such as N-BEATS (2019), Autoformer (2021), LSSL (2022), N-HiTS (2022), FEDformer (2022), and DLinear (2023).

4.1 Overall results

As shown in the radar chart at the top of the article, TimesNet achieves state-of-the-art (SOTA) results on all five tasks.

(1) Long-term forecasting: On this closely watched task, TimesNet surpasses state-of-the-art Transformer-based and MLP-based models.

(2) Short-term forecasting: The M4 dataset used in this experiment contains 6 sub-datasets with different sampling frequencies, totaling more than 100,000 time series. TimesNet still achieves the best results under this complex data distribution, which verifies the model's ability to model temporal variation.

(3) Classification: On this task, TimesNet surpasses the classic Rocket algorithm and the cutting-edge deep learning model Flowformer.

For comparisons on the remaining tasks, please see the paper.

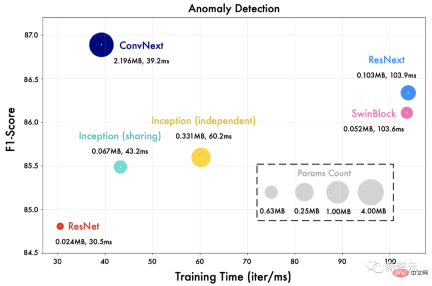

4.2 Generalization of the visual backbone network

We replaced the Inception network in TimesNet with other vision backbone networks, for example ResNet, ConvNeXt, and Swin Transformer.

As shown in the figure below, more advanced vision backbones bring better results. This also means that, under the TimesNet framework, time series analysis can benefit directly from advances in vision backbone networks.

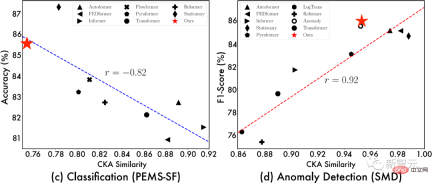

4.3 Representation Analysis

To further explore where TimesNet's performance comes from, we show the relationship between "the CKA similarity between the model's bottom-layer and top-layer representations" and "model performance". The lower the CKA similarity, the larger the difference between the bottom-layer and top-layer representations, that is, the more hierarchical the representation.
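For readers unfamiliar with CKA (centered kernel alignment), the sketch below computes its linear variant between two sets of representations of the same samples. Whether the paper uses the linear or a kernel variant is not stated in this article, so the choice here is an assumption, and `linear_cka` is a hypothetical helper name.

```python
import numpy as np

def linear_cka(x, y):
    """Linear CKA between representations x [n, d1] and y [n, d2] of n samples."""
    x = x - x.mean(axis=0, keepdims=True)       # center every feature
    y = y - y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(y.T @ x, ord="fro") ** 2
    norm_x = np.linalg.norm(x.T @ x, ord="fro")
    norm_y = np.linalg.norm(y.T @ y, ord="fro")
    return cross / (norm_x * norm_y)

# Usage with made-up "bottom" and "top" layer features for 200 samples.
rng = np.random.default_rng(0)
bottom = rng.standard_normal((200, 16))
top_similar = 2.0 * bottom                       # a rescaled copy of the bottom layer
top_different = rng.standard_normal((200, 16))   # unrelated features
print(linear_cka(bottom, top_similar), linear_cka(bottom, top_different))
```

A value near 1 means the top layer carries essentially the same information as the bottom layer (a flat, low-level representation), while a value near 0 indicates that the two layers differ strongly, which is what the article calls a hierarchical representation.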

From the above visualization, we can observe:

- In forecasting and anomaly detection tasks, the better the model performs, the higher the representation similarity between the bottom layer and the top layer, indicating that these tasks rely more on low-level representations;

- In classification and imputation tasks, the better the model performs, the lower the similarity between the bottom-layer and top-layer representations, indicating that these tasks require hierarchical representations, that is, better global feature extraction.

Thanks to the convolution operations in 2D space, TimesNet can learn representations suited to each task: low-level representations for forecasting and anomaly detection, and hierarchical abstract features for classification and imputation. This further demonstrates the task generality of TimesNet as a foundation model.

The representation analysis above also offers design guidance for task-specific deep models. For example, forecasting models should focus on extracting fine-grained low-level features, while imputation models additionally need to learn global representations.

5 Summary

Inspired by the multi-periodicity of time series, the paper proposes TimesNet, a task-general foundation model for time series analysis. The model folds one-dimensional time series into two-dimensional space and uses 2D convolution to extract temporal features. This allows time series analysis to benefit directly from the rapidly developing vision backbone networks, which offers useful inspiration for subsequent research.

TimesNet also achieves leading results on the five mainstream time series analysis tasks of long-term forecasting, short-term forecasting, imputation, anomaly detection, and classification, giving it strong practical value.

The above is the detailed content of Timing Analysis Pentagon Warrior! Tsinghua University proposes TimesNet: leading in prediction, filling, classification, and detection. For more information, please follow other related articles on the PHP Chinese website!

AI For Runners And Athletes: We're Making Excellent ProgressApr 22, 2025 am 11:12 AM

AI For Runners And Athletes: We're Making Excellent ProgressApr 22, 2025 am 11:12 AMThere were some very insightful perspectives in this speech—background information about engineering that showed us why artificial intelligence is so good at supporting people’s physical exercise. I will outline a core idea from each contributor’s perspective to demonstrate three design aspects that are an important part of our exploration of the application of artificial intelligence in sports. Edge devices and raw personal data This idea about artificial intelligence actually contains two components—one related to where we place large language models and the other is related to the differences between our human language and the language that our vital signs “express” when measured in real time. Alexander Amini knows a lot about running and tennis, but he still

Jamie Engstrom On Technology, Talent And Transformation At CaterpillarApr 22, 2025 am 11:10 AM

Jamie Engstrom On Technology, Talent And Transformation At CaterpillarApr 22, 2025 am 11:10 AMCaterpillar's Chief Information Officer and Senior Vice President of IT, Jamie Engstrom, leads a global team of over 2,200 IT professionals across 28 countries. With 26 years at Caterpillar, including four and a half years in her current role, Engst

New Google Photos Update Makes Any Photo Pop With Ultra HDR QualityApr 22, 2025 am 11:09 AM

New Google Photos Update Makes Any Photo Pop With Ultra HDR QualityApr 22, 2025 am 11:09 AMGoogle Photos' New Ultra HDR Tool: A Quick Guide Enhance your photos with Google Photos' new Ultra HDR tool, transforming standard images into vibrant, high-dynamic-range masterpieces. Ideal for social media, this tool boosts the impact of any photo,

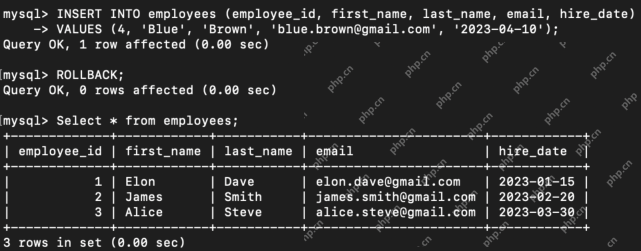

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AM

What are the TCL Commands in SQL? - Analytics VidhyaApr 22, 2025 am 11:07 AMIntroduction Transaction Control Language (TCL) commands are essential in SQL for managing changes made by Data Manipulation Language (DML) statements. These commands allow database administrators and users to control transaction processes, thereby

How to Make Custom ChatGPT? - Analytics VidhyaApr 22, 2025 am 11:06 AM

How to Make Custom ChatGPT? - Analytics VidhyaApr 22, 2025 am 11:06 AMHarness the power of ChatGPT to create personalized AI assistants! This tutorial shows you how to build your own custom GPTs in five simple steps, even without coding skills. Key Features of Custom GPTs: Create personalized AI models for specific t

Difference Between Method Overloading and OverridingApr 22, 2025 am 10:55 AM

Difference Between Method Overloading and OverridingApr 22, 2025 am 10:55 AMIntroduction Method overloading and overriding are core object-oriented programming (OOP) concepts crucial for writing flexible and efficient code, particularly in data-intensive fields like data science and AI. While similar in name, their mechanis

Difference Between SQL Commit and SQL RollbackApr 22, 2025 am 10:49 AM

Difference Between SQL Commit and SQL RollbackApr 22, 2025 am 10:49 AMIntroduction Efficient database management hinges on skillful transaction handling. Structured Query Language (SQL) provides powerful tools for this, offering commands to maintain data integrity and consistency. COMMIT and ROLLBACK are central to t

PySimpleGUI: Simplifying GUI Development in Python - Analytics VidhyaApr 22, 2025 am 10:46 AM

PySimpleGUI: Simplifying GUI Development in Python - Analytics VidhyaApr 22, 2025 am 10:46 AMPython GUI Development Simplified with PySimpleGUI Developing user-friendly graphical interfaces (GUIs) in Python can be challenging. However, PySimpleGUI offers a streamlined and accessible solution. This article explores PySimpleGUI's core functio

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 English version

Recommended: Win version, supports code prompts!

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Mac version

God-level code editing software (SublimeText3)

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

Atom editor mac version download

The most popular open source editor