More than 300 hits in four minutes: Google teaches its robot to play table tennis

Pit a table tennis enthusiast against a robot. Given how quickly robots are improving, it is hard to say who would win.

Robots now offer dexterous manipulation, agile legged locomotion, and strong grasping ability, and they are widely used in challenging tasks. But how do robots perform in tasks that involve close interaction with humans? Take table tennis as an example. It requires close coordination between both players, and the ball moves very fast, which poses a major challenge for algorithms.

In table tennis, speed and accuracy come first, which places high demands on learning algorithms. At the same time, the sport has two defining characteristics: it is highly structured (a fixed, predictable environment) and multi-agent (the robot can play with humans or with other robots). This makes it an ideal experimental platform for studying human-robot interaction and reinforcement learning problems.

Google's robotics research team has built such a platform to study the problems robots face when learning in multi-agent, dynamic, and interactive environments. Google also published a blog post introducing two projects built on it, Iterative-Sim2Real (i-S2R) and GoalsEye. i-S2R enabled the robot to sustain rallies of more than 300 hits with human players, while GoalsEye enabled the robot to learn goal-conditioned policies that approach the precision of amateur players.

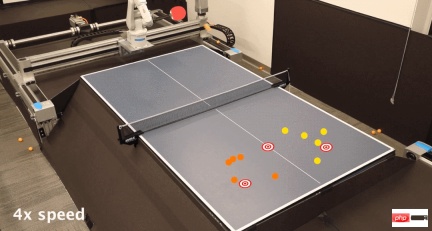

The i-S2R policy lets the robot rally with humans. Although the robot's grip does not look very professional, it does not miss a ball:

The ball goes back and forth between evenly matched players, and it feels like a high-quality rally.

The GoalsEye policy can return the ball to a designated position on the table, hitting wherever it is told to aim:

i-S2R: Using simulators to play cooperatively with humans

In this project, the robot aims to learn to cooperate with humans, that is, to keep a rally going with a human player for as long as possible. Since training directly against human players is tedious and time-consuming, Google adopted a simulation-based approach. This raises a new problem, however: it is difficult to simulate human behavior accurately, especially in closed-loop interaction tasks.

In i-S2R, Google proposed a method for learning human behavior models in human-robot interaction tasks and instantiated it on a robotic table tennis platform. The resulting system can sustain rallies of up to 340 hits with amateur human players (shown below).

A human and the robot rally for 4 minutes, with up to 340 hits back and forth

Learning human behavior models

Getting a robot to learn human behavior accurately runs into a chicken-and-egg problem. Without a sufficiently good robot policy to begin with, it is impossible to collect high-quality data on how humans interact with the robot. But without a human behavior model, no such robot policy can be obtained in the first place. One alternative is to train robot policies directly in the real world, but this is often slow and costly, and it poses safety challenges that are further exacerbated when humans are involved.

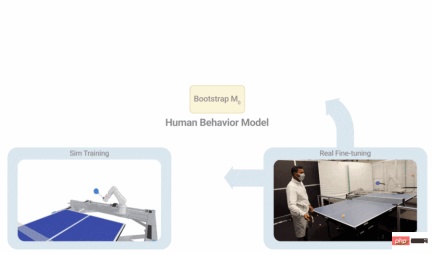

As shown in the figure below, i-S2R uses a simple human behavior model as an approximate starting point and alternates between training in simulation and deployment in the real world. In each iteration, both the human behavior model and the policy are refined.

i-S2R Method
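The alternation described above can be illustrated with a toy loop. This is only a minimal sketch under strong assumptions, not Google's implementation: the "human behavior model" is reduced to a single estimated ball speed, the "policy" to a single return speed, and the functions `train_in_simulation` and `deploy_in_real_world`, along with the made-up human response `4.0 + 0.5 * policy_speed`, are hypothetical names and dynamics chosen for illustration.

```python
import random
from statistics import mean


def train_in_simulation(human_model_speed):
    """Toy 'simulation training': the best policy matches the modelled ball speed."""
    return human_model_speed


def deploy_in_real_world(policy_speed, rng, n_balls=50):
    """Toy 'real' human whose returns depend on how the robot plays.

    The response 4.0 + 0.5 * policy_speed is an assumption made for this
    sketch; it stands in for the closed-loop effect that is hard to simulate.
    """
    return [4.0 + 0.5 * policy_speed + rng.gauss(0.0, 0.1) for _ in range(n_balls)]


def iterative_sim2real(initial_model_speed=3.0, iterations=8, seed=0):
    """Alternate between simulation training and real-world deployment."""
    rng = random.Random(seed)
    human_model_speed = initial_model_speed  # crude starting model
    history = []
    for _ in range(iterations):
        # 1. Train the policy in simulation against the current human model.
        policy_speed = train_in_simulation(human_model_speed)
        # 2. Deploy the policy with the (toy) human and record their returns.
        observed = deploy_in_real_world(policy_speed, rng)
        # 3. Refit the human model on the newly collected data.
        human_model_speed = mean(observed)
        history.append((policy_speed, human_model_speed))
    return history


if __name__ == "__main__":
    for step, (policy, model) in enumerate(iterative_sim2real()):
        print(f"iteration {step}: policy={policy:.2f}, human model={model:.2f}")
```

In this toy setting the human's behavior shifts whenever the policy changes, so a model fitted once would be stale immediately. Repeating the loop shrinks the gap between what the policy was trained against and what the human actually does.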

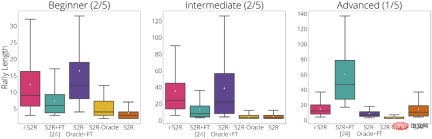

Google breaks down the experimental results by player type: beginner (40% of players), intermediate (40% of players), and advanced (20% of players). The results show that i-S2R performs significantly better than S2R+FT (sim-to-real plus fine-tuning) for both beginner and intermediate players, who together make up 80% of players.

i-S2R results by player type

GoalsEye: Hit exactly where you want

In GoalsEye, Google also demonstrated a method that builds on behavioral cloning techniques to learn precise goal-targeting policies.

Here Google focuses on the accuracy of table tennis. They want the robot to return the ball accurately to any designated position on the table, as shown in the figure below. To achieve this, they drew on LFP (Learning from Play) and GCSL (Goal-Conditioned Supervised Learning).
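The core idea behind goal-conditioned supervised learning, hindsight relabeling, can be sketched in a few lines. The example below is a toy illustration under stated assumptions, not the GoalsEye system: the table is reduced to one dimension, the policy is a linear map from goal to action, and `landing_position`, `fit_linear`, and the dynamics `0.5 * action + 0.2` are hypothetical stand-ins. Each attempt is relabeled with the position the ball actually reached, and the policy is then fitted by plain supervised regression on those relabeled pairs.

```python
import random


def landing_position(action, rng):
    """Hypothetical toy dynamics: where the ball lands (m) for a paddle action."""
    return 0.5 * action + 0.2 + rng.gauss(0.0, 0.01)


def fit_linear(xs, ys):
    """Ordinary least-squares fit of ys ~ w * xs + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    var_x = sum((x - mean_x) ** 2 for x in xs)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    w = cov_xy / var_x
    return w, mean_y - w * mean_x


def gcsl(iterations=3, episodes=200, seed=0):
    """Toy goal-conditioned supervised learning with hindsight relabeling."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0  # initial policy ignores the goal entirely
    data = []        # (relabeled goal, action) pairs
    for _ in range(iterations):
        for _ in range(episodes):
            goal = rng.uniform(0.0, 1.0)
            # Act with the current policy plus exploration noise.
            action = w * goal + b + rng.gauss(0.0, 0.5)
            achieved = landing_position(action, rng)
            # Hindsight relabeling: treat the achieved position as the goal,
            # so every attempt becomes a valid demonstration for some goal.
            data.append((achieved, action))
        goals, actions = zip(*data)
        # Supervised step: regress actions on the relabeled goals.
        w, b = fit_linear(goals, actions)
    return w, b


if __name__ == "__main__":
    w, b = gcsl()
    print(f"learned policy: action = {w:.2f} * goal + {b:.2f}")
```

In this toy the exact inverse of the dynamics is `action = 2 * goal - 0.4`, so the fitted `w` and `b` should land close to those values. No reward signal or hand-labeled target is needed, only the robot's own attempts.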

The GoalsEye policy aims at a target circle 20 cm in diameter (left). A human player aims at the same target (right)

With the first 2,480 demonstrations, Google's trained policy accurately hit a circular target with a radius of 30 cm only 9% of the time. After about 13,500 demonstrations, the accuracy of the ball reaching its target rose to 43% (bottom right).

For more information on these two projects, see the following links:

- Iterative-Sim2Real Home Page: https://sites.google.com/view/is2r

- GoalsEye Home Page: https://sites.google.com/view/goals-eye
