New research from Google and DeepMind: How does inductive bias affect model scaling?

The scaling of Transformer models has attracted considerable research interest in recent years. However, not much is known about the scaling properties of the different inductive biases imposed by model architectures. It is often assumed that improvements at one specific scale (compute, size, etc.) transfer to other scales and compute regions.

Understanding the interaction between architecture and scaling laws is therefore crucial, and designing models that perform well across scales is of real research significance. Several questions remain open: Do model architectures scale differently? If so, how does inductive bias affect scaling behavior? How does it affect upstream (pre-training) and downstream (transfer) tasks?

In a recent paper, researchers at Google and DeepMind sought to understand the impact of inductive bias (architecture) on language model scaling. To do this, they pretrained and fine-tuned ten different model architectures across multiple compute regions and scales (from 15 million to 40 billion parameters). Overall, they pretrained and fine-tuned more than 100 models of different architectures and sizes, and they present the insights and challenges that arose in scaling these ten architectures.

They also note that scaling these models is not as simple as it seems: the details of scaling are intertwined with the architectural choices studied in the paper. For example, a defining feature of Universal Transformers (and ALBERT) is parameter sharing. Compared with the standard Transformer, this architectural choice significantly warps scaling behavior, not only in terms of performance but also in terms of compute metrics such as FLOPs, speed, and number of parameters. Models like Switch Transformers sit at the other extreme, with an unusual relationship between FLOPs and parameter count.
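The way parameter sharing and sparse experts decouple parameter count from FLOPs can be sketched with some back-of-the-envelope accounting. The snippet below is only an illustration, not the paper's methodology: the `Config` fields, the `4*d^2` and `8*d^2` per-layer estimates (assuming a feed-forward width of `4*d`), the top-1 expert routing, and the "2 FLOPs per active weight per token" rule are all simplifying assumptions, and embeddings, biases, and attention-score FLOPs are ignored.

```python
from dataclasses import dataclass


@dataclass
class Config:
    d_model: int                # hidden width
    n_layers: int               # layers applied in one forward pass
    n_experts: int = 1          # feed-forward experts per layer (1 = dense)
    share_layers: bool = False  # reuse one set of layer weights at every depth


def layer_params(cfg: Config) -> tuple[int, int]:
    """Return (stored, active) parameter counts for a single layer.

    Rough estimate: 4*d^2 for the attention projections and 8*d^2 per
    feed-forward expert. With top-1 routing a token touches one expert only.
    """
    d2 = cfg.d_model ** 2
    attn = 4 * d2
    ffn = 8 * d2
    stored = attn + cfg.n_experts * ffn
    active = attn + ffn
    return stored, active


def estimate(cfg: Config) -> dict[str, int]:
    stored, active = layer_params(cfg)
    unique_layers = 1 if cfg.share_layers else cfg.n_layers
    return {
        "params": stored * unique_layers,
        # about 2 FLOPs (multiply + add) per active weight, per token, per layer
        "flops_per_token": 2 * active * cfg.n_layers,
    }


# Hypothetical configurations chosen only to make the contrast visible.
dense = estimate(Config(d_model=512, n_layers=12))
shared = estimate(Config(d_model=512, n_layers=12, share_layers=True))
sparse = estimate(Config(d_model=512, n_layers=12, n_experts=8))

for name, est in [("dense", dense), ("shared", shared), ("sparse", sparse)]:
    print(f"{name:>6}: params={est['params']:,}  flops/token={est['flops_per_token']:,}")
```

Under these assumptions all three configurations cost the same FLOPs per token, while the shared-weight model stores one twelfth of the dense model's parameters and the sparse model stores several times more. That is why comparing architectures by parameter count alone, or by FLOPs alone, can give quite different pictures.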

Specifically, the main contributions of the paper are as follows:

- For the first time, the researchers derive scaling laws for different inductive biases and model architectures. They found that the scaling coefficient varies significantly across models and note that this is an important consideration in model development. Of the ten architectures they considered, the vanilla Transformer showed the best scaling behavior, even though it was not the best in absolute terms in every compute region.

- The researchers observed that a model that works well in one compute region is not necessarily the best model in another. Additionally, they found that some models perform well in low-compute regions but are difficult to scale. This means it is hard to get a complete picture of a model's scalability from pointwise comparisons within a single compute region.

- The researchers found that, when scaling different model architectures, upstream pre-training perplexity may correlate poorly with downstream transfer. The underlying architecture and inductive bias are therefore also critical for downstream transfer.

- The researchers highlight the difficulty of scaling certain architectures and show that some models do not scale (or scale with a negative trend). They also found that linear-time attention models (such as Performer) tend to be difficult to scale.

Methods and Experiments

In Section 3 of the paper, the researchers outline the overall experimental setup and introduce the models evaluated in the experiments.

Table 1 shows the main results of the paper, including the number of trainable parameters, FLOPs (for a single forward pass), and speed (steps per second). It also includes validation perplexity (upstream pre-training) and results on 17 downstream tasks.

Are all models scaled the same way?

Figure 2 shows the scaling behavior of all models as the number of FLOPs increases. The scaling behavior of each model is distinct, and most of them scale differently from the standard Transformer. Perhaps the biggest finding here is that most models (e.g., LConv, Evolved Transformer) appear to perform on par with or better than the standard Transformer, but fail to scale with higher compute budgets.

Another interesting trend is that "linear" Transformers, such as Performer, do not scale. As shown in Figure 2i, going from base to large scale reduces pre-training perplexity by only 2.7%. For the vanilla Transformer the corresponding figure is 8.4%.

Figure 3 shows the scaling curves of all models on downstream transfer tasks. Here too, most models have scaling curves that differ markedly from the Transformer's. It is also worth noting that most models have different upstream and downstream scaling curves.

The researchers found that some models, such as Funnel Transformer and LConv, seem to perform quite well upstream but suffer considerably downstream. For Performer, the gap between upstream and downstream performance seems to be even wider. It is worth noting that the SuperGLUE downstream tasks often require pseudo cross-attention in the encoder, which convolution-based models are not equipped to handle (Tay et al., 2021a).

Therefore, researchers have found that although some models have good upstream performance, they may still have difficulty learning downstream tasks.

Is the best model different at each scale?

Figure 1 shows the Pareto frontier when compute is plotted against upstream or downstream performance. The colors in the plot represent different models, and it can be observed that the best model may differ for each scale and compute region. This can also be seen in Figure 3. For example, the Evolved Transformer seems to perform just as well as the standard Transformer in the tiny to small region (downstream), but this changes quickly as the model is scaled up. The researchers observed the same with MoS-Transformer, which performs significantly better than the vanilla Transformer in some compute regions but not in others.
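For readers unfamiliar with the term, a Pareto frontier over (compute, perplexity) keeps only the runs for which no other run is at least as cheap and also better. The following is a minimal sketch of that idea; the function name, the tuple layout, and all numbers are hypothetical and are not taken from the paper.

```python
def pareto_frontier(points):
    """Return the runs that are not dominated in (compute, perplexity).

    `points` is a list of (model_name, flops, perplexity) tuples; lower is
    better on both axes. A run is kept only if it beats every run that costs
    the same or less compute.
    """
    frontier = []
    best_ppl = float("inf")
    # Sweep from cheapest to most expensive, keeping strict improvements.
    for name, flops, ppl in sorted(points, key=lambda p: (p[1], p[2])):
        if ppl < best_ppl:
            frontier.append((name, flops, ppl))
            best_ppl = ppl
    return frontier


# Made-up runs for two hypothetical architectures at three compute budgets.
runs = [
    ("model_a", 1e9, 3.40),
    ("model_b", 1e9, 3.20),
    ("model_a", 1e10, 2.80),
    ("model_b", 1e10, 2.95),
    ("model_a", 1e11, 2.40),
    ("model_b", 1e11, 2.90),
]

frontier = pareto_frontier(runs)
for name, flops, ppl in frontier:
    print(f"{flops:.0e} FLOPs: {name} (perplexity {ppl})")
```

In this toy data `model_b` is on the frontier at the smallest budget and `model_a` takes over at the larger ones, which mirrors the article's point that the best architecture can change from one compute region to the next.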

Scaling law of each model

Table 2 shows the slope α of the fitted linear line for each model in several settings. The researchers obtained α by plotting pairs of the quantities F (FLOPs), U (upstream perplexity), D (downstream accuracy), and P (number of parameters) against each other. Generally speaking, α describes how well a model scales; for example, α_F,U comes from plotting FLOPs against upstream performance. The only exception is α_U,D, which relates upstream performance to downstream performance: a high α_U,D means the model's scaling transfers better to downstream tasks. Overall, the α value is a measure of how well a model performs relative to scale.
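A slope such as α_F,U can be estimated with an ordinary least-squares line fit. The sketch below assumes the fit is done in log-log space (log perplexity against log FLOPs), which is a common convention for scaling laws but is an assumption here; the function name and the synthetic data are likewise illustrative and do not reproduce the paper's numbers.

```python
import math


def fit_slope(xs, ys):
    """Least-squares slope and intercept of log(y) against log(x)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
    var = sum((a - mean_x) ** 2 for a in lx)
    slope = cov / var
    return slope, mean_y - slope * mean_x


# Synthetic points lying exactly on perplexity = 50 * FLOPs ** -0.1
flops = [1e9, 1e10, 1e11, 1e12]
perplexity = [50 * f ** -0.1 for f in flops]

alpha_fu, intercept = fit_slope(flops, perplexity)
print(f"alpha_F,U ~ {alpha_fu:.3f}")
```

With perplexity on the vertical axis the fitted slope is negative when a model improves with compute; plotting negative log-perplexity instead flips the sign. Either way, the magnitude of the slope is what indicates how strongly extra compute pays off for a given architecture.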

Do Scaling Protocols affect model architecture in the same way?

Figure 4 shows the impact of scaling depth in four model architectures (MoS-Transformer, Transformer, Evolved Transformer, LConv).

Figure 5 shows the impact of scaling width across the same four architectures. First, on the upstream (negative log-perplexity) curves, although there are clear differences in absolute performance between architectures, the scaling trends remain very similar. Downstream, depth scaling (Figure 4) appears to act the same way on most architectures, with the exception of LConv. The Evolved Transformer, meanwhile, appears to scale slightly better under width scaling. It is also worth noting that depth scaling has a much greater impact on downstream scaling than width scaling.

For more research details, please refer to the original paper.

The above is the detailed content of New research from Google and DeepMind: How does inductive bias affect model scaling?. For more information, please follow other related articles on the PHP Chinese website!

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

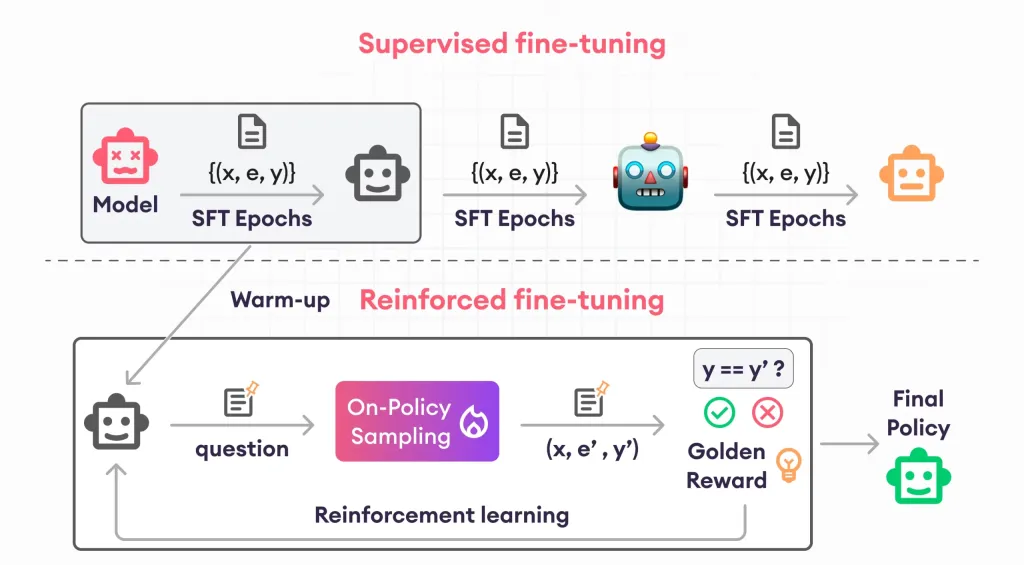

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Zend Studio 13.0.1

Powerful PHP integrated development environment

PhpStorm Mac version

The latest (2018.2.1) professional PHP integrated development tool

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

SublimeText3 English version

Recommended: Win version, supports code prompts!