Even if a large area of the image is missing, it can be restored realistically. The new model CM-GAN takes into account the global structure and texture details.

Image inpainting refers to completing the missing regions of an image and is one of the basic tasks of computer vision. It has many practical applications, such as object removal, image retargeting, and image synthesis.

Early inpainting methods relied on image patch synthesis or color diffusion to fill in the missing parts of an image. To handle more complex image structures, researchers turned to data-driven approaches that use deep generative networks to predict visual content and appearance. Trained on large image collections with reconstruction and adversarial losses, generative inpainting models have been shown to produce more visually appealing results on various types of input data, including natural images and human faces.

However, existing works show good results only when completing simple image structures. Generating image content with a complex overall structure and high-fidelity detail remains a major challenge, especially when the image holes are large.

Essentially, image inpainting faces two key issues: how to accurately propagate global context into the incomplete regions, and how to synthesize realistic local details that are consistent with the global cues. To address global context propagation, existing networks use encoder-decoder structures, atrous convolutions, contextual attention, or Fourier convolutions to integrate long-range feature dependencies and expand the effective receptive field. In addition, two-stage approaches and iterative hole filling rely on predicting coarse results to enhance the global structure. However, these models lack a mechanism to capture the high-level semantics of the unmasked regions and propagate them effectively into the holes to synthesize a coherent global structure.

Based on this, researchers from the University of Rochester and Adobe Research proposed a new generative network, CM-GAN (cascaded modulation GAN), which can better synthesize both the overall structure and local details. CM-GAN includes an encoder with Fourier convolution blocks that extracts multi-scale feature representations from input images with holes. CM-GAN also has a two-stream decoder with a novel cascaded global-spatial modulation block at each scale level.

In each decoder block, the model first applies global modulation to perform coarse, semantically aware structure synthesis, and then applies spatial modulation to further adjust the feature map in a spatially adaptive manner. In addition, this study designed an object-aware training scheme that prevents artifacts inside the hole, to meet the needs of object removal tasks in real-life scenes. The study conducted extensive experiments showing that CM-GAN significantly outperforms existing methods in both quantitative and qualitative evaluations.

- Paper address: https://arxiv.org/pdf/2203.11947.pdf

- Project address: https://github.com/htzheng/CM-GAN-Inpainting

Let's first look at the inpainting results. Compared with other methods, CM-GAN can reconstruct better textures:

CM-GAN has better object boundaries:

Let’s take a look at the research methods and experimental results.

Method

Cascaded Modulation GAN

To better model the global context for image completion, this study proposes a new mechanism that cascades global code modulation and spatial code modulation. This mechanism helps to deal with partially invalid features while better injecting global context into the spatial domain. The new architecture, CM-GAN, synthesizes both the overall structure and local details well, as shown in Figure 1 below.

As shown in Figure 2 (left) below, CM-GAN generates its visual output with one encoder branch and two parallel cascaded decoder branches. The encoder takes the partial image and the mask as input and generates multi-scale feature maps.

Unlike most encoder-decoder methods, in order to complete the overall structure, this study extracts a global style code s from the highest-level feature map using a fully connected layer followed by normalization. Additionally, an MLP-based mapping network generates a style code w from noise to simulate the randomness of image generation. The codes w and s are combined to produce a global code g = [s; w], which is used in subsequent decoding steps.
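The construction of the global code can be sketched in a few lines of NumPy. This is only an illustrative sketch, not the authors' implementation: the function names, the tensor sizes, the choice of L2 normalization, and the leaky-ReLU activation in the mapping network are all assumptions made for the example.

```python
import numpy as np

def l2_normalize(v, eps=1e-8):
    """Scale a vector to unit length (assumed form of the normalization step)."""
    return v / (np.linalg.norm(v) + eps)

def style_code(top_feature, fc_weight, fc_bias):
    """Global style code s: flatten the highest-level feature map,
    apply one fully connected layer, then normalize."""
    s = fc_weight @ top_feature.reshape(-1) + fc_bias
    return l2_normalize(s)

def mapping_network(z, layers):
    """Tiny MLP that maps noise z to a style code w (activation is an assumption)."""
    h = z
    for weight, bias in layers:
        h = weight @ h + bias
        h = np.where(h > 0, h, 0.2 * h)  # leaky ReLU
    return h

def global_code(s, w):
    """g = [s; w]: concatenate the image-derived and noise-derived codes."""
    return np.concatenate([s, w])

rng = np.random.default_rng(0)
top_feature = rng.standard_normal((8, 4, 4))       # hypothetical (C, H, W) feature map
fc_weight = rng.standard_normal((16, 8 * 4 * 4))   # hypothetical FC layer
fc_bias = np.zeros(16)
layers = [(rng.standard_normal((16, 16)), np.zeros(16)) for _ in range(2)]

s = style_code(top_feature, fc_weight, fc_bias)
w = mapping_network(rng.standard_normal(16), layers)
g = global_code(s, w)
print(g.shape)  # (32,)
```

Here s depends only on the visible image content, while w changes with the sampled noise, so the same masked input can yield different completions.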

Global-spatial cascaded modulation. To better bridge the global context during the decoding stage, this study proposes global-spatial cascaded modulation (CM). As shown in Figure 2 (right), the decoding stage is based on two branches, a global modulation block (GB) and a spatial modulation block (SB), which upsample the global features F_g and the local features F_s in parallel.

Unlike existing methods, CM-GAN introduces a new way of injecting global context into the hole region. Conceptually, it consists of cascaded global and spatial modulations between the features at each scale, and it naturally integrates three compensating mechanisms for global context modeling: 1) feature upsampling; 2) global modulation; 3) spatial modulation.
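To make the cascade concrete, the following NumPy sketch shows one decoder step: both streams are upsampled, the global stream is modulated by the global code g, and the spatial stream is then modulated by a map derived from the globally modulated feature. It is a deliberately simplified illustration. The convolutions, demodulation, and noise injection of a real decoder block are omitted, and all function names, parameter names, and shapes are hypothetical.

```python
import numpy as np

def upsample2x(x):
    """Nearest-neighbour 2x upsampling of a (C, H, W) feature map."""
    return x.repeat(2, axis=1).repeat(2, axis=2)

def global_modulation(feat, g, w_scale):
    """Scale each channel by a factor predicted from the global code g.
    The same factor is used at every spatial location."""
    scale = w_scale @ g                                # shape (C,)
    return feat * (1.0 + scale)[:, None, None]

def spatial_modulation(feat, cond, g, w_alpha, w_proj):
    """Scale feat with a per-pixel map computed from cond (the output of the
    global branch) plus a per-channel term computed from g."""
    spatial = np.einsum("oc,chw->ohw", w_proj, cond)   # 1x1 projection of cond
    alpha = w_alpha @ g                                # shape (C,)
    return feat * (1.0 + spatial + alpha[:, None, None])

def cascaded_block(f_g, f_s, g, params):
    """One simplified decoder step: upsample, global modulation, spatial modulation."""
    f_g = upsample2x(f_g)
    f_s = upsample2x(f_s)
    f_g = global_modulation(f_g, g, params["w_scale"])
    f_s = spatial_modulation(f_s, f_g, g, params["w_alpha"], params["w_proj"])
    return f_g, f_s

rng = np.random.default_rng(0)
channels, code_dim = 4, 6                              # hypothetical sizes
params = {
    "w_scale": 0.1 * rng.standard_normal((channels, code_dim)),
    "w_alpha": 0.1 * rng.standard_normal((channels, code_dim)),
    "w_proj": 0.1 * rng.standard_normal((channels, channels)),
}
f_g, f_s = cascaded_block(
    rng.standard_normal((channels, 8, 8)),
    rng.standard_normal((channels, 8, 8)),
    rng.standard_normal(code_dim),
    params,
)
print(f_g.shape, f_s.shape)  # (4, 16, 16) (4, 16, 16)
```

The point of the ordering is that the spatial stream is conditioned on a feature that has already been corrected by the global code, so the per-pixel adjustment can rely on a coherent coarse structure.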

Object-Aware Training

The algorithm that generates masks for training is crucial. Essentially, the sampled masks should resemble the masks drawn in real use cases, and a mask should avoid covering an entire object or most of any new object. Oversimplified masking schemes can lead to artifacts.

To better support real object removal use cases while preventing the model from synthesizing new objects inside holes, this study proposes an object-aware training scheme that generates more realistic masks, as shown in Figure 4 below.

Specifically, the study first passes the training images to the panoptic segmentation network PanopticFCN to generate highly accurate instance-level segmentation annotations. It then samples a mixture of free-form holes and object holes as the initial mask, and finally computes the overlap ratio between the hole and each instance in the image. If the overlap ratio is greater than a threshold, the method excludes the foreground instance from the hole; otherwise, the hole is left unchanged to mimic object completion. The threshold is set to 0.5. The study randomly dilates and translates object masks to avoid overfitting. Additionally, it enlarges holes along instance segmentation boundaries to avoid leaking background pixels near the hole into the inpainted region.
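The overlap test described above can be sketched as follows. This is a minimal illustration under stated assumptions: the instance map is taken as an integer array in which 0 means background, the overlap ratio is assumed to be the fraction of an instance's pixels covered by the hole, and the function name is hypothetical. Segmentation, hole sampling, dilation, and translation are not shown.

```python
import numpy as np

def object_aware_mask(hole, instances, threshold=0.5):
    """Remove from the hole every instance that the hole mostly covers.

    hole:      bool array (H, W), True where pixels are masked out.
    instances: int array (H, W), 0 for background, k > 0 for instance k.
    """
    result = hole.copy()
    for inst_id in np.unique(instances):
        if inst_id == 0:
            continue  # background is never excluded
        inst = instances == inst_id
        # Assumed definition: share of the instance that lies inside the hole.
        ratio = (hole & inst).sum() / inst.sum()
        if ratio > threshold:
            result &= ~inst  # exclude the foreground instance from the hole
        # Otherwise the hole is left unchanged, which mimics object completion.
    return result

instances = np.zeros((4, 4), dtype=int)
instances[0:2, 0:2] = 1          # instance 1: fully covered by the hole
instances[2:4, 2:4] = 2          # instance 2: only one of four pixels covered
hole = np.zeros((4, 4), dtype=bool)
hole[0:2, :] = True
hole[2, 2] = True

mask = object_aware_mask(hole, instances)
print(int(mask.sum()))  # 5
```

In the toy example, instance 1 is carved out of the hole because it is fully covered, while the small overlap with instance 2 is kept.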

Training objective with Masked-R_1 regularization

The model is trained with a combination of adversarial loss and segmentation-based perceptual loss. Experiments show that this method can also achieve good results when purely using adversarial losses, but adding perceptual losses can further improve performance.

In addition, this study proposes a masked-R_1 regularization specifically designed to stabilize adversarial training for the inpainting task, in which the mask m is used to avoid computing the gradient penalty outside the mask.
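A minimal sketch of such a masked penalty is given below, assuming the standard R_1 form (a weight gamma over 2 times the squared gradient norm of the discriminator output with respect to real images) with the gradient multiplied by the mask before the norm is taken. The gradient itself would come from an automatic differentiation framework and is passed in as an array here. The function name and the default gamma are assumptions, not values from the paper.

```python
import numpy as np

def masked_r1_penalty(grad, mask, gamma=10.0):
    """Masked R_1 penalty for a batch.

    grad:  (N, C, H, W) gradient of the discriminator output w.r.t. the real images.
    mask:  (N, 1, H, W) array, 1 inside the mask and 0 outside.
    gamma: penalty weight (hypothetical default).
    """
    masked = grad * mask                       # zero the gradient outside the mask
    per_sample = np.sum(masked ** 2, axis=(1, 2, 3))
    return 0.5 * gamma * float(np.mean(per_sample))

grad = np.ones((1, 1, 2, 2))
mask = np.array([[[[1.0, 0.0], [1.0, 0.0]]]])
print(masked_r1_penalty(grad, mask))  # 10.0
```

With an all-ones mask this reduces to the ordinary R_1 penalty, so the mask only restricts where the discriminator's gradient is penalized.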

Experiment

This study conducted image inpainting experiments on the Places2 dataset at a resolution of 512 × 512 and reports both quantitative and qualitative evaluation results for the model.

Quantitative evaluation: Table 1 below compares CM-GAN with other inpainting methods. The results show that CM-GAN significantly outperforms other methods in terms of FID, LPIPS, U-IDS, and P-IDS. With the help of perceptual loss, LaMa and CM-GAN achieve significantly better LPIPS scores than CoModGAN and other methods, thanks to the additional semantic guidance provided by the pre-trained perceptual model. Compared to LaMa/CoModGAN, CM-GAN reduces FID from 3.864/3.724 to 1.628.

As shown in Table 3 below, with or without fine-tuning, CM-GAN achieves significantly better performance than LaMa and CoModGAN on both the LaMa and CoModGAN masks, indicating that the model generalizes well. It is worth noting that CM-GAN trained on the object-aware mask still outperforms CoModGAN on the CoModGAN mask, confirming that CM-GAN has better generation ability.

To verify the importance of each component in the model, this study conducted a set of ablation experiments, with all models trained and evaluated on the Places2 dataset. The results of the ablation experiments are shown in Table 2 and Figure 7 below.

##

##

The above is the detailed content of Even if a large area of the image is missing, it can be restored realistically. The new model CM-GAN takes into account the global structure and texture details.. For more information, please follow other related articles on the PHP Chinese website!

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

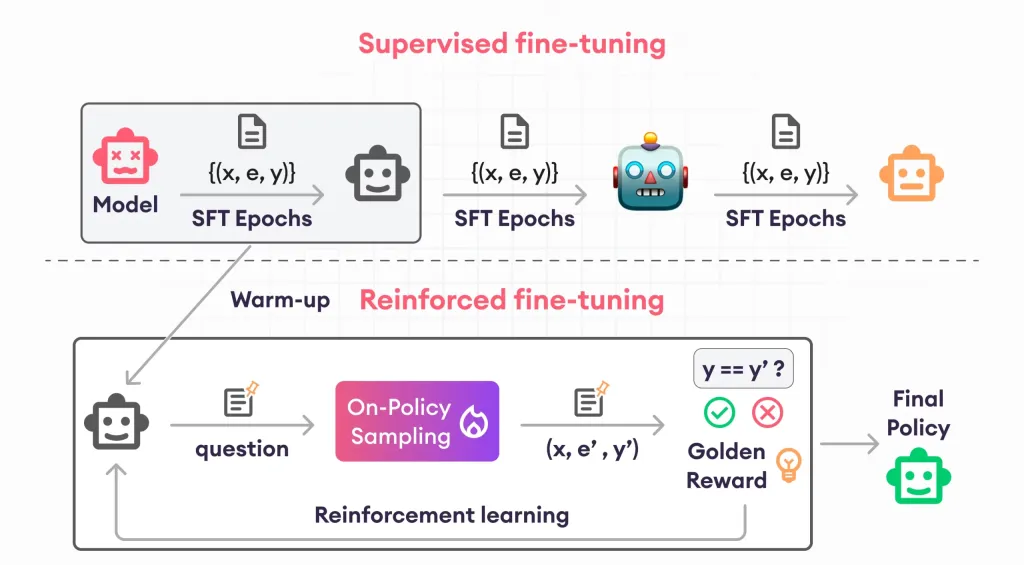

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

Notepad++7.3.1

Easy-to-use and free code editor

Zend Studio 13.0.1

Powerful PHP integrated development environment

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

Atom editor mac version download

The most popular open source editor