This talk covers ChatGPT technology, our attempts at localization, and open source models. It has three parts. The first part gives an overall introduction to ChatGPT-related technologies: the evolution of the models, the problems with earlier models, the three stages of ChatGPT training, and data organization and effect evaluation. The second part shares our attempts at localizing ChatGPT technology, including the problems we encountered during the experiments, our thinking, and the model's effects and applications. The third part introduces the Chinese open source large model we have released, how to train a local model on your own data, the problems you may encounter along the way, the gap between the open source model and more advanced models, and how to further improve the model's effect.

1. ChatGPT related technologies

ChatGPT is a general-purpose functional assistant. On December 5, 2022, OpenAI CEO Sam Altman posted on social media that ChatGPT had exceeded 1 million users five days after its launch. The explosive popularity of the AI chatbot ChatGPT has become a landmark event. Microsoft is in talks to increase its investment by $10 billion and plans to integrate ChatGPT into the Microsoft Cloud soon.

The picture above shows two examples with impressive results.

ChatGPT is so popular for two reasons: on the one hand, it understands user intent and generates better results; on the other hand, its conversational chatbot form makes it usable by everyone.

The following covers the evolution of the model, the problems with earlier models, the three stages of ChatGPT model training, and the data organization and evaluation of the ChatGPT model.

1. Model evolution

ChatGPT technology evolved through several generations of models. The initial GPT model was proposed in 2018 with only 117 million parameters; in 2019, GPT-2 had 1.5 billion parameters; by 2020, GPT-3 reached 175 billion parameters; after several generations of iteration, the ChatGPT model appeared in 2022.

2. What kind of problems did the previous model have?

What were the problems with the models before ChatGPT came out? Analysis showed that one of the most obvious is the alignment problem: although large models are strong at generation, their answers sometimes do not match the user's intention. Research found that the main cause lies in the training objective: a language model is trained to predict the next word, not to generate according to the user's intention. To solve the alignment problem, Reinforcement Learning from Human Feedback (RLHF) was added to the training process of the ChatGPT model.

3. Three stages of learning

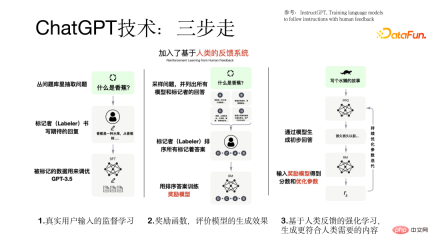

The ChatGPT model is trained in three steps.

The first step is supervised learning on real user input, starting from the GPT model. The data in this step comes from real users, so it is of relatively high quality and valuable.

The second step is to train a reward model. Different models produce different outputs for the same query. Labelers rank the outputs of all models, and the ranked data is used to train the reward model.

The third step is to feed the preliminary answer generated by the model into the reward model, which evaluates it. If the answer meets the user's intention, positive feedback is given; otherwise, negative feedback is given, so the model keeps improving. This is the purpose of introducing reinforcement learning: to bring the generated results more in line with human needs. The three-step training process is shown in the figure above.
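The second step can be made concrete with a small sketch. The talk does not say which loss the reward model was trained with; a pairwise ranking loss is one common choice for ranked outputs and is assumed here. The function names and the example scores are illustrative only.

```python
import math
from itertools import combinations


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def pairwise_ranking_loss(scores_best_first: list) -> float:
    """Mean of -log(sigmoid(better - worse)) over all ranked pairs.

    `scores_best_first` holds the reward model's scores for the responses
    to one prompt, ordered as the labeler ranked them (best first).
    """
    pairs = list(combinations(scores_best_first, 2))
    if not pairs:
        raise ValueError("need at least two ranked responses")
    # -log(sigmoid(d)) equals softplus(-d), with d = better - worse.
    return sum(softplus(worse - better) for better, worse in pairs) / len(pairs)


# Scores that agree with the human ranking should give a lower loss
# than scores that contradict it.
agree = pairwise_ranking_loss([2.0, 1.0, 0.0])
disagree = pairwise_ranking_loss([0.0, 1.0, 2.0])
```

Minimizing a loss of this shape pushes the reward model to score higher-ranked responses above lower-ranked ones, which is what the third step relies on when it uses the reward as feedback.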

4. Data organization and effect evaluation

Before training the model, we need to prepare the data sets to be used. This raises a data cold-start problem, which can be addressed in three ways:

(1) Collect the data sets used by users of the old system

(2) Have annotators write similar prompts and outputs based on questions previously entered by real users

(3) Have annotators think up some prompts of their own

The training data for the ChatGPT model consists of three data sets (77k real examples in total):

(1) Supervised learning data based on real user prompts, consisting of user prompts and model responses; the data volume is 13k.

(2) The data set used to train the reward model, consisting of rankings over multiple responses to a single prompt; the data volume is 33k.

(3) The data set used for reinforcement learning against the reward model. It only requires user prompts; the data volume is 31k, and the quality requirements are high.
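As a rough illustration of how the three data sets differ in shape, the sketch below models one record of each kind and checks that the stated sizes add up to 77k. The class and field names are hypothetical; only the sizes and the described contents come from the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SupervisedExample:
    """Stage 1: a user prompt paired with a reference response."""
    prompt: str
    response: str


@dataclass
class RankingExample:
    """Stage 2: several responses to one prompt, ranked best first."""
    prompt: str
    responses_best_first: List[str]


@dataclass
class RLPrompt:
    """Stage 3: a prompt only; the reward model scores what is generated."""
    prompt: str


# Data volumes as stated in the text.
DATASET_SIZES = {
    "supervised": 13_000,
    "reward_model": 33_000,
    "reinforcement": 31_000,
}
total_examples = sum(DATASET_SIZES.values())  # 77_000

sample = RankingExample(
    prompt="Explain what a reward model is.",
    responses_best_first=["A clear answer.", "A vague answer."],
)
```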

After training, the ChatGPT model was evaluated fairly thoroughly, mainly on the following aspects:

(1) Whether the results generated by the model meet the user's intention

(2) Whether the generated results can satisfy the constraints mentioned by the user

(3) Whether the model can have good results in the field of customer service

Detailed experimental results comparing it with the base GPT model are shown in the figure below.

2. Localization of ChatGPT technology

The following introduces our localization of ChatGPT technology in three parts: background and problems, solution ideas, and effects and practice.

1. Background and issues

We chose to localize for the following main reasons:

(1) ChatGPT technology itself is relatively advanced and works well on many tasks, but it does not provide services to mainland China.

(2) It may not meet the needs of domestic enterprise-level customers and cannot provide localized technical support and services.

(3) Pricing is in US dollars, with Europe and the United States as the main markets. It is relatively expensive, and most domestic users may not be able to afford it. In our tests, each piece of data cost about 0.5 yuan, which makes commercial use impractical for customers with large amounts of data.

Due to the above three problems, we tried to localize ChatGPT technology.

2. Solution ideas

In localizing ChatGPT technology, we adopted a step-by-step strategy.

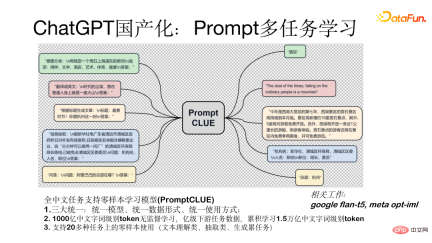

First, we trained a Chinese pre-trained model with tens of billions of parameters. Second, we performed supervised task learning with prompts on billion-level task data. Next, we made the model conversational, that is, able to interact with people through dialogue or human-computer interaction. Finally, we introduced RLHF, reinforcement learning with a reward model and user feedback.

The prompt multi-task learning model (PromptCLUE) is a model that supports zero-shot learning on all Chinese tasks. It achieves three unifications: a unified model, a unified data form (all tasks are converted into prompt form), and a unified usage method (zero-shot). The model was pre-trained without supervision on 100 billion Chinese word-level tokens, has cumulatively learned 1.5 trillion Chinese word-level tokens, and was trained on billion-level downstream task data. It supports zero-shot use on more than 20 tasks, covering text understanding, extraction, and generation.
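The "unified data form" idea can be sketched as a function that turns different task types into a single prompt string. The templates below are hypothetical and written in English for readability; the actual PromptCLUE templates are not given in the talk.

```python
# Hypothetical templates: every task type becomes one text-to-text prompt.
TEMPLATES = {
    "classification": "Classify the text into one of: {labels}\nText: {text}\nAnswer:",
    "extraction": "Extract the {target} from the text.\nText: {text}\nAnswer:",
    "generation": "Write a {kind} about the following topic.\nTopic: {text}\nAnswer:",
}


def to_prompt(task: str, text: str, **fields: str) -> str:
    """Render one example of any supported task as a prompt string."""
    try:
        template = TEMPLATES[task]
    except KeyError:
        raise ValueError(f"unknown task type: {task!r}") from None
    return template.format(text=text, **fields)


sentiment_prompt = to_prompt(
    "classification",
    "The battery lasts two days.",
    labels="positive, negative",
)
title_prompt = to_prompt("generation", "spring in Hangzhou", kind="short poem")
```

Because every task ends in the same "Answer:" slot, one model with one decoding routine can serve all of them, which is the point of the unified usage method.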

To make the model conversational, that is, to convert it into a model for human-computer interaction, we mainly did the following work:

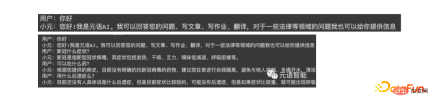

First, to improve generation quality, we removed the text understanding and extraction tasks, which strengthens learning on question answering, dialogue, and generation tasks. Second, once the model becomes a dialogue model, its output can be disturbed by the context. To address this, we added anti-interference data so that the model can ignore irrelevant context when necessary. Finally, we added a learning process based on real user feedback data so that the model better understands the user's intentions. The figure below shows single-round and multi-round tests with the model.
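The talk does not describe how the anti-interference data was built. One plausible construction, assumed here purely for illustration, is to prepend unrelated dialogue turns to an existing example while leaving the expected output unchanged, so the model learns that the extra context should not affect the answer. The function name, speaker labels, and sample texts are all hypothetical.

```python
import random


def add_interference(example: dict, unrelated_turns: list, rng: random.Random) -> dict:
    """Prepend unrelated dialogue turns to the input; keep the target output."""
    if not unrelated_turns:
        raise ValueError("need at least one unrelated turn")
    count = rng.randint(1, len(unrelated_turns))
    context = rng.sample(unrelated_turns, count)
    noisy_input = "\n".join(context + [example["input"]])
    return {"input": noisy_input, "output": example["output"]}


clean = {"input": "User: What is the capital of France?", "output": "Paris."}
unrelated = [
    "User: Recommend a noodle recipe.",
    "Assistant: Try scallion oil noodles.",
]
noisy = add_interference(clean, unrelated, random.Random(0))
```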

3. Effect and practice

The following are tests of the model. Compared with the ChatGPT model, there is currently still a gap of 1 to 2 years, but this gap can be closed gradually. We have made some useful attempts and achieved certain results: the model can currently handle dialogue, Q&A, writing, and other interactions. The image below shows the test results.

3. Domestic open source large model

1. Chinese open source model

The metalanguage functional dialogue model (ChatYuan) that we recently released has 770 million parameters; the online version is a model with 10 billion parameters. It is available on multiple platforms, including Huggingface, ModelScope, Github, and paddlepaddle. The model can be downloaded locally and fine-tuned on your own user data set. It was further trained from PromptCLUE-large on hundreds of millions of functional and multi-round dialogue examples.

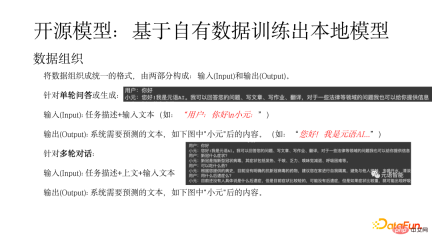

The Huggingface platform is taken as an example to show how to use the model locally. Search for ChatYuan on the platform, load the model, and wrap it in a simple helper. Some parameters matter in use, such as whether to sample: if you need varied outputs, enable sampling.

2. Training local models based on own data

First, the data needs to be organized into a unified form consisting of two parts, input and output. For single-round question answering or generation, the input is the task description plus the input text (for example: "User: Hello\nXiaoyuan:"), and the output is the text the system needs to predict (for example: "Hello! I am Metalanguage AI..."). For multi-round dialogue, the input is the task description plus the preceding dialogue plus the input text, and the output is the text the system needs to predict, that is, the content after "Xiaoyuan", as shown in the figure below.

The following figure shows an example of training a local model on your own data. The example covers the entire process: data preparation, downloading and converting open source data, and model training, prediction, and evaluation. It is based on the pCLUE multi-task dataset. Users can train on their own data, or train on pCLUE first to test the effect.

3. Possible problems, gaps and how to further improve the effect

ChatYuan and ChatGPT are both general-purpose functional dialogue models, capable of question answering, interaction, and generation in casual chat or in professional fields such as law and medicine. Compared with the ChatGPT model, there is still a certain gap.

When using the model, you may run into problems with generation quality and text length. These depend on whether the data format is correct, whether sampling is enabled during generation, and the max_length setting that controls the length of the output, among other factors.

To further improve the model effect, you can start from the following aspects:

(1) Combine industry data for further training, including unsupervised pre-training, and use a large amount of high-quality data for supervised learning.

(2) Learn from real user feedback data, which can compensate for distribution differences.

(3) Introduce reinforcement learning to align with user intentions.

(4) Choose a larger model. Generally speaking, the larger the model, the stronger its capability.

The new technologies and usage scenarios brought by ChatGPT have shown people the huge potential of AI. More applications will be upgraded, and some new applications will become possible. Yuanyu Intelligence, as a Model-as-a-Service provider for large models, is also constantly exploring this field. Interested partners are welcome to follow our website and official account. That's it for today's sharing, thank you all.
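To make the input format from "Training local models based on own data" concrete, here is a minimal sketch that builds single-round and multi-round inputs and collects the generation settings mentioned above. The speaker labels follow the English example in the text; the helper names and the default values are assumptions. The keys `do_sample`, `max_length`, and `top_p` correspond to keyword arguments of `generate()` in the Hugging Face transformers library; loading the model itself is not shown.

```python
from typing import Dict, List, Tuple

USER_PREFIX = "User: "    # speaker labels taken from the example in the text
BOT_PREFIX = "Xiaoyuan:"


def build_input(history: List[Tuple[str, str]], query: str) -> str:
    """Join earlier (user, bot) turns and the new query into one input string."""
    lines = []
    for user_turn, bot_turn in history:
        lines.append(USER_PREFIX + user_turn)
        lines.append(BOT_PREFIX + " " + bot_turn)
    lines.append(USER_PREFIX + query)
    lines.append(BOT_PREFIX)  # the model is expected to continue from here
    return "\n".join(lines)


def generation_settings(diverse: bool, max_length: int = 128) -> Dict[str, object]:
    """Settings to pass to generation; sampling is enabled for varied outputs."""
    settings: Dict[str, object] = {"do_sample": diverse, "max_length": max_length}
    if diverse:
        settings["top_p"] = 0.9  # illustrative value, not from the talk
    return settings


single = build_input([], "Hello")
multi = build_input([("Hello", "Hello! I am Metalanguage AI...")], "What can you do?")
```

If outputs are cut off or repetitive, checking the string produced by a helper like `build_input` and the `max_length` and sampling settings is a reasonable first step, since the text names these as the usual causes.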
