Breaking NAS bottlenecks, new method AIO-P predicts architecture performance across tasks

Huawei HiSilicon Canada Research Institute and the University of Alberta jointly launched a neural network performance prediction framework based on pre-training and knowledge injection.

Evaluating a neural network's performance (precision, recall, PSNR, etc.) requires substantial resources and time, and it is the main bottleneck of neural architecture search (NAS). Early NAS methods had to train every newly searched architecture from scratch, at great cost. In recent years, network performance predictors have attracted growing attention as an efficient alternative for performance evaluation.

However, current predictors are limited in scope: they can only model network structures from a specific search space and can only predict the performance of new structures on a specific task. For example, if the training samples contain only classification networks and their accuracies, the trained predictor can only evaluate new network structures on image classification tasks.

To break this boundary and let a predictor estimate the performance of a network structure on multiple tasks, with cross-task and cross-data generalization, Huawei HiSilicon Canada Research Institute and the University of Alberta jointly introduced a neural network performance prediction framework based on pre-training and knowledge injection. For neural architecture search, the framework can quickly evaluate networks of different structures and types on many kinds of CV tasks, such as classification, detection, and segmentation. The paper has been accepted at AAAI 2023.

- Paper link: https://arxiv.org/abs/2211.17228

- Code link: https://github.com/Ascend-Research/AIO-P

The AIO-P (All-in-One Predictors) approach aims to extend the scope of neural predictors to computer vision tasks beyond classification. AIO-P uses K-Adapter technology to inject task-related knowledge into the predictor model, and it designs a label scaling mechanism based on FLOPs (floating-point operations) to adapt to different performance metrics and distributions. AIO-P trains its K-Adapters with a pseudo-labeling scheme that generates new training samples in just minutes. Experimental results show that AIO-P has strong performance prediction capabilities and achieves excellent MAE and SRCC results on several computer vision tasks. In addition, AIO-P can transfer directly to never-before-seen network structures and predict their performance, and it can work with NAS to reduce the computational cost of existing networks without degrading performance.

Method Introduction

AIO-P is a general network performance predictor that generalizes to multiple tasks. It achieves performance prediction across tasks and search spaces through predictor pre-training and domain-specific knowledge injection. AIO-P uses K-Adapter technology to inject task-related knowledge into the predictor and relies on a common computational graph (CG) format to represent network structures, which ultimately lets it support networks from different search spaces and tasks, as shown in Figure 1 below.

Figure 1. How AIO-P represents the network structure used for different tasks

In addition, AIO-P's pseudo-labeling mechanism can quickly generate new training samples for training the K-Adapters. To bridge the gap between the ranges of performance metrics on different tasks, AIO-P proposes a FLOPs-based label scaling method that enables cross-task performance modeling. Extensive experiments show that AIO-P makes accurate performance predictions on a variety of CV tasks, such as pose estimation and segmentation, with no training samples from the target task or with only a small amount of fine-tuning. AIO-P can also correctly rank the performance of never-before-seen network structures and, combined with a search algorithm, was used to optimize Huawei's facial recognition network, keeping its performance unchanged while reducing FLOPs by more than 13.5%. The paper has been accepted at AAAI-23 and the code has been open-sourced on GitHub.

Computer vision networks usually consist of a "backbone" that performs feature extraction and a "head" that uses the extracted features to make predictions. The backbone is usually based on a known network structure (ResNet, Inception, MobileNet, ViT, UNet), while the head is designed for a given task, such as classification, pose estimation, or segmentation. Traditional NAS solutions manually customize the search space based on the structure of the backbone. For example, if the backbone is MobileNetV3, the search space may include the number of MBConv blocks, the parameters of each MBConv (kernel size, expansion), the number of channels, and so on. However, such a customized search space is not universal. A backbone based on ResNet cannot be optimized with the same NAS framework; the search space has to be redesigned.

To solve this problem, AIO-P represents different network structures at the computational graph level, which gives a unified representation for any network structure. As shown in Figure 2, the computational graph format allows AIO-P to encode the head and backbone together to represent the entire network structure. This also allows AIO-P to predict the performance of networks from different search spaces (such as MobileNets and ResNets) on various tasks.

Figure 2. Representation of the Squeeze-and-Excite module in MobileNetV3 at the computational graph level
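As a rough illustration of what a graph-level representation looks like, the sketch below writes a Squeeze-and-Excite block as a small graph of primitive operations. The `ComputeGraph` class, the node names, and the choice of 1x1 convolutions for the two projection layers are assumptions made for this example; the article does not describe AIO-P's actual node or edge features.

```python
from dataclasses import dataclass, field


@dataclass
class ComputeGraph:
    """Toy computational graph: nodes are primitive ops, edges are data flow."""
    ops: dict = field(default_factory=dict)    # node id -> operation name
    edges: list = field(default_factory=list)  # (source id, destination id)

    def add_op(self, node_id, op_name, inputs=()):
        # Register the node and one incoming edge per input.
        self.ops[node_id] = op_name
        for src in inputs:
            self.edges.append((src, node_id))
        return node_id


def squeeze_excite_graph():
    """Squeeze-and-Excite block expressed as primitive operations (hypothetical encoding)."""
    g = ComputeGraph()
    x = g.add_op("x", "input")
    pool = g.add_op("pool", "global_avg_pool", [x])   # squeeze
    fc1 = g.add_op("fc1", "conv1x1", [pool])          # reduce channels
    act = g.add_op("relu", "relu", [fc1])
    fc2 = g.add_op("fc2", "conv1x1", [act])           # restore channels
    gate = g.add_op("gate", "hard_sigmoid", [fc2])    # excite
    g.add_op("scale", "multiply", [x, gate])          # rescale the input features
    return g


se = squeeze_excite_graph()
print(len(se.ops), "nodes,", len(se.edges), "edges")
```

Because the head and the backbone are both just nodes and edges in such a graph, the same encoding can in principle cover networks from different search spaces.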

The predictor structure proposed in AIO-P starts from a single GNN regression model (Figure 3, green block) that predicts the performance of image classification networks. To add knowledge of other CV tasks, such as detection or segmentation, the study attaches a K-Adapter (Figure 3, orange block) to the original regression model. The K-Adapter is trained on samples from the new task while the original model's weights stay frozen. The study can therefore train multiple K-Adapters separately (Figure 4) to add knowledge from multiple tasks.

Figure 3. AIO-P predictor with a K-Adapter

Figure 4. AIO-P predictor with multiple K-Adapters
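The frozen-base-plus-adapter idea can be sketched in a few lines of plain Python. Everything here is a simplification chosen for illustration: the base model is a linear stand-in rather than a GNN, the adapter adds a correction to the base output (how AIO-P actually combines the two is not stated in the article), and the feature vectors and targets are made up.

```python
class FrozenBase:
    """Stand-in for the pre-trained classification predictor (never updated)."""
    def __init__(self, weights):
        self.weights = tuple(weights)  # tuple: immutable, i.e. frozen

    def embed(self, features):
        # One hidden value per weight; a real predictor would be a GNN over the graph.
        return [w * f for w, f in zip(self.weights, features)]

    def predict(self, features):
        return sum(self.embed(features))


class Adapter:
    """Small trainable module that reads the frozen base's hidden values."""
    def __init__(self, size):
        self.weights = [0.0] * size

    def correction(self, hidden):
        return sum(w * h for w, h in zip(self.weights, hidden))

    def train_step(self, base, features, target, lr=0.5):
        hidden = base.embed(features)
        error = base.predict(features) + self.correction(hidden) - target
        # Gradient of 0.5 * error**2 with respect to the adapter weights only.
        self.weights = [w - lr * error * h for w, h in zip(self.weights, hidden)]
        return error


def predict(base, adapter, features):
    return base.predict(features) + adapter.correction(base.embed(features))


base = FrozenBase([0.5, -0.2, 0.1])
adapters = {"detection": Adapter(3), "segmentation": Adapter(3)}  # one per task

# Hypothetical (features, target) samples for the detection task.
samples = [([1.0, 2.0, 3.0], 0.9), ([2.0, 1.0, 0.5], 1.4)]
for _ in range(300):
    for features, target in samples:
        adapters["detection"].train_step(base, features, target)

print(round(predict(base, adapters["detection"], samples[0][0]), 3))
```

Training touches only the detection adapter: the base weights and the segmentation adapter are left exactly as they were, which is what makes it possible to add tasks one at a time.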

To further reduce the cost of training each K-Adapter, the study proposes a pseudo-labeling technique. It uses a latent sampling scheme to train a "head" model that can be shared between different tasks. The shared head can then be paired with any network backbone in the search space and fine-tuned to generate a pseudo-label in 10-15 minutes (Figure 5).

Figure 5. Training a "head" model that can be shared between different tasks

Experiments show that the pseudo-labels obtained with shared heads are positively correlated with the actual performance obtained by training a network from scratch for a day or more, sometimes with a Spearman rank correlation coefficient exceeding 0.5.
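For readers unfamiliar with the metric, the Spearman rank correlation is the Pearson correlation computed on ranks rather than raw values. The sketch below is a dependency-free version; the pseudo-label and true-score values are invented for the example and are not results from the paper.

```python
def ranks(values):
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        average = (start + end) / 2 + 1
        for k in range(start, end + 1):
            result[order[k]] = average
        start = end + 1
    return result


def pearson(xs, ys):
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def spearman(xs, ys):
    """Spearman rank correlation = Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))


# Hypothetical values for five backbones (not results from the paper).
pseudo_labels = [0.61, 0.58, 0.70, 0.66, 0.52]
true_scores = [0.72, 0.74, 0.80, 0.77, 0.69]
print(round(spearman(pseudo_labels, true_scores), 2))  # prints 0.9
```

A high value means the cheap pseudo-labels order the backbones almost the same way as full training does, even if the absolute scores differ.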

In addition, different tasks use different performance metrics, and each metric usually has its own characteristic range. For example, a classification network with a given backbone may reach about 75% accuracy on ImageNet, while its mAP on the MS-COCO object detection task may be 30-35%. To account for these different ranges, the study interprets network performance relative to a normal distribution, following the idea of standardization. In layman's terms, a predicted value of 0 means the network's performance is average, and a value greater than 0 means it is better than average.

Figure 6. How to normalize network performance
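A minimal sketch of this kind of standardization is shown below, using z-scores computed per task. The raw metric values are hypothetical, and the use of the population standard deviation is an assumption; the article does not say which estimator the authors use.

```python
from statistics import mean, pstdev


def standardize(scores):
    """Map raw scores to z-scores: 0 is average for the task, > 0 is better."""
    mu, sigma = mean(scores), pstdev(scores)
    return [(s - mu) / sigma for s in scores]


# Hypothetical raw metrics for the same three backbones on two tasks.
imagenet_top1 = [74.0, 75.0, 76.0]  # classification accuracy, %
coco_map = [30.0, 32.5, 35.0]       # detection mAP, %

print(standardize(imagenet_top1))
print(standardize(coco_map))
```

After standardization the two tasks live on the same scale, so a single predictor can be trained on labels from both even though the raw metrics sit in very different ranges.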

The FLOPs of a network depend on the model size and the input data, and they generally trend positively with performance. The study uses FLOPs-based transformations to enhance the labels that AIO-P learns from.
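The article does not give the exact transformation, so the sketch below is purely illustrative: it divides each raw score by the logarithm of the network's FLOPs before standardizing. The function name `flops_scaled_labels`, the `log10(FLOPs + 1)` divisor, and all numbers are assumptions for this example and should not be read as the paper's formula.

```python
import math
from statistics import mean, pstdev


def flops_scaled_labels(scores, flops):
    """Divide each score by log10(FLOPs + 1), then standardize (illustrative only)."""
    scaled = [s / math.log10(f + 1) for s, f in zip(scores, flops)]
    mu, sigma = mean(scaled), pstdev(scaled)
    return [(v - mu) / sigma for v in scaled]


# Hypothetical accuracies (%) and FLOPs for three networks.
scores = [72.0, 75.0, 76.0]
flops = [2.0e8, 4.0e8, 1.2e9]

labels = flops_scaled_labels(scores, flops)
print([round(v, 3) for v in labels])
```

The intent of a transformation like this is to express performance relative to compute, so that a network that reaches a given score with fewer FLOPs receives a higher label.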

Experiments and Results

The study first trained AIO-P on human pose estimation and object detection, and then used it to predict the performance of network structures on multiple tasks, including pose estimation (LSP and MPII), object detection (OD), instance segmentation (IS), semantic segmentation (SS), and panoptic segmentation (PS). Even in the zero-shot direct transfer setting, AIO-P's predictions for networks from the Once-for-All (OFA) search spaces (ProxylessNAS, MobileNetV3, and ResNet-50) on these tasks achieved an MAE below 1.0% and a rank correlation above 0.5.
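The MAE figure quoted above is the mean absolute difference between predicted and measured performance. A short sketch with made-up numbers (in percentage points, not taken from the paper):

```python
def mean_absolute_error(predicted, actual):
    """Average absolute gap between predictions and ground truth."""
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


# Hypothetical predicted vs. measured scores for three networks, in %.
predicted = [71.2, 68.9, 73.5]
actual = [70.8, 69.5, 73.0]
print(round(mean_absolute_error(predicted, actual), 2))  # prints 0.5
```

MAE measures how close the predicted values are, while rank correlation measures whether the networks are ordered correctly; a predictor used for search needs the second at least as much as the first.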

In addition, the study used AIO-P to predict the performance of networks in the TensorFlow-Slim open-source model library (such as DeepLab semantic segmentation models, ResNets, Inception nets, MobileNets, and EfficientNets). These network structures may not have appeared in AIO-P's training samples.

AIO-P achieves almost perfect SRCC on 3 DeepLab semantic segmentation model libraries, obtains positive SRCC on all 4 classification model libraries, and reaches SRCC = 1.0 on the EfficientNet models by using the FLOPs transformation.

Finally, the core motivation of AIO-P is to pair it with a search algorithm and use it to optimize arbitrary network structures: standalone structures that do not belong to any search space or known model library, or even structures for a task the predictor was never trained on. The study uses AIO-P with a random mutation search algorithm to optimize the face recognition (FR) model used on Huawei mobile phones. The results show that AIO-P can reduce the model's FLOPs by more than 13.5% while maintaining performance (precision (Pr) and recall (Rc)).
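A predictor-guided random mutation search can be sketched as a simple loop: mutate the current network, and keep the mutation only if the predicted performance does not fall below the baseline and the cost goes down. The architecture encoding (a tuple of layer widths), `toy_flops`, and `toy_predictor` below are all hypothetical stand-ins; a real setup would query a trained predictor and count actual FLOPs.

```python
import random

WIDTHS = [16, 24, 32, 48, 64]  # hypothetical per-layer channel choices


def mutate(arch, rng):
    """Move one randomly chosen layer to a neighbouring width choice."""
    child = list(arch)
    i = rng.randrange(len(child))
    pos = WIDTHS.index(child[i]) + rng.choice([-1, 1])
    child[i] = WIDTHS[min(len(WIDTHS) - 1, max(0, pos))]
    return tuple(child)


def toy_flops(arch):
    """Toy cost model: cost grows with the product of adjacent layer widths."""
    return sum(a * b for a, b in zip(arch, arch[1:]))


def toy_predictor(arch):
    """Placeholder for a trained predictor: widths above 48 add nothing."""
    return sum(min(w, 48) for w in arch)


def search(baseline, predictor, cost, steps=200, seed=0):
    """Keep a mutation only if predicted performance holds and cost drops."""
    rng = random.Random(seed)
    floor = predictor(baseline)
    best = baseline
    for _ in range(steps):
        child = mutate(best, rng)
        if predictor(child) >= floor and cost(child) < cost(best):
            best = child
    return best


baseline = (64, 64, 64, 64)
best = search(baseline, toy_predictor, toy_flops)
print(best, toy_flops(baseline), "->", toy_flops(best))
```

Since every candidate is scored by the predictor instead of being trained, each step of the loop costs only a forward pass, which is what makes this kind of search practical.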

Interested readers can read the original text of the paper to learn more research details.

The above is the detailed content of Breaking NAS bottlenecks, new method AIO-P predicts architecture performance across tasks. For more information, please follow other related articles on the PHP Chinese website!

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

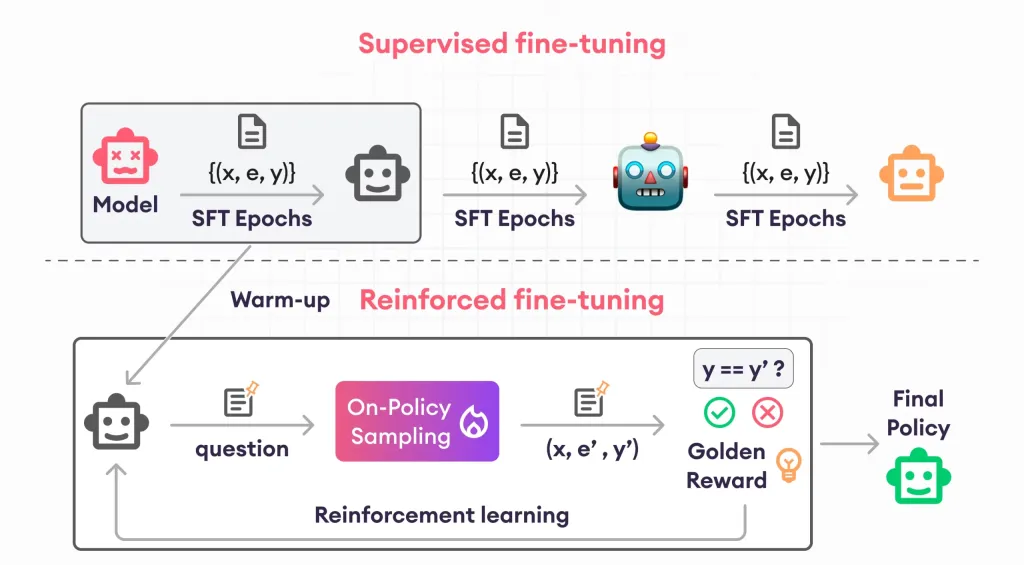

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

SublimeText3 Chinese version

Chinese version, very easy to use

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SublimeText3 Linux new version

SublimeText3 Linux latest version