How to install Hadoop on Linux

- 藏色散人Original

- 2021-12-17 17:03:56

How to install Hadoop on Linux: 1. Install the ssh service; 2. Set up passwordless ssh login; 3. Download the Hadoop installation package; 4. Unzip the Hadoop installation package; 5. Configure the corresponding Hadoop files.

Operating environment for this article: Ubuntu 16.04, Hadoop 2.7.1, Dell G3 computer.

How to install Hadoop on Linux?

[Big Data] Detailed explanation of installing Hadoop (2.7.1) and running WordCount under Linux

1. Introduction

After finishing the Storm environment setup, I wanted to try installing Hadoop. There are many tutorials online, but none of them fit my situation particularly well, so I still ran into plenty of trouble during the installation. After repeatedly consulting references I finally solved the problems, which felt quite good. Without further ado, let's get to the point.

The configuration environment of this machine is as follows:

Hadoop(2.7.1)

Ubuntu Linux (64-bit system)

The configuration process is explained in detail in the steps below.

2. Install ssh service

Open a shell and check whether the ssh service is already installed. If it is not, install it with the following command:

sudo apt-get install ssh openssh-server

The installation process is relatively easy and enjoyable.
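Whether the server is already present can be checked before running the install command. The following is only a minimal sketch, assuming the server binary is called sshd and is on the PATH; the helper name have_cmd is made up for illustration.

```shell
# have_cmd NAME: succeed if NAME resolves to a command on the current PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

if have_cmd sshd; then
    echo "ssh server already installed"
else
    # Assumption: an apt-based system such as the Ubuntu used in this article.
    echo "ssh server not found; install it with: sudo apt-get install ssh openssh-server"
fi
```

The snippet only reports what it finds, so it is safe to run repeatedly.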

3. Use ssh for passwordless authentication login

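1. Create an ssh key. Here we use the RSA type with an empty passphrase, using the following command:

ssh-keygen -t rsa -P ""

2. A piece of ASCII art (the key's randomart image) is printed. It is not a password and can be ignored. Then append the public key to authorized_keys (the author notes that this step can apparently be skipped, although passwordless login normally depends on it):

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys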

3. Then you can log in without password verification, as follows:

ssh localhost

If the login succeeds without a password prompt, this step is complete.
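Running the append step more than once adds the same key again each time. A small sketch of an idempotent variant follows; the function name authorize_key is hypothetical, and the permission setting assumes sshd's usual strictness about a writable authorized_keys file.

```shell
# authorize_key PUB_FILE AUTH_FILE: append the public key in PUB_FILE to
# AUTH_FILE only if that exact line is not already present.
authorize_key() {
    pub_file=$1
    auth_file=$2
    key=$(cat "$pub_file") || return 1
    touch "$auth_file" || return 1
    # -q quiet, -x match whole line, -F treat the key as a fixed string
    if ! grep -qxF -- "$key" "$auth_file"; then
        printf '%s\n' "$key" >> "$auth_file"
    fi
    # Only the owner should be able to read or write the file.
    chmod 600 "$auth_file"
}

# Example call (paths as used in this article):
# authorize_key ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
```

After this, ssh localhost should behave the same as with the plain cat command, just without duplicate entries.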

4. Download the Hadoop installation package

There are two ways to download the Hadoop installation package:

1. Download it directly from the website: http://mirrors.hust.edu.cn/apache/hadoop/core/stable/hadoop-2.7.1.tar.gz

2. Download it from the shell with the following command:

wget http://mirrors.hust.edu.cn/apache/hadoop/core/stable/hadoop-2.7.1.tar.gz

It seems that the second method is faster. After a long wait, the download is finally completed.

5. Decompress the Hadoop installation package

Use the following command to decompress the Hadoop installation package:

tar -zxvf hadoop-2.7.1.tar.gz

After decompression is complete, the hadoop-2.7.1 folder appears.
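If you prefer to unpack into a specific directory instead of the current one, tar's -C option can be used. This is just a sketch; the helper name extract_to and the target path in the example are assumptions, not part of the original setup.

```shell
# extract_to TARBALL DEST: unpack a gzip-compressed tarball into DEST,
# creating DEST first if it does not exist.
extract_to() {
    mkdir -p "$2" && tar -xzf "$1" -C "$2"
}

# Example (the target directory is an assumption):
# extract_to hadoop-2.7.1.tar.gz "$HOME/program"
# ls "$HOME/program/hadoop-2.7.1/etc/hadoop"
```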

6. Configure the corresponding files in Hadoop

The files that need to be configured are hadoop-env.sh, core-site.xml, mapred-site.xml.template and hdfs-site.xml. All of them are located under hadoop-2.7.1/etc/hadoop. The required configuration is as follows:

1. core-site.xml is configured as follows:

<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:/home/leesf/program/hadoop/tmp</value>
    <description>A base for other temporary directories.</description>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>

The path of hadoop.tmp.dir can be set according to your own habits.
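Since the temporary directory is a matter of personal choice, the file can also be generated from a variable. The sketch below is one possible way to do it; write_core_site is a made-up helper, and note that it overwrites the target file, so back up the shipped core-site.xml first.

```shell
# write_core_site TMP_DIR OUT_FILE: create TMP_DIR and write a core-site.xml
# to OUT_FILE that points hadoop.tmp.dir at it. OUT_FILE is overwritten.
write_core_site() {
    mkdir -p "$1" || return 1
    cat > "$2" <<EOF
<configuration>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>file:$1</value>
  </property>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
EOF
}

# Example (paths follow this article's layout):
# write_core_site /home/leesf/program/hadoop/tmp etc/hadoop/core-site.xml
```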

2. mapred-site.xml.template is configured as follows:

<configuration>
  <property>
    <name>mapred.job.tracker</name>
    <value>localhost:9001</value>
  </property>
</configuration>
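As far as I know, Hadoop 2.x reads mapred-site.xml rather than the .template file, so the edited template may need to be copied before its settings take effect. A small sketch, with a hypothetical helper name, that copies it without clobbering an existing file:

```shell
# activate_mapred_site CONF_DIR: copy mapred-site.xml.template to
# mapred-site.xml unless that file already exists (it is then left untouched).
activate_mapred_site() {
    if [ ! -f "$1/mapred-site.xml" ]; then
        cp "$1/mapred-site.xml.template" "$1/mapred-site.xml"
    fi
}

# Example, run from the hadoop-2.7.1 directory:
# activate_mapred_site etc/hadoop
```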

3. hdfs-site.xml is configured as follows:

<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/home/leesf/program/hadoop/tmp/dfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/home/leesf/program/hadoop/tmp/dfs/data</value>
  </property>
</configuration>

The paths for dfs.namenode.name.dir and dfs.datanode.data.dir can be set freely, preferably under the hadoop.tmp.dir directory.

In addition, if Hadoop reports that it cannot find the JDK when it runs, you can put the JDK path directly in hadoop-env.sh, as follows:

export JAVA_HOME="/home/leesf/program/java/jdk1.8.0_60"
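Before editing hadoop-env.sh it can help to confirm that the path really contains a Java executable. A minimal sketch; the function name valid_java_home is an assumption for illustration:

```shell
# valid_java_home DIR: succeed if DIR is non-empty and contains an
# executable bin/java.
valid_java_home() {
    [ -n "$1" ] && [ -x "$1/bin/java" ]
}

if valid_java_home "${JAVA_HOME:-}"; then
    echo "JAVA_HOME looks usable: $JAVA_HOME"
else
    echo "JAVA_HOME is unset or has no bin/java; set it in hadoop-env.sh"
fi
```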

7. Run Hadoop

After the configuration is completed, run hadoop.

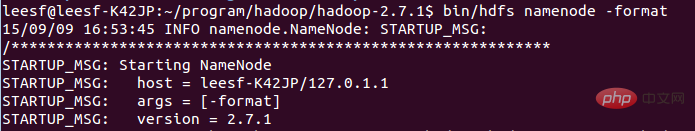

1. Initialize the HDFS system

Use the following command in the hadoop-2.7.1 directory:

bin/hdfs namenode -format

The screenshot is as follows:

The process asks for ssh authentication. Since you have already logged in over ssh before, just type y when prompted during initialization.

A screenshot of a successful run follows; it indicates that the initialization has completed:

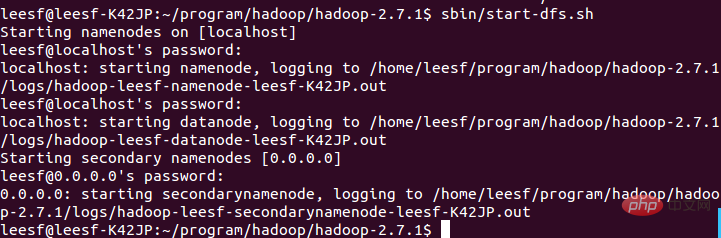
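Formatting again later would wipe the existing HDFS metadata, so it may be worth checking first whether the name directory has already been formatted. The sketch below relies on the current/VERSION file that formatting creates; the helper name is made up, and name_dir is assumed to be the dfs.namenode.name.dir path from hdfs-site.xml without the file: prefix.

```shell
# namenode_formatted NAME_DIR: succeed if NAME_DIR already holds a formatted
# NameNode image (formatting creates NAME_DIR/current/VERSION).
namenode_formatted() {
    [ -f "$1/current/VERSION" ]
}

# Assumption: same path as dfs.namenode.name.dir in hdfs-site.xml above.
name_dir=/home/leesf/program/hadoop/tmp/dfs/name

if namenode_formatted "$name_dir"; then
    echo "NameNode already formatted; skipping format"
else
    echo "not formatted yet; run: bin/hdfs namenode -format"
fi
```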

2. Start the NameNode and DataNode daemons

Use the following command to start:

sbin/start-dfs.sh

The screenshot of a successful start is as follows:

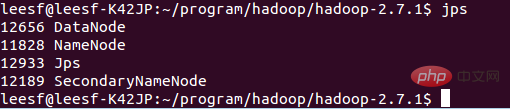

3. View process information

Use the following command to view process information

jps

The screenshot is as follows:

Indicates that both DataNode and NameNode have been turned on

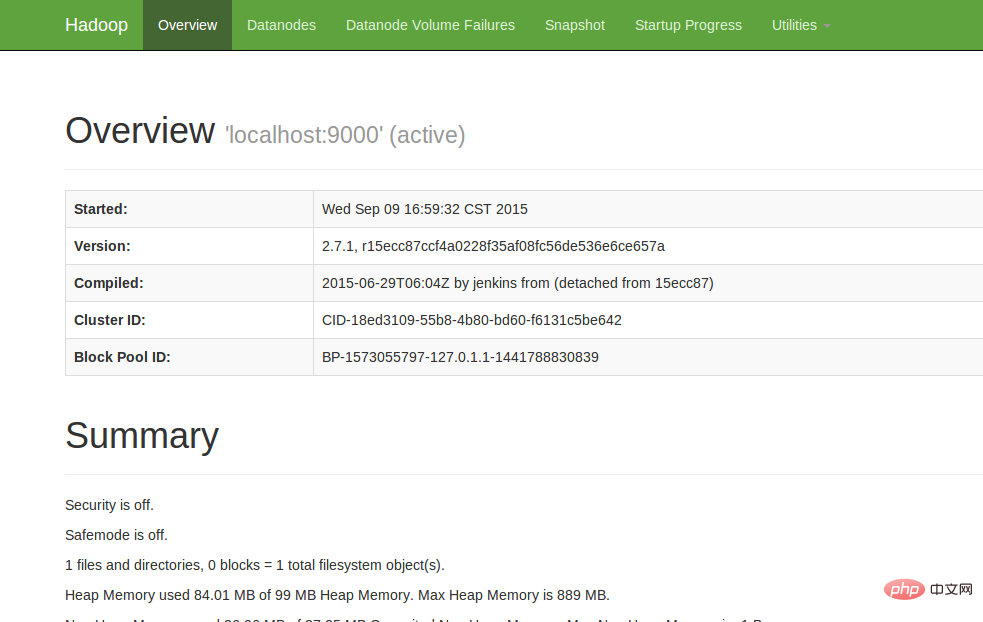
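The same check can be scripted instead of read off the screen. This is a rough sketch with a hypothetical helper, has_daemon, which reads jps output on standard input and compares the second column exactly, so that SecondaryNameNode is not mistaken for NameNode.

```shell
# has_daemon NAME: succeed if the jps listing on stdin contains a process
# whose class name (second column) is exactly NAME.
has_daemon() {
    awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Example, once the daemons have been started:
# jps | has_daemon NameNode && echo "NameNode is up"
# jps | has_daemon DataNode && echo "DataNode is up"
```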

4. View Web UI

Enter http://localhost:50070 in the browser to view relevant information. The screenshot is as follows:

At this point, the hadoop environment has been set up. Let's start using hadoop to run a WordCount example.

8. Run WordCount Demo

1. Create a new file locally. The author created a file named words in the /home/leesf directory; you can fill it with any content you like.

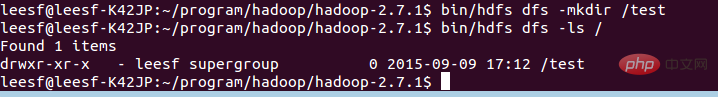

2. Create a new folder in HDFS to hold the uploaded local words file. Enter the following command in the hadoop-2.7.1 directory:

bin/hdfs dfs -mkdir /test

This creates a test directory under the root directory of HDFS.

Use the following command to view the directory structure under the root directory of HDFS:

bin/hdfs dfs -ls /

The screenshot is as follows:

It shows that a test directory has been created under the root directory of HDFS.

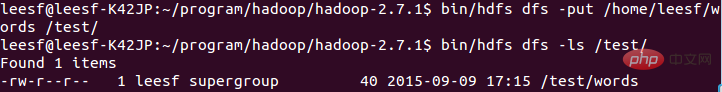

3. Upload the local words file to the test directory. Use the following command to upload:

bin/hdfs dfs -put /home/leesf/words /test/

Use the following command to view the result:

bin/hdfs dfs -ls /test/

The screenshot of the result is as follows:

It shows that the local words file has been uploaded to the test directory.

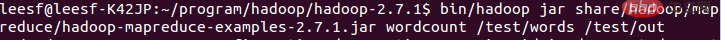

4. Run wordcount

Use the following command to run wordcount:

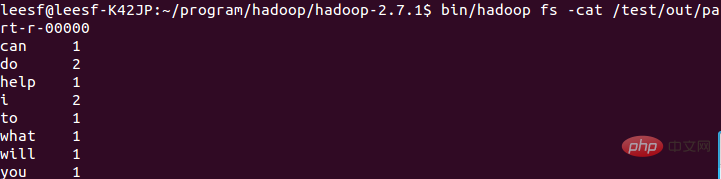

bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.1.jar wordcount /test/words /test/out
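A MapReduce job refuses to start when its output directory already exists, so rerunning the command above fails until /test/out is removed. The following sketch deletes the directory only if it is there; clean_output and the HDFS variable are names invented for this example, and the default assumes you are in the hadoop-2.7.1 directory.

```shell
# Path to the hdfs launcher; override HDFS if it lives elsewhere.
HDFS=${HDFS:-bin/hdfs}

# clean_output HDFS_PATH: remove HDFS_PATH recursively if it exists as a
# directory in HDFS; do nothing otherwise.
clean_output() {
    if "$HDFS" dfs -test -d "$1"; then
        "$HDFS" dfs -rm -r "$1"
    fi
}

# Example, before rerunning wordcount:
# clean_output /test/out
```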

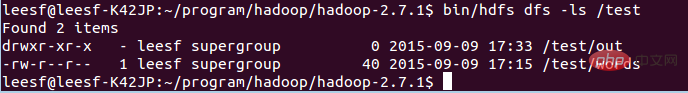

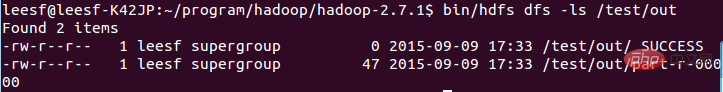

After the job completes, an output directory named out is created under /test. Listing /test again (bin/hdfs dfs -ls /test/, as above) shows it.

This shows that the test directory now contains a directory named out.

The job has run successfully and the result is saved in part-r-00000 inside that directory.
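To sanity-check the result, the same counts can be computed locally with standard tools and compared with the job output. This is only a sketch: local_wordcount is a made-up helper, and it assumes the example job splits words on whitespace and writes one word, a tab, and the count per line.

```shell
# local_wordcount FILE: print "word<TAB>count" lines sorted by word,
# splitting FILE on whitespace.
local_wordcount() {
    tr -s '[:space:]' '\n' < "$1" |
        grep -v '^$' |
        LC_ALL=C sort |
        uniq -c |
        awk '{ print $2 "\t" $1 }'
}

# Example comparison (paths as used in this article):
# local_wordcount /home/leesf/words
# bin/hdfs dfs -cat /test/out/part-r-00000
```

If the two listings match, the job counted the file as expected.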

At this point, the running process has been completed.

9. Summary

I ran into many problems while configuring Hadoop this time; the commands in Hadoop 1.x and 2.x still differ considerably. I worked through the problems one by one, the configuration succeeded, and I learned a lot. I am sharing this experience for the convenience of anyone who wants to set up a Hadoop environment. If you have any questions during configuration, feel free to discuss them. Thank you all for reading~
