Analysis of the key points of writing a basic code interpreter using Python

I have always been very interested in compilers and parsers. I understand the concepts and the overall structure of a compiler well, but I know much less about the details. The source code we write is really just a sequence of characters, yet a compiler or interpreter can understand and execute it directly, which seems almost magical. This article uses Python to implement a simple interpreter for a small list manipulation language (similar to Python's lists). Compilers and interpreters are in fact not mysterious. Once the basic theory is understood, a simple implementation is fairly straightforward (a production-grade compiler or interpreter is, of course, still very complicated).

Operations supported by this list language:

    veca = [1, 2, 3]                            # list declaration
    vecb = [4, 5, 6]
    print 'veca:', veca                         # print strings and lists: print expr+
    print 'veca * 2:', veca * 2                 # list times integer
    print 'veca + 2:', veca + 2                 # list plus integer
    print 'veca + vecb:', veca + vecb           # list plus list
    print 'veca + [11, 12]:', veca + [11, 12]
    print 'veca * vecb:', veca * vecb           # list times list
    print 'veca:', veca
    print 'vecb:', vecb

Corresponding output:

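    veca: [1, 2, 3]
    veca * 2: [2, 4, 6]
    veca + 2: [1, 2, 3, 2]
    veca + vecb: [1, 2, 3, 2, 4, 5, 6]
    veca + [11, 12]: [1, 2, 3, 2, 11, 12]
    veca * vecb: [4, 5, 6, 8, 10, 12, 12, 15, 18, 8, 10, 12]
    veca: [1, 2, 3, 2]
    vecb: [4, 5, 6]

Note that in this implementation veca + 2 appends 2 to veca in place, which is why every later line shows veca as [1, 2, 3, 2].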
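To make the arithmetic behind this output concrete, here is a rough sketch in plain Python of what each operator does. It mirrors the list comprehensions and the append call used by the parser shown later in the article; the variable names times_two, plus_vecb, plus_literal, and product are only illustrative.

```python
veca = [1, 2, 3]
vecb = [4, 5, 6]

# list * int: multiply every element by the integer.
times_two = [x * 2 for x in veca]

# list + int: the parser appends in place, so veca itself changes here.
veca.append(2)

# list + list: plain concatenation into a new list.
plus_vecb = veca + vecb
plus_literal = veca + [11, 12]

# list * list: every element of the left list times every element of the right.
product = [x * y for x in veca for y in vecb]

print(times_two)
print(veca)
print(plus_vecb)
print(plus_literal)
print(product)
```

Because veca already holds four elements by the time the list product is computed, the product has 4 x 3 = 12 entries rather than 9.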

Compilers and interpreters process an input character stream in much the same way that humans understand sentences. For example:

I love you.

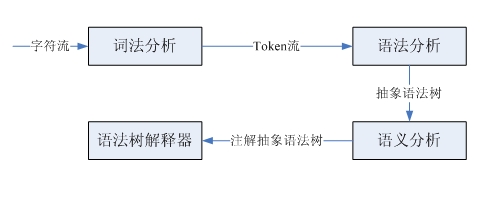

If you are new to English, you first need to know the meaning of each word, then work out each word's part of speech and check that the words fit the subject-verb-object structure. Only then can you understand the sentence. The sentence is a character sequence. Splitting it into words yields a stream of lexical units. This is lexical analysis, which converts the character stream into a stream of lexical units (tokens). Working out the parts of speech and recognizing the subject-verb-object structure according to English grammar is syntax analysis, which builds a parse tree from the input token stream. Finally, combining the meanings of the words with the grammatical structure gives the meaning of the sentence. This is semantic analysis. Compilers and interpreters work in a similar way, only with somewhat more complexity. Here we focus only on the interpreter.
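The first two stages described above can be sketched for the example sentence. This is only an illustration: the PARTS_OF_SPEECH dictionary and the lex and parse functions are hypothetical names made up for this sketch, and the "grammar" accepts exactly one sentence shape.

```python
import re

# Hypothetical mini-dictionary: word -> part of speech (an assumption for illustration).
PARTS_OF_SPEECH = {"i": "PRONOUN", "love": "VERB", "you": "PRONOUN"}


def lex(sentence):
    """Lexical analysis: character stream -> list of (type, word) units."""
    words = re.findall(r"[A-Za-z]+", sentence)
    return [(PARTS_OF_SPEECH[w.lower()], w) for w in words]


def parse(tokens):
    """Syntax analysis: check the subject-verb-object structure."""
    kinds = [kind for kind, _ in tokens]
    if kinds != ["PRONOUN", "VERB", "PRONOUN"]:
        raise SyntaxError("expected subject verb object, got %s" % kinds)
    subject, verb, obj = (word for _, word in tokens)
    return {"subject": subject, "verb": verb, "object": obj}


tokens = lex("I love you.")
tree = parse(tokens)
print(tokens)
print(tree)
```

The lexer only looks at characters, and the parser only looks at token types. The same division of labor appears in the lexer and parser for the list language.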

We are implementing only a very simple language, so it involves neither building a syntax tree nor any complex semantic analysis afterwards. Next, let's look at lexical analysis and syntax analysis.

Lexical analysis and syntax analysis are performed by a lexer and a parser respectively. The two have similar structure and functionality: both take a sequence as input and recognize specific structures in it. The lexer extracts tokens (lexical units) one by one from the source code character stream, while the parser recognizes substructures and tokens and then performs some processing. Both can be implemented as LL(1) recursive descent parsers. The steps performed by this kind of parser are: predict the type of the clause, call the parsing function that matches the substructure, match the tokens, and insert code as needed to perform custom actions.

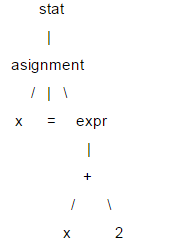

Here is a brief introduction to LL(1). The structure of a statement is usually represented as a tree, called a parse tree, and LL(1) parsing follows the shape of that tree. For example: x = x + 2;

In this tree, leaf nodes such as x, =, and 2 are called terminal nodes, and the others are called non-terminal nodes. An LL(1) parser does not need to build an actual tree data structure. You write one parsing function for each non-terminal node and call it whenever the corresponding node is encountered. The sequence of parsing-function calls is then equivalent to a traversal of the tree, and it carries the parse tree information. Parsing proceeds from the root node towards the leaf nodes, so it is a "descending" process, and parsing functions can call themselves, so it is "recursive". A parser written this way is therefore called a recursive descent parser.

The two L's in LL(1) both refer to working from left to right. The first L means that the parser reads the input from left to right. The second L means that, while descending, it expands the child nodes from left to right (a leftmost derivation). The 1 means that predictions are made from a single lookahead unit.
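As a minimal sketch of these ideas, the following parser handles the statement x = x + 2; with one function per non-terminal and a single lookahead token. The grammar in the comment, the TinyParser class, and its trace list are assumptions made for this illustration and are not part of the list language below.

```python
# Grammar assumed for this sketch:
#   assign:   ID '=' addition ';'
#   addition: term ('+' term)*
#   term:     ID | INT
class TinyParser:
    def __init__(self, tokens):
        self.tokens = tokens + [("EOF", None)]
        self.pos = 0
        self.trace = []  # order in which parsing functions are entered

    @property
    def lookahead(self):
        # The single token used for every prediction: the "1" in LL(1).
        return self.tokens[self.pos]

    def match(self, kind):
        # Terminals are simply matched and consumed.
        if self.lookahead[0] != kind:
            raise SyntaxError("expecting %s; found %s" % (kind, self.lookahead[0]))
        self.pos += 1

    def assign(self):
        self.trace.append("assign")
        self.match("ID")
        self.match("=")
        self.addition()
        self.match(";")

    def addition(self):
        self.trace.append("addition")
        self.term()
        while self.lookahead[0] == "+":
            self.match("+")
            self.term()

    def term(self):
        self.trace.append("term")
        if self.lookahead[0] == "ID":
            self.match("ID")
        elif self.lookahead[0] == "INT":
            self.match("INT")
        else:
            raise SyntaxError("unexpected token %s" % self.lookahead[0])


tokens = [("ID", "x"), ("=", "="), ("ID", "x"), ("+", "+"), ("INT", 2), (";", ";")]
parser = TinyParser(tokens)
parser.assign()
print(parser.trace)
```

The recorded call order (assign, addition, term, term) is a top-down, left-to-right walk over the non-terminal nodes of the parse tree, even though no tree object is ever built.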

Now let's look at the implementation of the list mini-language. First comes the grammar of the language. A grammar describes a language and serves as the design specification for the parser.

    statlist:  stat+
    stat:      ID '=' expr
             | 'print' expr (',' expr)*
    expr:      multipart ('+' multipart)*
             | STR
    multipart: primary ('*' primary)*
    primary:   INT
             | ID
             | '[' expr (',' expr)* ']'
    INT:       (1..9)(0..9)*
    ID:        (a..z | A..Z)+
    STR:       (".*") | ('.*')

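This grammar is written in a DSL whose notation is mostly similar to regular expressions. "a|b" means a or b. Every string enclosed in single quotes is a keyword or literal symbol, such as print and =. Capitalized words are lexical units (tokens). As you can see, the grammar of this small language is quite simple. Many generator tools can produce a lexer or parser automatically from a grammar, such as ANTLR, flex, and yacc. The parser here is written by hand mainly to understand how parsers work. Let's look at how the interpreter for this small language is implemented.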
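Since the lexical rules read much like regular expressions, they can be sketched with Python's re module. This is only an approximation for illustration: ID is taken to mean one or more letters, STR is taken to stop at the first matching quote (as the hand-written lexer does), and the classify helper is a made-up name.

```python
import re

# Assumed regex equivalents of the three lexical rules.
INT = re.compile(r"[1-9][0-9]*")
ID = re.compile(r"[a-zA-Z]+")
STR = re.compile(r'"[^"\n]*"' r"|'[^'\n]*'")


def classify(text):
    """Return the name of the first lexical rule matching all of text, or None."""
    for name, pattern in (("INT", INT), ("ID", ID), ("STR", STR)):
        if pattern.fullmatch(text):
            return name
    return None


for sample in ("42", "veca", "'veca:'", "["):
    print(sample, "->", classify(sample))
```

Single-character symbols such as [ match none of these rules. The lexer handles them directly by comparing the lookahead character.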

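First comes the lexer, which converts the character stream into a token stream. It is implemented as LL(1), so it uses one lookahead character to predict which token to match. For lexical rules made up of several characters, such as INT and ID, the lexer has a corresponding method. The parser does not care about whitespace, so the lexer simply skips whitespace characters. Every token has two attributes, a type and a value. Types include integer, identifier, and so on, and for an integer token the value is the integer itself. The parser makes its predictions from the token type, so the lexer must return type information. The parser fetches tokens in iterator fashion, so the lexer implements a next_token method that returns the next token as a (type, value) tuple and returns EOF when no tokens remain.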
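The next_token protocol can be illustrated on its own before reading the real lexer. In this sketch ListLexer is a hypothetical stand-in that replays a prepared token list. The two-argument form of the built-in iter turns any next_token-style method into an iterator that stops at the EOF token.

```python
EOF = -1


class ListLexer:
    """Hypothetical stand-in for a lexer: replays a prepared list of tokens."""

    def __init__(self, tokens):
        self._tokens = list(tokens)
        self._pos = 0

    def next_token(self):
        # Return (type, value) tuples, then the EOF token forever.
        if self._pos >= len(self._tokens):
            return (EOF, 'EOF')
        token = self._tokens[self._pos]
        self._pos += 1
        return token


lexer = ListLexer([('ID', 'veca'), ('EQUAL', '='), ('INT', 1)])
# iter(callable, sentinel) keeps calling next_token until it returns the sentinel.
tokens = list(iter(lexer.next_token, (EOF, 'EOF')))
print(tokens)
```

The parser in this article calls next_token directly instead, keeping exactly one token of lookahead at a time.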

    '''
    A simple lexer of a small vector language.

    statlist: stat+
    stat: ID '=' expr
        | 'print' expr (',' expr)*
    expr: multipart ('+' multipart)*
        | STR
    multipart: primary ('*' primary)*
    primary: INT
        | ID
        | '[' expr (',' expr)* ']'
    INT: (1..9)(0..9)*
    ID: (a..z | A..Z)+
    STR: (".*") | ('.*')

    Created on 2012-9-26
    @author: bjzllou
    '''

    EOF = -1

    # token types
    COMMA = 'COMMA'
    EQUAL = 'EQUAL'
    LBRACK = 'LBRACK'
    RBRACK = 'RBRACK'
    TIMES = 'TIMES'
    ADD = 'ADD'
    PRINT = 'print'
    ID = 'ID'
    INT = 'INT'
    STR = 'STR'


    class Veclexer:
        '''
        LL(1) lexer.
        It uses only one lookahead char to decide which token comes next.
        Each multi-character token rule (INT, ID, STR) has a method to handle it.
        LL(1) is quite a weak parsing strategy; it is not appropriate for grammars
        that are left-recursive or ambiguous. For example, the rule 'T: T r' is
        left recursive. However, it is simple and fast, and fits simple grammars.
        '''

        def __init__(self, input):
            self.input = input
            # index of the next unread char in the input stream.
            self.idx = 1
            # lookahead char (EOF if the input is empty).
            self.cur_c = input[0] if input else EOF

        def next_token(self):
            while self.cur_c != EOF:
                c = self.cur_c
                if c.isspace():
                    # whitespace is skipped; the parser never sees it.
                    self.consume()
                elif c == '[':
                    self.consume()
                    return (LBRACK, c)
                elif c == ']':
                    self.consume()
                    return (RBRACK, c)
                elif c == ',':
                    self.consume()
                    return (COMMA, c)
                elif c == '=':
                    self.consume()
                    return (EQUAL, c)
                elif c == '*':
                    self.consume()
                    return (TIMES, c)
                elif c == '+':
                    self.consume()
                    return (ADD, c)
                elif c == '\'' or c == '"':
                    return self._string()
                elif c.isdigit():
                    return self._int()
                elif c.isalpha():
                    # try the keyword first, then fall back to an identifier.
                    t = self._print()
                    return t if t else self._id()
                else:
                    raise Exception('unsupported character %r' % c)
            return (EOF, 'EOF')

        def has_next(self):
            return self.cur_c != EOF

        def _id(self):
            n = self.cur_c
            self.consume()
            # Check for EOF first: EOF is an int and has no isalpha().
            while self.cur_c != EOF and self.cur_c.isalpha():
                n += self.cur_c
                self.consume()
            return (ID, n)

        def _int(self):
            n = self.cur_c
            self.consume()
            while self.cur_c != EOF and self.cur_c.isdigit():
                n += self.cur_c
                self.consume()
            return (INT, int(n))

        def _print(self):
            # cur_c is input[idx - 1], so the candidate keyword spans idx-1 .. idx+3.
            n = self.input[self.idx - 1 : self.idx + 4]
            end = self.idx + 4
            # Only a keyword if no letter follows (so 'printer' stays an ID).
            if n == 'print' and not self.input[end:end + 1].isalpha():
                self.idx = end
                # consume() loads the char after 'print' (or EOF) and advances idx.
                self.consume()
                return (PRINT, n)
            return None

        def _string(self):
            quotes_type = self.cur_c
            self.consume()
            s = ''
            # Stop at the closing quote, a newline, or the end of the input.
            while self.cur_c not in (EOF, '\n', quotes_type):
                s += self.cur_c
                self.consume()
            if self.cur_c != quotes_type:
                raise Exception('string quotes do not match; expected %s' % quotes_type)
            self.consume()
            return (STR, s)

        def consume(self):
            # Advance the lookahead char by one position.
            if self.idx >= len(self.input):
                self.cur_c = EOF
                return
            self.cur_c = self.input[self.idx]
            self.idx += 1


    if __name__ == '__main__':
        exp = '''
    veca = [1, 2, 3]
    print 'veca:', veca
    print 'veca * 2:', veca * 2
    print 'veca + 2:', veca + 2
    '''
        lex = Veclexer(exp)
        t = lex.next_token()
        while t[0] != EOF:
            # print(t) with one argument behaves the same in Python 2 and Python 3.
            print(t)
            t = lex.next_token()

Running this program on the following source code:

    veca = [1, 2, 3]
    print 'veca:', veca
    print 'veca * 2:', veca * 2
    print 'veca + 2:', veca + 2

produces the corresponding token sequence:

('ID', 'veca')

('EQUAL', '=')

('LBRACK', '[')

('INT', 1)

('COMMA', ',')

('INT', 2)

('COMMA', ',')

('INT', 3)

('RBRACK', ']')

('print', 'print')

('STR', 'veca:')

('COMMA', ',')

('ID', 'veca')

('print', 'print')

('STR', 'veca * 2:')

('COMMA', ',')

('ID', 'veca')

('TIMES', '*')

('INT', 2)

('print', 'print')

('STR', 'veca + 2:')

('COMMA', ',')

('ID', 'veca')

('ADD', '+')

('INT', 2)

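Next, let's look at the implementation of the parser. Its input is the token stream, and it predicts which rule to match from one lookahead token. For each non-terminal it encounters it calls the corresponding parsing function, while terminals (tokens) are matched and consumed. A mismatch indicates a syntax error. Because the parser is also LL(1), it works much like the lexer, so the details are not repeated here.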

    '''
    A simple parser of a small vector language.

    statlist: stat+
    stat: ID '=' expr
        | 'print' expr (',' expr)*
    expr: multipart ('+' multipart)*
        | STR
    multipart: primary ('*' primary)*
    primary: INT
        | ID
        | '[' expr (',' expr)* ']'
    INT: (1..9)(0..9)*
    ID: (a..z | A..Z)+
    STR: (".*") | ('.*')

    example:
    veca = [1, 2, 3]
    vecb = veca + 4 # vecb: [1, 2, 3, 4]
    vecc = veca * 3 # vecc:

    Created on 2012-9-26
    @author: bjzllou
    '''

    import veclexer


    class Vecparser:
        '''
        LL(1) parser.
        '''

        def __init__(self, lexer):
            self.lexer = lexer
            # lookahead token; the parse option is chosen based on it.
            self.cur_token = lexer.next_token()
            # similar to a symbol table; here it only stores variables' values.
            self.symtab = {}

        def statlist(self):
            # Loop on the lookahead token rather than lexer.has_next(): the lexer
            # may already be at EOF while one token is still waiting to be parsed.
            while self.cur_token[0] != veclexer.EOF:
                self.stat()

        def stat(self):
            token_type, token_val = self.cur_token
            # Assignment
            if token_type == veclexer.ID:
                self.consume()
                # For a terminal token, it only needs to match and consume.
                # If it does not match, that is a syntax error.
                self.match(veclexer.EQUAL)
                # Store the value in the symbol table.
                self.symtab[token_val] = self.expr()
            # print statement
            elif token_type == veclexer.PRINT:
                self.consume()
                v = str(self.expr())
                while self.cur_token[0] == veclexer.COMMA:
                    self.match(veclexer.COMMA)
                    v += ' ' + str(self.expr())
                # print(v) with one argument behaves the same in Python 2 and 3.
                print(v)
            else:
                raise Exception('not support token %s' % token_type)

        def expr(self):
            token_type, token_val = self.cur_token
            if token_type == veclexer.STR:
                self.consume()
                return token_val
            else:
                v = self.multipart()
                while self.cur_token[0] == veclexer.ADD:
                    self.consume()
                    v1 = self.multipart()
                    if type(v1) == int:
                        # NOTE: append() mutates v in place. If v came from the
                        # symbol table, the variable itself changes, which is why
                        # veca prints as [1, 2, 3, 2] after 'veca + 2'.
                        # Use v = v + [v1] instead to leave the variable untouched.
                        v.append(v1)
                    elif type(v1) == list:
                        v = v + v1
                return v

        def multipart(self):
            v = self.primary()
            while self.cur_token[0] == veclexer.TIMES:
                self.consume()
                v1 = self.primary()
                if type(v1) == int:
                    # list * int: scale every element.
                    v = [x*v1 for x in v]
                elif type(v1) == list:
                    # list * list: all pairwise products.
                    v = [x*y for x in v for y in v1]
            return v

        def primary(self):
            token_type = self.cur_token[0]
            token_val = self.cur_token[1]
            # int
            if token_type == veclexer.INT:
                self.consume()
                return token_val
            # variable reference
            elif token_type == veclexer.ID:
                self.consume()
                if token_val in self.symtab:
                    return self.symtab[token_val]
                else:
                    raise Exception('undefined variable %s' % token_val)
            # parse list
            elif token_type == veclexer.LBRACK:
                self.match(veclexer.LBRACK)
                v = [self.expr()]
                while self.cur_token[0] == veclexer.COMMA:
                    self.match(veclexer.COMMA)
                    v.append(self.expr())
                self.match(veclexer.RBRACK)
                return v
            else:
                # No rule starts with this token: report it instead of returning None.
                raise Exception('unexpected token %s' % token_type)

        def consume(self):
            self.cur_token = self.lexer.next_token()

        def match(self, token_type):
            if self.cur_token[0] == token_type:
                self.consume()
                return True
            raise Exception('expecting %s; found %s' % (token_type, self.cur_token[0]))


    if __name__ == '__main__':
        prog = '''
    veca = [1, 2, 3]
    vecb = [4, 5, 6]
    print 'veca:', veca
    print 'veca * 2:', veca * 2
    print 'veca + 2:', veca + 2
    print 'veca + vecb:', veca + vecb
    print 'veca + [11, 12]:', veca + [11, 12]
    print 'veca * vecb:', veca * vecb
    print 'veca:', veca
    print 'vecb:', vecb
    '''
        lex = veclexer.Veclexer(prog)
        parser = Vecparser(lex)
        parser.statlist()

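Running the code produces the output shown at the beginning of the article. This interpreter is extremely simple and implements only basic expression operations, so it does not need to build a syntax tree. To add control structures to the list language, you would have to build a syntax tree and interpret the program by walking that tree.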
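As a rough sketch of that last point, the following evaluator walks a small syntax tree instead of executing while parsing. Everything here is an assumption for illustration: the tuple-based node shapes, the evaluate function, and the repeat construct do not exist in the list language above. A loop body must be stored as a tree so that it can be executed more than once.

```python
def evaluate(node, env):
    """Interpret one syntax-tree node; env maps variable names to values."""
    kind = node[0]
    if kind == "int":       # ("int", 2)
        return node[1]
    if kind == "list":      # ("list", [1, 2, 3])
        return list(node[1])
    if kind == "var":       # ("var", "veca")
        return env[node[1]]
    if kind == "add":       # ("add", left, right): list + int or list + list
        left = evaluate(node[1], env)
        right = evaluate(node[2], env)
        return left + (right if isinstance(right, list) else [right])
    if kind == "assign":    # ("assign", name, expr)
        env[node[1]] = evaluate(node[2], env)
        return None
    if kind == "repeat":    # ("repeat", count, statements): hypothetical loop
        for _ in range(node[1]):
            for stat in node[2]:
                evaluate(stat, env)
        return None
    raise ValueError("unknown node %r" % (kind,))


# Tree for: veca = [1, 2, 3]; repeat 2 times { veca = veca + 0 }
program = [
    ("assign", "veca", ("list", [1, 2, 3])),
    ("repeat", 2, [("assign", "veca", ("add", ("var", "veca"), ("int", 0)))]),
]
env = {}
for stat in program:
    evaluate(stat, env)
print(env["veca"])
```

The parser above cannot do this, because it computes each value the moment it matches the tokens and keeps nothing it could run a second time.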
