IRIS-RAG-Gen: Personalizing ChatGPT RAG Application Powered by IRIS Vector Search

Hi Community,

In this article, I will introduce my application, iris-RAG-Gen.

iris-RAG-Gen is a generative AI Retrieval-Augmented Generation (RAG) application that uses IRIS Vector Search to personalize ChatGPT, built with the Streamlit web framework, LangChain, and OpenAI. The application uses IRIS as its vector store.

Application Features

- Ingest Documents (PDF or TXT) into IRIS

- Chat with the selected Ingested document

- Delete Ingested Documents

- OpenAI ChatGPT

Ingest Documents (PDF or TXT) into IRIS

Follow the steps below to ingest a document:

- Enter OpenAI Key

- Select Document (PDF or TXT)

- Enter Document Description

- Click on the Ingest Document Button

The Ingest Document function inserts the document details into the rag_documents table and then creates a table named 'rag_document' followed by the ID of the new rag_documents row (for example, rag_document2) to store the vector data.
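The bookkeeping described above can be sketched with Python's built-in sqlite3 module standing in for IRIS. This is only an illustration under stated assumptions: the function name `register_document` is hypothetical, the explicit `id` column is added because SQLite needs one declared, and `lastrowid` plays the role that `LAST_IDENTITY()` plays in the application's own code.

```python
import sqlite3

def register_document(conn, description, doc_type):
    """Record a document and return the name of its vector table."""
    # Create the bookkeeping table on first use (SQLite stand-in for IRIS).
    conn.execute(
        "CREATE TABLE IF NOT EXISTS rag_documents ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "description VARCHAR(255), "
        "docType VARCHAR(50))"
    )
    # Bound parameters keep user-supplied text out of the SQL string.
    cur = conn.execute(
        "INSERT INTO rag_documents (description, docType) VALUES (?, ?)",
        (description, doc_type),
    )
    conn.commit()
    # lastrowid is the ID of the row just inserted.
    return "rag_document" + str(cur.lastrowid)

conn = sqlite3.connect(":memory:")
first = register_document(conn, "User guide", "application/pdf")
second = register_document(conn, "Release notes", "text/plain")
print(first, second)  # rag_document1 rag_document2
```

Each ingested document therefore gets its own table name, which is what later lets the chat feature target a single document.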

The Python code below will save the selected document into vectors:

    from langchain.text_splitter import RecursiveCharacterTextSplitter
    from langchain.document_loaders import PyPDFLoader, TextLoader
    from langchain_iris import IRISVector
    from langchain_openai import OpenAIEmbeddings
    from sqlalchemy import create_engine, text

    class RagOpr:
        # Note: self.engine and self.CONNECTION_STRING are set elsewhere in the class (not shown here)

        # Ingest document. Parameters contain file path, description and file type
        def ingestDoc(self, filePath, fileDesc, fileType):
            embeddings = OpenAIEmbeddings()
            # Load the document based on the file type
            if fileType == "text/plain":
                loader = TextLoader(filePath)
            elif fileType == "application/pdf":
                loader = PyPDFLoader(filePath)
            else:
                # Fail early instead of hitting an undefined loader below
                raise ValueError("Unsupported file type: " + str(fileType))

            # Load data into documents
            documents = loader.load()

            text_splitter = RecursiveCharacterTextSplitter(chunk_size=400, chunk_overlap=0)
            # Split text into chunks
            texts = text_splitter.split_documents(documents)

            # Get collection name from rag_documents table
            COLLECTION_NAME = self.get_collection_name(fileDesc, fileType)

            # Create the collection_name table and store vector data in it
            db = IRISVector.from_documents(
                embedding=embeddings,
                documents=texts,
                collection_name=COLLECTION_NAME,
                connection_string=self.CONNECTION_STRING,
            )

        # Get collection name
        def get_collection_name(self, fileDesc, fileType):
            # Check if rag_documents table exists, if not then create it
            with self.engine.connect() as conn:
                with conn.begin():
                    sql = text("""
                        SELECT *
                        FROM INFORMATION_SCHEMA.TABLES
                        WHERE TABLE_SCHEMA = 'SQLUser'
                        AND TABLE_NAME = 'rag_documents';
                        """)
                    result = []
                    try:
                        result = conn.execute(sql).fetchall()
                    except Exception as err:
                        print("An exception occurred:", err)
                        return ''
                    # If table is not created, then create rag_documents table first
                    if len(result) == 0:
                        sql = text("""
                            CREATE TABLE rag_documents (
                            description VARCHAR(255),
                            docType VARCHAR(50) )
                            """)
                        try:
                            result = conn.execute(sql)
                        except Exception as err:
                            print("An exception occurred:", err)
                            return ''
            # Insert description value
            with self.engine.connect() as conn:
                with conn.begin():
                    sql = text("""
                        INSERT INTO rag_documents
                        (description,docType)
                        VALUES (:desc,:ftype)
                        """)
                    try:
                        result = conn.execute(sql, {'desc': fileDesc, 'ftype': fileType})
                    except Exception as err:
                        print("An exception occurred:", err)
                        return ''
                    # Select ID of last inserted record
                    sql = text("""
                        SELECT LAST_IDENTITY()
                        """)
                    try:
                        result = conn.execute(sql).fetchall()
                    except Exception as err:
                        print("An exception occurred:", err)
                        return ''
            return "rag_document" + str(result[0][0])
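To make the effect of chunk_size=400 and chunk_overlap=0 in the splitter call above more concrete, here is a minimal sketch of fixed-size character chunking. It is a simplified stand-in, not LangChain's implementation: RecursiveCharacterTextSplitter also tries to break on separators such as paragraph and line breaks, which this sketch ignores, and the function name `split_text` is hypothetical.

```python
def split_text(text, chunk_size=400, chunk_overlap=0):
    """Cut text into fixed-size character windows (simplified splitter)."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    # Each window starts `step` characters after the previous one.
    step = chunk_size - chunk_overlap
    return [text[i:i + chunk_size] for i in range(0, len(text), step)]

sample = "x" * 1000
chunks = split_text(sample)
print([len(c) for c in chunks])  # [400, 400, 200]
```

With an overlap of 0, no text is repeated between chunks. A non-zero overlap repeats the tail of each chunk at the start of the next, which can help when an answer straddles a chunk boundary, at the cost of storing more vectors.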

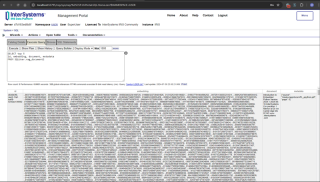

Run the following SQL command in the Management Portal to retrieve the vector data:

SELECT top 5 id, embedding, document, metadata FROM SQLUser.rag_document2

Chat with the selected Ingested document

Select the document in the chat option section and type a question. The application searches the vector data for that document and returns the relevant answer.
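Conceptually, "searching the vector data" means ranking the stored chunks by how close their embeddings are to the embedding of the question. The sketch below shows that idea with cosine similarity in plain Python. The three-dimensional vectors are hand-written toy values rather than OpenAI embeddings, the helper names are hypothetical, and the exact score that IRISVector returns (a similarity or a distance) is not stated in this article.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, store, k=2):
    """Return the k (text, score) pairs most similar to query_vec."""
    scored = [(text, cosine_similarity(query_vec, vec)) for text, vec in store]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]

# Toy "vector table": each row is a chunk of text and its embedding.
store = [
    ("IRIS stores vectors in SQL tables.", [1.0, 0.0, 0.0]),
    ("Streamlit renders the chat UI.", [0.0, 1.0, 0.0]),
    ("Vector search ranks chunks by similarity.", [0.9, 0.1, 0.0]),
]
results = top_k([1.0, 0.0, 0.0], store, k=2)
for text, score in results:
    print(f"{score:.3f}  {text}")
```

In the application itself this ranking is done by the vector store, so the Python side only receives the best-matching chunks.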

The Python code below retrieves the most relevant chunks of the selected document from the vector store and passes them to the LLM to answer the question:

    from langchain_iris import IRISVector
    from langchain_openai import OpenAIEmbeddings, ChatOpenAI
    from langchain.chains import ConversationChain
    from langchain.chains.conversation.memory import ConversationSummaryMemory

    class RagOpr:
        def ragSearch(self, prompt, id):
            # Concatenate document id with rag_document to get the collection name
            COLLECTION_NAME = "rag_document" + str(id)
            embeddings = OpenAIEmbeddings()
            # Get vector store reference
            db2 = IRISVector(
                embedding_function=embeddings,
                collection_name=COLLECTION_NAME,
                connection_string=self.CONNECTION_STRING,
            )
            # Similarity search
            docs_with_score = db2.similarity_search_with_score(prompt)
            # Prepare the retrieved documents to pass to the LLM
            relevant_docs = ["".join(str(doc.page_content)) + " " for doc, _ in docs_with_score]
            # Init LLM
            llm = ChatOpenAI(
                temperature=0,
                model_name="gpt-3.5-turbo"
            )
            # Manage and handle LangChain multi-turn conversations
            conversation_sum = ConversationChain(
                llm=llm,
                memory=ConversationSummaryMemory(llm=llm),
                verbose=False
            )
            # Create prompt
            template = f"""
            Prompt: {prompt}
            Relevant Documents: {relevant_docs}
            """
            # Return the answer
            resp = conversation_sum(template)
            return resp['response']
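The prompt-building step above interpolates a Python list directly into the f-string, so the text sent to the model contains the list's brackets and quotes. A possible variation, shown here as a sketch rather than as the application's actual code, joins the chunks into plain text first. The function name `build_prompt` and the sample strings are hypothetical.

```python
def build_prompt(question, retrieved_chunks):
    """Combine the user's question with retrieved text into one prompt."""
    # Join chunks as plain lines so no list syntax leaks into the prompt.
    context = "\n".join(chunk.strip() for chunk in retrieved_chunks)
    return f"Prompt: {question}\n\nRelevant Documents:\n{context}\n"

chunks = ["IRIS stores vectors in SQL tables. ", "Vector search ranks chunks. "]
prompt_text = build_prompt("Where are vectors stored?", chunks)
print(prompt_text)
```

Whether this changes answer quality in practice depends on the model, but it keeps the prompt easier to read when debugging.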

For more details, please visit the iris-RAG-Gen Open Exchange application page.

Thanks
