Overview

I wrote a Python script that translates the PDF data extraction business logic into working code.

The script was tested on 71 pages of Custodian Statement PDFs covering a 10-month period (Jan to Oct 2024). Processing the PDFs took about 4 seconds, significantly quicker than doing it manually.

From what I see, the output looks correct and the code did not run into any errors.

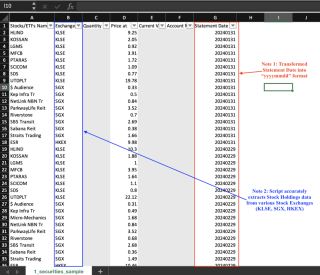

Snapshots of the three CSV outputs are shown below. Note that sensitive data has been greyed out.

Snapshot 1: Stock Holdings

Snapshot 2: Fund Holdings

Snapshot 3: Cash Holdings

This workflow shows the broad steps I took to generate the CSV files.

Next, I explain in more detail how I translated the business logic into Python code.

Step 1: Read PDF documents

I used pdfplumber's open() function.

    # Open the PDF file
    with pdfplumber.open(file_path) as pdf:

file_path is a declared variable that tells pdfplumber which file to open.
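Since the script processes several statements in one run, file_path has to be supplied for each PDF. The sketch below shows one way this could be done; the folder layout and the helper names find_pdfs and extract_page_text are assumptions for illustration, not taken from the original script.

```python
from pathlib import Path


def find_pdfs(folder):
    """Return the PDF files in `folder`, sorted by file name (hypothetical helper)."""
    return sorted(Path(folder).glob("*.pdf"))


def extract_page_text(file_path):
    """Return the text of every page in one PDF as a list of strings."""
    # Imported here so find_pdfs() can be used without pdfplumber installed.
    import pdfplumber

    with pdfplumber.open(file_path) as pdf:
        # extract_text() may return None for a page without a text layer
        return [page.extract_text() or "" for page in pdf.pages]
```

A loop such as `for file_path in find_pdfs("statements"):` would then hand each file to pdfplumber in turn, where "statements" is a placeholder folder name.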

Step 2.0: Extract & filter tables from each page

pdfplumber's extract_tables() method does the hard work of extracting all tables from each page.

Though I am not really familiar with the underlying logic, I think it did a pretty good job. For example, the two snapshots below show the extracted table vs. the original (from the PDF).

Snapshot A: Output from VS Code Terminal

Snapshot B: Table in PDF

I then needed to uniquely label each table, so that I could "pick and choose" data from specific tables later on.

The ideal option was to use each table's title. However, determining the title coordinates was beyond my capabilities.

As a workaround, I identified each table by concatenating the headers of the first three columns. For example, the Stock Holdings table in Snapshot B is labeled Stocks/ETFs\nNameExchangeQuantity (the \n is a line break inside the first header cell).

⚠️ This approach has a serious drawback: the first three header names do not make every table unique. Fortunately, the collisions only affect tables that are irrelevant to the extraction.
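The labeling workaround and its drawback can be sketched as follows. This is a minimal illustration, not the original script: label_table is a hypothetical helper, and the sample tables are made-up data shaped like the output of extract_tables() (a list of rows, each row a list of cell values).

```python
def label_table(table, n_cols=3):
    """Build a label by joining the first `n_cols` header cells of a table."""
    header_row = table[0]
    # Empty cells come back as None, so treat them as empty strings
    return "".join(cell or "" for cell in header_row[:n_cols])


# Hypothetical tables: the first row of each is the header row
stocks = [
    ["Name", "Exchange", "Quantity", "Price"],
    ["ABC Corp", "NYSE", "10", "25.00"],
]
other_a = [["Date", "Description", "Amount", "Balance"]]
other_b = [["Date", "Description", "Amount", "Fee"]]

print(label_table(stocks))                            # NameExchangeQuantity
print(label_table(other_a) == label_table(other_b))   # True: the labels collide
```

The last line shows the collision: two different tables share the same first three headers, so they receive the same label.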

Step 2.1: Extract, filter & transform non-table text

The specific values I needed, Account Number and Statement Date, were substrings on Page 1 of each PDF.

For example, "Account Number M1234567" contains account number "M1234567".

I used Python's re library and got ChatGPT to suggest suitable regular expressions ("regex"). Each regex matches the whole string and captures the desired value in a capture group, which is retrieved with group(1).
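To make the grouping concrete, here is a small sketch that applies the two patterns from this step to sample text. It assumes re.search() is used and that Page 1 contains lines like the ones in page_text; both the sample text and the find_first helper are illustrative assumptions.

```python
import re

regex_date = r'Statement for \b([A-Za-z]{3}-\d{4})\b'
regex_acc_no = r'Account Number ([A-Za-z]\d{7})'

# Hypothetical Page 1 text
page_text = "Statement for Jan-2024\nAccount Number M1234567"


def find_first(pattern, text):
    """Return the captured value of the first match, or None if nothing matches."""
    match = re.search(pattern, text)
    return match.group(1) if match else None


match_acc_no = re.search(regex_acc_no, page_text)
print(match_acc_no.group(0))              # Account Number M1234567 (whole match)
print(match_acc_no.group(1))              # M1234567 (captured value)
print(find_first(regex_date, page_text))  # Jan-2024
```

Returning None when nothing matches lets the calling code skip a page cleanly instead of raising an AttributeError.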

Regex for Statement Date and Account Number strings

    regex_date=r'Statement for \b([A-Za-z]{3}-\d{4})\b'
    regex_acc_no=r'Account Number ([A-Za-z]\d{7})'

I next transformed the Statement Date into "yyyymmdd" format. This makes it easier to query and sort data.

    import calendar
    from datetime import datetime

    if match_date:
        # Convert the "mmm-yyyy" string to a datetime object
        date_obj=datetime.strptime(match_date.group(1),"%b-%Y")
        # Get the last day of the month
        last_day=calendar.monthrange(date_obj.year,date_obj.month)[1]
        # Replace the day with the last day of the month
        last_day_of_month=date_obj.replace(day=last_day)
        statement_date=last_day_of_month.strftime("%Y%m%d")

match_date is the match object returned when a string matching regex_date is found. It is None when there is no match, hence the if check.

Step 3: Create tabular data

The hard yards - extracting the relevant datapoints - were pretty much done at this point.

Next, I used pandas' DataFrame() constructor to create tabular data based on the output of Step 2.0 and Step 2.1. I also used DataFrame methods to drop unnecessary columns and rows.

The end result can then be easily written to a CSV or stored in a database.

Step 4: Write data to CSV file

I used pandas' to_csv() method to write each dataframe to a CSV file.

For example, df_cash_selected is the Cash Holdings dataframe and file_cash_holdings is the file name of the Cash Holdings CSV it is written to.
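Steps 3 and 4 can be sketched together as below. This is an illustration under stated assumptions rather than the original code: the table contents, the column names, and the df_cash intermediate are made up, while df_cash_selected and file_cash_holdings reuse the names mentioned above.

```python
import tempfile
from pathlib import Path

import pandas as pd

# Hypothetical extracted table: header row first, then data rows
cash_table = [
    ["Currency", "Balance", "Remarks"],
    ["USD", "1,000.00", None],
    ["SGD", "250.50", None],
    ["Total", None, None],
]

df_cash = pd.DataFrame(cash_table[1:], columns=cash_table[0])

df_cash_selected = (
    df_cash.drop(columns=["Remarks"])              # drop an unneeded column
    .loc[lambda df: df["Currency"] != "Total"]     # drop an unneeded row
    .assign(account_number="M1234567", statement_date="20240131")
)

# Written to the system temp folder so the example leaves no stray files behind
file_cash_holdings = Path(tempfile.gettempdir()) / "cash_holdings.csv"
df_cash_selected.to_csv(file_cash_holdings, index=False)
```

Passing index=False keeps the dataframe's row index out of the CSV, so the file contains only the data columns.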

➡️ I will write the data to a proper database once I have acquired some database know-how.

Next Steps

A working script is now in place to extract table and text data from the Custodian Statement PDF.

Before I proceed further, I will run some tests to see if the script is working as expected.

--Ends

