
Build generative AI applications in Go using Amazon Titan Text Premier model

- WBOY

- 2024-09-03 14:52:49

Use Amazon Titan Text Premier model with the langchaingo package

In this blog post, I will walk you through how to use the Amazon Titan Text Premier model in your Go applications with langchaingo, a Go port of langchain (originally written for Python and JS/TS).

Amazon Titan Text Premier is an advanced LLM within the Amazon Titan Text family. It is useful for a wide range of tasks including RAG, agents, chat, chain of thought, open-ended text generation, brainstorming, summarization, code generation, table creation, data formatting, paraphrasing, rewriting, extraction, and Q&A. Titan Text Premier is also optimized for integration with Agents and Knowledge Bases for Amazon Bedrock.

Using Titan Text Premier with langchaingo

Let's start with an example.

Refer to the **Before You Begin** section in this blog post to complete the prerequisites for running the examples. This includes installing Go, configuring Amazon Bedrock access, and providing the necessary IAM permissions.

You can refer to the complete code here. To run the example:

git clone https://github.com/abhirockzz/titan-premier-bedrock-go
cd titan-premier-bedrock-go
go run basic/main.go

I got the response below for the prompt "Explain AI in 100 words or less", but yours may differ:

Artificial Intelligence (AI) is a branch of computer science that focuses on creating intelligent machines that can think, learn, and act like humans. It uses advanced algorithms and machine learning techniques to enable computers to recognize patterns, make decisions, and solve problems. AI has the potential to revolutionize various industries, including healthcare, finance, transportation, and entertainment, by automating tasks, improving efficiency, and providing personalized experiences. However, there are also concerns about the ethical implications and potential risks associated with AI, such as job displacement, privacy, and bias.

Here is a quick walkthrough of the code:

We start by instantiating a bedrockruntime.Client:

cfg, err := config.LoadDefaultConfig(context.Background(), config.WithRegion(region))
client = bedrockruntime.NewFromConfig(cfg)

We use that client to create the langchaingo llms.Model instance. Note that the modelID we specify is that of Titan Text Premier, which is amazon.titan-text-premier-v1:0.

llm, err := bedrock.New(bedrock.WithClient(client), bedrock.WithModel(modelID))

We create an llms.MessageContent and invoke the LLM with llm.GenerateContent. Notice that you don't have to think about the Titan Text Premier-specific request/response payload; langchaingo abstracts it:

msg := []llms.MessageContent{
	{
		Role: llms.ChatMessageTypeHuman,
		Parts: []llms.ContentPart{
			llms.TextPart("Explain AI in 100 words or less."),
		},
	},
}

resp, err := llm.GenerateContent(context.Background(), msg, llms.WithMaxTokens(maxTokenCountLimitForTitanTextPremier))
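To get a feel for what langchaingo hides here, the following is a rough, stdlib-only sketch of the JSON you would otherwise build and parse yourself when invoking a Titan Text model directly. The type and function names are hypothetical, the JSON field names are my understanding of the Titan Text request/response schema (check the Bedrock documentation before relying on them), and 3072 is an assumed output-token limit for Titan Text Premier.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// All type names below are hypothetical. The JSON field names are assumed to
// match the Titan Text schema; only a subset of fields is modeled.
type titanTextGenerationConfig struct {
	MaxTokenCount int `json:"maxTokenCount"`
}

type titanTextRequest struct {
	InputText            string                    `json:"inputText"`
	TextGenerationConfig titanTextGenerationConfig `json:"textGenerationConfig"`
}

type titanTextResponse struct {
	Results []struct {
		OutputText       string `json:"outputText"`
		CompletionReason string `json:"completionReason"`
	} `json:"results"`
}

// buildTitanRequest marshals a prompt and token limit into a request body.
func buildTitanRequest(prompt string, maxTokens int) ([]byte, error) {
	return json.Marshal(titanTextRequest{
		InputText:            prompt,
		TextGenerationConfig: titanTextGenerationConfig{MaxTokenCount: maxTokens},
	})
}

// parseTitanResponse returns the text of the first result in a response body.
func parseTitanResponse(body []byte) (string, error) {
	var resp titanTextResponse
	if err := json.Unmarshal(body, &resp); err != nil {
		return "", err
	}
	if len(resp.Results) == 0 {
		return "", errors.New("response contains no results")
	}
	return resp.Results[0].OutputText, nil
}

func main() {
	// 3072 is an assumed limit, not taken from the example repository.
	body, err := buildTitanRequest("Explain AI in 100 words or less.", 3072)
	if err != nil {
		fmt.Println("marshal error:", err)
		return
	}
	fmt.Println(string(body))

	// A canned body shaped like the assumed response schema.
	sample := []byte(`{"results":[{"outputText":"AI is ...","completionReason":"FINISH"}]}`)
	text, err := parseTitanResponse(sample)
	if err != nil {
		fmt.Println("parse error:", err)
		return
	}
	fmt.Println(text)
}
```

With langchaingo, llms.MessageContent and llm.GenerateContent take the place of both helpers, so switching to another Bedrock model should mostly come down to changing the model ID.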

Chat with your documents

This is a pretty common scenario as well. langchaingo supports a number of document types, including text, PDF, HTML (and even Notion!).

You can refer to the complete code here. To run this example:

go run doc-chat/main.go

The example uses this page from the Amazon Bedrock User guide as the source document (HTML) but feel free to use any other source:

export SOURCE_URL=<enter URL>
go run doc-chat/main.go
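An optional SOURCE_URL override like this is usually implemented as an environment lookup with a fallback. The sketch below shows one way to do it; the helper name sourceURL is hypothetical, and I am assuming the default is the model IDs page from the Bedrock User Guide. The actual example code may resolve the URL differently.

```go
package main

import (
	"fmt"
	"os"
	"strings"
)

// defaultSourceURL is assumed to be the page the example loads by default.
const defaultSourceURL = "https://docs.aws.amazon.com/bedrock/latest/userguide/model-ids.html"

// sourceURL is a hypothetical helper. It returns the value of SOURCE_URL as
// reported by getenv, or defaultSourceURL when the variable is unset or blank.
// Passing the lookup function in keeps the helper easy to test.
func sourceURL(getenv func(string) string) string {
	if v := strings.TrimSpace(getenv("SOURCE_URL")); v != "" {
		return v
	}
	return defaultSourceURL
}

func main() {
	fmt.Println("loading content from", sourceURL(os.Getenv))
}
```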

You should be prompted to enter your question:

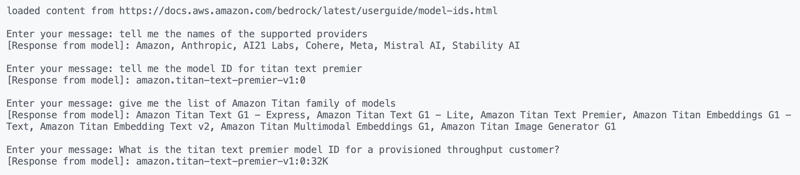

loaded content from https://docs.aws.amazon.com/bedrock/latest/userguide/model-ids.html
Enter your message:

I tried these questions and got pretty accurate responses:

1. tell me the names of the supported providers

2. tell me the model ID for titan text premier

3. give me the list of Amazon Titan family of models

4. What is the titan text premier model ID for a provisioned throughput customer?

By the way, the Chat with your document feature is available natively in Amazon Bedrock as well.

Let's walk through the code real quick. We start by loading the content from the source URL:

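func getDocs(link string) []schema.Document {
	//...
	// fetch the source page (error handling is elided in this excerpt)
	resp, err := http.Get(link)
	// parse the HTML response body into langchaingo documents
	docs, err := documentloaders.NewHTML(resp.Body).Load(context.Background())
	return docs
}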

Then, we begin the conversation, using a simple for loop:

//...
for {
	fmt.Print("\nEnter your message: ")
	input, _ := reader.ReadString('\n')
	input = strings.TrimSpace(input)

	answer, err := chains.Call(
		context.Background(),
		docChainWithCustomPrompt(llm),
		map[string]any{
			"input_documents": docs,
			"question":        input,
		},
		chains.WithMaxTokens(maxTokenCountLimitForTitanTextPremier))
	//...
}
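The excerpt above discards the error from reader.ReadString, so the loop cannot notice when input ends. If you adapt it, one possible variation is a small helper that stops on end of input or on an exit keyword and skips blank lines. This is only a sketch: chatLoop, runChat, the "exit" keyword, and the ask callback (which stands in for the chains.Call invocation) are all hypothetical names of mine, not part of the example repository.

```go
package main

import (
	"bufio"
	"fmt"
	"io"
	"os"
	"strings"
)

// chatLoop reads one question per line from r and writes each answer to w.
// It returns nil on end of input or when the user types "exit", and it
// skips blank lines instead of sending them to the model.
func chatLoop(r io.Reader, w io.Writer, ask func(question string) (string, error)) error {
	scanner := bufio.NewScanner(r)
	for {
		fmt.Fprint(w, "\nEnter your message: ")
		if !scanner.Scan() {
			return scanner.Err() // nil on a clean end of input
		}
		question := strings.TrimSpace(scanner.Text())
		if question == "" {
			continue
		}
		if question == "exit" {
			return nil
		}
		answer, err := ask(question)
		if err != nil {
			return fmt.Errorf("answering %q: %w", question, err)
		}
		fmt.Fprintln(w, answer)
	}
}

// runChat runs chatLoop over canned input and returns the full transcript.
func runChat(input string, ask func(string) (string, error)) (string, error) {
	var out strings.Builder
	err := chatLoop(strings.NewReader(input), &out, ask)
	return out.String(), err
}

func main() {
	// echo stands in for the real chain invocation.
	echo := func(q string) (string, error) { return "you asked: " + q, nil }
	input := strings.NewReader("tell me the model ID for titan text premier\nexit\n")
	if err := chatLoop(input, os.Stdout, echo); err != nil {
		fmt.Fprintln(os.Stderr, err)
		os.Exit(1)
	}
}
```

In a real program you would pass os.Stdin as the reader and wrap the chain call in the ask callback.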

The chain that we use is created with a custom prompt (based on this guideline) - we override the default behavior in langchaingo:

func docChainWithCustomPrompt(llm *bedrock_llm.LLM) chains.Chain {
	ragPromptTemplate := prompts.NewPromptTemplate(
		promptTemplateString,
		[]string{"context", "question"},
	)

	qaPromptSelector := chains.ConditionalPromptSelector{
		DefaultPrompt: ragPromptTemplate,
	}

	prompt := qaPromptSelector.GetPrompt(llm)
	llmChain := chains.NewLLMChain(llm, prompt)

	return chains.NewStuffDocuments(llmChain)
}
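Conceptually, a "stuff documents" chain places the content of all input documents into the context slot of the prompt, next to the question. The stdlib-only sketch below illustrates that idea; it is not langchaingo's implementation. The document type, the template text, the placeholder syntax, and the blank-line separator are all assumptions made for illustration (the article's promptTemplateString is not reproduced here).

```go
package main

import (
	"fmt"
	"strings"
)

// document is a simplified stand-in for langchaingo's schema.Document.
type document struct {
	PageContent string
}

// examplePromptTemplate is hypothetical; it only mirrors the two variables
// ("context" and "question") that the custom prompt declares.
const examplePromptTemplate = "Answer the question using only the context below.\n\n" +
	"Context:\n{{.context}}\n\nQuestion: {{.question}}\n"

// stuffPrompt joins the content of all documents and substitutes the result,
// together with the question, into the template placeholders.
func stuffPrompt(tmpl string, docs []document, question string) string {
	parts := make([]string, 0, len(docs))
	for _, d := range docs {
		parts = append(parts, strings.TrimSpace(d.PageContent))
	}
	joined := strings.Join(parts, "\n\n") // separator is an assumption

	// Replaced text is not rescanned, so placeholder-like strings inside
	// the documents are left untouched.
	r := strings.NewReplacer("{{.context}}", joined, "{{.question}}", question)
	return r.Replace(tmpl)
}

func main() {
	docs := []document{
		{PageContent: "Titan Text Premier model ID: amazon.titan-text-premier-v1:0"},
		{PageContent: "Titan Text Premier is part of the Amazon Titan Text family."},
	}
	fmt.Print(stuffPrompt(examplePromptTemplate, docs, "What is the model ID for Titan Text Premier?"))
}
```

Because everything goes into a single prompt, this approach only works while the combined documents fit within the model's context window.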

Now for the last example - another popular use case.

RAG - Retrieval Augmented Generation

I have previously covered how to use RAG in your Go applications. This time we will use:

- Titan Text Premier as the LLM,

- Titan Embeddings G1 as the embedding model,

- PostgreSQL as the vector store (running locally in Docker), using the pgvector extension.

Start the Docker container:

docker run --name pgvector --rm -it -p 5432:5432 -e POSTGRES_USER=postgres -e POSTGRES_PASSWORD=postgres ankane/pgvector

Activate the pgvector extension by logging into PostgreSQL (using psql) from a different terminal:

# enter postgres when prompted for password
psql -h localhost -U postgres -W

CREATE EXTENSION IF NOT EXISTS vector;

You can refer to the complete code here. To run this example:

go run rag/main.go

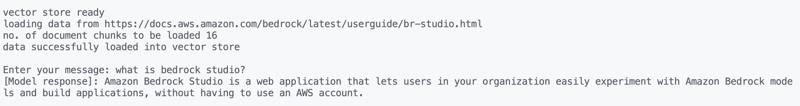

The example uses the Amazon Bedrock Studio page as the source document (HTML) but feel free to use any other source:

export SOURCE_URL=<enter URL>
go run rag/main.go

You should see some output and then be prompted to enter your questions. I tried these:

- what is bedrock studio?

- how do I enable bedrock studio?

As usual, let's see what's going on. Data loading is done in a similar way as before, and the same goes for the conversation (for loop):

for {
	fmt.Print("\nEnter your message: ")
	question, _ := reader.ReadString('\n')
	question = strings.TrimSpace(question)

	result, err := chains.Run(
		context.Background(),
		retrievalQAChainWithCustomPrompt(llm, vectorstores.ToRetriever(store, numOfResults)),
		question,
		chains.WithMaxTokens(maxTokenCountLimitForTitanTextPremier),
	)
	//....
}

The RAG part is slightly different. We use a RetrievalQA chain with a custom prompt (similar to the one used by Knowledge Bases for Amazon Bedrock):

func retrievalQAChainWithCustomPrompt(llm *bedrock_llm.LLM, retriever vectorstores.Retriever) chains.Chain {
	ragPromptTemplate := prompts.NewPromptTemplate(
		ragPromptTemplateString,
		[]string{"context", "question"},
	)

	qaPromptSelector := chains.ConditionalPromptSelector{
		DefaultPrompt: ragPromptTemplate,
	}

	prompt := qaPromptSelector.GetPrompt(llm)
	llmChain := chains.NewLLMChain(llm, prompt)
	stuffDocsChain := chains.NewStuffDocuments(llmChain)

	return chains.NewRetrievalQA(
		stuffDocsChain,
		retriever,
	)
}
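The retriever's job in this chain is to return the numOfResults chunks whose embeddings are closest to the embedding of the question. In the example that search happens inside PostgreSQL through pgvector; the in-memory sketch below only illustrates the idea. The chunk type, the function names, and the tiny three-dimensional vectors are made up for illustration (real vectors would come from the embedding model), and cosine similarity is just one common choice of metric.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// chunk is a hypothetical stand-in for a stored document chunk.
type chunk struct {
	Text      string
	Embedding []float64
}

// cosineSimilarity returns the cosine of the angle between a and b,
// or 0 when the vectors differ in length or either has zero magnitude.
func cosineSimilarity(a, b []float64) float64 {
	if len(a) != len(b) || len(a) == 0 {
		return 0
	}
	var dot, normA, normB float64
	for i := range a {
		dot += a[i] * b[i]
		normA += a[i] * a[i]
		normB += b[i] * b[i]
	}
	if normA == 0 || normB == 0 {
		return 0
	}
	return dot / (math.Sqrt(normA) * math.Sqrt(normB))
}

// topK returns up to k chunks ordered by descending similarity to query.
// The input slice is copied, so the caller's ordering is preserved.
func topK(query []float64, chunks []chunk, k int) []chunk {
	ranked := make([]chunk, len(chunks))
	copy(ranked, chunks)
	sort.SliceStable(ranked, func(i, j int) bool {
		return cosineSimilarity(query, ranked[i].Embedding) >
			cosineSimilarity(query, ranked[j].Embedding)
	})
	if k < 0 {
		k = 0
	}
	if k > len(ranked) {
		k = len(ranked)
	}
	return ranked[:k]
}

func main() {
	// Made-up embeddings; real ones have far more dimensions.
	chunks := []chunk{
		{Text: "Bedrock Studio overview", Embedding: []float64{0.9, 0.1, 0}},
		{Text: "Pricing details", Embedding: []float64{0, 0.2, 0.9}},
		{Text: "Enabling Bedrock Studio", Embedding: []float64{0.8, 0.3, 0.1}},
	}
	query := []float64{1, 0, 0} // pretend embedding of the question
	for _, c := range topK(query, chunks, 2) {
		fmt.Println(c.Text)
	}
}
```

The selected chunks are then handed to the stuff documents chain, which is why the custom prompt declares the same two variables, context and question, as in the previous example.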

Conclusion

I covered Amazon Titan Text Premier, which is one of multiple text generation models in the Titan family. In addition to text generation, Amazon Titan also has embeddings models (text and multimodal) and image generation. You can learn more by exploring all of these in the Amazon Bedrock documentation. Happy building!
