ICML 2024 high score! A reworked attention mechanism lets small models compete with models twice their size

Improve attention, the core mechanism of the Transformer, so that small models can compete with models twice their size!

In a high-scoring ICML 2024 paper, the Caiyun Technology team built the DCFormer framework, which replaces multi-head attention (MHA), the core component of the Transformer, with dynamically composable multi-head attention (DCMHA).

DCMHA removes the fixed binding between the search-and-selection circuit and the transformation circuit of each MHA attention head, allowing them to be combined dynamically based on the input, which fundamentally improves the expressive power of the model.

In other words, each layer originally had a fixed set of H attention heads; now, with almost the same parameter count and compute, it can dynamically combine them into up to H×H attention heads.

DCMHA is a plug-and-play replacement for MHA in any Transformer architecture, yielding a new general, efficient and scalable model architecture: DCFormer.

This work was jointly completed by researchers from Beijing University of Posts and Telecommunications and AI startup Caiyun Technology.

DCPythia-6.9B, a model the researchers built on DCFormer, outperforms the open-source Pythia-12B in both pre-training perplexity and downstream task evaluation.

The DCFormer model matches the performance of Transformer models that require 1.7 to 2 times as much compute.

What are the limitations of the multi-head attention module?

The scaling laws of large models tell us that as computing power grows, models with more parameters and more training data keep getting better. Although no one can say how high the ceiling of this road is or whether it leads to AGI, it is currently the most common approach.

But another question is also worth asking: most current large models are based on the Transformer and are assembled from Transformer blocks like building blocks. How much room for improvement is there in the Transformer block itself?

This is the basic question to be answered in model structure research, and it is also the starting point of the DCFormer work jointly completed by Caiyun Technology and Beijing University of Posts and Telecommunications.

In the Transformer's multi-head attention module (MHA), the attention heads work completely independently of one another.

This design has been very successful in practice because it is simple and easy to implement. However, it also has drawbacks: the attention score matrices are low-rank, which weakens expressive power, and the functions of different heads are often redundant, which wastes parameters and computing resources. For this reason, several research works in recent years have tried to introduce some form of interaction between attention heads.

According to the Transformer circuits theory, the behavior of each attention head in MHA is described by four weight matrices, W_Q, W_K, W_V and W_O (W_O is obtained by slicing the output projection matrix of MHA).

Among them, W_Q W_K is called the QK circuit (or search-and-selection circuit). It determines which token or tokens in the context the current token attends to, for example:

W_O W_V is called the OV circuit (or projection-and-transformation circuit). It determines what information is retrieved from the attended token (or which of its attributes is projected) and written into the residual stream at the current position to predict the next token. For example:

The researchers noticed that search (where to get it from) and transformation (what to get) are two independent things that should be specified separately and combined freely on demand (just as, in an SQL query, the selection conditions after WHERE and the attribute projection after SELECT are written separately). MHA forces them to be "bundled" into the QK and OV circuits of a single attention head, which limits flexibility and expressiveness.
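To make the split between "where to look" and "what to fetch" concrete, here is a minimal pure-Python sketch of a single attention head written as a QK step followed by an OV step. The helper names, the toy 2-dimensional vectors and the hand-picked matrices are all illustrative assumptions, not code from the paper.

```python
import math

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def head_output(x_query, context, W_Q, W_K, W_V, W_O):
    """One attention head, written as a QK step followed by an OV step."""
    # QK circuit ("where to look"): score every context token against the query.
    q = matvec(W_Q, x_query)
    keys = [matvec(W_K, x) for x in context]
    scale = math.sqrt(len(q))
    weights = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in keys])

    # OV circuit ("what to fetch"): transform each token, then mix by weight.
    moved = [matvec(W_O, matvec(W_V, x)) for x in context]
    out = [sum(w * m[d] for w, m in zip(weights, moved))
           for d in range(len(moved[0]))]
    return weights, out

# Toy 2-dimensional example with hand-picked (hypothetical) matrices.
identity = [[1.0, 0.0], [0.0, 1.0]]
swap = [[0.0, 1.0], [1.0, 0.0]]
context = [[1.0, 0.0], [0.0, 1.0]]
weights, out = head_output([4.0, 0.0], context, identity, identity, identity, swap)
print(weights)  # the first context token receives the larger weight
print(out)      # the swapped token values, mixed with those same weights
```

In this sketch the weights depend only on W_Q and W_K, while the content that gets written depends only on W_V and W_O. In plain MHA, both halves always belong to the same head.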

For example, suppose a model has attention heads A, B and C whose QK and OV circuits can handle the examples above. Now suppose the input is replaced with:

Handling this requires cross-combining the QK and OV circuits of the existing attention heads, and the model may fail to "turn the corner" (the researchers verified this on a systematically constructed synthetic test set).

What does dynamically composable multi-head attention look like?

With this as a starting point, the research team introduced a compose operation into MHA:

The result is DCMHA, as shown in the figure below:

△Figure 1. Overall structure of DCMHA

The attention score matrix A_S and the attention weight matrix A_W, both computed from QW_Q and KW_K, are each linearly mapped along the num_heads dimension to obtain a new matrix A' before the multiplication with VW_V. Different linear mapping matrices (composition maps) produce the effects of different attention head combinations.

For example, in Figure 2(c), the QK circuits of heads 3 and 7 are combined with the OV circuit of head 1 to form a "new" attention head.
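The mapping along the head dimension can be sketched in a few lines of pure Python. The function name `compose`, the toy attention patterns and the 0.5/0.5 mixing weights below are assumptions chosen for illustration; the figure does not state the actual weights.

```python
def compose(A, C):
    """Mix attention matrices across heads.

    A[h][i][j]: attention of head h from query position i to key position j.
    C[g][h]:    contribution of head h's attention pattern to output head g.
    Returns A_new with A_new[g][i][j] = sum over h of C[g][h] * A[h][i][j].
    """
    H = len(A)
    T = len(A[0])
    S = len(A[0][0])
    return [[[sum(C[g][h] * A[h][i][j] for h in range(H))
              for j in range(S)]
             for i in range(T)]
            for g in range(len(C))]

NUM_HEADS = 8
# One query position, three key positions; every head looks at the middle key
# except heads 3 and 7 (0-based indices, hypothetical patterns).
A = [[[0.0, 1.0, 0.0]] for _ in range(NUM_HEADS)]
A[3] = [[1.0, 0.0, 0.0]]
A[7] = [[0.0, 0.0, 1.0]]

# Start from the identity map (plain MHA), then rewire head 1 so that its
# OV circuit receives an even blend of the patterns of heads 3 and 7.
C = [[1.0 if g == h else 0.0 for h in range(NUM_HEADS)] for g in range(NUM_HEADS)]
C[1] = [0.0] * NUM_HEADS
C[1][3] = 0.5
C[1][7] = 0.5

A_new = compose(A, C)
print(A_new[1])  # [[0.5, 0.0, 0.5]]
```

With the identity map the function returns the input unchanged, which corresponds to ordinary MHA. Any other map lets one head's value projection be driven by other heads' attention patterns.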

To maximize expressive power, the researchers want the mapping matrix to be generated dynamically from the input, that is, to determine dynamically how the attention heads are combined.

But they need not just one mapping matrix: a separate matrix has to be generated for each pair of query Q_i at a source position and key K_j at a destination position in the sequence. Generating all of these matrices directly would make both the computational overhead and the memory usage unacceptable.
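A quick back-of-the-envelope count shows why one full map per query-key pair is impractical. The head count of 32 and the 4-byte entry size below are hypothetical values chosen only for illustration, and causal masking (which would roughly halve the number of pairs) is ignored.

```python
def per_pair_map_entries(seq_len, num_heads):
    """Entries needed if every (query, key) pair had its own H x H map."""
    return seq_len * seq_len * num_heads * num_heads

def attention_entries(seq_len, num_heads):
    """Entries in the ordinary attention tensor: one T x T matrix per head."""
    return num_heads * seq_len * seq_len

SEQ_LEN = 2048   # same sequence length as the training runs reported in this article
NUM_HEADS = 32   # hypothetical head count, chosen only for illustration

maps = per_pair_map_entries(SEQ_LEN, NUM_HEADS)
attn = attention_entries(SEQ_LEN, NUM_HEADS)
print(maps)               # 4294967296 entries per layer and per sequence
print(maps // attn)       # 32, i.e. num_heads times the attention tensor
print(maps * 4 / 2**30)   # 16.0 GiB if each entry were a 4-byte float
```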

To this end, they further decompose the mapping matrix into an input-independent static matrix W_b, a low-rank matrix w_1 w_2 and a diagonal matrix Diag(w_g). These are responsible, respectively, for the basic combination, for dynamic combination between attention heads in a limited way (i.e. rank R), and for dynamic gating of each head itself (see Figure 2(d) and Figure 3(b)). The latter two matrices are generated dynamically from the Q matrix and the K matrix.

This reduces the compute and parameter complexity to an almost negligible level without sacrificing effectiveness (see the complexity analysis in the paper for details). Combined with implementation-level optimizations in JAX and PyTorch, DCFormer can train and run inference efficiently.

A key measure of a model architecture is how efficiently it converts computing power into intelligence (or its performance-to-compute ratio), that is, the model performance improvement gained per unit of computing power invested: spending less computing power to get a better model.

From the scaling law curves in Figure 4 and Figure 5 (in logarithmic coordinates, the loss of each model architecture falls approximately along a straight line as computing power changes; the lower the loss, the better the model), it can be seen that DCFormer matches the performance of a Transformer model trained with 1.7 to 2 times the computing power. In other words, the rate at which computing power is converted into intelligence increases by a factor of 1.7 to 2.
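The decomposition can be sketched for a single query-key pair as follows. This is a simplified illustration that assumes the three parts are summed, and it takes the dynamic pieces w1, w2 and w_g as given arguments instead of generating them from Q and K. The function names and the toy numbers are hypothetical.

```python
def compose_pair(a, W_b, w1, w2, w_g):
    """Compose the H per-head attention values of one (query, key) pair.

    a:    H values, one per head, for this pair.
    W_b:  H x H static map, shared by all pairs.
    w1:   H x R and w2: H x R, factors of the low-rank dynamic map.
    w_g:  H dynamic gates, one per head.
    """
    H, R = len(a), len(w1[0])
    # Static part: the same basic combination for every pair.
    base = [sum(a[h] * W_b[h][g] for h in range(H)) for g in range(H)]
    # Low-rank part: project the H values down to R numbers, then back up.
    down = [sum(a[h] * w1[h][r] for h in range(H)) for r in range(R)]
    low_rank = [sum(down[r] * w2[g][r] for r in range(R)) for g in range(H)]
    # Gating part: rescale each head by its own gate.
    gated = [a[g] * w_g[g] for g in range(H)]
    return [base[g] + low_rank[g] + gated[g] for g in range(H)]

def dense_map(W_b, w1, w2, w_g):
    """The explicit H x H map that compose_pair avoids materializing."""
    H, R = len(W_b), len(w1[0])
    return [[W_b[h][g]
             + sum(w1[h][r] * w2[g][r] for r in range(R))
             + (w_g[h] if h == g else 0.0)
             for g in range(H)] for h in range(H)]

# Toy example with H = 3 heads and rank R = 1 (all numbers are made up).
a = [1.0, 2.0, 3.0]
W_b = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
w1 = [[1.0], [0.0], [0.0]]   # read from head 0 ...
w2 = [[0.0], [1.0], [0.0]]   # ... and write into head 1
w_g = [0.0, 0.0, 1.0]        # extra gate on head 2

composed = compose_pair(a, W_b, w1, w2, w_g)
M = dense_map(W_b, w1, w2, w_g)
explicit = [sum(a[h] * M[h][g] for h in range(3)) for g in range(3)]
print(composed)  # [1.0, 3.0, 6.0]
print(explicit)  # [1.0, 3.0, 6.0]
```

Under these assumptions the factored form needs only 2HR + H dynamic numbers per pair, compared with H×H for a full dynamic map, which is where the saving comes from.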
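The way a compute multiplier is read off two straight lines in log-log coordinates can be sketched as below. The intercepts and slope are invented numbers, chosen so that the answer comes out to exactly 2; they are not values fitted from the paper's figures.

```python
import math

def loss_at(compute, intercept, slope):
    """Loss on a log-log line: log(loss) = intercept + slope * log(compute)."""
    return math.exp(intercept + slope * math.log(compute))

def compute_to_reach(loss, intercept, slope):
    """Invert the line: the compute at which this architecture hits `loss`."""
    return math.exp((math.log(loss) - intercept) / slope)

def compute_multiplier(compute, new_arch, baseline):
    """How many times more compute the baseline needs to match new_arch."""
    target = loss_at(compute, *new_arch)
    return compute_to_reach(target, *baseline) / compute

# Hypothetical (intercept, slope) pairs: parallel lines, the new one lower.
BASELINE = (2.0, -0.05)
NEW_ARCH = (2.0 - 0.05 * math.log(2.0), -0.05)

multiplier = compute_multiplier(1e20, NEW_ARCH, BASELINE)
print(round(multiplier, 6))  # 2.0
```

When the two lines are parallel, as in this toy setup, the multiplier is the same at every compute budget. If the slopes differ, it varies with the budget at which the comparison is made.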

Downstream task evaluation

The research team trained two models, DCPythia-2.8B and DCPythia-6.9B, evaluated them on mainstream NLP downstream tasks, and compared them with the open-source Pythia models of the same scale (training used the same hyperparameter settings as Pythia).

△Table 1. Performance of DCFormer and Pythia in downstream tasks

As can be seen from Table 1, DCPythia-2.8B and DCPythia-6.9B not only achieve lower perplexity (ppl) on the Pile validation set, but also significantly outperform Pythia on most downstream tasks. DCPythia-6.9B even surpasses Pythia-12B in both ppl and average downstream accuracy.

DCFormer++2.8B improves further on DCPythia-2.8B, verifying the effectiveness of combining DCMHA with the Llama architecture.

Training and inference speed

Although DCMHA introduces additional training and inference overhead, Table 2 shows that the training speed of DCFormer++ is 74.5%-89.2% of that of Transformer++ and its inference speed is 81.1%-89.7%. As the number of model parameters increases, the relative overhead gradually decreases.

△Table 2. Comparison of training and inference speeds between Transformer++ and DCFormer++

Training speed was compared on a TPU v3 pod with a sequence length of 2048 and a batch_size of 1k; inference speed was evaluated on an A100 80G GPU with an input length of 1024 and a generation length of 128.
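The relative speeds quoted above can be turned into extra wall-clock time with one line of arithmetic. This small sketch assumes that "speed" means throughput relative to Transformer++, so the same workload takes 1/speed times as long; the function name is hypothetical.

```python
def extra_time_fraction(relative_speed):
    """Extra wall-clock time when throughput is relative_speed times the baseline."""
    return 1.0 / relative_speed - 1.0

# Relative speeds quoted in the article for DCFormer++ versus Transformer++.
training = [extra_time_fraction(s) for s in (0.745, 0.892)]
inference = [extra_time_fraction(s) for s in (0.811, 0.897)]

print([f"{x:.1%}" for x in training])   # extra training time at each end of the range
print([f"{x:.1%}" for x in inference])  # extra inference time at each end of the range
```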

Ablation experiment

The results are as follows:

△Table 3. Ablation experiment of DCMHA

The following points can be seen from Table 3:

- Although adding static combination weights can reduce ppl, introducing dynamic combination weights can further reduce ppl, which illustrates the necessity of dynamic combination.

- Low-rank dynamic combination performs better than dynamic gating.

- The ppl obtained by using only query-wise or key-wise dynamic combination is very similar, and the gap with DCFormer++ is very small.

- Doing attention head combination after softmax is more effective than doing it before softmax, probably because the probability after softmax can more directly affect the output.

- The rank of the dynamic combination weights does not need to be large, which suggests that the combination weights are themselves low-rank.
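The difference between combining heads before and after softmax, mentioned in the list above, can be sketched for a single query position. The function names, the two-head scores and the mixing matrix are illustrative assumptions.

```python
import math

def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def mix_heads(rows, C):
    """rows[h][j]: value of head h at key j; C[g][h]: weight of head h in head g."""
    return [[sum(C[g][h] * rows[h][j] for h in range(len(rows)))
             for j in range(len(rows[0]))] for g in range(len(C))]

def attention_weights(scores, C_pre=None, C_post=None):
    """Attention for one query, with optional head mixing on either side of softmax."""
    if C_pre is not None:
        scores = mix_heads(scores, C_pre)      # combine raw scores
    weights = [softmax(row) for row in scores]
    if C_post is not None:
        weights = mix_heads(weights, C_post)   # combine probabilities
    return weights

# Two heads, two keys; head 0 additionally receives head 1's pattern.
scores = [[2.0, 0.0], [0.0, 2.0]]
C = [[1.0, 1.0], [0.0, 1.0]]

pre = attention_weights(scores, C_pre=C)
post = attention_weights(scores, C_post=C)
print(pre[0], sum(pre[0]))    # renormalized by softmax, so the row sums to 1
print(post[0], sum(post[0]))  # probabilities added directly, so the row sums to about 2
```

Mixing before softmax is always renormalized, while mixing after softmax changes the weights that multiply the values directly, which is consistent with the explanation offered in the list above.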

In addition, the researchers also further reduced training and inference overhead by increasing the proportion of local attention layers and only using query-wise dynamic combination. See Table 10 of the paper for details.

Overall, the research team draws two conclusions.

About dynamic weights: recent SSM and linear attention/RNN work such as Mamba, GLA, RWKV6 and HGRN has caught up with Transformer++ by introducing dynamic (input-dependent) weights. DCFormer, by dynamically combining attention heads, shows that introducing dynamic weights can also greatly improve on Transformer++ when softmax attention is used.

About model architecture innovation: this work suggests that if there is an "ideal model architecture" that converts computing power into intelligence with maximal efficiency, the current Transformer architecture, powerful as it is, is probably still far from that ideal, and there remains vast room for improvement. Therefore, besides the brute-force approach of piling up computing power and data, innovation in model architecture also holds great potential.

The research team also stated that Caiyun Technology will be the first to apply DCFormer in its products Caiyun Weather, Caiyun Xiaoyi and Caiyun Xiaomeng.

For more research details, please refer to the original paper.

ICML 2024 paper link: https://icml.cc/virtual/2024/poster/34047

arXiv paper link: https://arxiv.org/abs/2405.08553

Code link: https://github.com/Caiyun-AI/DCFormer

The above is the detailed content of ICML2024 high score! Magically modify attention, allowing small models to fight twice as big models. For more information, please follow other related articles on the PHP Chinese website!

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AM

The Hidden Dangers Of AI Internal Deployment: Governance Gaps And Catastrophic RisksApr 28, 2025 am 11:12 AMThe unchecked internal deployment of advanced AI systems poses significant risks, according to a new report from Apollo Research. This lack of oversight, prevalent among major AI firms, allows for potential catastrophic outcomes, ranging from uncont

Building The AI PolygraphApr 28, 2025 am 11:11 AM

Building The AI PolygraphApr 28, 2025 am 11:11 AMTraditional lie detectors are outdated. Relying on the pointer connected by the wristband, a lie detector that prints out the subject's vital signs and physical reactions is not accurate in identifying lies. This is why lie detection results are not usually adopted by the court, although it has led to many innocent people being jailed. In contrast, artificial intelligence is a powerful data engine, and its working principle is to observe all aspects. This means that scientists can apply artificial intelligence to applications seeking truth through a variety of ways. One approach is to analyze the vital sign responses of the person being interrogated like a lie detector, but with a more detailed and precise comparative analysis. Another approach is to use linguistic markup to analyze what people actually say and use logic and reasoning. As the saying goes, one lie breeds another lie, and eventually

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AM

Is AI Cleared For Takeoff In The Aerospace Industry?Apr 28, 2025 am 11:10 AMThe aerospace industry, a pioneer of innovation, is leveraging AI to tackle its most intricate challenges. Modern aviation's increasing complexity necessitates AI's automation and real-time intelligence capabilities for enhanced safety, reduced oper

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AM

Watching Beijing's Spring Robot RaceApr 28, 2025 am 11:09 AMThe rapid development of robotics has brought us a fascinating case study. The N2 robot from Noetix weighs over 40 pounds and is 3 feet tall and is said to be able to backflip. Unitree's G1 robot weighs about twice the size of the N2 and is about 4 feet tall. There are also many smaller humanoid robots participating in the competition, and there is even a robot that is driven forward by a fan. Data interpretation The half marathon attracted more than 12,000 spectators, but only 21 humanoid robots participated. Although the government pointed out that the participating robots conducted "intensive training" before the competition, not all robots completed the entire competition. Champion - Tiangong Ult developed by Beijing Humanoid Robot Innovation Center

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AM

The Mirror Trap: AI Ethics And The Collapse Of Human ImaginationApr 28, 2025 am 11:08 AMArtificial intelligence, in its current form, isn't truly intelligent; it's adept at mimicking and refining existing data. We're not creating artificial intelligence, but rather artificial inference—machines that process information, while humans su

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AM

New Google Leak Reveals Handy Google Photos Feature UpdateApr 28, 2025 am 11:07 AMA report found that an updated interface was hidden in the code for Google Photos Android version 7.26, and each time you view a photo, a row of newly detected face thumbnails are displayed at the bottom of the screen. The new facial thumbnails are missing name tags, so I suspect you need to click on them individually to see more information about each detected person. For now, this feature provides no information other than those people that Google Photos has found in your images. This feature is not available yet, so we don't know how Google will use it accurately. Google can use thumbnails to speed up finding more photos of selected people, or may be used for other purposes, such as selecting the individual to edit. Let's wait and see. As for now

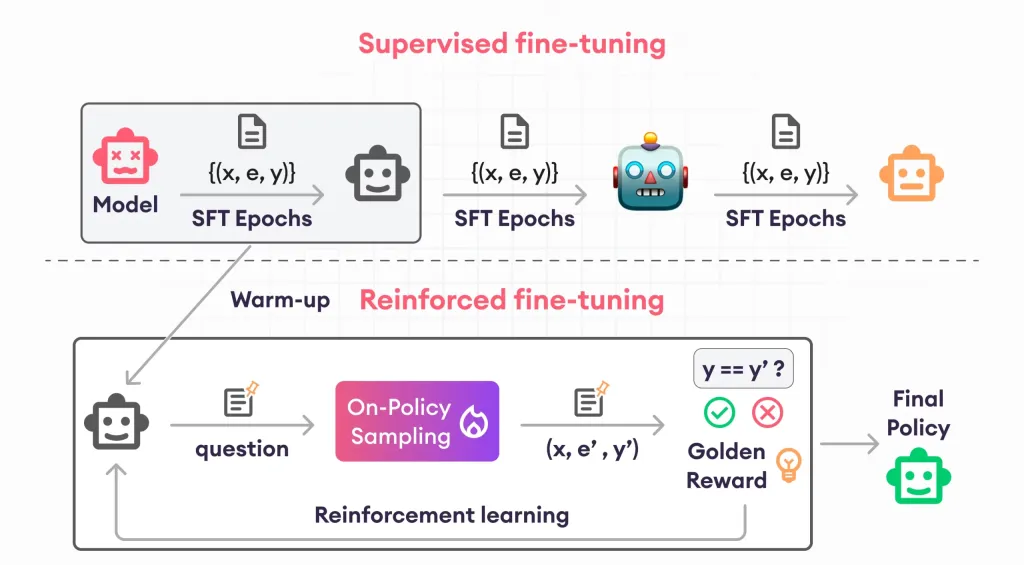

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AM

Guide to Reinforcement Finetuning - Analytics VidhyaApr 28, 2025 am 09:30 AMReinforcement finetuning has shaken up AI development by teaching models to adjust based on human feedback. It blends supervised learning foundations with reward-based updates to make them safer, more accurate, and genuinely help

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AM

Let's Dance: Structured Movement To Fine-Tune Our Human Neural NetsApr 27, 2025 am 11:09 AMScientists have extensively studied human and simpler neural networks (like those in C. elegans) to understand their functionality. However, a crucial question arises: how do we adapt our own neural networks to work effectively alongside novel AI s

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

Dreamweaver Mac version

Visual web development tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

SublimeText3 Mac version

God-level code editing software (SublimeText3)