###第一步,并发消费###

先看代码,重点是这我们使用的是ConcurrentKafkaListenerContainerFactory并且设置了factory.setConcurrency(4); (我的topic有4个分区,为了加快消费将并发设置为4,也就是有4个KafkaMessageListenerContainer)

@Bean

KafkaListenerContainerFactory<ConcurrentMessageListenerContainer<String, String>> kafkaListenerContainerFactory() {

ConcurrentKafkaListenerContainerFactory<String, String> factory = new ConcurrentKafkaListenerContainerFactory<>();

factory.setConsumerFactory(consumerFactory());

factory.setConcurrency(4);

factory.setBatchListener(true);

factory.getContainerProperties().setPollTimeout(3000);

return factory;

}注意也可以直接在application.properties中添加spring.kafka.listener.concurrency=3,然后使用@KafkaListener并发消费。

###第二步,批量消费###

然后是批量消费。重点是factory.setBatchListener(true);

以及 propsMap.put(ConsumerConfig.MAX_POLL_RECORDS_CONFIG, 50);

一个设启用批量消费,一个设置批量消费每次最多消费多少条消息记录。

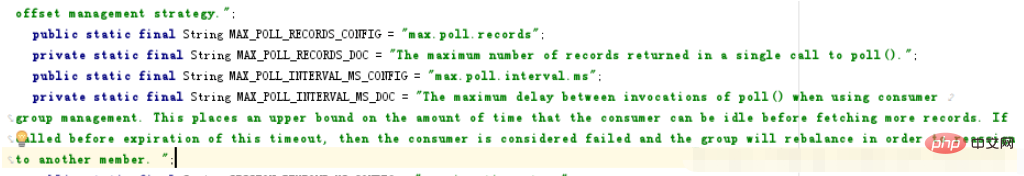

重点说明一下,我们设置的ConsumerConfig.MAX_POLL_RECORDS_CONFIG是50,并不是说如果没有达到50条消息,我们就一直等待。官方的解释是"The maximum number of records returned in a single call to poll().", 也就是50表示的是一次poll最多返回的记录数。

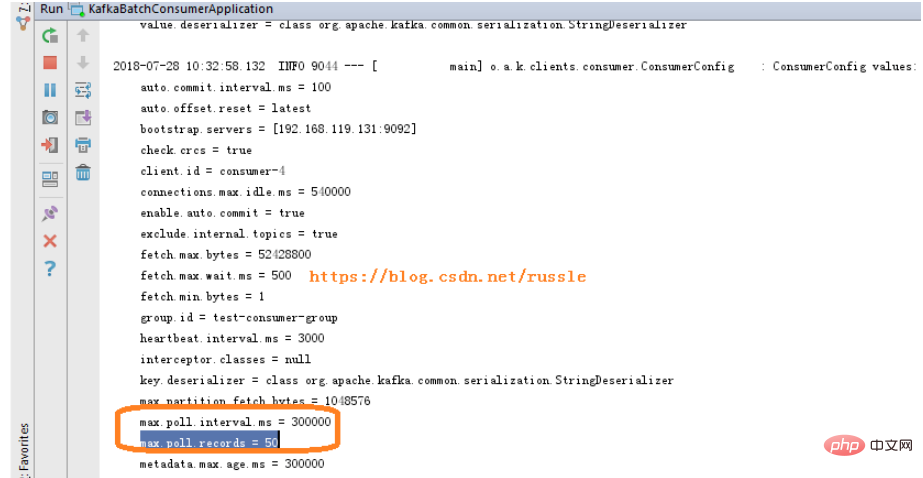

从启动日志中可以看到还有个 max.poll.interval.ms = 300000, 也就说每间隔max.poll.interval.ms我们就调用一次poll。每次poll最多返回50条记录。

max.poll.interval.ms官方解释是"The maximum delay between invocations of poll() when using consumer group management. This places an upper bound on the amount of time that the consumer can be idle before fetching more records. If poll() is not called before expiration of this timeout, then the consumer is considered failed and the group will rebalance in order to reassign the partitions to another member. ";

@Bean

KafkaListenerContainerFactory<ConcurrentMessageListenerContainer<String, String>> kafkaListenerContainerFactory() {

ConcurrentKafkaListenerContainerFactory<String, String> factory = new ConcurrentKafkaListenerContainerFactory<>();

factory.setConsumerFactory(consumerFactory());

factory.setConcurrency(4);

factory.setBatchListener(true);

factory.getContainerProperties().setPollTimeout(3000);

return factory;

}

@Bean

public Map consumerConfigs() {

Map propsMap = new HashMap<>();

propsMap.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, propsConfig.getBroker());

propsMap.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, propsConfig.getEnableAutoCommit());

propsMap.put(ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG, "100");

propsMap.put(ConsumerConfig.SESSION_TIMEOUT_MS_CONFIG, "15000");

propsMap.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);

propsMap.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);

propsMap.put(ConsumerConfig.GROUP_ID_CONFIG, propsConfig.getGroupId());

propsMap.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, propsConfig.getAutoOffsetReset());

propsMap.put(ConsumerConfig.MAX_POLL_RECORDS_CONFIG, 50);

return propsMap;

} 启动日志截图

关于max.poll.records和max.poll.interval.ms官方解释截图:

###第三步,分区消费###

对于只有一个分区的topic,不需要分区消费,因为没有意义。下面的例子是针对有2个分区的情况(我的完整代码中有4个listenPartitionX方法,我的topic设置了4个分区),读者可以根据自己的情况进行调整。

public class MyListener {

private static final String TPOIC = "topic02";

@KafkaListener(id = "id0", topicPartitions = { @TopicPartition(topic = TPOIC, partitions = { "0" }) })

public void listenPartition0(List<ConsumerRecord<?, ?>> records) {

log.info("Id0 Listener, Thread ID: " + Thread.currentThread().getId());

log.info("Id0 records size " + records.size());

for (ConsumerRecord<?, ?> record : records) {

Optional<?> kafkaMessage = Optional.ofNullable(record.value());

log.info("Received: " + record);

if (kafkaMessage.isPresent()) {

Object message = record.value();

String topic = record.topic();

log.info("p0 Received message={}", message);

}

}

}

@KafkaListener(id = "id1", topicPartitions = { @TopicPartition(topic = TPOIC, partitions = { "1" }) })

public void listenPartition1(List<ConsumerRecord<?, ?>> records) {

log.info("Id1 Listener, Thread ID: " + Thread.currentThread().getId());

log.info("Id1 records size " + records.size());

for (ConsumerRecord<?, ?> record : records) {

Optional<?> kafkaMessage = Optional.ofNullable(record.value());

log.info("Received: " + record);

if (kafkaMessage.isPresent()) {

Object message = record.value();

String topic = record.topic();

log.info("p1 Received message={}", message);

}

}

}以上是Spring Boot中怎么使用@KafkaListener并发批量接收消息的详细内容。更多信息请关注PHP中文网其他相关文章!

热AI工具

Undresser.AI Undress

人工智能驱动的应用程序,用于创建逼真的裸体照片

AI Clothes Remover

用于从照片中去除衣服的在线人工智能工具。

Undress AI Tool

免费脱衣服图片

Clothoff.io

AI脱衣机

AI Hentai Generator

免费生成ai无尽的。

热门文章

热工具

SecLists

SecLists是最终安全测试人员的伙伴。它是一个包含各种类型列表的集合,这些列表在安全评估过程中经常使用,都在一个地方。SecLists通过方便地提供安全测试人员可能需要的所有列表,帮助提高安全测试的效率和生产力。列表类型包括用户名、密码、URL、模糊测试有效载荷、敏感数据模式、Web shell等等。测试人员只需将此存储库拉到新的测试机上,他就可以访问到所需的每种类型的列表。

SublimeText3汉化版

中文版,非常好用

禅工作室 13.0.1

功能强大的PHP集成开发环境

Atom编辑器mac版下载

最流行的的开源编辑器

MinGW - 适用于 Windows 的极简 GNU

这个项目正在迁移到osdn.net/projects/mingw的过程中,你可以继续在那里关注我们。MinGW:GNU编译器集合(GCC)的本地Windows移植版本,可自由分发的导入库和用于构建本地Windows应用程序的头文件;包括对MSVC运行时的扩展,以支持C99功能。MinGW的所有软件都可以在64位Windows平台上运行。