Hello Community,

In this article, I will introduce my application iris-RAG-Gen.

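Iris-RAG-Gen is a generative AI Retrieval-Augmented Generation (RAG) application. It uses IRIS Vector Search, together with the Streamlit web framework, LangChain and OpenAI, to personalize ChatGPT. The application uses IRIS as its vector store.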

Application Features

- Ingest documents (PDF or TXT) into IRIS

- Chat with the selected ingested document

- Delete ingested documents

- OpenAI ChatGPT

Ingest Documents (PDF or TXT) into IRIS

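Follow these steps to ingest a document:

- Enter your OpenAI key

- Select a document (PDF or TXT)

- Enter a description for the document

- Click the "Ingest Document" button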
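The ingestion code that follows checks `fileType` against two MIME types, `text/plain` and `application/pdf`. Here is a minimal sketch of how such a value can be derived and checked. It assumes the type is guessed from the file name with Python's standard `mimetypes` module; the app itself may take it from the upload widget instead. The `LOADERS` table and the `pick_loader` helper are hypothetical names used only for illustration.

```python
import mimetypes

# Hypothetical mapping from MIME type to the LangChain loader class used for it.
LOADERS = {
    "text/plain": "TextLoader",
    "application/pdf": "PyPDFLoader",
}

def pick_loader(filename: str) -> str:
    """Return the loader name for a file, or raise ValueError if the type is unsupported."""
    mime_type, _encoding = mimetypes.guess_type(filename)
    if mime_type not in LOADERS:
        raise ValueError(f"Unsupported file type: {mime_type!r} ({filename})")
    return LOADERS[mime_type]

print(pick_loader("manual.pdf"))  # PyPDFLoader
print(pick_loader("notes.txt"))   # TextLoader
```

Rejecting unknown types up front avoids reaching the loading step with no loader selected.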

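The Ingest Document function inserts the document details into the rag_documents table. It then creates a table named "rag_document" followed by the document's ID in rag_documents (for example, rag_document2) to hold the vector data.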
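Here is a minimal sketch of this naming scheme. It uses Python's built-in `sqlite3` as a stand-in for IRIS: SQLite's `lastrowid` plays the role of IRIS's `SELECT LAST_IDENTITY()`, and the returned name follows the same `"rag_document" + id` pattern. It mirrors only the metadata bookkeeping. In the real application, the vector table itself is created by `IRISVector.from_documents`. The function name `register_document` is hypothetical.

```python
import sqlite3

def register_document(conn: sqlite3.Connection, description: str, doc_type: str) -> str:
    """Insert a row into rag_documents and return the per-document collection name."""
    # IRIS provides the row ID automatically; in SQLite we declare it explicitly.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS rag_documents ("
        " id INTEGER PRIMARY KEY AUTOINCREMENT,"
        " description VARCHAR(255),"
        " docType VARCHAR(50))"
    )
    cur = conn.execute(
        "INSERT INTO rag_documents (description, docType) VALUES (?, ?)",
        (description, doc_type),
    )
    conn.commit()
    # cur.lastrowid stands in for SELECT LAST_IDENTITY() in IRIS.
    return "rag_document" + str(cur.lastrowid)

conn = sqlite3.connect(":memory:")
print(register_document(conn, "Product manual", "application/pdf"))  # rag_document1
print(register_document(conn, "Meeting notes", "text/plain"))        # rag_document2
```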

The following Python code saves the selected document as vectors:

```python
from langchain.text_splitter import RecursiveCharacterTextSplitter
from langchain.document_loaders import PyPDFLoader, TextLoader
from langchain_iris import IRISVector
from langchain_openai import OpenAIEmbeddings
from sqlalchemy import create_engine, text


class RagOpr:
    # self.CONNECTION_STRING and self.engine (a SQLAlchemy engine, e.g. from
    # create_engine) are assumed to be initialized elsewhere in the class.

    # Ingest a document. Parameters: file path, description and file type (MIME type)
    def ingestDoc(self, filePath, fileDesc, fileType):
        embeddings = OpenAIEmbeddings()
        # Load the document based on the file type
        if fileType == "text/plain":
            loader = TextLoader(filePath)
        elif fileType == "application/pdf":
            loader = PyPDFLoader(filePath)
        else:
            raise ValueError(f"Unsupported file type: {fileType}")
        # Load data into documents
        documents = loader.load()
        text_splitter = RecursiveCharacterTextSplitter(chunk_size=400, chunk_overlap=0)
        # Split text into chunks
        texts = text_splitter.split_documents(documents)
        # Get the collection name from the rag_documents table
        COLLECTION_NAME = self.get_collection_name(fileDesc, fileType)
        if not COLLECTION_NAME:
            return None  # registering the document failed; the error was printed
        # Create the collection table and store the vector data in it
        db = IRISVector.from_documents(
            embedding=embeddings,
            documents=texts,
            collection_name=COLLECTION_NAME,
            connection_string=self.CONNECTION_STRING,
        )
        return db

    # Register the document in rag_documents and return its collection (table) name
    def get_collection_name(self, fileDesc, fileType):
        # Check whether the rag_documents table exists; if not, create it
        with self.engine.connect() as conn:
            with conn.begin():
                sql = text("""
                    SELECT *
                    FROM INFORMATION_SCHEMA.TABLES
                    WHERE TABLE_SCHEMA = 'SQLUser'
                    AND TABLE_NAME = 'rag_documents'
                """)
                result = []
                try:
                    result = conn.execute(sql).fetchall()
                except Exception as err:
                    print("An exception occurred:", err)
                    return ''
                # If the table does not exist yet, create rag_documents first
                if len(result) == 0:
                    sql = text("""
                        CREATE TABLE rag_documents (
                            description VARCHAR(255),
                            docType VARCHAR(50) )
                    """)
                    try:
                        result = conn.execute(sql)
                    except Exception as err:
                        print("An exception occurred:", err)
                        return ''
        # Insert the description value
        with self.engine.connect() as conn:
            with conn.begin():
                sql = text("""
                    INSERT INTO rag_documents
                    (description, docType)
                    VALUES (:desc, :ftype)
                """)
                try:
                    result = conn.execute(sql, {'desc': fileDesc, 'ftype': fileType})
                except Exception as err:
                    print("An exception occurred:", err)
                    return ''
                # Select the ID of the last inserted record
                sql = text("""
                    SELECT LAST_IDENTITY()
                """)
                try:
                    result = conn.execute(sql).fetchall()
                except Exception as err:
                    print("An exception occurred:", err)
                    return ''
        return "rag_document" + str(result[0][0])
```
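`RecursiveCharacterTextSplitter(chunk_size=400, chunk_overlap=0)` caps each chunk at roughly 400 characters, with no overlap between neighbouring chunks. LangChain's splitter is smarter than a fixed window: it tries to break on paragraphs, then lines, then words. The sketch below is a deliberately simplified fixed-window splitter, not LangChain's algorithm. It is meant only to show what the two parameters control.

```python
def split_fixed(text: str, chunk_size: int = 400, chunk_overlap: int = 0) -> list[str]:
    """Split text into windows of at most chunk_size characters.

    Consecutive windows share chunk_overlap characters. This is a simplified
    stand-in for RecursiveCharacterTextSplitter, which prefers natural separators.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    step = chunk_size - chunk_overlap
    return [text[i:i + chunk_size] for i in range(0, len(text), step)]

sample = "x" * 1000
print([len(c) for c in split_fixed(sample)])            # [400, 400, 200]
print([len(c) for c in split_fixed(sample, 400, 100)])  # [400, 400, 400, 100]
```

With `chunk_overlap=0`, as in the ingestion code, each character lands in exactly one chunk. A positive overlap repeats some text at chunk boundaries so that a sentence cut in half still appears whole in at least one chunk.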

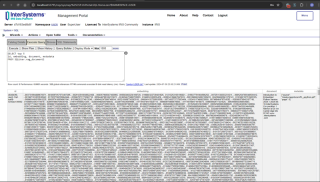

To retrieve the vector data (here for the document with ID 2), enter the following SQL command in the Management Portal:

```sql
SELECT TOP 5 id, embedding, document, metadata FROM SQLUser.rag_document2
```

Chat with the Selected Ingested Document

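Select a document in the chat options section and enter a question. The application reads the document's vector data and returns a relevant answer.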
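Conceptually, the similarity search embeds the question and returns the stored chunks whose vectors lie closest to it. The sketch below shows the idea with cosine similarity over tiny hand-made vectors in plain Python. The real application uses OpenAI embeddings and lets IRIS perform the search. This sketch does not claim to reproduce the exact scoring of `similarity_search_with_score` in langchain-iris (similarity versus distance). The `top_k` helper and the toy vectors are hypothetical.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_k(query: list[float], chunks: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Return the k chunk texts most similar to the query, best first."""
    scored = [(text, cosine(query, vec)) for text, vec in chunks.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Toy 3-dimensional "embeddings" standing in for real OpenAI vectors.
chunks = {
    "IRIS supports vector search.": [0.9, 0.1, 0.0],
    "Streamlit builds web UIs.":    [0.1, 0.9, 0.0],
    "Vectors are stored in IRIS.":  [0.8, 0.2, 0.1],
}
query = [1.0, 0.0, 0.0]
for text, score in top_k(query, chunks):
    print(f"{score:.3f}  {text}")
```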

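The following Python code retrieves the relevant chunks from the selected document's vector store and passes them to the LLM to generate an answer: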

```python
from langchain_iris import IRISVector
from langchain_openai import OpenAIEmbeddings, ChatOpenAI
from langchain.chains import ConversationChain
from langchain.chains.conversation.memory import ConversationSummaryMemory


class RagOpr:
    # (ragSearch method of the same RagOpr class; self.CONNECTION_STRING is set elsewhere)

    def ragSearch(self, prompt, id):
        # Concatenate the document ID with "rag_document" to get the collection name
        COLLECTION_NAME = "rag_document" + str(id)
        embeddings = OpenAIEmbeddings()
        # Get a reference to the existing vector store
        db2 = IRISVector(
            embedding_function=embeddings,
            collection_name=COLLECTION_NAME,
            connection_string=self.CONNECTION_STRING,
        )
        # Similarity search: returns (document, score) pairs
        docs_with_score = db2.similarity_search_with_score(prompt)
        # Prepare the retrieved text to pass to the LLM
        relevant_docs = " ".join(doc.page_content for doc, _ in docs_with_score)
        # Initialize the LLM
        llm = ChatOpenAI(
            temperature=0,
            model_name="gpt-3.5-turbo",
        )
        # Manage LangChain multi-turn conversations. The summary memory is created
        # per call here, so history is not carried over between ragSearch calls.
        conversation_sum = ConversationChain(
            llm=llm,
            memory=ConversationSummaryMemory(llm=llm),
            verbose=False,
        )
        # Create the prompt
        template = f"""
        Prompt: {prompt}
        Relevant Documents: {relevant_docs}
        """
        # Return the answer
        resp = conversation_sum.invoke({"input": template})
        return resp["response"]
```
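The prompt handed to the conversation chain is plain string assembly: the user's question followed by the text of the retrieved chunks. Here is a minimal sketch of that step. It assumes the retrieved results arrive as `(document, score)` pairs whose documents expose `page_content`, as LangChain documents do. The `Chunk` dataclass and the `build_prompt` function are hypothetical stand-ins so the example runs without LangChain or a database.

```python
from dataclasses import dataclass

@dataclass
class Chunk:
    """Hypothetical stand-in for a LangChain Document; only page_content is used."""
    page_content: str

def build_prompt(prompt: str, docs_with_score: list[tuple[Chunk, float]]) -> str:
    """Combine the question with the retrieved chunk texts, ignoring the scores."""
    relevant_docs = " ".join(doc.page_content for doc, _score in docs_with_score)
    return f"Prompt: {prompt}\nRelevant Documents: {relevant_docs}\n"

results = [(Chunk("IRIS stores vectors."), 0.12), (Chunk("Search uses embeddings."), 0.34)]
print(build_prompt("Where are vectors stored?", results))
```

Joining the chunk texts into a single string keeps Python list syntax (brackets and quotes) out of the text sent to the model.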

For more details, please visit the iris-RAG-Gen application page on Open Exchange.

Thanks

