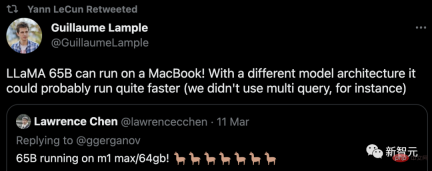

前不久,Meta前腳發布完開源大語言模型LLaMA,後腳就被網友放出了無門檻下載鏈接,“慘遭”開放。

訊息一出,圈內瞬間就熱鬧了起來,大家紛紛開始下載測試。

但那些手邊沒有頂級顯示卡的朋友們,就只能望模型興嘆了。

不過,問題不大。 Georgi Gerganov在最近做了一個名為「llama.cpp」的計畫-沒有GPU也能跑LLaMA。

計畫網址:https://github.com/ggerganov/llama.cpp

是的,這也包括搭載了蘋果晶片的Mac。並且也獲得了LeCun的轉發支援。

在M1/M2的Mac上跑LLaMA

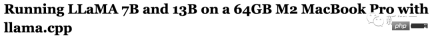

目前來說,比較全面的教學有兩個,分別以蘋果的M1和M2處理器為基礎。

第一篇:https://dev.l1x.be/posts/2023/03/ 12/using-llama-with-m1-mac/

#第二篇:https ://til.simonwillison.net/llms/llama-7b-m2

#話不多說,先看效果。

例如在最小的7B模型上提問:「第一個登上月球的人是」。

很快,幾秒之後,結果就出來了。

-p '第一個登上月球的人是'

第一個登上月球的人是38歲的太空人Neil A. Armstrong。

1969年7月20日,阿波羅11號在月球上著陸。

阿波羅11號是第一個登陸月球的載人任務,也是太空競賽的高潮。 1969年7月,Neil Armstrong和Edwin "Buzz" Aldrin成為第一批在月球上登陸的人類。

阿波羅11號於7月16日從甘迺迪太空中心發射。

從阿姆斯壯登月的年齡、中間名和日期來看,並沒有出現明顯的事實性錯誤。這對於如此體量的小模型來說,實屬不易。

如果使用下面這個提示,可以產生了一些實用的Python程式碼。

-p 'def open_and_return_content(filename):'

def open_and_return_content(filename):

"""Opens file (returning the content) and performs basic sanity checks"""if os.path.isfile(filename):

with open(filename) as f:

content = f.read()

return contentelse:

print('WARNING: file "{}" does not exist'.format(filename), file=sys.stderr)

return ''def get_file_info(filename, fullpath):

"""Get file information (i.e., permission, owner, group, size)"""接下来,我们就来看看具体是如何实现的。

第一步:下載模型

首先要做的就是下載LLaMA模型。

你可以透過官方的表格向Meta提交申請,或是從網友分享的連結直接取得。

總之,完成後你會看到下面這堆東西:

正如你所看到的,不同的模型都在不同的資料夾裡。每個模型都有一個params.json,包含關於該模型的細節。例如:

第二步:安裝依賴項

首先,你需要安装Xcode来编译C++项目。

xcode-select --install

接下来,是构建C++项目的依赖项(pkgconfig和cmake)。

brew install pkgconfig cmake

在环境的配置上,假如你用的是Python 3.11,则可以创建一个虚拟环境:

/opt/homebrew/bin/python3.11 -m venv venv

然后激活venv。(如果是fish以外的shell,只要去掉.fish后缀即可)

. venv/bin/activate.fish

最后,安装Torch。

pip3 install --pre torch torchvision --extra-index-url https://download.pytorch.org/whl/nightly/cpu

如果你对利用新的Metal性能着色器(MPS)后端进行GPU训练加速感兴趣,可以通过运行以下程序来进行验证。但这不是在M1上运行LLaMA的必要条件。

python Python 3.11.2 (main, Feb 16 2023, 02:55:59) [Clang 14.0.0 (clang-1400.0.29.202)] on darwin Type "help", "copyright", "credits" or "license" for more information. >>> import torch; torch.backends.mps.is_available()True

第三步:编译LLaMA CPP

git clone git@github.com:ggerganov/llama.cpp.git

在安装完所有的依赖项后,你可以运行make:

make I llama.cpp build info: I UNAME_S:Darwin I UNAME_P:arm I UNAME_M:arm64 I CFLAGS: -I.-O3 -DNDEBUG -std=c11 -fPIC -pthread -DGGML_USE_ACCELERATE I CXXFLAGS: -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -pthread I LDFLAGS: -framework Accelerate I CC: Apple clang version 14.0.0 (clang-1400.0.29.202)I CXX:Apple clang version 14.0.0 (clang-1400.0.29.202) cc-I.-O3 -DNDEBUG -std=c11 -fPIC -pthread -DGGML_USE_ACCELERATE -c ggml.c -o ggml.o c++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -pthread -c utils.cpp -o utils.o c++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -pthread main.cpp ggml.o utils.o -o main-framework Accelerate ./main -h usage: ./main [options] options: -h, --helpshow this help message and exit -s SEED, --seed SEEDRNG seed (default: -1) -t N, --threads N number of threads to use during computation (default: 4) -p PROMPT, --prompt PROMPT prompt to start generation with (default: random) -n N, --n_predict N number of tokens to predict (default: 128) --top_k N top-k sampling (default: 40) --top_p N top-p sampling (default: 0.9) --temp Ntemperature (default: 0.8) -b N, --batch_size Nbatch size for prompt processing (default: 8) -m FNAME, --model FNAME model path (default: models/llama-7B/ggml-model.bin) c++ -I. -I./examples -O3 -DNDEBUG -std=c++11 -fPIC -pthread quantize.cpp ggml.o utils.o -o quantize-framework Accelerate

第四步:转换模型

假设你已经把模型放在llama.cpp repo中的models/下。

python convert-pth-to-ggml.py models/7B 1

那么,应该会看到像这样的输出:

{'dim': 4096, 'multiple_of': 256, 'n_heads': 32, 'n_layers': 32, 'norm_eps': 1e-06, 'vocab_size': 32000}n_parts =1Processing part0Processing variable: tok_embeddings.weight with shape:torch.Size([32000, 4096])and type:torch.float16

Processing variable: norm.weight with shape:torch.Size([4096])and type:torch.float16

Converting to float32

Processing variable: output.weight with shape:torch.Size([32000, 4096])and type:torch.float16

Processing variable: layers.0.attention.wq.weight with shape:torch.Size([4096, 4096])and type:torch.f

loat16

Processing variable: layers.0.attention.wk.weight with shape:torch.Size([4096, 4096])and type:torch.f

loat16

Processing variable: layers.0.attention.wv.weight with shape:torch.Size([4096, 4096])and type:torch.f

loat16

Processing variable: layers.0.attention.wo.weight with shape:torch.Size([4096, 4096])and type:torch.f

loat16

Processing variable: layers.0.feed_forward.w1.weight with shape:torch.Size([11008, 4096])and type:tor

ch.float16

Processing variable: layers.0.feed_forward.w2.weight with shape:torch.Size([4096, 11008])and type:tor

ch.float16

Processing variable: layers.0.feed_forward.w3.weight with shape:torch.Size([11008, 4096])and type:tor

ch.float16

Processing variable: layers.0.attention_norm.weight with shape:torch.Size([4096])and type:torch.float

16...

Done. Output file: models/7B/ggml-model-f16.bin, (part0 )下一步将是进行量化处理:

./quantize ./models/7B/ggml-model-f16.bin ./models/7B/ggml-model-q4_0.bin 2

输出如下:

llama_model_quantize: loading model from './models/7B/ggml-model-f16.bin'llama_model_quantize: n_vocab = 32000llama_model_quantize: n_ctx = 512llama_model_quantize: n_embd= 4096llama_model_quantize: n_mult= 256llama_model_quantize: n_head= 32llama_model_quantize: n_layer = 32llama_model_quantize: f16 = 1... layers.31.attention_norm.weight - [ 4096, 1], type =f32 size =0.016 MB layers.31.ffn_norm.weight - [ 4096, 1], type =f32 size =0.016 MB llama_model_quantize: model size= 25705.02 MB llama_model_quantize: quant size=4017.27 MB llama_model_quantize: hist: 0.000 0.022 0.019 0.033 0.053 0.078 0.104 0.125 0.134 0.125 0.104 0.078 0.053 0.033 0.019 0.022 main: quantize time = 29389.45 ms main:total time = 29389.45 ms

第五步:运行模型

./main -m ./models/7B/ggml-model-q4_0.bin -t 8 -n 128 -p 'The first president of the USA was '

main: seed = 1678615879llama_model_load: loading model from './models/7B/ggml-model-q4_0.bin' - please wait ... llama_model_load: n_vocab = 32000llama_model_load: n_ctx = 512llama_model_load: n_embd= 4096llama_model_load: n_mult= 256llama_model_load: n_head= 32llama_model_load: n_layer = 32llama_model_load: n_rot = 128llama_model_load: f16 = 2llama_model_load: n_ff= 11008llama_model_load: n_parts = 1llama_model_load: ggml ctx size = 4529.34 MB llama_model_load: memory_size = 512.00 MB, n_mem = 16384llama_model_load: loading model part 1/1 from './models/7B/ggml-model-q4_0.bin'llama_model_load: .................................... donellama_model_load: model size =4017.27 MB / num tensors = 291 main: prompt: 'The first president of the USA was 'main: number of tokens in prompt = 9 1 -> ''1576 -> 'The' 937 -> ' first'6673 -> ' president' 310 -> ' of' 278 -> ' the'8278 -> ' USA' 471 -> ' was' 29871 -> ' ' sampling parameters: temp = 0.800000, top_k = 40, top_p = 0.950000 The first president of the USA was 57 years old when he assumed office (George Washington). Nowadays, the US electorate expects the new president to be more young at heart. President Donald Trump was 70 years old when he was inaugurated. In contrast to his predecessors, he is physically fit, healthy and active. And his fitness has been a prominent theme of his presidency. During the presidential campaign, he famously said he would be the “most active president ever” — a statement Trump has not yet achieved, but one that fits his approach to the office. His tweets demonstrate his physical activity. main: mem per token = 14434244 bytes main: load time =1311.74 ms main: sample time = 278.96 ms main:predict time =7375.89 ms / 54.23 ms per token main:total time =9216.61 ms

资源使用情况

第二位博主表示,在运行时,13B模型使用了大约4GB的内存,以及748%的CPU。(设定的就是让模型使用8个CPU核心)

没有指令微调

GPT-3和ChatGPT效果如此之好的关键原因之一是,它们都经过了指令微调,

这种额外的训练使它们有能力对人类的指令做出有效的反应。比如「总结一下这个」或「写一首关于水獭的诗」或「从这篇文章中提取要点」。

撰写教程的博主表示,据他观察,LLaMA并没有这样的能力。

也就是说,给LLaMA的提示需要采用经典的形式:「一些将由......完成的文本」。这也让提示工程变得更加困难。

举个例子,博主至今都还没有想出一个正确的提示,从而让LLaMA实现文本的总结。

以上是LeCun轉讚:在蘋果M1/M2晶片上跑LLaMA! 130億參數模型僅需4GB內存的詳細內容。更多資訊請關注PHP中文網其他相關文章!

通過AI和NLG進行財務報告 - 分析VidhyaApr 15, 2025 am 10:35 AM

通過AI和NLG進行財務報告 - 分析VidhyaApr 15, 2025 am 10:35 AMAI驅動的財務報告:通過自然語言產生革新見解 在當今動態的業務環境中,準確及時的財務分析對於戰略決策至關重要。 傳統財務報告

這款Google DeepMind機器人會在2028年奧運會上演奏嗎?Apr 15, 2025 am 10:16 AM

這款Google DeepMind機器人會在2028年奧運會上演奏嗎?Apr 15, 2025 am 10:16 AMGoogle DeepMind的乒乓球機器人:體育和機器人技術的新時代 巴黎2024年奧運會可能已經結束,但是由於Google DeepMind,運動和機器人技術的新時代正在興起。 他們的開創性研究(“實現人類水平的競爭

使用Gemini Flash 1.5型號構建食物視覺網絡應用Apr 15, 2025 am 10:15 AM

使用Gemini Flash 1.5型號構建食物視覺網絡應用Apr 15, 2025 am 10:15 AM雙子座閃光燈1.5解鎖效率和可伸縮性:燒瓶食物視覺webapp 在快速發展的AI景觀中,效率和可擴展性至關重要。 開發人員越來越多地尋求高性能模型,以最大程度地減少成本和延遲

使用LlamainDex實施AI代理Apr 15, 2025 am 10:11 AM

使用LlamainDex實施AI代理Apr 15, 2025 am 10:11 AM利用LlamainDex的AI特工的力量:逐步指南 想像一下,一個私人助理了解您的要求並完美地執行它們,無論是快速計算還是檢索最新的市場新聞。本文探索

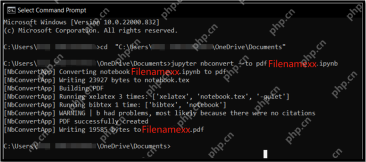

將.ipynb文件轉換為PDF- Analytics Vidhya的5種方法Apr 15, 2025 am 10:06 AM

將.ipynb文件轉換為PDF- Analytics Vidhya的5種方法Apr 15, 2025 am 10:06 AMJupyter Notebook (.ipynb) 文件廣泛用於數據分析、科學計算和交互式編碼。雖然這些 Notebook 非常適合開發和與其他數據科學家共享代碼,但有時您需要將其轉換為更普遍易讀的格式,例如 PDF。本指南將引導您逐步了解將 .ipynb 文件轉換為 PDF 的各種方法,以及技巧、最佳實踐和故障排除建議。 目錄 為什麼將 .ipynb 轉換為 PDF? 將 .ipynb 文件轉換為 PDF 的方法 使用 Jupyter Notebook UI 使用 nbconve

LLM量化和用例的綜合指南Apr 15, 2025 am 10:02 AM

LLM量化和用例的綜合指南Apr 15, 2025 am 10:02 AM介紹 大型語言模型(LLM)正在徹底改變自然語言處理,但它們的巨大規模和計算要求限制了部署。 量化是一種縮小模型和降低計算成本的技術,是至關重要的

python的硒綜合指南Apr 15, 2025 am 09:57 AM

python的硒綜合指南Apr 15, 2025 am 09:57 AM介紹 本指南探討了用於Web自動化和測試的Selenium和Python的強大組合。 Selenium可自動化瀏覽器交互,從而顯著提高了大型Web應用程序的測試效率。 本教程重點o

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

AI Hentai Generator

免費產生 AI 無盡。

熱門文章

熱工具

VSCode Windows 64位元 下載

微軟推出的免費、功能強大的一款IDE編輯器

EditPlus 中文破解版

體積小,語法高亮,不支援程式碼提示功能

SublimeText3 Linux新版

SublimeText3 Linux最新版

Dreamweaver CS6

視覺化網頁開發工具

DVWA

Damn Vulnerable Web App (DVWA) 是一個PHP/MySQL的Web應用程序,非常容易受到攻擊。它的主要目標是成為安全專業人員在合法環境中測試自己的技能和工具的輔助工具,幫助Web開發人員更好地理解保護網路應用程式的過程,並幫助教師/學生在課堂環境中教授/學習Web應用程式安全性。 DVWA的目標是透過簡單直接的介面練習一些最常見的Web漏洞,難度各不相同。請注意,該軟體中