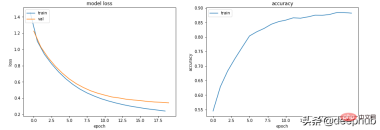

在本文中將對訓練和驗證可能產生的情況進行總結並介紹這些圖表到底能為我們提供什麼樣的資訊。

讓我們從一些簡單的程式碼開始以下程式碼建立了一個基本的訓練流程框架。

from sklearn.model_selection import train_test_split<br>from sklearn.datasets import make_classification<br>import torch<br>from torch.utils.data import Dataset, DataLoader<br>import torch.optim as torch_optim<br>import torch.nn as nn<br>import torch.nn.functional as F<br>import numpy as np<br>import matplotlib.pyplot as pltclass MyCustomDataset(Dataset):<br>def __init__(self, X, Y, scale=False):<br>self.X = torch.from_numpy(X.astype(np.float32))<br>self.y = torch.from_numpy(Y.astype(np.int64))<br><br>def __len__(self):<br>return len(self.y)<br><br>def __getitem__(self, idx):<br>return self.X[idx], self.y[idx]def get_optimizer(model, lr=0.001, wd=0.0):<br>parameters = filter(lambda p: p.requires_grad, model.parameters())<br>optim = torch_optim.Adam(parameters, lr=lr, weight_decay=wd)<br>return optimdef train_model(model, optim, train_dl, loss_func):<br># Ensure the model is in Training mode<br>model.train()<br>total = 0<br>sum_loss = 0<br>for x, y in train_dl:<br>batch = y.shape[0]<br># Train the model for this batch worth of data<br>logits = model(x)<br># Run the loss function. We will decide what this will be when we call our Training Loop<br>loss = loss_func(logits, y)<br># The next 3 lines do all the PyTorch back propagation goodness<br>optim.zero_grad()<br>loss.backward()<br>optim.step()<br># Keep a running check of our total number of samples in this epoch<br>total += batch<br># And keep a running total of our loss<br>sum_loss += batch*(loss.item())<br>return sum_loss/total<br>def train_loop(model, train_dl, valid_dl, epochs, loss_func, lr=0.1, wd=0):<br>optim = get_optimizer(model, lr=lr, wd=wd)<br>train_loss_list = []<br>val_loss_list = []<br>acc_list = []<br>for i in range(epochs): <br>loss = train_model(model, optim, train_dl, loss_func)<br># After training this epoch, keep a list of progress of <br># the loss of each epoch <br>train_loss_list.append(loss)<br>val, acc = val_loss(model, valid_dl, loss_func)<br># Likewise for the validation loss and accuracy<br>val_loss_list.append(val)<br>acc_list.append(acc)<br>print("training loss: %.5f valid loss: %.5f accuracy: %.5f" % (loss, val, acc))<br><br>return train_loss_list, val_loss_list, acc_list<br>def val_loss(model, valid_dl, loss_func):<br># Put the model into evaluation mode, not training mode<br>model.eval()<br>total = 0<br>sum_loss = 0<br>correct = 0<br>batch_count = 0<br>for x, y in valid_dl:<br>batch_count += 1<br>current_batch_size = y.shape[0]<br>logits = model(x)<br>loss = loss_func(logits, y)<br>sum_loss += current_batch_size*(loss.item())<br>total += current_batch_size<br># All of the code above is the same, in essence, to<br># Training, so see the comments there<br># Find out which of the returned predictions is the loudest<br># of them all, and that's our prediction(s)<br>preds = logits.sigmoid().argmax(1)<br># See if our predictions are right<br>correct += (preds == y).float().mean().item()<br>return sum_loss/total, correct/batch_count<br>def view_results(train_loss_list, val_loss_list, acc_list):<br>plt.rcParams["figure.figsize"] = (15, 5)<br>plt.figure()<br>epochs = np.arange(0, len(train_loss_list)) plt.subplot(1, 2, 1)<br>plt.plot(epochs-0.5, train_loss_list)<br>plt.plot(epochs, val_loss_list)<br>plt.title('model loss')<br>plt.ylabel('loss')<br>plt.xlabel('epoch')<br>plt.legend(['train', 'val', 'acc'], loc = 'upper left')<br><br>plt.subplot(1, 2, 2)<br>plt.plot(acc_list)<br>plt.title('accuracy')<br>plt.ylabel('accuracy')<br>plt.xlabel('epoch')<br>plt.legend(['train', 'val', 'acc'], loc = 'upper left')<br>plt.show()<br><br>def get_data_train_and_show(model, batch_size=128, n_samples=10000, n_classes=2, n_features=30, val_size=0.2, epochs=20, lr=0.1, wd=0, break_it=False):<br># We'll make a fictitious dataset, assuming all relevant<br># EDA / Feature Engineering has been done and this is our <br># resultant data<br>X, y = make_classification(n_samples=n_samples, n_classes=n_classes, n_features=n_features, n_informative=n_features, n_redundant=0, random_state=1972)<br><br>if break_it: # Specifically mess up the data<br>X = np.random.rand(n_samples,n_features)<br>X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=val_size, random_state=1972) train_ds = MyCustomDataset(X_train, y_train)<br>valid_ds = MyCustomDataset(X_val, y_val)<br>train_dl = DataLoader(train_ds, batch_size=batch_size, shuffle=True)<br>valid_dl = DataLoader(valid_ds, batch_size=batch_size, shuffle=True) train_loss_list, val_loss_list, acc_list = train_loop(model, train_dl, valid_dl, epochs=epochs, loss_func=F.cross_entropy, lr=lr, wd=wd)<br>view_results(train_loss_list, val_loss_list, acc_list)

以上的程式碼很簡單,就是取得數據,訓練,驗證這樣一個基本的流程,下面我們開始進入正題。

場景1 - 模型似乎可以學習,但在驗證或準確性方面表現不佳

無論超參數如何,模型Train loss 都會緩慢下降,但Val loss 不會下降,並且其Accuracy 並沒有表明它正在學習任何東西。

例如在這種情況下,二進位分類的準確率徘徊在 50% 左右。

class Scenario_1_Model_1(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, out_features)<br>def forward(self, x):<br>x = self.lin1(x)<br>return x<br>get_data_train_and_show(Scenario_1_Model_1(), lr=0.001, break_it=True)

資料中沒有足夠的資訊來允許‘學習’,訓練資料可能沒有包含足夠的資訊來讓模型「學習」。

在這種情況下(程式碼中訓練資料時隨機資料),這意味著它無法學習任何實質內容。

數據必須有足夠的資訊可以從中學習。 EDA 和特徵工程是關鍵!模型學習可以學到的東西,而不是不是編造不存在的東西。

場景2 — 訓練、驗證和準確度曲線都非常不穩定

例如下面程式碼: lr=0.1,bs=128

class Scenario_2_Model_1(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, out_features)<br>def forward(self, x):<br>x = self.lin1(x)<br>return x<br>get_data_train_and_show(Scenario_2_Model_1(), lr=0.1)

#“學習率太高”或“批量太小”可以嘗試將學習率從0.1 降低到0.001,這意味著它不會“反彈”,而是會平穩地降低。

get_data_train_and_show(Scenario_1_Model_1(), lr=0.001)

除了降低學習率外,增加批次大小也會使其更平滑。

get_data_train_and_show(Scenario_1_Model_1(), lr=0.001, batch_size=256)

場景3-訓練損失接近零,準確率看起來還不錯,但驗證並沒有下降,而且還上升了

class Scenario_3_Model_1(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, 50)<br>self.lin2 = nn.Linear(50, 150)<br>self.lin3 = nn.Linear(150, 50)<br>self.lin4 = nn.Linear(50, out_features)<br>def forward(self, x):<br>x = F.relu(self.lin1(x))<br>x = F.relu(self.lin2(x))<br>x = F.relu(self.lin3(x))<br>x = self.lin4(x)<br>return x<br>get_data_train_and_show(Scenario_3_Model_1(), lr=0.001)

這肯定是過度擬合了:訓練損失低且準確率高,而驗證損失和訓練損失越來越大,都是經典的過度擬合指標。

從根本上來說,你的模型學習能力太強了。它對訓練資料的記憶太好,這意味著它也不能泛化到新資料。

我們可以嘗試的第一件事是降低模型的複雜性。

class Scenario_3_Model_2(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, 50)<br>self.lin2 = nn.Linear(50, out_features)<br>def forward(self, x):<br>x = F.relu(self.lin1(x))<br>x = self.lin2(x)<br>return x<br>get_data_train_and_show(Scenario_3_Model_2(), lr=0.001)

這讓它變得更好了,還可以引入 L2 權重衰減正則化,讓它再次變得更好(適用於較淺的模型)。

get_data_train_and_show(Scenario_3_Model_2(), lr=0.001, wd=0.02)

如果我們想保持模型的深度和大小,可以嘗試使用 dropout(適用於更深的模型)。

class Scenario_3_Model_3(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, 50)<br>self.lin2 = nn.Linear(50, 150)<br>self.lin3 = nn.Linear(150, 50)<br>self.lin4 = nn.Linear(50, out_features)<br>self.drops = nn.Dropout(0.4)<br>def forward(self, x):<br>x = F.relu(self.lin1(x))<br>x = self.drops(x)<br>x = F.relu(self.lin2(x))<br>x = self.drops(x)<br>x = F.relu(self.lin3(x))<br>x = self.drops(x)<br>x = self.lin4(x)<br>return x<br>get_data_train_and_show(Scenario_3_Model_3(), lr=0.001)

场景 4 - 训练和验证表现良好,但准确度没有提高

lr = 0.001,bs = 128(默认,分类类别= 5

class Scenario_4_Model_1(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, 2)<br>self.lin2 = nn.Linear(2, out_features)<br>def forward(self, x):<br>x = F.relu(self.lin1(x))<br>x = self.lin2(x)<br>return x<br>get_data_train_and_show(Scenario_4_Model_1(out_features=5), lr=0.001, n_classes=5)

没有足够的学习能力:模型中的其中一层的参数少于模型可能输出中的类。 在这种情况下,当有 5 个可能的输出类时,中间的参数只有 2 个。

这意味着模型会丢失信息,因为它不得不通过一个较小的层来填充它,因此一旦层的参数再次扩大,就很难恢复这些信息。

所以需要记录层的参数永远不要小于模型的输出大小。

class Scenario_4_Model_2(nn.Module):<br>def __init__(self, in_features=30, out_features=2):<br>super().__init__()<br>self.lin1 = nn.Linear(in_features, 50)<br>self.lin2 = nn.Linear(50, out_features)<br>def forward(self, x):<br>x = F.relu(self.lin1(x))<br>x = self.lin2(x)<br>return x<br>get_data_train_and_show(Scenario_4_Model_2(out_features=5), lr=0.001, n_classes=5)

总结

以上就是一些常见的训练、验证时的曲线的示例,希望你在遇到相同情况时可以快速定位并且改进。

以上是機器學習中訓練和驗證指標曲線圖能告訴我們什麼?的詳細內容。更多資訊請關注PHP中文網其他相關文章!

一個提示可以繞過每個主要LLM的保障措施Apr 25, 2025 am 11:16 AM

一個提示可以繞過每個主要LLM的保障措施Apr 25, 2025 am 11:16 AM隱藏者的開創性研究暴露了領先的大語言模型(LLM)的關鍵脆弱性。 他們的發現揭示了一種普遍的旁路技術,稱為“政策木偶”,能夠規避幾乎所有主要LLMS

5個錯誤,大多數企業今年將犯有可持續性Apr 25, 2025 am 11:15 AM

5個錯誤,大多數企業今年將犯有可持續性Apr 25, 2025 am 11:15 AM對環境責任和減少廢物的推動正在從根本上改變企業的運作方式。 這種轉變會影響產品開發,製造過程,客戶關係,合作夥伴選擇以及採用新的

H20芯片禁令震撼中國人工智能公司,但長期以來一直在為影響Apr 25, 2025 am 11:12 AM

H20芯片禁令震撼中國人工智能公司,但長期以來一直在為影響Apr 25, 2025 am 11:12 AM最近對先進AI硬件的限制突出了AI優勢的地緣政治競爭不斷升級,從而揭示了中國對外國半導體技術的依賴。 2024年,中國進口了價值3850億美元的半導體

如果Openai購買Chrome,AI可能會統治瀏覽器戰爭Apr 25, 2025 am 11:11 AM

如果Openai購買Chrome,AI可能會統治瀏覽器戰爭Apr 25, 2025 am 11:11 AM從Google的Chrome剝奪了潛在的剝離,引發了科技行業中的激烈辯論。 OpenAI收購領先的瀏覽器,擁有65%的全球市場份額的前景提出了有關TH的未來的重大疑問

AI如何解決零售媒體的痛苦Apr 25, 2025 am 11:10 AM

AI如何解決零售媒體的痛苦Apr 25, 2025 am 11:10 AM儘管總體廣告增長超過了零售媒體的增長,但仍在放緩。 這個成熟階段提出了挑戰,包括生態系統破碎,成本上升,測量問題和整合複雜性。 但是,人工智能

'AI是我們,比我們更多'Apr 25, 2025 am 11:09 AM

'AI是我們,比我們更多'Apr 25, 2025 am 11:09 AM在一系列閃爍和惰性屏幕中,一個古老的無線電裂縫帶有靜態的裂紋。這堆易於破壞穩定的電子產品構成了“電子廢物之地”的核心,這是沉浸式展覽中的六個裝置之一,&qu&qu

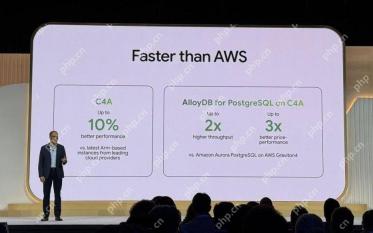

Google Cloud在下一個2025年對基礎架構變得更加認真Apr 25, 2025 am 11:08 AM

Google Cloud在下一個2025年對基礎架構變得更加認真Apr 25, 2025 am 11:08 AMGoogle Cloud的下一個2025:關注基礎架構,連通性和AI Google Cloud的下一個2025會議展示了許多進步,太多了,無法在此處詳細介紹。 有關特定公告的深入分析,請參閱我的文章

IR的秘密支持者透露,Arcana的550萬美元的AI電影管道說話,Arcana的AI Meme,Ai Meme的550萬美元。Apr 25, 2025 am 11:07 AM

IR的秘密支持者透露,Arcana的550萬美元的AI電影管道說話,Arcana的AI Meme,Ai Meme的550萬美元。Apr 25, 2025 am 11:07 AM本週在AI和XR中:一波AI驅動的創造力正在通過從音樂發電到電影製作的媒體和娛樂中席捲。 讓我們潛入頭條新聞。 AI生成的內容的增長影響:技術顧問Shelly Palme

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

Video Face Swap

使用我們完全免費的人工智慧換臉工具,輕鬆在任何影片中換臉!

熱門文章

熱工具

Safe Exam Browser

Safe Exam Browser是一個安全的瀏覽器環境,安全地進行線上考試。該軟體將任何電腦變成一個安全的工作站。它控制對任何實用工具的訪問,並防止學生使用未經授權的資源。

PhpStorm Mac 版本

最新(2018.2.1 )專業的PHP整合開發工具

MinGW - Minimalist GNU for Windows

這個專案正在遷移到osdn.net/projects/mingw的過程中,你可以繼續在那裡關注我們。 MinGW:GNU編譯器集合(GCC)的本機Windows移植版本,可自由分發的導入函式庫和用於建置本機Windows應用程式的頭檔;包括對MSVC執行時間的擴展,以支援C99功能。 MinGW的所有軟體都可以在64位元Windows平台上運作。

MantisBT

Mantis是一個易於部署的基於Web的缺陷追蹤工具,用於幫助產品缺陷追蹤。它需要PHP、MySQL和一個Web伺服器。請查看我們的演示和託管服務。

VSCode Windows 64位元 下載

微軟推出的免費、功能強大的一款IDE編輯器