這篇文章主要介紹了關於pytorch visdom CNN處理自建圖片資料集的方法,有著一定的參考價值,現在分享給大家,有需要的朋友可以參考一下

環境

系統:win10

cpu:i7-6700HQ

gpu:gtx965m

python : 3.6

pytorch :0.3

資料下載

#來源自Sasank Chilamkurthy 的教學;資料:下載連結。

下載後解壓縮放到專案根目錄:

資料集為用來分類 螞蟻和蜜蜂。有大約120個訓練圖像,每個類別有75個驗證圖像。

資料導入

可以使用 torchvision.datasets.ImageFolder(root,transforms) 模組 可以將 圖片轉換為 tensor。

先定義transform:

ata_transforms = {

'train': transforms.Compose([

# 随机切成224x224 大小图片 统一图片格式

transforms.RandomResizedCrop(224),

# 图像翻转

transforms.RandomHorizontalFlip(),

# totensor 归一化(0,255) >> (0,1) normalize channel=(channel-mean)/std

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

]),

"val" : transforms.Compose([

# 图片大小缩放 统一图片格式

transforms.Resize(256),

# 以中心裁剪

transforms.CenterCrop(224),

transforms.ToTensor(),

transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])

])

}

#匯入,載入資料:

##

data_dir = './hymenoptera_data'

# trans data

image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x), data_transforms[x]) for x in ['train', 'val']}

# load data

data_loaders = {x: DataLoader(image_datasets[x], batch_size=BATCH_SIZE, shuffle=True) for x in ['train', 'val']}

data_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}

class_names = image_datasets['train'].classes

print(data_sizes, class_names){'train': 244, 'val': 153} ['ants', 'bees']#訓練集244圖片,測試集153圖片。 視覺化部分圖片看看,由於visdom支援tensor輸入,不用換成numpy,直接用tensor計算可以:

inputs, classes = next(iter(data_loaders['val'])) out = torchvision.utils.make_grid(inputs) inp = torch.transpose(out, 0, 2) mean = torch.FloatTensor([0.485, 0.456, 0.406]) std = torch.FloatTensor([0.229, 0.224, 0.225]) inp = std * inp + mean inp = torch.transpose(inp, 0, 2) viz.images(inp)

建立CNN

net 根據上一篇的處理cifar10的改了一下規格:class CNN(nn.Module):

def __init__(self, in_dim, n_class):

super(CNN, self).__init__()

self.cnn = nn.Sequential(

nn.BatchNorm2d(in_dim),

nn.ReLU(True),

nn.Conv2d(in_dim, 16, 7), # 224 >> 218

nn.BatchNorm2d(16),

nn.ReLU(inplace=True),

nn.MaxPool2d(2, 2), # 218 >> 109

nn.ReLU(True),

nn.Conv2d(16, 32, 5), # 105

nn.BatchNorm2d(32),

nn.ReLU(True),

nn.Conv2d(32, 64, 5), # 101

nn.BatchNorm2d(64),

nn.ReLU(True),

nn.Conv2d(64, 64, 3, 1, 1),

nn.BatchNorm2d(64),

nn.ReLU(True),

nn.MaxPool2d(2, 2), # 101 >> 50

nn.Conv2d(64, 128, 3, 1, 1), #

nn.BatchNorm2d(128),

nn.ReLU(True),

nn.MaxPool2d(3), # 50 >> 16

)

self.fc = nn.Sequential(

nn.Linear(128*16*16, 120),

nn.BatchNorm1d(120),

nn.ReLU(True),

nn.Linear(120, n_class))

def forward(self, x):

out = self.cnn(x)

out = self.fc(out.view(-1, 128*16*16))

return out

# 输入3层rgb ,输出 分类 2

model = CNN(3, 2)

loss,最佳化函數:

line = viz.line(Y=np.arange(10)) loss_f = nn.CrossEntropyLoss() optimizer = optim.SGD(model.parameters(), lr=LR, momentum=0.9) scheduler = optim.lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)#參數:

BATCH_SIZE = 4 LR = 0.001 EPOCHS = 10

##運行10個epoch 看看:

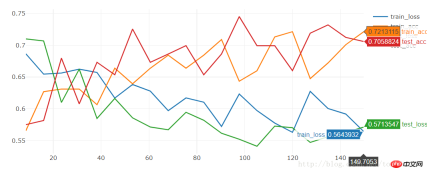

[9/10] train_loss:0.650|train_acc:0.639|test_loss:0.621|test_acc0.706 [10/10] train_loss:0.645|train_acc:0.627|test_loss:0.654|test_acc0.686 Training complete in 1m 16s Best val Acc: 0.712418

運行20個看看:

[19/20] train_loss:0.592|train_acc:0.701|test_loss:0.563|test_acc0.712 [20/20] train_loss:0.564|train_acc:0.721|test_loss:0.571|test_acc0.706 Training complete in 2m 30s Best val Acc: 0.745098

##準確率比較低:只有74.5%

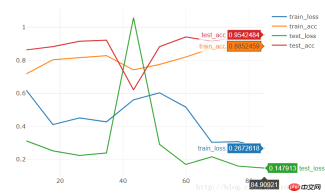

我們使用models 裡的resnet18 運行10個epoch:

model = torchvision.models.resnet18(True) num_ftrs = model.fc.in_features model.fc = nn.Linear(num_ftrs, 2)

[9/10] train_loss:0.621|train_acc:0.652|test_loss:0.588|test_acc0.667 [10/10] train_loss:0.610|train_acc:0.680|test_loss:0.561|test_acc0.667 Training complete in 1m 24s Best val Acc: 0.686275

效果也很一般,想要短時間內就訓練出效果很好的models,我們可以下載訓練好的state,在此基礎上訓練:

model = torchvision.models.resnet18(pretrained=True) num_ftrs = model.fc.in_features model.fc = nn.Linear(num_ftrs, 2)

[9/10] train_loss:0.308|train_acc:0.877|test_loss:0.160|test_acc0.941 [10/10] train_loss:0.267|train_acc:0.885|test_loss:0.148|test_acc0.954 Training complete in 1m 25s Best val Acc: 0.95424810個epoch直接的到95%的準確率。 相關推薦:

pytorch visdom 處理簡單分類問題

###################以上是pytorch + visdom CNN處理自建圖片資料集的方法的詳細內容。更多資訊請關注PHP中文網其他相關文章!

Python的主要目的:靈活性和易用性Apr 17, 2025 am 12:14 AM

Python的主要目的:靈活性和易用性Apr 17, 2025 am 12:14 AMPython的靈活性體現在多範式支持和動態類型系統,易用性則源於語法簡潔和豐富的標準庫。 1.靈活性:支持面向對象、函數式和過程式編程,動態類型系統提高開發效率。 2.易用性:語法接近自然語言,標準庫涵蓋廣泛功能,簡化開發過程。

Python:多功能編程的力量Apr 17, 2025 am 12:09 AM

Python:多功能編程的力量Apr 17, 2025 am 12:09 AMPython因其簡潔與強大而備受青睞,適用於從初學者到高級開發者的各種需求。其多功能性體現在:1)易學易用,語法簡單;2)豐富的庫和框架,如NumPy、Pandas等;3)跨平台支持,可在多種操作系統上運行;4)適合腳本和自動化任務,提升工作效率。

每天2小時學習Python:實用指南Apr 17, 2025 am 12:05 AM

每天2小時學習Python:實用指南Apr 17, 2025 am 12:05 AM可以,在每天花費兩個小時的時間內學會Python。 1.制定合理的學習計劃,2.選擇合適的學習資源,3.通過實踐鞏固所學知識,這些步驟能幫助你在短時間內掌握Python。

Python與C:開發人員的利弊Apr 17, 2025 am 12:04 AM

Python與C:開發人員的利弊Apr 17, 2025 am 12:04 AMPython適合快速開發和數據處理,而C 適合高性能和底層控制。 1)Python易用,語法簡潔,適用於數據科學和Web開發。 2)C 性能高,控制精確,常用於遊戲和系統編程。

Python:時間投入和學習步伐Apr 17, 2025 am 12:03 AM

Python:時間投入和學習步伐Apr 17, 2025 am 12:03 AM學習Python所需時間因人而異,主要受之前的編程經驗、學習動機、學習資源和方法及學習節奏的影響。設定現實的學習目標並通過實踐項目學習效果最佳。

Python:自動化,腳本和任務管理Apr 16, 2025 am 12:14 AM

Python:自動化,腳本和任務管理Apr 16, 2025 am 12:14 AMPython在自動化、腳本編寫和任務管理中表現出色。 1)自動化:通過標準庫如os、shutil實現文件備份。 2)腳本編寫:使用psutil庫監控系統資源。 3)任務管理:利用schedule庫調度任務。 Python的易用性和豐富庫支持使其在這些領域中成為首選工具。

Python和時間:充分利用您的學習時間Apr 14, 2025 am 12:02 AM

Python和時間:充分利用您的學習時間Apr 14, 2025 am 12:02 AM要在有限的時間內最大化學習Python的效率,可以使用Python的datetime、time和schedule模塊。 1.datetime模塊用於記錄和規劃學習時間。 2.time模塊幫助設置學習和休息時間。 3.schedule模塊自動化安排每週學習任務。

Python:遊戲,Guis等Apr 13, 2025 am 12:14 AM

Python:遊戲,Guis等Apr 13, 2025 am 12:14 AMPython在遊戲和GUI開發中表現出色。 1)遊戲開發使用Pygame,提供繪圖、音頻等功能,適合創建2D遊戲。 2)GUI開發可選擇Tkinter或PyQt,Tkinter簡單易用,PyQt功能豐富,適合專業開發。

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

AI Hentai Generator

免費產生 AI 無盡。

熱門文章

熱工具

SecLists

SecLists是最終安全測試人員的伙伴。它是一個包含各種類型清單的集合,這些清單在安全評估過程中經常使用,而且都在一個地方。 SecLists透過方便地提供安全測試人員可能需要的所有列表,幫助提高安全測試的效率和生產力。清單類型包括使用者名稱、密碼、URL、模糊測試有效載荷、敏感資料模式、Web shell等等。測試人員只需將此儲存庫拉到新的測試機上,他就可以存取所需的每種類型的清單。

Safe Exam Browser

Safe Exam Browser是一個安全的瀏覽器環境,安全地進行線上考試。該軟體將任何電腦變成一個安全的工作站。它控制對任何實用工具的訪問,並防止學生使用未經授權的資源。

Atom編輯器mac版下載

最受歡迎的的開源編輯器

MinGW - Minimalist GNU for Windows

這個專案正在遷移到osdn.net/projects/mingw的過程中,你可以繼續在那裡關注我們。 MinGW:GNU編譯器集合(GCC)的本機Windows移植版本,可自由分發的導入函式庫和用於建置本機Windows應用程式的頭檔;包括對MSVC執行時間的擴展,以支援C99功能。 MinGW的所有軟體都可以在64位元Windows平台上運作。

Dreamweaver CS6

視覺化網頁開發工具