社群大家好,

在本文中,我將介紹我的應用程式 iris-RAG-Gen 。

Iris-RAG-Gen 是一款生成式 AI 檢索增強生成 (RAG) 應用程序,它利用 IRIS 向量搜尋的功能,在 Streamlit Web 框架、LangChain 和 OpenAI 的幫助下個性化 ChatGPT。該應用程式使用 IRIS 作為向量存儲。

應用功能

- 將文件(PDF 或 TXT)提取到 IRIS

- 與選定的攝取文件聊天

- 刪除攝取的文件

- OpenAI ChatGPT

將文件(PDF 或 TXT)提取到 IRIS

請依照下列步驟擷取文件:

- 輸入 OpenAI 金鑰

- 選擇文件(PDF 或 TXT)

- 輸入文件說明

- 點選「攝取文件」按鈕

攝取文件功能將文件詳細資料插入 rag_documents 表中,並建立「rag_document id」(rag_documents 的 ID)表來保存向量資料。

下面的 Python 程式碼會將所選文件儲存到向量中:

from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain.document_loaders import PyPDFLoader, TextLoader

from langchain_iris import IRISVector

from langchain_openai import OpenAIEmbeddings

from sqlalchemy import create_engine,text

<span>class RagOpr:</span>

#Ingest document. Parametres contains file path, description and file type

<span>def ingestDoc(self,filePath,fileDesc,fileType):</span>

embeddings = OpenAIEmbeddings()

#Load the document based on the file type

if fileType == "text/plain":

loader = TextLoader(filePath)

elif fileType == "application/pdf":

loader = PyPDFLoader(filePath)

#load data into documents

documents = loader.load()

text_splitter = RecursiveCharacterTextSplitter(chunk_size=400, chunk_overlap=0)

#Split text into chunks

texts = text_splitter.split_documents(documents)

#Get collection Name from rag_doucments table.

COLLECTION_NAME = self.get_collection_name(fileDesc,fileType)

# function to create collection_name table and store vector data in it.

db = IRISVector.from_documents(

embedding=embeddings,

documents=texts,

collection_name = COLLECTION_NAME,

connection_string=self.CONNECTION_STRING,

)

#Get collection name

<span>def get_collection_name(self,fileDesc,fileType):</span>

# check if rag_documents table exists, if not then create it

with self.engine.connect() as conn:

with conn.begin():

sql = text("""

SELECT *

FROM INFORMATION_SCHEMA.TABLES

WHERE TABLE_SCHEMA = 'SQLUser'

AND TABLE_NAME = 'rag_documents';

""")

result = []

try:

result = conn.execute(sql).fetchall()

except Exception as err:

print("An exception occurred:", err)

return ''

#if table is not created, then create rag_documents table first

if len(result) == 0:

sql = text("""

CREATE TABLE rag_documents (

description VARCHAR(255),

docType VARCHAR(50) )

""")

try:

result = conn.execute(sql)

except Exception as err:

print("An exception occurred:", err)

return ''

#Insert description value

with self.engine.connect() as conn:

with conn.begin():

sql = text("""

INSERT INTO rag_documents

(description,docType)

VALUES (:desc,:ftype)

""")

try:

result = conn.execute(sql, {'desc':fileDesc,'ftype':fileType})

except Exception as err:

print("An exception occurred:", err)

return ''

#select ID of last inserted record

sql = text("""

SELECT LAST_IDENTITY()

""")

try:

result = conn.execute(sql).fetchall()

except Exception as err:

print("An exception occurred:", err)

return ''

return "rag_document"+str(result[0][0])

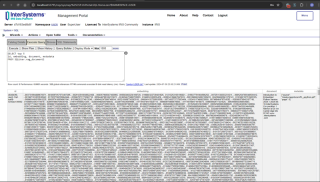

在管理入口網站中輸入以下 SQL 指令來擷取向量資料

SELECT top 5 id, embedding, document, metadata FROM SQLUser.rag_document2

與選定的攝取文件聊天

從選擇聊天選項部分選擇文件並輸入問題。 應用程式將讀取向量資料並傳回相關答案

下面的 Python 程式碼會將所選文件儲存到向量中:

from langchain_iris import IRISVector

from langchain_openai import OpenAIEmbeddings,ChatOpenAI

from langchain.chains import ConversationChain

from langchain.chains.conversation.memory import ConversationSummaryMemory

from langchain.chat_models import ChatOpenAI

<span>class RagOpr:</span>

<span>def ragSearch(self,prompt,id):</span>

#Concat document id with rag_doucment to get the collection name

COLLECTION_NAME = "rag_document"+str(id)

embeddings = OpenAIEmbeddings()

#Get vector store reference

db2 = IRISVector (

embedding_function=embeddings,

collection_name=COLLECTION_NAME,

connection_string=self.CONNECTION_STRING,

)

#Similarity search

docs_with_score = db2.similarity_search_with_score(prompt)

#Prepair the retrieved documents to pass to LLM

relevant_docs = ["".join(str(doc.page_content)) + " " for doc, _ in docs_with_score]

#init LLM

llm = ChatOpenAI(

temperature=0,

model_name="gpt-3.5-turbo"

)

#manage and handle LangChain multi-turn conversations

conversation_sum = ConversationChain(

llm=llm,

memory= ConversationSummaryMemory(llm=llm),

verbose=False

)

#Create prompt

template = f"""

Prompt: <span>{prompt}

Relevant Docuemnts: {relevant_docs}

"""</span>

#Return the answer

resp = conversation_sum(template)

return resp['response']

更多詳情,請造訪iris-RAG-Gen開啟交換申請頁。

謝謝

以上是IRIS-RAG-Gen:由 IRIS 向量搜尋提供支援的個人化 ChatGPT RAG 應用程式的詳細內容。更多資訊請關注PHP中文網其他相關文章!

Python中的合併列表:選擇正確的方法May 14, 2025 am 12:11 AM

Python中的合併列表:選擇正確的方法May 14, 2025 am 12:11 AMTomergelistsinpython,YouCanusethe操作員,estextMethod,ListComprehension,Oritertools

如何在Python 3中加入兩個列表?May 14, 2025 am 12:09 AM

如何在Python 3中加入兩個列表?May 14, 2025 am 12:09 AM在Python3中,可以通過多種方法連接兩個列表:1)使用 運算符,適用於小列表,但對大列表效率低;2)使用extend方法,適用於大列表,內存效率高,但會修改原列表;3)使用*運算符,適用於合併多個列表,不修改原列表;4)使用itertools.chain,適用於大數據集,內存效率高。

Python串聯列表字符串May 14, 2025 am 12:08 AM

Python串聯列表字符串May 14, 2025 am 12:08 AM使用join()方法是Python中從列表連接字符串最有效的方法。 1)使用join()方法高效且易讀。 2)循環使用 運算符對大列表效率低。 3)列表推導式與join()結合適用於需要轉換的場景。 4)reduce()方法適用於其他類型歸約,但對字符串連接效率低。完整句子結束。

Python執行,那是什麼?May 14, 2025 am 12:06 AM

Python執行,那是什麼?May 14, 2025 am 12:06 AMpythonexecutionistheprocessoftransformingpypythoncodeintoExecutablestructions.1)InternterPreterReadSthecode,ConvertingTingitIntObyTecode,whepythonvirtualmachine(pvm)theglobalinterpreterpreterpreterpreterlock(gil)the thepythonvirtualmachine(pvm)

Python:關鍵功能是什麼May 14, 2025 am 12:02 AM

Python:關鍵功能是什麼May 14, 2025 am 12:02 AMPython的關鍵特性包括:1.語法簡潔易懂,適合初學者;2.動態類型系統,提高開發速度;3.豐富的標準庫,支持多種任務;4.強大的社區和生態系統,提供廣泛支持;5.解釋性,適合腳本和快速原型開發;6.多範式支持,適用於各種編程風格。

Python:編譯器還是解釋器?May 13, 2025 am 12:10 AM

Python:編譯器還是解釋器?May 13, 2025 am 12:10 AMPython是解釋型語言,但也包含編譯過程。 1)Python代碼先編譯成字節碼。 2)字節碼由Python虛擬機解釋執行。 3)這種混合機制使Python既靈活又高效,但執行速度不如完全編譯型語言。

python用於循環與循環時:何時使用哪個?May 13, 2025 am 12:07 AM

python用於循環與循環時:何時使用哪個?May 13, 2025 am 12:07 AMUseeAforloopWheniteratingOveraseQuenceOrforAspecificnumberoftimes; useAwhiLeLoopWhenconTinuingUntilAcIntiment.forloopsareIdealForkNownsences,而WhileLeleLeleLeleLeleLoopSituationSituationsItuationsItuationSuationSituationswithUndEtermentersitations。

Python循環:最常見的錯誤May 13, 2025 am 12:07 AM

Python循環:最常見的錯誤May 13, 2025 am 12:07 AMpythonloopscanleadtoerrorslikeinfiniteloops,modifyingListsDuringteritation,逐個偏置,零indexingissues,andnestedloopineflinefficiencies

熱AI工具

Undresser.AI Undress

人工智慧驅動的應用程序,用於創建逼真的裸體照片

AI Clothes Remover

用於從照片中去除衣服的線上人工智慧工具。

Undress AI Tool

免費脫衣圖片

Clothoff.io

AI脫衣器

Video Face Swap

使用我們完全免費的人工智慧換臉工具,輕鬆在任何影片中換臉!

熱門文章

熱工具

Dreamweaver Mac版

視覺化網頁開發工具

SublimeText3 英文版

推薦:為Win版本,支援程式碼提示!

ZendStudio 13.5.1 Mac

強大的PHP整合開發環境

禪工作室 13.0.1

強大的PHP整合開發環境

Dreamweaver CS6

視覺化網頁開發工具