Random Software Project: dev.to Frontend Challenge

- WBOY (original)

- 2024-09-12 12:15:10, 878 views

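We'll use the current dev.to frontend challenge as a way to explore how quickly we can put together a basic static-file web application for 3D visualization. We'll use THREE.js (one of my favorite libraries) to assemble a basic solar system tool that can be used to display the markup input from the challenge.

Vision

Here is the current dev.to challenge that inspired this project:

https://dev.to/challenges/frontend-2024-09-04

So, let's see how quickly we can throw something together along these lines!

Getting Started

From a brand-new GitHub project, we'll use Vite to get the project up and running, with out-of-the-box hot module replacement (or HMR) for fast iteration: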

git clone [url]
cd [folder]
yarn create vite --template vanilla .

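This creates an out-of-the-box, framework-free Vite project. We just need to install the dependencies, add THREE, and run the "live" development project: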

yarn install
yarn add three
yarn run dev

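This gives us a "live" version that we can develop and debug in near-real-time. Now we're ready to go in and start ripping stuff out!

Engine Structure

If you've never used THREE before, there are a few things worth knowing.

In engine design, there are typically three activities or loops going on at any given time. If all three are performed serially, your core "game loop" consists of a sequence of three kinds of activity:

There is some sort of user input polling or event handling that must be processed

There is the rendering call itself

There is some sort of internal logic/update behavior

Things like networking (e.g., an update packet comes in) can be treated as input here, because (like user actions) they trigger events that must be propagated into some update to the application state.

And of course, underlying all of this is some representation of the state itself. If you're using ECS, maybe this is a set of component tables. In our case, this will mostly start as an instantiation of THREE objects (like a Scene instance).

With that in mind, let's start writing a basic placeholder for our app.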
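As a rough illustration of that three-part loop, here is a minimal sketch that runs the phases serially over a plain state object. The function names (processInput, update, render, tick) and the event shape are hypothetical, chosen only for this example; THREE does not require this structure.

```javascript
// Hypothetical three-phase loop over a plain state object.

// 1. Input: fold queued events (user actions, network packets) into state.
function processInput(state, events) {
  for (const event of events) {
    if (event.type === "pause") {
      state.paused = event.value;
    }
  }
}

// 2. Update: advance the internal logic by dt seconds.
function update(state, dt) {
  if (!state.paused) {
    state.angle_rad += state.angularVelocity_radps * dt;
  }
}

// 3. Render: present the current state (here, just as a string).
function render(state) {
  return `angle=${state.angle_rad.toFixed(3)} rad`;
}

// One pass through the loop, with the three phases run serially.
function tick(state, events, dt) {
  processInput(state, events);
  update(state, dt);
  return render(state);
}

const state = { paused: false, angle_rad: 0, angularVelocity_radps: 0.5 };
console.log(tick(state, [], 2)); // angle=1.000 rad
```

In the app built below, THREE's animation loop callback ends up playing the update and render roles together, while browser event listeners cover input.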

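Stripping Stuff

We'll begin by refactoring the top-level index.html:

We don't need the static file references

We don't need the Javascript hooks

We will want a globally-scoped stylesheet

We will want to hook in an ES6 module as our top-level entry point from the HTML

That leaves our top-level index.html file looking something like this: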

<!doctype html>
<html lang="en">
  <head>
    <meta charset="UTF-8" />
    <meta name="viewport" content="width=device-width, initial-scale=1.0" />
    <title>Vite App</title>
    <link rel="stylesheet" href="index.css" type="text/css" />
    <script type="module" src="index.mjs"></script>
  </head>
  <body>
  </body>
</html>

Our globally-scoped stylesheet will simply specify that the body should take up the entire screen, with no padding, margins, or overflow.

body {

width: 100vw;

height: 100vh;

overflow: hidden;

margin: 0;

padding: 0;

}

Now we're ready to add our ES6 module, with some basic placeholder content to make sure our app is working while we clean up the rest:

/**

* index.mjs

*/

function onWindowLoad(event) {

console.log("Window loaded", event);

}

window.addEventListener("load", onWindowLoad);

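Now we can start taking things out! We'll delete the following:

main.js

javascript.svg

counter.js

public/

style.css

Of course, if you look at the "live" view in your browser, it will be blank. But that's okay! Now we're ready to go 3D.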

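THREE Hello World

We'll start with the classic THREE "hello world" spinning cube. The rest of our logic will live inside the ES6 module we created in the previous step. First, we'll import THREE: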

import * as THREE from "three";

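But now what?

THREE has a specific graphics pipeline that is both simple and powerful. There are a few elements to consider:

A scene

A camera

A renderer, which has (if not provided) its own rendering target and a render() method that takes the scene and camera as parameters

The scene is just a top-level scene graph node. These nodes are a combination of three interesting properties:

A transform (from the parent node) and an array of children

A geometry, which defines our vertex buffer contents and structure (and an index buffer; basically, the numerical data that defines the mesh)

A material, which defines how the GPU processes and renders the geometry data

So, we need to define each of these things to get started. We'll begin with our camera, which benefits from knowing our window dimensions: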

const width = window.innerWidth;
const height = window.innerHeight;
const camera = new THREE.PerspectiveCamera(70, width / height, 0.01, 10);
camera.position.z = 1;

Now we can define the scene, to which we'll add a basic cube with a "box" geometry and a "mesh normal" material:

const scene = new THREE.Scene();
const geometry = new THREE.BoxGeometry(0.2, 0.2, 0.2);
const material = new THREE.MeshNormalMaterial();
const mesh = new THREE.Mesh(geometry, material);
scene.add(mesh);

Lastly, we'll instantiate the renderer. (Note that, since we don't provide a rendering target, it will create its own canvas, which we will then need to attach to our document body.) We're using a WebGL renderer here; there are some interesting developments in the THREE world towards supporting a WebGPU renderer, too, which are worth checking out.

const renderer = new THREE.WebGLRenderer({

"antialias": true

});

renderer.setSize(width, height);

renderer.setAnimationLoop(animate);

document.body.appendChild(renderer.domElement);

We have one more step to add. We pointed the renderer to an animation loop function, which will be responsible for invoking the render function. We'll also use this opportunity to update the state of our scene.

function animate(time) {

mesh.rotation.x = time / 2000;

mesh.rotation.y = time / 1000;

renderer.render(scene, camera);

}

But this won't quite work yet. The singleton context for a web application is the window; we need to define and attach our application state to this context so various methods (like our animate() function) can access the relevant references. (You could embed the functions in our onWindowLoad(), but this doesn't scale very well when you need to start organizing complex logic across multiple modules and other scopes!)

So, we'll add a window-scoped app object that combines the state of our application into a specific object.

window.app = {

"renderer": null,

"scene": null,

"camera": null

};

Now we can update the animate() and onWindowLoad() functions to reference these properties instead. And once you've done that you will see a Vite-driven spinning cube!
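As a sketch of what that update might look like for animate(), the version below reads everything it needs from the shared state object. It uses globalThis, which is the window object in a browser, so globalThis.app and window.app refer to the same thing. The extra "mesh" entry is an assumption added for this example so the loop can reach the cube; the state object shown above lists only the renderer, scene, and camera.

```javascript
// Shared application state. In a browser, globalThis is the window object,
// so this is equivalent to assigning window.app.
// NOTE: the "mesh" entry is an assumption made for this sketch.
globalThis.app = { renderer: null, scene: null, camera: null, mesh: null };

// Animation loop callback: `time` is a timestamp in milliseconds.
function animate(time) {
  const { renderer, scene, camera, mesh } = globalThis.app;
  mesh.rotation.x = time / 2000;
  mesh.rotation.y = time / 1000;
  renderer.render(scene, camera);
}
```

Inside onWindowLoad(), the camera, scene, mesh, and renderer created earlier would be assigned to these properties before renderer.setAnimationLoop(animate) is called.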

Lastly, let's add some camera controls now. There is an "orbit controls" tool built into the THREE release (but not the default export). This is instantiated with the camera and DOM element, and updated each loop. This will give us some basic pan/rotate/zoom ability in our app; we'll add this to our global context (window.app).

import { OrbitControls } from "three/addons/controls/OrbitControls.js";

// ...in animate():

window.app.controls.update();

// ...in onWindowLoad():

window.app.controls = new OrbitControls(window.app.camera, window.app.renderer.domElement);

We'll also add an "axes helper" to visualize the coordinate frame, which is handy for verification and debugging.

// ...in onWindowLoad():
app.scene.add(new THREE.AxesHelper(3));

Not bad. We're ready to move on.

Turning This Into a Solar System

Let's pull up what the solar system should look like. In particular, we need to worry about things like coordinates. The farthest object out will be Pluto (or the Kuiper Belt--but we'll use Pluto as a reference). This is 7.3 BILLION kilometers out--which brings up an interesting problem. Surely we can't use near/far coordinates that big in our camera properties!

These are just floating point values, though. The GPU doesn't care if the exponent is 1 or 100. What matters is that there is sufficient precision between the near and far values to represent and deconflict pixels in the depth buffer when multiple objects overlap. So, we can move the "far" value out to 8e9 (we'll use kilometers for units here) so long as we also bump up the "near" value, which we'll increase to 8e3. This will give our depth buffer plenty of precision to deconflict large-scale objects like planets and moons.
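To get a feel for that precision argument, the sketch below estimates the smallest depth difference a depth buffer can resolve at a given distance. It assumes a standard perspective projection and a 24-bit fixed-point depth buffer; both are assumptions made for illustration (the actual buffer format depends on the browser and GPU), and depthResolution is a hypothetical helper, not part of THREE.

```javascript
// Approximate depth resolution (in scene units) at distance z from the camera.
// Assumes stored depth d(z) = f / (f - n) * (1 - n / z), quantized to `bits` bits.
function depthResolution(near, far, z, bits = 24) {
  const step = 1 / Math.pow(2, bits);                   // smallest change in stored depth
  const slope = (far * near) / ((far - near) * z * z);  // dd/dz at distance z
  return step / slope;
}

// Near plane at 8e3 km, far plane at 8e9 km, object about 1e7 km away:
console.log(depthResolution(8e3, 8e9, 1e7));  // roughly 745 (km)

// Same far plane, but keeping the original near value of 0.01:
console.log(depthResolution(0.01, 8e9, 1e7)); // hundreds of millions of km
```

Under these assumptions, raising the near plane is what buys the precision: the resolvable depth difference scales roughly with z squared divided by the near value.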

Next we're going to replace our box geometry and mesh normal material with a sphere geometry and a mesh basic material. We'll use a radius of 7e5 (or 700,000 kilometers) for this sphere. We'll also back out our initial camera position to keep up with the new scale of our scene.

// in onWindowLoad():

app.camera.position.x = 1e7;

// ...

const geometry = new THREE.SphereGeometry(7e5, 32, 32);

const material = new THREE.MeshBasicMaterial({"color": 0xff7700});

You should now see something that looks like the sun floating in the middle of our solar system!

Planets

Let's add another sphere to represent our first planet, Mercury. We'll do it by hand for now, but once we've done it once or twice it will quickly become obvious how we want to implement some sort of reusable, shared planet model.

We'll start by doing something similar to what we did with the sun: defining a spherical geometry and a single-color material. Then, we'll set some position (based on the orbital radius, or semi-major axis, of Mercury's orbit). Finally, we'll add the planet to the scene. We'll also want to consider what the angular velocity of that planet's orbit is (though we don't use it yet) for when we start animating it. We'll consolidate these behaviors, given this interface, within a factory function that returns a new THREE.Mesh instance.

function buildPlanet(radius, initialPosition, angularVelocity, color) {

const geometry = new THREE.SphereGeometry(radius, 32, 32);

const material = new THREE.MeshBasicMaterial({"color": color});

const mesh = new THREE.Mesh(geometry, material);

mesh.position.set(initialPosition.x, initialPosition.y, initialPosition.z);

return mesh;

}

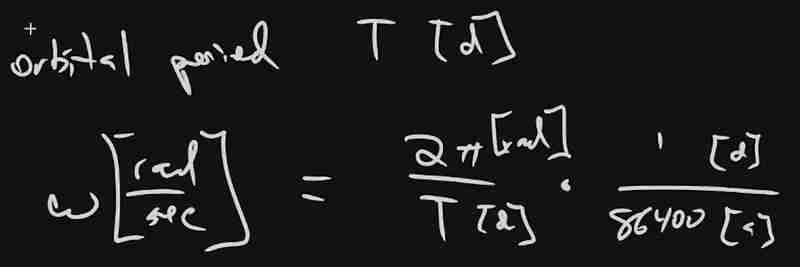

Back in onWindowLoad(), we'll add the planet by calling this function and adding the result to our scene. We'll pass the parameters for Mercury, using a dullish grey for the color. To resolve the angular velocity, which will need to be in radians per second, we'll pass the orbital period (which Wikipedia provides in planet data cards, in days) through a unit conversion: one full revolution of 2π radians, divided by the 86,400 seconds in a day and by the period in days.

The resulting call looks something like this:

// ...in onWindowLoad():
window.app.scene.add(buildPlanet(2.4e3, new THREE.Vector3(57.91e6, 0, 0), 2 * Math.PI / 86400 / 87.9691, 0x333333));
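The unit conversion inside that call can also be pulled out into a small helper, which makes the intent a little easier to read. This is only a sketch; angularVelocityFromPeriod and SECONDS_PER_DAY are names invented here, and the result is the mean angular velocity of an idealized circular orbit.

```javascript
const SECONDS_PER_DAY = 86400;

// Mean angular velocity (radians per second) for an orbital period given in days.
function angularVelocityFromPeriod(orbitalPeriod_days) {
  return (2 * Math.PI) / (orbitalPeriod_days * SECONDS_PER_DAY);
}

// Mercury's orbital period is 87.9691 days:
console.log(angularVelocityFromPeriod(87.9691)); // about 8.27e-7 rad/s
```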

(We can also remove the sun rotation calls from the update function at this point.)

If you look at the scene at this point, the sun will look pretty lonely! This is where the realistic scale of the solar system starts becoming an issue. Mercury is small, and compared to the radius of the sun it's still a long way away. So, we'll add a pair of global scaling factors: one for the radius (to increase it) and one for the position (to decrease it). Each factor is the same for every planet, so the relative sizes and positions of the planets will still be realistic. We'll tweak these values until we are comfortable with how visible our objects are within the scene.

const planetRadiusScale = 1e2;

const planetOrbitScale = 1e-1;

// ...in buildPlanet():

const geometry = new THREE.SphereGeometry(planetRadiusScale * radius, 32, 32);

// ...

mesh.position.set(

planetOrbitScale * initialPosition.x,

planetOrbitScale * initialPosition.y,

planetOrbitScale * initialPosition.z

);

You should now be able to appreciate our Mercury much better!
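When tweaking these factors, a quick sanity check on the scaled numbers can help. The sketch below applies the same two factors to Mercury's values and compares them with the sun's (unscaled) radius of 7e5 km; scaledDimensions and sunRadius_km are hypothetical names used only for this check.

```javascript
const planetRadiusScale = 1e2;
const planetOrbitScale = 1e-1;
const sunRadius_km = 7e5; // the sun sphere is drawn unscaled

// Rendered radius and orbital distance after applying the two scale factors.
function scaledDimensions(radius_km, semiMajorAxis_km) {
  return {
    radius: planetRadiusScale * radius_km,
    distance: planetOrbitScale * semiMajorAxis_km
  };
}

const mercury = scaledDimensions(2.4e3, 57.91e6);

// The planet should stay smaller than the sun and clear of its surface.
console.log(mercury.radius < sunRadius_km);                    // true
console.log(mercury.distance - mercury.radius > sunRadius_km); // true
```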

MOAR PLANETZ

We now have a reasonably-reusable planetary factory. Let's copy and paste spam a few times to finish fleshing out the "inner" solar system. We'll pull our key values from a combination of Wikipedia and our eyeballs' best guess of some approximate color.

// ...in onWindowLoad():
window.app.scene.add(buildPlanet(2.4e3, new THREE.Vector3(57.91e6, 0, 0), 2 * Math.PI / 86400 / 87.9691, 0x666666));
window.app.scene.add(buildPlanet(6.051e3, new THREE.Vector3(108.21e6, 0, 0), 2 * Math.PI / 86400 / 224.701, 0xaaaa77));
window.app.scene.add(buildPlanet(6.3781e3, new THREE.Vector3(1.49598023e8, 0, 0), 2 * Math.PI / 86400 / 365.256, 0x33bb33));
window.app.scene.add(buildPlanet(3.389e3, new THREE.Vector3(2.27939366e8, 0, 0), 2 * Math.PI / 86400 / 686.980, 0xbb3333));

Hey! Not bad. It's worth putting a little effort into reusable code, isn't it?

But this is still something of a mess. We will have a need to reuse this data, so we shouldn't copy-paste "magic values" like these. Let's pretend the planet data is instead coming from a database somewhere. We'll mock this up by creating a global array of objects that are procedurally parsed to extract our planet models. We'll add some annotations for units while we're at it, as well as a "name" field that we can use later to correlate planets, objects, data, and markup entries.

At the top of the module, then, we'll place the following:

const planets = [

{

"name": "Mercury",

"radius_km": 2.4e3,

"semiMajorAxis_km": 57.91e6,

"orbitalPeriod_days": 87.9691,

"approximateColor_hex": 0x666666

}, {

"name": "Venus",

"radius_km": 6.051e3,

"semiMajorAxis_km": 108.21e6,

"orbitalPeriod_days": 224.701,

"approximateColor_hex": 0xaaaa77

}, {

"name": "Earth",

"radius_km": 6.3781e3,

"semiMajorAxis_km": 1.49598023e8,

"orbitalPeriod_days": 365.256,

"approximateColor_hex": 0x33bb33

}, {

"name": "Mars",

"radius_km": 3.389e3,

"semiMajorAxis_km": 2.27939366e8,

"orbitalPeriod_days": 686.980,

"approximateColor_hex": 0xbb3333

}

];

Now we're ready to iterate through these data items when populating our scene:

// ...in onWindowLoad():

planets.forEach(p => {

window.app.scene.add(buildPlanet(p.radius_km, new THREE.Vector3(p.semiMajorAxis_km, 0, 0), 2 * Math.PI / 86400 / p.orbitalPeriod_days, p.approximateColor_hex));

});

Adding Some Traceability

Next we'll add some "orbit traces" that illustrate the path each planet will take during one revolution about the sun. For the time being (until we take into account the specific elliptical orbit of each planet), this is just a circle with a known radius. We'll sample that orbit over one revolution in order to construct a series of points, which we'll use to instantiate a line that is then added to the scene.

This involves the creation of a new factory function, but it can reuse the same iteration and planet models as our planet factory. First, let's define the factory function, which only has one parameter for now:

function buildOrbitTrace(radius) {

const points = [];

const n = 1e2;

for (let i = 0; i < (n + 1); i += 1) {

const ang_rad = 2 * Math.PI * i / n;

points.push(new THREE.Vector3(

planetOrbitScale * radius * Math.cos(ang_rad),

planetOrbitScale * radius * Math.sin(ang_rad),

planetOrbitScale * 0.0

));

}

const geometry = new THREE.BufferGeometry().setFromPoints(points);

const material = new THREE.LineBasicMaterial({

// line shaders are surprisingly tricky, thank goodness for THREE!

"color": 0x555555

});

return new THREE.Line(geometry, material);

}

Now we'll modify the iteration in our onWindowLoad() function to instantiate orbit traces for each planet:

// ...in onWindowLoad():

planets.forEach(p => {

window.app.scene.add(buildPlanet(p.radius_km, new THREE.Vector3(p.semiMajorAxis_km, 0, 0), 2 * Math.PI / 86400 / p.orbitalPeriod_days, p.approximateColor_hex));

window.app.scene.add(buildOrbitTrace(p.semiMajorAxis_km));

});

Now that we have a more three-dimensional scene, we'll also notice that our axis references are inconsistent. The OrbitControls model assumes y is up, because it looks this up from the default camera frame (LUR, or "look-up-right"). We'll want to adjust this after we initially instantiate the original camera:

// ...in onWindowLoad():
// (do this before constructing OrbitControls, which reads camera.up when it is created)
app.camera.position.z = 1e7;
app.camera.up.set(0, 0, 1);

Now if you rotate about the center of our solar system with your mouse, you will notice a much more natural motion that stays fixed relative to the orbital plane. And of course you'll see our orbit traces!

Clicky-Clicky

Now it's time to think about how we want to fold in the markup for the challenge. Let's take a step back and consider the design for a moment. Let's say there will be a dialog that comes up when you click on a planet. That dialog will present the relevant section of markup, associated via the name attribute of the object that has been clicked.

But that means we need to detect and compute clicks. This will be done with a technique known as "raycasting". Imagine a "ray" that is cast out of your eyeball, into the direction of the mouse cursor. This isn't a natural part of the graphics pipeline, where the transforms are largely coded into the GPU and result exclusively in colored pixels.

In order to back out those positions relative to mouse coordinates, we'll need some tools that handle those transforms for us within the application layer, on the CPU. This "raycaster" will take the current camera state (position, orientation, and frustum properties) and the current mouse position. It will look through the scene graph and compare the distance of those node positions from the mathematical ray that this represents (sometimes against a specific collision distance).
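The geometric test at the heart of this can be sketched for the simplest case, a ray against a sphere. This is plain vector math rather than the THREE implementation; rayHitsSphere and the {x, y, z} object shape are hypothetical, and the ray direction is assumed to be a unit vector.

```javascript
// Returns true if the ray (origin + t * direction, t >= 0) passes within
// `radius` of `center`. `direction` is assumed to be normalized.
function rayHitsSphere(origin, direction, center, radius) {
  // Vector from the ray origin to the sphere center.
  const ox = center.x - origin.x;
  const oy = center.y - origin.y;
  const oz = center.z - origin.z;

  // Distance along the ray to the point closest to the center,
  // clamped to zero so points behind the origin are not considered.
  const t = Math.max(0, ox * direction.x + oy * direction.y + oz * direction.z);

  // Offset from that closest point on the ray to the sphere center.
  const dx = ox - t * direction.x;
  const dy = oy - t * direction.y;
  const dz = oz - t * direction.z;

  return dx * dx + dy * dy + dz * dz <= radius * radius;
}

const eye = { x: 0, y: 0, z: 0 };
const lookAlongX = { x: 1, y: 0, z: 0 };
console.log(rayHitsSphere(eye, lookAlongX, { x: 10, y: 0, z: 0 }, 1)); // true
console.log(rayHitsSphere(eye, lookAlongX, { x: 10, y: 5, z: 0 }, 1)); // false
```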

Within THREE, fortunately, there are some great built-in tools for doing this. We'll need to add two things to our state: the raycaster itself, and some representation (a 2d vector) of the mouse state.

window.app = {

// ... previous content

"raycaster": null,

"mouse_pos": new THREE.Vector2(0, 0)

};

We'll need to subscribe to movement events within the window to update this mouse position. We'll create a new function, onPointerMove(), and use it to add an event listener in our onWindowLoad() initialization after we create the raycaster:

// ...in onWindowLoad():

window.app.raycaster = new THREE.Raycaster();

window.addEventListener("pointermove", onPointerMove);

Now let's create the listener itself. This simply transforms the pixel coordinates of the pointer within the window into the [-1,1] normalized coordinates used by the camera frame. Note that the y axis is flipped, since window coordinates increase downward while the camera frame's y axis points up. This is a fairly straightforward pair of equations:

function onPointerMove(event) {

window.app.mouse_pos.x = (event.clientX / window.innerWidth) * 2 - 1;

window.app.mouse_pos.y = -(event.clientY / window.innerHeight) * 2 + 1;

}
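As a standalone sketch of this mapping, with y pointing up as the raycaster's normalized device coordinates expect, the same pair of equations can be written as a pure function and checked at the corners of the window. The name toNormalizedDeviceCoords is hypothetical.

```javascript
// Convert pixel coordinates (origin at top-left, y increasing downward) into
// normalized device coordinates (origin at center, y up, both axes in [-1, 1]).
function toNormalizedDeviceCoords(clientX, clientY, width, height) {
  return {
    x: (clientX / width) * 2 - 1,
    y: -(clientY / height) * 2 + 1
  };
}

// Top-left and bottom-right corners of an 800 x 600 window:
console.log(toNormalizedDeviceCoords(0, 0, 800, 600));     // { x: -1, y: 1 }
console.log(toNormalizedDeviceCoords(800, 600, 800, 600)); // { x: 1, y: -1 }
```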

Finally, we'll add the raycasting calculation to our rendering pass. Technically (if you recall our "three parts of the game loop" model) this is an internal update that is purely a function of game state. But we'll combine the rendering pass and the update calculation for the time being.

// ...in animate():

window.app.raycaster.setFromCamera(window.app.mouse_pos, window.app.camera);

const intersections = window.app.raycaster.intersectObjects(window.app.scene.children);

if (intersections.length > 0) { console.log(intersections); }
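One way these intersections might later be tied back to the planet data is through each object's name. The sketch below assumes each planet mesh has had its name property set (for example, mesh.name = p.name inside the factory, which the code above does not yet do) and that unnamed objects such as the orbit traces and axes helper should be skipped; firstNamedHit is a hypothetical helper operating on results shaped like those returned by the raycaster.

```javascript
// Given raycaster intersections (THREE returns them sorted nearest first),
// return the name of the closest object with a non-empty name, or null.
function firstNamedHit(intersections) {
  for (const hit of intersections) {
    if (hit.object && hit.object.name) {
      return hit.object.name;
    }
  }
  return null;
}

// Stand-in data shaped like raycaster results:
const hits = [
  { distance: 10, object: { name: "" } },       // e.g. an unnamed orbit trace
  { distance: 25, object: { name: "Mercury" } }
];
console.log(firstNamedHit(hits)); // Mercury
```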

Give it a quick try! That's a pretty neat point to take a break.

What's Next?

What have we accomplished here?

We have a representation of the sun and inner solar system

We have reusable factories for both planets and orbit traces

We have basic raycasting for detecting mouse collisions in real time

We have realistic dimensions (with some scaling) in our solar system frame

But we're not done yet! We still need to present the markup in response to those events, and there's a lot more we can add! So, don't be surprised if there's a Part Two that shows up at some point.
