by A. Jesse Jiryu Davis, Python Evangelist at 10gen

In a sharded cluster of replica sets, which server or servers handle each of your queries? What about each insert, update, or command? If you know how a MongoDB cluster routes operations among its servers, you can predict how your application will scale as you add shards and add members to shards.

Operations are routed according to the type of operation, your shard key, and your read preference. Let’s set up a cluster and use the system profiler to see where each operation is run. This is an interactive, experimental way to learn how your cluster really behaves and how your architecture will scale.

Setup

You’ll need a recent install of MongoDB (I’m using 2.4.4), Python, a recent version of PyMongo (at least 2.4—I’m using 2.5.2) and the code in my cluster-profile repository on GitHub. If you install the Colorama Python package you’ll get cute colored output. These scripts were tested on my Mac.

Sharded cluster of replica sets

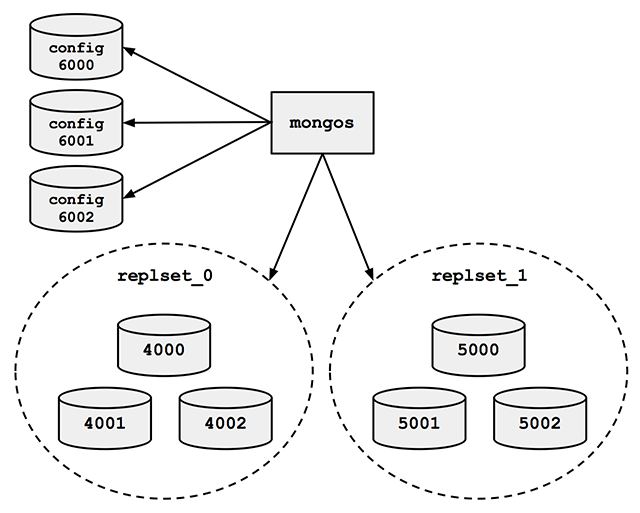

Run the cluster_setup.py script in my repository. It sets up a standard sharded cluster for you running on your local machine. There’s a mongos, three config servers, and two shards, each of which is a three-member replica set. The first shard’s replica set is running on ports 4000 through 4002, the second shard is on ports 5000 through 5002, and the three config servers are on ports 6000 through 6002.

For the finale, cluster_setup.py makes a collection named sharded_collection, sharded on a key named shard_key.

In a normal deployment, we’d let MongoDB’s balancer automatically distribute chunks of data among our two shards. But for this demo we want documents to be on predictable shards, so my script disables the balancer. It makes a chunk for all documents with shard_key less than 500 and another chunk for documents with shard_key greater than or equal to 500. It moves the high chunk to replset_1:

    from pymongo import MongoClient

    client = MongoClient()  # Connect to mongos.
    admin = client.admin  # admin database.

    # Pre-split.
    admin.command(
        'split', 'test.sharded_collection',
        middle={'shard_key': 500})

    admin.command(
        'moveChunk', 'test.sharded_collection',
        find={'shard_key': 500},
        to='replset_1')

If you connect to mongos with the MongoDB shell, sh.status() shows there’s one chunk on each of the two shards:

    { "shard_key" : { "$minKey" : 1 } } -->> { "shard_key" : 500 } on : replset_0 { "t" : 2, "i" : 1 }
    { "shard_key" : 500 } -->> { "shard_key" : { "$maxKey" : 1 } } on : replset_1 { "t" : 2, "i" : 0 }
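The chunk layout above can be modelled in a few lines of Python. This is only an illustrative sketch of range-based lookup, not how mongos is actually implemented; `CHUNKS` and `shard_for_key` are names made up for this example, and it assumes numeric shard keys.

```python
import bisect

# Hypothetical model of the two chunks created by the setup script:
# each entry is (inclusive lower bound of the chunk, shard that owns it).
CHUNKS = [
    (float('-inf'), 'replset_0'),  # shard_key < 500
    (500, 'replset_1'),            # shard_key >= 500
]
LOWER_BOUNDS = [lower for lower, _ in CHUNKS]


def shard_for_key(shard_key):
    """Return the shard owning the chunk that contains shard_key."""
    # bisect_right finds the first chunk whose lower bound is greater than
    # shard_key; the chunk just before it is the one containing the key.
    index = bisect.bisect_right(LOWER_BOUNDS, shard_key) - 1
    return CHUNKS[index][1]


print(shard_for_key(0))    # replset_0
print(shard_for_key(500))  # replset_1
```

The two keys printed here are the same ones the setup script inserts, so each test document lands on a different shard.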

The setup script also inserts a document with a shard_key of 0 and another with a shard_key of 500. Now we’re ready for some profiling.

Profiling

Run the tail_profile.py script from my repository. It connects to all the replica set members. On each, it sets the profiling level to 2 (“log everything”) on the test database, and creates a tailable cursor on the system.profile collection. The script filters out some noise in the profile collection—for example, the activities of the tailable cursor show up in the system.profile collection that it’s tailing. Any legitimate entries in the profile are spat out to the console in pretty colors.
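To give a feel for the noise filtering described above, here is a minimal sketch that works on plain dictionaries shaped like profile entries. It is not the code from tail_profile.py: `is_noise` and `describe` are hypothetical helpers, and the sample entries are invented for illustration. It relies only on the `op`, `ns`, and `query` fields of a profile document.

```python
def is_noise(entry):
    """Return True for profile entries this sketch chooses to hide."""
    # Operations on system.profile itself, such as the tailing cursor's own
    # reads, would otherwise show up in the very output we are watching.
    return entry.get('ns', '').endswith('.system.profile')


def describe(source, entry):
    """Format an entry roughly like the console lines in this article."""
    return '%s: %s %s %s' % (
        source, entry['op'], entry['ns'], entry.get('query', {}))


# Invented sample entries: one from the tailing cursor, one real query.
entries = [
    {'op': 'getmore', 'ns': 'test.system.profile'},
    {'op': 'query', 'ns': 'test.sharded_collection',
     'query': {'shard_key': 0}},
]

for entry in entries:
    if not is_noise(entry):
        print(describe('replset_0 primary on 4000', entry))
```

Only the second entry is printed; the first is dropped because it describes the profiler's own collection.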

Experiments

Targeted queries versus scatter-gather

Let’s run a query from Python in a separate terminal:

    >>> from pymongo import MongoClient
    >>> # Connect to mongos.
    >>> collection = MongoClient().test.sharded_collection
    >>> collection.find_one({'shard_key': 0})
    {'_id': ObjectId('51bb6f1cca1ce958c89b348a'), 'shard_key': 0}

tail_profile.py prints:

    replset_0 primary on 4000: query test.sharded_collection {"shard_key": 0}

The query includes the shard key, so mongos reads only from the shard that can satisfy it. Adding shards can scale out your throughput on a query like this. What about a query that doesn’t contain the shard key?

    >>> collection.find_one({})

mongos sends the query to both shards:

    replset_0 primary on 4000: query test.sharded_collection {"shard_key": 0}
    replset_1 primary on 5000: query test.sharded_collection {"shard_key": 500}

For fan-out queries like this, adding more shards won’t scale out your query throughput as well as it would for targeted queries, because every shard has to process every query. But we can scale throughput on queries like these by reading from secondaries.
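The difference between the two kinds of query can be sketched as a small model. This is a simplification for illustration, not mongos's real routing logic: `target_shards` and `queries_per_shard` are hypothetical helpers, only equality matches on the shard key are treated as targeted, and the load figure assumes queries are spread evenly across the key space.

```python
SHARDS = ['replset_0', 'replset_1']


def shard_for_key(shard_key):
    # Mirrors this demo's pre-split: keys below 500 live on replset_0.
    return 'replset_0' if shard_key < 500 else 'replset_1'


def target_shards(query):
    """Return the shards that must process a query."""
    value = query.get('shard_key')
    if isinstance(value, (int, float)):
        # Equality match on the shard key: exactly one shard is involved.
        return [shard_for_key(value)]
    # No usable shard key in the query: scatter-gather to every shard.
    return list(SHARDS)


def queries_per_shard(total_queries, targeted, shard_count):
    """Average number of queries each shard processes."""
    if targeted:
        return total_queries / float(shard_count)
    return float(total_queries)


print(target_shards({'shard_key': 0}))     # ['replset_0']
print(target_shards({}))                   # ['replset_0', 'replset_1']
print(queries_per_shard(1000, True, 4))    # 250.0
print(queries_per_shard(1000, False, 4))   # 1000.0
```

In this model, going from two shards to four halves the per-shard load for targeted queries, while each shard still sees all 1000 scatter-gather queries.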

Queries with read preferences

We can use read preferences to read from secondaries:

    >>> from pymongo.read_preferences import ReadPreference
    >>> collection.find_one({}, read_preference=ReadPreference.SECONDARY)

tail_profile.py shows us that mongos chose a random secondary from each shard:

    replset_0 secondary on 4001: query test.sharded_collection {"$readPreference": {"mode": "secondary"}, "$query": {}}
    replset_1 secondary on 5001: query test.sharded_collection {"$readPreference": {"mode": "secondary"}, "$query": {}}

Note how PyMongo passes the read preference to mongos in the query, as the $readPreference field. mongos targets one secondary in each of the two replica sets.
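Member selection for a scatter-gather read can be pictured roughly as follows. This is a toy model under stated assumptions, not mongos's algorithm: it handles only the `primary` and `secondary` modes, ignores latency and tag sets, and assumes the member on the lowest port of each set is the primary, as in the output above. `REPLICA_SETS`, `choose_member`, and `route_scatter_gather` are names invented for the sketch.

```python
import random

# Ports from this article's demo cluster.
REPLICA_SETS = {
    'replset_0': {'primary': 4000, 'secondaries': [4001, 4002]},
    'replset_1': {'primary': 5000, 'secondaries': [5001, 5002]},
}


def choose_member(shard, mode, rng=random):
    """Pick the port of the member that serves a read on one shard."""
    members = REPLICA_SETS[shard]
    if mode == 'primary':
        return members['primary']
    if mode == 'secondary':
        # Any secondary will do in this simplified model.
        return rng.choice(members['secondaries'])
    raise ValueError('mode not handled in this sketch: %r' % mode)


def route_scatter_gather(mode, rng=random):
    """Pick one member per shard for a query without the shard key."""
    return dict((shard, choose_member(shard, mode, rng))
                for shard in REPLICA_SETS)


print(route_scatter_gather('primary'))
print(route_scatter_gather('secondary'))
```

Because each shard picks its member independently, repeated secondary reads spread across 4001, 4002, 5001, and 5002 rather than piling onto the two primaries.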

Updates

With a sharded collection, updates must either include the shard key or be “multi-updates”. An update with the shard key goes to the proper shard, of course:

    >>> collection.update({'shard_key': -100}, {'$set': {'field': 'value'}})

    replset_0 primary on 4000: update test.sharded_collection {"shard_key": -100}

mongos only sends the update to replset_0, because we put the chunk of documents with shard_key less than 500 there.

A multi-update hits all shards:

    >>> collection.update({}, {'$set': {'field': 'value'}}, multi=True)

    replset_0 primary on 4000: update test.sharded_collection {}
    replset_1 primary on 5000: update test.sharded_collection {}

A multi-update on a range of the shard key need only involve the proper shard:

    >>> collection.update({'shard_key': {'$gt': 1000}}, {'$set': {'field': 'value'}}, multi=True)

    replset_1 primary on 5000: update test.sharded_collection {"shard_key": {"$gt": 1000}}

So targeted updates that include the shard key can be scaled out by adding shards. Even multi-updates can be scaled out if they include a range of the shard key, but multi-updates without the shard key won’t benefit from extra shards.
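Range targeting comes down to checking which chunks overlap the range in the update's selector. The sketch below illustrates that overlap test for this demo's two chunks; it is an assumption-laden model rather than mongos's code. `CHUNKS` and `shards_for_condition` are hypothetical, and only equality plus the `$gt`, `$gte`, `$lt`, and `$lte` operators are handled.

```python
INF = float('inf')

# Hypothetical chunk table for this demo:
# (inclusive lower bound, exclusive upper bound, owning shard).
CHUNKS = [
    (-INF, 500, 'replset_0'),
    (500, INF, 'replset_1'),
]


def shards_for_condition(condition):
    """Return shards whose chunks can hold keys matching the condition.

    `condition` is a plain value (equality) or a dict of range operators.
    An empty dict means "no constraint on the shard key".
    """
    if not isinstance(condition, dict):
        condition = {'$gte': condition, '$lte': condition}
    low = condition.get('$gt', condition.get('$gte', -INF))
    high = condition.get('$lt', condition.get('$lte', INF))
    high_exclusive = '$lt' in condition
    shards = []
    for chunk_low, chunk_high, shard in CHUNKS:
        # The chunk must extend above the lower end of the range...
        above_low = chunk_high > low
        # ...and start at or below the upper end of the range.
        if high_exclusive:
            below_high = chunk_low < high
        else:
            below_high = chunk_low <= high
        if above_low and below_high and shard not in shards:
            shards.append(shard)
    return shards


print(shards_for_condition({'$gt': 1000}))  # ['replset_1']
print(shards_for_condition(-100))           # ['replset_0']
print(shards_for_condition({}))             # ['replset_0', 'replset_1']
```

The three calls correspond to the three updates above: the range update and the single-key update each touch one shard, and the unconstrained multi-update touches both.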

Commands

In version 2.4, mongos can use secondaries not only for queries, but also for some commands. You can run count on secondaries if you pass the right read preference:

    >>> cursor = collection.find(read_preference=ReadPreference.SECONDARY)
    >>> cursor.count()

    replset_0 secondary on 4001: command count: sharded_collection
    replset_1 secondary on 5001: command count: sharded_collection

findAndModify, on the other hand, modifies data, so it runs on the primary no matter your read preference:

    >>> db = MongoClient().test
    >>> db.command(
    ...     'findAndModify',
    ...     'sharded_collection',
    ...     query={'shard_key': -1},
    ...     remove=True,
    ...     read_preference=ReadPreference.SECONDARY)

    replset_0 primary on 4000: command findAndModify: sharded_collection
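The rule behind these two results can be written down as a tiny decision function. It is a sketch covering only the two commands demonstrated in this article; `HONORS_READ_PREFERENCE` and `effective_mode` are hypothetical names, and the full set of commands that mongos will run on secondaries is not listed here.

```python
# Only the read-only command shown in this article; a deliberately
# incomplete set used for illustration.
HONORS_READ_PREFERENCE = set(['count'])


def effective_mode(command_name, requested_mode):
    """Return the read preference mode a command actually runs with."""
    if command_name in HONORS_READ_PREFERENCE:
        return requested_mode
    # Commands that modify data, and anything this sketch does not know
    # about, are sent to the primary.
    return 'primary'


print(effective_mode('count', 'secondary'))          # secondary
print(effective_mode('findAndModify', 'secondary'))  # primary
```

Defaulting unknown commands to the primary is the conservative choice for a model like this, since a primary can serve any command.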

Go Forth And Scale

To scale a sharded cluster, you should understand how operations are distributed: are they scatter-gather, or targeted to one shard? Do they run on primaries or secondaries? If you set up a cluster and test your queries interactively like we did here, you can see how your cluster behaves in practice, and design your application for future growth.

Read Jesse’s blog, Emptysquare, and follow him on GitHub.

Original article: Real-time Profiling a MongoDB Cluster. Thanks to the original author for sharing.
