
ClassiSage: Terraform IaC Automated AWS SageMaker-based HDFS Log Classification Model

- Barbara Streisand (original)

- 2024-10-26 05:04:30, 658 views

ClassiSage

A Machine Learning model built with AWS SageMaker and its Python SDK for classification of HDFS logs, using Terraform to automate the infrastructure setup.

Link: GitHub

Languages: HCL (Terraform), Python

Contents

- Overview: Project overview.

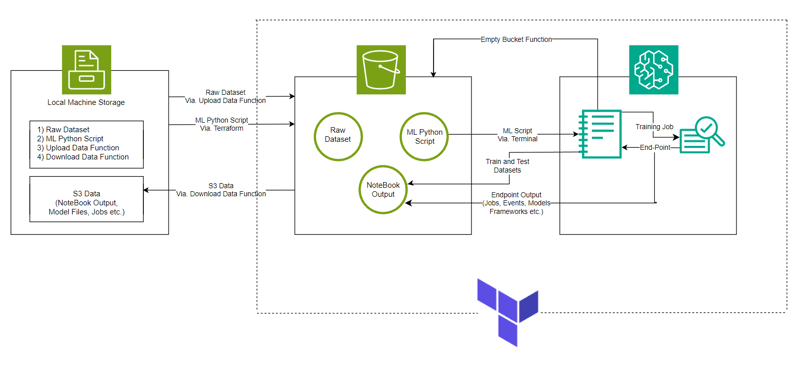

- System Architecture: System architecture diagram.

- ML Model: Model overview.

- Getting Started: How to run the project.

- Console Observations: Changes in instances and infrastructure that can be observed while running the project.

- Ending and Cleanup: Ensuring no additional charges.

- Auto-Created Objects: Files and folders created during the execution process.

- Start by following the Directory Structure for a better project setup.

- Take main reference from the ClassiSage project repository uploaded to GitHub for better understanding.

Overview

- The model is built with AWS SageMaker for classification of HDFS logs, along with S3 for storing the dataset, the notebook file (containing the code for the SageMaker instance) and the model output.

- Infrastructure setup is automated using Terraform, an infrastructure-as-code tool created by HashiCorp.

- The dataset used is HDFS_v1.

- The project implements the SageMaker Python SDK with the XGBoost model, version 1.2.

System Architecture

ML Model

- Image URI

# Looks for the XGBoost image URI and builds an XGBoost container. Specify the repo_version depending on preference.

container = get_image_uri(boto3.Session().region_name,

'xgboost',

repo_version='1.0-1')

- Initializing the hyperparameters and the Estimator call to the container

hyperparameters = {

"max_depth":"5", ## Maximum depth of a tree. Higher means more complex models but risk of overfitting.

"eta":"0.2", ## Learning rate. Lower values make the learning process slower but more precise.

"gamma":"4", ## Minimum loss reduction required to make a further partition on a leaf node. Controls the model’s complexity.

"min_child_weight":"6", ## Minimum sum of instance weight (hessian) needed in a child. Higher values prevent overfitting.

"subsample":"0.7", ## Fraction of training data used. Reduces overfitting by sampling part of the data.

"objective":"binary:logistic", ## Specifies the learning task and corresponding objective. binary:logistic is for binary classification.

"num_round":50 ## Number of boosting rounds, essentially how many times the model is trained.

}

# A SageMaker estimator that calls the xgboost-container

estimator = sagemaker.estimator.Estimator(image_uri=container, # Points to the XGBoost container we previously set up. This tells SageMaker which algorithm container to use.

hyperparameters=hyperparameters, # Passes the defined hyperparameters to the estimator. These are the settings that guide the training process.

role=sagemaker.get_execution_role(), # Specifies the IAM role that SageMaker assumes during the training job. This role allows access to AWS resources like S3.

train_instance_count=1, # Sets the number of training instances. Here, it’s using a single instance.

train_instance_type='ml.m5.large', # Specifies the type of instance to use for training. ml.m5.large is a general-purpose instance with a balance of compute, memory, and network resources.

train_volume_size=5, # 5GB # Sets the size of the storage volume attached to the training instance, in GB. Here, it’s 5 GB.

output_path=output_path, # Defines where the model artifacts and output of the training job will be saved in S3.

train_use_spot_instances=True, # Utilizes spot instances for training, which can be significantly cheaper than on-demand instances. Spot instances are spare EC2 capacity offered at a lower price.

train_max_run=300, # Specifies the maximum runtime for the training job in seconds. Here, it's 300 seconds (5 minutes).

train_max_wait=600) # Sets the maximum time to wait for the job to complete, including the time waiting for spot instances, in seconds. Here, it's 600 seconds (10 minutes).
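The `train_`-prefixed keyword arguments above come from version 1 of the SageMaker Python SDK. In version 2 they were renamed (for example, `train_instance_count` became `instance_count`), and `get_image_uri` gave way to `sagemaker.image_uris.retrieve`. The sketch below is a minimal, hypothetical helper (`to_v2_kwargs` is not part of the SDK or of the project) that renames the arguments and checks the managed spot training constraint that `max_wait` must not be smaller than `max_run`.

```python
# Hypothetical helper, not part of the SageMaker SDK or the ClassiSage repo.
# Maps SDK v1 Estimator keyword names to their SDK v2 equivalents.
V1_TO_V2 = {
    "train_instance_count": "instance_count",
    "train_instance_type": "instance_type",
    "train_volume_size": "volume_size",
    "train_use_spot_instances": "use_spot_instances",
    "train_max_run": "max_run",
    "train_max_wait": "max_wait",
}


def to_v2_kwargs(v1_kwargs):
    """Return a copy of v1_kwargs with v1 names replaced by v2 names."""
    v2 = {V1_TO_V2.get(name, name): value for name, value in v1_kwargs.items()}
    # With spot instances, the total wait time must cover the training time.
    if v2.get("use_spot_instances") and v2.get("max_wait", 0) < v2.get("max_run", 0):
        raise ValueError("max_wait must be >= max_run when using spot instances")
    return v2


# The same values as the Estimator call above.
v1 = {
    "train_instance_count": 1,
    "train_instance_type": "ml.m5.large",
    "train_volume_size": 5,
    "train_use_spot_instances": True,
    "train_max_run": 300,
    "train_max_wait": 600,
}
v2 = to_v2_kwargs(v1)
print(v2)
```

The resulting dictionary could then be unpacked into `sagemaker.estimator.Estimator(...)` on SDK v2 alongside `image_uri`, `hyperparameters`, `role` and `output_path`, which kept their names.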

- Training Job

estimator.fit({'train': s3_input_train,'validation': s3_input_test})

- Deployment

xgb_predictor = estimator.deploy(initial_instance_count=1,instance_type='ml.m5.large')

- Validation
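Validation of a binary classifier such as this one usually compares the endpoint's predicted probabilities with the true labels. As a minimal sketch (the 0.5 threshold and the sample values are assumptions for illustration, not the project's data or code), a confusion matrix and a few metrics can be computed like this:

```python
def confusion_counts(labels, probabilities, threshold=0.5):
    """Count true/false positives and negatives for binary labels (0 or 1)."""
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for label, probability in zip(labels, probabilities):
        predicted = 1 if probability >= threshold else 0
        if predicted == 1 and label == 1:
            counts["tp"] += 1
        elif predicted == 1 and label == 0:
            counts["fp"] += 1
        elif predicted == 0 and label == 0:
            counts["tn"] += 1
        else:
            counts["fn"] += 1
    return counts


def accuracy(counts):
    """Fraction of all predictions that were correct."""
    return (counts["tp"] + counts["tn"]) / sum(counts.values())


def recall(counts):
    """Fraction of actual positives that were predicted as positive."""
    return counts["tp"] / (counts["tp"] + counts["fn"])


# Illustrative values only.
labels = [1, 0, 1, 0, 1]
probabilities = [0.9, 0.2, 0.4, 0.7, 0.8]
counts = confusion_counts(labels, probabilities)
print(counts, accuracy(counts), recall(counts))
```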

Getting Started

- Clone the repository using Git Bash, download it as a .zip file, or fork the repository.

- Go to your AWS Management Console, click on your account profile in the top-right corner and select My Security Credentials from the dropdown.

- Create Access Key: In the Access keys section, click Create New Access Key; a dialog will appear with your Access Key ID and Secret Access Key.

- Download or Copy Keys: (IMPORTANT) Download the .csv file or copy the keys to a secure location. This is the only time you can view the secret access key.

- Open the cloned repo in VS Code.

- Create a file named terraform.tfvars under ClassiSage to hold the values Terraform needs, including the Access Key ID and Secret Access Key created above; the project repository defines the exact variable names.

- Download and install all the dependencies for using Terraform and Python.

In the terminal, type/paste terraform init to initialize the backend.

Then type/paste terraform plan to view the plan, or simply terraform validate to ensure that there are no errors.

Finally, in the terminal, type/paste terraform apply --auto-approve

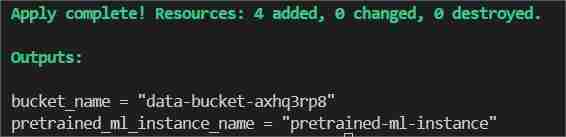

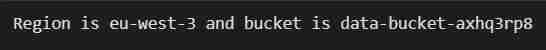

This will show two outputs, one named bucket_name and the other pretrained_ml_instance_name (the 3rd resource is the variable name given to the bucket, since it is a global resource).
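Since bucket_name is needed again in later steps, it can be convenient to read the outputs programmatically instead of copying them from the terminal. The following is a minimal sketch, assuming the `terraform` CLI is on the PATH and using its `terraform output -json` command; the helper names and the sample values are hypothetical.

```python
import json
import subprocess


def parse_terraform_outputs(raw_json):
    """Flatten the JSON printed by `terraform output -json` into {name: value}."""
    return {name: entry["value"] for name, entry in json.loads(raw_json).items()}


def read_terraform_outputs(workdir="."):
    """Run `terraform output -json` in workdir and return the parsed outputs."""
    result = subprocess.run(
        ["terraform", "output", "-json"],
        cwd=workdir,
        capture_output=True,
        text=True,
        check=True,
    )
    return parse_terraform_outputs(result.stdout)


# Placeholder payload in the shape Terraform prints; the values are made up.
sample = json.dumps({
    "bucket_name": {"sensitive": False, "type": "string", "value": "example-bucket"},
    "pretrained_ml_instance_name": {"sensitive": False, "type": "string", "value": "example-instance"},
})
outputs = parse_terraform_outputs(sample)
print(outputs["bucket_name"])
```

In the project directory, `read_terraform_outputs()` would return the real values after `terraform apply` has finished.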

- After the completion message is shown in the terminal, navigate to ClassiSage/ml_ops/function.py; on the 11th line of the file, change the path to the one where the project directory is located, and save the file.

- Then, in ClassiSage/ml_ops/data_upload.ipynb, run all code cells up to cell number 25 to upload the dataset to the S3 bucket.

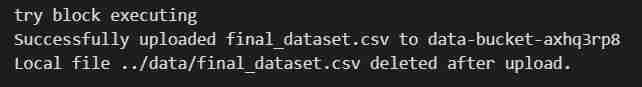

- Output of the code cell execution

- After the notebook has executed, reopen your AWS Management Console.

- You can search for the S3 and SageMaker services and will see an instance of each service initiated (an S3 bucket and a SageMaker notebook).

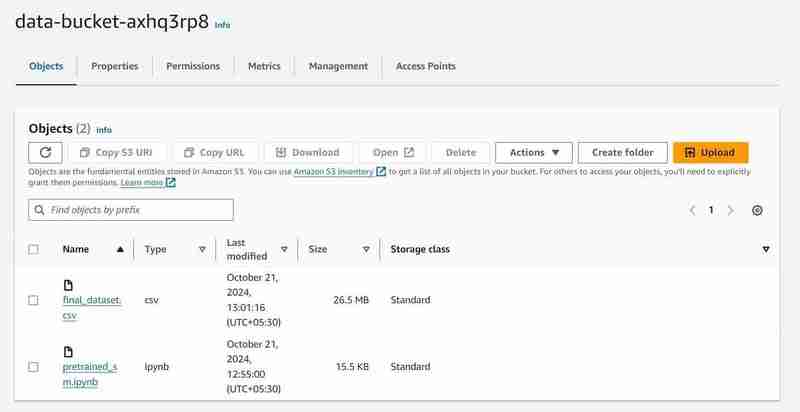

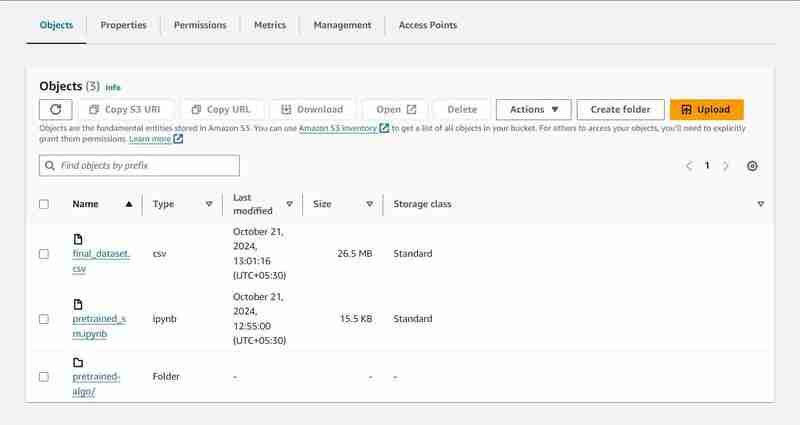

An S3 bucket named 'data-bucket-' with 2 objects uploaded: the dataset and the pretrained_sm.ipynb file containing the model code.

- Go to the notebook instance in AWS SageMaker, click on the created instance and click Open Jupyter.

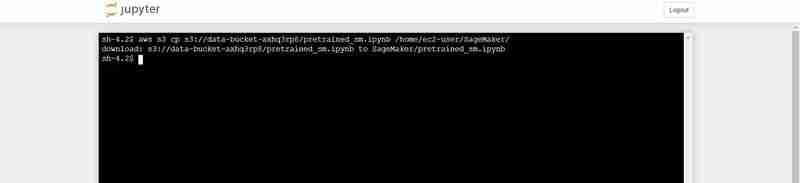

- After that, click New at the top right of the window and select Terminal.

- This will create a new terminal.

- In the terminal, run the command that copies pretrained_sm.ipynb from S3 into the notebook's Jupyter environment, replacing the bucket name with the bucket_name output shown in the VS Code terminal.
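The copy from S3 into the notebook instance can also be sketched in Python with boto3. This is an illustration under assumptions rather than the project's command: the target directory `/home/ec2-user/SageMaker` is the usual Jupyter working directory on SageMaker notebook instances, and the helper names are hypothetical.

```python
import posixpath


def notebook_copy_plan(bucket_name, key="pretrained_sm.ipynb",
                       target_dir="/home/ec2-user/SageMaker"):
    """Return (bucket, key, destination path) for the notebook download."""
    return bucket_name, key, posixpath.join(target_dir, key)


def download_notebook(bucket_name, s3_client=None):
    """Download the notebook from S3 to the Jupyter working directory."""
    if s3_client is None:
        import boto3  # imported lazily so the planning helper works without boto3
        s3_client = boto3.client("s3")
    bucket, key, destination = notebook_copy_plan(bucket_name)
    s3_client.download_file(bucket, key, destination)
    return destination


# "example-bucket" is a placeholder for the bucket_name Terraform output.
plan = notebook_copy_plan("example-bucket")
print(plan)
```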

- Go back to the opened Jupyter instance, click on the pretrained_sm.ipynb file to open it, and assign it the conda_python3 kernel.

- Scroll down to the 4th cell and replace the value of the variable bucket_name with the bucket_name output from the VS Code terminal.

- At the top of the file, do a Restart by going to the Kernel tab.

- Execute the notebook up to code cell number 27.

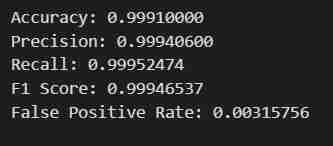

- You will get the intended result. The data will be fetched and split into train and test sets after being adjusted for labels and features, with a defined output path; then a model using SageMaker's Python SDK will be trained, deployed as an endpoint, and validated to give different metrics.

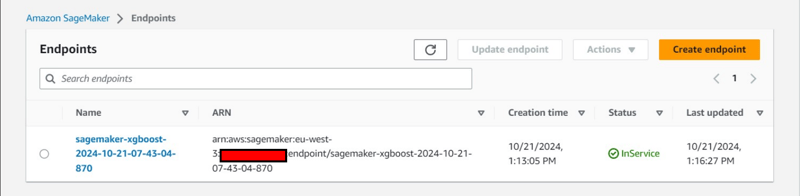
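The "adjusted for labels and features" step matters because SageMaker's built-in XGBoost algorithm, when given CSV input, expects the label in the first column and no header row. A minimal sketch of that adjustment and of the split follows; the column names, the 70/30 ratio and the seed are assumptions for illustration, not the notebook's actual values.

```python
import random


def label_first(rows, header, label_column):
    """Move the label column to position 0 in every row."""
    index = header.index(label_column)
    return [[row[index]] + row[:index] + row[index + 1:] for row in rows]


def train_test_split(rows, train_fraction=0.7, seed=42):
    """Shuffle a copy of rows and split it into train and test parts."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Toy data: two feature columns followed by a binary label.
header = ["E1", "E2", "Label"]
rows = [[i, i * 2, i % 2] for i in range(10)]

prepared = label_first(rows, header, "Label")
train, test = train_test_split(prepared)
print(len(train), len(test))
```

Each part would then be written to CSV without a header and uploaded to S3 before training.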

Console Observation Notes

Execution of the 8th cell

- An output path will be set up in S3 to store the model data.

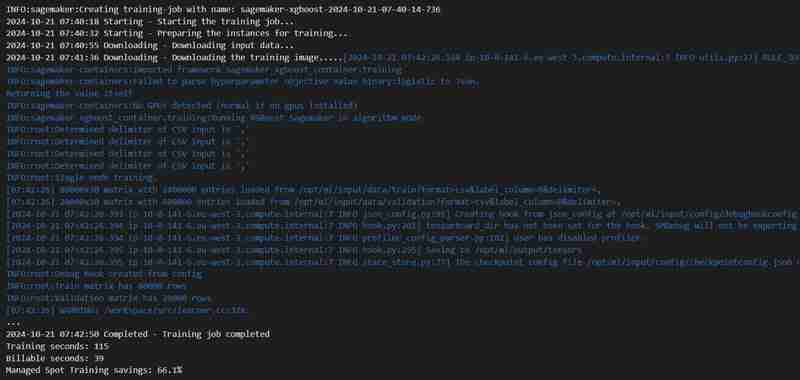

Execution of the 23rd cell

estimator.fit({'train': s3_input_train,'validation': s3_input_test})

- A training job will start; you can check it under the Training tab.

- After some time (about 3 minutes), it will be completed and the same will be shown.

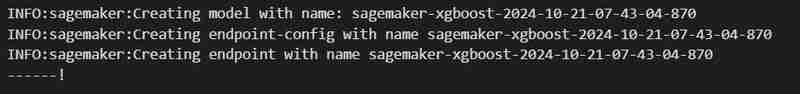

Execution of the 24th code cell

xgb_predictor = estimator.deploy(initial_instance_count=1,instance_type='ml.m5.large')

- An endpoint will be deployed under the Inference tab.

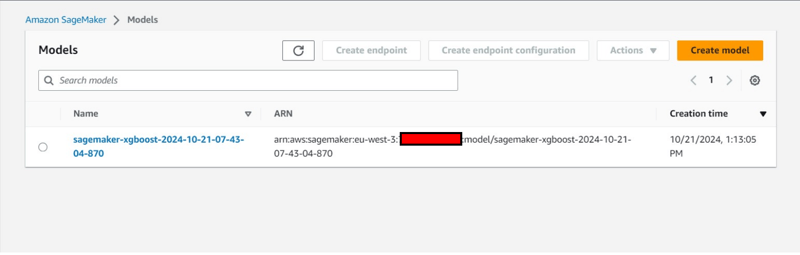

Additional Console Observations:

- Creation of an Endpoint Configuration under the Inference tab.

- Creation of a model, also under the Inference tab.

Ending and Cleanup

- In VS Code, go back to data_upload.ipynb and execute the last 2 code cells to download the S3 bucket's data to the local system.

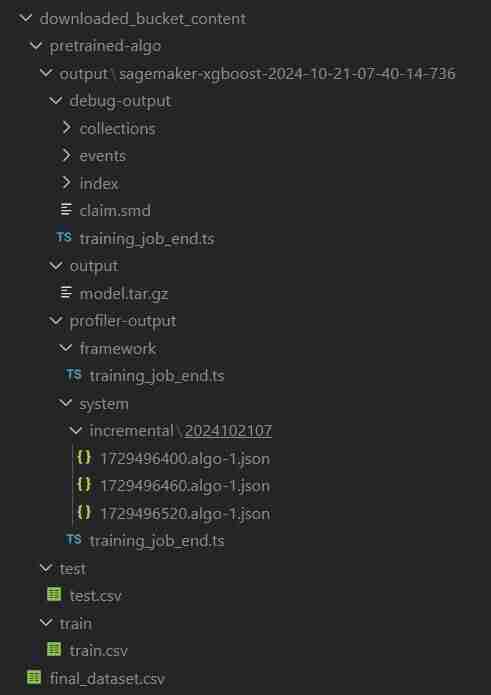

- The folder will be named downloaded_bucket_content. Directory structure of the downloaded folder.

- You will get a log of the downloaded files in the output cell. It will contain the raw pretrained_sm.ipynb, final_dataset.csv and a model output folder named 'pretrained-algo' with the execution data of the SageMaker code file.

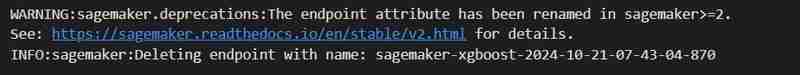
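Downloading a whole bucket into a local folder can be sketched as below. This is not the notebook's code: it is a minimal illustration using boto3's `list_objects_v2` paginator and `download_file`, with hypothetical helper names and the folder name taken from the step above.

```python
import os


def local_path_for(key, root="downloaded_bucket_content"):
    """Map an S3 object key to a path under the local download folder."""
    return os.path.join(root, *key.split("/"))


def download_bucket(bucket_name, root="downloaded_bucket_content", s3_client=None):
    """Download every object in the bucket, mirroring its key structure."""
    if s3_client is None:
        import boto3  # imported lazily so local_path_for works without boto3
        s3_client = boto3.client("s3")
    downloaded = []
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket_name):
        for obj in page.get("Contents", []):
            key = obj["Key"]
            if key.endswith("/"):
                continue  # skip folder placeholder objects
            target = local_path_for(key, root)
            os.makedirs(os.path.dirname(target), exist_ok=True)
            s3_client.download_file(bucket_name, key, target)
            downloaded.append(target)
    return downloaded


print(local_path_for("pretrained-algo/output/model.tar.gz"))
```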

- Finally, go to pretrained_sm.ipynb inside the SageMaker instance and execute the final 2 code cells. The endpoint and the resources within the S3 bucket will be deleted to ensure no additional charges.

- Deleting the endpoint

- Clearing S3 (needed to destroy the instance)
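The two cleanup actions can be sketched with boto3 as follows. This is an illustration under assumptions, not the notebook's cells: the function name and its arguments are hypothetical, while `delete_endpoint` and `Bucket(...).objects.all().delete()` are standard boto3 calls. The bucket is emptied first because Terraform cannot destroy a bucket that still contains objects unless `force_destroy` is set on it.

```python
def cleanup(endpoint_name, bucket_name, sagemaker_client=None, s3_resource=None):
    """Delete the inference endpoint, then remove every object in the bucket."""
    if sagemaker_client is None or s3_resource is None:
        import boto3  # imported lazily so the function can be tested with fakes
        sagemaker_client = sagemaker_client or boto3.client("sagemaker")
        s3_resource = s3_resource or boto3.resource("s3")

    # Stops the hosted endpoint, which is billed for as long as it is running.
    sagemaker_client.delete_endpoint(EndpointName=endpoint_name)

    # Empties the bucket so that `terraform destroy` can remove it afterwards.
    s3_resource.Bucket(bucket_name).objects.all().delete()
```

A call would look like `cleanup("<endpoint-name>", "<bucket-name>")`, with both placeholders replaced by the real names from the console or the Terraform outputs.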

- Go back to the VS Code terminal for the project and type/paste terraform destroy --auto-approve

- All the created resource instances will be deleted.

Auto-Created Objects

ClassiSage/downloaded_bucket_content

ClassiSage/.terraform

ClassiSage/ml_ops/__pycache__

ClassiSage/.terraform.lock.hcl

ClassiSage/terraform.tfstate

ClassiSage/terraform.tfstate.backup

NOTE:

If you liked the idea and implementation of this Machine Learning project using AWS Cloud's S3 and SageMaker for HDFS log classification, with Terraform for IaC (infrastructure setup automation), please consider liking this post and starring the project repository on GitHub after checking it out.
