Installing a Kafka Cluster on CentOS

Preparation: versions

Kafka version: kafka_2.11-0.9.0.0

ZooKeeper version: zookeeper-3.4.7

ZooKeeper cluster: bjrenrui0001 bjrenrui0002 bjrenrui0003

For setting up the ZooKeeper cluster, see: Installing a ZooKeeper Cluster on CentOS

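Physical environment

Three physical machines are used:

192.168.100.200 bjrenrui0001 (runs 3 brokers)

192.168.100.201 bjrenrui0002 (runs 2 brokers)

192.168.100.202 bjrenrui0003 (runs 2 brokers)

The cluster is built in three stages: a single broker on a single node, multiple brokers on a single node, and multiple brokers on multiple nodes.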

Single node, single broker

This section creates one broker on bjrenrui0001 as an example.

Download Kafka:

Download page: http://kafka.apache.org/downloads.html

cd /mq/

wget http://mirrors.hust.edu.cn/apache/kafka/0.9.0.0/kafka_2.11-0.9.0.0.tgz

copyfiles.sh kafka_2.11-0.9.0.0.tgz bjyfnbserver /mq/

tar zxvf kafka_2.11-0.9.0.0.tgz -C /mq/

ln -s /mq/kafka_2.11-0.9.0.0 /mq/kafka

mkdir /mq/kafka/logs

Configuration

Edit config/server.properties:

vi /mq/kafka/config/server.properties

broker.id=1

listeners=PLAINTEXT://:9092

port=9092

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000

Start the Kafka service:

cd /mq/kafka;sh bin/kafka-server-start.sh -daemon config/server.properties

or

sh /mq/kafka/bin/kafka-server-start.sh -daemon /mq/kafka/config/server.properties

netstat -ntlp|grep -E '2181|9092'

(Not all processes could be identified, non-owned process info

will not be shown, you would have to be root to see it all.)

tcp6 0 0 :::9092 :::* LISTEN 26903/java

tcp6 0 0 :::2181 :::* LISTEN 24532/java
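The port check above can also be scripted. The following is a minimal sketch, not part of the original procedure: the function name missing_ports is hypothetical, and it assumes the netstat output layout shown above (local address in the fourth column, state LISTEN in the last or second-to-last column).

```shell
# Hypothetical helper: read `netstat -ntl` or `netstat -ntlp` output on stdin
# and print each expected port that has no LISTEN socket.
# Prints nothing when every expected port is listening.
missing_ports() {
  awk -v want="$*" '
    NF >= 4 && ($NF == "LISTEN" || $(NF - 1) == "LISTEN") {
      # The port is the text after the last ":" in the local address column.
      n = split($4, parts, ":")
      listening[parts[n]] = 1
    }
    END {
      m = split(want, ports, " ")
      for (i = 1; i <= m; i++)
        if (!(ports[i] in listening)) print ports[i]
    }'
}

# Example: netstat -ntlp 2>/dev/null | missing_ports 2181 9092
```

An empty result means both ZooKeeper (2181) and the broker (9092) are accepting connections on this host.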

Create a topic:

sh /mq/kafka/bin/kafka-topics.sh --create --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 --replication-factor 1 --partitions 1 --topic test

List topics:

sh /mq/kafka/bin/kafka-topics.sh --list --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

Send messages with the producer:

$ sh /mq/kafka/bin/kafka-console-producer.sh --broker-list bjrenrui0001:9092 --topic test

first

message

Receive messages with the consumer:

$ sh /mq/kafka/bin/kafka-console-consumer.sh --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 --topic test --from-beginning

first

message

To receive only new messages, omit the --from-beginning option.

Single node, multiple brokers

Copy the folder from the previous section twice, as kafka_2 and kafka_3:

cp -r /mq/kafka_2.11-0.9.0.0 /mq/kafka_2.11-0.9.0.0_2

cp -r /mq/kafka_2.11-0.9.0.0 /mq/kafka_2.11-0.9.0.0_3

ln -s /mq/kafka_2.11-0.9.0.0_2 /mq/kafka_2

ln -s /mq/kafka_2.11-0.9.0.0_3 /mq/kafka_3

In kafka_2/config/server.properties and kafka_3/config/server.properties, change broker.id, the listener port (listeners and port), and log.dirs so that every broker has unique values:

vi /mq/kafka_2/config/server.properties

broker.id=2

listeners=PLAINTEXT://:9093

port=9093

host.name=bjrenrui0001

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka_2/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000

vi /mq/kafka_3/config/server.properties

broker.id=3

listeners=PLAINTEXT://:9094

port=9094

host.name=bjrenrui0001

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka_3/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000
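The copies above differ only in broker.id, the listener port, and log.dirs, so the edits could also be generated instead of typed. The following is a minimal sketch, not part of the original procedure: the function name render_broker_config is hypothetical, and it assumes the template already contains broker.id, listeners, port, and log.dirs lines as in the listings above. It does not add host.name, which still has to be set by hand.

```shell
# Hypothetical helper: rewrite the per-broker settings of a server.properties
# template read from stdin and print the result to stdout.
# Arguments: broker id, port, Kafka install directory (e.g. /mq/kafka_2).
render_broker_config() {
  local id="$1" port="$2" dir="$3"
  sed -e "s|^broker\.id=.*|broker.id=${id}|" \
      -e "s|^listeners=.*|listeners=PLAINTEXT://:${port}|" \
      -e "s|^port=.*|port=${port}|" \
      -e "s|^log\.dirs=.*|log.dirs=${dir}/logs/kafka-logs|"
}

# Example (paths as used in this article):
# render_broker_config 2 9093 /mq/kafka_2 \
#   < /mq/kafka/config/server.properties \
#   > /mq/kafka_2/config/server.properties
```

All other lines of the template pass through unchanged, so shared settings such as zookeeper.connect stay identical across brokers.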

Startup

Start the other two brokers:

sh /mq/kafka_2/bin/kafka-server-start.sh -daemon /mq/kafka_2/config/server.properties

sh /mq/kafka_3/bin/kafka-server-start.sh -daemon /mq/kafka_3/config/server.properties

Check the ports:

[dreamjobs@bjrenrui0001 config]$ netstat -ntlp|grep -E '2181|909[2-9]'|sort -k3

(Not all processes could be identified, non-owned process info

will not be shown, you would have to be root to see it all.)

tcp6 0 0 :::2181 :::* LISTEN 24532/java

tcp6 0 0 :::9092 :::* LISTEN 26903/java

tcp6 0 0 :::9093 :::* LISTEN 28672/java

tcp6 0 0 :::9094 :::* LISTEN 28734/java

Create a topic with a replication factor of 3:

sh /mq/kafka/bin/kafka-topics.sh --create --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 --replication-factor 3 --partitions 1 --topic my-replicated-topic

Check the topic's status:

$ sh /mq/kafka/bin/kafka-topics.sh --describe --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 --topic my-replicated-topic

Topic:my-replicated-topic PartitionCount:1 ReplicationFactor:3 Configs:

Topic: my-replicated-topic Partition: 0 Leader: 3 Replicas: 3,1,2 Isr: 3,1,2

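The output above shows that the topic has 1 partition and a replication factor of 3, and that node 3 (broker.id=3) is the leader.

The fields are explained as follows: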

"leader" is the node responsible for all reads and writes for the given partition. Each node will be the leader for a randomly selected portion of the partitions.

"replicas" is the list of nodes that replicate the log for this partition regardless of whether they are the leader or even if they are currently alive.

"isr" is the set of "in-sync" replicas. This is the subset of the replicas list that is currently alive and caught-up to the leader.

Now look at the test topic created earlier. The output below shows that it is not replicated:

$ sh /mq/kafka/bin/kafka-topics.sh --describe --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 --topic test

Topic:test PartitionCount:1 ReplicationFactor:1 Configs:

Topic: test Partition: 0 Leader: 1 Replicas: 1 Isr: 1
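In both describe outputs the Isr list equals the Replicas list, meaning every replica is in sync. To make that comparison explicit, the sketch below flags partitions whose Isr list is shorter than their Replicas list. It is an illustration only: the function name under_replicated is hypothetical, and it assumes the describe output format shown above (the fields "Replicas:" and "Isr:" each followed by comma-separated broker ids).

```shell
# Hypothetical helper: read `kafka-topics.sh --describe` output on stdin and
# print "<topic> <partition>" for every partition whose ISR has fewer members
# than its replica list.
under_replicated() {
  awk '
    /Partition:/ && /Replicas:/ && /Isr:/ {
      topic = ""; part = ""; reps = ""; isr = ""
      for (i = 1; i < NF; i++) {
        if ($i == "Topic:")     topic = $(i + 1)
        if ($i == "Partition:") part  = $(i + 1)
        if ($i == "Replicas:")  reps  = $(i + 1)
        if ($i == "Isr:")       isr   = $(i + 1)
      }
      if (split(isr, a, ",") < split(reps, b, ",")) print topic, part
    }'
}

# Example:
# sh /mq/kafka/bin/kafka-topics.sh --describe \
#   --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 | under_replicated
```

No output means every listed partition currently has all of its replicas in sync.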

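Multiple nodes, multiple brokers

On bjrenrui0002, extract the downloaded archive into two folders, kafka_4 and kafka_5; on bjrenrui0003, extract it into kafka_6 and kafka_7. Then copy the server.properties file from bjrenrui0001 into each of these four folders and edit it as follows: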

vi /mq/kafka_4/config/server.properties

broker.id=4

listeners=PLAINTEXT://:9095

port=9095

host.name=bjrenrui0002

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka_4/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000

vi /mq/kafka_5/config/server.properties

broker.id=5

listeners=PLAINTEXT://:9096

port=9096

host.name=bjrenrui0002

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka_5/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000

vi /mq/kafka_6/config/server.properties

broker.id=6

listeners=PLAINTEXT://:9097

port=9097

host.name=bjrenrui0003

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka_6/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000

vi /mq/kafka_7/config/server.properties

broker.id=7

listeners=PLAINTEXT://:9098

port=9098

host.name=bjrenrui0003

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=1048576

socket.receive.buffer.bytes=1048576

socket.request.max.bytes=104857600

log.dirs=/mq/kafka_7/logs/kafka-logs

num.partitions=10

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

log.cleaner.enable=false

zookeeper.connect=bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181

zookeeper.connection.timeout.ms=6000

Start the services (run each command on the host where that broker is installed):

sh /mq/kafka/bin/kafka-server-start.sh -daemon /mq/kafka/config/server.properties

sh /mq/kafka_2/bin/kafka-server-start.sh -daemon /mq/kafka_2/config/server.properties

sh /mq/kafka_3/bin/kafka-server-start.sh -daemon /mq/kafka_3/config/server.properties

sh /mq/kafka_4/bin/kafka-server-start.sh -daemon /mq/kafka_4/config/server.properties

sh /mq/kafka_5/bin/kafka-server-start.sh -daemon /mq/kafka_5/config/server.properties

sh /mq/kafka_6/bin/kafka-server-start.sh -daemon /mq/kafka_6/config/server.properties

sh /mq/kafka_7/bin/kafka-server-start.sh -daemon /mq/kafka_7/config/server.properties

Check:

$ netstat -ntlp|grep -E '2181|909[2-9]'|sort -k3

Stop the services:

sh /mq/kafka/bin/kafka-server-stop.sh

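Caution: stopping brokers with this script stops every broker on the node, not just one, because it sends SIGTERM to every Kafka broker process it finds: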

ps ax | grep -i 'kafka\.Kafka' | grep java | grep -v grep | awk '{print $1}' | xargs kill -SIGTERM
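To stop a single broker on a node that runs several, one option is to select the process by the server.properties path on its command line instead of by the kafka.Kafka class name alone. The following is a minimal sketch under that assumption: the function name broker_pids is hypothetical, and it only works if each broker was started with a distinct absolute config path as its last argument, as in the start commands above.

```shell
# Hypothetical helper: read `ps ax` output on stdin and print the PIDs of
# Kafka broker processes whose last command-line argument is the given
# server.properties path.
broker_pids() {
  local config="$1"
  awk -v cfg="$config" '
    /kafka\.Kafka/ && $NF == cfg { print $1 }'
}

# Example: stop only the broker started from /mq/kafka_2.
# (ww keeps ps from truncating long command lines; -r is a GNU xargs option.)
# ps axww | broker_pids /mq/kafka_2/config/server.properties | xargs -r kill -SIGTERM
```

Brokers started from other directories on the same host keep running, since their command lines end with a different config path.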

At this point, all 7 brokers on the three physical machines are up:

[dreamjobs@bjrenrui0001 bin]$ netstat -ntlp|grep -E '2181|909[2-9]'|sort -k3

(Not all processes could be identified, non-owned process info

will not be shown, you would have to be root to see it all.)

tcp6 0 0 :::2181 :::* LISTEN 24532/java

tcp6 0 0 :::9092 :::* LISTEN 33212/java

tcp6 0 0 :::9093 :::* LISTEN 32997/java

tcp6 0 0 :::9094 :::* LISTEN 33064/java

[dreamjobs@bjrenrui0002 config]$ netstat -ntlp|grep -E '2181|909[2-9]'|sort -k3

(Not all processes could be identified, non-owned process info

will not be shown, you would have to be root to see it all.)

tcp6 0 0 :::2181 :::* LISTEN 6899/java

tcp6 0 0 :::9095 :::* LISTEN 33251/java

tcp6 0 0 :::9096 :::* LISTEN 33279/java

[dreamjobs@bjrenrui0003 config]$ netstat -ntlp|grep -E '2181|909[2-9]'|sort -k3

(Not all processes could be identified, non-owned process info

will not be shown, you would have to be root to see it all.)

tcp 0 0 0.0.0.0:2181 0.0.0.0:* LISTEN 14562/java

tcp 0 0 0.0.0.0:9097 0.0.0.0:* LISTEN 23246/java

tcp 0 0 0.0.0.0:9098 0.0.0.0:* LISTEN 23270/java

Send messages with the producer:

$ sh /mq/kafka/bin/kafka-console-producer.sh --broker-list bjrenrui0001:9092 --topic my-replicated-topic

Receive messages with the consumer:

$ sh /mq/kafka_4/bin/kafka-console-consumer.sh --zookeeper bjrenrui0001:2181,bjrenrui0002:2181,bjrenrui0003:2181 --topic my-replicated-topic --from-beginning

如何在 iPhone 和 Android 上关闭蓝色警报Feb 29, 2024 pm 10:10 PM

如何在 iPhone 和 Android 上关闭蓝色警报Feb 29, 2024 pm 10:10 PM根据美国司法部的解释,蓝色警报旨在提供关于可能对执法人员构成直接和紧急威胁的个人的重要信息。这种警报的目的是及时通知公众,并让他们了解与这些罪犯相关的潜在危险。通过这种主动的方式,蓝色警报有助于增强社区的安全意识,促使人们采取必要的预防措施以保护自己和周围的人。这种警报系统的建立旨在提高对潜在威胁的警觉性,并加强执法机构与公众之间的沟通,以共尽管这些紧急通知对我们社会至关重要,但有时可能会对日常生活造成干扰,尤其是在午夜或重要活动时收到通知时。为了确保安全,我们建议您保持这些通知功能开启,但如果

在Android中实现轮询的方法是什么?Sep 21, 2023 pm 08:33 PM

在Android中实现轮询的方法是什么?Sep 21, 2023 pm 08:33 PMAndroid中的轮询是一项关键技术,它允许应用程序定期从服务器或数据源检索和更新信息。通过实施轮询,开发人员可以确保实时数据同步并向用户提供最新的内容。它涉及定期向服务器或数据源发送请求并获取最新信息。Android提供了定时器、线程、后台服务等多种机制来高效地完成轮询。这使开发人员能够设计与远程数据源保持同步的响应式动态应用程序。本文探讨了如何在Android中实现轮询。它涵盖了实现此功能所涉及的关键注意事项和步骤。轮询定期检查更新并从服务器或源检索数据的过程在Android中称为轮询。通过

如何在Android中实现按下返回键再次退出的功能?Aug 30, 2023 am 08:05 AM

如何在Android中实现按下返回键再次退出的功能?Aug 30, 2023 am 08:05 AM为了提升用户体验并防止数据或进度丢失,Android应用程序开发者必须避免意外退出。他们可以通过加入“再次按返回退出”功能来实现这一点,该功能要求用户在特定时间内连续按两次返回按钮才能退出应用程序。这种实现显著提升了用户参与度和满意度,确保他们不会意外丢失任何重要信息Thisguideexaminesthepracticalstepstoadd"PressBackAgaintoExit"capabilityinAndroid.Itpresentsasystematicguid

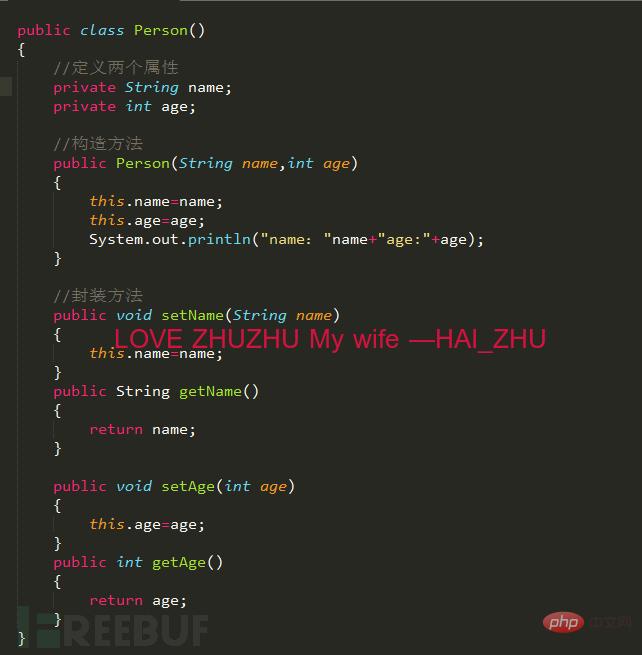

Android逆向中smali复杂类实例分析May 12, 2023 pm 04:22 PM

Android逆向中smali复杂类实例分析May 12, 2023 pm 04:22 PM1.java复杂类如果有什么地方不懂,请看:JAVA总纲或者构造方法这里贴代码,很简单没有难度。2.smali代码我们要把java代码转为smali代码,可以参考java转smali我们还是分模块来看。2.1第一个模块——信息模块这个模块就是基本信息,说明了类名等,知道就好对分析帮助不大。2.2第二个模块——构造方法我们来一句一句解析,如果有之前解析重复的地方就不再重复了。但是会提供链接。.methodpublicconstructor(Ljava/lang/String;I)V这一句话分为.m

如何在2023年将 WhatsApp 从安卓迁移到 iPhone 15?Sep 22, 2023 pm 02:37 PM

如何在2023年将 WhatsApp 从安卓迁移到 iPhone 15?Sep 22, 2023 pm 02:37 PM如何将WhatsApp聊天从Android转移到iPhone?你已经拿到了新的iPhone15,并且你正在从Android跳跃?如果是这种情况,您可能还对将WhatsApp从Android转移到iPhone感到好奇。但是,老实说,这有点棘手,因为Android和iPhone的操作系统不兼容。但不要失去希望。这不是什么不可能完成的任务。让我们在本文中讨论几种将WhatsApp从Android转移到iPhone15的方法。因此,坚持到最后以彻底学习解决方案。如何在不删除数据的情况下将WhatsApp

同样基于linux为什么安卓效率低Mar 15, 2023 pm 07:16 PM

同样基于linux为什么安卓效率低Mar 15, 2023 pm 07:16 PM原因:1、安卓系统上设置了一个JAVA虚拟机来支持Java应用程序的运行,而这种虚拟机对硬件的消耗是非常大的;2、手机生产厂商对安卓系统的定制与开发,增加了安卓系统的负担,拖慢其运行速度影响其流畅性;3、应用软件太臃肿,同质化严重,在一定程度上拖慢安卓手机的运行速度。

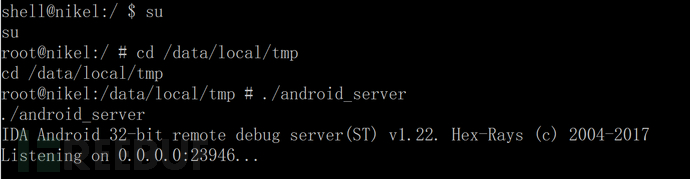

Android中动态导出dex文件的方法是什么May 30, 2023 pm 04:52 PM

Android中动态导出dex文件的方法是什么May 30, 2023 pm 04:52 PM1.启动ida端口监听1.1启动Android_server服务1.2端口转发1.3软件进入调试模式2.ida下断2.1attach附加进程2.2断三项2.3选择进程2.4打开Modules搜索artPS:小知识Android4.4版本之前系统函数在libdvm.soAndroid5.0之后系统函数在libart.so2.5打开Openmemory()函数在libart.so中搜索Openmemory函数并且跟进去。PS:小知识一般来说,系统dex都会在这个函数中进行加载,但是会出现一个问题,后

Android APP测试流程和常见问题是什么May 13, 2023 pm 09:58 PM

Android APP测试流程和常见问题是什么May 13, 2023 pm 09:58 PM1.自动化测试自动化测试主要包括几个部分,UI功能的自动化测试、接口的自动化测试、其他专项的自动化测试。1.1UI功能自动化测试UI功能的自动化测试,也就是大家常说的自动化测试,主要是基于UI界面进行的自动化测试,通过脚本实现UI功能的点击,替代人工进行自动化测试。这个测试的优势在于对高度重复的界面特性功能测试的测试人力进行有效的释放,利用脚本的执行,实现功能的快速高效回归。但这种测试的不足之处也是显而易见的,主要包括维护成本高,易发生误判,兼容性不足等。因为是基于界面操作,界面的稳定程度便成了

핫 AI 도구

Undresser.AI Undress

사실적인 누드 사진을 만들기 위한 AI 기반 앱

AI Clothes Remover

사진에서 옷을 제거하는 온라인 AI 도구입니다.

Undress AI Tool

무료로 이미지를 벗다

Clothoff.io

AI 옷 제거제

AI Hentai Generator

AI Hentai를 무료로 생성하십시오.

인기 기사

뜨거운 도구

Dreamweaver Mac版

시각적 웹 개발 도구

SublimeText3 Linux 새 버전

SublimeText3 Linux 최신 버전

SublimeText3 중국어 버전

중국어 버전, 사용하기 매우 쉽습니다.

SublimeText3 영어 버전

권장 사항: Win 버전, 코드 프롬프트 지원!

ZendStudio 13.5.1 맥

강력한 PHP 통합 개발 환경