YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy while maintaining real-time processing speeds. This article explores the key innovations in YOLO v12, highlighting how it surpasses the previous versions while minimizing computational costs without compromising detection efficiency.

Table of contents

- What’s New in YOLO v12?

- Key Improvements Over Previous Versions

- Computational Efficiency Enhancements

- YOLO v12 Model Variants

- Let’s compare YOLO v11 and YOLO v12 Models

- Expert Opinions on YOLOv11 and YOLOv12

- Conclusion

What’s New in YOLO v12?

Previously, YOLO models relied on Convolutional Neural Networks (CNNs) for object detection due to their speed and efficiency. However, YOLO v12 makes use of attention mechanisms, a concept widely known and used in Transformer models which allow it to recognize patterns more effectively. While attention mechanisms have originally been slow for real-time object detection, YOLO v12 somehow successfully integrates them while maintaining YOLO’s speed, leading to an Attention-Centric YOLO framework.

Key Improvements Over Previous Versions

1. Attention-Centric Framework

YOLO v12 combines the power of attention mechanisms with CNNs, resulting in a model that is both faster and more accurate. Unlike its predecessors which relied solely on CNNs, YOLO v12 introduces optimized attention modules to improve object recognition without adding unnecessary latency.

2. Superior Performance Metrics

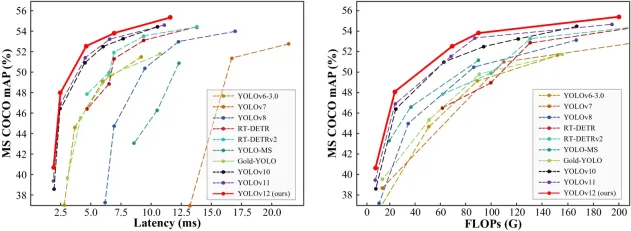

Comparing performance metrics across different YOLO versions and real-time detection models reveals that YOLO v12 achieves higher accuracy while maintaining low latency.

- The mAP (Mean Average Precision) values on datasets like COCO show YOLO v12 outperforming YOLO v11 and YOLO v10 while maintaining comparable speed.

- The model achieves a remarkable 40.6% accuracy (mAP) while processing images in just 1.64 milliseconds on an Nvidia T4 GPU. This performance is superior to YOLO v10 and YOLO v11 without sacrificing speed.

3. Outperforming Non-YOLO Models

YOLO v12 surpasses previous YOLO versions; it also outperforms other real-time object detection frameworks, such as RT-Det and RT-Det v2. These alternative models have higher latency yet fail to match YOLO v12’s accuracy.

Computational Efficiency Enhancements

One of the major concerns with integrating attention mechanisms into YOLO models was their high computational cost (Attention Mechanism) and memory inefficiency. YOLO v12 addresses these issues through several key innovations:

1. Flash Attention for Memory Efficiency

Traditional attention mechanisms consume a large amount of memory, making them impractical for real-time applications. YOLO v12 introduces Flash Attention, a technique that reduces memory consumption and speeds up inference time.

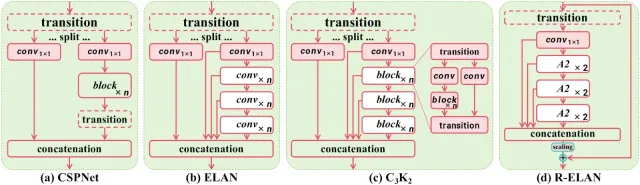

2. Area Attention for Lower Computation Cost

To further optimize efficiency, YOLO v12 employs Area Attention, which focuses only on relevant regions of an image instead of processing the entire feature map. This technique dramatically reduces computation costs while retaining accuracy.

3. R-ELAN for Optimized Feature Processing

YOLO v12 also introduces R-ELAN (Re-Engineered ELAN), which optimizes feature propagation making the model more efficient in handling complex object detection tasks without increasing computational demands.

YOLO v12 Model Variants

YOLO v12 comes in five different variants, catering to different applications:

- N (Nano) & S (Small): Designed for real-time applications where speed is crucial.

- M (Medium): Balances accuracy and speed, suitable for general-purpose tasks.

- L (Large) & XL (Extra Large): Optimized for high-precision tasks where accuracy is prioritized over speed.

Also read:

- A Step-by-Step Introduction to the Basic Object Detection Algorithms (Part 1)

- A Practical Implementation of the Faster R-CNN Algorithm for Object Detection (Part 2)

- A Practical Guide to Object Detection using the Popular YOLO Framework – Part III (with Python codes)

Let’s compare YOLO v11 and YOLO v12 Models

We’ll be experimenting with YOLO v11 and YOLO v12 small models to understand their performance across various tasks like object counting, heatmaps, and speed estimation.

1. Object Counting

YOLO v11

import cv2

from ultralytics import solutions

cap = cv2.VideoCapture("highway.mp4")

assert cap.isOpened(), "Error reading video file"

w, h, fps = (int(cap.get(cv2.CAP_PROP_FRAME_WIDTH)), int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT)), int(cap.get(cv2.CAP_PROP_FPS)))

# Define region points

region_points = [(20, 1500), (1080, 1500), (1080, 1460), (20, 1460)] # Lower rectangle region counting

# Video writer (MP4 format)

video_writer = cv2.VideoWriter("object_counting_output.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Init ObjectCounter

counter = solutions.ObjectCounter(

show=False, # Disable internal window display

region=region_points,

model="yolo11s.pt",

)

# Process video

while cap.isOpened():

success, im0 = cap.read()

if not success:

print("Video frame is empty or video processing has been successfully completed.")

break

im0 = counter.count(im0)

# Resize to fit screen (optional — scale down for large videos)

im0_resized = cv2.resize(im0, (640, 360)) # Adjust resolution as needed

# Show the resized frame

cv2.imshow("Object Counting", im0_resized)

video_writer.write(im0)

# Press 'q' to exit

if cv2.waitKey(1) & 0xFF == ord('q'):

break

cap.release()

video_writer.release()

cv2.destroyAllWindows()

Output

YOLO v12

import cv2

from ultralytics import solutions

cap = cv2.VideoCapture("highway.mp4")

assert cap.isOpened(), "Error reading video file"

w, h, fps = (int(cap.get(cv2.CAP_PROP_FRAME_WIDTH)), int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT)), int(cap.get(cv2.CAP_PROP_FPS)))

# Define region points

region_points = [(20, 1500), (1080, 1500), (1080, 1460), (20, 1460)] # Lower rectangle region counting

# Video writer (MP4 format)

video_writer = cv2.VideoWriter("object_counting_output.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Init ObjectCounter

counter = solutions.ObjectCounter(

show=False, # Disable internal window display

region=region_points,

model="yolo12s.pt",

)

# Process video

while cap.isOpened():

success, im0 = cap.read()

if not success:

print("Video frame is empty or video processing has been successfully completed.")

break

im0 = counter.count(im0)

# Resize to fit screen (optional — scale down for large videos)

im0_resized = cv2.resize(im0, (640, 360)) # Adjust resolution as needed

# Show the resized frame

cv2.imshow("Object Counting", im0_resized)

video_writer.write(im0)

# Press 'q' to exit

if cv2.waitKey(1) & 0xFF == ord('q'):

break

cap.release()

video_writer.release()

cv2.destroyAllWindows()

Output

2. Heatmaps

YOLO v11

import cv2

from ultralytics import solutions

cap = cv2.VideoCapture("mall_arial.mp4")

assert cap.isOpened(), "Error reading video file"

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

# Video writer

video_writer = cv2.VideoWriter("heatmap_output_yolov11.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# In case you want to apply object counting + heatmaps, you can pass region points.

# region_points = [(20, 400), (1080, 400)] # Define line points

# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360)] # Define region points

# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360), (20, 400)] # Define polygon points

# Init heatmap

heatmap = solutions.Heatmap(

show=True, # Display the output

model="yolo11s.pt", # Path to the YOLO11 model file

colormap=cv2.COLORMAP_PARULA, # Colormap of heatmap

# region=region_points, # If you want to do object counting with heatmaps, you can pass region_points

# classes=[0, 2], # If you want to generate heatmap for specific classes i.e person and car.

# show_in=True, # Display in counts

# show_out=True, # Display out counts

# line_width=2, # Adjust the line width for bounding boxes and text display

)

# Process video

while cap.isOpened():

success, im0 = cap.read()

if not success:

print("Video frame is empty or video processing has been successfully completed.")

break

im0 = heatmap.generate_heatmap(im0)

im0_resized = cv2.resize(im0, (w, h))

video_writer.write(im0_resized)

cap.release()

video_writer.release()

cv2.destroyAllWindows()

Output

YOLO v12

import cv2

from ultralytics import solutions

cap = cv2.VideoCapture("mall_arial.mp4")

assert cap.isOpened(), "Error reading video file"

w, h, fps = (int(cap.get(x)) for x in (cv2.CAP_PROP_FRAME_WIDTH, cv2.CAP_PROP_FRAME_HEIGHT, cv2.CAP_PROP_FPS))

# Video writer

video_writer = cv2.VideoWriter("heatmap_output_yolov12.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# In case you want to apply object counting + heatmaps, you can pass region points.

# region_points = [(20, 400), (1080, 400)] # Define line points

# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360)] # Define region points

# region_points = [(20, 400), (1080, 400), (1080, 360), (20, 360), (20, 400)] # Define polygon points

# Init heatmap

heatmap = solutions.Heatmap(

show=True, # Display the output

model="yolo12s.pt", # Path to the YOLO11 model file

colormap=cv2.COLORMAP_PARULA, # Colormap of heatmap

# region=region_points, # If you want to do object counting with heatmaps, you can pass region_points

# classes=[0, 2], # If you want to generate heatmap for specific classes i.e person and car.

# show_in=True, # Display in counts

# show_out=True, # Display out counts

# line_width=2, # Adjust the line width for bounding boxes and text display

)

# Process video

while cap.isOpened():

success, im0 = cap.read()

if not success:

print("Video frame is empty or video processing has been successfully completed.")

break

im0 = heatmap.generate_heatmap(im0)

im0_resized = cv2.resize(im0, (w, h))

video_writer.write(im0_resized)

cap.release()

video_writer.release()

cv2.destroyAllWindows()

Output

3. Speed Estimation

YOLO v11

import cv2

from ultralytics import solutions

import numpy as np

cap = cv2.VideoCapture("cars_on_road.mp4")

assert cap.isOpened(), "Error reading video file"

# Capture video properties

w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))

h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

fps = int(cap.get(cv2.CAP_PROP_FPS))

# Video writer

video_writer = cv2.VideoWriter("speed_management_yolov11.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Define speed region points (adjust for your video resolution)

speed_region = [(300, h - 200), (w - 100, h - 200), (w - 100, h - 270), (300, h - 270)]

# Initialize SpeedEstimator

speed = solutions.SpeedEstimator(

show=False, # Disable internal window display

model="yolo11s.pt", # Path to the YOLO model file

region=speed_region, # Pass region points

# classes=[0, 2], # Optional: Filter specific object classes (e.g., cars, trucks)

# line_width=2, # Optional: Adjust the line width

)

# Process video

while cap.isOpened():

success, im0 = cap.read()

if not success:

print("Video frame is empty or video processing has been successfully completed.")

break

# Estimate speed and draw bounding boxes

out = speed.estimate_speed(im0)

# Draw the speed region on the frame

cv2.polylines(out, [np.array(speed_region)], isClosed=True, color=(0, 255, 0), thickness=2)

# Resize the frame to fit the screen

im0_resized = cv2.resize(out, (1280, 720)) # Resize for better screen fit

# Show the resized frame

cv2.imshow("Speed Estimation", im0_resized)

video_writer.write(out)

# Press 'q' to exit

if cv2.waitKey(1) & 0xFF == ord('q'):

break

cap.release()

video_writer.release()

cv2.destroyAllWindows()

Output

YOLO v12

import cv2

from ultralytics import solutions

import numpy as np

cap = cv2.VideoCapture("cars_on_road.mp4")

assert cap.isOpened(), "Error reading video file"

# Capture video properties

w = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))

h = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))

fps = int(cap.get(cv2.CAP_PROP_FPS))

# Video writer

video_writer = cv2.VideoWriter("speed_management_yolov12.mp4", cv2.VideoWriter_fourcc(*"mp4v"), fps, (w, h))

# Define speed region points (adjust for your video resolution)

speed_region = [(300, h - 200), (w - 100, h - 200), (w - 100, h - 270), (300, h - 270)]

# Initialize SpeedEstimator

speed = solutions.SpeedEstimator(

show=False, # Disable internal window display

model="yolo12s.pt", # Path to the YOLO model file

region=speed_region, # Pass region points

# classes=[0, 2], # Optional: Filter specific object classes (e.g., cars, trucks)

# line_width=2, # Optional: Adjust the line width

)

# Process video

while cap.isOpened():

success, im0 = cap.read()

if not success:

print("Video frame is empty or video processing has been successfully completed.")

break

# Estimate speed and draw bounding boxes

out = speed.estimate_speed(im0)

# Draw the speed region on the frame

cv2.polylines(out, [np.array(speed_region)], isClosed=True, color=(0, 255, 0), thickness=2)

# Resize the frame to fit the screen

im0_resized = cv2.resize(out, (1280, 720)) # Resize for better screen fit

# Show the resized frame

cv2.imshow("Speed Estimation", im0_resized)

video_writer.write(out)

# Press 'q' to exit

if cv2.waitKey(1) & 0xFF == ord('q'):

break

cap.release()

video_writer.release()

cv2.destroyAllWindows()

Output

Also Read: Top 30+ Computer Vision Models For 2025

Expert Opinions on YOLOv11 and YOLOv12

Muhammad Rizwan Munawar — Computer Vision Engineer at Ultralytics

“YOLOv12 introduces flash attention, which enhances accuracy, but it requires careful CUDA setup. It’s a solid step forward, especially for complex detection tasks, though YOLOv11 remains faster for real-time needs. In short, choose YOLOv12 for accuracy and YOLOv11 for speed.”

Linkedin Post – Is YOLOv12 really a state-of-the-art model? ?

Muhammad Rizwan, recently tested YOLOv11 and YOLOv12 side by side to break down their real-world performance. His findings highlight the trade-offs between the two models:

- Frames Per Second (FPS): YOLOv11 maintains an average of 40 FPS, while YOLOv12 lags behind at 30 FPS. This makes YOLOv11 the better choice for real-time applications where speed is critical, such as traffic monitoring or live video feeds.

- Training Time: YOLOv12 takes about 20% longer to train than YOLOv11. On a small dataset with 130 training images and 43 validation images, YOLOv11 completed training in 0.009 hours, while YOLOv12 needed 0.011 hours. While this might seem minor for small datasets, the difference becomes significant for larger-scale projects.

- Accuracy: Both models achieved similar accuracy after fine-tuning for 10 epochs on the same dataset. YOLOv12 didn’t dramatically outperform YOLOv11 in terms of accuracy, suggesting the newer model’s improvements lie more in architectural enhancements than raw detection precision.

- Flash Attention: YOLOv12 introduces flash attention, a powerful mechanism that speeds up and optimizes attention layers. However, there’s a catch — this feature isn’t natively supported on the CPU, and enabling it with CUDA requires careful version-specific setup. For teams without powerful GPUs or those working on edge devices, this can become a roadblock.

The PC specifications used for testing:

- GPU: NVIDIA RTX 3050

- CPU: Intel Core-i5-10400 @2.90GHz

- RAM: 64 GB

The model specifications:

- Model = YOLO11n.pt and YOLOv12n.pt

- Image size = 640 for inference

Conclusion

YOLO v12 marks a significant leap forward in real-time object detection, combining CNN speed with Transformer-like attention mechanisms. With improved accuracy, lower computational costs, and a range of model variants, YOLO v12 is poised to redefine the landscape of real-time vision applications. Whether for autonomous vehicles, security surveillance, or medical imaging, YOLO v12 sets a new standard for real-time object detection efficiency.

What’s Next?

- YOLO v13 Possibilities: Will future versions push the attention mechanisms even further?

- Edge Device Optimization: Can Flash Attention or Area Attention be optimized for lower-power devices?

To help you better understand the differences, I’ve attached some code snippets and output results in the comparison section. These examples illustrate how both YOLOv11 and YOLOv12 perform in real-world scenarios, from object counting to speed estimation and heatmaps. I’m excited to see how you guys perceive this new release! Are the improvements in accuracy and attention mechanisms enough to justify the trade-offs in speed? Or do you think YOLOv11 still holds its ground for most applications?

위 내용은 물체 감지에 Yolo V12를 사용하는 방법은 무엇입니까?의 상세 내용입니다. 자세한 내용은 PHP 중국어 웹사이트의 기타 관련 기사를 참조하세요!

가장 많이 사용되는 10 개의 Power BI 차트 -Axaltics VidhyaApr 16, 2025 pm 12:05 PM

가장 많이 사용되는 10 개의 Power BI 차트 -Axaltics VidhyaApr 16, 2025 pm 12:05 PMMicrosoft Power BI 차트로 데이터 시각화의 힘을 활용 오늘날의 데이터 중심 세계에서는 복잡한 정보를 비 기술적 인 청중에게 효과적으로 전달하는 것이 중요합니다. 데이터 시각화는이 차이를 연결하여 원시 데이터를 변환합니다. i

AI의 전문가 시스템Apr 16, 2025 pm 12:00 PM

AI의 전문가 시스템Apr 16, 2025 pm 12:00 PM전문가 시스템 : AI의 의사 결정 능력에 대한 깊은 다이빙 의료 진단에서 재무 계획에 이르기까지 모든 것에 대한 전문가의 조언에 접근 할 수 있다고 상상해보십시오. 그것이 인공 지능 분야의 전문가 시스템의 힘입니다. 이 시스템은 프로를 모방합니다

최고의 바이브 코더 3 명이 코드 에서이 AI 혁명을 분해합니다.Apr 16, 2025 am 11:58 AM

최고의 바이브 코더 3 명이 코드 에서이 AI 혁명을 분해합니다.Apr 16, 2025 am 11:58 AM우선, 이것이 빠르게 일어나고 있음이 분명합니다. 다양한 회사들이 현재 AI가 작성한 코드의 비율에 대해 이야기하고 있으며 빠른 클립에서 증가하고 있습니다. 이미 주변에 많은 작업 변위가 있습니다

활주로 AI의 GEN-4 : AI Montage는 어떻게 부조리를 넘어갈 수 있습니까?Apr 16, 2025 am 11:45 AM

활주로 AI의 GEN-4 : AI Montage는 어떻게 부조리를 넘어갈 수 있습니까?Apr 16, 2025 am 11:45 AM디지털 마케팅에서 소셜 미디어에 이르기까지 모든 창의적 부문과 함께 영화 산업은 기술 교차로에 있습니다. 인공 지능이 시각적 스토리 텔링의 모든 측면을 재구성하고 엔터테인먼트의 풍경을 바꾸기 시작함에 따라

ISRO AI 무료 코스 5 일 동안 등록하는 방법은 무엇입니까? - 분석 VidhyaApr 16, 2025 am 11:43 AM

ISRO AI 무료 코스 5 일 동안 등록하는 방법은 무엇입니까? - 분석 VidhyaApr 16, 2025 am 11:43 AMISRO의 무료 AI/ML 온라인 코스 : 지리 공간 기술 혁신의 관문 IIRS (Indian Institute of Remote Sensing)를 통해 Indian Space Research Organization (ISRO)은 학생과 전문가에게 환상적인 기회를 제공하고 있습니다.

AI의 로컬 검색 알고리즘Apr 16, 2025 am 11:40 AM

AI의 로컬 검색 알고리즘Apr 16, 2025 am 11:40 AM로컬 검색 알고리즘 : 포괄적 인 가이드 대규모 이벤트를 계획하려면 효율적인 작업량 배포가 필요합니다. 전통적인 접근 방식이 실패하면 로컬 검색 알고리즘은 강력한 솔루션을 제공합니다. 이 기사는 언덕 등반과 Simul을 탐구합니다

Openai는 GPT-4.1로 초점을 이동하고 코딩 및 비용 효율성을 우선시합니다.Apr 16, 2025 am 11:37 AM

Openai는 GPT-4.1로 초점을 이동하고 코딩 및 비용 효율성을 우선시합니다.Apr 16, 2025 am 11:37 AM릴리스에는 GPT-4.1, GPT-4.1 MINI 및 GPT-4.1 NANO의 세 가지 모델이 포함되어 있으며, 대형 언어 모델 환경 내에서 작업 별 최적화로 이동합니다. 이 모델은 사용자를 향한 인터페이스를 즉시 대체하지 않습니다

프롬프트 : Chatgpt는 가짜 여권을 생성합니다Apr 16, 2025 am 11:35 AM

프롬프트 : Chatgpt는 가짜 여권을 생성합니다Apr 16, 2025 am 11:35 AMChip Giant Nvidia는 월요일에 AI SuperComputers를 제조하기 시작할 것이라고 말했다. 이 발표는 트럼프 SI 대통령 이후에 나온다

핫 AI 도구

Undresser.AI Undress

사실적인 누드 사진을 만들기 위한 AI 기반 앱

AI Clothes Remover

사진에서 옷을 제거하는 온라인 AI 도구입니다.

Undress AI Tool

무료로 이미지를 벗다

Clothoff.io

AI 옷 제거제

AI Hentai Generator

AI Hentai를 무료로 생성하십시오.

인기 기사

뜨거운 도구

안전한 시험 브라우저

안전한 시험 브라우저는 온라인 시험을 안전하게 치르기 위한 보안 브라우저 환경입니다. 이 소프트웨어는 모든 컴퓨터를 안전한 워크스테이션으로 바꿔줍니다. 이는 모든 유틸리티에 대한 액세스를 제어하고 학생들이 승인되지 않은 리소스를 사용하는 것을 방지합니다.

메모장++7.3.1

사용하기 쉬운 무료 코드 편집기

MinGW - Windows용 미니멀리스트 GNU

이 프로젝트는 osdn.net/projects/mingw로 마이그레이션되는 중입니다. 계속해서 그곳에서 우리를 팔로우할 수 있습니다. MinGW: GCC(GNU Compiler Collection)의 기본 Windows 포트로, 기본 Windows 애플리케이션을 구축하기 위한 무료 배포 가능 가져오기 라이브러리 및 헤더 파일로 C99 기능을 지원하는 MSVC 런타임에 대한 확장이 포함되어 있습니다. 모든 MinGW 소프트웨어는 64비트 Windows 플랫폼에서 실행될 수 있습니다.

DVWA

DVWA(Damn Vulnerable Web App)는 매우 취약한 PHP/MySQL 웹 애플리케이션입니다. 주요 목표는 보안 전문가가 법적 환경에서 자신의 기술과 도구를 테스트하고, 웹 개발자가 웹 응용 프로그램 보안 프로세스를 더 잘 이해할 수 있도록 돕고, 교사/학생이 교실 환경 웹 응용 프로그램에서 가르치고 배울 수 있도록 돕는 것입니다. 보안. DVWA의 목표는 다양한 난이도의 간단하고 간단한 인터페이스를 통해 가장 일반적인 웹 취약점 중 일부를 연습하는 것입니다. 이 소프트웨어는

PhpStorm 맥 버전

최신(2018.2.1) 전문 PHP 통합 개발 도구