参考资料: http://www.cloudera.com/content/cloudera-content/cloudera-docs/CDH4/latest/CDH4-Quick-Start/cdh4qs_topic_3_3.html http://www.cloudera.com/content/cloudera-content/cloudera-docs/Impala/latest/Installing-and-Using-Impala/Installing

参考资料:

http://www.cloudera.com/content/cloudera-content/cloudera-docs/CDH4/latest/CDH4-Quick-Start/cdh4qs_topic_3_3.html

http://www.cloudera.com/content/cloudera-content/cloudera-docs/Impala/latest/Installing-and-Using-Impala/Installing-and-Using-Impala.html

http://blog.cloudera.com/blog/2013/02/from-zero-to-impala-in-minutes/

什么是Impala?

Cloudera发布了实时查询开源项目Impala,根据多款产品实测表明,它比原来基于MapReduce的Hive SQL查询速度提升3~90倍。Impala是Google Dremel的模仿,但在SQL功能上青出于蓝胜于蓝。

1. 安装JDK

$ sudo yum install jdk-6u41-linux-amd64.rpm

2. 伪分布式模式安装CDH4

$ cd /etc/yum.repos.d/

$ sudo wget http://archive.cloudera.com/cdh4/redhat/6/x86_64/cdh/cloudera-cdh4.repo

$ sudo yum install hadoop-conf-pseudo

格式化NameNode.

$ sudo -u hdfs hdfs namenode -format

启动HDFS

$ for x in `cd /etc/init.d ; ls hadoop-hdfs-*` ; do sudo service $x start ; done

创建/tmp目录

$ sudo -u hdfs hadoop fs -rm -r /tmp

$ sudo -u hdfs hadoop fs -mkdir /tmp

$ sudo -u hdfs hadoop fs -chmod -R 1777 /tmp

创建YARN与日志目录

$ sudo -u hdfs hadoop fs -mkdir /tmp/hadoop-yarn/staging

$ sudo -u hdfs hadoop fs -chmod -R 1777 /tmp/hadoop-yarn/staging

$ sudo -u hdfs hadoop fs -mkdir /tmp/hadoop-yarn/staging/history/done_intermediate

$ sudo -u hdfs hadoop fs -chmod -R 1777 /tmp/hadoop-yarn/staging/history/done_intermediate

$ sudo -u hdfs hadoop fs -chown -R mapred:mapred /tmp/hadoop-yarn/staging

$ sudo -u hdfs hadoop fs -mkdir /var/log/hadoop-yarn

$ sudo -u hdfs hadoop fs -chown yarn:mapred /var/log/hadoop-yarn

检查HDFS文件树

$ sudo -u hdfs hadoop fs -ls -R /

drwxrwxrwt - hdfs supergroup 0 2012-05-31 15:31 /tmp drwxr-xr-x - hdfs supergroup 0 2012-05-31 15:31 /tmp/hadoop-yarn drwxrwxrwt - mapred mapred 0 2012-05-31 15:31 /tmp/hadoop-yarn/staging drwxr-xr-x - mapred mapred 0 2012-05-31 15:31 /tmp/hadoop-yarn/staging/history drwxrwxrwt - mapred mapred 0 2012-05-31 15:31 /tmp/hadoop-yarn/staging/history/done_intermediate drwxr-xr-x - hdfs supergroup 0 2012-05-31 15:31 /var drwxr-xr-x - hdfs supergroup 0 2012-05-31 15:31 /var/log drwxr-xr-x - yarn mapred 0 2012-05-31 15:31 /var/log/hadoop-yarn

启动YARN

$ sudo service hadoop-yarn-resourcemanager start

$ sudo service hadoop-yarn-nodemanager start

$ sudo service hadoop-mapreduce-historyserver start

创建用户目录(以用户dong.guo为例):

$ sudo -u hdfs hadoop fs -mkdir /user/dong.guo

$ sudo -u hdfs hadoop fs -chown dong.guo /user/dong.guo

测试上传文件

$ hadoop fs -mkdir input

$ hadoop fs -put /etc/hadoop/conf/*.xml input

$ hadoop fs -ls input

Found 4 items -rw-r--r-- 1 dong.guo supergroup 1461 2013-05-14 03:30 input/core-site.xml -rw-r--r-- 1 dong.guo supergroup 1854 2013-05-14 03:30 input/hdfs-site.xml -rw-r--r-- 1 dong.guo supergroup 1325 2013-05-14 03:30 input/mapred-site.xml -rw-r--r-- 1 dong.guo supergroup 2262 2013-05-14 03:30 input/yarn-site.xml

配置HADOOP_MAPRED_HOME环境变量

$ export HADOOP_MAPRED_HOME=/usr/lib/hadoop-mapreduce

运行一个测试Job

$ hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples.jar grep input output23 'dfs[a-z.]+'

Job完成后,可以看到以下目录

$ hadoop fs -ls

Found 2 items drwxr-xr-x - dong.guo supergroup 0 2013-05-14 03:30 input drwxr-xr-x - dong.guo supergroup 0 2013-05-14 03:32 output23

$ hadoop fs -ls output23

Found 2 items -rw-r--r-- 1 dong.guo supergroup 0 2013-05-14 03:32 output23/_SUCCESS -rw-r--r-- 1 dong.guo supergroup 150 2013-05-14 03:32 output23/part-r-00000

$ hadoop fs -cat output23/part-r-00000 | head

1 dfs.safemode.min.datanodes 1 dfs.safemode.extension 1 dfs.replication 1 dfs.namenode.name.dir 1 dfs.namenode.checkpoint.dir 1 dfs.datanode.data.dir

3. 安装 Hive

$ sudo yum install hive hive-metastore hive-server

$ sudo yum install mysql-server

$ sudo service mysqld start

$ cd ~

$ wget 'http://cdn.mysql.com/Downloads/Connector-J/mysql-connector-java-5.1.25.tar.gz'

$ tar xzf mysql-connector-java-5.1.25.tar.gz

$ sudo cp mysql-connector-java-5.1.25/mysql-connector-java-5.1.25-bin.jar /usr/lib/hive/lib/

$ sudo /usr/bin/mysql_secure_installation

[...] Enter current password for root (enter for none): OK, successfully used password, moving on... [...] Set root password? [Y/n] y New password:hadoophive Re-enter new password:hadoophive Remove anonymous users? [Y/n] Y [...] Disallow root login remotely? [Y/n] N [...] Remove test database and access to it [Y/n] Y [...] Reload privilege tables now? [Y/n] Y All done!

$ mysql -u root -phadoophive

mysql> CREATE DATABASE metastore; mysql> USE metastore; mysql> SOURCE /usr/lib/hive/scripts/metastore/upgrade/mysql/hive-schema-0.10.0.mysql.sql; mysql> CREATE USER 'hive'@'%' IDENTIFIED BY 'hadoophive'; mysql> CREATE USER 'hive'@'localhost' IDENTIFIED BY 'hadoophive'; mysql> REVOKE ALL PRIVILEGES, GRANT OPTION FROM 'hive'@'%'; mysql> REVOKE ALL PRIVILEGES, GRANT OPTION FROM 'hive'@'localhost'; mysql> GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO 'hive'@'%'; mysql> GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO 'hive'@'localhost'; mysql> FLUSH PRIVILEGES; mysql> quit;

$ sudo mv /etc/hive/conf/hive-site.xml /etc/hive/conf/hive-site.xml.bak

$ sudo vim /etc/hive/conf/hive-site.xml

<?xml version="1.0"?> <?xml-stylesheet type="text/xsl" href="http://heylinux.com/archives/configuration.xsl"?> javax.jdo.option.ConnectionURL jdbc:mysql://localhost/metastore the URL of the MySQL database javax.jdo.option.ConnectionDriverName com.mysql.jdbc.Driver javax.jdo.option.ConnectionUserName hive javax.jdo.option.ConnectionPassword hadoophive datanucleus.autoCreateSchema false datanucleus.fixedDatastore true hive.metastore.uris thrift://127.0.0.1:9083 IP address (or fully-qualified domain name) and port of the metastore host hive.aux.jars.path file:///usr/lib/hive/lib/zookeeper.jar,file:///usr/lib/hive/lib/hbase.jar,file:///usr/lib/hive/lib/hive-hbase-handler-0.10.0-cdh4.2.0.jar,file:///usr/lib/hive/lib/guava-11.0.2.jar

$ sudo service hive-metastore start

Starting (hive-metastore): [ OK ]

$ sudo service hive-server start

Starting (hive-server): [ OK ]

$ sudo -u hdfs hadoop fs -mkdir /user/hive

$ sudo -u hdfs hadoop fs -chown hive /user/hive

$ sudo -u hdfs hadoop fs -mkdir /tmp

$ sudo -u hdfs hadoop fs -chmod 777 /tmp

$ sudo -u hdfs hadoop fs -chmod o+t /tmp

$ sudo -u hdfs hadoop fs -mkdir /data

$ sudo -u hdfs hadoop fs -chown hdfs /data

$ sudo -u hdfs hadoop fs -chmod 777 /data

$ sudo -u hdfs hadoop fs -chmod o+t /data

$ sudo chown -R hive:hive /var/lib/hive

$ sudo vim /tmp/kv1.txt

1 www.baidu.com 2 www.google.com 3 www.sina.com.cn 4 www.163.com 5 heylinx.com

$ sudo -u hive hive

Logging initialized using configuration in file:/etc/hive/conf.dist/hive-log4j.properties Hive history file=/tmp/root/hive_job_log_root_201305140801_825709760.txt hive> CREATE TABLE IF NOT EXISTS pokes ( foo INT,bar STRING ) ROW FORMAT DELIMITED FIELDS TERMINATED BY "\t" LINES TERMINATED BY "\n"; hive> show tables; OK pokes Time taken: 0.415 seconds hive> LOAD DATA LOCAL INPATH '/tmp/kv1.txt' OVERWRITE INTO TABLE pokes; Copying data from file:/tmp/kv1.txt Copying file: file:/tmp/kv1.txt Loading data to table default.pokes rmr: DEPRECATED: Please use 'rm -r' instead. Deleted /user/hive/warehouse/pokes Table default.pokes stats: [num_partitions: 0, num_files: 1, num_rows: 0, total_size: 79, raw_data_size: 0] OK Time taken: 1.681 seconds

$ export HADOOP_MAPRED_HOME=/usr/lib/hadoop-mapreduce

4. 安装 Impala

$ cd /etc/yum.repos.d/

$ sudo wget http://archive.cloudera.com/impala/redhat/6/x86_64/impala/cloudera-impala.repo

$ sudo yum install impala impala-shell

$ sudo yum install impala-server impala-state-store

$ sudo vim /etc/hadoop/conf/hdfs-site.xml

... dfs.client.read.shortcircuit true dfs.domain.socket.path /var/run/hadoop-hdfs/dn._PORT dfs.client.file-block-storage-locations.timeout 3000 dfs.datanode.hdfs-blocks-metadata.enabled true

$ sudo cp -rpa /etc/hadoop/conf/core-site.xml /etc/impala/conf/

$ sudo cp -rpa /etc/hadoop/conf/hdfs-site.xml /etc/impala/conf/

$ sudo service hadoop-hdfs-datanode restart

$ sudo service impala-state-store restart

$ sudo service impala-server restart

$ sudo /usr/java/default/bin/jps

5. 安装 Hbase

$ sudo yum install hbase

$ sudo vim /etc/security/limits.conf

hdfs - nofile 32768 hbase - nofile 32768

$ sudo vim /etc/pam.d/common-session

session required pam_limits.so

$ sudo vim /etc/hadoop/conf/hdfs-site.xml

dfs.datanode.max.xcievers 4096

$ sudo cp /usr/lib/impala/lib/hive-hbase-handler-0.10.0-cdh4.2.0.jar /usr/lib/hive/lib/hive-hbase-handler-0.10.0-cdh4.2.0.jar

$ sudo /etc/init.d/hadoop-hdfs-namenode restart

$ sudo /etc/init.d/hadoop-hdfs-datanode restart

$ sudo yum install hbase-master

$ sudo service hbase-master start

$ sudo -u hive hive

Logging initialized using configuration in file:/etc/hive/conf.dist/hive-log4j.properties

Hive history file=/tmp/hive/hive_job_log_hive_201305140905_2005531704.txt

hive> CREATE TABLE hbase_table_1(key int, value string) STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler' WITH SERDEPROPERTIES ("hbase.columns.mapping" = ":key,cf1:val") TBLPROPERTIES ("hbase.table.name" = "xyz");

OK

Time taken: 3.587 seconds

hive> INSERT OVERWRITE TABLE hbase_table_1 SELECT * FROM pokes WHERE foo=5;

Total MapReduce jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

Starting Job = job_1368502088579_0004, Tracking URL = http://ip-10-197-10-4:8088/proxy/application_1368502088579_0004/

Kill Command = /usr/lib/hadoop/bin/hadoop job -kill job_1368502088579_0004

Hadoop job information for Stage-0: number of mappers: 1; number of reducers: 0

2013-05-14 09:12:45,340 Stage-0 map = 0%, reduce = 0%

2013-05-14 09:12:53,165 Stage-0 map = 100%, reduce = 0%, Cumulative CPU 2.63 sec

MapReduce Total cumulative CPU time: 2 seconds 630 msec

Ended Job = job_1368502088579_0004

1 Rows loaded to hbase_table_1

MapReduce Jobs Launched:

Job 0: Map: 1 Cumulative CPU: 2.63 sec HDFS Read: 288 HDFS Write: 0 SUCCESS

Total MapReduce CPU Time Spent: 2 seconds 630 msec

OK

Time taken: 21.063 seconds

hive> select * from hbase_table_1;

OK

5 heylinx.com

Time taken: 0.685 seconds

hive> SELECT COUNT (*) FROM pokes;

Total MapReduce jobs = 1

Launching Job 1 out of 1

Number of reduce tasks determined at compile time: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapred.reduce.tasks=<number>

Starting Job = job_1368502088579_0005, Tracking URL = http://ip-10-197-10-4:8088/proxy/application_1368502088579_0005/

Kill Command = /usr/lib/hadoop/bin/hadoop job -kill job_1368502088579_0005

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 1

2013-05-14 10:32:04,711 Stage-1 map = 0%, reduce = 0%

2013-05-14 10:32:11,461 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:12,554 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:13,642 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:14,760 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:15,918 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:16,991 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:18,111 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 1.22 sec

2013-05-14 10:32:19,188 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 4.04 sec

MapReduce Total cumulative CPU time: 4 seconds 40 msec

Ended Job = job_1368502088579_0005

MapReduce Jobs Launched:

Job 0: Map: 1 Reduce: 1 Cumulative CPU: 4.04 sec HDFS Read: 288 HDFS Write: 2 SUCCESS

Total MapReduce CPU Time Spent: 4 seconds 40 msec

OK

5

Time taken: 28.195 seconds

</number></number></number>

6. 测试Impala性能

View parameters on http://ec2-204-236-182-78.us-west-1.compute.amazonaws.com:25000

$ impala-shell

[ip-10-197-10-4.us-west-1.compute.internal:21000] > CREATE TABLE IF NOT EXISTS pokes ( foo INT,bar STRING ) ROW FORMAT DELIMITED FIELDS TERMINATED BY "\t" LINES TERMINATED BY "\n"; Query: create TABLE IF NOT EXISTS pokes ( foo INT,bar STRING ) ROW FORMAT DELIMITED FIELDS TERMINATED BY "\t" LINES TERMINATED BY "\n" [ip-10-197-10-4.us-west-1.compute.internal:21000] > show tables; Query: show tables Query finished, fetching results ... +-------+ | name | +-------+ | pokes | +-------+ Returned 1 row(s) in 0.00s [ip-10-197-10-4.us-west-1.compute.internal:21000] > SELECT * from pokes; Query: select * from pokes Query finished, fetching results ... +-----+-----------------+ | foo | bar | +-----+-----------------+ | 1 | www.baidu.com | | 2 | www.google.com | | 3 | www.sina.com.cn | | 4 | www.163.com | | 5 | heylinx.com | +-----+-----------------+ Returned 5 row(s) in 0.28s [ip-10-197-10-4.us-west-1.compute.internal:21000] > SELECT COUNT (*) from pokes; Query: select COUNT (*) from pokes Query finished, fetching results ... +----------+ | count(*) | +----------+ | 5 | +----------+ Returned 1 row(s) in 0.34s

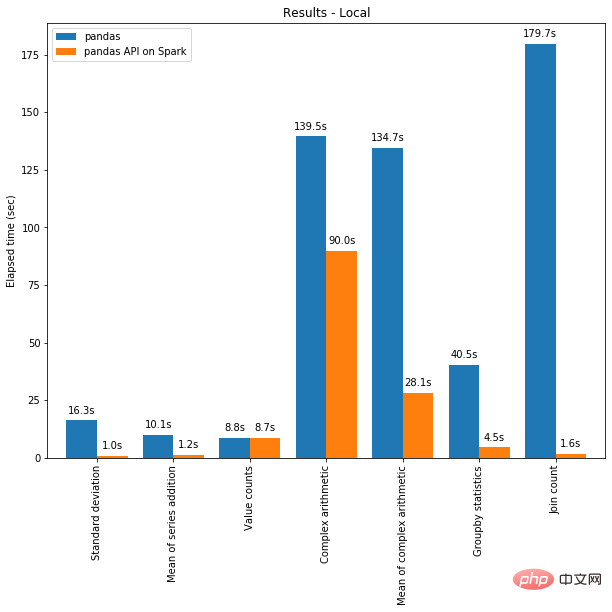

通过两个COUNT的结果来看,Hive使用了 28.195 seconds 而 Impala仅使用了0.34s,由此可以看出Impala的性能确实要优于Hive。

原文地址:伪分布式安装部署CDH4.2.1与Impala[原创实践], 感谢原作者分享。

PHP实现开源SeaweedFS分布式文件系统Jun 18, 2023 pm 03:56 PM

PHP实现开源SeaweedFS分布式文件系统Jun 18, 2023 pm 03:56 PM在分布式系统的架构中,文件管理和存储是非常重要的一部分。然而,传统的文件系统在应对大规模的文件存储和管理时遇到了一些问题。为了解决这些问题,SeaweedFS分布式文件系统被开发出来。在本文中,我们将介绍如何使用PHP来实现开源SeaweedFS分布式文件系统。什么是SeaweedFS?SeaweedFS是一个开源的分布式文件系统,它用于解决大规模文件存储和

Pandas 与 PySpark 强强联手,功能与速度齐飞!May 01, 2023 pm 09:19 PM

Pandas 与 PySpark 强强联手,功能与速度齐飞!May 01, 2023 pm 09:19 PM使用Python做数据处理的数据科学家或数据从业者,对数据科学包pandas并不陌生,也不乏像云朵君一样的pandas重度使用者,项目开始写的第一行代码,大多是importpandasaspd。pandas做数据处理可以说是yyds!而他的缺点也是非常明显,pandas只能单机处理,它不能随数据量线性伸缩。例如,如果pandas试图读取的数据集大于一台机器的可用内存,则会因内存不足而失败。另外pandas在处理大型数据方面非常慢,虽然有像Dask或Vaex等其他库来优化提升数

PHP中的分布式数据中心May 23, 2023 pm 11:40 PM

PHP中的分布式数据中心May 23, 2023 pm 11:40 PM随着互联网的快速发展,网站的访问量也在不断增长。为了满足这一需求,我们需要构建高可用性的系统。分布式数据中心就是这样一个系统,它将各个数据中心的负载分散到不同的服务器上,增加系统的稳定性和可扩展性。在PHP开发中,我们也可以通过一些技术实现分布式数据中心。分布式缓存分布式缓存是互联网分布式应用中最常用的技术之一。它将数据缓存在多个节点上,提高数据的访问速度和

分布式系统必须知道的一个共识算法:RaftApr 07, 2023 pm 05:54 PM

分布式系统必须知道的一个共识算法:RaftApr 07, 2023 pm 05:54 PM一、Raft 概述Raft 算法是分布式系统开发首选的共识算法。比如现在流行 Etcd、Consul。如果掌握了这个算法,就可以较容易地处理绝大部分场景的容错和一致性需求。比如分布式配置系统、分布式 NoSQL 存储等等,轻松突破系统的单机限制。Raft 算法是通过一切以领导者为准的方式,实现一系列值的共识和各节点日志的一致。二、Raft 角色2.1 角色跟随者(Follower):普通群众,默默接收和来自领导者的消息,当领导者心跳信息超时的

使用Redis实现分布式计数器May 11, 2023 am 08:06 AM

使用Redis实现分布式计数器May 11, 2023 am 08:06 AM什么是分布式计数器?在分布式系统中,多个节点之间需要对共同的状态进行更新和读取,而计数器是其中一种应用最广泛的状态之一。通俗地讲,计数器就是一个变量,每次被访问时其值就会加1或减1,用于跟踪某个系统进展的指标。而分布式计数器则指的是在分布式环境下对计数器进行操作和管理。为什么要使用Redis实现分布式计数器?随着分布式计算的普及,分布式系统中的许多细节问题也

Redis实现分布式配置管理的方法与应用实例May 11, 2023 pm 04:22 PM

Redis实现分布式配置管理的方法与应用实例May 11, 2023 pm 04:22 PMRedis实现分布式配置管理的方法与应用实例随着业务的发展,配置管理对于一个系统而言变得越来越重要。一些通用的应用配置(如数据库连接信息,缓存配置等),以及一些需要动态控制的开关配置,都需要进行统一管理和更新。在传统架构中,通常是通过在每台服务器上通过单独的配置文件进行管理,但这种方式会导致配置文件的管理和同步变得十分复杂。因此,在分布式架构下,采用一个可靠

Redis实现分布式对象存储的方法与应用实例May 10, 2023 pm 08:48 PM

Redis实现分布式对象存储的方法与应用实例May 10, 2023 pm 08:48 PMRedis实现分布式对象存储的方法与应用实例随着互联网的快速发展和数据量的快速增长,传统的单机存储已经无法满足业务的需求,因此分布式存储成为了当前业界的热门话题。Redis是一个高性能的键值对数据库,它不仅支持丰富的数据结构,而且支持分布式存储,因此具有极高的应用价值。本文将介绍Redis实现分布式对象存储的方法,并结合应用实例进行说明。一、Redis实现分

PHP与数据库分布式的集成May 15, 2023 pm 09:40 PM

PHP与数据库分布式的集成May 15, 2023 pm 09:40 PM随着互联网技术的发展,对于一个网络应用而言,对数据库的操作非常频繁。特别是对于动态网站,甚至有可能出现每秒数百次的数据库请求,当数据库处理能力不能满足需求时,我们可以考虑使用数据库分布式。而分布式数据库的实现离不开与编程语言的集成。PHP作为一门非常流行的编程语言,具有较好的适用性和灵活性,这篇文章将着重介绍PHP与数据库分布式集成的实践。分布式的概念分布式

핫 AI 도구

Undresser.AI Undress

사실적인 누드 사진을 만들기 위한 AI 기반 앱

AI Clothes Remover

사진에서 옷을 제거하는 온라인 AI 도구입니다.

Undress AI Tool

무료로 이미지를 벗다

Clothoff.io

AI 옷 제거제

AI Hentai Generator

AI Hentai를 무료로 생성하십시오.

인기 기사

뜨거운 도구

ZendStudio 13.5.1 맥

강력한 PHP 통합 개발 환경

Eclipse용 SAP NetWeaver 서버 어댑터

Eclipse를 SAP NetWeaver 애플리케이션 서버와 통합합니다.

에디트플러스 중국어 크랙 버전

작은 크기, 구문 강조, 코드 프롬프트 기능을 지원하지 않음

DVWA

DVWA(Damn Vulnerable Web App)는 매우 취약한 PHP/MySQL 웹 애플리케이션입니다. 주요 목표는 보안 전문가가 법적 환경에서 자신의 기술과 도구를 테스트하고, 웹 개발자가 웹 응용 프로그램 보안 프로세스를 더 잘 이해할 수 있도록 돕고, 교사/학생이 교실 환경 웹 응용 프로그램에서 가르치고 배울 수 있도록 돕는 것입니다. 보안. DVWA의 목표는 다양한 난이도의 간단하고 간단한 인터페이스를 통해 가장 일반적인 웹 취약점 중 일부를 연습하는 것입니다. 이 소프트웨어는

Atom Editor Mac 버전 다운로드

가장 인기 있는 오픈 소스 편집기