
Reviewing 170 'self-supervised learning' recommendation algorithms, HKU releases SSL4Rec: the code and database are fully open source!

Recommendation systems are important for addressing information overload because they provide customized recommendations based on users' personal preferences. In recent years, deep learning has greatly advanced recommendation systems and improved their insight into user behavior and preferences.

However, traditional supervised learning methods struggle in practice because of data sparsity, which limits their ability to learn user preferences effectively.

To overcome this problem, self-supervised learning (SSL) has been applied to recommendation. SSL uses the inherent structure of the data to generate supervision signals and does not rely entirely on labeled data.

This allows a recommendation system to extract meaningful information from unlabeled data and make accurate predictions and recommendations even when data is scarce.

Paper: https://arxiv.org/abs/2404.03354

Open-source paper database: https://github.com/HKUDS/Awesome-SSLRec-Papers

Open-source code base: https://github.com/HKUDS/SSLRec

This article reviews self-supervised learning frameworks designed for recommender systems, based on an in-depth analysis of more than 170 related papers. We explore nine application scenarios to build a comprehensive understanding of how SSL can enhance recommender systems.

For each scenario, we discuss the self-supervised learning paradigms in detail, including contrastive learning, generative learning, and adversarial learning, and show how SSL can improve recommendation performance in different situations.

1 Recommender systems

Research on recommender systems covers a variety of tasks in different scenarios, such as collaborative filtering, sequential recommendation, and multi-behavior recommendation. These tasks have different data paradigms and goals. Here, we first give a general definition without going into the variations specific to each recommendation task. A recommender system involves two main sets: the user set, denoted as $\mathcal{U} = \{u_1, \dots, u_I\}$, and the item set, denoted as $\mathcal{V} = \{v_1, \dots, v_J\}$.

An interaction matrix $\mathbf{A} \in \{0, 1\}^{I \times J}$ represents the recorded interactions between users and items: the entry $A_{i,j}$ is 1 if user $u_i$ has interacted with item $v_j$, and 0 otherwise.
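Here is a minimal sketch of building the binary matrix $\mathbf{A}$ from a raw interaction log. The log format (a list of `(user_index, item_index)` pairs) and the helper name `build_interaction_matrix` are assumptions made for illustration. Neither comes from the survey or from the SSLRec code base.

```python
from typing import List, Tuple

def build_interaction_matrix(
    interactions: List[Tuple[int, int]], num_users: int, num_items: int
) -> List[List[int]]:
    """Return a num_users x num_items 0/1 matrix A with A[i][j] = 1
    iff user i interacted with item j at least once."""
    A = [[0] * num_items for _ in range(num_users)]
    for i, j in interactions:
        A[i][j] = 1  # repeated interactions still map to 1
    return A

# Hypothetical log of (user index, item index) pairs, e.g. clicks or purchases.
log = [(0, 1), (0, 2), (1, 0), (2, 2), (0, 1)]
A = build_interaction_matrix(log, num_users=3, num_items=3)
print(A)  # [[0, 1, 1], [1, 0, 0], [0, 0, 1]]
```

At realistic scale, $\mathbf{A}$ is almost always stored as a sparse matrix rather than as nested lists. The dense form is used here only for readability.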

The definition of interaction can be adapted to different contexts and data sets (e.g., watching a movie, clicking on an e-commerce site, or making a purchase).

In addition, different recommendation tasks come with different auxiliary observation data, denoted $\mathbf{X}$. For example, in knowledge graph-enhanced recommendation, $\mathbf{X}$ is a knowledge graph of external item attributes, covering different entity types and the relationships between them.

In social recommendation, $\mathbf{X}$ includes user-level relationships such as friendship. Based on these definitions, a recommendation model optimizes a prediction function $f(\cdot)$ that aims to accurately estimate the preference score between any user $u$ and item $v$:

$$y_{u,v} = f(\mathbf{A}, \mathbf{X}; u, v)$$

The preference score $y_{u,v}$ represents the likelihood that user $u$ interacts with item $v$.

Based on this score, the recommender system can recommend items each user has not yet interacted with, presenting them as a list ranked by estimated preference score. In the survey, we further explore the form $(\mathbf{A}, \mathbf{X})$ takes in different recommendation scenarios and the role self-supervised learning plays in each.
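A common choice for $f(\cdot)$, though by no means the only one, is the inner product of learned user and item embeddings. The sketch below assumes that choice and uses hypothetical two-dimensional embeddings. It shows how a ranked list is produced while excluding items the user has already interacted with.

```python
from typing import Dict, List, Sequence, Set

def score(user_emb: Sequence[float], item_emb: Sequence[float]) -> float:
    """Assumed f: inner product between user and item embeddings."""
    return sum(a * b for a, b in zip(user_emb, item_emb))

def recommend_top_k(
    user_emb: Sequence[float],
    item_embs: Dict[int, Sequence[float]],
    interacted: Set[int],
    k: int,
) -> List[int]:
    """Rank the user's uninteracted items by estimated preference score y_{u,v}."""
    scores = {
        item: score(user_emb, emb)
        for item, emb in item_embs.items()
        if item not in interacted  # never re-recommend items the user has already seen
    }
    # Highest score first; ties broken by the smaller item id for determinism.
    return sorted(scores, key=lambda item: (-scores[item], item))[:k]

user = [1.0, 0.5]
items = {0: [0.9, 0.1], 1: [0.2, 0.8], 2: [1.0, 1.0], 3: [0.0, 0.1]}
print(recommend_top_k(user, items, interacted={2}, k=2))  # [0, 1]
```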

2 Self-supervised learning in recommendation systems

Over the past few years, deep neural networks have performed well in supervised learning and have been widely used in fields including computer vision, natural language processing, and recommender systems. However, because supervised learning relies heavily on labeled data, it struggles with label sparsity, which is also a common problem in recommender systems.

To address this limitation, self-supervised learning has emerged as a promising approach that uses the data itself as the source of labels. Self-supervised learning in recommender systems covers three paradigms: contrastive learning, generative learning, and adversarial learning.

2.1 Contrastive Learning

Contrastive learning is a prominent self-supervised learning method whose main goal is to maximize the consistency between different views augmented from the data. In contrastive learning for recommender systems, the goal is to minimize the following loss function:

$$\mathcal{L}_{cl}\big(E_1 \circ \omega_1(\mathbf{A}, \mathbf{X}),\ E_2 \circ \omega_2(\mathbf{A}, \mathbf{X})\big)$$

$E_* \circ \omega_*$ denotes the contrastive view creation operation, and different contrastive recommendation algorithms create views in different ways. Each view is constructed by a data augmentation process $\omega_*$ (which may, for example, perturb nodes or edges of the graph) followed by an embedding encoding process $E_*$.

Minimizing this loss yields a robust encoding function that maximizes the consistency between views. This cross-view consistency can be achieved through methods such as mutual information maximization or instance discrimination.
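The view-creation pipeline $E_* \circ \omega_*$ can be sketched for a user-item graph under two assumptions. First, $\omega_*$ is random edge dropout. Second, $E_*$ is a hypothetical stand-in for a graph encoder that simply averages the embeddings of each user's remaining neighbours. The per-user cosine similarity between the two views is the kind of cross-view consistency a contrastive loss would push up. No training step is shown.

```python
import math
import random
from typing import Dict, List, Tuple

Edge = Tuple[int, int]  # (user index, item index)

def edge_dropout(edges: List[Edge], p: float, rng: random.Random) -> List[Edge]:
    """Augmentation omega: keep each user-item edge independently with probability 1 - p."""
    return [e for e in edges if rng.random() >= p]

def encode_users(edges: List[Edge], item_emb: Dict[int, List[float]],
                 num_users: int, dim: int) -> List[List[float]]:
    """Hypothetical encoder E: mean of each user's remaining neighbour embeddings (zeros if none)."""
    sums = [[0.0] * dim for _ in range(num_users)]
    counts = [0] * num_users
    for u, v in edges:
        counts[u] += 1
        for d in range(dim):
            sums[u][d] += item_emb[v][d]
    return [[s / c for s in row] if c else row for row, c in zip(sums, counts)]

def cosine(a: List[float], b: List[float]) -> float:
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return 0.0  # a user who lost all edges has no direction to compare
    return sum(x * y for x, y in zip(a, b)) / (na * nb)

rng = random.Random(0)
edges = [(0, 0), (0, 1), (0, 2), (1, 1), (1, 2), (2, 0), (2, 2)]
item_emb = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [0.7, 0.7]}
view1 = encode_users(edge_dropout(edges, 0.3, rng), item_emb, num_users=3, dim=2)
view2 = encode_users(edge_dropout(edges, 0.3, rng), item_emb, num_users=3, dim=2)
# A contrastive loss would train E (and possibly omega) to raise these per-user agreements.
print([round(cosine(a, b), 3) for a, b in zip(view1, view2)])
```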

2.2 Generative Learning

Generative learning aims to learn meaningful representations by understanding the structure and patterns of the data. It optimizes a deep encoder-decoder model that reconstructs missing or corrupted input data.

The encoder $E$ creates a latent representation from the input, and the decoder $D$ reconstructs the original data from the encoder output. The goal is to minimize the difference between the reconstructed data and the original data:

$$\mathcal{L}_{gen}\big(D \circ E \circ \omega(\mathbf{A}, \mathbf{X}),\ (\mathbf{A}, \mathbf{X})\big)$$

Here, $\omega$ denotes a corruption operation such as masking or perturbation, and $D \circ E$ denotes encoding followed by decoding to reconstruct the output. Recent research has also introduced decoder-only architectures that reconstruct data efficiently without a separate encoder. This approach uses a single model (e.g., a Transformer) for reconstruction and is typically applied in generative sequential recommendation. The form of the reconstruction loss depends on the data type: mean squared error for continuous data and cross-entropy loss for categorical data.
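The masking operation $\omega$ and the two loss choices mentioned above can be sketched as follows. Several details are assumptions for illustration: the `None` mask placeholder, the example ratings, and the hypothetical decoder output `recon`. In a real model, `recon` would come from $D \circ E$. The loss is computed only on the masked positions, which is a common setup for masked autoencoding.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

def mask_entries(x: Sequence[float], ratio: float,
                 rng: random.Random) -> Tuple[List[Optional[float]], List[int]]:
    """omega: hide a random subset of positions (at least one), marking them with None."""
    n_mask = max(1, int(len(x) * ratio))
    positions = sorted(rng.sample(range(len(x)), n_mask))
    hidden = set(positions)
    corrupted = [None if i in hidden else v for i, v in enumerate(x)]
    return corrupted, positions

def mse(recon: Sequence[float], target: Sequence[float], positions: Sequence[int]) -> float:
    """Mean squared error over the masked positions (continuous data)."""
    return sum((recon[i] - target[i]) ** 2 for i in positions) / len(positions)

def cross_entropy(logits: Sequence[float], target_class: int) -> float:
    """Softmax cross-entropy for one categorical target, computed stably via log-sum-exp."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    return log_z - logits[target_class]

ratings = [4.0, 3.0, 5.0, 1.0]
corrupted, masked = mask_entries(ratings, ratio=0.5, rng=random.Random(0))
recon = [3.5, 3.0, 4.5, 1.5]  # hypothetical decoder output for the corrupted input
print(corrupted, masked, mse(recon, ratings, masked))
print(cross_entropy([2.0, 0.5, 0.1], target_class=0))
```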

2.3 Adversarial Learning

Adversarial learning is a training method in which a generator $G(\cdot)$ produces outputs and a discriminator $\Omega(\cdot)$ determines whether a given sample is real or generated. Adversarial learning differs from generative learning by including this discriminator. Its competitive interaction with the generator pushes the generator to produce outputs good enough to fool the discriminator.

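Therefore, the learning objective of adversarial learning can be defined as follows:

$$\min_{G}\ \max_{\Omega}\ \mathbb{E}_{x}\big[\log \Omega(x)\big] + \mathbb{E}_{\hat{x}}\big[\log\big(1 - \Omega(\hat{x})\big)\big]$$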

Here, the variable $x$ represents a real sample drawn from the underlying data distribution, while $\hat{x}$ represents a synthetic sample produced by the generator $G(\cdot)$. During training, both the generator and the discriminator improve through their competitive interaction. Ultimately, the generator learns to produce high-quality outputs that benefit downstream tasks.
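Here is a minimal sketch of evaluating the objective from discriminator outputs. `d_real` holds hypothetical probabilities $\Omega(x)$ for real samples, and `d_fake` holds $\Omega(\hat{x})$ for generated ones. The discriminator ascends this value and the generator descends it, which is the competitive game described above. The small `eps` that guards against `log(0)` is an implementation assumption.

```python
import math
from typing import Sequence

def adversarial_value(d_real: Sequence[float], d_fake: Sequence[float],
                      eps: float = 1e-12) -> float:
    """E_x[log Omega(x)] + E_xhat[log(1 - Omega(xhat))], estimated by sample means."""
    real_term = sum(math.log(p + eps) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p + eps) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def discriminator_loss(d_real: Sequence[float], d_fake: Sequence[float]) -> float:
    """Omega maximizes the value, i.e. minimizes its negation."""
    return -adversarial_value(d_real, d_fake)

def generator_loss(d_fake: Sequence[float], eps: float = 1e-12) -> float:
    """G minimizes the only term it influences: E[log(1 - Omega(G(.)))]."""
    return sum(math.log(1.0 - p + eps) for p in d_fake) / len(d_fake)

# A discriminator that cannot tell real from fake outputs 0.5 everywhere: value is about -log 4.
print(adversarial_value([0.5, 0.5], [0.5, 0.5]))
```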

3 Taxonomy

In this section, we propose a comprehensive taxonomy of self-supervised learning for recommender systems. As mentioned above, self-supervised learning paradigms fall into three categories: contrastive learning, generative learning, and adversarial learning. Our taxonomy is therefore built on these three categories and provides deeper insight into each.

3.1 Contrastive Learning in Recommender Systems

The basic principle of contrastive learning (CL) is to maximize the consistency between different views. We therefore propose a view-centric taxonomy built on three key components to consider when applying contrastive learning: creating views, pairing views for consistency maximization, and optimizing that consistency.

View Creation. Views are created to emphasize the aspects of the data that the model should focus on. A view can incorporate global collaborative information to improve the recommender's ability to handle global relationships, or it can introduce random noise to make the model more robust.

We treat augmentation of the input data (e.g., graphs, sequences, input features) as data-level view creation, and augmentation of latent features during inference as feature-level view creation. We propose a hierarchical taxonomy that spans view creation techniques from the basic data level up to the neural model level.

- Data-based (data level): In contrastive recommender systems, diverse views are created by augmenting the input data. The augmented data points are then processed by the model. Finally, the output embeddings obtained from the different views are paired and used for contrastive learning. The augmentation methods vary with the recommendation scenario. For example, graph data can be augmented by dropping nodes or edges, while sequences can be augmented by masking, cropping, and replacement.

- Feature-based (feature level): Instead of generating views directly from the data, some methods augment the hidden features encoded during the model's forward pass. These hidden features can be node embeddings from graph neural network layers or token vectors in Transformers. By applying various augmentation techniques multiple times or introducing random perturbations, the resulting model outputs can be treated as different views.

- Model-based (model level): Data-level and feature-level augmentations are non-adaptive because they are non-parametric, so some methods instead use models to generate different views. These views carry specific information determined by the model design. For example, intent-disentangled neural modules can capture user intents, while hypergraph modules can capture global relationships.
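The data-level sequence augmentations and the feature-level perturbation listed above can be sketched in a few lines. Several details are assumptions for illustration: the reserved mask id `0`, the augmentation ratios, and drawing replacement items uniformly from a candidate list. Published methods often choose replacement items more carefully, for example by item similarity. Model-level views are omitted because they depend on a learned module.

```python
import random
from typing import List, Sequence

MASK_ID = 0  # hypothetical item id reserved for a [mask] token

def crop(seq: Sequence[int], ratio: float, rng: random.Random) -> List[int]:
    """Data level: keep a random contiguous sub-sequence covering about `ratio` of the items."""
    length = max(1, int(len(seq) * ratio))
    start = rng.randint(0, len(seq) - length)
    return list(seq[start:start + length])

def mask(seq: Sequence[int], ratio: float, rng: random.Random) -> List[int]:
    """Data level: replace each item with MASK_ID with probability `ratio`."""
    return [MASK_ID if rng.random() < ratio else item for item in seq]

def replace(seq: Sequence[int], ratio: float, candidates: Sequence[int],
            rng: random.Random) -> List[int]:
    """Data level: swap each item for a random candidate with probability `ratio`."""
    return [rng.choice(candidates) if rng.random() < ratio else item for item in seq]

def perturb(hidden: Sequence[float], eps: float, rng: random.Random) -> List[float]:
    """Feature level: add bounded uniform noise to a hidden vector."""
    return [h + rng.uniform(-eps, eps) for h in hidden]

rng = random.Random(42)
seq = [5, 8, 13, 21, 34]
view_a, view_b = crop(seq, 0.6, rng), mask(seq, 0.3, rng)
print(view_a, view_b, replace(seq, 0.3, [2, 3, 7], rng), perturb([0.1, -0.4], 0.05, rng))
```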

Pair Sampling. The view creation process generates at least two different views for each sample in the data. The core of contrastive learning is to maximize the alignment of certain views (i.e., pull them closer) while pushing other views apart.

To do this, the key is to identify the positive pairs that should be pulled closer and the other views that form negative pairs. This step is called pair sampling, and there are two main pair sampling methods:

- Natural Sampling: The most common form of pair sampling is direct rather than heuristic, which we call natural sampling. Positive pairs are formed from different views generated from the same data sample, while negative pairs are formed from views of different data samples. When a central view exists, such as a global view derived from the entire graph, local-global relationships can also naturally form positive pairs. This method is used in most contrastive recommender systems.

- Score-based Sampling: In score-based sampling, a module computes scores for sample pairs to decide which pairs are positive and which are negative. For example, the distance between two views can determine positive and negative pairs. Alternatively, clustering can be applied to the views, so that positive pairs fall within the same cluster and negative pairs span different clusters. For an anchor view, once its positive pairs are determined, the remaining views are naturally treated as negatives and paired with the anchor to form negative pairs, which are pushed apart.
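Both sampling strategies can be sketched over two lists of view embeddings indexed by sample. Natural sampling needs only the indices. The score-based variant shown here uses a cosine-similarity threshold, which is one assumed instance of the distance-based scoring described above. Clustering would be another. The threshold value and the toy vectors are illustrative.

```python
import math
from typing import List, Sequence, Tuple

def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    if na == 0.0 or nb == 0.0:
        return 0.0
    return sum(x * y for x, y in zip(a, b)) / (na * nb)

def natural_pairs(n: int) -> List[Tuple[int, int, List[int]]]:
    """Natural sampling: for anchor i in view A, sample i in view B is the positive; all others are negatives."""
    return [(i, i, [j for j in range(n) if j != i]) for i in range(n)]

def score_based_pairs(view_a: List[Sequence[float]], view_b: List[Sequence[float]],
                      threshold: float) -> List[Tuple[int, List[int], List[int]]]:
    """Score-based sampling (assumed cosine threshold): view-B entries scoring >= threshold are positives."""
    pairs = []
    for i, anchor in enumerate(view_a):
        positives = [j for j, other in enumerate(view_b) if cosine(anchor, other) >= threshold]
        negatives = [j for j in range(len(view_b)) if j not in positives]  # the rest are pushed apart
        pairs.append((i, positives, negatives))
    return pairs

print(natural_pairs(3))  # [(0, 0, [1, 2]), (1, 1, [0, 2]), (2, 2, [0, 1])]
view_a = [[1.0, 0.0], [0.0, 1.0]]
view_b = [[1.0, 0.1], [0.1, 1.0], [1.0, 0.0]]
print(score_based_pairs(view_a, view_b, threshold=0.9))  # [(0, [0, 2], [1]), (1, [1], [0, 2])]
```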

Contrastive Objective. The learning objective in contrastive learning is to maximize the mutual information between positive pairs, which in turn improves the learned recommendation model. Because mutual information cannot be computed directly, a tractable lower bound is usually used as the training objective. Other methods instead use explicit objectives that directly pull positive pairs closer together.

- InfoNCE-based: InfoNCE is a variant of noise contrastive estimation. Optimizing it pulls positive pairs closer and pushes negative pairs apart.

- JS-based: Instead of using InfoNCE to estimate mutual information, the lower bound can also be estimated with the Jensen-Shannon divergence. The resulting learning objective is similar to combining InfoNCE with a standard binary cross-entropy loss applied to positive and negative pairs.

- Explicit Objective: Both InfoNCE-based and JS-based objectives maximize an estimated lower bound of mutual information, an approach with theoretical grounding, in order to maximize the mutual information itself. Explicit objectives instead align positive pairs directly, for example by minimizing the mean squared error or maximizing the cosine similarity within a pair.
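The three families of objectives can be compared on toy embeddings. This is a minimal sketch, not the formulation of any specific paper, and it rests on several assumptions. Similarities are cosine. The temperature `tau=0.2` is an assumed value. The JS-based objective is written in its common binary cross-entropy (softplus) form. The explicit objective uses $1 - \cos$.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]

def cosine(a: Vec, b: Vec) -> float:
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return sum(x * y for x, y in zip(a, b)) / (na * nb)

def info_nce(z1: List[Vec], z2: List[Vec], tau: float = 0.2) -> float:
    """Mean InfoNCE: for anchor z1[i], z2[i] is the positive and every z2[j], j != i, is a negative."""
    total = 0.0
    for i, anchor in enumerate(z1):
        logits = [cosine(anchor, other) / tau for other in z2]
        m = max(logits)
        log_sum_exp = m + math.log(sum(math.exp(l - m) for l in logits))
        total += log_sum_exp - logits[i]  # -log softmax probability of the positive
    return total / len(z1)

def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def js_bce(pos_scores: Sequence[float], neg_scores: Sequence[float]) -> float:
    """JS-style objective in BCE form: -log sigmoid(s) for positives, -log(1 - sigmoid(s)) for negatives."""
    pos = sum(softplus(-s) for s in pos_scores) / len(pos_scores)
    neg = sum(softplus(s) for s in neg_scores) / len(neg_scores)
    return pos + neg

def explicit_cosine(z1: List[Vec], z2: List[Vec]) -> float:
    """Explicit objective: 1 - cosine similarity, averaged over positive pairs."""
    return sum(1.0 - cosine(a, b) for a, b in zip(z1, z2)) / len(z1)

z1 = [[1.0, 0.0], [0.0, 1.0]]
aligned, swapped = [[0.9, 0.1], [0.1, 0.9]], [[0.1, 0.9], [0.9, 0.1]]
print(info_nce(z1, aligned), info_nce(z1, swapped))
print(js_bce([2.0], [-2.0]), explicit_cosine(z1, aligned))
```

All three losses decrease as positive pairs become more similar. Only InfoNCE and the JS-based form also use negatives explicitly.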

3.2 Generative Learning in Recommender Systems

In generative self-supervised learning, the main goal is to maximize the likelihood of the real data distribution. This lets the model learn meaningful representations that capture the underlying structure and patterns in the data, which can then be used in downstream tasks. Our taxonomy distinguishes generative recommendation methods along two aspects: the generative learning paradigm and the generation target.

Generative Learning Paradigm. In the context of recommendation, self-supervised methods employing generative learning can be classified into three paradigms:

- Masked Autoencoding: In a masked autoencoder, the learning process follows the mask-reconstruction method, where the model reconstructs the complete data from partial observations.

- Variational Autoencoding: The variational autoencoder is another generative method. It maximizes a lower bound on the likelihood and has theoretical guarantees. It typically maps input data onto latent factors that follow a standard Gaussian distribution, then reconstructs the input from sampled latent factors.

- Denoising Diffusion: Denoising diffusion is a generative model that creates new data samples by reversing a noising process. In the forward process, Gaussian noise is added to the original data over multiple steps, producing a series of increasingly noisy versions. In the reverse process, the model learns to remove the noise step by step, gradually recovering the original data.
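In the forward process of denoising diffusion, the noisy version at step $t$ can be sampled in closed form as $x_t = \sqrt{\bar{\alpha}_t}\,x_0 + \sqrt{1 - \bar{\alpha}_t}\,\epsilon$, with $\bar{\alpha}_t = \prod_{s \le t}(1 - \beta_s)$ and $\epsilon \sim \mathcal{N}(0, I)$. The sketch below makes two illustrative assumptions: it uses the linear $\beta$ schedule popularized by DDPM, and it treats a user's interaction vector as $x_0$. The learned reverse (denoising) model is not shown.

```python
import math
import random
from typing import List, Sequence

def linear_betas(T: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Assumed DDPM-style linear noise schedule beta_1..beta_T (requires T >= 2)."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]

def cumulative_alphas(betas: Sequence[float]) -> List[float]:
    """alpha_bar_t = prod_{s <= t} (1 - beta_s); decreases towards 0 as t grows."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def q_sample(x0: Sequence[float], t: int, alpha_bar: Sequence[float],
             rng: random.Random) -> List[float]:
    """Forward process: draw x_t ~ N(sqrt(alpha_bar_t) x0, (1 - alpha_bar_t) I) in one shot."""
    a = alpha_bar[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0) for x in x0]

alpha_bar = cumulative_alphas(linear_betas(1000))
x0 = [1.0, 0.0, 1.0, 0.0, 0.0]  # e.g. a user's binary interaction vector (illustrative)
rng = random.Random(0)
for t in (0, 100, 999):
    print(t, [round(v, 2) for v in q_sample(x0, t, alpha_bar, rng)])
```

By the last step, $\bar{\alpha}_t$ is close to zero, so $x_t$ is almost pure Gaussian noise. This is the starting point the reverse process learns to denoise from.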

Generation Target. Another design question in generative learning is which part of the data serves as the generation label, since this determines whether generation yields meaningful auxiliary self-supervised signals. Generation targets vary across methods and recommendation scenarios. For example, in sequential recommendation the generation target can be the items in a sequence, with the goal of modeling the relationships between items. In interaction graph recommendation, the targets can be nodes or edges in the graph, with the goal of capturing high-level topological correlations.
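For sequences, two common generation targets can be constructed as follows: next-item targets, where each item is predicted from its prefix, and masked-item (cloze) targets. The reserved mask id `0` and the ignore label `-1` are hypothetical conventions. Graph-based methods would analogously hold out nodes or edges as targets.

```python
from typing import List, Sequence, Set, Tuple

MASK_ID = 0  # hypothetical id reserved for the [mask] token
IGNORE = -1  # hypothetical label meaning "no loss at this position"

def next_item_targets(seq: Sequence[int]) -> Tuple[List[int], List[int]]:
    """Autoregressive target: the label at step t is the item at step t + 1."""
    return list(seq[:-1]), list(seq[1:])

def cloze_targets(seq: Sequence[int], positions: Set[int]) -> Tuple[List[int], List[int]]:
    """Masked-item target: hide the chosen positions and ask the model to regenerate them."""
    inputs = [MASK_ID if i in positions else item for i, item in enumerate(seq)]
    labels = [item if i in positions else IGNORE for i, item in enumerate(seq)]
    return inputs, labels

history = [3, 7, 9, 4]
print(next_item_targets(history))      # ([3, 7, 9], [7, 9, 4])
print(cloze_targets(history, {1, 3}))  # ([3, 0, 9, 0], [-1, 7, -1, 4])
```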

3.3 Adversarial Learning in Recommender Systems

In adversarial learning for recommender systems, the discriminator plays a crucial role in distinguishing generated fake samples from real ones. As with generative learning, our taxonomy covers adversarial recommendation methods from two perspectives: the learning paradigm and the discrimination target.

Adversarial Learning Paradigm. In recommender systems, adversarial learning takes two different forms, depending on whether the discriminator's loss can be backpropagated to the generator in a differentiable manner.

- Differentiable Adversarial Learning (Differentiable AL): The first paradigm involves objects represented in a continuous space, so the discriminator's gradient can be backpropagated naturally to optimize the generator.

- Non-Differentiable Adversarial Learning (Non-Differentiable AL): The second paradigm discriminates the outputs of the recommender system, in particular the recommended items. Because these recommendation results are discrete, backpropagation becomes difficult: the discriminator's gradient cannot be propagated directly to the generator. To solve this, reinforcement learning and policy gradients are introduced. The generator acts as an agent that interacts with the environment by predicting items based on previous interactions. The discriminator acts as a reward function that provides a reward signal to guide the generator's learning. The discriminator's reward is designed to emphasize different factors that affect recommendation quality, and it is optimized to assign higher rewards to real samples than to generated ones, guiding the generator toward high-quality recommendations.
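The policy-gradient workaround for the non-differentiable case can be sketched with REINFORCE on a softmax policy over items. Everything concrete here is an assumption. The generator is just a vector of item logits, and `reward_fn` is a hypothetical fixed discriminator that scores item 2 as the most realistic. Because the sampled item is discrete, no gradient flows through the reward. Instead, the update scales $\nabla \log \pi(\text{item})$ by the reward.

```python
import math
import random
from typing import Callable, List, Sequence

def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def reinforce_step(logits: Sequence[float], reward_fn: Callable[[int], float], lr: float,
                   rng: random.Random, baseline: float = 0.0) -> List[float]:
    """One REINFORCE update: sample an item, score it, raise its log-probability in proportion to the reward."""
    probs = softmax(logits)
    item = rng.choices(range(len(logits)), weights=probs, k=1)[0]  # discrete, non-differentiable action
    advantage = reward_fn(item) - baseline
    # Gradient of log softmax(logits)[item] w.r.t. logit k is 1[k == item] - probs[k].
    return [l + lr * advantage * ((1.0 if k == item else 0.0) - p)
            for k, (l, p) in enumerate(zip(logits, probs))]

def reward_fn(item: int) -> float:
    """Hypothetical discriminator reward: item 2 looks 'real', the others do not."""
    return 1.0 if item == 2 else 0.1

rng = random.Random(0)
logits = [0.0, 0.0, 0.0, 0.0]
for _ in range(500):
    logits = reinforce_step(logits, reward_fn, lr=0.5, rng=rng)
print([round(p, 3) for p in softmax(logits)])
```

In practice, a baseline (for example, a running average of rewards) is usually subtracted to reduce variance. The `baseline` parameter leaves room for this.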

Discrimination Target. Depending on the recommendation algorithm, the generator produces different outputs, which are then fed to the discriminator. This process aims to strengthen the generator's ability to produce high-quality content that is closer to reality. The specific discrimination target is designed according to the recommendation task.

3.4 Diverse recommendation scenarios

In this survey, we discuss in depth how self-supervised learning methods are designed in nine different recommendation scenarios, listed below (see the paper for details):

- General Collaborative Filtering - This is the most basic form of recommendation system, which mainly relies on interaction data between users and items to generate personalized recommendations.

- Sequential Recommendation - Considers the temporal order of a user's interactions with items, with the goal of predicting the item the user is likely to interact with next.

- Social Recommendation - Combines user relationship information from social networks to provide more personalized recommendations.

- Knowledge-aware Recommendation - Uses structured knowledge such as knowledge graphs to enhance recommendation performance.

- Cross-domain Recommendation - Applies user preferences learned in one domain to another domain to improve recommendation results.

- Group Recommendation - Provides recommendations to groups with common characteristics or interests rather than to individual users.

- Bundle Recommendation - Recommends a set of items as a whole, often for promotions or package deals.

- Multi-behavior Recommendation - Considers multiple types of user interaction with items, such as browsing, purchasing, and rating.

- Multi-modal Recommendation - Combines multimodal information about items, such as text, images, and audio, to provide richer recommendations.

This survey provides a comprehensive review of the application of self-supervised learning (SSL) in recommender systems, analyzing more than 170 papers. We propose a self-supervised learning taxonomy covering nine recommendation scenarios, discuss the three SSL paradigms of contrastive, generative, and adversarial learning in detail, and outline future research directions.

We emphasize the importance of SSL for handling data sparsity and improving recommender performance. We also point out potential research directions, including integrating large language models into recommender systems, adapting to dynamic recommendation environments, and establishing a theoretical foundation for SSL paradigms. We hope this survey provides a valuable resource for researchers, inspires new research ideas, and promotes the further development of recommender systems.

The above is the detailed content of Reviewing 170 'self-supervised learning' recommendation algorithms, HKU releases SSL4Rec: the code and database are fully open source!. For more information, please follow other related articles on the PHP Chinese website!

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AM

ai合并图层的快捷键是什么Jan 07, 2021 am 10:59 AMai合并图层的快捷键是“Ctrl+Shift+E”,它的作用是把目前所有处在显示状态的图层合并,在隐藏状态的图层则不作变动。也可以选中要合并的图层,在菜单栏中依次点击“窗口”-“路径查找器”,点击“合并”按钮。

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AM

ai橡皮擦擦不掉东西怎么办Jan 13, 2021 am 10:23 AMai橡皮擦擦不掉东西是因为AI是矢量图软件,用橡皮擦不能擦位图的,其解决办法就是用蒙板工具以及钢笔勾好路径再建立蒙板即可实现擦掉东西。

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM

谷歌超强AI超算碾压英伟达A100!TPU v4性能提升10倍,细节首次公开Apr 07, 2023 pm 02:54 PM虽然谷歌早在2020年,就在自家的数据中心上部署了当时最强的AI芯片——TPU v4。但直到今年的4月4日,谷歌才首次公布了这台AI超算的技术细节。论文地址:https://arxiv.org/abs/2304.01433相比于TPU v3,TPU v4的性能要高出2.1倍,而在整合4096个芯片之后,超算的性能更是提升了10倍。另外,谷歌还声称,自家芯片要比英伟达A100更快、更节能。与A100对打,速度快1.7倍论文中,谷歌表示,对于规模相当的系统,TPU v4可以提供比英伟达A100强1.

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PM

ai可以转成psd格式吗Feb 22, 2023 pm 05:56 PMai可以转成psd格式。转换方法:1、打开Adobe Illustrator软件,依次点击顶部菜单栏的“文件”-“打开”,选择所需的ai文件;2、点击右侧功能面板中的“图层”,点击三杠图标,在弹出的选项中选择“释放到图层(顺序)”;3、依次点击顶部菜单栏的“文件”-“导出”-“导出为”;4、在弹出的“导出”对话框中,将“保存类型”设置为“PSD格式”,点击“导出”即可;

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PM

ai顶部属性栏不见了怎么办Feb 22, 2023 pm 05:27 PMai顶部属性栏不见了的解决办法:1、开启Ai新建画布,进入绘图页面;2、在Ai顶部菜单栏中点击“窗口”;3、在系统弹出的窗口菜单页面中点击“控制”,然后开启“控制”窗口即可显示出属性栏。

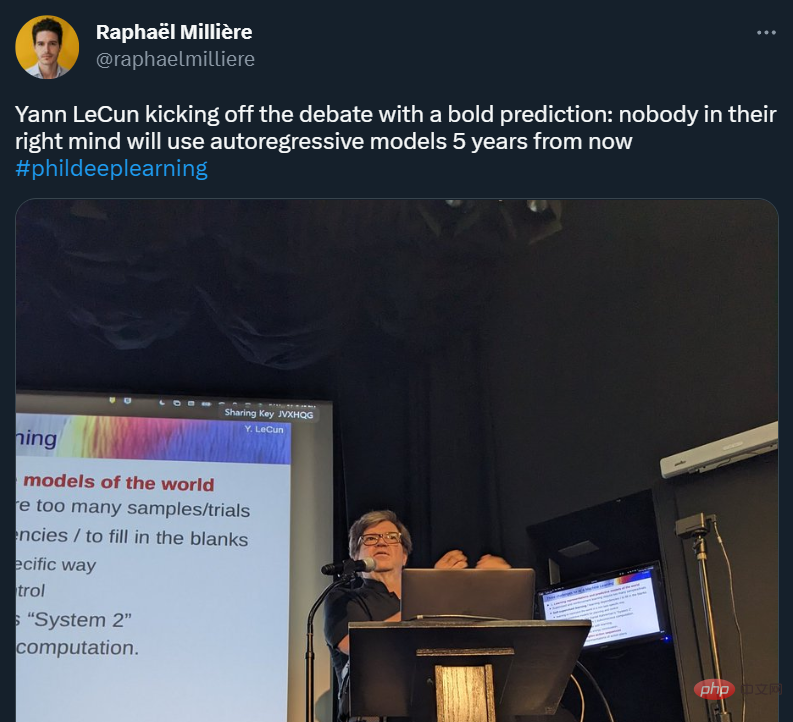

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AM

GPT-4的研究路径没有前途?Yann LeCun给自回归判了死刑Apr 04, 2023 am 11:55 AMYann LeCun 这个观点的确有些大胆。 「从现在起 5 年内,没有哪个头脑正常的人会使用自回归模型。」最近,图灵奖得主 Yann LeCun 给一场辩论做了个特别的开场。而他口中的自回归,正是当前爆红的 GPT 家族模型所依赖的学习范式。当然,被 Yann LeCun 指出问题的不只是自回归模型。在他看来,当前整个的机器学习领域都面临巨大挑战。这场辩论的主题为「Do large language models need sensory grounding for meaning and u

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM

强化学习再登Nature封面,自动驾驶安全验证新范式大幅减少测试里程Mar 31, 2023 pm 10:38 PM引入密集强化学习,用 AI 验证 AI。 自动驾驶汽车 (AV) 技术的快速发展,使得我们正处于交通革命的风口浪尖,其规模是自一个世纪前汽车问世以来从未见过的。自动驾驶技术具有显着提高交通安全性、机动性和可持续性的潜力,因此引起了工业界、政府机构、专业组织和学术机构的共同关注。过去 20 年里,自动驾驶汽车的发展取得了长足的进步,尤其是随着深度学习的出现更是如此。到 2015 年,开始有公司宣布他们将在 2020 之前量产 AV。不过到目前为止,并且没有 level 4 级别的 AV 可以在市场

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AM

ai移动不了东西了怎么办Mar 07, 2023 am 10:03 AMai移动不了东西的解决办法:1、打开ai软件,打开空白文档;2、选择矩形工具,在文档中绘制矩形;3、点击选择工具,移动文档中的矩形;4、点击图层按钮,弹出图层面板对话框,解锁图层;5、点击选择工具,移动矩形即可。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Dreamweaver CS6

Visual web development tools

WebStorm Mac version

Useful JavaScript development tools

Zend Studio 13.0.1

Powerful PHP integrated development environment

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.