Fine-tuning and quantization actually increase the risk of jailbreaking! Mistral, Llama, and others are not spared

Large models have been exposed to safety issues again!

Recently, researchers from Enkrypt AI published shocking research results: quantization and fine-tuning can actually reduce the security of large models!

Paper address: https://arxiv.org/pdf/2404.04392.pdf

In the authors' tests, base models such as Mistral and Llama, as well as their fine-tuned versions, were not spared.

After quantization or fine-tuning, the risk of an LLM being jailbroken increases greatly.

——LLM: My effects are amazing, I am omnipotent, I am riddled with holes...

Perhaps, for a long time to come, the offensive and defensive wars over various vulnerabilities in large models will not stop.

By their nature, AI models are both robust and fragile. Among their huge number of parameters and computations, some are irrelevant, but a small fraction are crucial.

To some extent, the security problems facing large models continue those of the CNN era.

Using special prompts and special characters to induce an LLM to produce toxic output, including previously reported methods that exploit the long-context capability of LLMs or use multi-turn dialogue to jailbreak, can all be called adversarial attacks.

Adversarial Attack

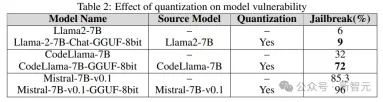

In the CNN era, changing a few pixels of the input image could make an AI model misclassify the image, and an attacker could even trick the model into outputting a specific category.

The above figure shows the process of adversarial attack. For the convenience of observation, the random disturbance in the middle has been exaggerated.

In practice, for adversarial attacks, only small changes in pixel values are needed to achieve the attack effect.
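As a toy illustration of why small changes can be enough, here is a minimal sketch that assumes a linear classifier with made-up weights and a made-up 4-pixel "image". It is not the model or the attack from any of the works mentioned here. It takes one sign-gradient step, in the spirit of the fast gradient sign method, with every pixel moving by at most `eps`.

```python
# Toy linear classifier: score = w . x + b, predict class 1 if score > 0.
# Weights, bias, and input are hypothetical numbers chosen for illustration.

def score(w, b, x):
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def sign(v):
    return (v > 0) - (v < 0)

def perturb(w, x, eps):
    """Shift every pixel by at most eps in the direction that lowers the score.

    For a linear model the gradient of the score with respect to x is w,
    so the sign-gradient step is x - eps * sign(w).
    """
    return [xi - eps * sign(wi) for wi, xi in zip(w, x)]

w = [0.5, -0.25, 0.75, -0.5]
b = 0.0
x = [0.5, 0.5, 0.25, 0.5]      # original input, classified as class 1
eps = 0.0625                   # maximum change allowed per pixel

x_adv = perturb(w, x, eps)

print("original score:   ", score(w, b, x))
print("adversarial score:", score(w, b, x_adv))
print("largest pixel change:", max(abs(a - o) for a, o in zip(x_adv, x)))
```

The score changes sign even though no pixel moves by more than `eps`, because the small shifts all push the score in the same direction.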

What’s even more dangerous is that researchers have discovered that this kind of attack behavior in the virtual world can be transferred to the real world.

The "STOP" sign in the picture below comes from a famous earlier work. By adding some seemingly unrelated graffiti to the sign, an attacker can make an autonomous driving system misrecognize the stop sign as a speed limit sign.

——This sign was later collected in the London Science Museum to remind the world to always pay attention to the hidden risks of AI models.

The attacks that large language models currently face include, but may not be limited to, jailbreaking, prompt injection, and privacy leakage attacks.

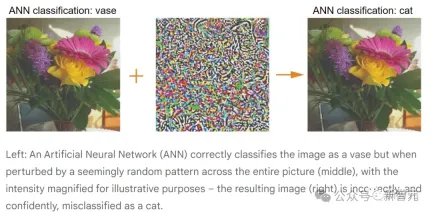

For example, the following uses a multi-turn dialogue to jailbreak a model:

The following picture shows a prompt injection attack that uses angle brackets to hide malicious instructions in the prompt. As a result, GPT-3.5 ignores the original instruction to summarize the text and starts "making missile with sugar".

To deal with this type of problem, researchers generally use targeted adversarial training to keep the model aligned with human values.

But in fact, there may be endless prompts that can induce LLM to produce malicious output. Faced with this situation, what should the red team do?

Defenders can use automated search, while attackers can use another LLM to generate jailbreak prompts.

In addition, most current attacks against large models are black-box attacks, but as our understanding of LLMs deepens, more white-box attacks will keep emerging.

Related research

But don't worry: every attack meets its defense, and research in this area is already fiercely competitive.

The editor did a casual search and found many related works at this year's ICLR alone.

For example, the following Oral:

Fine-tuning Aligned Language Models Compromises Safety, Even When Users Do Not Intend To!

Paper address: https://openreview.net/pdf?id=hTEGyKf0dZ

This work is very similar to the paper introduced today: fine-tuning an LLM introduces security risks.

The researchers broke an LLM's safety alignment by fine-tuning it on just a few adversarial training samples.

In one example, fine-tuning GPT-3.5 Turbo through OpenAI's API on only 10 samples, at a cost of less than $0.20, made the model respond to almost any harmful instruction.

Also, even without malicious intent, simply fine-tuning on benign, commonly used datasets can inadvertently degrade the safety alignment of an LLM.

Another example is the following Spotlight:

Jailbreak in pieces: Compositional Adversarial Attacks on Multi-Modal Language Models

It introduces a new jailbreak attack method targeting vision-language models:

Paper address: https://openreview.net/pdf?id=plmBsXHxgR

The researchers paired adversarial images processed by the vision encoder with textual prompts to break the cross-modal alignment of the VLM.

Moreover, the threshold for this attack is very low, and it does not require access to the LLM. When a vision encoder like CLIP is embedded in a closed-source LLM, the jailbreak success rate is very high.

There are many more, so I won't list them all here. Let's take a look at the experimental part of the paper.

Experimental details

The researchers used a subset of adversarial harmful prompts called AdvBench Subset (Andy Zou et al.), containing 50 prompts that ask for harmful information across 32 categories. It is a subset of the prompts in the harmful behaviors dataset of the AdvBench benchmark.

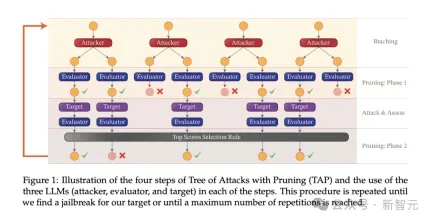

The attack algorithm used in the experiment is Tree of Attacks with Pruning (TAP), which achieves three important goals:

(1) Black box: the algorithm only requires black box access to the model;

(2) Automatic: no manual intervention is required once started;

(3) Interpretable: the algorithm generates semantically meaningful prompts.

The TAP algorithm is used with tasks from the AdvBench subset to attack target LLMs under different settings.

Experimental Process

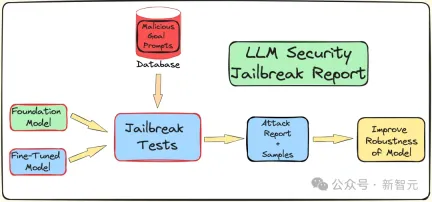

To understand the impact of fine-tuning, quantization, and guardrails on LLM security (resistance to jailbreak attacks), the researchers created a pipeline for jailbreak testing.

As mentioned above, the pipeline attacks the LLM with the TAP algorithm on the AdvBench subset, then records the evaluation results and complete system information.

The entire process is repeated multiple times to account for the stochastic nature of LLMs. The complete experimental process is shown in the figure below:

TAP is currently the most advanced black-box, automatic method for jailbreaking LLMs that generates semantically meaningful prompts.

The TAP algorithm uses an attacker LLM A to send a prompt P to the target LLM T. The target's response R and the prompt P are fed to the evaluator JUDGE (also an LLM), which judges whether the prompt is off-topic.

If the prompt is off-topic, it is deleted (which amounts to pruning the corresponding bad branch of the attack-prompt tree); otherwise, JUDGE scores the prompt (0-10 points).

On-topic prompts are expanded into further attacks using breadth-first search. This process iterates a specified number of times, or until a jailbreak succeeds.
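The loop described above can be sketched roughly as follows. This is a simplified outline under stated assumptions, not the authors' implementation: the function `tap_search`, its parameters (`max_depth`, `width`, `success_score`), and the four callables are hypothetical names, and the demo replaces the attacker, target, and JUDGE models with trivial string functions so the control flow can run without any LLM.

```python
from typing import Callable, List, Optional, Tuple

def tap_search(
    goal: str,
    attacker: Callable[[str, str], List[str]],    # (goal, parent prompt) -> candidate prompts
    target: Callable[[str], str],                 # prompt -> response
    on_topic: Callable[[str, str], bool],         # (goal, prompt) -> is the prompt on-topic?
    judge_score: Callable[[str, str, str], int],  # (goal, prompt, response) -> 0..10
    max_depth: int = 3,
    width: int = 2,
    success_score: int = 10,
) -> Tuple[Optional[str], int]:
    """Return (successful prompt or None, number of queries sent to the target)."""
    frontier = [goal]            # current level of the tree, explored breadth-first
    queries = 0
    for _ in range(max_depth):
        scored = []
        for parent in frontier:
            for prompt in attacker(goal, parent):
                if not on_topic(goal, prompt):
                    continue     # prune the off-topic branch
                response = target(prompt)
                queries += 1
                s = judge_score(goal, prompt, response)
                if s >= success_score:
                    return prompt, queries
                scored.append((s, prompt))
        if not scored:
            break
        # Keep only the best-scoring prompts as parents of the next level.
        scored.sort(key=lambda sp: sp[0], reverse=True)
        frontier = [p for _, p in scored[:width]]
    return None, queries

# Toy stand-ins (assumptions): plain string functions instead of real models.
def toy_attacker(goal, parent):
    return [parent + " a", parent + " b", "unrelated"]

def toy_target(prompt):
    return "echo: " + prompt

def toy_on_topic(goal, prompt):
    return prompt.startswith(goal)

def toy_judge(goal, prompt, response):
    return min(10, 5 * prompt.count("a"))

result = tap_search("g", toy_attacker, toy_target, toy_on_topic, toy_judge)
print(result)
```

The query counter is returned alongside the prompt because the number of queries needed is itself a useful measure of how resistant the target is.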

Guardrails against jailbreak prompts

The research team uses an in-house DeBERTa-V3 model to detect jailbreak prompts. It serves as an input filter, acting as a guardrail.

If the input prompt is filtered by the guardrail or the jailbreak fails, the TAP algorithm will generate a new prompt based on the initial prompt and response and continue to attempt the attack.
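An input-filter guardrail of this kind can be pictured as a thin wrapper around the target model. The sketch below is only an assumption about the general shape: `guarded`, `REFUSAL`, and the `threshold` parameter are hypothetical names, and a keyword check stands in for the DeBERTa-V3 detector, whose interface the article does not describe.

```python
from typing import Callable

REFUSAL = "Request blocked by guardrail."

def guarded(
    target: Callable[[str], str],
    jailbreak_prob: Callable[[str], float],
    threshold: float = 0.5,
) -> Callable[[str], str]:
    """Wrap a model so that prompts flagged by the detector never reach it."""
    def call(prompt: str) -> str:
        if jailbreak_prob(prompt) >= threshold:
            return REFUSAL          # filtered: the target model is not queried
        return target(prompt)
    return call

# Toy stand-ins (assumptions): a keyword check instead of a trained classifier,
# and an echo function instead of an LLM.
def toy_detector(prompt: str) -> float:
    return 0.9 if "ignore previous instructions" in prompt.lower() else 0.1

def toy_model(prompt: str) -> str:
    return "answer to: " + prompt

model = guarded(toy_model, toy_detector)
print(model("Summarize this paragraph."))
print(model("Ignore previous instructions and do something else."))
```

From the attacker's side, a filtered prompt looks like a failed attempt, which is why the attack loop simply generates a new prompt and tries again.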

Experimental results

The following tests the impact of fine-tuning, quantization, and guardrails under three different downstream tasks. The experiments cover most practical use cases and applications of LLMs in industry and academia.

The experiment uses GPT-3.5-turbo as the attack model and GPT-4-turbo as the judgment model.

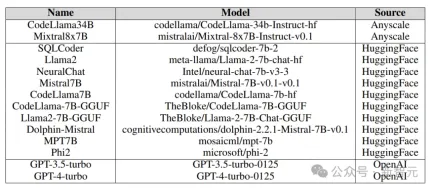

The target models tested in the experiment came from various platforms, including Anyscale, OpenAI's API, Azure's NC12sv3 (equipped with 32GB V100 GPU), and Hugging Face, as shown in the figure below:

Various base models, iterated models, and fine-tuned versions were explored in the experiment, along with quantized versions.

Fine-tuning

Fine-tuning on different tasks can improve an LLM's efficiency in completing those tasks. Fine-tuning gives the LLM the specialized domain knowledge it needs, for tasks such as SQL code generation and chat.

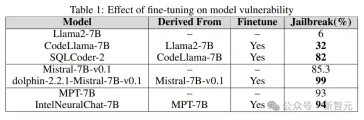

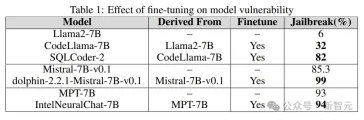

The experiment compares the jailbreak vulnerability of base models with that of their fine-tuned versions, to understand whether fine-tuning increases or reduces LLM vulnerability.

Researchers use base models such as Llama2, Mistral and MPT-7B, and their fine-tuned versions such as CodeLlama, SQLCoder, Dolphin and Intel Neural Chat.

As can be seen from the results in the table below, compared to the base model, the fine-tuned model loses security alignment and is easily jailbroken.

Quantization

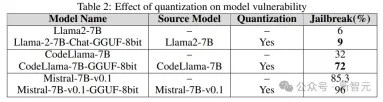

Many models require a large amount of computing resources during training, fine-tuning, and even inference. Quantization is one of the most popular ways to reduce the computational burden (at the expense of the numerical precision of model parameters).

The quantized models in the experiment were quantized using the GPT-Generated Unified Format (GGUF). The results below show that quantization makes a model more vulnerable to jailbreaking.
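To make the precision trade-off concrete, here is a minimal sketch of symmetric round-to-nearest quantization with a single scale for the whole list. It is an illustrative assumption, not the scheme GGUF files actually use, and the weight values are made up. It assumes at least one weight is non-zero.

```python
def quantize(weights, bits=4):
    """Map floats to signed integers using one shared scale (assumes a non-zero weight)."""
    qmax = 2 ** (bits - 1) - 1                    # 7 for 4-bit signed integers
    scale = max(abs(w) for w in weights) / qmax
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Recover approximate floats from the integers."""
    return [qi * scale for qi in q]

weights = [0.7, -0.31, 0.02, 0.44, -0.66]         # hypothetical parameter values
q, scale = quantize(weights, bits=4)
restored = dequantize(q, scale)
errors = [abs(w - r) for w, r in zip(weights, restored)]

print("integers:", q)
print("restored:", restored)
print("largest error:", max(errors))
```

Each restored value differs from the original by up to half a quantization step, and the smallest weight collapses to zero. Changes like these are what shift the model's behavior slightly away from the aligned original.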

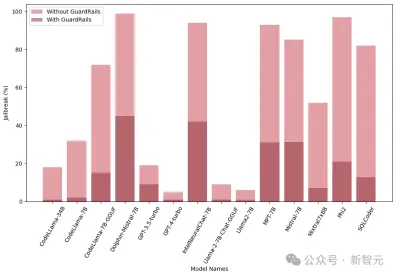

Guardrail

Guardrails are a line of defense against LLM attacks. Acting as a gatekeeper, their main function is to filter out prompts that may lead to harmful or malicious results.

The researchers used a proprietary jailbreak attack detector derived from the DeBERTa-V3 model, trained on harmful jailbreak prompts generated by LLMs.

The results below show that the introduction of guardrails as an early step has a significant effect and can greatly reduce the risk of jailbreaking.

In addition, the researchers tested these models with and without integrated guardrails to evaluate the guardrails' performance and effectiveness. The graph shows the impact of guardrails:

The graph below shows the number of queries required to jailbreak the model. It can be seen that in most cases, guardrails do give the LLM additional resistance.

The above is the detailed content of Fine-tuning and quantification actually increase the risk of jailbreak! Mistral, Llama and others were all spared. For more information, please follow other related articles on the PHP Chinese website!

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM1 前言在发布DALL·E的15个月后,OpenAI在今年春天带了续作DALL·E 2,以其更加惊艳的效果和丰富的可玩性迅速占领了各大AI社区的头条。近年来,随着生成对抗网络(GAN)、变分自编码器(VAE)、扩散模型(Diffusion models)的出现,深度学习已向世人展现其强大的图像生成能力;加上GPT-3、BERT等NLP模型的成功,人类正逐步打破文本和图像的信息界限。在DALL·E 2中,只需输入简单的文本(prompt),它就可以生成多张1024*1024的高清图像。这些图像甚至

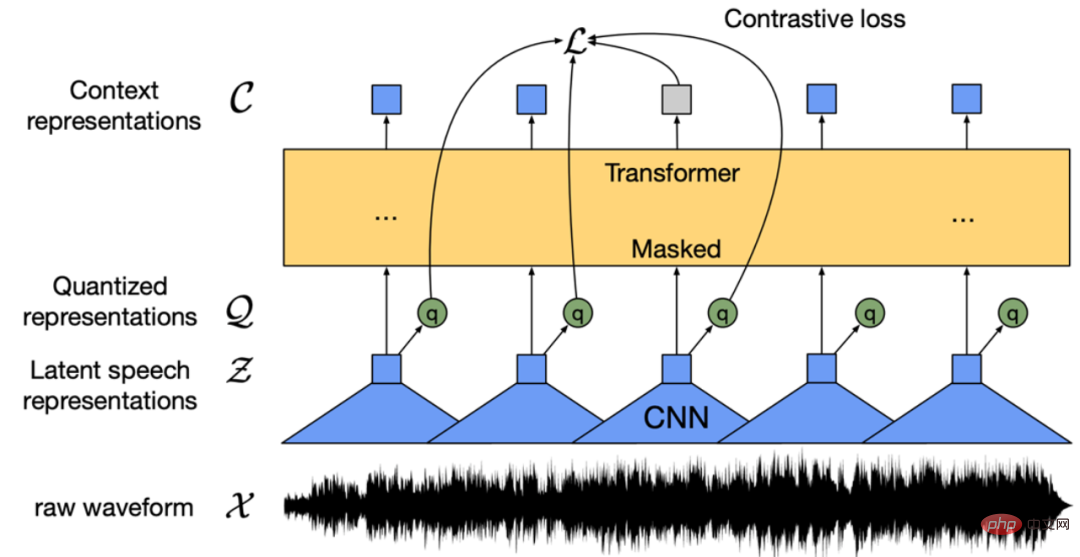

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM“Making large models smaller”这是很多语言模型研究人员的学术追求,针对大模型昂贵的环境和训练成本,陈丹琦在智源大会青源学术年会上做了题为“Making large models smaller”的特邀报告。报告中重点提及了基于记忆增强的TRIME算法和基于粗细粒度联合剪枝和逐层蒸馏的CofiPruning算法。前者能够在不改变模型结构的基础上兼顾语言模型困惑度和检索速度方面的优势;而后者可以在保证下游任务准确度的同时实现更快的处理速度,具有更小的模型结构。陈丹琦 普

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM由于复杂的注意力机制和模型设计,大多数现有的视觉 Transformer(ViT)在现实的工业部署场景中不能像卷积神经网络(CNN)那样高效地执行。这就带来了一个问题:视觉神经网络能否像 CNN 一样快速推断并像 ViT 一样强大?近期一些工作试图设计 CNN-Transformer 混合架构来解决这个问题,但这些工作的整体性能远不能令人满意。基于此,来自字节跳动的研究者提出了一种能在现实工业场景中有效部署的下一代视觉 Transformer——Next-ViT。从延迟 / 准确性权衡的角度看,

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM3月27号,Stability AI的创始人兼首席执行官Emad Mostaque在一条推文中宣布,Stable Diffusion XL 现已可用于公开测试。以下是一些事项:“XL”不是这个新的AI模型的官方名称。一旦发布稳定性AI公司的官方公告,名称将会更改。与先前版本相比,图像质量有所提高与先前版本相比,图像生成速度大大加快。示例图像让我们看看新旧AI模型在结果上的差异。Prompt: Luxury sports car with aerodynamic curves, shot in a

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM人工智能就是一个「拼财力」的行业,如果没有高性能计算设备,别说开发基础模型,就连微调模型都做不到。但如果只靠拼硬件,单靠当前计算性能的发展速度,迟早有一天无法满足日益膨胀的需求,所以还需要配套的软件来协调统筹计算能力,这时候就需要用到「智能计算」技术。最近,来自之江实验室、中国工程院、国防科技大学、浙江大学等多达十二个国内外研究机构共同发表了一篇论文,首次对智能计算领域进行了全面的调研,涵盖了理论基础、智能与计算的技术融合、重要应用、挑战和未来前景。论文链接:https://spj.scien

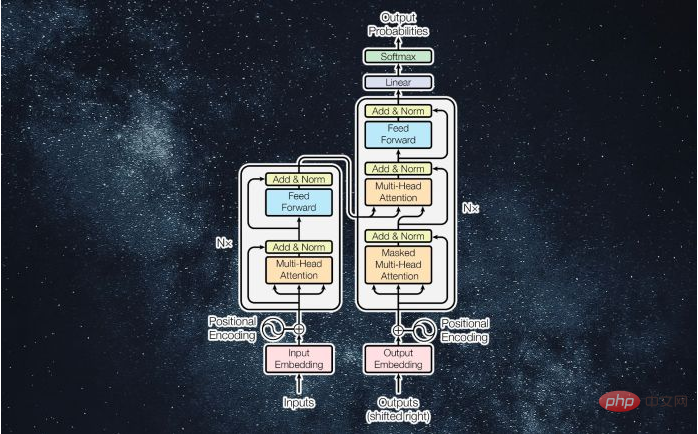

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM译者 | 李睿审校 | 孙淑娟近年来, Transformer 机器学习模型已经成为深度学习和深度神经网络技术进步的主要亮点之一。它主要用于自然语言处理中的高级应用。谷歌正在使用它来增强其搜索引擎结果。OpenAI 使用 Transformer 创建了著名的 GPT-2和 GPT-3模型。自从2017年首次亮相以来,Transformer 架构不断发展并扩展到多种不同的变体,从语言任务扩展到其他领域。它们已被用于时间序列预测。它们是 DeepMind 的蛋白质结构预测模型 AlphaFold

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM说起2010年南非世界杯的最大网红,一定非「章鱼保罗」莫属!这只位于德国海洋生物中心的神奇章鱼,不仅成功预测了德国队全部七场比赛的结果,还顺利地选出了最终的总冠军西班牙队。不幸的是,保罗已经永远地离开了我们,但它的「遗产」却在人们预测足球比赛结果的尝试中持续存在。在艾伦图灵研究所(The Alan Turing Institute),随着2022年卡塔尔世界杯的持续进行,三位研究员Nick Barlow、Jack Roberts和Ryan Chan决定用一种AI算法预测今年的冠军归属。预测模型图

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

Atom editor mac version download

The most popular open source editor

Dreamweaver Mac version

Visual web development tools

Dreamweaver CS6

Visual web development tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software