The latest survey of controllable image generation! Beijing University of Posts and Telecommunications has released a 20-page review of 249 papers, covering the various "conditions" used in text-to-image diffusion.

Diffusion models have reshaped the rapidly developing field of visual generation, and the introduction of text-guided generation marks a major step forward in their capabilities.

However, relying solely on text to control these models cannot fully meet the diverse and complex needs of different applications and scenarios.

Given this shortcoming, many studies aim to control pre-trained text-to-image (T2I) models to support new conditions.

Researchers from Beijing University of Posts and Telecommunications conducted an in-depth review of the controllable generation of T2I diffusion models, outlining the theoretical basis and practical progress in this field. This review covers the latest research results and provides an important reference for the development and application of this field.

Paper: https://arxiv.org/abs/2403.04279

Code: https://github.com/PRIV-Creation/Awesome-Controllable-T2I-Diffusion-Models

Our review begins with a brief introduction to denoising diffusion probabilistic models (DDPMs) and the basics of widely used T2I diffusion models.

We then examine the control mechanisms of diffusion models and show, through theoretical analysis, why introducing new conditions into the denoising process is effective.

In addition, we summarize the research in this field in detail and organize it by condition type into three categories: generation with specific conditions, generation with multiple conditions, and universal controllable generation.

Figure 1 Schematic diagram of controllable generation using T2I diffusion model. On the basis of text conditions, add "identity" conditions to control the output results.

Classification System

Conditional generation with text-to-image diffusion models is a multifaceted and complex field. From the perspective of conditions, we divide this task into three subtasks (see Figure 2).

Figure 2 Classification of controllable generation. From the perspective of conditions, we divide controllable generation methods into three subtasks: generation with specific conditions, generation with multiple conditions, and universal controllable generation.

Most research is devoted to how to generate images under specific conditions, such as image-guided generation and sketch-to-image generation.

To reveal the theory and characteristics of these methods, we further classify them according to their condition types.

1. Generation with specific conditions: methods that introduce a specific type of condition. This includes customized conditions (personalization, e.g., DreamBooth and Textual Inversion) as well as more direct conditions, such as the ControlNet series and physiological-signal-to-image generation.

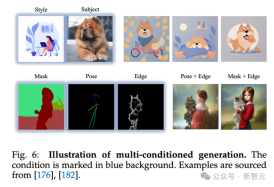

2. Multi-condition generation: methods that generate images using several conditions at once. We subdivide this task from a technical perspective.

3. Universal controllable generation: methods designed to generate images under arbitrary conditions, and even an arbitrary number of them.

How to introduce new conditions into the T2I diffusion model

For details, please refer to the original text of the paper. The mechanisms of these methods are briefly introduced below.

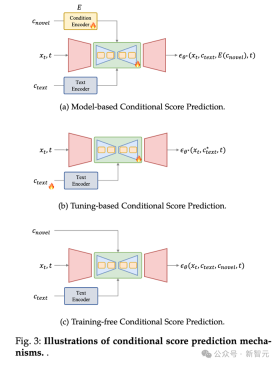

Conditional Score Prediction

In T2I diffusion models, using a trainable model (such as a UNet) to predict the probability score (i.e., the noise) during denoising is a basic and effective approach.

In the condition-based score prediction method, novel conditions are used as inputs to the prediction model to directly predict new scores.

It can be divided into three methods of introducing new conditions:

1. Model-based conditional score prediction: This type of method introduces an additional model that encodes the novel condition, and feeds the encoded features into the UNet (for example, through the cross-attention layers) to predict the score under the novel condition.

2. Conditional score prediction based on fine-tuning: This type of method does not use an explicit condition. Instead, the parameters of the text embedding and denoising network are fine-tuned to learn information about novel conditions, and the fine-tuned weights are used to achieve controllable generation. For example, DreamBooth and Textual Inversion are such practices.

3. Training-free conditional score prediction: This type of method does not require training the model and applies the condition directly during prediction. For example, in the Layout-to-Image task, the attention maps of the cross-attention layers can be modified directly to set the layout of objects.
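The training-free case above can be illustrated with a minimal sketch. It assumes a toy cross-attention map stored as nested Python lists (one row per image location, one column per text token); the function name `edit_attention`, the `boost` multiplier, and the re-normalization step are illustrative assumptions, not the procedure of any specific method in the survey.

```python
def edit_attention(attn, token_idx, region, boost=4.0):
    """Re-weight a cross-attention map so one token dominates a layout region.

    attn:      list of rows, one per image location; each row holds
               attention weights over text tokens and sums to 1.
    token_idx: index of the text token (e.g. the object word) to place.
    region:    set of image-location indices covered by the layout box.
    boost:     hypothetical multiplier applied inside the region.
    """
    edited = []
    for loc, row in enumerate(attn):
        new_row = list(row)
        if loc in region:
            new_row[token_idx] *= boost   # strengthen the token inside the box
        else:
            new_row[token_idx] /= boost   # weaken it everywhere else
        total = sum(new_row)
        edited.append([w / total for w in new_row])  # each row sums to 1 again
    return edited


# Toy map: 4 image locations, 3 text tokens, uniform attention.
attn = [[1 / 3] * 3 for _ in range(4)]
edited = edit_attention(attn, token_idx=1, region={0, 1})
```

No weights are updated here: only the attention map is changed at inference time, which is what makes this family of methods training-free.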

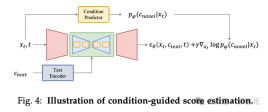

Condition-Guided Score Estimation

Condition-guided score estimation adds conditional guidance during denoising by back-propagating gradients through a condition prediction model (such as the Condition Predictor in the figure above).
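As a rough sketch of this idea, the example below uses the classifier-guidance form of the update, in which the predicted noise is shifted by the gradient of the predictor's log-probability. The Gaussian "condition predictor", the function names, and all numeric values are toy assumptions chosen so that the gradient can be written by hand; a real system would obtain it by back-propagating through a trained network.

```python
import math


def log_prob_grad(x_t, target, sigma=1.0):
    """Gradient of a toy Gaussian predictor log p(c | x_t) with respect to x_t.

    Hypothetical stand-in for back-propagating through a real predictor.
    """
    return [(c - x) / sigma**2 for x, c in zip(x_t, target)]


def guided_noise(eps, x_t, target, alpha_bar_t, scale=1.0):
    """eps_hat = eps - scale * sqrt(1 - alpha_bar_t) * grad log p(c | x_t)."""
    grad = log_prob_grad(x_t, target)
    coeff = scale * math.sqrt(1.0 - alpha_bar_t)
    return [e - coeff * g for e, g in zip(eps, grad)]


def predict_x0(x_t, eps, alpha_bar_t):
    """DDPM estimate of the clean sample from x_t and the predicted noise."""
    return [(x - math.sqrt(1.0 - alpha_bar_t) * e) / math.sqrt(alpha_bar_t)
            for x, e in zip(x_t, eps)]


x_t = [0.0, 0.0]
eps = [0.1, -0.2]        # pretend output of the denoising network
target = [1.0, -1.0]     # toy condition the sample should move toward
alpha_bar_t = 0.5

eps_hat = guided_noise(eps, x_t, target, alpha_bar_t, scale=2.0)
x0_plain = predict_x0(x_t, eps, alpha_bar_t)
x0_guided = predict_x0(x_t, eps_hat, alpha_bar_t)
```

In this toy setup the guided estimate `x0_guided` lies closer to `target` than `x0_plain`, and the denoising network itself is left untouched.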

Generation with specific conditions

1. Personalization: Personalization tasks aim to capture concepts and use them as conditions for controllable generation. These concepts are not easily described in text and need to be extracted from example images. Examples include DreamBooth, Textual Inversion, and LoRA.

2. Spatial Control: Because text struggles to express structural information such as positions and dense labels, controlling text-to-image diffusion with spatial signals (for example, layout, human pose, and human parsing) is an important research area. ControlNet is a representative method.

3. Advanced Text-Conditioned Generation: Although text serves as the basic condition in text-to-image diffusion models, several challenges remain in this area.

First, text-guided synthesis from complex prompts that involve multiple subjects or rich descriptions often suffers from misalignment between the text and the generated image. In addition, these models are mainly trained on English datasets, which leaves them with weak multilingual generation capabilities. To address this limitation, many works have proposed approaches that extend the range of languages these models support.

4. In-Context Generation: In in-context generation, the model is given a pair of task-specific example images together with text guidance, and must understand and perform the demonstrated task on a new query image.

5. Brain-Guided Generation: Brain-guided generation focuses on controlling image creation directly from brain activity, for example from electroencephalogram (EEG) recordings and functional magnetic resonance imaging (fMRI).

6. Sound-Guided Generation: Generate matching images based on sound.

7. Text Rendering: Generate legible text inside images, which is widely useful in applications such as posters, book covers, and memes.

Multi-condition generation

Multi-condition generation aims to generate images under several conditions at once, such as a specific person in a user-defined pose, or an image containing three personalized identities.

In this section, we provide a comprehensive overview of these methods from a technical perspective and classify them into the following categories:

1. Joint Training: Introduce multiple conditions for joint training during the training phase.

2. Continual Learning: Learn multiple conditions in sequence, acquiring new conditions without forgetting the old ones, to achieve multi-condition generation.

3. Weight Fusion: Fuse the parameters obtained by fine-tuning under different conditions, so that the resulting model can generate under multiple conditions.

4. Attention-based Integration: Use attention maps to set where each condition (usually an object) appears in the image, to achieve multi-condition generation.
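To make the weight fusion item above concrete, here is a minimal sketch of one simple way to merge fine-tuned parameters: adding a weighted sum of each model's difference from the base weights. The function `fuse_weights`, the parameter name `attn.to_k`, and the mixing coefficients are hypothetical; the methods covered in the survey may fuse weights quite differently.

```python
def fuse_weights(base, finetuned, coeffs):
    """Add a weighted sum of per-condition weight deltas to the base weights.

    base:      dict mapping parameter name -> list of floats (pre-trained).
    finetuned: list of dicts with the same keys, one per condition.
    coeffs:    one hypothetical mixing coefficient per fine-tuned model.
    """
    fused = {}
    for name, w0 in base.items():
        merged = list(w0)
        for model, c in zip(finetuned, coeffs):
            for i, w in enumerate(model[name]):
                merged[i] += c * (w - w0[i])  # scaled delta for this condition
        fused[name] = merged
    return fused


base = {"attn.to_k": [0.0, 1.0]}
subject_a = {"attn.to_k": [0.2, 1.0]}  # fine-tuned on condition A
subject_b = {"attn.to_k": [0.0, 0.6]}  # fine-tuned on condition B
fused = fuse_weights(base, [subject_a, subject_b], coeffs=[1.0, 0.5])
```

With these toy values the fused parameter keeps all of condition A's change and half of condition B's, which shows how the coefficients trade off the two conditions.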

Generic condition generation

In addition to methods tailored to specific types of conditions, there are also general methods designed to handle arbitrary conditions in image generation.

These methods are broadly classified into two groups based on their theoretical foundations: general conditional score prediction frameworks and general conditional guided score estimation.

1. Universal conditional score prediction framework: These methods build a framework that can encode any given condition and use it to predict the noise at each time step during image synthesis.

This approach provides a universal solution that can be flexibly adapted to a variety of conditions. By directly integrating conditional information into the generative model, this approach allows the image generation process to be dynamically adjusted according to various conditions, making it versatile and applicable to various image synthesis scenarios.

2. Universal condition-guided score estimation: Other methods use condition-guided score estimation to incorporate various conditions into text-to-image diffusion models. The main challenge lies in obtaining condition-specific guidance from the latent variables during denoising.

Applications

Introducing novel conditions is useful in many tasks, including image editing, image completion, image composition, and text/image-to-3D generation.

For example, in image editing, a personalization method can be used to turn the cat in a picture into a cat with a specific identity. For other applications, please refer to the paper.

Summary

This review examines conditional generation with text-to-image diffusion models and shows how novel conditions are incorporated into the text-guided generation process.

First, the authors give readers the necessary background, introducing denoising diffusion probabilistic models, widely used text-to-image diffusion models, and a well-structured taxonomy. They then explain the mechanisms for introducing novel conditions into T2I diffusion models.

Next, the authors summarize previous conditional generation methods and analyze them in terms of theoretical foundations, technical progress, and solution strategies.

In addition, the authors explore practical applications of controllable generation, emphasizing its important role and great potential in the era of AI-generated content.

This survey aims to comprehensively understand the current status of the field of controllable T2I generation, thereby promoting the continued evolution and expansion of this dynamic research field.
