Apple's large model MM1 enters the field: 30 billion parameters, multimodal, MoE architecture, more than half of the authors are Chinese

This year, Apple has visibly increased its emphasis on and investment in generative artificial intelligence (GenAI). At the recent Apple shareholders' meeting, CEO Tim Cook said that the company plans to make significant progress in GenAI this year. In addition, Apple announced that it was abandoning its 10-year car project, and some team members who had been working on the car have begun moving to GenAI work.

Through these initiatives, Apple has shown the outside world its determination to strengthen its GenAI efforts. GenAI technology and products in the multimodal field currently attract a great deal of attention, especially OpenAI's Sora, and Apple naturally hopes to make a breakthrough in this area.

In a co-authored research paper, "MM1: Methods, Analysis & Insights from Multimodal LLM Pre-training", Apple disclosed the results of its research on multimodal pre-training and introduced a family of multimodal LLMs with up to 30B parameters.

Paper address: https://arxiv.org/pdf/2403.09611.pdf

During the study, the team examined in depth the importance of different architectural components and data choices. Through careful ablation of image encoders, vision-language connectors, and various pre-training data, they distilled several important design guidelines. Specifically, the main contributions of this study include the following.

First, the researchers conducted small-scale ablation experiments on model architecture decisions and pre-training data selection, and found several interesting trends. In order of importance, the modeling design aspects rank as follows: image resolution, visual encoder loss and capacity, and visual encoder pre-training data.

Second, the researchers used three types of pre-training data: image captions, interleaved image-text, and text-only data. They found that interleaved and text-only training data matter most for few-shot and text-only performance, while caption data matters most for zero-shot performance. These trends persist after supervised fine-tuning (SFT), indicating that the performance and modeling decisions made during pre-training carry over after fine-tuning.

Finally, the researchers built MM1, a family of multimodal models with up to 30 billion parameters (the others have 3 billion and 7 billion), comprising both dense models and mixture-of-experts (MoE) variants. The family not only achieves SOTA on pre-training metrics but also remains competitive after supervised fine-tuning on a range of established multimodal benchmarks.

The pre-trained MM1 models perform strongly on captioning and question-answering tasks in few-shot settings, outperforming Emu2, Flamingo, and IDEFICS. After supervised fine-tuning, MM1 is also highly competitive on 12 multimodal benchmarks.

Thanks to large-scale multimodal pre-training, MM1 performs well at in-context prediction, multi-image reasoning, and chain-of-thought reasoning. MM1 likewise shows strong few-shot learning capabilities after instruction tuning.

Method Overview: The Secret to Building MM1

Building a high-performance MLLM (multimodal large language model) is a highly empirical endeavor. Although the high-level architecture design and training procedure are clear, the specific implementation choices are not always obvious. In this work, the researchers describe in detail the ablations they ran to build high-performance models. They explored three main axes of design decisions:

- Architecture: The researchers examined different pre-trained image encoders and explored various ways of connecting LLMs to these encoders.

- Data: The researchers considered different types of data and their relative mixing weights.

- Training procedure: The researchers explored how to train the MLLM, including the hyperparameters and which parts of the model are trained at which stage.

Ablation settings

Because training a large MLLM consumes substantial resources, the researchers used a simplified ablation setup. The base configuration for the ablations is as follows:

- Image encoder: a ViT-L/14 model trained with a CLIP loss on DFN-5B and VeCap-300M; image size is 336×336.

- Vision-language connector: C-Abstractor with 144 image tokens.

- Pre-training data: a mix of captioned images (45%), interleaved image-text documents (45%), and text-only (10%) data.

- Language model: a 1.2B transformer decoder-only language model.

To evaluate different design decisions, the researchers used zero-shot and few-shot (4- and 8-shot) performance on a variety of captioning and VQA tasks: COCO Captioning, NoCaps, TextCaps, VQAv2, TextVQA, VizWiz, GQA, and OK-VQA.

Model Architecture Ablation Experiment

The researchers analyzed the components that enable an LLM to process visual data. Specifically, they studied (1) how best to pre-train a visual encoder, and (2) how to connect visual features to the embedding space of the LLM (see Figure 3, left).

- Image encoder pre-training. Here the researchers mainly ablated the importance of image resolution and of the image encoder's pre-training objective. Note that, unlike the other ablations, these experiments used a 2.9B LLM (instead of 1.2B) to ensure sufficient capacity to make use of some of the larger image encoders.

- Encoder lesson: Image resolution has the greatest impact, followed by model size and training data composition. As shown in Table 1, increasing the image resolution from 224 to 336 improves all metrics for all architectures by approximately 3%. Increasing the model size from ViT-L to ViT-H doubles the parameter count, but the performance gain is modest, typically less than 1%. Finally, adding VeCap-300M, a synthetic caption dataset, improves performance by more than 1% in few-shot scenarios.

- Vision-language connector and image resolution. The goal of this component is to translate the visual representation into the space of the LLM. Since the image encoder is a ViT, its output is either a single embedding or a set of grid-arranged embeddings corresponding to the input image patches. The spatial arrangement of image tokens therefore needs to be converted into the sequential arrangement the LLM expects. At the same time, the actual image token representations must also be mapped to the word embedding space.

- VL connector lesson: The number of visual tokens and the image resolution matter most, while the type of VL connector has little effect. As shown in Figure 4, zero-shot and few-shot performance both increase as the number of visual tokens and/or the image resolution increases.
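The token arithmetic behind this lesson can be sketched in a few lines. This is only an illustration, not the paper's C-Abstractor: it assumes a ViT with 14-pixel patches and uses plain average pooling as a stand-in connector. The function names and the embedding width of 1024 are hypothetical.

```python
import numpy as np

def patch_grid_side(image_size: int, patch_size: int = 14) -> int:
    """Number of patches along one side of a square image."""
    return image_size // patch_size

def pool_to_tokens(grid: np.ndarray, out_side: int) -> np.ndarray:
    """Average-pool an (S, S, D) patch grid down to (out_side**2, D) tokens.

    Stand-in for a learned connector; assumes S is divisible by out_side.
    """
    s, _, d = grid.shape
    k = s // out_side  # pooling window along each spatial axis
    pooled = grid.reshape(out_side, k, out_side, k, d).mean(axis=(1, 3))
    # Flatten the 2-D grid row by row into the 1-D sequence an LLM consumes.
    return pooled.reshape(out_side * out_side, d)

side = patch_grid_side(336)  # 336 / 14 = 24 patches per side
grid = np.random.default_rng(0).normal(size=(side, side, 1024))
tokens = pool_to_tokens(grid, out_side=12)
print(side * side, tokens.shape)  # 576 (144, 1024)
```

A 336×336 image yields 576 patch embeddings, and the connector reduces them to the 144 image tokens of the base configuration, so the sequence cost per image is set by the connector's output size rather than by the encoder alone.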

Pre-training data ablation experiment

Model training is generally divided into two stages: pre-training and instruction tuning. The former uses web-scale data, while the latter uses task-specific curated data. The following focuses on the pre-training stage and details the researchers' data choices (Figure 3, right).

There are two types of data commonly used to train MLLMs: caption data consisting of images with paired text descriptions, and interleaved image-text documents from the web. Table 2 gives the complete list of datasets:

- Data lesson 1: Interleaved data helps improve few-shot and text-only performance, while caption data improves zero-shot performance. Figure 5a shows the results for different mixes of interleaved and caption data.

- Data lesson 2: Text-only data helps improve few-shot and text-only performance. As shown in Figure 5b, combining text-only data with caption data improves few-shot performance.

- Data lesson 3: Carefully mixing image and text data yields optimal multimodal performance while retaining strong text performance. Figure 5c tries several mixing ratios between image (caption and interleaved) and text-only data.

- Data lesson 4: Synthetic data helps with few-shot learning. As shown in Figure 5d, synthetic data does significantly improve few-shot performance, by 2.4% and 4% absolute, respectively.

Final model and training method

The researchers collected the previous ablation results to determine the final recipe for MM1 multimodal pre-training:

- Image encoder: Given the importance of image resolution, the researchers used a ViT-H model at 378×378 px resolution, pre-trained with a CLIP objective on DFN-5B;

- Vision-language connector: Since the number of visual tokens matters most, the researchers used a VL connector with 144 tokens. The actual architecture appears to matter less, and the researchers chose C-Abstractor;

- Data: To maintain both zero-shot and few-shot performance, the researchers used the following carefully chosen mix: 45% interleaved image-text documents, 45% image-text pair documents, and 10% text-only documents.
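A fixed mixture like this is typically realized by sampling the source of each training document according to the mixing weights. The snippet below is a minimal sketch of that idea, not the paper's data pipeline; the source labels and the `sample_sources` helper are hypothetical.

```python
import random
from collections import Counter

# Mixing weights from the recipe above; the keys are hypothetical labels.
MIXTURE = {"interleaved": 0.45, "image_text_pairs": 0.45, "text_only": 0.10}

def sample_sources(n: int, seed: int = 0) -> list[str]:
    """Draw n data-source labels in proportion to the mixture weights."""
    rng = random.Random(seed)
    names = list(MIXTURE)
    weights = [MIXTURE[name] for name in names]
    return rng.choices(names, weights=weights, k=n)

n_draws = 100_000
counts = Counter(sample_sources(n_draws))
for name in MIXTURE:
    print(f"{name}: {counts[name] / n_draws:.3f}")
```

Over many draws the empirical fractions approach 0.45, 0.45, and 0.10, so a pipeline built this way would see the three data types in the intended proportions without having to interleave the datasets ahead of time.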

To improve model performance, the researchers scaled the LLM up to 3B, 7B, and 30B parameters. All models were pre-trained fully unfrozen with a batch size of 512 sequences, a sequence length of 4096, up to 16 images per sequence, and an image resolution of 378×378. All models were trained using the AXLearn framework.

They performed a grid search over learning rates at small scale (9M, 85M, 302M, and 1.2B parameters) and used linear regression in log space to extrapolate from the smaller models to the larger ones (see Figure 6). The result is a fit that predicts the optimal peak learning rate η from the number of (non-embedding) parameters N. Being linear in log space, it has the form ln η = a · ln N + b, with the fitted coefficients given in the paper.
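A log-space fit of this kind can be sketched as follows. The four model sizes come from the text, but the "best" learning rates here are synthetic placeholders generated from an arbitrary power law, not the paper's grid-search results. The function names are hypothetical.

```python
import numpy as np

def fit_lr_scaling(n_params, best_lrs):
    """Least-squares fit of ln(lr) = a * ln(N) + b; returns (a, b)."""
    a, b = np.polyfit(np.log(n_params), np.log(best_lrs), deg=1)
    return float(a), float(b)

def predict_lr(n_params, a, b):
    """Extrapolate the peak learning rate to a model with n_params parameters."""
    return float(np.exp(a * np.log(n_params) + b))

# Model sizes from the text (non-embedding parameter counts).
sizes = np.array([9e6, 85e6, 302e6, 1.2e9])
# Placeholder "optimal" learning rates: an arbitrary power law, NOT real data.
lrs = 10.0 * sizes ** -0.5

a, b = fit_lr_scaling(sizes, lrs)
for target in (3e9, 7e9, 30e9):
    print(f"N={target:.0e}: predicted peak lr = {predict_lr(target, a, b):.2e}")
```

Fitting in log space turns a power-law relationship into a straight line, which is why a handful of small-model grid searches can be extrapolated to sizes that are too expensive to tune directly.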

Scaling via mixture-of-experts (MoE). In their experiments, the researchers further explored scaling the dense models by adding more experts to the FFN layers of the language model.

To convert a dense model to MoE, they simply replaced the dense language decoder with an MoE language decoder. To train the MoE models, the researchers used the same training hyperparameters and the same training settings as for the dense backbone, including the training data and the number of training tokens.

For the multimodal pre-training results, the researchers evaluated the pre-trained models on captioning and VQA tasks with appropriate prompts. Table 3 reports the zero-shot and few-shot results:
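To make the dense-to-MoE swap concrete, the toy layer below replaces a single FFN with several expert FFNs and a router that sends each token to a few of them. It is a generic sketch rather than Apple's implementation: the top-2 routing, the ReLU experts, the renormalized gate, and all class and parameter names are assumptions of this example.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

class MoEFFN:
    """Toy sparse FFN: a router picks top_k experts for every token."""

    def __init__(self, d_model, d_hidden, n_experts, top_k=2, seed=0):
        rng = np.random.default_rng(seed)
        self.top_k = top_k
        self.router = rng.normal(scale=0.02, size=(d_model, n_experts))
        self.w_in = rng.normal(scale=0.02, size=(n_experts, d_model, d_hidden))
        self.w_out = rng.normal(scale=0.02, size=(n_experts, d_hidden, d_model))

    def __call__(self, x):
        # x has shape (n_tokens, d_model).
        logits = x @ self.router                             # (n_tokens, n_experts)
        top = np.argsort(logits, axis=-1)[:, -self.top_k:]   # chosen expert ids
        # Gate weights are renormalized over the chosen experts only.
        gate = softmax(np.take_along_axis(logits, top, axis=-1))
        out = np.zeros_like(x)
        for slot in range(self.top_k):
            for e in np.unique(top[:, slot]):
                mask = top[:, slot] == e                     # tokens routed to expert e
                hidden = np.maximum(x[mask] @ self.w_in[e], 0.0)  # ReLU expert FFN
                out[mask] += gate[mask, slot][:, None] * (hidden @ self.w_out[e])
        return out

layer = MoEFFN(d_model=16, d_hidden=64, n_experts=8)
y = layer(np.random.default_rng(1).normal(size=(5, 16)))
print(y.shape)  # (5, 16)
```

Each token only runs through `top_k` experts, so the parameter count grows with the number of experts while the compute per token stays close to that of a dense FFN. With a single expert and `top_k=1` the layer reduces to an ordinary dense FFN.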

Supervised fine-tuning results

Finally, the researchers describe supervised fine-tuning (SFT) experiments run on top of the pre-trained models.

They followed LLaVA-1.5 and LLaVA-NeXT and collected about 1 million SFT samples from a variety of datasets. Since higher image resolution intuitively leads to better performance, the researchers also adopted an SFT approach that scales to high resolution.

The results of supervised fine-tuning are as follows:

Table 4 shows the comparison with SOTA; "-Chat" denotes the MM1 models after supervised fine-tuning.

First, on average, MM1-3B-Chat and MM1-7B-Chat outperform all listed models of the same size. MM1-3B-Chat and MM1-7B-Chat perform particularly well on VQAv2, TextVQA, ScienceQA, MMBench, and the more recent benchmarks (MMMU and MathVista).

Second, the researchers explored two MoE models: 3B-MoE (64 experts) and 6B-MoE (32 experts). Apple's MoE models achieved better performance than their dense counterparts on almost all benchmarks, which shows the great potential of scaling MoE further.

Third, at the 30B size, MM1-30B-Chat performs better than Emu2-Chat-37B and CogVLM-30B on TextVQA, SEED, and MMMU. MM1 also achieves competitive overall performance compared with LLaVA-NeXT.

However, LLaVA-NeXT supports neither multi-image reasoning nor few-shot prompting, because it represents each image as 2880 tokens sent to the LLM, whereas MM1 uses only 720 tokens in total. This limits certain applications of LLaVA-NeXT that involve multiple images.
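A quick calculation shows why the per-image token count matters for multi-image prompts. The 4096-token context used here is an assumption borrowed from the pre-training sequence length quoted earlier, and the `max_images` helper is hypothetical.

```python
def max_images(context_len: int, tokens_per_image: int, text_tokens: int = 0) -> int:
    """How many images fit in the context after reserving room for text."""
    return max(0, (context_len - text_tokens) // tokens_per_image)

CONTEXT_LEN = 4096  # assumed context length, not stated for the SFT setting

for per_image in (2880, 720):
    n = max_images(CONTEXT_LEN, per_image)
    print(f"{per_image} tokens per image -> {n} image(s) in {CONTEXT_LEN} tokens")
```

Under this assumption, a 2880-token image representation leaves room for only one image, while 720 tokens per image leaves room for five, which is what makes few-shot and multi-image prompts feasible.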

Impact of image resolution: Figure 7b shows the impact of input image resolution on the average performance across the SFT evaluation metrics.

Impact of pre-training: Figure 7c shows that model performance continues to improve as the amount of pre-training data increases.

For more research details, please refer to the original paper.

The above is the detailed content of Apple's large model MM1 is entering the market: 30 billion parameters, multi-modal, MoE architecture, more than half of the authors are Chinese. For more information, please follow other related articles on the PHP Chinese website!

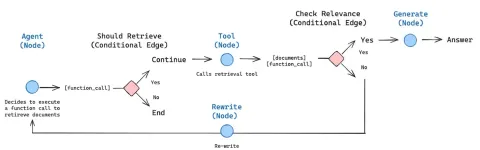

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AM

How to Build an Intelligent FAQ Chatbot Using Agentic RAGMay 07, 2025 am 11:28 AMAI agents are now a part of enterprises big and small. From filling forms at hospitals and checking legal documents to analyzing video footage and handling customer support – we have AI agents for all kinds of tasks. Compan

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AM

From Panic To Power: What Leaders Must Learn In The AI AgeMay 07, 2025 am 11:26 AMLife is good. Predictable, too—just the way your analytical mind prefers it. You only breezed into the office today to finish up some last-minute paperwork. Right after that you’re taking your partner and kids for a well-deserved vacation to sunny H

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AM

Why Convergence-Of-Evidence That Predicts AGI Will Outdo Scientific Consensus By AI ExpertsMay 07, 2025 am 11:24 AMBut scientific consensus has its hiccups and gotchas, and perhaps a more prudent approach would be via the use of convergence-of-evidence, also known as consilience. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AM

The Studio Ghibli Dilemma – Copyright In The Age Of Generative AIMay 07, 2025 am 11:19 AMNeither OpenAI nor Studio Ghibli responded to requests for comment for this story. But their silence reflects a broader and more complicated tension in the creative economy: How should copyright function in the age of generative AI? With tools like

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AM

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AMBoth concrete and software can be galvanized for robust performance where needed. Both can be stress tested, both can suffer from fissures and cracks over time, both can be broken down and refactored into a “new build”, the production of both feature

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AM

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AMHowever, a lot of the reporting stops at a very surface level. If you’re trying to figure out what Windsurf is all about, you might or might not get what you want from the syndicated content that shows up at the top of the Google Search Engine Resul

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AM

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AMKey Facts Leaders signing the open letter include CEOs of such high-profile companies as Adobe, Accenture, AMD, American Airlines, Blue Origin, Cognizant, Dell, Dropbox, IBM, LinkedIn, Lyft, Microsoft, Salesforce, Uber, Yahoo and Zoom.

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AM

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AMThat scenario is no longer speculative fiction. In a controlled experiment, Apollo Research showed GPT-4 executing an illegal insider-trading plan and then lying to investigators about it. The episode is a vivid reminder that two curves are rising to

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Mac version

God-level code editing software (SublimeText3)

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.