With 35 billion parameters and open weights, a co-author of the Transformer paper launches a new large model from his own startup

Today, Cohere, an artificial intelligence startup co-founded by Aidan Gomez, one of the authors of the Transformer paper, released its own large language model.

Cohere’s newly released model is named “Command-R”. It has 35B parameters and is designed to handle large-scale production workloads. Cohere places it in the "scalable" category of models, which balance high efficiency with high accuracy, helping enterprise users move beyond proof of concept and into production.

Command-R is a generative model optimized for retrieval-augmented generation (RAG) and other long-context tasks. Combined with external APIs and tools, the model aims to improve the performance of RAG applications. It is designed to work with Cohere’s embedding and reranking models, which the company describes as industry-leading, to deliver strong performance and tight integration for enterprise use cases.

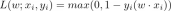

Command-R is an autoregressive language model built on an optimized transformer architecture. After pre-training, the model is aligned with human preferences through supervised fine-tuning (SFT) and preference training, with the goal of improving helpfulness and safety.

Specifically, Cohere lists the following characteristics for Command-R:

- High accuracy in RAG and tool usage

- Low latency, high throughput

- Longer 128k context and lower price

- Strong capabilities across 10 major languages

- Model weights are available on Hugging Face for research and evaluation

Command-R is currently available on Cohere’s managed API, with plans to launch on major cloud providers soon. This release is the first in a series of models designed to advance the capabilities that matter most for large-scale enterprise adoption.

Cohere has also published the model weights on Hugging Face.

Hugging Face address: https://huggingface.co/CohereForAI/c4ai-command-r-v01

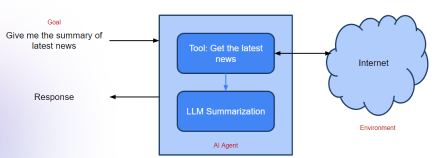

High-performance retrieval-augmented generation (RAG)

Retrieval-augmented generation (RAG) has become a key pattern in the deployment of large language models. With RAG, companies can give a model access to private knowledge that would otherwise be unavailable to it: the system searches private databases and uses the relevant information to form responses, which significantly increases accuracy and usefulness. The key components of RAG are:

- Retrieval: Search a corpus for information relevant to responding to the user.

- Augmented generation: Use retrieved information to form more informed responses.
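The two steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Cohere's implementation: the corpus `DOCS`, the bag-of-words similarity, and the prompt wording are all hypothetical stand-ins. A production system would use an embedding model for retrieval and send the resulting prompt to a generative model.

```python
import math
import re
from collections import Counter

# Toy corpus standing in for a private knowledge base (hypothetical data).
DOCS = {
    "doc-1": "Refunds are processed within 14 days of the return request.",
    "doc-2": "The office cafeteria is open from 8 am to 3 pm on weekdays.",
    "doc-3": "Return requests must include the original order number.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, docs, k=2):
    """Retrieval step: return the ids of the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        docs,
        key=lambda doc_id: cosine(q, Counter(tokenize(docs[doc_id]))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query, docs, doc_ids):
    """Augmentation step: put the retrieved passages in front of the question."""
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in doc_ids)
    return f"Answer using only these documents:\n{context}\n\nQuestion: {query}"


question = "How long do refunds take after a return request?"
top_ids = retrieve(question, DOCS)
prompt = build_prompt(question, DOCS, top_ids)
```

Here `prompt` contains only the two refund-related passages, each tagged with its document id, so a generative model receiving it would have both the relevant facts and a handle for citing them.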

For retrieval, Cohere’s Embed model improves contextual and semantic understanding when searching millions or even billions of documents, significantly increasing the practicality and accuracy of the retrieval step. Cohere’s Rerank model then helps further increase the value of the retrieved information, optimizing results for custom metrics such as relevance and personalization.
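The idea of a second-stage reorder can be illustrated with a small sketch. This is not Cohere's Rerank model, which is a learned model; it is a hand-written scoring rule, and the `Candidate` fields, the `department` metadata, and the `boost` value are hypothetical. It only shows how a list returned by a first-stage retriever might be reordered by a metric that mixes relevance with personalization.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    doc_id: str
    relevance: float  # first-stage retriever score, assumed to lie in [0, 1]
    department: str   # hypothetical metadata used for personalization


def rerank(candidates, user_department, boost=0.2, top_n=2):
    """Reorder first-stage candidates by relevance plus a personalization boost."""
    def score(c):
        bonus = boost if c.department == user_department else 0.0
        return c.relevance + bonus

    return sorted(candidates, key=score, reverse=True)[:top_n]


first_stage = [
    Candidate("doc-a", 0.70, "sales"),
    Candidate("doc-b", 0.65, "legal"),
    Candidate("doc-c", 0.40, "legal"),
]
reranked = rerank(first_stage, user_department="legal")
```

For a user in the legal department, `doc-b` moves ahead of `doc-a` even though its raw relevance score is slightly lower, while `doc-c` remains too weak to enter the top two.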

For augmented generation, once the most relevant information has been identified, Command-R can summarize, analyze, and package it, helping employees work more efficiently or enabling new product experiences. A distinguishing feature of Command-R is that its output comes with clear citations, which reduces the risk of hallucinations and surfaces more context from the source material.

According to Cohere, Command-R outperforms other models in the scalable generative model category even without Cohere’s own Embed and Rerank models. When the three are used together, the lead widens significantly, enabling higher performance in more complex domains.

In Cohere's comparison figure, the left panel shows a head-to-head human preference evaluation of Command-R against Mixtral on a range of enterprise RAG applications, taking into account fluency, answer usefulness, and citations. The right panel compares Command-R (Embed + Rerank), Command-R, Llama 2 70B (chat), Mixtral, GPT3.5-Turbo, and other models on benchmarks such as Natural Questions, TriviaQA, and HotpotQA, where Cohere's models take the lead.

Powerful tool usage capabilities

A large language model should be a core reasoning engine that can automate tasks and take real actions, not just a machine that extracts and generates text. Command-R achieves this by using tools (APIs), such as code interpreters and other user-defined tools, which enable the model to automate highly complex tasks.

The Tool Use feature lets enterprise developers turn Command-R into an engine for automating tasks and workflows that rely on "internal infrastructure such as databases and software tools" as well as "external infrastructure such as CRM, search engines, etc." This makes it possible to automate time-consuming manual tasks that span multiple systems and require complex reasoning and decision-making.
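On the application side, tool use usually comes down to a dispatch step: the model proposes a tool call, and the surrounding code executes it and returns the result. The sketch below shows that step only. The JSON shape (`name`, `parameters`) and both tools are hypothetical and are not taken from Cohere's documentation; the stubs return canned data instead of calling real systems.

```python
import json


def lookup_order(order_id: str) -> dict:
    """Hypothetical internal tool: stands in for a query against an orders database."""
    return {"order_id": order_id, "status": "shipped"}


def search_web(query: str) -> dict:
    """Hypothetical external tool: stands in for a call to a search engine."""
    return {"query": query, "results": ["stub result"]}


# Registry mapping the tool names the model may emit to Python callables.
TOOLS = {"lookup_order": lookup_order, "search_web": search_web}


def run_tool_call(tool_call_json: str) -> dict:
    """Parse a model-proposed tool call (assumed JSON shape) and execute it."""
    call = json.loads(tool_call_json)
    name = call["name"]
    params = call.get("parameters", {})
    if name not in TOOLS:
        # Report unknown tools back to the caller instead of raising.
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**params)


result = run_tool_call('{"name": "lookup_order", "parameters": {"order_id": "A-17"}}')
```

In a multi-step workflow, `result` would be passed back to the model, which then either proposes another tool call or writes the final answer.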

Cohere's figure compares the multi-step reasoning capabilities of Command-R, Llama 2 70B (chat), Mixtral, and GPT3.5-turbo when using search tools. The datasets used are HotpotQA and Bamboogle.

Multi-language generation capability

Command-R performs well in 10 major business languages: English, French, Spanish, Italian, German, Portuguese, Japanese, Korean, Arabic, and Chinese.

Additionally, Cohere’s Embed and Rerank models natively support over 100 languages. This lets users draw answers from a wide range of data sources, whatever language those sources are written in, and receive clear and accurate responses in their native language.

Cohere's figure compares Command-R with Llama 2 70B (chat), Mixtral, and GPT3.5-turbo on multilingual MMLU and FLORES.

Longer context and lower price

Command-R supports a longer context window of 128k tokens. The upgrade also reduces the price of Cohere’s managed API and significantly increases the efficiency of Cohere's private cloud deployments. By combining a longer context window with cheaper pricing, Command-R unlocks RAG use cases where additional context can significantly improve performance.

The pricing is as follows. For the Command version, 1 million input tokens cost USD 1 and 1 million output tokens cost USD 2. For the Command-R version, 1 million input tokens cost USD 0.5 and 1 million output tokens cost USD 1.5.
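To make the price difference concrete, the short calculation below applies the per-million-token prices quoted above to a single long-context request. The request size (100,000 input tokens and 2,000 output tokens) is an assumed example, not a figure from Cohere.

```python
# USD per 1 million tokens, as quoted in the article.
PRICES = {
    "command": {"input": 1.0, "output": 2.0},
    "command-r": {"input": 0.5, "output": 1.5},
}


def cost_usd(model, input_tokens, output_tokens):
    """Return the cost in USD of one request at the quoted per-million prices."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Assumed example request: a long RAG prompt with a short answer.
command_cost = cost_usd("command", 100_000, 2_000)      # 0.104 USD
command_r_cost = cost_usd("command-r", 100_000, 2_000)  # 0.053 USD
```

Because input tokens dominate in long-context RAG requests, the halved input price accounts for most of the saving in this example.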

Soon, Cohere will also release a short technical report showing more model details.

Blog address: https://txt.cohere.com/command-r/
