New work by Tian Yuandong and others: Breaking through the memory bottleneck to pre-train a 7B large model on a single RTX 4090

Last month, a research project that Meta FAIR's Tian Yuandong took part in received widespread praise. In the paper "MobileLLM: Optimizing Sub-billion Parameter Language Models for On-Device Use Cases", the authors explored how to optimize small models with fewer than 1 billion parameters, with the goal of running large language models on mobile devices.

On March 6, Tian Yuandong's team released its latest research, this time focusing on the memory efficiency of LLM training. In addition to Tian Yuandong himself, the research team includes researchers from the California Institute of Technology, the University of Texas at Austin, and CMU. The work aims to reduce the memory that LLM training requires and to inform future technology development.

They jointly proposed a training strategy called GaLore (Gradient Low-Rank Projection), which allows full-parameter learning. Compared with common low-rank adaptation methods such as LoRA, GaLore is more memory efficient.

This study shows for the first time that 7B models can be successfully pre-trained on a consumer GPU with 24 GB of memory, such as the NVIDIA RTX 4090, without using model parallelism, checkpointing, or offloading strategies.

Paper address: https://arxiv.org/abs/2403.03507

Paper title: GaLore: Memory-Efficient LLM Training by Gradient Low-Rank Projection

Next let’s take a look at the main content of the article.

Large language models (LLMs) have shown outstanding potential in many fields, but they come with a practical problem: pre-training and fine-tuning an LLM requires not only a large amount of computing resources but also a large amount of memory.

An LLM's memory requirements include not only billions of parameters but also their gradients and optimizer states (such as the gradient momentum and variance in Adam), which can take more memory than the parameters themselves. For example, pre-training LLaMA 7B from scratch with a single batch size requires at least 58 GB of memory (14 GB for trainable parameters, 42 GB for Adam optimizer states and weight gradients, and 2 GB for activations). This makes such training infeasible on consumer-grade GPUs such as the NVIDIA RTX 4090 with 24 GB of memory.
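The 58 GB figure can be checked with simple arithmetic. The sketch below assumes 7 billion parameters stored at 2 bytes per value (BF16) and 1 GB = 10^9 bytes; these assumptions are not stated in the article, and the activation figure is simply taken from the text.

```python
# Back-of-the-envelope check of the 58 GB estimate.
# Assumptions (not from the article): 7e9 parameters, 2 bytes per value (BF16),
# and 1 GB = 1e9 bytes. The 2 GB activation figure is copied from the text.
PARAMS = 7e9
BYTES_PER_VALUE = 2
GB = 1e9

weights_gb = PARAMS * BYTES_PER_VALUE / GB          # trainable parameters
gradients_gb = PARAMS * BYTES_PER_VALUE / GB        # one gradient value per parameter
adam_states_gb = 2 * PARAMS * BYTES_PER_VALUE / GB  # momentum + variance
activations_gb = 2.0

total_gb = weights_gb + gradients_gb + adam_states_gb + activations_gb

print(f"weights:            {weights_gb:.0f} GB")                      # 14 GB
print(f"states + gradients: {adam_states_gb + gradients_gb:.0f} GB")   # 42 GB
print(f"total:              {total_gb:.0f} GB")                        # 58 GB
```

Under these assumptions the optimizer states and gradients alone are three times the size of the weights, which is why reducing them matters more than shrinking the model itself.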

To solve the above problems, researchers continue to develop various optimization techniques to reduce memory usage during pre-training and fine-tuning.

GaLore reduces optimizer-state memory usage by up to 65.5% while maintaining both efficiency and performance when pre-training LLaMA 1B and 7B architectures on the C4 dataset with up to 19.7B tokens, and when fine-tuning RoBERTa on GLUE tasks. Compared to a BF16 baseline, 8-bit GaLore further reduces optimizer memory by up to 82.5% and total training memory by 63.3%.

After seeing this research, netizens said: "It's time to forget the cloud and HPC. With GaLore, all AI4Science will be completed on a $2,000 consumer-grade GPU."

Tian Yuandong said: "With GaLore, it is now possible to pre-train a 7B model on NVIDIA RTX 4090s with 24 GB of memory.

Instead of assuming a low-rank weight structure like LoRA, we show that the weight gradients are naturally low-rank and can therefore be projected into a (varying) low-dimensional space. Therefore, we simultaneously save memory for gradients, Adam momentum and variance.

Thus, unlike LoRA, GaLore does not change the training dynamics and can be used to pre-train 7B models from scratch without any memory-consuming warm-up. GaLore can also be used for fine-tuning, producing results comparable to LoRA."

Method introduction

As mentioned earlier, GaLore is a training strategy that allows full-parameter learning but is more memory efficient than common low-rank adaptation methods such as LoRA. The key idea of GaLore is to exploit the slowly changing low-rank structure of the gradient G of the weight matrix W, rather than trying to approximate the weight matrix itself in a low-rank form.

The paper first proves theoretically that the gradient matrix G becomes low-rank during training. Building on this theory, GaLore computes two projection matrices P and Q and projects the gradient matrix G into the low-rank form P^⊤GQ. In this case, the memory cost of optimizer states that rely on component-wise gradient statistics can be significantly reduced. As shown in Table 1, GaLore is more memory efficient than LoRA. In fact, this can reduce memory by up to 30% during pre-training compared to LoRA.
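To make the comparison concrete, here is a small sketch that counts stored values for a single m×n weight matrix at rank r. The formulas are assumed to match the per-matrix counts in the paper's Table 1 (with Adam as the optimizer and only one side projected in practice) and should be checked against it; the layer size and rank in the example are hypothetical.

```python
# Count stored values (not bytes) for one m x n weight matrix at rank r.
# The formulas are assumed to follow the paper's Table 1; verify before relying on them.

def full_rank_counts(m, n):
    # Adam keeps momentum and variance for every weight.
    return {"weights": m * n, "optimizer_states": 2 * m * n}

def lora_counts(m, n, r):
    # Frozen W plus adapters B (m x r) and A (r x n); Adam states only for the adapters.
    return {"weights": m * n + m * r + n * r,
            "optimizer_states": 2 * m * r + 2 * n * r}

def galore_counts(m, n, r):
    # No extra weights; one projection (m x r) plus Adam states on the r x n projected gradient.
    return {"weights": m * n, "optimizer_states": m * r + 2 * n * r}

m, n, r = 4096, 4096, 128   # hypothetical layer size and rank
full = full_rank_counts(m, n)
lora = lora_counts(m, n, r)
galore = galore_counts(m, n, r)

for name, counts in [("full-rank", full), ("LoRA", lora), ("GaLore", galore)]:
    print(f"{name:10s} weights={counts['weights']:>10,d} "
          f"optimizer_states={counts['optimizer_states']:>10,d}")
```

In this toy setting GaLore stores fewer optimizer-state values than LoRA and adds no adapter weights. The example counts values for one matrix only, so it does not reproduce the end-to-end percentages quoted in the text.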

The paper shows that GaLore performs well in both pre-training and fine-tuning. When pre-training LLaMA 7B on the C4 dataset, 8-bit GaLore combines an 8-bit optimizer with layer-by-layer weight updates to achieve performance comparable to full-rank training, with less than 10% of the memory cost for optimizer states.

It is worth noting that, for pre-training, GaLore keeps memory low throughout the entire training process and does not require a full-rank training warm-up as ReLoRA does. Thanks to GaLore's memory efficiency, LLaMA 7B can for the first time be trained from scratch on a single GPU with 24 GB of memory (e.g., an NVIDIA RTX 4090) without any expensive memory offloading techniques (Figure 1).

As a gradient projection method, GaLore is independent of the choice of optimizer and can be plugged into an existing optimizer with just two lines of code, as shown in Algorithm 1.

The following figure shows the algorithm for applying GaLore to Adam:
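As a rough illustration of this kind of update, the following is a minimal NumPy sketch, not the authors' implementation. It assumes a one-sided projection (only the left matrix P, taken from the SVD of the gradient) and a toy quadratic loss; the function name `galore_adam_step` and the parameters `rank`, `update_gap`, and `scale` are hypothetical names chosen for this example.

```python
import numpy as np

def galore_adam_step(W, G, state, rank=2, lr=0.01, update_gap=200,
                     scale=1.0, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update computed in a rank-`rank` subspace of the gradient G.

    Illustrative sketch only: one-sided projection, hypothetical parameter names.
    """
    t = state.get("t", 0)
    # Refresh the projection every `update_gap` steps from the SVD of the gradient.
    if t % update_gap == 0:
        U, _, _ = np.linalg.svd(G, full_matrices=False)
        state["P"] = U[:, :rank]                    # shape (m, rank)
    P = state["P"]

    R = P.T @ G                                     # projected gradient, shape (rank, n)
    # Adam moments are kept only for the small projected gradient.
    M = beta1 * state.get("M", np.zeros_like(R)) + (1 - beta1) * R
    V = beta2 * state.get("V", np.zeros_like(R)) + (1 - beta2) * R ** 2
    t += 1
    N = (M / (1 - beta1 ** t)) / (np.sqrt(V / (1 - beta2 ** t)) + eps)

    state.update(t=t, M=M, V=V)
    return W - lr * scale * (P @ N)                 # project back to shape (m, n)

# Toy usage: minimise 0.5 * ||W - target||^2, whose gradient is W - target.
rng = np.random.default_rng(0)
target = rng.normal(size=(8, 16))
W = np.zeros((8, 16))
state = {}
loss_before = 0.5 * np.sum((W - target) ** 2)
for _ in range(20):
    G = W - target
    W = galore_adam_step(W, G, state)
loss_after = 0.5 * np.sum((W - target) ** 2)
print(state["M"].shape, loss_before, loss_after)
```

The point of the sketch is the shape of the optimizer state: the moments `M` and `V` have shape (rank, n) instead of (m, n), while the weights themselves remain full-rank.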

Experiments and results

The researchers evaluated GaLore on both pre-training and fine-tuning of LLMs. All experiments were performed on NVIDIA A100 GPUs.

To evaluate its performance, the researchers applied GaLore to train LLaMA-based large language models on the C4 dataset. C4 is a very large, cleaned version of the Common Crawl web corpus, used primarily to pre-train language models and word representations. To best simulate a realistic pre-training scenario, the researchers trained on a sufficiently large amount of data without repeating any of it, with model sizes ranging up to 7 billion parameters.

The paper follows the experimental setup of Lialin et al., using a LLaMA-based architecture with RMSNorm and SwiGLU activations. For each model size, they used the same set of hyperparameters across methods, except for the learning rate, and ran all experiments in BF16 format to reduce memory usage. They tuned the learning rate for each method under the same computational budget and report the best performance.

In addition, the researchers used the GLUE tasks as a benchmark for memory-efficient fine-tuning with GaLore and LoRA. GLUE is a benchmark for evaluating the performance of NLP models on a variety of tasks, including sentiment analysis, question answering, and textual entailment.

This paper first uses the Adam optimizer to compare GaLore with existing low-rank methods, and the results are shown in Table 2.

The researchers show that GaLore can be applied to various learning algorithms, especially memory-efficient optimizers, to further reduce memory usage. They applied GaLore to the AdamW, 8-bit Adam, and Adafactor optimizers, using Adafactor with first-order statistics to avoid performance degradation.

They evaluated these combinations on the LLaMA 1B architecture with 10K training steps, tuned the learning rate for each setting, and reported the best performance. Figure 3 shows that GaLore works with popular optimizers such as AdamW, 8-bit Adam, and Adafactor. Furthermore, GaLore introduces very few additional hyperparameters, and doing so does not affect its performance.

As shown in Table 4, GaLore can achieve higher performance than LoRA with less memory usage in most tasks. This demonstrates that GaLore can be used as a full-stack memory-efficient training strategy for LLM pre-training and fine-tuning.

As shown in Figure 4, 8-bit GaLore requires much less memory than the BF16 baseline and 8-bit Adam. Pre-training LLaMA 7B requires only 22.0 GB of memory with a small per-GPU token batch size (up to 500 tokens).

For more technical details, please read the original paper.

The above is the detailed content of New work by Tian Yuandong and others: Breaking through the memory bottleneck and allowing a 4090 pre-trained 7B large model. For more information, please follow other related articles on the PHP Chinese website!

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AM

AI Game Development Enters Its Agentic Era With Upheaval's Dreamer PortalMay 02, 2025 am 11:17 AMUpheaval Games: Revolutionizing Game Development with AI Agents Upheaval, a game development studio comprised of veterans from industry giants like Blizzard and Obsidian, is poised to revolutionize game creation with its innovative AI-powered platfor

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AM

Uber Wants To Be Your Robotaxi Shop, Will Providers Let Them?May 02, 2025 am 11:16 AMUber's RoboTaxi Strategy: A Ride-Hail Ecosystem for Autonomous Vehicles At the recent Curbivore conference, Uber's Richard Willder unveiled their strategy to become the ride-hail platform for robotaxi providers. Leveraging their dominant position in

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AM

AI Agents Playing Video Games Will Transform Future RobotsMay 02, 2025 am 11:15 AMVideo games are proving to be invaluable testing grounds for cutting-edge AI research, particularly in the development of autonomous agents and real-world robots, even potentially contributing to the quest for Artificial General Intelligence (AGI). A

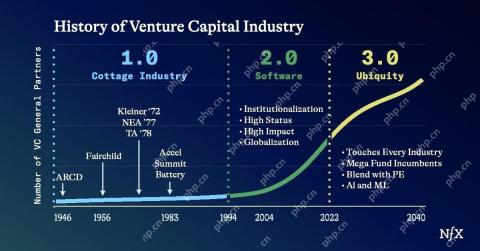

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AM

The Startup Industrial Complex, VC 3.0, And James Currier's ManifestoMay 02, 2025 am 11:14 AMThe impact of the evolving venture capital landscape is evident in the media, financial reports, and everyday conversations. However, the specific consequences for investors, startups, and funds are often overlooked. Venture Capital 3.0: A Paradigm

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AM

Adobe Updates Creative Cloud And Firefly At Adobe MAX London 2025May 02, 2025 am 11:13 AMAdobe MAX London 2025 delivered significant updates to Creative Cloud and Firefly, reflecting a strategic shift towards accessibility and generative AI. This analysis incorporates insights from pre-event briefings with Adobe leadership. (Note: Adob

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AM

Everything Meta Announced At LlamaConMay 02, 2025 am 11:12 AMMeta's LlamaCon announcements showcase a comprehensive AI strategy designed to compete directly with closed AI systems like OpenAI's, while simultaneously creating new revenue streams for its open-source models. This multifaceted approach targets bo

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AM

The Brewing Controversy Over The Proposition That AI Is Nothing More Than Just Normal TechnologyMay 02, 2025 am 11:10 AMThere are serious differences in the field of artificial intelligence on this conclusion. Some insist that it is time to expose the "emperor's new clothes", while others strongly oppose the idea that artificial intelligence is just ordinary technology. Let's discuss it. An analysis of this innovative AI breakthrough is part of my ongoing Forbes column that covers the latest advancements in the field of AI, including identifying and explaining a variety of influential AI complexities (click here to view the link). Artificial intelligence as a common technology First, some basic knowledge is needed to lay the foundation for this important discussion. There is currently a large amount of research dedicated to further developing artificial intelligence. The overall goal is to achieve artificial general intelligence (AGI) and even possible artificial super intelligence (AS)

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AM

Model Citizens, Why AI Value Is The Next Business YardstickMay 02, 2025 am 11:09 AMThe effectiveness of a company's AI model is now a key performance indicator. Since the AI boom, generative AI has been used for everything from composing birthday invitations to writing software code. This has led to a proliferation of language mod

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft