Quantity is power! Tencent reveals: The greater the number of agents, the better the effect of the large language model

Tencent’s research team studied how the performance of large language models (LLMs) scales with the number of agents. They found that, with a simple sampling-and-voting method, LLM performance increases with the number of instantiated agents. The study verifies for the first time that this phenomenon holds across a variety of scenarios, compares the method with more complex approaches, explores the reasons behind the phenomenon, and proposes ways to further strengthen the scaling effect.

Paper title: More Agents Is All You Need

Paper address: https://arxiv.org/abs/2402.05120

Code address: https://github.com/MoreAgentsIsAllYouNeed/More-Agents-Is-All-You-Need

The method has two stages:

- Sampling: the task query is fed into a single LLM or a multi-agent LLM collaboration framework to generate multiple outputs;

- Voting: the final result is determined by majority voting.
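The two stages above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's released code: `sample_and_vote`, `make_noisy_agent`, and the `generate` callable are hypothetical names, the "LLM" is a random stub, and votes are counted by exact string match.

```python
import random
from collections import Counter
from typing import Callable, List


def sample_and_vote(query: str, generate: Callable[[str], str], n_agents: int) -> str:
    """Sample n_agents answers to the same query and return the most common one."""
    if n_agents < 1:
        raise ValueError("n_agents must be at least 1")
    # Sampling stage: each call stands in for one independently sampled agent.
    answers: List[str] = [generate(query) for _ in range(n_agents)]
    # Voting stage: majority vote by exact match.
    # Ties are resolved in favour of the answer that appeared first.
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


def make_noisy_agent(correct: str, wrong: List[str], p_correct: float,
                     seed: int) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: answers correctly with probability p_correct."""
    rng = random.Random(seed)

    def generate(query: str) -> str:
        if rng.random() < p_correct:
            return correct
        return rng.choice(wrong)

    return generate


if __name__ == "__main__":
    agent = make_noisy_agent("42", ["41", "43", "44"], p_correct=0.5, seed=0)
    print(sample_and_vote("What is 6 * 7?", agent, n_agents=15))
```

In practice `generate` would wrap a call to a real model with a non-zero sampling temperature, so that repeated calls can return different answers.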

[Figure: results based on Llama-13B]

[Figure: results based on Llama-70B]

[Figure: results based on GPT-3.5-Turbo]

In addition, the paper analyzes the relationship between performance improvement and problem difficulty:

- Intrinsic difficulty: As the inherent difficulty of the task increases, the performance improvement (i.e., the relative performance gain) also increases, but once the difficulty reaches a certain level, the gain gradually decreases. This suggests that when the task is too complex, the model's reasoning ability may not keep up, so the marginal benefit of adding agents diminishes.

- Number of steps: As the number of steps required to solve a task increases, so does the performance gain. This suggests that in multi-step tasks, increasing the number of agents helps the model handle each step better, which improves overall task-solving performance.

- Prior probability: The higher the prior probability of the correct answer, the greater the performance improvement. In other words, adding agents is more likely to yield a significant gain when each individual sample already has a good chance of being correct.
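To see why the prior probability of the correct answer matters for voting, a toy calculation helps. The sketch below assumes a simplified model that is not taken from the paper: every agent is independent, there are only two possible answers, and each agent is correct with probability `p`. Under those assumptions the chance that a strict majority of `n` agents (odd `n`) is correct follows the binomial distribution. The function name `majority_vote_accuracy` is hypothetical.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent agents is correct.

    Toy assumptions: two candidate answers, each agent correct with
    probability p, and n odd so that ties cannot occur.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    if n < 1 or n % 2 == 0:
        raise ValueError("n must be a positive odd integer")
    needed = n // 2 + 1  # smallest number of correct votes that wins
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(needed, n + 1))


if __name__ == "__main__":
    for p in (0.4, 0.6, 0.8):
        row = ", ".join(f"n={n}: {majority_vote_accuracy(p, n):.3f}" for n in (1, 3, 9, 27))
        print(f"p={p}: {row}")
```

In this toy model, voting only helps when `p` is above 0.5, and it hurts when `p` is below 0.5. Real tasks have many possible answers and correlated samples, so the numbers are illustrative only and do not reproduce the paper's results.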

Based on this, the paper proposes two optimization strategies to further improve the effectiveness of the method:

- Step-wise Sampling-and-Voting: This method breaks the task into multiple steps and applies sampling and voting at each step, which reduces accumulated errors and improves overall performance.

- Hierarchical Sampling-and-Voting: This method decomposes low-probability tasks into multiple high-probability subtasks and solves them hierarchically. Different models can also be used to handle subtasks with different probabilities, which reduces cost.
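The step-wise variant can be sketched as a loop that votes once per step and feeds the winning step back as context for the next one. This is an illustrative sketch under stated assumptions, not the authors' implementation: `stepwise_sample_and_vote` and `step_generate` are hypothetical names, the number of steps is assumed to be known in advance, and votes are counted by exact string match.

```python
from collections import Counter
from typing import Callable, List


def stepwise_sample_and_vote(
    query: str,
    step_generate: Callable[[str, List[str]], str],
    n_steps: int,
    n_agents: int,
) -> List[str]:
    """Build a solution step by step, voting over n_agents samples at every step.

    step_generate(query, previous_steps) is a hypothetical callable that
    proposes the next step given the steps already agreed on.
    """
    if n_steps < 1 or n_agents < 1:
        raise ValueError("n_steps and n_agents must be at least 1")
    chosen: List[str] = []
    for _ in range(n_steps):
        # Sample candidate next steps, all conditioned on the same voted prefix.
        candidates = [step_generate(query, list(chosen)) for _ in range(n_agents)]
        # Keep only the majority step, so a minority error is not carried forward.
        best, _count = Counter(candidates).most_common(1)[0]
        chosen.append(best)
    return chosen
```

Because every step continues from the voted prefix rather than from each agent's own earlier output, a mistake made by a minority of agents at one step does not propagate into later steps.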

Finally, the paper proposes directions for future work, including optimizing the sampling stage to reduce costs and continuing to develop mechanisms that mitigate the potential negative impacts of LLM hallucinations, so that the deployment of these powerful models is both responsible and beneficial.

The above is the detailed content of Quantity is power! Tencent reveals: The greater the number of agents, the better the effect of the large language model. For more information, please follow other related articles on the PHP Chinese website!

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AM

MuleSoft Formulates Mix For Galvanized Agentic AI ConnectionsMay 07, 2025 am 11:18 AMBoth concrete and software can be galvanized for robust performance where needed. Both can be stress tested, both can suffer from fissures and cracks over time, both can be broken down and refactored into a “new build”, the production of both feature

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AM

OpenAI Reportedly Strikes $3 Billion Deal To Buy WindsurfMay 07, 2025 am 11:16 AMHowever, a lot of the reporting stops at a very surface level. If you’re trying to figure out what Windsurf is all about, you might or might not get what you want from the syndicated content that shows up at the top of the Google Search Engine Resul

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AM

Mandatory AI Education For All U.S. Kids? 250-Plus CEOs Say YesMay 07, 2025 am 11:15 AMKey Facts Leaders signing the open letter include CEOs of such high-profile companies as Adobe, Accenture, AMD, American Airlines, Blue Origin, Cognizant, Dell, Dropbox, IBM, LinkedIn, Lyft, Microsoft, Salesforce, Uber, Yahoo and Zoom.

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AM

Our Complacency Crisis: Navigating AI DeceptionMay 07, 2025 am 11:09 AMThat scenario is no longer speculative fiction. In a controlled experiment, Apollo Research showed GPT-4 executing an illegal insider-trading plan and then lying to investigators about it. The episode is a vivid reminder that two curves are rising to

Build Your Own Warren Buffett Agent in 5 MinutesMay 07, 2025 am 11:00 AM

Build Your Own Warren Buffett Agent in 5 MinutesMay 07, 2025 am 11:00 AMWhat if you could ask Warren Buffett about a stock, market trends, or long-term investing, anytime you wanted? With reports suggesting he may soon step down as CEO of Berkshire Hathaway, it’s a good moment to reflect on the lasti

Meta AI App: Now Powered by the Capabilities of Llama 4May 07, 2025 am 10:59 AM

Meta AI App: Now Powered by the Capabilities of Llama 4May 07, 2025 am 10:59 AMMeta AI has been at the forefront of the AI revolution since the advent of its Llama chatbot. Their latest offering, Llama 4, has helped them gain a foothold in the race. From smarter conversations to creating videos, sketching i

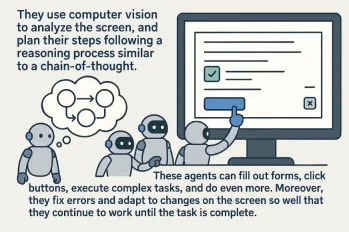

Top 7 Computer Use AgentsMay 07, 2025 am 10:58 AM

Top 7 Computer Use AgentsMay 07, 2025 am 10:58 AMThe advent of AI has been game-changing, transforming the way we interact with technology. As AI learns from humans, it has evolved into a powerful tool capable of performing tasks that once required direct human involvement. One

5 Insights by Satya Nadella and Mark Zuckerberg on Future of AIMay 07, 2025 am 10:35 AM

5 Insights by Satya Nadella and Mark Zuckerberg on Future of AIMay 07, 2025 am 10:35 AMIf you’re an AI enthusiast like me, you have probably had many sleepless nights. It’s challenging to keep up with all AI updates. Last week, a major event took place: Meta’s first-ever LlamaCon. The event started with

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Zend Studio 13.0.1

Powerful PHP integrated development environment

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

Dreamweaver CS6

Visual web development tools

Atom editor mac version download

The most popular open source editor

SublimeText3 Mac version

God-level code editing software (SublimeText3)