Linux Netlink: an efficient and flexible kernel-user space communication mechanism

- WBOY

- 2024-02-10 09:50:17

The Linux kernel is a complex and powerful system that provides many functions and services, such as process management, memory allocation, device drivers, network protocols, etc. But how do you let user space applications interact with the kernel? Traditional methods include system calls, signals, pipes, sockets, etc., but they all have some limitations and shortcomings. For example, system calls can only perform predefined operations, signals can only pass simple information, and pipes and sockets require additional buffers and copies. Is there a more efficient, flexible, and scalable way? The answer is Linux Netlink.

1. What is Netlink

Netlink is a communication mechanism that Linux provides between the kernel and user-space processes. Although it is mainly used between user space and kernel space, it can also carry messages between two user-space processes. Since there are many other ways for processes to talk to each other, Netlink is rarely chosen for that purpose unless its broadcast feature is needed.

So what are the advantages of Netlink? Generally speaking, there are three ways to communicate between user space and kernel space: /proc, ioctl, and Netlink. The first two are one-way (only user space can start an exchange), whereas Netlink is full duplex: either side can send first.

The Netlink protocol is built on BSD sockets and the AF_NETLINK address family, and it is addressed with 32-bit port numbers (formerly called PIDs). Each Netlink protocol (or bus; the man pages call it a netlink family) is usually associated with one kernel service or component, or a group of them. For example, NETLINK_ROUTE is used to get and set routing and link information, and NETLINK_KOBJECT_UEVENT lets the kernel send notifications to the udev process in user space. Netlink has the following characteristics:

① Supports full-duplex, asynchronous communication (synchronous communication is supported too)

② User space can use the standard BSD socket interface (but Netlink does not hide the construction and parsing of protocol messages, so a third-party library such as libnl is recommended)

③ Kernel space uses a dedicated kernel API

④ Supports multicast (and therefore "bus"-style communication and message subscription)

⑤ On the kernel side, it can be used in both process context and interrupt context
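To make the "standard BSD socket interface" point concrete, here is a minimal sketch that talks to the kernel-provided NETLINK_ROUTE protocol, so it needs no custom kernel module. It asks for a dump of all network links and counts the replies. The function name `count_links` is made up for this illustration, and the 16 KB receive buffer is an assumed size that should be ample for a small example.

```c
#include <assert.h>
#include <string.h>
#include <unistd.h>
#include <sys/types.h>
#include <sys/socket.h>
#include <linux/netlink.h>
#include <linux/rtnetlink.h>

/* Hypothetical helper: ask NETLINK_ROUTE for all links and count the
 * RTM_NEWLINK replies. Returns the count, or -1 on any error. */
int count_links(void)
{
    struct {
        struct nlmsghdr nlh;     /* Netlink header, built by hand */
        struct ifinfomsg ifi;    /* rtnetlink payload for link requests */
    } req;
    union {
        struct nlmsghdr align;   /* only here to align the byte buffer */
        char bytes[16384];
    } buf;
    struct sockaddr_nl kernel;
    int fd, count = 0, done = 0;

    assert(sizeof(req) >= NLMSG_LENGTH(sizeof(struct ifinfomsg)));

    fd = socket(AF_NETLINK, SOCK_RAW, NETLINK_ROUTE);
    if (fd < 0)
        return -1;

    memset(&req, 0, sizeof(req));
    req.nlh.nlmsg_len = NLMSG_LENGTH(sizeof(struct ifinfomsg));
    req.nlh.nlmsg_type = RTM_GETLINK;
    req.nlh.nlmsg_flags = NLM_F_REQUEST | NLM_F_DUMP;
    req.nlh.nlmsg_seq = 1;
    req.ifi.ifi_family = AF_UNSPEC;

    memset(&kernel, 0, sizeof(kernel));
    kernel.nl_family = AF_NETLINK;          /* nl_pid 0 addresses the kernel */

    if (sendto(fd, &req, req.nlh.nlmsg_len, 0,
               (struct sockaddr *)&kernel, sizeof(kernel)) < 0) {
        close(fd);
        return -1;
    }

    while (!done) {
        struct nlmsghdr *nlh;
        ssize_t n = recv(fd, buf.bytes, sizeof(buf.bytes), 0);

        if (n <= 0) {
            close(fd);
            return -1;
        }
        /* One recv() may carry several messages; walk them all. */
        for (nlh = &buf.align; NLMSG_OK(nlh, n); nlh = NLMSG_NEXT(nlh, n)) {
            if (nlh->nlmsg_type == NLMSG_DONE) {
                done = 1;
                break;
            }
            if (nlh->nlmsg_type == NLMSG_ERROR) {
                close(fd);
                return -1;
            }
            if (nlh->nlmsg_type == RTM_NEWLINK)
                count++;
        }
    }
    close(fd);
    return count;
}
```

Calling `count_links()` from a small `main` and printing the result should report at least the loopback interface on an ordinary Linux system. Even this short exchange needs hand-written header handling, which is why a library such as libnl is usually more comfortable.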

How should you learn Netlink? I think the best way is to compare it with a UDP socket, because the two are similar in many places. AF_NETLINK corresponds to AF_INET: it is a protocol family. NETLINK_ROUTE and NETLINK_GENERIC are protocols, and they correspond to UDP.

Then we focus on the differences between Netlink and a UDP socket. The most important one: when sending a packet over a UDP socket, the user does not build the UDP header; the kernel protocol stack fills it in from the source and destination addresses (sockaddr_in). Netlink requires us to construct the message header ourselves (the use of this header is discussed later).

When using Netlink we normally have to specify a protocol. We can use NETLINK_GENERIC, which the kernel reserves for general use (defined in linux/netlink.h), or we can use a custom protocol, which simply means picking a protocol number that the kernel has not already taken. Below we use NETLINK_TEST as our own protocol to write an example (note: the custom protocol does not have to be added to linux/netlink.h, as long as both the user-space and the kernel code can find the definition). We know that UDP offers two ways to send messages, sendto and sendmsg. Netlink supports both as well. Let's first look at how to use sendmsg.

2. User mode data structure

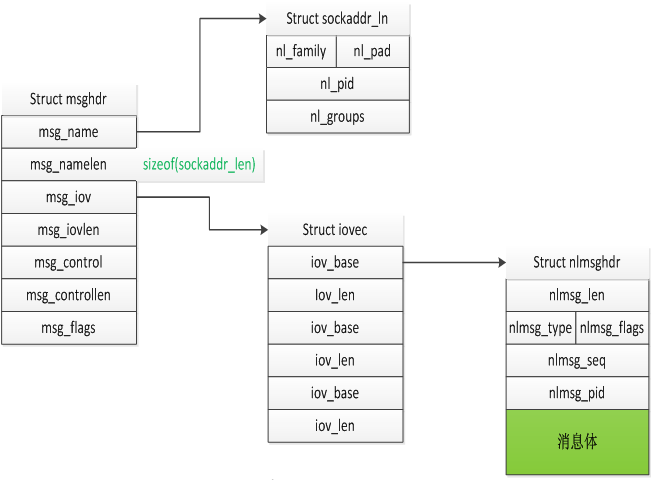

First, let’s take a look at the relationship between several important data structures:

2.1 struct msghdr

The msghdr structure is used in general socket programming. It is not specific to Netlink, so it is not explained in detail here; the following is only meant to make its purpose easier to understand. Socket messages are usually sent and received with these pairs of functions: recv/send, readv/writev, recvfrom/sendto and, of course, recvmsg/sendmsg. Each of the first three pairs has its own specialty, and recvmsg/sendmsg covers everything the first three pairs can do while adding special uses of its own. The first two members of msghdr (msg_name and msg_namelen) provide what recvfrom/sendto do, the middle two members msg_iov and msg_iovlen provide what readv/writev do, and the last member msg_flags corresponds to the flags argument of recv/send. The remaining msg_control and msg_controllen serve the features unique to recvmsg/sendmsg.
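The role of msg_iov and msg_iovlen is easier to see in a small experiment that has nothing to do with Netlink yet. The sketch below sends two separate buffers with a single sendmsg() call over an AF_UNIX socket pair and reads them back as one datagram with recvmsg(). The function name `gather_roundtrip` is invented for this illustration.

```c
#include <assert.h>
#include <string.h>
#include <unistd.h>
#include <sys/types.h>
#include <sys/socket.h>
#include <sys/uio.h>

/* Hypothetical helper: send part1 and part2 in one sendmsg() call and
 * receive them into out as a NUL-terminated string.
 * Returns the number of bytes received, or -1 on error. */
ssize_t gather_roundtrip(const char *part1, const char *part2,
                         char *out, size_t outlen)
{
    int sv[2];
    struct iovec out_iov[2], in_iov[1];
    struct msghdr out_msg, in_msg;
    ssize_t n;

    assert(part1 && part2 && out && outlen > 0);

    if (socketpair(AF_UNIX, SOCK_DGRAM, 0, sv) < 0)
        return -1;

    /* Two buffers, one message: this is the readv/writev part of msghdr. */
    out_iov[0].iov_base = (void *)part1;
    out_iov[0].iov_len = strlen(part1);
    out_iov[1].iov_base = (void *)part2;
    out_iov[1].iov_len = strlen(part2);

    memset(&out_msg, 0, sizeof(out_msg));
    out_msg.msg_iov = out_iov;
    out_msg.msg_iovlen = 2;
    /* msg_name stays NULL: a connected socket pair needs no destination. */

    if (sendmsg(sv[0], &out_msg, 0) < 0) {
        close(sv[0]);
        close(sv[1]);
        return -1;
    }

    in_iov[0].iov_base = out;
    in_iov[0].iov_len = outlen - 1;      /* leave room for the '\0' */
    memset(&in_msg, 0, sizeof(in_msg));
    in_msg.msg_iov = in_iov;
    in_msg.msg_iovlen = 1;

    n = recvmsg(sv[1], &in_msg, 0);
    if (n >= 0)
        out[n] = '\0';

    close(sv[0]);
    close(sv[1]);
    return n;
}
```

With a Netlink socket the same structure is used, except that msg_name points to a Netlink address and the single iovec entry points to the Netlink message header followed by its body.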

2.2Structsockaddr_ln

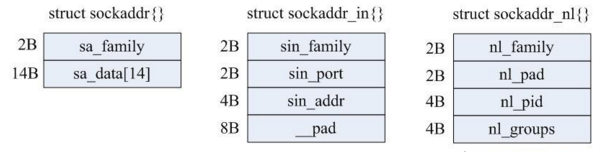

Structsockaddr_ln is the address of Netlink, which has the same function as sockaddr_in in our usual socket programming. Their structures are compared as follows.

The detailed definition and description of structsockaddr_nl{} is as follows:

struct sockaddr_nl

{

sa_family_t nl_family; /*该字段总是为AF_NETLINK */

unsigned short nl_pad; /* 目前未用到,填充为0*/

__u32 nl_pid; /* process pid */

__u32 nl_groups; /* multicast groups mask */

};

(1) nl_pid: In the Netlink specification, PID stands for Port-ID (32 bits). Its main purpose is to uniquely identify a Netlink socket endpoint. It is commonly set to the process ID of the current process, but it is only an identifier and any unused value works. As mentioned earlier, Netlink can carry not only user-kernel communication but also communication between two user-space processes, or between two parties in kernel space. A value of 0 generally refers to the kernel.

(2) nl_groups: If a user-space process wants to join a multicast group, it must call bind(). This field holds a bit mask of the multicast groups the caller wishes to join (note that it is a mask, not a group number; the field is explained in more detail later). If the field is 0, the caller does not want to join any multicast group. Each protocol in the Netlink protocol family can support up to 32 multicast groups this way (because nl_groups is 32 bits long), with one bit per group.
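The difference between a group number and the nl_groups mask is a common source of mistakes, so here is a small sketch. It assumes the usual convention that group numbers start at 1 and that group n occupies bit n-1 of the mask. The helper names `group_to_mask` and `fill_nl_addr` are invented for this illustration.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>
#include <sys/socket.h>
#include <linux/netlink.h>

/* Hypothetical helper: convert a 1-based group number to its mask bit. */
uint32_t group_to_mask(unsigned int group)
{
    assert(group >= 1 && group <= 32);
    return (uint32_t)1 << (group - 1);
}

/* Hypothetical helper: fill an address that joins the groups in
 * groups_mask once it is passed to bind(). */
void fill_nl_addr(struct sockaddr_nl *addr, uint32_t port_id,
                  uint32_t groups_mask)
{
    assert(addr != NULL);
    memset(addr, 0, sizeof(*addr));      /* also clears nl_pad */
    addr->nl_family = AF_NETLINK;
    addr->nl_pid = port_id;
    addr->nl_groups = groups_mask;
}
```

For example, joining groups 1 and 3 means passing `group_to_mask(1) | group_to_mask(3)`, which is 0x5, and not the value 3 or 4.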

2.3 struct nlmsghdr

A Netlink message consists of a message header and a message body, and struct nlmsghdr is the message header. It is defined in linux/netlink.h as follows:

    struct nlmsghdr
    {
        __u32 nlmsg_len;    /* length of the message, including the header */
        __u16 nlmsg_type;   /* message type */
        __u16 nlmsg_flags;  /* additional flags */
        __u32 nlmsg_seq;    /* sequence number */
        __u32 nlmsg_pid;    /* port ID of the sender */
    };

Explanation of the members of the message header:

(1) nlmsg_len: The length of the entire message in bytes, including the Netlink message header itself.

(2) nlmsg_type: The type of the message, that is, whether it carries data or is a control message. Currently (kernel version 2.6.21) Netlink defines only four control message types:

a) NLMSG_NOOP: an empty message; nothing is done.

b) NLMSG_ERROR: the message contains an error.

c) NLMSG_DONE: if the kernel returns several messages through the Netlink queue, the last message in the queue has type NLMSG_DONE, and all the other messages have the NLM_F_MULTI bit set in nlmsg_flags.

d) NLMSG_OVERRUN: not used yet.

(3) nlmsg_flags: Additional descriptive information attached to the message, such as the NLM_F_MULTI flag mentioned above.
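To show how the control types and NLM_F_MULTI fit together, the following sketch walks a buffer holding several messages and stops at NLMSG_DONE. NLMSG_OK and NLMSG_NEXT are the iteration macros from linux/netlink.h. The helpers `put_msg` and `count_until_done` are invented for this illustration, and the buffer is built in memory rather than received from the kernel.

```c
#include <assert.h>
#include <string.h>
#include <sys/socket.h>
#include <linux/netlink.h>

/* Hypothetical helper: append one header-only message to buf at offset
 * off and return the offset of the next message. */
size_t put_msg(char *buf, size_t off, unsigned short type,
               unsigned short flags)
{
    struct nlmsghdr nlh;

    memset(&nlh, 0, sizeof(nlh));
    nlh.nlmsg_len = NLMSG_LENGTH(0);     /* header only, no body */
    nlh.nlmsg_type = type;
    nlh.nlmsg_flags = flags;
    memcpy(buf + off, &nlh, sizeof(nlh));
    return off + NLMSG_SPACE(0);
}

/* Hypothetical helper: count the data messages in buf (which must be
 * suitably aligned for struct nlmsghdr), stopping at NLMSG_DONE.
 * Returns -1 if an NLMSG_ERROR message is found. */
int count_until_done(void *buf, int len)
{
    struct nlmsghdr *nlh;
    int count = 0;

    assert(buf != NULL && len >= 0);

    for (nlh = buf; NLMSG_OK(nlh, len); nlh = NLMSG_NEXT(nlh, len)) {
        if (nlh->nlmsg_type == NLMSG_DONE)
            break;                       /* end of a multi-part reply */
        if (nlh->nlmsg_type == NLMSG_ERROR)
            return -1;
        if (nlh->nlmsg_type == NLMSG_NOOP)
            continue;                    /* nothing to do */
        count++;
    }
    return count;
}
```

A buffer containing two data messages flagged NLM_F_MULTI followed by one NLMSG_DONE message makes `count_until_done` return 2.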

How is the message body set? The NLMSG_DATA macro returns a pointer to it; see the examples below for details.
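As a small preview, this sketch builds one message whose body is a text string. It relies on three macros from linux/netlink.h: NLMSG_LENGTH (header plus body), NLMSG_SPACE (the same, rounded up to the 4-byte alignment) and NLMSG_DATA (pointer to the body). The function name `build_text_msg` is invented for this illustration.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>
#include <sys/socket.h>
#include <linux/netlink.h>

/* Hypothetical helper: allocate a Netlink message whose body is text,
 * including its terminating '\0'. The caller frees the result.
 * Returns NULL if the allocation fails. */
struct nlmsghdr *build_text_msg(const char *text, unsigned int port_id)
{
    size_t payload;
    struct nlmsghdr *nlh;

    assert(text != NULL);
    payload = strlen(text) + 1;

    /* Allocate header + body + padding; calloc zeroes type, flags, seq. */
    nlh = calloc(1, NLMSG_SPACE(payload));
    if (!nlh)
        return NULL;

    nlh->nlmsg_len = NLMSG_LENGTH(payload);  /* length without padding */
    nlh->nlmsg_pid = port_id;                /* sender's port ID */
    memcpy(NLMSG_DATA(nlh), text, payload);  /* body follows the header */
    return nlh;
}
```

For the 10-character string "Hello you!" the body is 11 bytes with the terminator, so nlmsg_len is 16 + 11 = 27 while the allocation is rounded up to 28 bytes.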

3. User-space example 1

- Client 1

    #include <errno.h>
    #include <stdio.h>
    #include <stdlib.h>
    #include <string.h>
    #include <unistd.h>
    #include <sys/types.h>
    #include <sys/socket.h>
    #include <linux/netlink.h>

    #define MAX_PAYLOAD 1024   // maximum payload size
    #define NETLINK_TEST 25    // custom protocol number

    int main(int argc, char* argv[])
    {
        int state;
        struct sockaddr_nl src_addr, dest_addr;
        struct nlmsghdr *nlh = NULL;   // Netlink message header
        struct iovec iov;
        struct msghdr msg;
        int sock_fd, retval;
        int state_smg = 0;

        // Create a socket
        sock_fd = socket(AF_NETLINK, SOCK_RAW, NETLINK_TEST);
        if (sock_fd == -1) {
            printf("error getting socket: %s", strerror(errno));
            return -1;
        }

        // Prepare the source address for binding
        memset(&src_addr, 0, sizeof(src_addr));
        src_addr.nl_family = AF_NETLINK;
        src_addr.nl_pid = 100;      // A: set the source port ID
        src_addr.nl_groups = 0;

        // Bind
        retval = bind(sock_fd, (struct sockaddr*)&src_addr, sizeof(src_addr));
        if (retval < 0) {
            printf("bind failed: %s", strerror(errno));
            close(sock_fd);
            return -1;
        }

        // Prepare the message
        nlh = (struct nlmsghdr *)malloc(NLMSG_SPACE(MAX_PAYLOAD));
        if (!nlh) {
            printf("malloc nlmsghdr error!\n");
            close(sock_fd);
            return -1;
        }
        // Zero the buffer so nlmsg_type and nlmsg_seq do not hold stale bytes
        memset(nlh, 0, NLMSG_SPACE(MAX_PAYLOAD));

        memset(&dest_addr, 0, sizeof(dest_addr));
        dest_addr.nl_family = AF_NETLINK;
        dest_addr.nl_pid = 0;       // B: set the destination port ID
        dest_addr.nl_groups = 0;

        nlh->nlmsg_len = NLMSG_SPACE(MAX_PAYLOAD);
        nlh->nlmsg_pid = 100;       // C: set the source port ID
        nlh->nlmsg_flags = 0;
        strcpy(NLMSG_DATA(nlh), "Hello you!");   // set the message body

        iov.iov_base = (void *)nlh;
        iov.iov_len = NLMSG_SPACE(MAX_PAYLOAD);

        // Create the message
        memset(&msg, 0, sizeof(msg));
        msg.msg_name = (void *)&dest_addr;
        msg.msg_namelen = sizeof(dest_addr);
        msg.msg_iov = &iov;
        msg.msg_iovlen = 1;

        // Send the message
        printf("state_smg\n");
        state_smg = sendmsg(sock_fd, &msg, 0);
        if (state_smg == -1) {
            printf("get error sendmsg = %s\n", strerror(errno));
        }
        memset(nlh, 0, NLMSG_SPACE(MAX_PAYLOAD));

        // Receive messages
        printf("waiting received!\n");
        while (1) {
            printf("In while recvmsg\n");
            state = recvmsg(sock_fd, &msg, 0);
            if (state < 0) {
                printf("recvmsg failed: %s\n", strerror(errno));
                break;
            }
            printf("Received message: %s\n", (char *) NLMSG_DATA(nlh));
        }

        free(nlh);
        close(sock_fd);
        return 0;
    }

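The program above first sends one message to the kernel, "Hello you!", then enters a loop that keeps waiting for the kernel's replies and prints each reply it receives. If the program is hard to follow, first review how sendmsg is used with UDP, and in particular make sure the layout of struct msghdr is clear; it is not repeated here. The following mainly analyzes the differences from sending a UDP packet:

1. The socket address structure differs: UDP uses sockaddr_in, Netlink uses struct sockaddr_nl;

2. Compared with sending UDP data, Netlink has an extra message header structure, struct nlmsghdr, that we have to construct ourselves.

Note that the code comments marked A, B and C each set a pid. First, what is this pid? Many articles online describe the field as the process's pid, but that is just reading too much into the name. The pid here has nothing to do with the process pid; it is simply the equivalent of a UDP port. For UDP, a port plus an IP identifies an address; for our NETLINK_TEST protocol (note that Netlink itself is not a protocol), the pid alone uniquely identifies an address. So using the process pid as the identifier is of course fine too. Likewise, the same pid used with NETLINK_TEST does not conflict with other kernel-defined protocols that use Netlink (just like TCP port 80 and UDP port 80).

Now let's look at what each of the three pid settings does. A and B are the easier ones: they are set on the address (sockaddr_nl), which amounts to setting the source and destination addresses (really ports). Just note that B sets pid to 0; 0 stands for the kernel and can be thought of as the kernel's dedicated pid. Does that mean a user process cannot use 0 as its own pid? If you insist, you can, but it causes some problems that are analyzed later. Next, why does the message header at C still need a pid? The premise is that the kernel does not build the message header from our source and destination addresses the way UDP does, so if we do not write our own address (pid) into the header, how would the kernel-side handler that reads the header know who sent the message? Of course, if the kernel only processes the message and never needs to reply to the process, it does not matter whether the pid in the header is set.

So each pid setting serves a different purpose. A sets the sender's source address. Some will ask: since the source address is not filled into the message automatically, why set it at all? Because you may still want to receive replies. It is like mailing a letter: if you do not have a "house number", then even if you write your address as number 100 in the letter and the other side addresses the reply to number 100, the post office finds that no such address exists and cannot deliver it to you. So the main purpose of A is to register the source address so that replies can arrive; if no reply is needed, the pid can simply be set to 0. B is naturally the recipient's address, and pid 0 is the kernel's address. If another process has registered address 101 and called recvmsg, setting the pid at B to 101 sends the packet to that process, which is how Netlink is used for inter-process communication. C is the return address you write on the envelope. Normally it should match the real address registered at A, but if you do not want a reply and want to play a prank or have some special need, you can write the pid registered by another process (101, say). It works just like real mail: write a love letter to a friend, sign it with the name of another good buddy, and you can guess what happens next...

With this example we now have a rough idea of how to use Netlink from user space. nl_groups and the other fields we have not used are covered later when they come up. Next, let's see how the kernel handles Netlink.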

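4. Kernel Netlink API

4.1 Creating a netlink socket

    struct sock *netlink_kernel_create(struct net *net, int unit, unsigned int groups,
                                       void (*input)(struct sk_buff *skb),
                                       struct mutex *cb_mutex, struct module *module);

This is the six-argument form used by the 2.6-era kernels this article targets; newer kernels pass the settings in a struct netlink_kernel_cfg instead.

Parameters:

(1) net: a network namespace. Each namespace can have its own forwarding information base, its own set of net_device objects, and so on. By default the global variable init_net is used.

(2) unit: the netlink protocol type, such as NETLINK_TEST or NETLINK_SELINUX.

(3) groups: the multicast address.

(4) input: the netlink message handler defined by the kernel module. When a message arrives at this netlink socket, the function that input points to is called, and the caller's sendmsg can return only after this function returns.

(5) cb_mutex: the mutex used to protect access to the data.

(6) module: usually THIS_MODULE.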

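4.2 Sending a unicast message: netlink_unicast

    int netlink_unicast(struct sock *ssk, struct sk_buff *skb, u32 pid, int nonblock)

Parameters:

(1) ssk: the socket returned by netlink_kernel_create().

(2) skb: holds the message. Its data field points to the netlink message to send, and the skb control block stores the message's address information; the macro NETLINK_CB(skb) makes it convenient to set that control block.

(3) pid: the pid of the process that receives this message, i.e. the destination address. If the destination is a group or the kernel, it is set to 0.

(4) nonblock: whether the function is non-blocking. If it is 1, the function returns immediately when no receive buffer is available; if it is 0, the function sleeps with a timeout when no receive buffer is available.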

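4.3 Sending a broadcast message: netlink_broadcast

    int netlink_broadcast(struct sock *ssk, struct sk_buff *skb, u32 pid, u32 group, gfp_t allocation)

The first three parameters are the same as for netlink_unicast. The group parameter is the multicast group that receives the message; each bit of the parameter represents one multicast group, so to send to several groups, set it to the bitwise OR of the group IDs. The allocation parameter is the kernel memory allocation type, usually GFP_ATOMIC or GFP_KERNEL: GFP_ATOMIC is for atomic context (where sleeping is not allowed), and GFP_KERNEL is for non-atomic context.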

4.4 Releasing the netlink socket

    void sock_release(struct socket *sock);

5. Kernel-space example 1

    // Written against the 2.6-era API used in this article
    // (six-argument netlink_kernel_create, NETLINK_CB(skb).pid).
    #include <linux/init.h>
    #include <linux/module.h>
    #include <linux/kernel.h>
    #include <linux/types.h>
    #include <linux/completion.h>
    #include <net/sock.h>
    #include <net/netlink.h>

    #define NETLINK_TEST 25
    #define MAX_MSGSIZE 1024

    int stringlength(const char *s);

    struct sock *nl_sk = NULL;
    int flag = 0;

    // Send a message back to the user-space process
    void sendnlmsg(const char *message, int pid)
    {
        struct sk_buff *skb_1;
        struct nlmsghdr *nlh;
        int len = NLMSG_SPACE(MAX_MSGSIZE);
        int slen = 0;

        if (!message || !nl_sk) {
            return;
        }
        printk(KERN_ERR "pid:%d\n", pid);

        skb_1 = alloc_skb(len, GFP_KERNEL);
        if (!skb_1) {
            printk(KERN_ERR "my_net_link:alloc_skb error\n");
            return;   // never use a NULL skb
        }

        slen = stringlength(message);
        nlh = nlmsg_put(skb_1, 0, 0, 0, MAX_MSGSIZE, 0);
        NETLINK_CB(skb_1).pid = 0;         // the sender is the kernel
        NETLINK_CB(skb_1).dst_group = 0;   // unicast, no multicast group

        // Copy the string together with its terminating '\0'
        memcpy(NLMSG_DATA(nlh), message, slen + 1);
        printk("my_net_link:send message '%s'.\n", (char *)NLMSG_DATA(nlh));

        netlink_unicast(nl_sk, skb_1, pid, MSG_DONTWAIT);
    }

    int stringlength(const char *s)
    {
        int slen = 0;

        for (; *s; s++) {
            slen++;
        }
        return slen;
    }

    // Receive a message sent from user space
    void nl_data_ready(struct sk_buff *__skb)
    {
        struct sk_buff *skb;
        struct nlmsghdr *nlh;
        char str[100];
        struct completion cmpl;
        int i = 10;
        int pid;

        printk("begin data_ready\n");
        skb = skb_get(__skb);
        if (skb->len >= NLMSG_SPACE(0)) {
            nlh = nlmsg_hdr(skb);
            memcpy(str, NLMSG_DATA(nlh), sizeof(str));
            str[sizeof(str) - 1] = '\0';   // make sure the copy is terminated
            printk("Message received:%s\n", str);
            pid = nlh->nlmsg_pid;
            while (i--) {
                // Use a completion as a delay: reply to user space every 3 seconds
                init_completion(&cmpl);
                wait_for_completion_timeout(&cmpl, 3 * HZ);
                sendnlmsg("I am from kernel!", pid);
            }
            flag = 1;
        }
        kfree_skb(skb);   // drop the reference taken by skb_get()
    }

    // Initialize netlink
    int netlink_init(void)
    {
        nl_sk = netlink_kernel_create(&init_net, NETLINK_TEST, 1,
                                      nl_data_ready, NULL, THIS_MODULE);
        if (!nl_sk) {
            printk(KERN_ERR "my_net_link: create netlink socket error.\n");
            return -ENOMEM;   // module init must return a negative errno on failure
        }
        printk("my_net_link_4: create netlink socket ok.\n");
        return 0;
    }

    static void netlink_exit(void)
    {
        if (nl_sk != NULL) {
            sock_release(nl_sk->sk_socket);
        }
        printk("my_net_link: self module exited\n");
    }

    module_init(netlink_init);
    module_exit(netlink_exit);

    MODULE_AUTHOR("yilong");
    MODULE_LICENSE("GPL");

The Makefile for the kernel code is attached below (the recipe line under default: must be indented with a tab):

    ifneq ($(KERNELRELEASE),)
    obj-m := netl.o
    else
    KERNELDIR ?= /lib/modules/$(shell uname -r)/build
    PWD := $(shell pwd)
    default:
    	$(MAKE) -C $(KERNELDIR) M=$(PWD) modules
    endif

6.程序结构分析

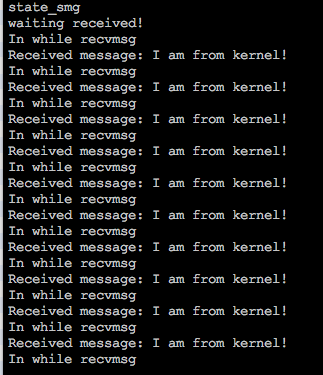

我们将内核模块insmod后,运行用户态程序,结果如下:

这个结果复合我们的预期,但是运行过程中打印出“state_smg”卡了好久才输出了后面的结果。这时候查看客户进程是处于D状态的(不了解D状态的同学可以google一下)。这是为什么呢?因为进程使用Netlink向内核发数据是同步,内核向进程发数据是异步。什么意思呢?也就是用户进程调用sendmsg发送消息后,内核会调用相应的接收函数,但是一定到这个接收函数执行完用户态的sendmsg才能够返回。我们在内核态的接收函数中调用了10次回发函数,每次都等待3秒钟,所以内核接收函数30秒后才返回,所以我们用户态程序的sendmsg也要等30秒后才返回。相反,内核回发的数据不用等待用户程序接收,这是因为内核所发的数据会暂时存放在一个队列中。

再来回到之前的一个问题,用户态程序的源地址(pid)可以用0吗?我把上面的用户程序的A和C处pid都改为了0,结果一运行就死机了。为什么呢?我们看一下内核代码的逻辑,收到用户消息后,根据消息中的pid发送回去,而pid为0,内核并不认为这是用户程序,认为是自身,所有又将回发的10个消息发给了自己(内核),这样就陷入了一个死循环,而用户态这时候进程一直处于D。

另外一个问题,如果同时启动两个用户进程会是什么情况?答案是再调用bind时出错:“Addressalreadyinuse”,这个同UDP一样,同一个地址同一个port如果没有设置SO_REUSEADDR两次bind就会出错,之后我用同样的方式再Netlink的socket上设置了SO_REUSEADDR,但是并没有什么效果。
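The bind conflict can be reproduced inside a single process, without the NETLINK_TEST kernel module, by binding two sockets of a kernel-provided protocol to the same port ID. This is a minimal sketch; the helper names `bind_netlink` and `second_bind_errno` are invented for this illustration, and using NETLINK_ROUTE in place of NETLINK_TEST is an assumption made only so that the code runs on a stock kernel.

```c
#include <assert.h>
#include <errno.h>
#include <string.h>
#include <unistd.h>
#include <sys/socket.h>
#include <linux/netlink.h>

/* Hypothetical helper: create a Netlink socket of the given protocol and
 * bind it to port_id. Returns the socket, or -1 with errno set. */
int bind_netlink(int protocol, unsigned int port_id)
{
    struct sockaddr_nl addr;
    int fd = socket(AF_NETLINK, SOCK_RAW, protocol);

    if (fd < 0)
        return -1;

    memset(&addr, 0, sizeof(addr));
    addr.nl_family = AF_NETLINK;
    addr.nl_pid = port_id;

    if (bind(fd, (struct sockaddr *)&addr, sizeof(addr)) < 0) {
        int saved = errno;       /* close() may overwrite errno */
        close(fd);
        errno = saved;
        return -1;
    }
    return fd;
}

/* Hypothetical helper: bind the same port ID twice. Returns the errno of
 * the second bind (0 if it unexpectedly succeeded), or -1 if even the
 * first bind failed. On Linux the expected value is EADDRINUSE. */
int second_bind_errno(int protocol, unsigned int port_id)
{
    int first, second, err = 0;

    assert(port_id != 0);        /* 0 would not request a fixed port ID */

    first = bind_netlink(protocol, port_id);
    if (first < 0)
        return -1;

    second = bind_netlink(protocol, port_id);
    if (second < 0)
        err = errno;
    else
        close(second);

    close(first);
    return err;
}
```

Calling `second_bind_errno(NETLINK_ROUTE, 100)` and passing the result to strerror() should print the same "Address already in use" text seen when the example client is started twice.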

7. User-space example 2

As mentioned earlier, UDP can use sendmsg/recvmsg or sendto/recvfrom, and Netlink can use sendto/recvfrom as well. The implementation is as follows:

    #include <errno.h>
    #include <stdio.h>
    #include <stdlib.h>
    #include <string.h>
    #include <unistd.h>
    #include <sys/types.h>
    #include <sys/socket.h>
    #include <linux/netlink.h>

    #define MAX_PAYLOAD 1024   // maximum payload size
    #define NETLINK_TEST 25

    int main(int argc, char* argv[])
    {
        struct sockaddr_nl src_addr, dest_addr;
        struct nlmsghdr *nlh = NULL;
        int sock_fd, retval;
        int state, state_smg = 0;

        // Create a socket
        sock_fd = socket(AF_NETLINK, SOCK_RAW, NETLINK_TEST);
        if (sock_fd == -1) {
            printf("error getting socket: %s", strerror(errno));
            return -1;
        }

        // Prepare the source address for binding
        memset(&src_addr, 0, sizeof(src_addr));
        src_addr.nl_family = AF_NETLINK;
        src_addr.nl_pid = 100;
        src_addr.nl_groups = 0;

        // Bind
        retval = bind(sock_fd, (struct sockaddr*)&src_addr, sizeof(src_addr));
        if (retval < 0) {
            printf("bind failed: %s", strerror(errno));
            close(sock_fd);
            return -1;
        }

        // Prepare the message header
        nlh = (struct nlmsghdr *)malloc(NLMSG_SPACE(MAX_PAYLOAD));
        if (!nlh) {
            printf("malloc nlmsghdr error!\n");
            close(sock_fd);
            return -1;
        }
        // Zero the buffer so nlmsg_type and nlmsg_seq do not hold stale bytes
        memset(nlh, 0, NLMSG_SPACE(MAX_PAYLOAD));

        memset(&dest_addr, 0, sizeof(dest_addr));
        dest_addr.nl_family = AF_NETLINK;
        dest_addr.nl_pid = 0;
        dest_addr.nl_groups = 0;

        nlh->nlmsg_len = NLMSG_SPACE(MAX_PAYLOAD);
        nlh->nlmsg_pid = 100;
        nlh->nlmsg_flags = 0;
        strcpy(NLMSG_DATA(nlh), "Hello you!");

        // Send the message
        printf("state_smg\n");
        state_smg = sendto(sock_fd, nlh, NLMSG_LENGTH(MAX_PAYLOAD), 0,
                           (struct sockaddr*)(&dest_addr), sizeof(dest_addr));
        if (state_smg == -1) {
            printf("get error sendto = %s\n", strerror(errno));
        }
        memset(nlh, 0, NLMSG_SPACE(MAX_PAYLOAD));

        // Receive messages
        printf("waiting received!\n");
        while (1) {
            printf("In while recvfrom\n");
            state = recvfrom(sock_fd, nlh, NLMSG_LENGTH(MAX_PAYLOAD), 0, NULL, NULL);
            if (state < 0) {
                printf("recvfrom failed: %s\n", strerror(errno));
                break;
            }
            printf("Received message: %s\n", (char *) NLMSG_DATA(nlh));
            memset(nlh, 0, NLMSG_SPACE(MAX_PAYLOAD));
        }

        free(nlh);
        close(sock_fd);
        return 0;
    }

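Anyone familiar with UDP programming will find this program very familiar, apart from the extra Netlink message header. Notice, though, that the program calls bind. For a UDP client this call is not required, because we do not need to associate the UDP socket with a particular address, and the kernel assigns a random port when the packet is sent. In this Netlink example the bind step is needed: the kernel module replies to the pid written in the message header and does not care whether that address exists, and the address exists only after the user side has called bind. (netlink(7) notes that when nl_pid is left as 0 the kernel picks a port ID for the socket itself, but the value in the header would then have to match whatever was assigned.) To repeat: the pid in the message header (nlmsghdr) tells the kernel which address the receiver should reply to.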
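A quick way to check which address a socket really owns after bind is getsockname(). The sketch below binds to a requested port ID and reads the bound value back, so it can be compared with what goes into nlmsg_pid. The function name `bound_port_id` is invented for this illustration, and NETLINK_ROUTE is again only an assumed stand-in for NETLINK_TEST so that no custom kernel module is needed.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>
#include <unistd.h>
#include <sys/socket.h>
#include <linux/netlink.h>

/* Hypothetical helper: bind a Netlink socket to the requested port ID
 * and report the port ID the socket ended up with.
 * Returns 0 and stores the value in *bound, or -1 on error. */
int bound_port_id(int protocol, uint32_t requested, uint32_t *bound)
{
    struct sockaddr_nl addr;
    socklen_t len = sizeof(addr);
    int fd, rc = -1;

    assert(bound != NULL);

    fd = socket(AF_NETLINK, SOCK_RAW, protocol);
    if (fd < 0)
        return -1;

    memset(&addr, 0, sizeof(addr));
    addr.nl_family = AF_NETLINK;
    addr.nl_pid = requested;

    if (bind(fd, (struct sockaddr *)&addr, sizeof(addr)) == 0 &&
        getsockname(fd, (struct sockaddr *)&addr, &len) == 0) {
        *bound = addr.nl_pid;    /* the address replies must be sent to */
        rc = 0;
    }

    close(fd);
    return rc;
}
```

In the example programs the value read back this way would be 100, the same number written into nlmsg_pid. netlink(7) also documents that requesting 0 lets the kernel choose the port ID; in that case the value read back is the one to put in the header.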

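One more aside: both examples are initiated from user space. Does Netlink also support exchanges initiated by the kernel? Of course. The kernel usually needs an event as a trigger; as long as an address (pid) has been agreed with user space beforehand, the kernel can call netlink_unicast directly.

Linux Netlink is a special socket type that allows two-way, asynchronous message passing between the kernel and user space. Netlink supports multiple protocol families, each handling a different topic such as routing, firewalling or device monitoring. Netlink also offers some advanced features, such as multicast, groups, sequence numbers and acknowledgements. It is a powerful and flexible communication mechanism that makes it easier for user-space applications to access and control the state and behavior of the kernel. This article introduced the basic concepts and usage of Netlink; hopefully it is helpful.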
