
Taking a step closer to complete autonomy: Tsinghua University and HKU's new cross-task self-evolution strategy lets agents learn to 'learn from experience'

"Take history as a mirror, and you can understand rise and fall." Human progress is itself a process of self-evolution: we keep drawing on past experience to push the limits of our abilities. We learn from past failures to correct mistakes, and from past successes to work more efficiently and effectively. This self-evolution runs through every part of life, from summarizing experience to solve problems at work to using patterns to predict the weather.

Successfully extracting knowledge from past experience and applying it to future challenges is an important milestone on the road to human evolution. So in the era of artificial intelligence, can AI agents do the same thing?

In recent years, language models such as GPT and LLaMA have shown impressive ability on complex tasks. However, although they can use tools to solve a specific task, they inherently do not retain insights or lessons from past successes and failures. They resemble a robot that performs well on the task in front of it but cannot draw on its past experience when it meets a new challenge. These models therefore need mechanisms for accumulating experience and applying it in new situations: with memory and learning mechanisms, an agent can respond more flexibly across tasks and take cues from what worked before, making language-model agents more capable and reliable.

To address this problem, a joint team from Tsinghua University, the University of Hong Kong, Renmin University of China and Wall-Facing Intelligence recently proposed a new self-evolution strategy for agents: Investigate-Consolidate-Exploit (ICE). ICE aims to make AI agents more adaptable and flexible through self-evolution across tasks. According to the authors, it not only improves the efficiency and effectiveness with which an agent handles new tasks, but also substantially lowers the capability required of the agent's base model.

The strategy opens a new direction for agent self-evolution and marks another step toward fully autonomous agents.

- Paper title: Investigate-Consolidate-Exploit: A General Strategy for Inter-Task Agent Self-Evolution

- Paper link: https://arxiv.org/abs/2401.13996

Overview of experience transfer between agent tasks to achieve self-evolution


Two aspects of agent self-evolution: planning and execution

The work of today's complex agents can be divided into two aspects: task planning and task execution. In task planning, the agent decomposes the user's request and uses logical reasoning to formulate detailed goals and strategies. In task execution, the agent uses various tools to interact with the environment and complete the corresponding subgoals.

To make past experience easier to reuse, the authors decouple the self-evolution strategy along these two aspects. Specifically, they use the tree-structured task planning and the ReAct-style chained tool execution of the XAgent agent architecture as examples to describe in detail how the ICE strategy is implemented.

ICE self-evolution strategy for agent task planning


For task planning, self-evolution proceeds through the three ICE stages:

- In the Investigate stage, the agent records the entire tree-structured task plan and, at the same time, dynamically tracks the execution status of each subgoal;

- In the Consolidate stage, the agent first removes all failed goal nodes; then, for each successfully completed goal, it arranges the leaf nodes of the subtree rooted at that goal in order to form a planning chain (workflow);

- In the Exploit stage, these workflows serve as references when decomposing and refining the goals of a new task, so that past successes are reused.
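The planning-side stages above can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions, not the authors' code or XAgent's API: `GoalNode`, `pruned_leaves`, `extract_workflows` and `retrieve` are hypothetical names, and the word-overlap (Jaccard) similarity used to match a new goal against stored workflows is an assumed stand-in for whatever retrieval the paper actually uses. In this sketch a failed node is dropped together with its subtree, and goals that collapse to a single step are not stored.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class GoalNode:
    """One (sub)goal in a tree-shaped plan; a hypothetical stand-in for XAgent's plan nodes."""
    name: str
    succeeded: bool = False  # execution status tracked in the Investigate stage
    children: list[GoalNode] = field(default_factory=list)


def pruned_leaves(node: GoalNode) -> list[str]:
    """Ordered leaves of the subtree rooted at `node`, after removing failed nodes."""
    if not node.succeeded:
        return []  # a failed node and everything beneath it are dropped
    chain: list[str] = []
    for child in node.children:  # left-to-right order keeps the planned order
        chain.extend(pruned_leaves(child))
    return chain or [node.name]  # no surviving children: the node is itself a leaf


def extract_workflows(root: GoalNode) -> dict[str, list[str]]:
    """Consolidate: map each successful, decomposed goal to its planning chain."""
    workflows: dict[str, list[str]] = {}

    def visit(node: GoalNode) -> None:
        if node.succeeded and node.children:
            chain = pruned_leaves(node)
            if chain != [node.name]:  # skip goals that collapse to a single step
                workflows[node.name] = chain
        for child in node.children:
            visit(child)

    visit(root)
    return workflows


def similarity(a: str, b: str) -> float:
    """Word-overlap (Jaccard) similarity; an assumed stand-in for real retrieval."""
    wa, wb = set(a.lower().split()), set(b.lower().split())
    union = wa | wb
    return len(wa & wb) / len(union) if union else 0.0


def retrieve(workflows: dict[str, list[str]], new_goal: str, k: int = 1) -> list[tuple[str, list[str]]]:
    """Exploit: return the k stored workflows whose goals best match `new_goal`."""
    ranked = sorted(workflows.items(), key=lambda kv: similarity(kv[0], new_goal), reverse=True)
    return ranked[:k]


# Example plan recorded during Investigate: the "draw charts" subgoal failed.
root = GoalNode("write a report on sales data", True, [
    GoalNode("collect sales data", True, [
        GoalNode("query database", True),
        GoalNode("export csv", True),
    ]),
    GoalNode("draw charts", False),
    GoalNode("write summary", True),
])

workflows = extract_workflows(root)
print(workflows)
print(retrieve(workflows, "write a report on marketing data"))
```

Here the failed "draw charts" subgoal is pruned, so the workflow stored for the report goal is "query database", "export csv", "write summary", and a new goal such as "write a report on marketing data" retrieves it as a reference for its own decomposition.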

ICE self-evolution strategy for agent task execution


The self-evolution strategy for task execution also follows the three ICE stages:

- In the Investigate stage, the agent dynamically records the chain of tool calls executed for each goal and performs simple detection and classification of problems that arise in those tool calls;

- In the Consolidate stage, the tool-call chain is converted into an automaton-like pipeline: the order of the tool calls and the transitions between them are fixed, repeated calls are removed, branch logic is added, and so on, making automated execution more robust;

- In the Exploit stage, for similar goals the agent runs the stored pipeline directly and automatically, which improves task completion efficiency.
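The execution-side consolidation can likewise be sketched in Python. Again this is a hypothetical illustration rather than the paper's implementation: `ToolCall`, `consolidate` and `Pipeline` are invented names, removing only immediately repeated identical calls is a simplified deduplication rule, and the "branch logic" is modeled as a per-tool fallback call that the pipeline switches to when a step raises an exception.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass(frozen=True)
class ToolCall:
    """One recorded tool invocation (hypothetical format)."""
    tool: str
    args: tuple = ()


def consolidate(chain: list[ToolCall]) -> list[ToolCall]:
    """Fix the call order and drop immediately repeated identical calls."""
    steps: list[ToolCall] = []
    for call in chain:
        if not steps or steps[-1] != call:
            steps.append(call)
    return steps


@dataclass
class Pipeline:
    """Automaton-like pipeline: each step moves to the next on success,
    or to its fallback branch on failure (the branch rule is an assumption)."""
    steps: list[ToolCall]
    fallbacks: dict[str, ToolCall] = field(default_factory=dict)

    def run(self, tools: dict[str, Callable[..., Any]]) -> list[Any]:
        results: list[Any] = []
        for step in self.steps:
            try:
                results.append(tools[step.tool](*step.args))
            except Exception:
                alt = self.fallbacks.get(step.tool)
                if alt is None:
                    raise  # no branch defined: re-raise so the caller can fall back to the model
                results.append(tools[alt.tool](*alt.args))
        return results


def read_pdf(doc_id: str) -> str:
    raise RuntimeError("PDF parser failed")  # simulate a tool failure


# Dummy tools standing in for real ones.
tools = {
    "search": lambda q: f"results for {q}",
    "read_pdf": read_pdf,
    "read_html": lambda doc_id: f"html text of {doc_id}",
    "summarize": lambda: "summary",
}

# Tool-call chain recorded during Investigate, including an accidental repeat.
recorded = [
    ToolCall("search", ("ICE agent",)),
    ToolCall("search", ("ICE agent",)),
    ToolCall("read_pdf", ("2401.13996",)),
    ToolCall("summarize"),
]

pipeline = Pipeline(consolidate(recorded), {"read_pdf": ToolCall("read_html", ("2401.13996",))})
print(pipeline.run(tools))
```

Running it skips the duplicate search and, when `read_pdf` fails, follows the fallback branch to `read_html` without consulting the model. A real pipeline would need richer transition conditions than exception-based branching, but a fixed call order with explicit transitions is how automated execution can save model calls on similar goals.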

Self-evolution experiment under the XAgent framework

The authors evaluated the ICE self-evolution strategy in the XAgent framework and report four findings:

- ICE significantly reduces the number of model calls, improving efficiency and reducing cost.

- Stored experience is reused at a high rate under ICE, which supports the strategy's effectiveness.

- ICE increases the subtask completion rate while reducing the number of plan repairs.

- With past experience available, the model capability needed for task execution drops considerably. Specifically, GPT-3.5 combined with prior task planning and execution experience performs comparably to GPT-4.

Test-set task performance under different agent and ICE strategy settings, after experience has been investigated and consolidated into storage


The authors also ran an ablation study asking whether the agent keeps improving as stored experience grows. It does: moving from no experience to half to all of the stored experience, the number of base-model calls decreases steadily while the subtask completion rate and the experience reuse rate both increase. More past experience therefore helps agent execution more, suggesting a scaling effect.

Ablation results: test-set task performance with different amounts of stored experience


Conclusion

Imagine a world where anyone can deploy intelligent agents. As individual agents carry out tasks, their successful experiences keep accumulating, and users can share those experiences through the cloud or the community. This shared experience would let agents keep acquiring new capabilities, evolve on their own, and gradually reach full autonomy. We are one step closer to such an era.

The above is the detailed content of Taking a step closer to complete autonomy, Tsinghua University and HKU's new cross-task self-evolution strategy allows agents to learn to 'learn from experience”. For more information, please follow other related articles on the PHP Chinese website!

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM

从VAE到扩散模型:一文解读以文生图新范式Apr 08, 2023 pm 08:41 PM1 前言在发布DALL·E的15个月后,OpenAI在今年春天带了续作DALL·E 2,以其更加惊艳的效果和丰富的可玩性迅速占领了各大AI社区的头条。近年来,随着生成对抗网络(GAN)、变分自编码器(VAE)、扩散模型(Diffusion models)的出现,深度学习已向世人展现其强大的图像生成能力;加上GPT-3、BERT等NLP模型的成功,人类正逐步打破文本和图像的信息界限。在DALL·E 2中,只需输入简单的文本(prompt),它就可以生成多张1024*1024的高清图像。这些图像甚至

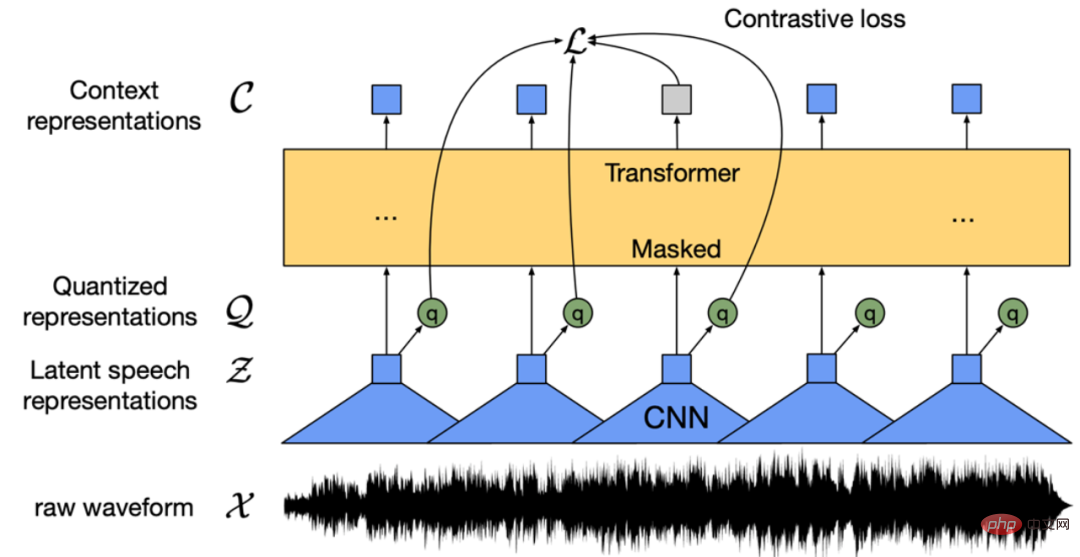

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM

普林斯顿陈丹琦:如何让「大模型」变小Apr 08, 2023 pm 04:01 PM“Making large models smaller”这是很多语言模型研究人员的学术追求,针对大模型昂贵的环境和训练成本,陈丹琦在智源大会青源学术年会上做了题为“Making large models smaller”的特邀报告。报告中重点提及了基于记忆增强的TRIME算法和基于粗细粒度联合剪枝和逐层蒸馏的CofiPruning算法。前者能够在不改变模型结构的基础上兼顾语言模型困惑度和检索速度方面的优势;而后者可以在保证下游任务准确度的同时实现更快的处理速度,具有更小的模型结构。陈丹琦 普

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM

解锁CNN和Transformer正确结合方法,字节跳动提出有效的下一代视觉TransformerApr 09, 2023 pm 02:01 PM由于复杂的注意力机制和模型设计,大多数现有的视觉 Transformer(ViT)在现实的工业部署场景中不能像卷积神经网络(CNN)那样高效地执行。这就带来了一个问题:视觉神经网络能否像 CNN 一样快速推断并像 ViT 一样强大?近期一些工作试图设计 CNN-Transformer 混合架构来解决这个问题,但这些工作的整体性能远不能令人满意。基于此,来自字节跳动的研究者提出了一种能在现实工业场景中有效部署的下一代视觉 Transformer——Next-ViT。从延迟 / 准确性权衡的角度看,

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM

Stable Diffusion XL 现已推出—有什么新功能,你知道吗?Apr 07, 2023 pm 11:21 PM3月27号,Stability AI的创始人兼首席执行官Emad Mostaque在一条推文中宣布,Stable Diffusion XL 现已可用于公开测试。以下是一些事项:“XL”不是这个新的AI模型的官方名称。一旦发布稳定性AI公司的官方公告,名称将会更改。与先前版本相比,图像质量有所提高与先前版本相比,图像生成速度大大加快。示例图像让我们看看新旧AI模型在结果上的差异。Prompt: Luxury sports car with aerodynamic curves, shot in a

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM

五年后AI所需算力超100万倍!十二家机构联合发表88页长文:「智能计算」是解药Apr 09, 2023 pm 07:01 PM人工智能就是一个「拼财力」的行业,如果没有高性能计算设备,别说开发基础模型,就连微调模型都做不到。但如果只靠拼硬件,单靠当前计算性能的发展速度,迟早有一天无法满足日益膨胀的需求,所以还需要配套的软件来协调统筹计算能力,这时候就需要用到「智能计算」技术。最近,来自之江实验室、中国工程院、国防科技大学、浙江大学等多达十二个国内外研究机构共同发表了一篇论文,首次对智能计算领域进行了全面的调研,涵盖了理论基础、智能与计算的技术融合、重要应用、挑战和未来前景。论文链接:https://spj.scien

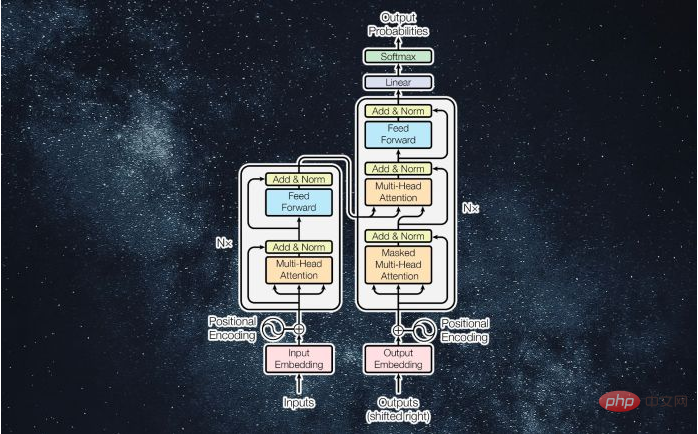

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM

什么是Transformer机器学习模型?Apr 08, 2023 pm 06:31 PM译者 | 李睿审校 | 孙淑娟近年来, Transformer 机器学习模型已经成为深度学习和深度神经网络技术进步的主要亮点之一。它主要用于自然语言处理中的高级应用。谷歌正在使用它来增强其搜索引擎结果。OpenAI 使用 Transformer 创建了著名的 GPT-2和 GPT-3模型。自从2017年首次亮相以来,Transformer 架构不断发展并扩展到多种不同的变体,从语言任务扩展到其他领域。它们已被用于时间序列预测。它们是 DeepMind 的蛋白质结构预测模型 AlphaFold

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM

AI模型告诉你,为啥巴西最可能在今年夺冠!曾精准预测前两届冠军Apr 09, 2023 pm 01:51 PM说起2010年南非世界杯的最大网红,一定非「章鱼保罗」莫属!这只位于德国海洋生物中心的神奇章鱼,不仅成功预测了德国队全部七场比赛的结果,还顺利地选出了最终的总冠军西班牙队。不幸的是,保罗已经永远地离开了我们,但它的「遗产」却在人们预测足球比赛结果的尝试中持续存在。在艾伦图灵研究所(The Alan Turing Institute),随着2022年卡塔尔世界杯的持续进行,三位研究员Nick Barlow、Jack Roberts和Ryan Chan决定用一种AI算法预测今年的冠军归属。预测模型图

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SublimeText3 Linux new version

SublimeText3 Linux latest version

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

WebStorm Mac version

Useful JavaScript development tools

SublimeText3 English version

Recommended: Win version, supports code prompts!