Understand the definition and functionality of embedding models

An embedding model is a machine learning model widely used in fields such as natural language processing (NLP) and computer vision (CV). Its main function is to map high-dimensional data into a low-dimensional embedding space while retaining the features and semantic information of the original data, which improves the efficiency and accuracy of downstream models. By learning the correlations within the data, an embedding model maps similar data points to nearby positions in the embedding space, so that downstream models can better understand and process the data. The principle rests on the idea of distributed representation: each data point is represented as a vector, and its semantic information is encoded in the vector space. The advantage is that the properties of the vector space become available; for example, the distance between two vectors can represent the similarity of the corresponding data. Common embedding algorithms include Word2Vec and GloVe, which in NLP map words into a vector space so that a model can better understand text. There are many types of embedding models in practical applications.

1. Background

In traditional machine learning, one-hot encoding is often used to convert discrete data (such as the words of a text) into binary vectors for processing. However, this approach has two main problems. First, the vector dimension equals the number of distinct items, so as the vocabulary grows the dimension grows with it, resulting in huge computing and storage costs; this is commonly called the curse of dimensionality. Second, every dimension is independent of the others, so the encoding cannot capture features or semantic information, nor can it reflect relationships between items: any two different one-hot vectors are equally far apart. To overcome these problems, researchers have proposed new processing methods such as word embeddings and convolutional neural networks. These methods capture richer features and semantic information in a low-dimensional space and can handle larger data sets, improving both the effectiveness and the efficiency of machine learning.

Embedding models address these problems directly. Such a model maps high-dimensional data into a low-dimensional embedding space and learns to place similar data points at nearby positions in that space. In this way, it captures feature and semantic information effectively, improving efficiency and accuracy.
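The two problems of one-hot encoding can be seen in a few lines of Python. This is only a sketch: the four-word vocabulary and the helper names `one_hot` and `dot` are made up for illustration.

```python
# Toy vocabulary; the words are illustrative only.
vocab = ["king", "queen", "apple", "banana"]

def one_hot(word, vocab):
    """Return a vector with a single 1 at the word's index."""
    vec = [0] * len(vocab)
    vec[vocab.index(word)] = 1
    return vec

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

king, queen, apple = (one_hot(w, vocab) for w in ("king", "queen", "apple"))

# Problem 1: the vector length equals the vocabulary size,
# so it grows with every new word.
print(len(king))         # 4

# Problem 2: any two different words are orthogonal,
# so the encoding carries no similarity signal at all.
print(dot(king, queen))  # 0
print(dot(king, apple))  # 0
```

With a realistic vocabulary of tens of thousands of words, each vector would have tens of thousands of entries, and "king" would still be no closer to "queen" than to "apple".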

2. Principle

The core idea of an embedding model is to map each data point to a low-dimensional embedding vector so that similar data points lie close to each other in the embedding space. An embedding vector is a vector of real numbers, usually with tens to hundreds of elements, which together encode features and semantic information. Unlike one-hot encoding, the elements of an embedding vector can take any real value. This representation better captures the similarities and correlations between data points, as well as the underlying structure hidden in the data.
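To make "close to each other in the embedding space" concrete, the sketch below compares vectors with cosine similarity, one common choice of similarity measure. The three-dimensional vectors are hand-picked for illustration; real embeddings are learned from data and have many more dimensions.

```python
import math

# Hand-picked toy vectors, NOT the output of any real model.
embeddings = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between a and b; 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

sim_cat_dog = cosine_similarity(embeddings["cat"], embeddings["dog"])
sim_cat_car = cosine_similarity(embeddings["cat"], embeddings["car"])

# Similar items score higher than dissimilar ones.
print(sim_cat_dog > sim_cat_car)  # True
```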

Embedding vectors are usually produced by training a neural network that consists of an input layer, a hidden layer, and an output layer. The input layer accepts the original high-dimensional data, such as text or images; the hidden layer converts it into embedding vectors; and the output layer maps the embedding vectors to the desired prediction, such as a text category or an image label.
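For discrete inputs such as words, the step from input layer to hidden layer can be read as a table lookup: multiplying a one-hot input by the hidden layer's weight matrix simply selects one row of that matrix. The sketch below checks this equivalence, assuming a linear hidden layer without bias; the vocabulary, the matrix `W`, and the helper names are hypothetical.

```python
# Hypothetical 4-word vocabulary and a 4x2 weight matrix (one row per word).
vocab = {"red": 0, "green": 1, "blue": 2, "loud": 3}
W = [
    [0.2, 0.7],
    [0.3, 0.6],
    [0.1, 0.8],
    [0.9, 0.1],
]

def one_hot(index, size):
    """One-hot row vector of the given size."""
    return [1.0 if i == index else 0.0 for i in range(size)]

def vec_times_matrix(x, W):
    """Multiply a row vector x (length n) by a matrix W (n x d)."""
    d = len(W[0])
    return [sum(x[i] * W[i][j] for i in range(len(W))) for j in range(d)]

idx = vocab["blue"]
via_matmul = vec_times_matrix(one_hot(idx, len(vocab)), W)  # input layer -> hidden layer
via_lookup = W[idx]                                         # direct row lookup

print(via_matmul == via_lookup)  # True
```

This is why deep learning libraries typically implement an embedding layer as an indexed lookup rather than an actual matrix multiplication: the result is the same, and the large one-hot vector never has to be built.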

Training an embedding model usually requires a large number of data samples; the goal is to optimize the embedding vectors by learning the similarities and differences between samples. During training, a loss function measures the gap between the model's predictions, which are computed from the embedding vectors, and the true values, and the backpropagation algorithm updates the model parameters to reduce that loss, so that the model captures feature and semantic information better.
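The training procedure can be sketched on a toy task. The example below assumes a setup that is not from the original text: pairs of items are labeled similar (1) or dissimilar (0), the prediction is the sigmoid of the dot product of the two embedding vectors, the loss is binary cross-entropy, and the gradient is written out by hand instead of using an automatic differentiation library. All names and data are illustrative.

```python
import math
import random

rng = random.Random(0)  # fixed seed so the run is reproducible

items = ["cat", "dog", "car", "bus"]
# (item_a, item_b, label): 1 = similar, 0 = dissimilar. Toy labels.
pairs = [
    ("cat", "dog", 1), ("car", "bus", 1),
    ("cat", "car", 0), ("cat", "bus", 0),
    ("dog", "car", 0), ("dog", "bus", 0),
]

dim = 2
emb = {w: [rng.uniform(-0.5, 0.5) for _ in range(dim)] for w in items}

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_loss():
    """Mean binary cross-entropy over all labeled pairs."""
    total = 0.0
    for a, b, y in pairs:
        p = sigmoid(dot(emb[a], emb[b]))
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(pairs)

loss_before = mean_loss()

lr = 0.1  # learning rate
for epoch in range(500):
    for a, b, y in pairs:
        u, v = emb[a], emb[b]
        # For sigmoid + cross-entropy, dLoss/d(dot) = prediction - label.
        grad = sigmoid(dot(u, v)) - y
        # Chain rule: d(dot)/du = v and d(dot)/dv = u.
        emb[a] = [ui - lr * grad * vi for ui, vi in zip(u, v)]
        emb[b] = [vi - lr * grad * ui for ui, vi in zip(u, v)]

loss_after = mean_loss()
print(loss_before, loss_after)
```

After training, the loss should be lower than before, and `dot(emb["cat"], emb["dog"])` should exceed `dot(emb["cat"], emb["car"])`: the similar pair has been pulled together and the dissimilar pair pushed apart.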

3. Application

Embedding models are widely used in natural language processing, computer vision, and other fields. Here are some common application scenarios:

Text classification: Use embedding models to convert text into embedding vectors for text classification tasks such as sentiment analysis and spam filtering.

Information retrieval: Use embedding models to convert queries and documents into embedding vectors in order to retrieve relevant documents, as in search engines.

Natural language generation: Use embedding models to convert text into embedding vectors, and generate new text with a generative model, as in machine translation and dialogue systems.

Image recognition: Use embedding models to convert images into embedding vectors, and classify the images with a classifier, as in face recognition and object recognition.

Recommendation systems: Use embedding models to convert users and items into embedding vectors in order to make personalized recommendations, as on e-commerce platforms and in music recommendation.
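As an illustration of the information retrieval scenario, the sketch below ranks documents by the cosine similarity between their vectors and a query vector. The document names and all vectors are invented for the example; in practice an embedding model would produce them from the actual text.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical document embeddings (toy values).
doc_vectors = {
    "doc_pasta_recipe": [0.9, 0.1, 0.0],
    "doc_pizza_recipe": [0.8, 0.2, 0.1],
    "doc_tax_guide":    [0.0, 0.1, 0.9],
}
# Stands in for the embedding of a query about cooking.
query_vector = [0.85, 0.15, 0.05]

def search(query, docs, top_k=2):
    """Return the names of the top_k documents most similar to the query."""
    scored = [(cosine(query, vec), name) for name, vec in docs.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]

results = search(query_vector, doc_vectors)
print(results)
```

The same pattern applies to recommendation: replace the query vector with a user vector and the document vectors with item vectors.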

4. Common types

There are many types of embedding models. Here are some common ones:

1. Word2Vec

Word2Vec is an embedding model widely used in natural language processing. It converts words into vector representations and captures their semantic information by learning the similarities and differences between words from the contexts in which they appear. The two common Word2Vec architectures are Skip-gram and CBOW (continuous bag of words).
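Skip-gram trains on (center word, context word) pairs taken from a sliding window over the text, while CBOW goes the other way and predicts the center word from its context words. The sketch below only generates the Skip-gram training pairs; it does not train a model, and the function name `skipgram_pairs` is made up for this example.

```python
def skipgram_pairs(tokens, window=2):
    """Return (center, context) pairs for all tokens within `window` positions."""
    pairs = []
    for i, center in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a word is not its own context
                pairs.append((center, tokens[j]))
    return pairs

sentence = "the quick brown fox".split()
pairs = skipgram_pairs(sentence, window=1)
print(pairs)
# [('the', 'quick'), ('quick', 'the'), ('quick', 'brown'),
#  ('brown', 'quick'), ('brown', 'fox'), ('fox', 'brown')]
```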

2. GloVe

GloVe (Global Vectors) is a word embedding model that converts words into vector representations and captures their semantic information by learning from word co-occurrence statistics. Its advantage is that it takes into account both the local context of words and the global statistics of the corpus, which improves the quality of the embedding vectors.
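The input to GloVe is a table of how often words appear near each other across the whole corpus. The sketch below builds such a table for a three-sentence toy corpus. It is a simplification: it counts every co-occurrence equally and treats pairs as unordered, and it stops before the actual GloVe step of fitting vectors to these counts. The function name and corpus are illustrative.

```python
from collections import Counter

def cooccurrence_counts(sentences, window=2):
    """Count unordered word pairs that appear within `window` positions."""
    counts = Counter()
    for tokens in sentences:
        for i, word in enumerate(tokens):
            for j in range(i + 1, min(len(tokens), i + window + 1)):
                pair = tuple(sorted((word, tokens[j])))  # order-independent key
                counts[pair] += 1
    return counts

corpus = [
    "ice is cold".split(),
    "steam is hot".split(),
    "ice is solid".split(),
]
counts = cooccurrence_counts(corpus, window=2)

print(counts[("ice", "is")])     # 2
print(counts[("is", "steam")])   # 1
print(counts[("ice", "steam")])  # 0
```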

3. FastText

FastText is a subword-level (character n-gram) embedding model that converts words and subwords into vector representations and captures the semantic information of words by learning the similarities and differences between words and subwords. Its advantage is the ability to handle out-of-vocabulary words and spelling errors.
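The robustness to unknown and misspelled words comes from splitting each word into character n-grams, with `<` and `>` marking the word boundaries, and building the word's vector from the vectors of its n-grams. The sketch below shows only the n-gram extraction; the function name `char_ngrams` is hypothetical, and details of the real library such as hashing n-grams into buckets are left out.

```python
def char_ngrams(word, n_min=3, n_max=6):
    """Return the character n-grams of a word wrapped in boundary markers."""
    wrapped = f"<{word}>"
    grams = []
    for n in range(n_min, n_max + 1):
        for i in range(len(wrapped) - n + 1):
            grams.append(wrapped[i:i + n])
    return grams

print(char_ngrams("where", n_min=3, n_max=3))
# ['<wh', 'whe', 'her', 'ere', 're>']

# A misspelling still shares most of its n-grams with the correct word,
# so its vector ends up close to the correct word's vector.
shared = set(char_ngrams("where", 3, 3)) & set(char_ngrams("wherre", 3, 3))
print(len(shared))  # 4
```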

4. DeepWalk

DeepWalk is a graph embedding model based on random walks that converts graph nodes into vector representations. By learning the similarities and differences between nodes, it captures the features and semantic information of the graph. Its advantage is that it can process large-scale graph data, such as social networks and knowledge graphs.
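The random-walk part of DeepWalk can be sketched as follows: from each node, repeatedly step to a uniformly chosen neighbor and record the visited nodes. The resulting walks are then treated like sentences and fed to a Skip-gram model, which is not shown here. The small graph, the function names, and the parameter values are illustrative.

```python
import random

# Small undirected graph as an adjacency list; node names are illustrative.
graph = {
    "a": ["b", "c"],
    "b": ["a", "c"],
    "c": ["a", "b", "d"],
    "d": ["c"],
}

def random_walk(graph, start, length, rng):
    """Walk `length` nodes from `start`, picking a uniform random neighbor each step."""
    walk = [start]
    while len(walk) < length:
        neighbors = graph[walk[-1]]
        if not neighbors:  # dead end: stop early
            break
        walk.append(rng.choice(neighbors))
    return walk

def generate_walks(graph, walks_per_node, length, seed=0):
    """Start `walks_per_node` walks from every node, in shuffled order."""
    rng = random.Random(seed)
    walks = []
    for _ in range(walks_per_node):
        nodes = list(graph)
        rng.shuffle(nodes)
        for node in nodes:
            walks.append(random_walk(graph, node, length, rng))
    return walks

walks = generate_walks(graph, walks_per_node=2, length=5)
print(len(walks))  # 8
print(walks[0])
```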

5. Autoencoder

An autoencoder is a common unsupervised embedding model that converts high-dimensional data into low-dimensional embedding vectors and optimizes them by minimizing the reconstruction error, that is, the difference between the original input and the input reconstructed from its embedding. Its advantage is that it learns the features and structure of the data automatically, and it can also handle nonlinear data distributions.
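The idea of minimizing reconstruction error can be shown with the smallest possible autoencoder. The sketch below makes several simplifying assumptions that are not from the original text: the model is linear, the encoder and decoder share one weight vector `w` (tied weights), the data is two-dimensional and lies on a line, and the gradient is derived by hand. A real autoencoder would use nonlinear layers and a deep learning library.

```python
# Toy 2-D data lying on the line y = 2x, so one latent dimension suffices.
data = [(t, 2.0 * t) for t in (-1.0, -0.5, 0.5, 1.0)]

w = [0.5, 0.1]  # tied weights shared by encoder and decoder (an assumption)

def encode(x, w):
    """Compress a 2-D point into a single number z = w . x."""
    return x[0] * w[0] + x[1] * w[1]

def decode(z, w):
    """Reconstruct a 2-D point from the code: x_hat = z * w."""
    return [z * w[0], z * w[1]]

def reconstruction_loss(data, w):
    """Mean squared distance between inputs and their reconstructions."""
    total = 0.0
    for x in data:
        x_hat = decode(encode(x, w), w)
        total += sum((xi - hi) ** 2 for xi, hi in zip(x, x_hat))
    return total / len(data)

loss_before = reconstruction_loss(data, w)

lr = 0.02  # learning rate
for epoch in range(200):
    for x in data:
        z = encode(x, w)
        x_hat = decode(z, w)
        r = [xi - hi for xi, hi in zip(x, x_hat)]  # residual x - x_hat
        r_dot_w = r[0] * w[0] + r[1] * w[1]
        # Gradient of ||x - (w . x) w||^2 with respect to w.
        grad = [-2.0 * (z * r[j] + r_dot_w * x[j]) for j in range(2)]
        w = [w[j] - lr * grad[j] for j in range(2)]

loss_after = reconstruction_loss(data, w)
print(loss_before, loss_after)
```

After training, `w` should point along the line the data lies on, so a single number per point is enough to reconstruct it almost exactly.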

In short, embedding models are an important machine learning technique that maps high-dimensional data into a low-dimensional embedding space while retaining the features and semantic information of the original data, thereby improving the efficiency and accuracy of the model. In practical applications, different types of embedding models have their own advantages and applicable scenarios, and should be selected according to the specific problem.


Newest Annual Compilation Of The Best Prompt Engineering TechniquesApr 10, 2025 am 11:22 AM

Newest Annual Compilation Of The Best Prompt Engineering TechniquesApr 10, 2025 am 11:22 AMFor those of you who might be new to my column, I broadly explore the latest advances in AI across the board, including topics such as embodied AI, AI reasoning, high-tech breakthroughs in AI, prompt engineering, training of AI, fielding of AI, AI re

Europe's AI Continent Action Plan: Gigafactories, Data Labs, And Green AIApr 10, 2025 am 11:21 AM

Europe's AI Continent Action Plan: Gigafactories, Data Labs, And Green AIApr 10, 2025 am 11:21 AMEurope's ambitious AI Continent Action Plan aims to establish the EU as a global leader in artificial intelligence. A key element is the creation of a network of AI gigafactories, each housing around 100,000 advanced AI chips – four times the capaci

Is Microsoft's Straightforward Agent Story Enough To Create More Fans?Apr 10, 2025 am 11:20 AM

Is Microsoft's Straightforward Agent Story Enough To Create More Fans?Apr 10, 2025 am 11:20 AMMicrosoft's Unified Approach to AI Agent Applications: A Clear Win for Businesses Microsoft's recent announcement regarding new AI agent capabilities impressed with its clear and unified presentation. Unlike many tech announcements bogged down in te

Selling AI Strategy To Employees: Shopify CEO's ManifestoApr 10, 2025 am 11:19 AM

Selling AI Strategy To Employees: Shopify CEO's ManifestoApr 10, 2025 am 11:19 AMShopify CEO Tobi Lütke's recent memo boldly declares AI proficiency a fundamental expectation for every employee, marking a significant cultural shift within the company. This isn't a fleeting trend; it's a new operational paradigm integrated into p

IBM Launches Z17 Mainframe With Full AI IntegrationApr 10, 2025 am 11:18 AM

IBM Launches Z17 Mainframe With Full AI IntegrationApr 10, 2025 am 11:18 AMIBM's z17 Mainframe: Integrating AI for Enhanced Business Operations Last month, at IBM's New York headquarters, I received a preview of the z17's capabilities. Building on the z16's success (launched in 2022 and demonstrating sustained revenue grow

5 ChatGPT Prompts To Stop Depending On Others And Trust Yourself FullyApr 10, 2025 am 11:17 AM

5 ChatGPT Prompts To Stop Depending On Others And Trust Yourself FullyApr 10, 2025 am 11:17 AMUnlock unshakeable confidence and eliminate the need for external validation! These five ChatGPT prompts will guide you towards complete self-reliance and a transformative shift in self-perception. Simply copy, paste, and customize the bracketed in

AI Is Dangerously Similar To Your MindApr 10, 2025 am 11:16 AM

AI Is Dangerously Similar To Your MindApr 10, 2025 am 11:16 AMA recent [study] by Anthropic, an artificial intelligence security and research company, begins to reveal the truth about these complex processes, showing a complexity that is disturbingly similar to our own cognitive domain. Natural intelligence and artificial intelligence may be more similar than we think. Snooping inside: Anthropic Interpretability Study The new findings from the research conducted by Anthropic represent significant advances in the field of mechanistic interpretability, which aims to reverse engineer internal computing of AI—not just observe what AI does, but understand how it does it at the artificial neuron level. Imagine trying to understand the brain by drawing which neurons fire when someone sees a specific object or thinks about a specific idea. A

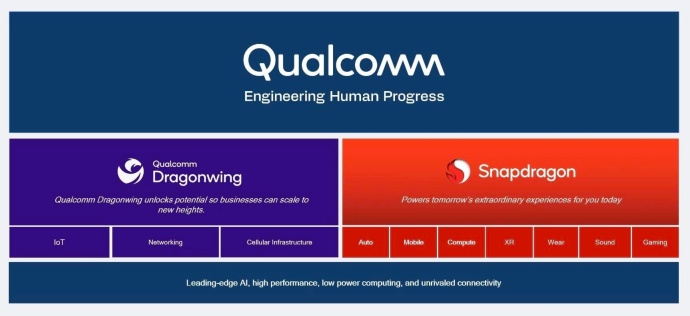

Dragonwing Showcases Qualcomm's Edge MomentumApr 10, 2025 am 11:14 AM

Dragonwing Showcases Qualcomm's Edge MomentumApr 10, 2025 am 11:14 AMQualcomm's Dragonwing: A Strategic Leap into Enterprise and Infrastructure Qualcomm is aggressively expanding its reach beyond mobile, targeting enterprise and infrastructure markets globally with its new Dragonwing brand. This isn't merely a rebran

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

SublimeText3 Mac version

God-level code editing software (SublimeText3)

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

SublimeText3 English version

Recommended: Win version, supports code prompts!

Dreamweaver CS6

Visual web development tools