Large language models (LLMs) and retrieval-augmented generation

Large language models (LLMs) are usually built on the Transformer architecture and trained on large amounts of text to improve their ability to understand and generate natural language. They are widely used in chatbots, text summarization, machine translation, and other areas. Well-known examples include OpenAI's GPT series and Google's BERT (an encoder model aimed at language understanding rather than free-form text generation).

In natural language processing, retrieval-augmented generation (RAG) is a technique that combines retrieval with generation. It retrieves relevant information from a large text corpus and then uses a generative model to recombine and rephrase that information into text that meets the task's requirements. The technique applies to text summarization, machine translation, dialogue generation, and other tasks. Because the generated text is grounded in retrieved material, RAG can improve the quality and accuracy of the output.

For LLMs, retrieval-augmented generation is regarded as an important way to improve model performance. By integrating retrieval with generation, an LLM can draw on relevant information from a large body of text at generation time and produce higher-quality output. This also helps with some limitations of purely generative models, such as weak consistency and relevance in the generated content. For these reasons, RAG has considerable potential and is expected to play an important role in future natural language processing research.

Steps for customizing an LLM for a specific use case with retrieval-augmented generation

To customize an LLM for a specific use case with retrieval-augmented generation, you can follow these steps:

1. Prepare data

Preparing a large amount of text data is the first key step. Two kinds of data are needed: training data, which is used to train the model, and retrieval data, which is the corpus that relevant information is retrieved from. Select text that matches the specific use case; it can be collected from the Internet, for example from relevant articles, news, and forum posts. Choosing the right data sources is crucial to training a high-quality model. To ensure the quality of the training data, preprocess and clean it first: remove noise, normalize text formats, and handle missing values. Cleaned data trains the model more effectively and improves its accuracy and performance.
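The cleaning step can be sketched in a few lines of Python. This is a minimal illustration rather than a complete pipeline: the function name `clean_documents` and the specific rules (strip leftover HTML tags, decode entities, collapse whitespace, drop empty and duplicate entries) are assumptions chosen for the example, not part of the original article.

```python
import html
import re

def clean_documents(raw_docs):
    """Return cleaned, de-duplicated texts from a list of raw strings (None allowed)."""
    cleaned = []
    seen = set()
    for doc in raw_docs:
        if doc is None:                           # handle missing values
            continue
        text = re.sub(r"<[^>]+>", " ", doc)       # remove leftover HTML tags (noise)
        text = html.unescape(text)                # decode entities such as &amp;
        text = re.sub(r"\s+", " ", text).strip()  # normalize whitespace
        if not text:                              # skip entries that are now empty
            continue
        key = text.lower()
        if key in seen:                           # drop case-insensitive duplicates
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned

raw = ["<p>Great   film!</p>", None, "great film!", "  ", "Slow &amp; dull."]
print(clean_documents(raw))
```

In a real project the rules would depend on the data source; forum posts, for instance, may also need quoted replies and signatures removed.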

2. Train the LLM

Start from an existing pretrained model, such as one from OpenAI's GPT series or Google's BERT, and train it on the prepared training data. During training, the model can be fine-tuned to improve its performance on the specific use case.

3. Build a retrieval system

Retrieval-augmented generation needs a retrieval system that can find relevant information in a large text corpus. Existing search engine techniques, such as keyword-based or content-based retrieval, can be used. More advanced deep learning techniques, such as Transformer-based retrieval models, can also be used to improve the results: by analyzing semantic and contextual information, they capture the intent of the user's query better and return more relevant results. With continued optimization and iteration, the retrieval system can efficiently find the information a user needs in a large corpus.
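As a rough illustration of keyword-based retrieval, the sketch below ranks passages by a simple TF-IDF overlap with the query, using only the Python standard library. The function names `tokenize` and `retrieve`, the smoothed IDF formula, and the sample corpus are assumptions made for this example; a production system would more likely rely on a search engine or a learned retriever.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def retrieve(query, corpus, top_k=2):
    """Rank corpus passages by a simple TF-IDF overlap score with the query."""
    doc_tokens = [Counter(tokenize(doc)) for doc in corpus]
    n_docs = len(corpus)
    query_terms = set(tokenize(query))
    scores = []
    for idx, counts in enumerate(doc_tokens):
        score = 0.0
        for term in query_terms:
            df = sum(1 for c in doc_tokens if term in c)  # document frequency
            if df == 0:
                continue
            idf = math.log((1 + n_docs) / (1 + df)) + 1.0  # smoothed IDF
            score += counts[term] * idf                    # term frequency * IDF
        scores.append((score, idx))
    # Highest score first; ties keep the original corpus order
    ranked = sorted(scores, key=lambda pair: (-pair[0], pair[1]))
    return [corpus[idx] for score, idx in ranked[:top_k] if score > 0]

corpus = [
    "A function in Python is defined with the def keyword.",
    "Elasticsearch stores documents in an index.",
    "Python lists are mutable sequences.",
]
print(retrieve("python function", corpus))
```

Passages that share no term with the query score zero and are left out, so the function can return fewer than `top_k` results.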

4. Combine the retrieval system and the LLM

Combine the retrieval system with the LLM to implement retrieval-augmented generation. First, the retrieval system retrieves relevant information from the large text corpus. Then, the LLM rearranges and combines this information to generate text that meets the requirements. This improves the accuracy and diversity of the generated text and serves users' needs better.
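One common way to combine the two parts is to place the retrieved passages in the prompt ahead of the user's question. The sketch below shows that wiring under stated assumptions: `build_prompt`, `answer`, the prompt wording, and the character budget are all hypothetical choices for illustration, and `fake_retrieve` and `fake_generate` are stand-ins so the example runs without a model or a search backend.

```python
def build_prompt(question, passages, max_chars=1000):
    """Place retrieved passages before the question, within a character budget."""
    context_lines = []
    used = 0
    for passage in passages:
        if used + len(passage) > max_chars:  # stop before exceeding the budget
            break
        context_lines.append("- " + passage)
        used += len(passage)
    context = "\n".join(context_lines)
    return (
        "Use the context below to answer the question.\n"
        "Context:\n" + context + "\n"
        "Question: " + question + "\n"
        "Answer:"
    )

def answer(question, retrieve_fn, generate_fn):
    """Retrieve first, then let the generator see question and context together."""
    passages = retrieve_fn(question)
    return generate_fn(build_prompt(question, passages))

# Hypothetical stand-ins for a real retriever and a real LLM call
def fake_retrieve(question):
    return ["A function is defined with the def keyword."]

def fake_generate(prompt):
    return "PROMPT LENGTH: " + str(len(prompt))

print(build_prompt("What is a function in Python?", fake_retrieve("")))
print(answer("What is a function in Python?", fake_retrieve, fake_generate))
```

Keeping retrieval and generation behind two plain callables makes it easy to swap either side, for example replacing the keyword retriever with a semantic one, without touching the prompt logic.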

5. Optimization and evaluation

To meet the needs of the specific use case, the customized LLM should be evaluated and then optimized based on the results. Model performance can be measured with metrics such as accuracy, recall, and F1 score. In addition, data from the actual application scenario can be used to test how practical the model is.
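For the retrieval component, recall and F1 can be computed per query from the set of retrieved passage IDs and the set of passages judged relevant. The sketch below is one way to do this; the function name `precision_recall_f1` and the sample IDs are assumptions for illustration. Precision is included because F1 is defined as the harmonic mean of precision and recall.

```python
def precision_recall_f1(retrieved_ids, relevant_ids):
    """Compute precision, recall, and F1 for one query from two ID collections."""
    retrieved = set(retrieved_ids)
    relevant = set(relevant_ids)
    hits = len(retrieved & relevant)  # relevant items that were actually retrieved
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Two of the four retrieved passages are relevant; one relevant passage is missed
p, r, f1 = precision_recall_f1(retrieved_ids=[1, 2, 3, 4], relevant_ids=[2, 4, 5])
print(p, r, round(f1, 3))
```

Averaging these values over a set of test queries gives a single score for comparing retrieval configurations.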

Example 1: An LLM for movie reviews

Suppose we want to customize an LLM for movie reviews: the user enters a movie title, and the model generates a review of the film.

First, we need to prepare the training data and the retrieval data. Movie review articles, news, forum posts, and similar text can be collected from the Internet for both.

Then, we can use a model from OpenAI's GPT series as the base LLM. During training, the model can be fine-tuned for the movie review task, for example by adjusting the vocabulary and the corpus.

Next, we can build a keyword-based retrieval system for retrieving relevant information from the large text corpus. In this example, the movie title serves as the keyword for retrieving relevant reviews from the training data and retrieval data.

Finally, we combine the retrieval system with the LLM to implement retrieval-augmented generation. Specifically, the retrieval system first retrieves reviews related to the movie title from the corpus, and the LLM then rearranges and combines these reviews to generate text that meets the requirements.

The following example code uses Python and the transformers library (with GPT-2) to implement the process above:

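<code>
import torch
from transformers import GPT2Tokenizer, GPT2LMHeadModel

# Prepare the training data and the retrieval data
# (left empty here; fill both lists with your own review texts)
train_data = []      # training data
retrieval_data = []  # retrieval data

# Load the pretrained LLM (fine-tuning on train_data is omitted in this sketch)
tokenizer = GPT2Tokenizer.from_pretrained('gpt2-large')
model = GPT2LMHeadModel.from_pretrained('gpt2-large')
model.eval()

movie_title = "movie title"

# Use the retrieval system to get related reviews
# (a plain keyword match on the movie title stands in for it here)
retrieved_comments = [c for c in retrieval_data if movie_title.lower() in c.lower()]

# Combine the retrieved reviews with the LLM to generate a review:
# the reviews go into the prompt, and the model continues from there
prompt = " ".join(retrieved_comments) + " Review of " + movie_title + ":"
input_ids = tokenizer.encode(prompt, return_tensors='pt', truncation=True, max_length=900)
with torch.no_grad():
    output_ids = model.generate(input_ids, max_new_tokens=100,
                                pad_token_id=tokenizer.eos_token_id)
generated_comment = tokenizer.decode(output_ids[0], skip_special_tokens=True)
print(generated_comment)
</code>

Example 2: Help users answer questions about programming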

First, we need a simple retrieval system, for example Elasticsearch. We can then write Python code that connects the LLM to Elasticsearch so that the retrieved results are added to the model's input. The following is a simple example:

<code>
# Import the required libraries
import torch
from transformers import GPT2LMHeadModel, GPT2Tokenizer
from elasticsearch import Elasticsearch

# Initialize the Elasticsearch client
# (the local URL is an assumption; point it at your own cluster)
es = Elasticsearch("http://localhost:9200")

# Load the GPT-2 model and tokenizer
tokenizer = GPT2Tokenizer.from_pretrained("gpt2")
model = GPT2LMHeadModel.from_pretrained("gpt2")

# Define a function that retrieves relevant information through Elasticsearch
def retrieve_information(query):
    # Run the query on Elasticsearch
    # This assumes we have an index named "knowledge_base"
    # (older clients take body={"query": {...}} instead of query=...)
    res = es.search(index="knowledge_base", query={"match": {"text": query}})
    # Return the query results
    return [hit['_source']['text'] for hit in res['hits']['hits']]

# Define a function that generates text using the retrieved information
def generate_text_with_retrieval(prompt):
    # Retrieve relevant information from Elasticsearch
    retrieved_info = retrieve_information(prompt)
    # Integrate the retrieved information into the input
    prompt += " " + " ".join(retrieved_info)
    # Encode the input as tokens, truncated to leave room for the generated tokens
    input_ids = tokenizer.encode(prompt, return_tensors="pt", truncation=True, max_length=900)
    # Generate text
    output = model.generate(input_ids, max_new_tokens=100, num_return_sequences=1,
                            no_repeat_ngram_size=2, pad_token_id=tokenizer.eos_token_id)
    # Decode the generated text
    generated_text = tokenizer.decode(output[0], skip_special_tokens=True)
    return generated_text

# Use case: generate an answer to a programming question
user_query = "What is a function in Python?"
generated_response = generate_text_with_retrieval(user_query)

# Print the generated answer
print(generated_response)
</code>

This Python example shows how to combine a GPT-2 model with Elasticsearch to implement retrieval-augmented generation. It assumes an index named "knowledge_base" that stores programming-related information. The retrieve_information function runs a simple Elasticsearch match query, and the generate_text_with_retrieval function integrates the retrieved information into the prompt and generates the answer with the GPT-2 model.

When a user asks a question about Python functions, the code retrieves relevant information from Elasticsearch, integrates it into the user's query, and then uses the GPT-2 model to generate an answer.

The above is the detailed content of LLM large language model and retrieval enhancement generation. For more information, please follow other related articles on the PHP Chinese website!

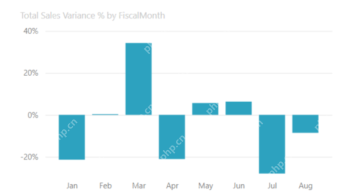

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PM

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PMHarnessing the Power of Data Visualization with Microsoft Power BI Charts In today's data-driven world, effectively communicating complex information to non-technical audiences is crucial. Data visualization bridges this gap, transforming raw data i

Expert Systems in AIApr 16, 2025 pm 12:00 PM

Expert Systems in AIApr 16, 2025 pm 12:00 PMExpert Systems: A Deep Dive into AI's Decision-Making Power Imagine having access to expert advice on anything, from medical diagnoses to financial planning. That's the power of expert systems in artificial intelligence. These systems mimic the pro

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AM

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AMFirst of all, it’s apparent that this is happening quickly. Various companies are talking about the proportions of their code that are currently written by AI, and these are increasing at a rapid clip. There’s a lot of job displacement already around

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AM

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AMThe film industry, alongside all creative sectors, from digital marketing to social media, stands at a technological crossroad. As artificial intelligence begins to reshape every aspect of visual storytelling and change the landscape of entertainment

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AM

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AMISRO's Free AI/ML Online Course: A Gateway to Geospatial Technology Innovation The Indian Space Research Organisation (ISRO), through its Indian Institute of Remote Sensing (IIRS), is offering a fantastic opportunity for students and professionals to

Local Search Algorithms in AIApr 16, 2025 am 11:40 AM

Local Search Algorithms in AIApr 16, 2025 am 11:40 AMLocal Search Algorithms: A Comprehensive Guide Planning a large-scale event requires efficient workload distribution. When traditional approaches fail, local search algorithms offer a powerful solution. This article explores hill climbing and simul

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AMThe release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AM

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AMChip giant Nvidia said on Monday it will start manufacturing AI supercomputers— machines that can process copious amounts of data and run complex algorithms— entirely within the U.S. for the first time. The announcement comes after President Trump si

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Atom editor mac version download

The most popular open source editor

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

Zend Studio 13.0.1

Powerful PHP integrated development environment

SublimeText3 English version

Recommended: Win version, supports code prompts!

Notepad++7.3.1

Easy-to-use and free code editor