Introduction to physics-informed neural networks

A physics-informed neural network (PINN) combines a physical model with a neural network. By building the governing physical equations into the training of the network, a PINN can learn the dynamic behavior of nonlinear systems. Compared with traditional methods based purely on physical models, PINNs are more flexible and scalable: they can adaptively learn complex nonlinear dynamical systems while respecting the constraints imposed by physical laws. This article introduces the basic principles of PINNs and gives some practical application examples.

The basic principle of a PINN is to build the governing physical equations into the neural network so that it learns the dynamic behavior of the system. Specifically, we can write the physical equation in the following form:

F(u(x),\frac{\partial u}{\partial x},x,t) =0

Our goal is to understand the behavior of the system by learning the time evolution of the state variable u(x) and the boundary conditions of the system. To achieve this, we use a neural network to approximate the state variable u(x) and use automatic differentiation to compute its derivatives with respect to the inputs. At the same time, we use the physical equation to constrain the output of the network. In this way, we can better understand how the state of the system evolves and predict future changes.
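To make the automatic differentiation step concrete, here is a minimal sketch of forward-mode differentiation with dual numbers in plain Python. The `Dual` class, the tiny network `u_net`, and its parameter values are all hypothetical and chosen only for illustration; in practice a deep learning framework supplies automatic differentiation.

```python
import math

class Dual:
    """A number value + deriv * eps with eps**2 == 0 (forward-mode autodiff)."""
    def __init__(self, value, deriv=0.0):
        self.value = value
        self.deriv = deriv

    def __add__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        return Dual(self.value + other.value, self.deriv + other.deriv)
    __radd__ = __add__

    def __mul__(self, other):
        other = other if isinstance(other, Dual) else Dual(other)
        # Product rule: (a * b)' = a * b' + a' * b
        return Dual(self.value * other.value,
                    self.value * other.deriv + self.deriv * other.value)
    __rmul__ = __mul__

def tanh(z):
    """tanh on a Dual, using d/dz tanh(z) = 1 - tanh(z)**2."""
    t = math.tanh(z.value)
    return Dual(t, (1.0 - t * t) * z.deriv)

def u_net(x, params):
    """Hypothetical one-hidden-layer network: u(x) = sum_k a_k * tanh(w_k * x + b_k)."""
    out = Dual(0.0)
    for w, b, a in params:
        out = out + a * tanh(w * x + b)
    return out

def u_and_dudx(x, params):
    """Evaluate u(x) and du/dx in one pass by seeding dx/dx = 1."""
    result = u_net(Dual(x, 1.0), params)
    return result.value, result.deriv

# Illustrative (w, b, a) triples, not trained values.
params = [(1.0, 0.0, 0.5), (-2.0, 0.3, 1.5)]
u, dudx = u_and_dudx(0.7, params)
```

The derivative comes out of the same evaluation that computes u(x), so no finite-difference step size has to be chosen.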

Specifically, we can use the following loss function to train PINN:

L_{pinn}=L_{data}+L_{physics}

where L_{data} is the data loss, which fits the network to the known values of the state variable. Generally, we can use the mean squared error to define L_{data}:

L_{data}=\frac{1}{N}\sum_{i=1}^{ N}(u_i-u_{data,i})^2

where N is the number of samples in the data set, u_i is the value of the state variable predicted by the neural network, and u_{data,i} is the corresponding true value in the data set.

L_{physics} is the physical constraint loss, which ensures that the prediction of the network satisfies the physical equation. In general, we can use the mean squared residual to define L_{physics}:
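As a quick illustration, the data loss above is an ordinary mean squared error. The sketch below uses a hypothetical function name and made-up sample values.

```python
def data_loss(u_pred, u_data):
    """Mean squared error between predicted and observed state values."""
    if len(u_pred) != len(u_data):
        raise ValueError("u_pred and u_data must have the same length")
    n = len(u_pred)
    return sum((p - d) ** 2 for p, d in zip(u_pred, u_data)) / n

# Made-up predictions and observations, for illustration only.
loss = data_loss([1.0, 2.0, 4.0], [1.0, 2.5, 3.0])
```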

L_{physics}=\frac{1}{N}\sum_{i=1}^{ N}(F(u_i,\frac{\partial u_i}{\partial x},x_i,t_i))^2

where F is the physical equation, \frac{\partial u_i}{\partial x} is the spatial derivative of the state variable predicted by the neural network, and x_i and t_i are the space and time coordinates of the i-th sample.

By minimizing L_{pinn}, we can fit the data and satisfy the physical equation at the same time, thereby learning the dynamic behavior of the system.
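The residual loss can be sketched in a few lines. The example below assumes a hypothetical model equation, du/dx + u = 0, purely for illustration, and passes the candidate solution and its derivative as plain functions; in a real PINN both would come from the network and automatic differentiation.

```python
import math

def physics_loss(residual_fn, u_fn, dudx_fn, points):
    """Mean squared residual of F(u, du/dx, x, t) over the sample points."""
    total = 0.0
    for x, t in points:
        r = residual_fn(u_fn(x, t), dudx_fn(x, t), x, t)
        total += r * r
    return total / len(points)

def decay_residual(u, dudx, x, t):
    """Hypothetical equation F = du/dx + u, which vanishes for u = exp(-x)."""
    return dudx + u

points = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0)]

# The exact solution gives zero loss.
exact = physics_loss(decay_residual,
                     lambda x, t: math.exp(-x),
                     lambda x, t: -math.exp(-x),
                     points)

# A poor candidate, u = 1 - x, leaves residual -x at each point.
linear = physics_loss(decay_residual,
                      lambda x, t: 1.0 - x,
                      lambda x, t: -1.0,
                      points)
```

A candidate that violates the equation is penalized even at points where no measurement exists, which is what lets the physics term act as a constraint.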
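The following toy sketch shows what minimizing the combined loss looks like end to end. It is not a full PINN: a one-parameter model u(x) = exp(-k x) stands in for the network, the equation du/dx + u = 0 is a hypothetical example, and the gradient is approximated by a central finite difference rather than automatic differentiation. All names and hyperparameters are assumptions.

```python
import math

# Hypothetical toy problem: du/dx + u = 0 on [0, 1], whose solution is exp(-x).
XS = [0.0, 0.25, 0.5, 0.75, 1.0]
U_DATA = [math.exp(-x) for x in XS]  # synthetic "measurements"

def pinn_loss(k):
    """L_pinn = L_data + L_physics for the one-parameter model u(x) = exp(-k x)."""
    n = len(XS)
    data = sum((math.exp(-k * x) - d) ** 2 for x, d in zip(XS, U_DATA)) / n
    # Residual F = du/dx + u = (1 - k) * exp(-k x), since du/dx = -k * exp(-k x).
    physics = sum(((1.0 - k) * math.exp(-k * x)) ** 2 for x in XS) / n
    return data + physics

def train(k=0.2, lr=0.5, steps=200, h=1e-6):
    """Plain gradient descent on k, with a central finite-difference gradient."""
    for _ in range(steps):
        grad = (pinn_loss(k + h) - pinn_loss(k - h)) / (2.0 * h)
        k -= lr * grad
    return k

k_fit = train()
```

Both loss terms are minimized at k = 1, so the fitted parameter approaches the value that matches the data and satisfies the equation.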

Now let's look at some practical PINN examples. One typical example is learning the dynamics governed by the Navier-Stokes equations. The Navier-Stokes equations describe the motion of a fluid and can be written in the following form:

\rho(\frac{\partial u}{\partial t}+u\cdot\nabla u)=-\nabla p+\mu\nabla^2u+f

where \rho is the density of the fluid, u is the velocity of the fluid, p is the pressure of the fluid, \mu is the dynamic viscosity, and f is the external force. Our goal is to learn the time evolution of the velocity and pressure of the fluid, as well as the boundary conditions at the fluid boundaries.

To achieve this goal, we can build the Navier-Stokes equations into the loss of the neural network so that it learns the time evolution of velocity and pressure. Specifically, we can use the following loss function to train the PINN:

L_{pinn}=L_{data}+L_{physics}

The definitions of L_{data} and L_{physics} are the same as before. We can use a fluid dynamics model to generate a set of state variable data including velocity and pressure, and then train the PINN to fit these data while satisfying the Navier-Stokes equations. In this way, we can learn the dynamic behavior of the fluid, including phenomena such as turbulence, vortices, and boundary layers, without first building a complex physical model or deriving an analytical solution by hand.
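For the Navier-Stokes case, the residual that enters L_{physics} can be written out by moving every term of the momentum equation to one side. The label F_{NS} is introduced here only for illustration. Because the velocity is a vector, the squared residual becomes a squared norm:

```latex
F_{NS}(x,t)=\rho\left(\frac{\partial u}{\partial t}+u\cdot\nabla u\right)+\nabla p-\mu\nabla^2u-f

L_{physics}=\frac{1}{N}\sum_{i=1}^{N}\left\|F_{NS}(x_i,t_i)\right\|^2
```

For incompressible flow, a continuity residual \nabla\cdot u is commonly added as a further penalty; that term is not part of the equation given above.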

Another example is learning the dynamics of a nonlinear wave equation. The nonlinear wave equation describes how waves propagate in a medium and can be written in the following form:

\frac{\partial^2u}{\partial t^2}-c^2\nabla^2u+f(u)=0

where u is the wave amplitude, c is the wave speed, and f(u) is the nonlinear term. Our goal is to learn the time evolution of the wave and the boundary conditions at the boundaries of the medium.

To achieve this goal, we can build the nonlinear wave equation into the loss of the neural network so that it learns the time evolution of the wave. Specifically, we can use the following loss function to train the PINN:

L_{pinn}=L_{data}+L_{physics}

The definitions of L_{data} and L_{physics} are the same as before. We can use numerical methods to generate a set of state variable data containing amplitudes and phases, and then train the PINN to fit these data while satisfying the nonlinear wave equation. In this way, we can study the time evolution of waves in a medium, including phenomena such as changes in the shape of wave packets, refraction, and reflection, without first defining a complex physical model or deriving an analytical solution by hand.
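The wave-equation residual can be checked numerically on a known solution. The sketch below assumes one space dimension, so the Laplacian reduces to the second derivative in x, and it uses central finite differences in place of automatic differentiation to stay dependency-free. The function names, the travelling-wave test function, and the cubic nonlinearity are all illustrative assumptions.

```python
import math

def second_derivative(g, z, h=1e-3):
    """Central finite-difference approximation of g''(z)."""
    return (g(z + h) - 2.0 * g(z) + g(z - h)) / (h * h)

def wave_residual(u, x, t, c, f):
    """Residual u_tt - c^2 * u_xx + f(u) of the 1D nonlinear wave equation at (x, t)."""
    u_tt = second_derivative(lambda s: u(x, s), t)
    u_xx = second_derivative(lambda s: u(s, t), x)
    return u_tt - c * c * u_xx + f(u(x, t))

C = 2.0

def travelling(x, t):
    """sin(x - c t) solves the linear wave equation, i.e. the case f = 0."""
    return math.sin(x - C * t)

# With f = 0 the residual vanishes up to discretization error.
linear_res = wave_residual(travelling, 0.3, 0.1, C, lambda v: 0.0)

# With a hypothetical cubic term the same function leaves residual u**3.
cubic_res = wave_residual(travelling, 0.3, 0.1, C, lambda v: v ** 3)
```

Squaring and averaging this residual over sample points gives L_{physics} for the wave problem, in the same way as the general definition earlier.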

In short, a physics-informed neural network combines physical models with neural networks, and can adaptively learn complex nonlinear dynamical systems while remaining consistent with physical laws. PINNs have been widely used in fluid mechanics, acoustics, structural mechanics, and other fields, and have achieved some notable results. In the future, as neural networks and automatic differentiation continue to develop, PINNs may become a more powerful and versatile tool for solving a wide range of nonlinear dynamics problems.

The above is the detailed content of Introduction to neural networks driven by physical information. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

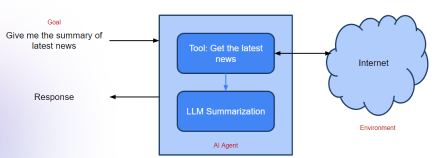

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

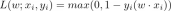

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

SublimeText3 Linux new version

SublimeText3 Linux latest version

SublimeText3 Chinese version

Chinese version, very easy to use