The difference between large language models and word embedding models

Large language models and word embedding models are two key concepts in natural language processing. Both can be applied to text analysis and generation, but their principles and application scenarios differ. Large language models are deep neural networks trained to estimate the probability distribution of text, which makes them suitable for generating continuous text and for semantic understanding. Word embedding models map words to vectors in a vector space, capturing the semantic relationships between words, and are suitable for word-meaning inference and text classification.

1. Word embedding model

A word embedding model processes text by mapping words into a low-dimensional vector space. Converting words into vectors lets computers understand and process text more effectively. Commonly used word embedding models include Word2Vec and GloVe, which are widely used in natural language processing tasks such as text classification, sentiment analysis, and machine translation. By capturing the semantic and grammatical relationships between words, they give computers richer semantic information and thereby improve the effectiveness of text processing.
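To make "mapping words into a vector space" concrete, here is a minimal sketch that treats an embedding model as a lookup table from words to vectors and measures how close two words are with cosine similarity. The table `EMBEDDINGS` and its numbers are hypothetical, hand-picked for illustration rather than learned; a trained Word2Vec or GloVe model would supply real vectors, usually with a few hundred dimensions.

```python
import math

# Hypothetical 4-dimensional embedding table, hand-picked for illustration only.
# Real Word2Vec/GloVe vectors are learned from a corpus.
EMBEDDINGS: dict[str, list[float]] = {
    "king":  [0.8, 0.6, 0.1, 0.2],
    "queen": [0.7, 0.7, 0.1, 0.3],
    "apple": [0.1, 0.0, 0.9, 0.4],
}


def embed(word: str) -> list[float]:
    """Map a word to its vector: for a static embedding model this is a table lookup."""
    return EMBEDDINGS[word]


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means they point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)
```

With these toy values, `cosine_similarity(embed("king"), embed("queen"))` is about 0.99 while `cosine_similarity(embed("king"), embed("apple"))` is about 0.25: the related words point in nearly the same direction, which is the property a trained embedding model aims to learn.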

1. Word2Vec

Word2Vec is a neural-network-based word embedding model that represents words as continuous vectors. It offers two commonly used architectures: CBOW (continuous bag-of-words) and Skip-gram. CBOW predicts a target word from its context words, while Skip-gram predicts the context words from a target word. The core idea of Word2Vec is that words appearing in similar contexts have similar meanings, so it learns the similarity between words from their distribution in context. After training on a large amount of text, Word2Vec produces a dense vector for each word, in which semantically similar words lie close together in the vector space. These embeddings are widely used in natural language processing tasks such as text classification, sentiment analysis, and machine translation.
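The difference between CBOW and Skip-gram is easiest to see in the training examples each one builds from a sentence. The sketch below only generates those examples from a fixed context window; it does not train the network. The function names and the fixed `window` parameter are illustrative assumptions, and real Word2Vec training adds further techniques such as negative sampling and subsampling of frequent words.

```python
def skipgram_pairs(tokens: list[str], window: int) -> list[tuple[str, str]]:
    """Skip-gram: each target word is paired with every context word within `window`."""
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((target, tokens[j]))  # (input word, word to predict)
    return pairs


def cbow_examples(tokens: list[str], window: int) -> list[tuple[list[str], str]]:
    """CBOW: the context words within `window` jointly predict the target word."""
    examples = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(lo, hi) if j != i]
        examples.append((context, target))  # (input words, word to predict)
    return examples
```

For `tokens = ["the", "cat", "sat", "on", "the", "mat"]` and `window=1`, Skip-gram yields ten pairs beginning `("the", "cat"), ("cat", "the"), ("cat", "sat")`, while CBOW turns position 2 into the single example `(["cat", "on"], "sat")`. Skip-gram therefore produces more, smaller examples per sentence, and CBOW averages the context into one prediction.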

2. GloVe

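GloVe (Global Vectors) is a word embedding model based on matrix factorization. It slides a local context window over the whole corpus to build a global word-word co-occurrence matrix, then learns word vectors whose dot products approximate the logarithm of the co-occurrence counts, which amounts to a weighted factorization of that matrix. The advantages of GloVe are that it can handle large-scale corpora and that, because it trains directly on aggregated co-occurrence statistics, it does not need the random sampling of training examples (such as negative sampling) that Word2Vec relies on.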
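A minimal sketch of the two ingredients GloVe combines: a co-occurrence count table built from local windows, and a weighted least-squares loss over its nonzero entries, J = sum over (i, j) of f(X_ij) * (w_i . w~_j + b_i + b~_j - log X_ij)^2. The 1/d distance weighting and the weighting function with x_max = 100 and alpha = 0.75 follow the original GloVe paper; the function names and dictionary-based storage are illustrative assumptions, and the optimizer that would minimize the loss is omitted.

```python
import math
from collections import defaultdict


def cooccurrence_counts(tokens: list[str], window: int) -> dict[tuple[str, str], float]:
    """Symmetric co-occurrence counts X_ij; a pair d words apart contributes 1/d."""
    counts: dict[tuple[str, str], float] = defaultdict(float)
    for i, center in enumerate(tokens):
        for d in range(1, window + 1):
            j = i + d
            if j >= len(tokens):
                break
            counts[(center, tokens[j])] += 1.0 / d
            counts[(tokens[j], center)] += 1.0 / d
    return dict(counts)


def weight(x: float, x_max: float = 100.0, alpha: float = 0.75) -> float:
    """GloVe weighting f(x): down-weights rare pairs and caps frequent pairs at 1."""
    return (x / x_max) ** alpha if x < x_max else 1.0


def glove_loss(
    counts: dict[tuple[str, str], float],
    w: dict[str, list[float]],
    w_ctx: dict[str, list[float]],
    b: dict[str, float],
    b_ctx: dict[str, float],
) -> float:
    """Weighted squared error between w_i . w~_j + b_i + b~_j and log X_ij."""
    total = 0.0
    for (i, j), x in counts.items():  # only nonzero entries are stored
        dot = sum(a * c for a, c in zip(w[i], w_ctx[j]))
        diff = dot + b[i] + b_ctx[j] - math.log(x)
        total += weight(x) * diff * diff
    return total
```

Because the loss only visits entries that actually occur in the table, no negative examples have to be sampled. Training would minimize `glove_loss` with a gradient-based optimizer (the original paper used AdaGrad) and typically use the sum `w + w_ctx` as the final word vector.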

2. Large-scale language model

A large language model is a neural-network-based natural language processing model that learns the probability distribution of language from a large-scale corpus in order to understand and generate natural language. Large language models can be used for various text tasks, such as language modeling, text classification, and machine translation.
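"Learning the probability distribution of language" means assigning a probability to any word sequence, usually factored with the chain rule as P(w_1, ..., w_n) = P(w_1) P(w_2 | w_1) ... P(w_n | w_1, ..., w_{n-1}). A large language model computes each conditional with a neural network over the whole prefix. The sketch below swaps in a much simpler count-based bigram model (each word conditioned only on the previous one) purely to show the factorization; it is not how large language models are built, and the `<s>`/`</s>` boundary markers and function names are illustrative assumptions.

```python
import math
from collections import Counter


def train_bigram(corpus: list[list[str]]) -> dict[str, dict[str, float]]:
    """Estimate P(next | previous) from counts; <s> and </s> mark sentence boundaries."""
    pair_counts: Counter = Counter()
    prev_counts: Counter = Counter()
    for sentence in corpus:
        padded = ["<s>"] + sentence + ["</s>"]
        for prev, nxt in zip(padded, padded[1:]):
            pair_counts[(prev, nxt)] += 1
            prev_counts[prev] += 1
    probs: dict[str, dict[str, float]] = {}
    for (prev, nxt), c in pair_counts.items():
        probs.setdefault(prev, {})[nxt] = c / prev_counts[prev]
    return probs


def sentence_log_prob(model: dict[str, dict[str, float]], sentence: list[str]) -> float:
    """Chain rule under the bigram approximation: sum of log P(w_t | w_{t-1})."""
    padded = ["<s>"] + sentence + ["</s>"]
    total = 0.0
    for prev, nxt in zip(padded, padded[1:]):
        p = model.get(prev, {}).get(nxt, 0.0)
        if p == 0.0:
            return float("-inf")  # unseen transition; this sketch applies no smoothing
        total += math.log(p)
    return total
```

Trained on the two sentences "the cat sat" and "the dog sat", the model gives "the cat sat" probability 0.5 (the only uncertain step is choosing "cat" after "the"), while "the cat dog" gets probability zero because that transition was never observed. A neural language model replaces the count table with a network that generalizes to unseen sequences.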

1. GPT

GPT is a large language model based on the Transformer. It learns the probability distribution of language through pre-training and can generate high-quality natural language text. Its training is divided into two stages: unsupervised pre-training and supervised fine-tuning. In the unsupervised pre-training stage, GPT learns the probability distribution of language from a large-scale text corpus by predicting each next word from the words that precede it; in the supervised fine-tuning stage, GPT uses labeled data to optimize the model's parameters for the requirements of specific tasks.
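Predicting each word only from the words before it is enforced inside the Transformer by a causal mask in self-attention: position t may attend to positions 0..t but never to later ones. The sketch below shows scaled dot-product attention with an optional causal mask in plain Python. It is a simplification under stated assumptions: a single attention head, and the inputs used directly as queries, keys, and values instead of passing through the learned projection matrices a real Transformer applies.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax; entries of -inf receive probability 0."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def attention_weights(queries: list[list[float]], keys: list[list[float]], causal: bool) -> list[list[float]]:
    """Scaled dot-product attention weights; with causal=True, position t sees only positions <= t."""
    d = len(queries[0])
    weights = []
    for t, q in enumerate(queries):
        scores = []
        for s, k in enumerate(keys):
            if causal and s > t:
                scores.append(float("-inf"))  # mask out future tokens
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
        weights.append(softmax(scores))
    return weights


def attend(queries: list[list[float]], keys: list[list[float]], values: list[list[float]], causal: bool) -> list[list[float]]:
    """Each output row is the attention-weighted sum of the value vectors."""
    weights = attention_weights(queries, keys, causal)
    dim = len(values[0])
    return [[sum(w * v[c] for w, v in zip(row, values)) for c in range(dim)] for row in weights]
```

With `causal=True` the weight matrix is lower-triangular, so the first token's output depends on the first token alone. Setting `causal=False` lets every position attend to the whole sequence, which corresponds to the bidirectional behavior of BERT described next.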

2. BERT

BERT is another large language model based on the Transformer. It differs from GPT in that it is bidirectional: it uses the context on both the left and the right of a word at the same time to predict it. BERT uses two tasks in the pre-training stage: masked language modeling and next sentence prediction. Masked language modeling randomly masks some words in the input sequence and asks the model to predict the masked words; next sentence prediction asks the model to determine whether the second of two sentences actually follows the first. BERT can then be fine-tuned for various natural language processing tasks, such as text classification and sequence labeling.
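The masking step of masked language modeling can be sketched as follows. The BERT paper selects 15% of token positions as prediction targets and, of those, replaces 80% with a `[MASK]` token, 10% with a random token, and leaves 10% unchanged, so the model cannot rely on seeing `[MASK]` at every target. This sketch selects each position independently with probability 0.15, which is one common way to implement it; the function name and the `None`-for-ignored labels convention are illustrative assumptions.

```python
import random
from typing import Optional

MASK = "[MASK]"


def mask_tokens(
    tokens: list[str],
    vocab: list[str],
    rng: random.Random,
    mask_prob: float = 0.15,
) -> tuple[list[str], list[Optional[str]]]:
    """BERT-style masking: returns (model inputs, labels).

    labels[i] holds the original token where the model must predict it,
    and None where no prediction is required.
    """
    inputs = list(tokens)
    labels: list[Optional[str]] = [None] * len(tokens)
    for i, tok in enumerate(tokens):
        if rng.random() >= mask_prob:
            continue  # position not selected as a prediction target
        labels[i] = tok  # the model must recover the original token here
        r = rng.random()
        if r < 0.8:
            inputs[i] = MASK  # 80%: replace with [MASK]
        elif r < 0.9:
            inputs[i] = rng.choice(vocab)  # 10%: replace with a random token
        # remaining 10%: keep the original token unchanged
    return inputs, labels
```

During pre-training the loss is computed only at positions whose label is not `None`, and because the attention is unmasked the model can use words on both sides of each target to recover it.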

3. Differences and connections

Different goals: the goal of a word embedding model is to map words into a low-dimensional vector space so that computers can better understand and process text; the goal of a large language model is to learn the probability distribution of language through pre-training, thereby achieving natural language understanding and generation.

Different application scenarios: word embedding models are mainly used for text analysis and information retrieval tasks, such as sentiment analysis and recommendation systems; large language models are mainly used for text generation, text classification, and machine translation, for example generating dialogue or news articles.

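Different algorithm principles: word embedding models use relatively shallow algorithms, such as the shallow neural network of Word2Vec or the co-occurrence matrix factorization of GloVe, and assign each word a single static vector; large language models use deep Transformer-based architectures, such as GPT and BERT, that produce a representation of each word that depends on its surrounding context.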
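One practical consequence of the difference in algorithms is static versus contextual representations: a word embedding model returns the same vector for "bank" whether the sentence is about a river or about money, whereas a Transformer-based model's representation of "bank" changes with the sentence. The sketch below illustrates only that contrast. The vectors in `STATIC` are hypothetical, and averaging a word with its neighbors is a deliberately crude stand-in for self-attention, not how a Transformer actually computes representations.

```python
# Hypothetical 2-dimensional vectors, hand-picked for illustration only.
STATIC: dict[str, list[float]] = {
    "river": [1.0, 0.0],
    "money": [0.0, 1.0],
    "bank":  [0.5, 0.5],
    "the":   [0.1, 0.1],
}


def static_vector(sentence: list[str], position: int) -> list[float]:
    """Word2Vec/GloVe style: the vector depends only on the word itself."""
    return STATIC[sentence[position]]


def contextual_vector(sentence: list[str], position: int, window: int = 1) -> list[float]:
    """Toy stand-in for a contextual encoder: average the word's vector with its
    neighbors, so the same word gets different vectors in different sentences."""
    lo = max(0, position - window)
    hi = min(len(sentence), position + window + 1)
    vecs = [STATIC[w] for w in sentence[lo:hi]]
    return [sum(col) / len(vecs) for col in zip(*vecs)]
```

For "the river bank" and "the money bank", `static_vector(..., 2)` returns `[0.5, 0.5]` both times, while `contextual_vector(..., 2)` returns `[0.75, 0.25]` for the first sentence and `[0.25, 0.75]` for the second, leaning toward the meaning suggested by the neighboring word.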

Different model sizes: Word embedding models are generally smaller than large language models because they only need to learn similarities between words, while large language models need to learn more complex language structures and semantic information.

Different pre-training methods: word embedding models are usually trained only with unsupervised pre-training on raw text, while large language models usually combine unsupervised pre-training with supervised fine-tuning.

In general, word embedding models and large language models are very important technologies in natural language processing. Their differences mainly lie in their goals, application scenarios, algorithm principles, model scale and pre-training methods. In practical applications, it is very important to choose an appropriate model based on specific task requirements and data conditions.

The above is the detailed content of The difference between large language models and word embedding models. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

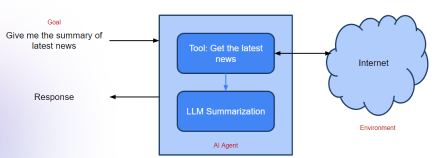

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

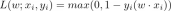

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

SublimeText3 Linux new version

SublimeText3 Linux latest version

SublimeText3 Chinese version

Chinese version, very easy to use