Introduction to the definition, usage scenarios, algorithms and techniques of ensemble learning

Ensemble learning is a method that combines the predictions of multiple models to reach a consensus decision. Because the individual models tend to make different errors, combining them improves the robustness of the predictions and reduces prediction error. By drawing on the different strengths of its member models, an ensemble can also adapt better to complex data distributions and uncertainty.

Put simply, ensemble learning captures complementary information from different models.

In this article, let's look at the situations in which ensemble learning is used, and at the algorithms and techniques available for it.

Application of ensemble learning

1. Unable to choose the best model

Different models perform better on certain distributions within the dataset, so an ensemble of models may draw more discriminative decision boundaries between the classes than any single model.

2. Excess/insufficiency of data

When a large amount of data is available, we can divide the classification task among different classifiers and combine them at prediction time, rather than trying to train a single classifier on all of the data. When the available dataset is small, a bootstrapping ensemble strategy can be used instead.

3. Confidence estimation

At its core, an ensemble framework relies on the confidence that the different models assign to their predictions.

4. High problem complexity

A single classifier may not be able to generate an appropriate decision boundary. An ensemble of multiple linear classifiers can approximate a more complex, nonlinear boundary that no single linear classifier could produce.

5. Information fusion

The most common reason for using ensemble learning models is information fusion to improve classification performance. That is, models that have been trained on different data distributions belonging to the same set of categories are combined at prediction time to obtain more robust decisions.

Ensemble learning algorithms and techniques

Bagging ensemble algorithm

Bagging is one of the earliest proposed ensemble methods. Subsamples are created from the dataset through a procedure called "bootstrap sampling". Simply put, random subsets of the dataset are drawn with replacement, which means the same data point may appear in multiple subsets, or more than once in the same subset.

These subsets are then treated as independent datasets, and a separate machine learning model is fit to each of them. During testing, the predictions of all the models trained on the different subsets of the same data are taken into account. Finally, an aggregation mechanism, such as averaging or voting, is used to compute the final prediction.

The models in Bagging are trained in parallel, and the main purpose of the method is to reduce the variance of the ensemble's predictions. Therefore, the base classifiers chosen for the ensemble usually have high variance and low bias.
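The Bagging procedure described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the 1-nearest-neighbour base learner, the toy dataset and the function names are all assumptions chosen to keep the example short, and majority voting is used as the aggregation mechanism.

```python
import random
from collections import Counter

def bootstrap_sample(data, rng):
    """Draw len(data) points with replacement, so a point may repeat."""
    return [rng.choice(data) for _ in data]

def fit_1nn(sample):
    """Hypothetical base learner: 1-nearest-neighbour on a 1-D feature."""
    def predict(x):
        nearest = min(sample, key=lambda point: abs(point[0] - x))
        return nearest[1]
    return predict

def bagging_fit(data, n_models=25, seed=0):
    """Fit one base model per bootstrap sample."""
    rng = random.Random(seed)
    return [fit_1nn(bootstrap_sample(data, rng)) for _ in range(n_models)]

def bagging_predict(models, x):
    """Aggregate the base predictions by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]

# Toy dataset of (feature, label) pairs, made up for illustration.
data = [(0.0, "a"), (0.5, "a"), (1.0, "a"), (1.5, "a"),
        (3.0, "b"), (3.5, "b"), (4.0, "b"), (4.5, "b")]
models = bagging_fit(data)
print(bagging_predict(models, 0.2), bagging_predict(models, 4.2))
```

Each base model sees a slightly different dataset, so their individual errors differ, and the vote smooths them out.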

Boosting ensemble algorithm

Unlike Bagging, Boosting does not train its models in parallel but processes the dataset sequentially. The first classifier is trained on the entire dataset and its predictions are analyzed. The instances it fails to predict correctly are then passed on to a second classifier, in many variants by giving them a higher weight, and the process repeats. The outputs of all these classifiers are then combined to make the final prediction on the test data.

The main purpose of the Boosting algorithm is to reduce bias in ensemble decision-making. Therefore, the classifier selected for the ensemble usually needs to have low variance and high bias, i.e. a simpler model with fewer trainable parameters.
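As one concrete instance of sequential boosting, the sketch below follows the AdaBoost scheme, in which misclassified points are re-weighted rather than physically handed to the next classifier. The article does not name a specific variant, so this choice is an assumption, as are the decision-stump base learner, the toy data and the function names.

```python
import math

def stump_predict(x, threshold, polarity):
    """Decision stump on a 1-D feature; labels are +1 or -1."""
    return polarity if x >= threshold else -polarity

def best_stump(xs, ys, weights):
    """Return (weighted error, threshold, polarity) of the best stump."""
    best = None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            error = sum(w for w, x, y in zip(weights, xs, ys)
                        if stump_predict(x, threshold, polarity) != y)
            if best is None or error < best[0]:
                best = (error, threshold, polarity)
    return best

def adaboost_fit(xs, ys, n_rounds=3):
    n = len(xs)
    weights = [1.0 / n] * n
    ensemble = []  # list of (alpha, threshold, polarity)
    for _ in range(n_rounds):
        error, threshold, polarity = best_stump(xs, ys, weights)
        error = min(max(error, 1e-10), 1 - 1e-10)  # keep the log finite
        alpha = 0.5 * math.log((1 - error) / error)
        ensemble.append((alpha, threshold, polarity))
        # Misclassified points gain weight, correct ones lose weight.
        weights = [w * math.exp(-alpha * y * stump_predict(x, threshold, polarity))
                   for w, x, y in zip(weights, xs, ys)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def adaboost_predict(ensemble, x):
    """Sign of the alpha-weighted sum of the stump votes."""
    score = sum(alpha * stump_predict(x, thr, pol) for alpha, thr, pol in ensemble)
    return 1 if score >= 0 else -1

# Toy data that no single stump can classify perfectly.
xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]
ensemble = adaboost_fit(xs, ys, n_rounds=3)
print([adaboost_predict(ensemble, x) for x in xs])
```

Each stump alone is a high-bias model, but the weighted combination of three of them fits a pattern that none of them can represent individually.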

Stacking ensemble algorithm

In stacking, the outputs of the base models are used as the input of another classifier (the meta-classifier), which makes the final prediction for the sample. The purpose of the two-layer structure is to check whether the base models have learned the training data well, so that the meta-classifier can correct or improve on their outputs before the final prediction is made.
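A minimal sketch of the two-layer idea follows. Everything specific here is an assumption made for illustration: the base learners are simple single-feature threshold rules, the meta-classifier is a lookup table that learns the most frequent true label for each combination of base outputs, and the meta-classifier is trained on a separate hold-out split, which is a common practice rather than something the article prescribes.

```python
from collections import Counter, defaultdict

def fit_threshold(rows, feature):
    """Hypothetical base learner: cut one feature midway between class means."""
    pos = [x[feature] for x, y in rows if y == 1]
    neg = [x[feature] for x, y in rows if y == 0]
    pos_mean, neg_mean = sum(pos) / len(pos), sum(neg) / len(neg)
    cut = (pos_mean + neg_mean) / 2
    sign = 1 if pos_mean >= neg_mean else -1
    return lambda x: 1 if sign * (x[feature] - cut) >= 0 else 0

def fit_meta(base_models, rows):
    """Meta-classifier: majority true label per tuple of base outputs."""
    table = defaultdict(Counter)
    for x, y in rows:
        table[tuple(m(x) for m in base_models)][y] += 1
    fallback = Counter(y for _, y in rows).most_common(1)[0][0]
    def predict(x):
        key = tuple(m(x) for m in base_models)
        return table[key].most_common(1)[0][0] if key in table else fallback
    return predict

# Rows are ((feature_0, feature_1), label); feature_1 is only weakly informative.
base_rows = [((0.1, 0.7), 0), ((0.2, 0.2), 0), ((0.8, 0.9), 1), ((0.9, 0.4), 1)]
meta_rows = [((0.15, 0.8), 0), ((0.3, 0.1), 0), ((0.7, 0.3), 1), ((0.85, 0.95), 1)]

base_models = [fit_threshold(base_rows, 0), fit_threshold(base_rows, 1)]
stacked = fit_meta(base_models, meta_rows)
print(stacked((0.05, 0.99)), stacked((0.95, 0.0)))
```

On the hold-out rows the second base model is often wrong, and the meta-classifier learns to follow the first one when the two disagree.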

Mixture of Experts

This method trains multiple classifiers, whose outputs are then combined using a generalized linear rule. The weights assigned to the combination are determined by a "gating network", which is itself a trainable model, usually a neural network.
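The forward pass of such a mixture can be sketched as below. This only shows how a gating function turns an input into per-expert weights and how the expert outputs are combined; nothing is trained, and the two constant experts, the linear gate and its parameters are hypothetical values picked for illustration.

```python
import math

def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def gate(x, gate_params):
    """Gating function: one linear score per expert, then softmax."""
    return softmax([w * x + b for w, b in gate_params])

def mixture_predict(x, experts, gate_params):
    """Input-dependent weighted sum of the expert outputs."""
    weights = gate(x, gate_params)
    return sum(w * expert(x) for w, expert in zip(weights, experts))

# Each hypothetical expert returns a probability for the positive class.
experts = [lambda x: 0.1, lambda x: 0.9]
# Hypothetical gate: favours expert 0 for negative x, expert 1 for positive x.
gate_params = [(-4.0, 0.0), (4.0, 0.0)]

for x in (-2.0, 0.0, 2.0):
    print(x, round(mixture_predict(x, experts, gate_params), 3))
```

Unlike a fixed weighted average, the weights here change with the input, so different experts dominate in different regions of the input space.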

Majority Voting

Majority Voting is one of the earliest and simplest ensemble schemes in the literature. In this method, an odd number of contributing classifiers is selected and the prediction of each classifier is computed for every sample. The class predicted by the majority of the classifiers in the pool is then taken as the prediction of the ensemble.

This method is well suited to binary classification problems, because there are only two candidate classes to vote on and an odd number of voters always produces a clear majority. However, methods based on confidence scores are generally considered more reliable.
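Majority voting over hard class labels needs very little code. The sketch below assumes each classifier has already produced one label per sample; the label values and function names are made up for illustration.

```python
from collections import Counter

def majority_vote(labels):
    """Return the label predicted by the most classifiers."""
    return Counter(labels).most_common(1)[0][0]

def ensemble_predict(per_classifier_labels):
    """per_classifier_labels[i][j] is classifier i's label for sample j."""
    return [majority_vote(sample_labels)
            for sample_labels in zip(*per_classifier_labels)]

# Three hypothetical classifiers, four samples.
predictions = [
    ["spam", "ham", "spam", "ham"],
    ["spam", "spam", "ham", "ham"],
    ["ham", "ham", "spam", "ham"],
]
print(ensemble_predict(predictions))
```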

Max rule

The "Max rule" ensemble method relies on the probability distribution generated by each classifier. It uses the concept of the classifier's "prediction confidence": for the class each classifier predicts, the corresponding confidence score is checked. The prediction of the classifier with the highest confidence score is then taken as the prediction of the ensemble framework.
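A short sketch of the Max rule, assuming each classifier outputs one probability per class and classes are identified by their index; the probability values are made up for illustration.

```python
def max_rule(prob_rows):
    """Return the class chosen by the single most confident classifier.

    prob_rows[i][c] is the probability classifier i assigns to class c.
    """
    best_class, best_conf = None, -1.0
    for probs in prob_rows:
        predicted = max(range(len(probs)), key=lambda c: probs[c])
        if probs[predicted] > best_conf:
            best_class, best_conf = predicted, probs[predicted]
    return best_class

# Three hypothetical classifiers, two classes.
probs = [[0.60, 0.40], [0.10, 0.90], [0.55, 0.45]]
print(max_rule(probs))
```

Here two of the three classifiers lean towards class 0, but the second classifier is far more confident, so its prediction wins.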

Probability average

In this ensemble technique, the probability scores of multiple models are first calculated. Then, the scores of all models across all classes in the dataset are averaged. The probability score is the confidence level in a particular model's prediction. Therefore, the confidence scores of several models are pooled to generate the final probability score of the ensemble. The class with the highest probability after the averaging operation is assigned as the prediction.
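The averaging step can be sketched as follows, again assuming one probability per class from each model and made-up values.

```python
def average_probabilities(prob_rows):
    """Average the class probabilities over all models."""
    n_models = len(prob_rows)
    n_classes = len(prob_rows[0])
    return [sum(row[c] for row in prob_rows) / n_models
            for c in range(n_classes)]

def predict_by_average(prob_rows):
    """Pick the class with the highest averaged probability."""
    avg = average_probabilities(prob_rows)
    return max(range(len(avg)), key=lambda c: avg[c])

# Three hypothetical models, two classes.
probs = [[0.55, 0.45], [0.60, 0.40], [0.05, 0.95]]
print(average_probabilities(probs), predict_by_average(probs))
```

In this example a majority vote would choose class 0, because two of the three models prefer it, while averaging chooses class 1 because the third model is much more confident than the other two.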

Weighted Probability Averaging

As in probability averaging, the probability or confidence scores are extracted from the different contributing models. The difference is that a weighted average of the probabilities is calculated. The weight in this method reflects the importance of each classifier: a classifier whose overall performance on the dataset is better than another's is given a higher weight when the ensemble is computed, which gives the ensemble framework better predictive capability.
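The weighted variant changes only the averaging step. In the sketch below the weights are hypothetical validation accuracies; how the weights are actually obtained is not specified in the article, so treat this as one possible choice.

```python
def weighted_average_probabilities(prob_rows, weights):
    """Average class probabilities, weighting each model by its importance."""
    total = sum(weights)
    n_classes = len(prob_rows[0])
    return [sum(w * row[c] for w, row in zip(weights, prob_rows)) / total
            for c in range(n_classes)]

def predict_by_weighted_average(prob_rows, weights):
    """Pick the class with the highest weighted-average probability."""
    avg = weighted_average_probabilities(prob_rows, weights)
    return max(range(len(avg)), key=lambda c: avg[c])

# Three hypothetical models, two classes.
probs = [[0.55, 0.45], [0.60, 0.40], [0.05, 0.95]]
# Hypothetical weights, e.g. each model's validation accuracy.
weights = [0.9, 0.9, 0.2]

print(predict_by_weighted_average(probs, weights))
print(predict_by_weighted_average(probs, [1.0, 1.0, 1.0]))
```

With equal weights the confident third model decides the outcome, but once it is down-weighted for its poor overall performance, the two stronger models prevail.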

The above is the detailed content of Introduce the definition, usage scenarios, algorithms and techniques of ensemble learning. For more information, please follow other related articles on the PHP Chinese website!

Newest Annual Compilation Of The Best Prompt Engineering TechniquesApr 10, 2025 am 11:22 AM

Newest Annual Compilation Of The Best Prompt Engineering TechniquesApr 10, 2025 am 11:22 AMFor those of you who might be new to my column, I broadly explore the latest advances in AI across the board, including topics such as embodied AI, AI reasoning, high-tech breakthroughs in AI, prompt engineering, training of AI, fielding of AI, AI re

Europe's AI Continent Action Plan: Gigafactories, Data Labs, And Green AIApr 10, 2025 am 11:21 AM

Europe's AI Continent Action Plan: Gigafactories, Data Labs, And Green AIApr 10, 2025 am 11:21 AMEurope's ambitious AI Continent Action Plan aims to establish the EU as a global leader in artificial intelligence. A key element is the creation of a network of AI gigafactories, each housing around 100,000 advanced AI chips – four times the capaci

Is Microsoft's Straightforward Agent Story Enough To Create More Fans?Apr 10, 2025 am 11:20 AM

Is Microsoft's Straightforward Agent Story Enough To Create More Fans?Apr 10, 2025 am 11:20 AMMicrosoft's Unified Approach to AI Agent Applications: A Clear Win for Businesses Microsoft's recent announcement regarding new AI agent capabilities impressed with its clear and unified presentation. Unlike many tech announcements bogged down in te

Selling AI Strategy To Employees: Shopify CEO's ManifestoApr 10, 2025 am 11:19 AM

Selling AI Strategy To Employees: Shopify CEO's ManifestoApr 10, 2025 am 11:19 AMShopify CEO Tobi Lütke's recent memo boldly declares AI proficiency a fundamental expectation for every employee, marking a significant cultural shift within the company. This isn't a fleeting trend; it's a new operational paradigm integrated into p

IBM Launches Z17 Mainframe With Full AI IntegrationApr 10, 2025 am 11:18 AM

IBM Launches Z17 Mainframe With Full AI IntegrationApr 10, 2025 am 11:18 AMIBM's z17 Mainframe: Integrating AI for Enhanced Business Operations Last month, at IBM's New York headquarters, I received a preview of the z17's capabilities. Building on the z16's success (launched in 2022 and demonstrating sustained revenue grow

5 ChatGPT Prompts To Stop Depending On Others And Trust Yourself FullyApr 10, 2025 am 11:17 AM

5 ChatGPT Prompts To Stop Depending On Others And Trust Yourself FullyApr 10, 2025 am 11:17 AMUnlock unshakeable confidence and eliminate the need for external validation! These five ChatGPT prompts will guide you towards complete self-reliance and a transformative shift in self-perception. Simply copy, paste, and customize the bracketed in

AI Is Dangerously Similar To Your MindApr 10, 2025 am 11:16 AM

AI Is Dangerously Similar To Your MindApr 10, 2025 am 11:16 AMA recent [study] by Anthropic, an artificial intelligence security and research company, begins to reveal the truth about these complex processes, showing a complexity that is disturbingly similar to our own cognitive domain. Natural intelligence and artificial intelligence may be more similar than we think. Snooping inside: Anthropic Interpretability Study The new findings from the research conducted by Anthropic represent significant advances in the field of mechanistic interpretability, which aims to reverse engineer internal computing of AI—not just observe what AI does, but understand how it does it at the artificial neuron level. Imagine trying to understand the brain by drawing which neurons fire when someone sees a specific object or thinks about a specific idea. A

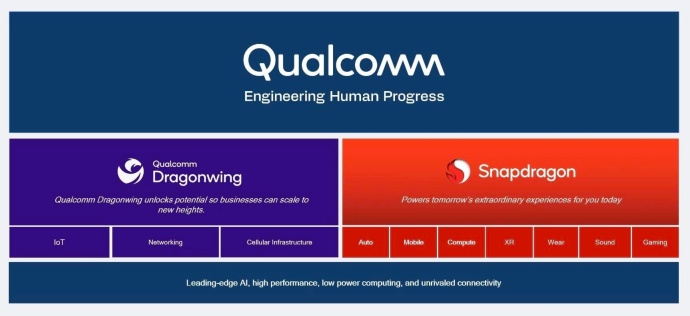

Dragonwing Showcases Qualcomm's Edge MomentumApr 10, 2025 am 11:14 AM

Dragonwing Showcases Qualcomm's Edge MomentumApr 10, 2025 am 11:14 AMQualcomm's Dragonwing: A Strategic Leap into Enterprise and Infrastructure Qualcomm is aggressively expanding its reach beyond mobile, targeting enterprise and infrastructure markets globally with its new Dragonwing brand. This isn't merely a rebran

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

WebStorm Mac version

Useful JavaScript development tools

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

SublimeText3 Linux new version

SublimeText3 Linux latest version

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.