Dropout is a simple and effective regularization strategy for reducing overfitting in neural networks and improving their generalization. The main idea is to randomly drop a fraction of the neurons during training so that the network cannot rely too heavily on the output of any single neuron. This forced random dropping pushes the network toward more robust feature representations, which helps it adapt better to new data. Dropout is widely used in practice and often improves the performance of neural networks on unseen data.

Concretely, Dropout sets the output of each affected neuron to 0 with a fixed probability, independently for every training sample. It can be viewed as repeatedly sampling sub-networks from the full network: each sample produces a different sub-network in which some neurons are temporarily ignored. Parameters are shared between these sub-networks, but since each sub-network only sees the outputs of a subset of neurons, they learn different feature representations. During training, this reduces the interdependence between neurons and prevents individual neurons from depending too heavily on specific other neurons, which helps the network generalize. At test time, Dropout is switched off and all neurons are active. To keep the expected value of each activation consistent between training and testing, the outputs are rescaled by a fixed factor: in the original formulation the test-time outputs are multiplied by the keep probability, whereas most modern frameworks, including Keras, instead scale the surviving outputs by 1 / (1 - rate) during training and leave the test-time outputs unchanged. The test-time network therefore approximates an average over the sub-networks sampled during training.
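The mechanism described above can be sketched in a few lines of plain Python. This is a minimal illustration of the "inverted" variant (scaling during training), not the code of any particular framework; the function name `dropout` and its parameters are chosen here for illustration only.

```python
import random


def dropout(activations, rate, training, rng=None):
    """Apply inverted dropout to a list of activations.

    `rate` is the probability of dropping a unit (illustrative helper,
    not a framework API).
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        # At test time every unit is kept and nothing is rescaled.
        return list(activations)
    if rng is None:
        rng = random.Random()
    keep_prob = 1.0 - rate
    # Each unit survives with probability keep_prob; survivors are scaled
    # by 1 / keep_prob so the expected activation stays the same.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


rng = random.Random(0)
hidden = [1.0] * 10000
train_out = dropout(hidden, rate=0.2, training=True, rng=rng)
test_out = dropout(hidden, rate=0.2, training=False)

# The mean of the training-time outputs stays close to the original mean.
print(sum(train_out) / len(train_out))
print(test_out[:3])
```

With a rate of 0.2, roughly one in five outputs is zeroed and the rest are scaled to 1.25, so the average activation is preserved in expectation.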

The main advantage of Dropout is that it reduces the risk of overfitting and improves the generalization performance of the network. Randomly discarding neurons prevents co-adaptation, that is, it prevents certain neurons from being useful only in the presence of specific other neurons, and thereby forces the network to learn more robust feature representations. As a result, the network adapts better to unseen data and is more robust to noisy inputs. For these reasons, Dropout is widely used as a regularization method in deep learning.

However, although Dropout is widely used in deep neural networks to improve generalization and prevent overfitting, it also has some shortcomings. First, Dropout reduces the effective capacity of the network during training. Because the output of each neuron is set to 0 with a certain probability, every training step uses only a thinned sub-network, which lowers the expressive power available at that step. If the rate is too high, the network may not learn complex patterns and relationships adequately, which limits its performance. Second, Dropout introduces noise, which can slow down training. Because a different random subset of neurons is dropped for each training sample, the gradients computed by backpropagation are noisier, and the network typically needs more training time to converge. In addition, Dropout has to be applied with care across layers to keep training stable. Since dropped neurons make the active connections sparse, an unsuitable rate or placement can leave some layers with too little signal and hurt the performance of the network.

To overcome these problems, researchers have proposed several improvements to Dropout. One approach is to combine Dropout with other regularization techniques, such as L1 and L2 regularization. Used together, these methods can further reduce the risk of overfitting and improve the network's performance on unseen data. In addition, some studies have shown that adjusting the Dropout rate dynamically can further improve performance: during training, the rate is adjusted automatically according to how learning is progressing, which gives finer control over the degree of overfitting. With such methods, the network can improve its generalization performance and reduce the risk of overfitting while retaining more of its effective capacity.
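One way to picture dynamic adjustment of the Dropout rate is a small heuristic that reacts to the gap between validation and training loss. The sketch below is purely illustrative: the function name `adjust_dropout_rate`, the thresholds, and the step size are all assumptions made here, and published adaptive Dropout methods differ in detail.

```python
def adjust_dropout_rate(rate, train_loss, val_loss,
                        gap_threshold=0.05, step=0.05,
                        min_rate=0.0, max_rate=0.5):
    """Hypothetical heuristic for adapting the dropout rate between epochs.

    A large positive gap (validation loss well above training loss) is
    treated as a sign of overfitting, so the rate is raised. A negative
    gap is treated as a sign that regularization is too strong, so the
    rate is lowered. Otherwise the rate is left unchanged.
    """
    gap = val_loss - train_loss
    if gap > gap_threshold:
        rate += step
    elif gap < 0.0:
        rate -= step
    # Keep the rate inside the allowed range.
    return min(max_rate, max(min_rate, rate))


rate = 0.2
rate = adjust_dropout_rate(rate, train_loss=0.10, val_loss=0.30)
print(rate)  # raised by one step because the gap exceeds the threshold
```

In a real training loop the returned value would have to be fed back into the model between epochs, and how to do that depends on the framework.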

Below we will use a simple example to demonstrate how to use Dropout regularization to improve the generalization performance of neural networks. We will use the Keras framework to implement a Dropout-based multilayer perceptron (MLP) model for classifying handwritten digits.

First, we need to load the MNIST data set and preprocess the data. In this example, we will normalize the input data to real numbers between 0 and 1 and convert the output labels to one-hot encoding. The code is as follows:

import numpy as np
from tensorflow import keras

# Load the MNIST dataset
(x_train, y_train), (x_test, y_test) = keras.datasets.mnist.load_data()

# Normalize the input data to real numbers between 0 and 1
x_train = x_train.astype(np.float32) / 255.
x_test = x_test.astype(np.float32) / 255.

# Convert the output labels to one-hot encoding
y_train = keras.utils.to_categorical(y_train, 10)
y_test = keras.utils.to_categorical(y_test, 10)
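To make the two preprocessing steps concrete, here is a small plain-Python sketch of what normalization and one-hot encoding do to individual values. The helper names `normalize_pixels` and `one_hot` are made up for this illustration; the example above relies on NumPy and `keras.utils.to_categorical` instead.

```python
def normalize_pixels(pixels):
    """Scale 8-bit pixel intensities (0..255) to floats in [0, 1]."""
    return [p / 255.0 for p in pixels]


def one_hot(label, num_classes=10):
    """Return a list with 1.0 at index `label` and 0.0 everywhere else."""
    if not 0 <= label < num_classes:
        raise ValueError("label out of range")
    vec = [0.0] * num_classes
    vec[label] = 1.0
    return vec


print(normalize_pixels([0, 51, 255]))  # [0.0, 0.2, 1.0]
print(one_hot(3))  # 1.0 at index 3, 0.0 elsewhere
```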

Next, we define an MLP model based on Dropout. The model consists of two hidden layers and an output layer. Each hidden layer uses a ReLU activation function and is followed by a Dropout layer. We set the dropout rate to 0.2, which means that each output of the preceding hidden layer is dropped with probability 0.2 for every training sample. The code is as follows:

# Define the Dropout-based MLP model
model = keras.models.Sequential([
    keras.layers.Flatten(input_shape=[28, 28]),
    keras.layers.Dense(128, activation="relu"),
    keras.layers.Dropout(0.2),
    keras.layers.Dense(64, activation="relu"),
    keras.layers.Dropout(0.2),
    keras.layers.Dense(10, activation="softmax")
])

Finally, we compile the model with the stochastic gradient descent (SGD) optimizer and the cross-entropy loss function, and use early stopping during training to avoid overfitting. The code is as follows:

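# Compile the model with the SGD optimizer and the cross-entropy loss
model.compile(optimizer="sgd", loss="categorical_crossentropy", metrics=["accuracy"])

# Stop training once the validation loss stops improving.
# The patience, epoch count, batch size and validation split are illustrative assumptions.
early_stopping = keras.callbacks.EarlyStopping(monitor="val_loss", patience=5, restore_best_weights=True)

# Train the model, holding out part of the training data for validation
history = model.fit(x_train, y_train, epochs=50, batch_size=32, validation_split=0.1, callbacks=[early_stopping])

During training, we can observe the training error and the validation error as the number of epochs increases. If both decrease and the validation error does not drift away from the training error, this suggests that Dropout regularization is helping to keep overfitting in check. Finally, we evaluate the model on the test set and print the classification accuracy. The code is as follows: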

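# Evaluate the model's performance
test_loss, test_acc = model.evaluate(x_test, y_test)

# Print the classification accuracy
print("Test accuracy:", test_acc)

With the steps above, we have built and trained a multilayer perceptron model with Dropout regularization. Using Dropout can improve the model's generalization performance and reduce the risk of overfitting.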
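As a supplement, the early stopping rule mentioned in the training step can be sketched in plain Python. This is a simplified model of the idea (stop when the validation loss has not improved for a number of consecutive epochs), not the implementation used by Keras; the function name `early_stopping_epoch` and the sample loss values are made up for illustration.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return the 0-based index of the epoch at which training would stop.

    Training stops once the validation loss has failed to improve by more
    than `min_delta` for `patience` consecutive epochs. If that never
    happens, the index of the last epoch is returned.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            # New best validation loss: reset the counter.
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1


losses = [0.50, 0.40, 0.35, 0.36, 0.37, 0.38, 0.30]
print(early_stopping_epoch(losses, patience=3))  # 5
```

In this example the best loss is reached at epoch 2, and training stops at epoch 5 after three epochs without improvement, so the later value 0.30 is never seen. A larger patience trades extra training time for a lower chance of stopping too early.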

The above is the detailed content of Explain and demonstrate the Dropout regularization strategy. For more information, please follow other related articles on the PHP Chinese website!

解读CRISP-ML(Q):机器学习生命周期流程Apr 08, 2023 pm 01:21 PM

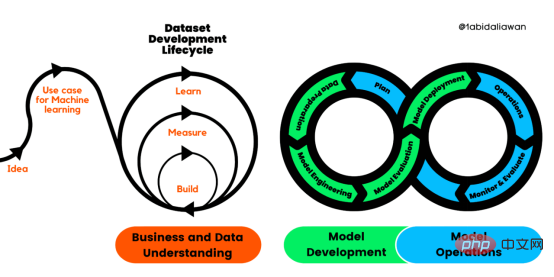

解读CRISP-ML(Q):机器学习生命周期流程Apr 08, 2023 pm 01:21 PM译者 | 布加迪审校 | 孙淑娟目前,没有用于构建和管理机器学习(ML)应用程序的标准实践。机器学习项目组织得不好,缺乏可重复性,而且从长远来看容易彻底失败。因此,我们需要一套流程来帮助自己在整个机器学习生命周期中保持质量、可持续性、稳健性和成本管理。图1. 机器学习开发生命周期流程使用质量保证方法开发机器学习应用程序的跨行业标准流程(CRISP-ML(Q))是CRISP-DM的升级版,以确保机器学习产品的质量。CRISP-ML(Q)有六个单独的阶段:1. 业务和数据理解2. 数据准备3. 模型

2023年机器学习的十大概念和技术Apr 04, 2023 pm 12:30 PM

2023年机器学习的十大概念和技术Apr 04, 2023 pm 12:30 PM机器学习是一个不断发展的学科,一直在创造新的想法和技术。本文罗列了2023年机器学习的十大概念和技术。 本文罗列了2023年机器学习的十大概念和技术。2023年机器学习的十大概念和技术是一个教计算机从数据中学习的过程,无需明确的编程。机器学习是一个不断发展的学科,一直在创造新的想法和技术。为了保持领先,数据科学家应该关注其中一些网站,以跟上最新的发展。这将有助于了解机器学习中的技术如何在实践中使用,并为自己的业务或工作领域中的可能应用提供想法。2023年机器学习的十大概念和技术:1. 深度神经网

基于因果森林算法的决策定位应用Apr 08, 2023 am 11:21 AM

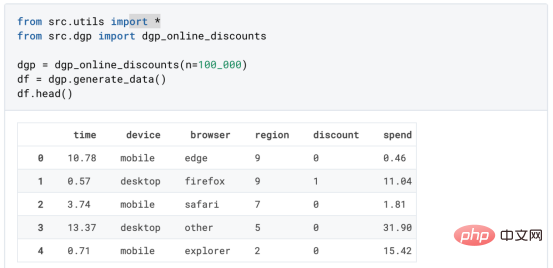

基于因果森林算法的决策定位应用Apr 08, 2023 am 11:21 AM译者 | 朱先忠审校 | 孙淑娟在我之前的博客中,我们已经了解了如何使用因果树来评估政策的异质处理效应。如果你还没有阅读过,我建议你在阅读本文前先读一遍,因为我们在本文中认为你已经了解了此文中的部分与本文相关的内容。为什么是异质处理效应(HTE:heterogenous treatment effects)呢?首先,对异质处理效应的估计允许我们根据它们的预期结果(疾病、公司收入、客户满意度等)选择提供处理(药物、广告、产品等)的用户(患者、用户、客户等)。换句话说,估计HTE有助于我

使用PyTorch进行小样本学习的图像分类Apr 09, 2023 am 10:51 AM

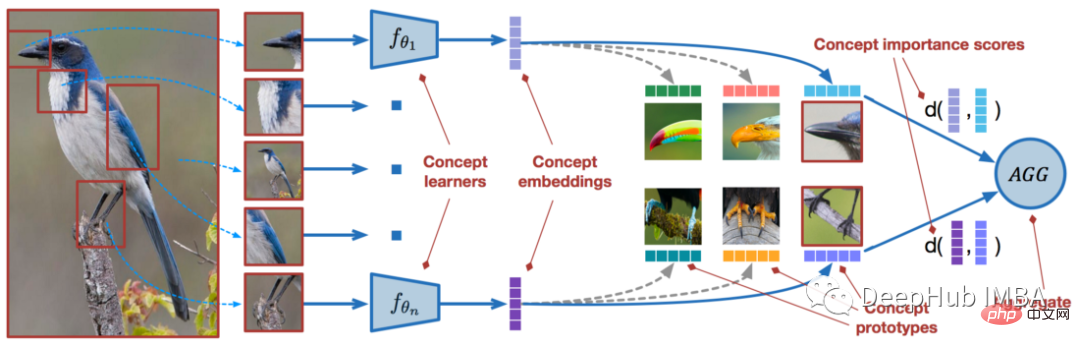

使用PyTorch进行小样本学习的图像分类Apr 09, 2023 am 10:51 AM近年来,基于深度学习的模型在目标检测和图像识别等任务中表现出色。像ImageNet这样具有挑战性的图像分类数据集,包含1000种不同的对象分类,现在一些模型已经超过了人类水平上。但是这些模型依赖于监督训练流程,标记训练数据的可用性对它们有重大影响,并且模型能够检测到的类别也仅限于它们接受训练的类。由于在训练过程中没有足够的标记图像用于所有类,这些模型在现实环境中可能不太有用。并且我们希望的模型能够识别它在训练期间没有见到过的类,因为几乎不可能在所有潜在对象的图像上进行训练。我们将从几个样本中学习

LazyPredict:为你选择最佳ML模型!Apr 06, 2023 pm 08:45 PM

LazyPredict:为你选择最佳ML模型!Apr 06, 2023 pm 08:45 PM本文讨论使用LazyPredict来创建简单的ML模型。LazyPredict创建机器学习模型的特点是不需要大量的代码,同时在不修改参数的情况下进行多模型拟合,从而在众多模型中选出性能最佳的一个。 摘要本文讨论使用LazyPredict来创建简单的ML模型。LazyPredict创建机器学习模型的特点是不需要大量的代码,同时在不修改参数的情况下进行多模型拟合,从而在众多模型中选出性能最佳的一个。本文包括的内容如下:简介LazyPredict模块的安装在分类模型中实施LazyPredict

Mango:基于Python环境的贝叶斯优化新方法Apr 08, 2023 pm 12:44 PM

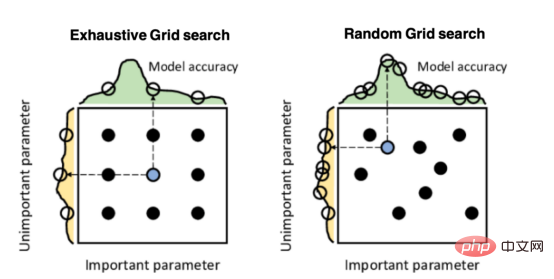

Mango:基于Python环境的贝叶斯优化新方法Apr 08, 2023 pm 12:44 PM译者 | 朱先忠审校 | 孙淑娟引言模型超参数(或模型设置)的优化可能是训练机器学习算法中最重要的一步,因为它可以找到最小化模型损失函数的最佳参数。这一步对于构建不易过拟合的泛化模型也是必不可少的。优化模型超参数的最著名技术是穷举网格搜索和随机网格搜索。在第一种方法中,搜索空间被定义为跨越每个模型超参数的域的网格。通过在网格的每个点上训练模型来获得最优超参数。尽管网格搜索非常容易实现,但它在计算上变得昂贵,尤其是当要优化的变量数量很大时。另一方面,随机网格搜索是一种更快的优化方法,可以提供更好的

超参数优化比较之网格搜索、随机搜索和贝叶斯优化Apr 04, 2023 pm 12:05 PM

超参数优化比较之网格搜索、随机搜索和贝叶斯优化Apr 04, 2023 pm 12:05 PM本文将详细介绍用来提高机器学习效果的最常见的超参数优化方法。 译者 | 朱先忠审校 | 孙淑娟简介通常,在尝试改进机器学习模型时,人们首先想到的解决方案是添加更多的训练数据。额外的数据通常是有帮助(在某些情况下除外)的,但生成高质量的数据可能非常昂贵。通过使用现有数据获得最佳模型性能,超参数优化可以节省我们的时间和资源。顾名思义,超参数优化是为机器学习模型确定最佳超参数组合以满足优化函数(即,给定研究中的数据集,最大化模型的性能)的过程。换句话说,每个模型都会提供多个有关选项的调整“按钮

人工智能自动获取知识和技能,实现自我完善的过程是什么Aug 24, 2022 am 11:57 AM

人工智能自动获取知识和技能,实现自我完善的过程是什么Aug 24, 2022 am 11:57 AM实现自我完善的过程是“机器学习”。机器学习是人工智能核心,是使计算机具有智能的根本途径;它使计算机能模拟人的学习行为,自动地通过学习来获取知识和技能,不断改善性能,实现自我完善。机器学习主要研究三方面问题:1、学习机理,人类获取知识、技能和抽象概念的天赋能力;2、学习方法,对生物学习机理进行简化的基础上,用计算的方法进行再现;3、学习系统,能够在一定程度上实现机器学习的系统。

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Chinese version

Chinese version, very easy to use

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

Notepad++7.3.1

Easy-to-use and free code editor

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.