Methods and steps for using BERT for sentiment analysis in Python

BERT (Bidirectional Encoder Representations from Transformers) is a pre-trained deep learning language model introduced by Google in 2018. It is built on the Transformer architecture and encodes text bidirectionally: unlike traditional unidirectional models, which read text only left to right or right to left, BERT conditions each token's representation on both its left and right context. This lets it capture the semantic relationships within a sentence more fully, which is why it performs well on natural language processing tasks. Through pre-training followed by fine-tuning, BERT can be applied to many tasks, such as sentiment analysis, named entity recognition, and question answering. Its release drew wide attention in the natural language processing community and offered new ideas for applying deep learning in the field.

Sentiment analysis is a natural language processing task that aims to identify the emotions or opinions expressed in text. Businesses and organizations use it to understand how the public views them, governments use it to monitor public opinion on social media, and e-commerce websites use it to gauge customer sentiment. Traditional methods are mainly lexicon-based: they match words against a predefined vocabulary of positive and negative terms. Because such methods often miss context and the complexity of language, their accuracy is limited. To overcome this problem, approaches based on machine learning and deep learning have emerged in recent years. They are trained on large amounts of text and model context and semantics better, which improves accuracy and gives more reliable input for corporate decision-making, public opinion monitoring, and product promotion.
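The limitation of dictionary-based methods can be seen in a small sketch. The lexicon, its scores, and the helper names `lexicon_score` and `lexicon_label` below are made up for illustration and do not come from any real sentiment resource; the point is only that summing word scores ignores context such as negation.

```python
# Toy lexicon: the words and scores are illustrative assumptions.
LEXICON = {"love": 1, "great": 1, "good": 1, "hate": -1, "bad": -1, "boring": -1}


def lexicon_score(text: str) -> int:
    """Sum the lexicon scores of the words in `text`, ignoring word order."""
    cleaned = text.lower().replace("!", " ").replace(".", " ").replace(",", " ")
    return sum(LEXICON.get(word, 0) for word in cleaned.split())


def lexicon_label(text: str) -> str:
    """Map the summed score to a coarse sentiment label."""
    score = lexicon_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(lexicon_label("I love this movie!"))       # positive
print(lexicon_label("This movie is not good."))  # positive, although the sentence is negative
```

The second sentence is scored as positive because the word "good" is counted while "not" is ignored, which is the kind of contextual error that context-aware models are meant to reduce.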

With BERT, we can identify sentiment in text more accurately. BERT represents each input text as vectors that capture its semantic information, and these vectors are passed to a classification layer that predicts the sentiment category of the text. To achieve this, BERT is first pre-trained on a large corpus to learn general language representations and is then fine-tuned on labeled data for the specific sentiment analysis task. This combination of pre-training and fine-tuning is what allows BERT to perform well on sentiment analysis.

In Python, we can use Hugging Face’s Transformers library to perform sentiment analysis using BERT. The following are the basic steps for using BERT for sentiment analysis:

1. Install the Transformers library and the TensorFlow or PyTorch library.

    !pip install transformers
    !pip install tensorflow  # or PyTorch

2. Import the necessary libraries and modules, including the Transformers library and classifier model.

    import tensorflow as tf
    from transformers import BertTokenizer, TFBertForSequenceClassification

3. Load the tokenizer and the BERT classification model. In this example, we use BERT’s pre-trained model “bert-base-uncased” with a two-class (binary) classification head.

    tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')
    model = TFBertForSequenceClassification.from_pretrained('bert-base-uncased', num_labels=2)

4. Prepare the text data and encode it. Use the tokenizer to encode the text so that it can be fed into the BERT model. Sentiment analysis is often framed as binary classification, in which each text is labeled as either positive or negative.

    text = "I love this movie!"
    encoded_text = tokenizer(text, padding=True, truncation=True, return_tensors='tf')
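With a single sentence, `padding=True` has no visible effect; it matters when several texts of different lengths are encoded together. The following library-free sketch illustrates the idea under simplifying assumptions: `pad_batch` is a hypothetical helper, the token IDs are illustrative, and the padding ID of 0 is assumed for this example. It is not the tokenizer's actual implementation.

```python
PAD_ID = 0  # assumed padding ID for this sketch


def pad_batch(sequences, pad_id=PAD_ID):
    """Right-pad every sequence to the longest one and build attention masks."""
    max_len = max(len(seq) for seq in sequences)
    input_ids, attention_mask = [], []
    for seq in sequences:
        n_pad = max_len - len(seq)
        input_ids.append(list(seq) + [pad_id] * n_pad)
        # 1 marks a real token, 0 marks padding the model should ignore
        attention_mask.append([1] * len(seq) + [0] * n_pad)
    return {"input_ids": input_ids, "attention_mask": attention_mask}


# Two illustrative token-ID sequences of different lengths
batch = pad_batch([[101, 1045, 2293, 2023, 3185, 999, 102], [101, 2307, 102]])
print(batch["input_ids"][1])       # [101, 2307, 102, 0, 0, 0, 0]
print(batch["attention_mask"][1])  # [1, 1, 1, 0, 0, 0, 0]
```

The attention mask is what tells the model which positions are padding, which is why it is usually passed to the model together with the input IDs.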

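5. Feed the encoded text into the model. The classification head on top of BERT's representation of the text returns one raw score (logit) per sentiment class.

    # Pass every encoded field (including the attention mask), not only input_ids
    output = model(**encoded_text)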

6. Based on the output of the classifier, determine the emotional category of the text.

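    # Index of the highest-scoring class for the first (and only) text in the batch
    sentiment = int(tf.argmax(output.logits, axis=1)[0])

    if sentiment == 0:
        print("Negative sentiment")
    else:
        print("Positive sentiment")

These are the basic steps for sentiment analysis using BERT. This is only a simple example: the classification head loaded on top of “bert-base-uncased” is newly initialized, so its predictions are not meaningful until the model has been fine-tuned on labeled sentiment data. You can fine-tune the model as needed and use more complex classifiers to improve the accuracy of your sentiment analysis.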
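The argmax in step 6 returns only the winning class. If a confidence value is also wanted, the logits can be converted to probabilities with a softmax. The sketch below uses plain Python and made-up logits; the names `softmax`, `LABELS`, and `predict_with_confidence` are hypothetical, and the index-to-label mapping (0 = negative, 1 = positive) follows the convention used in the steps above.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]  # subtract the max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


LABELS = ["negative", "positive"]  # assumed mapping: index 0 = negative, 1 = positive


def predict_with_confidence(logits):
    """Return the most likely label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return LABELS[best], probs[best]


label, confidence = predict_with_confidence([-1.2, 2.3])  # made-up logits
print(label, round(confidence, 3))  # positive 0.971
```

With a real model, the list passed in would be one row of the logits produced in step 5, converted to Python floats.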

In short, BERT is a powerful natural language processing model that can help us better identify emotions in text. Using the Transformers library and Python, we can easily use BERT for sentiment analysis.

The above is the detailed content of Methods and steps for using BERT for sentiment analysis in Python. For more information, please follow other related articles on the PHP Chinese website!

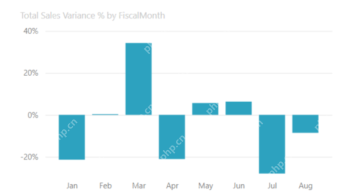

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PM

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PMHarnessing the Power of Data Visualization with Microsoft Power BI Charts In today's data-driven world, effectively communicating complex information to non-technical audiences is crucial. Data visualization bridges this gap, transforming raw data i

Expert Systems in AIApr 16, 2025 pm 12:00 PM

Expert Systems in AIApr 16, 2025 pm 12:00 PMExpert Systems: A Deep Dive into AI's Decision-Making Power Imagine having access to expert advice on anything, from medical diagnoses to financial planning. That's the power of expert systems in artificial intelligence. These systems mimic the pro

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AM

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AMFirst of all, it’s apparent that this is happening quickly. Various companies are talking about the proportions of their code that are currently written by AI, and these are increasing at a rapid clip. There’s a lot of job displacement already around

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AM

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AMThe film industry, alongside all creative sectors, from digital marketing to social media, stands at a technological crossroad. As artificial intelligence begins to reshape every aspect of visual storytelling and change the landscape of entertainment

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AM

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AMISRO's Free AI/ML Online Course: A Gateway to Geospatial Technology Innovation The Indian Space Research Organisation (ISRO), through its Indian Institute of Remote Sensing (IIRS), is offering a fantastic opportunity for students and professionals to

Local Search Algorithms in AIApr 16, 2025 am 11:40 AM

Local Search Algorithms in AIApr 16, 2025 am 11:40 AMLocal Search Algorithms: A Comprehensive Guide Planning a large-scale event requires efficient workload distribution. When traditional approaches fail, local search algorithms offer a powerful solution. This article explores hill climbing and simul

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AMThe release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AM

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AMChip giant Nvidia said on Monday it will start manufacturing AI supercomputers— machines that can process copious amounts of data and run complex algorithms— entirely within the U.S. for the first time. The announcement comes after President Trump si

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Atom editor mac version download

The most popular open source editor

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

Dreamweaver Mac version

Visual web development tools

Notepad++7.3.1

Easy-to-use and free code editor