Random forest is a powerful machine learning algorithm, popular for its ability to handle complex data sets and achieve high accuracy. On a given data set, however, its default hyperparameters may not give the best results, so hyperparameter tuning is a key step in improving model performance. By exploring different hyperparameter combinations, you can find values that yield a more robust and accurate model, with better generalization and prediction accuracy.

The main hyperparameters of a random forest include the number of trees, the maximum depth of the trees, and the minimum number of samples per node. Common tuning methods are grid search, random search, and Bayesian optimization. Grid search evaluates every combination in a predefined grid of hyperparameter values; random search samples combinations at random from the hyperparameter space. Bayesian optimization fits a surrogate model, typically a Gaussian process, to the objective values observed so far and uses it to choose the next hyperparameters to try, so as to minimize the objective function. Whichever method is used, cross-validation is an essential step for evaluating each candidate and for detecting overfitting and underfitting.
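As a minimal sketch of grid search and random search with cross-validation, the following uses scikit-learn's `GridSearchCV` and `RandomizedSearchCV`. The synthetic data set and the specific value ranges are assumptions chosen only to keep the example small; they are not recommended settings.

```python
from scipy.stats import randint
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

# Synthetic data stands in for a real data set (illustrative assumption).
X, y = make_classification(n_samples=300, n_features=10, n_informative=5, random_state=0)

# Grid search: every combination in the grid is scored with 3-fold cross-validation.
param_grid = {
    "n_estimators": [25, 50],
    "max_depth": [3, None],
    "min_samples_leaf": [1, 5],
}
grid = GridSearchCV(RandomForestClassifier(random_state=0), param_grid, cv=3)
grid.fit(X, y)

# Random search: only n_iter candidates are sampled from the given distributions.
param_dist = {
    "n_estimators": randint(20, 60),
    "max_depth": [3, 5, None],
    "min_samples_leaf": randint(1, 10),
}
rand = RandomizedSearchCV(
    RandomForestClassifier(random_state=0), param_dist, n_iter=5, cv=3, random_state=0
)
rand.fit(X, y)

print("grid search:  ", grid.best_params_, round(grid.best_score_, 3))
print("random search:", rand.best_params_, round(rand.best_score_, 3))
```

Grid search here fits 2 x 2 x 2 = 8 candidates, while random search fits only the 5 it samples, which is why random search tends to scale better when the search space is large.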

In addition, the following common techniques can help when tuning the hyperparameters of a random forest:

1. Increase the number of trees

Increasing the number of trees usually improves model accuracy, but it also increases the computational cost. The gain levels off: beyond a certain number of trees, adding more brings little further improvement.
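One way to observe this saturation is to grow a single forest step by step and record the test accuracy at each size. The sketch below assumes scikit-learn and a synthetic data set; the tree counts are arbitrary illustrative values.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic data, used only for illustration.
X, y = make_classification(n_samples=500, n_features=20, n_informative=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# warm_start=True keeps the trees already grown and only adds new ones on refit.
forest = RandomForestClassifier(warm_start=True, random_state=0)
test_accuracy = {}
for n_trees in [10, 50, 100, 200]:
    forest.set_params(n_estimators=n_trees)
    forest.fit(X_train, y_train)
    test_accuracy[n_trees] = forest.score(X_test, y_test)
    print(n_trees, "trees -> test accuracy", round(test_accuracy[n_trees], 3))
```

Training time grows roughly linearly with the number of trees, so a reasonable stopping point is where the accuracy curve flattens.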

2. Limit the depth of the tree

Limiting the depth of the trees can effectively reduce overfitting. Generally speaking, the deeper the trees, the more complex the model and the more easily it overfits.
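A rough way to see the effect of depth is to compare training and test accuracy for a few depth limits; a large gap between the two suggests overfitting. This sketch assumes scikit-learn's `max_depth` parameter and a noisy synthetic data set (`flip_y` adds label noise).

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Noisy synthetic data, used only for illustration.
X, y = make_classification(
    n_samples=500, n_features=20, n_informative=5, flip_y=0.1, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

depth_results = {}
for max_depth in [2, 5, None]:  # None lets each tree grow without a depth limit
    forest = RandomForestClassifier(n_estimators=50, max_depth=max_depth, random_state=0)
    forest.fit(X_train, y_train)
    depth_results[max_depth] = {
        "deepest_tree": max(tree.get_depth() for tree in forest.estimators_),
        "train": forest.score(X_train, y_train),
        "test": forest.score(X_test, y_test),
    }
    print(max_depth, depth_results[max_depth])
```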

3. Adjust the minimum number of samples for each node

Adjusting the minimum number of samples for each node can control the growth speed and complexity of the tree. A smaller minimum number of samples can cause the tree to grow deeper, but also increases the risk of overfitting; a larger minimum number of samples can limit the growth of the tree, but can also lead to underfitting.
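To illustrate how the minimum sample count restrains tree growth, the sketch below varies scikit-learn's `min_samples_leaf` and reports the average number of leaves per tree. The data set and the values 1, 5, and 20 are illustrative assumptions.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Noisy synthetic data, used only for illustration.
X, y = make_classification(
    n_samples=500, n_features=20, n_informative=5, flip_y=0.1, random_state=0
)

leaf_counts = {}
for min_leaf in [1, 5, 20]:
    forest = RandomForestClassifier(
        n_estimators=50, min_samples_leaf=min_leaf, random_state=0
    ).fit(X, y)
    # Average number of leaves per tree: a simple proxy for tree complexity.
    leaf_counts[min_leaf] = sum(
        tree.get_n_leaves() for tree in forest.estimators_
    ) / len(forest.estimators_)
    print("min_samples_leaf =", min_leaf, "-> average leaves per tree:", leaf_counts[min_leaf])
```

The related `min_samples_split` parameter works similarly, but it constrains the node being split rather than the resulting leaves.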

4. Choose the appropriate number of features

When training each decision tree, a random forest considers only a random subset of the features, which prevents a few features from having too large an impact on the model. Generally speaking, considering more features makes each individual tree more accurate, but it also increases the computational cost, makes the trees more alike, and raises the risk of overfitting.
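The number of features considered can be compared with cross-validation. The sketch below assumes scikit-learn, where the setting is called `max_features` and is applied at each split; the three candidate values are illustrative.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

# Synthetic data, used only for illustration.
X, y = make_classification(n_samples=500, n_features=20, n_informative=5, random_state=0)

feature_scores = {}
# "sqrt": square root of the feature count, 0.5: half of the features, None: all features.
for max_features in ["sqrt", 0.5, None]:
    forest = RandomForestClassifier(n_estimators=50, max_features=max_features, random_state=0)
    feature_scores[max_features] = cross_val_score(forest, X, y, cv=5).mean()
    print("max_features =", max_features, "-> mean CV accuracy", round(feature_scores[max_features], 3))
```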

5. Use OOB error to estimate model performance

Each decision tree in a random forest is trained on a bootstrap sample of the training data, so the samples that a tree did not see during training can be used to estimate the performance of the model. These are the out-of-bag (OOB) samples. The OOB error can be used to evaluate the generalization ability of the model without setting aside a separate validation set.
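In scikit-learn, the OOB estimate is available by passing `oob_score=True`, which requires bootstrapping to be enabled. The sketch below compares it with accuracy on a held-out test set; the data set is a synthetic assumption.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

# Synthetic data, used only for illustration.
X, y = make_classification(n_samples=500, n_features=20, n_informative=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# oob_score=True scores each training sample using only the trees that did not see it.
forest = RandomForestClassifier(
    n_estimators=200, bootstrap=True, oob_score=True, random_state=0
)
forest.fit(X_train, y_train)

oob_accuracy = forest.oob_score_
test_accuracy = forest.score(X_test, y_test)
print("OOB accuracy: ", round(oob_accuracy, 3))
print("test accuracy:", round(test_accuracy, 3))
print("OOB error:    ", round(1 - oob_accuracy, 3))
```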

6. Choose appropriate random seeds

The randomness in a random forest comes from the random selection of features and the random sampling of training data, both of which are driven by the random seed. Different random seeds may lead to somewhat different model performance, so the seed should be fixed to ensure the stability and repeatability of the model.
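The sketch below, assuming scikit-learn's `random_state` parameter, checks that two forests trained with the same seed give identical predictions, and then measures how much the cross-validated accuracy varies across a handful of seeds. The seed values are arbitrary.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

# Synthetic data, used only for illustration.
X, y = make_classification(n_samples=300, n_features=20, n_informative=5, random_state=0)

# Same seed, same data: the two forests should be identical.
first = RandomForestClassifier(n_estimators=50, random_state=42).fit(X, y)
second = RandomForestClassifier(n_estimators=50, random_state=42).fit(X, y)
same_predictions = np.array_equal(first.predict_proba(X), second.predict_proba(X))
print("identical with the same seed:", same_predictions)

# Different seeds: the spread of scores shows how sensitive the result is to the seed.
seed_scores = [
    cross_val_score(
        RandomForestClassifier(n_estimators=50, random_state=seed), X, y, cv=5
    ).mean()
    for seed in range(5)
]
print("mean accuracy:", round(np.mean(seed_scores), 3), "std:", round(np.std(seed_scores), 3))
```

If the spread across seeds is large compared with the differences between hyperparameter settings, adding more trees usually makes the comparison more reliable than picking a favourable seed.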

7. Resample samples

By resampling the training samples, the diversity of the model can be increased, thereby improving its accuracy. Commonly used resampling methods include Bootstrap and SMOTE.

8. Use the ensemble method

Random forest is itself an ensemble method, and multiple random forest models can in turn be combined to form a more powerful model. Commonly used ensemble methods include Bagging and Boosting.

9. Consider the class imbalance problem

Random forests can also be adapted to classification problems with imbalanced classes. Commonly used methods include increasing the weight of positive samples, reducing the weight of negative samples, and cost-sensitive learning.
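As one possible illustration of sample weighting, scikit-learn's `class_weight="balanced"` option weights each class inversely to its frequency. The sketch below compares minority-class recall with and without it on a synthetic data set with roughly a 9:1 class ratio (an assumption for illustration); whether weighting actually helps depends on the data.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import recall_score
from sklearn.model_selection import train_test_split

# Imbalanced synthetic data: about 90% negative and 10% positive samples.
X, y = make_classification(
    n_samples=1000, n_features=10, n_informative=5, weights=[0.9, 0.1], random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(X, y, stratify=y, random_state=0)

recalls = {}
for class_weight in [None, "balanced"]:
    forest = RandomForestClassifier(
        n_estimators=100, class_weight=class_weight, random_state=0
    ).fit(X_train, y_train)
    # Recall on the positive (minority) class.
    recalls[class_weight] = recall_score(y_test, forest.predict(X_test))
    print("class_weight =", class_weight, "-> minority recall", round(recalls[class_weight], 3))
```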

10. Use feature engineering

Feature engineering can help improve the accuracy and generalization ability of the model. Commonly used feature engineering methods include feature selection, feature extraction, feature transformation, etc.
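As a small example of feature selection, the sketch below uses a random forest's own feature importances through scikit-learn's `SelectFromModel`. Selecting by forest importance and the `"median"` threshold are choices made here for illustration, not the only way to select features.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import SelectFromModel

# Synthetic data with only 5 informative features out of 20 (illustrative assumption).
X, y = make_classification(n_samples=500, n_features=20, n_informative=5, random_state=0)

# Keep the features whose importance is at least the median importance.
selector = SelectFromModel(
    RandomForestClassifier(n_estimators=100, random_state=0), threshold="median"
)
X_selected = selector.fit_transform(X, y)

print("features before:", X.shape[1])
print("features after: ", X_selected.shape[1])
print("kept feature indices:", selector.get_support(indices=True))
```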

The above is the detailed content of Optimizing Hyperparameters of Random Forest. For more information, please follow other related articles on the PHP Chinese website!

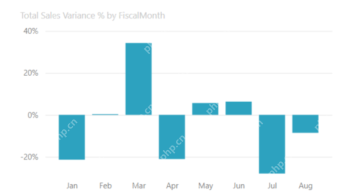

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PM

Most Used 10 Power BI Charts - Analytics VidhyaApr 16, 2025 pm 12:05 PMHarnessing the Power of Data Visualization with Microsoft Power BI Charts In today's data-driven world, effectively communicating complex information to non-technical audiences is crucial. Data visualization bridges this gap, transforming raw data i

Expert Systems in AIApr 16, 2025 pm 12:00 PM

Expert Systems in AIApr 16, 2025 pm 12:00 PMExpert Systems: A Deep Dive into AI's Decision-Making Power Imagine having access to expert advice on anything, from medical diagnoses to financial planning. That's the power of expert systems in artificial intelligence. These systems mimic the pro

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AM

Three Of The Best Vibe Coders Break Down This AI Revolution In CodeApr 16, 2025 am 11:58 AMFirst of all, it’s apparent that this is happening quickly. Various companies are talking about the proportions of their code that are currently written by AI, and these are increasing at a rapid clip. There’s a lot of job displacement already around

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AM

Runway AI's Gen-4: How Can AI Montage Go Beyond AbsurdityApr 16, 2025 am 11:45 AMThe film industry, alongside all creative sectors, from digital marketing to social media, stands at a technological crossroad. As artificial intelligence begins to reshape every aspect of visual storytelling and change the landscape of entertainment

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AM

How to Enroll for 5 Days ISRO AI Free Courses? - Analytics VidhyaApr 16, 2025 am 11:43 AMISRO's Free AI/ML Online Course: A Gateway to Geospatial Technology Innovation The Indian Space Research Organisation (ISRO), through its Indian Institute of Remote Sensing (IIRS), is offering a fantastic opportunity for students and professionals to

Local Search Algorithms in AIApr 16, 2025 am 11:40 AM

Local Search Algorithms in AIApr 16, 2025 am 11:40 AMLocal Search Algorithms: A Comprehensive Guide Planning a large-scale event requires efficient workload distribution. When traditional approaches fail, local search algorithms offer a powerful solution. This article explores hill climbing and simul

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AM

OpenAI Shifts Focus With GPT-4.1, Prioritizes Coding And Cost EfficiencyApr 16, 2025 am 11:37 AMThe release includes three distinct models, GPT-4.1, GPT-4.1 mini and GPT-4.1 nano, signaling a move toward task-specific optimizations within the large language model landscape. These models are not immediately replacing user-facing interfaces like

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AM

The Prompt: ChatGPT Generates Fake PassportsApr 16, 2025 am 11:35 AMChip giant Nvidia said on Monday it will start manufacturing AI supercomputers— machines that can process copious amounts of data and run complex algorithms— entirely within the U.S. for the first time. The announcement comes after President Trump si

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

WebStorm Mac version

Useful JavaScript development tools

EditPlus Chinese cracked version

Small size, syntax highlighting, does not support code prompt function

Dreamweaver Mac version

Visual web development tools

Zend Studio 13.0.1

Powerful PHP integrated development environment

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.