iPhone renders a 300-square-meter room in real time, reaching centimeter-level accuracy! Google's latest research: NeRF is not bankrupt yet

Real-time 3D rendering of large scenes can now be done on a computer or even a mobile phone.

Every blind corner from the living room to the master bedroom, storage room, kitchen, and bathroom can be realistically rendered on the computer, just like shooting a video of the real thing.

Moreover, you can also complete complex scene rendering on an iPhone.

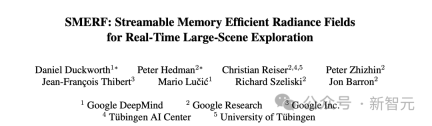

Researchers from Google, Google DeepMind and the University of Tübingen recently proposed a new technique, SMERF.

It can render large scenes in real time on a range of devices, including smartphones and laptops.

Paper address: https://arxiv.org/pdf/2312.07541.pdf

Technically speaking, SMERF is a NeRF-based method that builds on MERF (Memory-Efficient Radiance Fields), a more memory-efficient representation.

NeRF is dead?

Radiance fields have become a powerful and easy-to-optimize representation for reconstructing and re-rendering photorealistic real-world 3D scenes.

In contrast to explicit representations such as meshes and point clouds, radiance fields are typically stored as neural networks and rendered using volumetric ray marching.

Given a large enough computational budget, neural networks can concisely represent complex geometries and view-dependent effects.

As a volumetric representation, the number of operations required to render an image scales with the number of pixels rather than the number of primitives (e.g., triangles), and the best-performing models require tens of millions of network evaluations.
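To make the per-pixel cost concrete, here is a minimal sketch of the standard volume rendering quadrature used by NeRF-style methods: each pixel's ray is sampled at several intervals, and the samples are alpha-composited front to back. The function name `composite_ray` and the toy inputs are illustrative assumptions, not code from the SMERF paper; in a real model the densities and colors would come from network or grid lookups.

```python
import math

def composite_ray(densities, colors, deltas):
    """Alpha-composite samples along one ray, front to back.

    densities: non-negative volume density per sample
    colors:    (r, g, b) tuple per sample
    deltas:    length of the ray interval each sample covers
    Returns the rendered (r, g, b) and the per-sample blending weights.
    """
    transmittance = 1.0  # fraction of light that has not been absorbed yet
    rgb = [0.0, 0.0, 0.0]
    weights = []
    for sigma, color, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this interval
        weight = transmittance * alpha          # contribution to the pixel
        weights.append(weight)
        for c in range(3):
            rgb[c] += weight * color[c]
        transmittance *= 1.0 - alpha
    return tuple(rgb), weights

# Toy ray: empty space, then a dense red sample, then a green one behind it.
rgb, weights = composite_ray(
    densities=[0.0, 50.0, 50.0],
    colors=[(0, 0, 1), (1, 0, 0), (0, 1, 0)],
    deltas=[0.1, 0.1, 0.1],
)
```

In this toy case the red sample absorbs almost all of the light, so the pixel comes out nearly pure red and the green sample behind it barely contributes. The loop runs once per sample per pixel, which is why the cost grows with image resolution rather than with scene complexity.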

As a result, real-time radiance field methods make concessions in quality, speed, or representation size, and whether such representations can compete with alternatives such as Gaussian Splatting remains an open question.

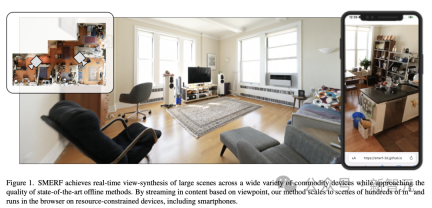

In the latest research, the authors propose a scalable method that achieves real-time rendering of large spaces at higher fidelity than before.

SMERF renders in real time with centimeter-level accuracy

SMERF is designed specifically for learning large 3D representations, such as an entire house.

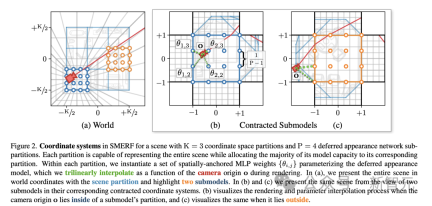

The researchers use a hierarchical model partitioning scheme, in which different parts of the space and of the learned parameters are represented by different MERFs.

This not only increases model capacity but also limits compute and memory requirements, which matters because large 3D representations like this cannot be rendered in real time with a classic NeRF.

Coordinate systems of a scene in SMERF with K=3 coordinate space partitions and P=4 deferred appearance network sub-partitions

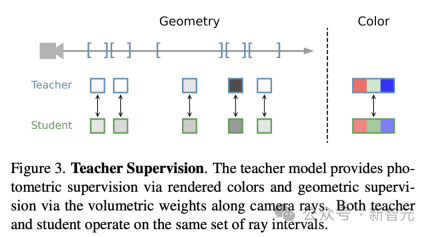
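One way to picture the spatial partitioning is as a lookup from camera position to submodel. The sketch below assumes a regular grid with `k` cells per axis over an axis-aligned scene box and clamps cameras that fall outside it; the function name `submodel_index`, the box bounds, and the clamping rule are assumptions made for illustration and are not taken from the paper.

```python
def submodel_index(origin, scene_min, scene_max, k):
    """Map a camera origin to the (i, j, l) cell of a k x k x k grid."""
    index = []
    for o, lo, hi in zip(origin, scene_min, scene_max):
        t = (o - lo) / (hi - lo)                 # normalize to [0, 1] inside the box
        cell = int(t * k)                        # which of the k slabs on this axis
        index.append(min(max(cell, 0), k - 1))   # clamp cameras outside the box
    return tuple(index)

# Hypothetical unit-cube scene split with K=3 cells per axis.
cell = submodel_index((0.9, 0.1, 0.5), (0, 0, 0), (1, 1, 1), k=3)
```

Only the submodel selected for the current camera needs to be resident and evaluated, which is how partitioning can raise total capacity without raising the per-frame cost.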

In order to improve the rendering quality of SMERF, the research team also used a "teacher-student" distillation method.

In this method, an already trained, high-quality Zip-NeRF model (the teacher) is used to train a new MERF model (the student).

The overall teacher supervision process works as follows. The teacher model provides photometric supervision through its rendered colors and geometric supervision through its volumetric weights along camera rays. Both teacher and student operate on the same set of ray intervals.
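The two supervision signals can be sketched as a single per-ray loss. This is a minimal illustration only: the squared-error color term, the absolute-difference weight term, and the `geometry_scale` factor are assumptions chosen for simplicity, not the loss functions used in the paper.

```python
def distillation_loss(student_rgb, teacher_rgb,
                      student_weights, teacher_weights,
                      geometry_scale=0.1):
    """Per-ray loss combining color and geometry supervision from a teacher.

    student_rgb / teacher_rgb:         rendered (r, g, b) for the same ray
    student_weights / teacher_weights: volumetric blending weights on the
                                       same ray intervals
    geometry_scale:                    hypothetical balancing factor
    """
    # Photometric term: mean squared error between the rendered colors.
    photometric = sum(
        (s - t) ** 2 for s, t in zip(student_rgb, teacher_rgb)
    ) / len(teacher_rgb)
    # Geometric term: how differently the two models distribute weight
    # along the ray. Shared intervals make this element-wise comparison valid.
    geometric = sum(abs(s - t) for s, t in zip(student_weights, teacher_weights))
    return photometric + geometry_scale * geometric

# A student that matches the teacher exactly incurs zero loss.
perfect = distillation_loss((0.5, 0.5, 0.5), (0.5, 0.5, 0.5), [0.2, 0.8], [0.2, 0.8])
# A student with the wrong color and misplaced weight is penalized on both terms.
poor = distillation_loss((1.0, 0.0, 0.0), (0.0, 0.0, 0.0), [0.5, 0.5], [1.0, 0.0])
```

Because the teacher can render any viewpoint, supervision of this kind is not limited to the original photos, and the geometric term gives the student a direct signal about where surfaces lie instead of leaving it to infer them from color alone.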

This approach allows the researchers to transfer the detail and image quality of the powerful Zip-NeRF model to a more efficient and faster structure.

This is especially useful for apps on less powerful devices such as smartphones and laptops.

Experimental evaluation

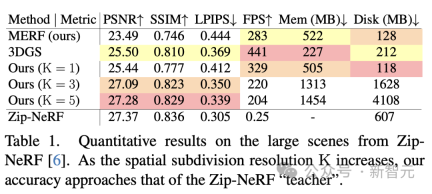

The researchers first evaluated the method on the four large scenes introduced by Zip-NeRF: Berlin, Alameda, London, and New York.

Each of these scenes was captured in 1,000-2,000 photos using a 180° fisheye lens. For a like-for-like comparison with 3DGS, the researchers cropped the photos to 110° and used COLMAP to re-estimate the camera parameters.

The results in Table 1 show that, for moderate degrees of spatial subdivision K, the accuracy of the proposed method significantly exceeds that of MERF and 3DGS.

As K increases, the reconstruction accuracy of the model improves and approaches that of its Zip-NeRF teacher. At K=5, the gap is less than 0.1 PSNR and 0.01 SSIM.
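For a sense of scale, PSNR is a logarithmic function of mean squared error, so a 0.1 dB gap is small. The sketch below uses the standard PSNR definition for images with values in [0, 1]; the MSE numbers are made up for illustration and are not results from the paper.

```python
import math

def psnr(mse, max_value=1.0):
    """Peak signal-to-noise ratio in dB for a given mean squared error."""
    return 10.0 * math.log10(max_value ** 2 / mse)

# Hypothetical teacher error, and a student whose PSNR is 0.1 dB lower.
teacher_mse = 0.001
student_mse = teacher_mse * 10 ** 0.01   # about 2.3% more squared error

gap_db = psnr(teacher_mse) - psnr(student_mse)
```

In other words, a student within 0.1 dB of its teacher has only about 2.3% more squared error per pixel.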

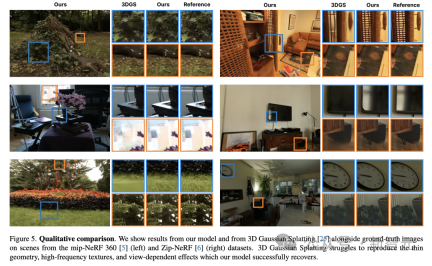

The researchers also found that these quantitative gains understate the qualitative improvement in reconstruction accuracy, as shown in Figure 5.

In large scenes, the SMERF method consistently models thin geometry, high-frequency textures, specular highlights, and distant content beyond the reach of real-time baselines.

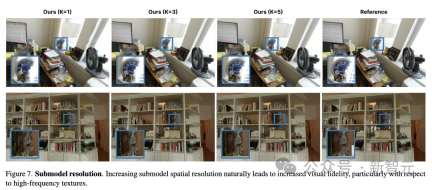

At the same time, the researchers found that increasing sub-model resolution naturally improves quality, especially for high-frequency textures.

In fact, the researchers found that renders from the new method are almost indistinguishable from those of Zip-NeRF, as shown in Figure 8.

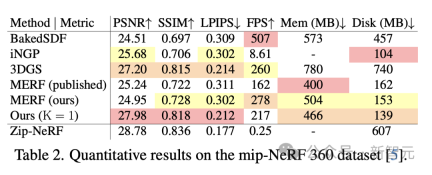

Additionally, the researchers evaluated the proposed method on the mip-NeRF 360 dataset of indoor and outdoor scenes.

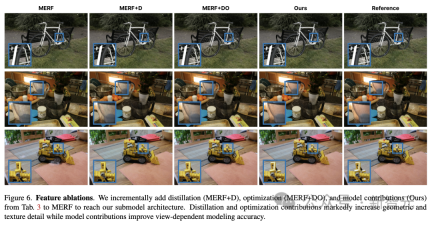

These scenes are much smaller than those in the Zip-NeRF dataset, so no spatial subdivision is required to obtain high-quality results. As shown in Table 2, the K=1 version of the model outperforms all previous real-time models in this benchmark in terms of image quality and is comparable in rendering speed to 3DGS.

Figures 6 and 8 qualitatively illustrate this improvement. The method proposed by the researchers is much better at representing high-frequency geometry and textures, while eliminating distracting floaters and fog.

Web pages can transmit realistic 3D spaces

Once trained, SMERF enables full six-degrees-of-freedom navigation in a web browser, with real-time rendering on commodity smartphones and laptops.

The ability to render large 3D scenes in real time is important for a variety of applications, including video games, virtual and augmented reality, and professional design and architecture tools.

For example, in Google Immersive Maps, real-time navigation is possible.

However, the new method also has limitations. Although SMERF offers excellent reconstruction quality and memory efficiency, it has a high storage cost, long loading times, and a heavy training workload.

Even so, this study shows that NeRFs and similar radiance fields may still hold advantages over 3D Gaussian Splatting in the future.
