Technology peripherals

Technology peripherals AI

AI Sun Yat-sen University's new spatiotemporal knowledge embedding framework drives the latest progress in video scene graph generation tasks, published in TIP '24

Sun Yat-sen University's new spatiotemporal knowledge embedding framework drives the latest progress in video scene graph generation tasks, published in TIP '24Video Scene Graph Generation (VidSGG) aims to identify objects in visual scenes and infer visual relationships between them.

The task requires not only a comprehensive understanding of each object scattered throughout the scene, but also an in-depth study of their movement and interaction over time.

Recently, researchers from Sun Yat-sen University published a paper in the top artificial intelligence journal IEEE T-IP. They explored related tasks and found that: each pair of object combinations and The relationship between them has spatial co-occurrence correlation within each image, and temporal consistency/translation correlation between different images.

Paper link: https://arxiv.org/abs/2309.13237

Based on these first Based on prior knowledge, the researchers proposed a Transformer (STKET) based on spatiotemporal knowledge embedding to incorporate prior spatiotemporal knowledge into the multi-head cross attention mechanism to learn more representative visual relationship representations.

Specifically, spatial co-occurrence and temporal transformation correlation are first statistically learned; then, a spatiotemporal knowledge embedding layer is designed to fully explore the interaction between visual representation and knowledge. , respectively generate spatial and temporal knowledge-embedded visual relation representations; finally, the authors aggregate these features to predict the final semantic labels and their visual relations.

Extensive experiments show that the framework proposed in this article is significantly better than the current competing algorithms. Currently, the paper has been accepted.

Paper Overview

With the rapid development of the field of scene understanding, many researchers have begun to try to use various frameworks to solve scene graph generation ( Scene Graph Generation (SGG) task and has made considerable progress.

However, these methods often only consider the situation of a single image and ignore the large amount of contextual information existing in the time series, resulting in the inability of most existing scene graph generation algorithms to accurately Identify dynamic visual relationships contained in a given video.

Therefore, many researchers are committed to developing Video Scene Graph Generation (VidSGG) algorithms to solve this problem.

Current work focuses on aggregating object-level visual information from spatial and temporal perspectives to learn corresponding visual relationship representations.

However, due to the large variance in the visual appearance of various objects and interactive actions and the significant long-tail distribution of visual relationships caused by video collection, simply using visual information alone can easily lead to model predictions Wrong visual relationship.

In response to the above problems, researchers have done the following two aspects of work:

Firstly, it is proposed to mine the prior space-time contained in the training samples. Knowledge is used to advance the field of video scene graph generation. Among them, prior spatiotemporal knowledge includes:

1) Spatial co-occurrence correlation: The relationship between certain object categories tends to specific interactions.

2) Temporal consistency/transition correlation: A given pair of relationships tends to be consistent across consecutive video clips, or has a high probability of transitioning to another specific relationship.

Secondly, a novel Transformer (Spatial-Temporal Knowledge-Embedded Transformer, STKET) framework based on spatial-temporal knowledge embedding is proposed.

This framework incorporates prior spatiotemporal knowledge into the multi-head cross-attention mechanism to learn more representative visual relationship representations. According to the comparison results obtained on the test benchmark, it can be found that the STKET framework proposed by the researchers outperforms the previous state-of-the-art methods.

Figure 1: Due to the variable visual appearance and the long-tail distribution of visual relationships, video scene graph generation is full of challenges

Transformer based on spatiotemporal knowledge embedding

Spatial and temporal knowledge representation

When inferring visual relationships, humans not only use visual clues, but also use accumulated prior knowledge empirical knowledge [1, 2]. Inspired by this, researchers propose to extract prior spatiotemporal knowledge directly from the training set to facilitate the video scene graph generation task.

Among them, the spatial co-occurrence correlation is specifically manifested in that when a given object is combined, its visual relationship distribution will be highly skewed (for example, the distribution of the visual relationship between "person" and "cup" is obviously different from " The distribution between "dog" and "toy") and time transfer correlation are specifically manifested in that the transition probability of each visual relationship will change significantly when the visual relationship at the previous moment is given (for example, when the visual relationship at the previous moment is known When it is "eating", the probability of the visual relationship shifting to "writing" at the next moment is greatly reduced).

As shown in Figure 2, after you can intuitively feel the given object combination or previous visual relationship, the prediction space can be greatly reduced.

Figure 2: Spatial co-occurrence probability [3] and temporal transition probability of visual relationships

Specifically, for the combination of the i-th type object and the j-th type object, and the relationship between the i-th type object and the j-th type object at the previous moment, the corresponding spatial co-occurrence probability matrix E^{i,j is first obtained statistically } and the time transition probability matrix Ex^{i,j}.

Then, input it into the fully connected layer to obtain the corresponding feature representation, and use the corresponding objective function to ensure that the knowledge representation learned by the model contains the corresponding prior spatiotemporal knowledge. .

Figure 3: The process of learning spatial (a) and temporal (b) knowledge representation

Knowledge Embedding Note Force layer

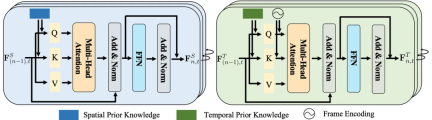

Spatial knowledge usually contains information about the positions, distances and relationships between entities. Temporal knowledge, on the other hand, involves the sequence, duration, and intervals between actions.

Given their unique properties, treating them individually can allow specialized modeling to more accurately capture inherent patterns.

Therefore, the researchers designed a spatiotemporal knowledge embedding layer to thoroughly explore the interaction between visual representation and spatiotemporal knowledge.

Figure 4: Space (left) and time (right) knowledge embedding layer

Spatial-temporal aggregation module

As mentioned above, the spatial knowledge embedding layer explores the spatial co-occurrence correlation within each image, and the temporal knowledge embedding layer explores the temporal transfer correlation between different images, thereby fully exploring Interactions between visual representations and spatiotemporal knowledge.

Nevertheless, these two layers ignore long-term contextual information, which is helpful for identifying most dynamically changing visual relationships.

To this end, the researchers further designed a spatiotemporal aggregation (STA) module to aggregate these representations of each object pair to predict the final semantic labels and their relationships. It takes as input spatial and temporal embedded relationship representations of the same subject-object pairs in different frames.

Specifically, the researchers concatenated these representations of the same object pairs to generate contextual representations.

Then, to find the same subject-object pairs in different frames, the predicted object labels and IoU (i.e. Intersection of Unions) are adopted to match the same subject-object pairs detected in the frames .

Finally, considering that the relationship in the frame has different representations in different batches, the earliest representation in the sliding window is selected.

Experimental results

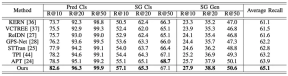

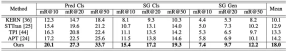

In order to comprehensively evaluate the performance of the proposed framework, the researchers compared the existing video scene graph generation method (STTran , TPI, APT), advanced image scene graph generation methods (KERN, VCTREE, ReIDN, GPS-Net) were also selected for comparison.

Among them, in order to ensure fair comparison, the image scene graph generation method achieves the goal of generating a corresponding scene graph for a given video by identifying each frame of image.

Figure 5: Experimental results using Recall as the evaluation index on the Action Genome data set

Figure 6: Experimental results using mean Recall as the evaluation index on the Action Genome data set

The above is the detailed content of Sun Yat-sen University's new spatiotemporal knowledge embedding framework drives the latest progress in video scene graph generation tasks, published in TIP '24. For more information, please follow other related articles on the PHP Chinese website!

![Can't use ChatGPT! Explaining the causes and solutions that can be tested immediately [Latest 2025]](https://img.php.cn/upload/article/001/242/473/174717025174979.jpg?x-oss-process=image/resize,p_40) Can't use ChatGPT! Explaining the causes and solutions that can be tested immediately [Latest 2025]May 14, 2025 am 05:04 AM

Can't use ChatGPT! Explaining the causes and solutions that can be tested immediately [Latest 2025]May 14, 2025 am 05:04 AMChatGPT is not accessible? This article provides a variety of practical solutions! Many users may encounter problems such as inaccessibility or slow response when using ChatGPT on a daily basis. This article will guide you to solve these problems step by step based on different situations. Causes of ChatGPT's inaccessibility and preliminary troubleshooting First, we need to determine whether the problem lies in the OpenAI server side, or the user's own network or device problems. Please follow the steps below to troubleshoot: Step 1: Check the official status of OpenAI Visit the OpenAI Status page (status.openai.com) to see if the ChatGPT service is running normally. If a red or yellow alarm is displayed, it means Open

Calculating The Risk Of ASI Starts With Human MindsMay 14, 2025 am 05:02 AM

Calculating The Risk Of ASI Starts With Human MindsMay 14, 2025 am 05:02 AMOn 10 May 2025, MIT physicist Max Tegmark told The Guardian that AI labs should emulate Oppenheimer’s Trinity-test calculus before releasing Artificial Super-Intelligence. “My assessment is that the 'Compton constant', the probability that a race to

An easy-to-understand explanation of how to write and compose lyrics and recommended tools in ChatGPTMay 14, 2025 am 05:01 AM

An easy-to-understand explanation of how to write and compose lyrics and recommended tools in ChatGPTMay 14, 2025 am 05:01 AMAI music creation technology is changing with each passing day. This article will use AI models such as ChatGPT as an example to explain in detail how to use AI to assist music creation, and explain it with actual cases. We will introduce how to create music through SunoAI, AI jukebox on Hugging Face, and Python's Music21 library. Through these technologies, everyone can easily create original music. However, it should be noted that the copyright issue of AI-generated content cannot be ignored, and you must be cautious when using it. Let’s explore the infinite possibilities of AI in the music field together! OpenAI's latest AI agent "OpenAI Deep Research" introduces: [ChatGPT]Ope

What is ChatGPT-4? A thorough explanation of what you can do, the pricing, and the differences from GPT-3.5!May 14, 2025 am 05:00 AM

What is ChatGPT-4? A thorough explanation of what you can do, the pricing, and the differences from GPT-3.5!May 14, 2025 am 05:00 AMThe emergence of ChatGPT-4 has greatly expanded the possibility of AI applications. Compared with GPT-3.5, ChatGPT-4 has significantly improved. It has powerful context comprehension capabilities and can also recognize and generate images. It is a universal AI assistant. It has shown great potential in many fields such as improving business efficiency and assisting creation. However, at the same time, we must also pay attention to the precautions in its use. This article will explain the characteristics of ChatGPT-4 in detail and introduce effective usage methods for different scenarios. The article contains skills to make full use of the latest AI technologies, please refer to it. OpenAI's latest AI agent, please click the link below for details of "OpenAI Deep Research"

Explaining how to use the ChatGPT app! Japanese support and voice conversation functionMay 14, 2025 am 04:59 AM

Explaining how to use the ChatGPT app! Japanese support and voice conversation functionMay 14, 2025 am 04:59 AMChatGPT App: Unleash your creativity with the AI assistant! Beginner's Guide The ChatGPT app is an innovative AI assistant that handles a wide range of tasks, including writing, translation, and question answering. It is a tool with endless possibilities that is useful for creative activities and information gathering. In this article, we will explain in an easy-to-understand way for beginners, from how to install the ChatGPT smartphone app, to the features unique to apps such as voice input functions and plugins, as well as the points to keep in mind when using the app. We'll also be taking a closer look at plugin restrictions and device-to-device configuration synchronization

How do I use the Chinese version of ChatGPT? Explanation of registration procedures and feesMay 14, 2025 am 04:56 AM

How do I use the Chinese version of ChatGPT? Explanation of registration procedures and feesMay 14, 2025 am 04:56 AMChatGPT Chinese version: Unlock new experience of Chinese AI dialogue ChatGPT is popular all over the world, did you know it also offers a Chinese version? This powerful AI tool not only supports daily conversations, but also handles professional content and is compatible with Simplified and Traditional Chinese. Whether it is a user in China or a friend who is learning Chinese, you can benefit from it. This article will introduce in detail how to use ChatGPT Chinese version, including account settings, Chinese prompt word input, filter use, and selection of different packages, and analyze potential risks and response strategies. In addition, we will also compare ChatGPT Chinese version with other Chinese AI tools to help you better understand its advantages and application scenarios. OpenAI's latest AI intelligence

5 AI Agent Myths You Need To Stop Believing NowMay 14, 2025 am 04:54 AM

5 AI Agent Myths You Need To Stop Believing NowMay 14, 2025 am 04:54 AMThese can be thought of as the next leap forward in the field of generative AI, which gave us ChatGPT and other large-language-model chatbots. Rather than simply answering questions or generating information, they can take action on our behalf, inter

An easy-to-understand explanation of the illegality of creating and managing multiple accounts using ChatGPTMay 14, 2025 am 04:50 AM

An easy-to-understand explanation of the illegality of creating and managing multiple accounts using ChatGPTMay 14, 2025 am 04:50 AMEfficient multiple account management techniques using ChatGPT | A thorough explanation of how to use business and private life! ChatGPT is used in a variety of situations, but some people may be worried about managing multiple accounts. This article will explain in detail how to create multiple accounts for ChatGPT, what to do when using it, and how to operate it safely and efficiently. We also cover important points such as the difference in business and private use, and complying with OpenAI's terms of use, and provide a guide to help you safely utilize multiple accounts. OpenAI

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

SublimeText3 Chinese version

Chinese version, very easy to use

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.

Notepad++7.3.1

Easy-to-use and free code editor

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.