Technology peripherals

Technology peripherals AI

AI The TF-T2V technology jointly developed by Huake, Ali and other companies reduces the cost of AI video production!

The TF-T2V technology jointly developed by Huake, Ali and other companies reduces the cost of AI video production!In the past two years, with the opening of large-scale graphic and text data sets such as LAION-5B, a series of methods with amazing effects have emerged in the field of image generation, such as Stable Diffusion, DALL-E 2, ControlNet and Composer . The emergence of these methods has made great breakthroughs and progress in the field of image generation. The field of image generation has developed rapidly in just the past two years.

However, video generation still faces huge challenges. First, compared with image generation, video generation needs to process higher-dimensional data and needs to take into account the additional time dimension, which brings about the problem of timing modeling. To drive learning of temporal dynamics, we need more video-text pair data. However, accurate temporal annotation of videos is very expensive, which limits the size of video-text datasets. Currently, the existing WebVid10M video dataset only contains 10.7M video-text pairs. Compared with the LAION-5B image dataset, the data size is far different. This severely restricts the possibility of large-scale expansion of video generation models.

In order to solve the above problems, the joint research team of Huazhong University of Science and Technology, Alibaba Group, Zhejiang University and Ant Group recently released the TF-T2V video solution:

##Paper address: https://arxiv.org/abs/2312.15770

Project Home page: https://tf-t2v.github.io/

Source code will be released soon: https://github.com/ali-vilab/i2vgen-xl (VGen project) .

This solution takes a different approach and proposes video generation based on large-scale text-free annotated video data, which can learn rich motion dynamics.

Let’s first take a look at the video generation effect of TF-T2V:

文生视频 Task

Prompt word: Generate a video of a large frost-like creature on a snow-covered land.

Prompt word: Generate an animated video of a cartoon bee.

Prompt word: Generate a video containing a futuristic fantasy motorcycle.

Prompt word: Generate a video of a little boy smiling happily.

Prompt words: Generate a video of an old man feeling a headache.

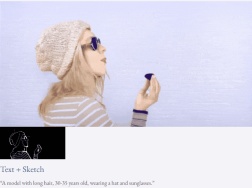

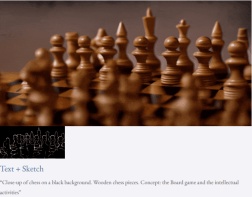

Combined video generation task

Given text and depth map Or text and sketches, TF-T2V can perform controllable video generation:

It can also perform high-resolution video synthesis:

##

Semi-supervised setting

The TF-T2V method in the semi-supervised setting can also generate videos that conform to the description of motion text, such as "People run from right to left."

Method introduction

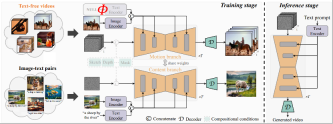

The core idea of TF-T2V The model is divided into a motion branch and an appearance branch. The motion branch is used to model motion dynamics, and the appearance branch is used to learn visual appearance information. These two branches are trained jointly, and finally can achieve text-driven video generation.

In order to improve the temporal consistency of generated videos, the author team also proposed a temporal consistency loss to explicitly learn the continuity between video frames.

It is worth mentioning that TF-T2V is a general framework that is not only suitable for Vincent video tasks, but also for combined Video generation tasks, such as sketch-to-video, video inpainting, first frame-to-video, etc.

For specific details and more experimental results, please refer to the original paper or the project homepage.

In addition, the author team also used TF-T2V as a teacher model and used consistent distillation technology to obtain the VideoLCM model:

Paper address: https://arxiv.org/abs/2312.09109

Project homepage: https://tf-t2v.github.io/

The source code will be released soon: https://github.com/ali-vilab/i2vgen-xl (VGen project).

Unlike previous video generation methods that require about 50 steps of DDIM denoising, the VideoLCM method based on TF-T2V can generate high-fidelity videos with only about 4 steps of inference denoising. , greatly improving the efficiency of video generation.

Let’s take a look at the results of VideoLCM’s 4-step denoising inference:

For specific details and more experimental results, please refer to the original VideoLCM paper or the project homepage.

In short, the TF-T2V solution brings new ideas to the field of video generation and overcomes the challenges caused by data set size and labeling difficulties. Leveraging large-scale text-free annotation video data, TF-T2V is able to generate high-quality videos and is applied to a variety of video generation tasks. This innovation will promote the development of video generation technology and bring broader application scenarios and business opportunities to all walks of life.

The above is the detailed content of The TF-T2V technology jointly developed by Huake, Ali and other companies reduces the cost of AI video production!. For more information, please follow other related articles on the PHP Chinese website!

解读CRISP-ML(Q):机器学习生命周期流程Apr 08, 2023 pm 01:21 PM

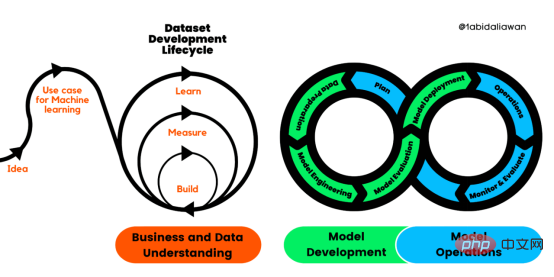

解读CRISP-ML(Q):机器学习生命周期流程Apr 08, 2023 pm 01:21 PM译者 | 布加迪审校 | 孙淑娟目前,没有用于构建和管理机器学习(ML)应用程序的标准实践。机器学习项目组织得不好,缺乏可重复性,而且从长远来看容易彻底失败。因此,我们需要一套流程来帮助自己在整个机器学习生命周期中保持质量、可持续性、稳健性和成本管理。图1. 机器学习开发生命周期流程使用质量保证方法开发机器学习应用程序的跨行业标准流程(CRISP-ML(Q))是CRISP-DM的升级版,以确保机器学习产品的质量。CRISP-ML(Q)有六个单独的阶段:1. 业务和数据理解2. 数据准备3. 模型

人工智能的环境成本和承诺Apr 08, 2023 pm 04:31 PM

人工智能的环境成本和承诺Apr 08, 2023 pm 04:31 PM人工智能(AI)在流行文化和政治分析中经常以两种极端的形式出现。它要么代表着人类智慧与科技实力相结合的未来主义乌托邦的关键,要么是迈向反乌托邦式机器崛起的第一步。学者、企业家、甚至活动家在应用人工智能应对气候变化时都采用了同样的二元思维。科技行业对人工智能在创建一个新的技术乌托邦中所扮演的角色的单一关注,掩盖了人工智能可能加剧环境退化的方式,通常是直接伤害边缘人群的方式。为了在应对气候变化的过程中充分利用人工智能技术,同时承认其大量消耗能源,引领人工智能潮流的科技公司需要探索人工智能对环境影响的

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PM

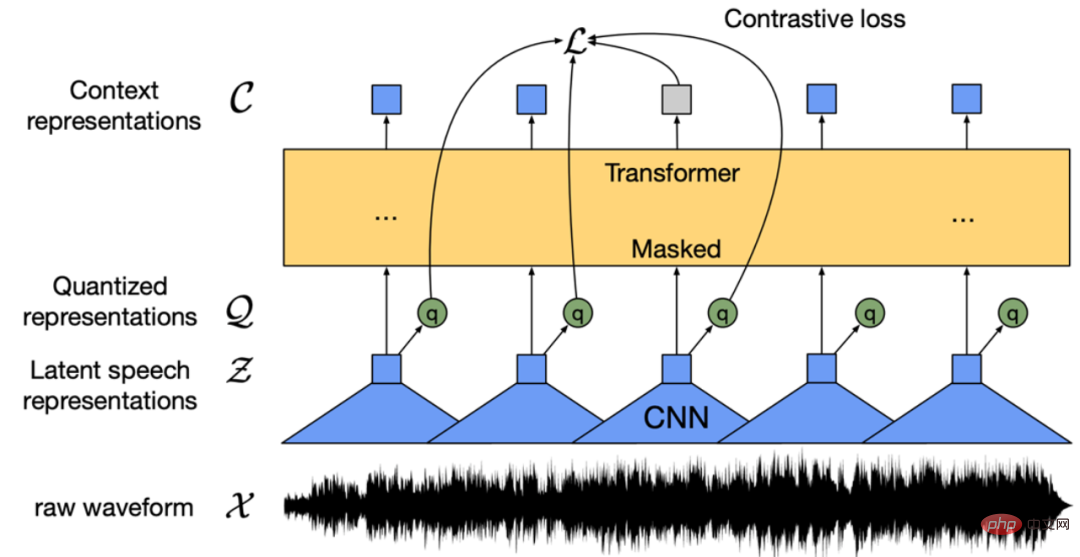

找不到中文语音预训练模型?中文版 Wav2vec 2.0和HuBERT来了Apr 08, 2023 pm 06:21 PMWav2vec 2.0 [1],HuBERT [2] 和 WavLM [3] 等语音预训练模型,通过在多达上万小时的无标注语音数据(如 Libri-light )上的自监督学习,显著提升了自动语音识别(Automatic Speech Recognition, ASR),语音合成(Text-to-speech, TTS)和语音转换(Voice Conversation,VC)等语音下游任务的性能。然而这些模型都没有公开的中文版本,不便于应用在中文语音研究场景。 WenetSpeech [4] 是

条形统计图用什么呈现数据Jan 20, 2021 pm 03:31 PM

条形统计图用什么呈现数据Jan 20, 2021 pm 03:31 PM条形统计图用“直条”呈现数据。条形统计图是用一个单位长度表示一定的数量,根据数量的多少画成长短不同的直条,然后把这些直条按一定的顺序排列起来;从条形统计图中很容易看出各种数量的多少。条形统计图分为:单式条形统计图和复式条形统计图,前者只表示1个项目的数据,后者可以同时表示多个项目的数据。

自动驾驶车道线检测分类的虚拟-真实域适应方法Apr 08, 2023 pm 02:31 PM

自动驾驶车道线检测分类的虚拟-真实域适应方法Apr 08, 2023 pm 02:31 PMarXiv论文“Sim-to-Real Domain Adaptation for Lane Detection and Classification in Autonomous Driving“,2022年5月,加拿大滑铁卢大学的工作。虽然自主驾驶的监督检测和分类框架需要大型标注数据集,但光照真实模拟环境生成的合成数据推动的无监督域适应(UDA,Unsupervised Domain Adaptation)方法则是低成本、耗时更少的解决方案。本文提出对抗性鉴别和生成(adversarial d

数据通信中的信道传输速率单位是bps,它表示什么Jan 18, 2021 pm 02:58 PM

数据通信中的信道传输速率单位是bps,它表示什么Jan 18, 2021 pm 02:58 PM数据通信中的信道传输速率单位是bps,它表示“位/秒”或“比特/秒”,即数据传输速率在数值上等于每秒钟传输构成数据代码的二进制比特数,也称“比特率”。比特率表示单位时间内传送比特的数目,用于衡量数字信息的传送速度;根据每帧图像存储时所占的比特数和传输比特率,可以计算数字图像信息传输的速度。

数据分析方法有哪几种Dec 15, 2020 am 09:48 AM

数据分析方法有哪几种Dec 15, 2020 am 09:48 AM数据分析方法有4种,分别是:1、趋势分析,趋势分析一般用于核心指标的长期跟踪;2、象限分析,可依据数据的不同,将各个比较主体划分到四个象限中;3、对比分析,分为横向对比和纵向对比;4、交叉分析,主要作用就是从多个维度细分数据。

聊一聊Python 实现数据的序列化操作Apr 12, 2023 am 09:31 AM

聊一聊Python 实现数据的序列化操作Apr 12, 2023 am 09:31 AM在日常开发中,对数据进行序列化和反序列化是常见的数据操作,Python提供了两个模块方便开发者实现数据的序列化操作,即 json 模块和 pickle 模块。这两个模块主要区别如下:json 是一个文本序列化格式,而 pickle 是一个二进制序列化格式;json 是我们可以直观阅读的,而 pickle 不可以;json 是可互操作的,在 Python 系统之外广泛使用,而 pickle 则是 Python 专用的;默认情况下,json 只能表示 Python 内置类型的子集,不能表示自定义的

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

MantisBT

Mantis is an easy-to-deploy web-based defect tracking tool designed to aid in product defect tracking. It requires PHP, MySQL and a web server. Check out our demo and hosting services.

Atom editor mac version download

The most popular open source editor

Dreamweaver Mac version

Visual web development tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 English version

Recommended: Win version, supports code prompts!