New technology Repaint123: Efficiently generate high-quality single-view 3D in just 2 minutes!

Converting an image into 3D content usually relies on Score Distillation Sampling (SDS). Although the results are impressive, several shortcomings remain, including multi-view inconsistency, over-saturated and over-smoothed textures, and slow generation.

To solve these problems, researchers from Peking University, National University of Singapore, Wuhan University and other institutions proposed Repaint123, which alleviates multi-view bias and texture degradation and accelerates the generation process.

Paper address: https://arxiv.org/pdf/2312.13271.pdf

GitHub: https://github.com/PKU-YuanGroup/repaint123

Project address: https://pku-yuangroup.github.io/repaint123/

The core idea is to combine the image generation capability of a 2D diffusion model with the texture alignment capability of the repainting strategy to produce high-quality, consistent multi-view images.

The authors further propose a visibility-aware adaptive repainting strength to improve the quality of the generated images.

The resulting high-quality, multi-view consistent images enable fast 3D content generation using a simple mean squared error (MSE) loss.

Experiments show that Repaint123 can generate high-quality 3D content with multi-view consistency and fine textures in 2 minutes.

The main contributions of this article are as follows:

1. Repaint123 comprehensively considers a controllable repainting process for image-to-3D generation, and can generate consistent, high-quality image sequences across multiple views.

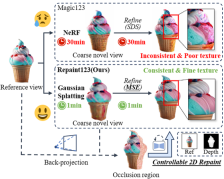

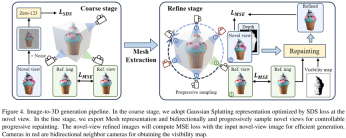

2. Repaint123 proposes a simple baseline for single-view 3D generation. The coarse stage uses Zero123 as the 3D prior with SDS loss to quickly optimize the Gaussian Splatting geometry (1 minute). The fine stage uses Stable Diffusion as the 2D prior with MSE loss to quickly refine the mesh texture (1 minute).

3. Extensive experiments have verified the effectiveness of the Repaint123 method, which can generate 3D content matching the quality of 2D generation from a single image in just 2 minutes.

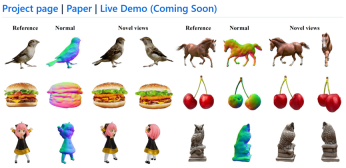

Figure 1: Paper motivation: fast, consistent, high-quality single-view 3D generation

Specific method:

Repaint123’s main improvements focus on the mesh refinement stage, which consists of two parts: consistent high-quality image sequence generation from multiple perspectives, and fast and high-quality 3D reconstruction.

In the coarse stage, the authors use 3D Gaussian Splatting as the 3D representation, and the coarse geometry and texture are optimized through SDS loss.

In the refinement stage, the authors convert the coarse model into a mesh representation and propose a progressive, controllable repainting scheme for texture refinement.

First, the authors progressively repaint the regions that were invisible in the previously optimized views, using geometric control and guidance from the reference image, to obtain a view-consistent image for each novel view.

Then, the authors adopt image prompts for classifier-free guidance and design an adaptive repainting strategy to further improve the generation quality of overlapping regions.

Finally, by generating view-consistent high-quality images, the authors leverage a simple MSE loss to quickly generate 3D content.

Multi-view consistent high-quality image sequence generation:

As shown in Figure 2, multi-view consistent high-quality image sequence generation is divided into the following four parts:

Figure 2: Multi-view consistent image generation process

DDIM Inversion

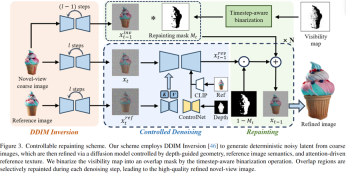

To preserve the 3D-consistent low-frequency texture information generated in the coarse stage, the authors use DDIM Inversion to invert the image to a determined intermediate latent. This latent serves as the basis for the subsequent denoising, which generates faithful and consistent images.
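The idea of inverting to an intermediate latent and then denoising from it can be illustrated with the standard deterministic DDIM update. This is a toy sketch on a single scalar value, not the authors' implementation: the schedule `ALPHAS` and the constant `toy_eps_model` are hypothetical stand-ins for a real noise schedule and the diffusion model's noise prediction.

```python
import math

def ddim_step(x, eps, alpha_from, alpha_to):
    """Deterministic DDIM update between two noise levels.

    alpha_from and alpha_to are cumulative alpha-bar values in (0, 1].
    Moving to a smaller alpha-bar adds noise (inversion); moving to a
    larger one removes it (denoising).
    """
    x0_pred = (x - math.sqrt(1.0 - alpha_from) * eps) / math.sqrt(alpha_from)
    return math.sqrt(alpha_to) * x0_pred + math.sqrt(1.0 - alpha_to) * eps

def toy_eps_model(x, t):
    # Hypothetical stand-in for the network's noise prediction.
    return 0.3

def ddim_invert(x0, alphas, eps_model):
    """Walk a clean value up the schedule to an intermediate latent."""
    x = x0
    for t in range(len(alphas) - 1):
        x = ddim_step(x, eps_model(x, t), alphas[t], alphas[t + 1])
    return x

def ddim_denoise(xt, alphas, eps_model):
    """Walk the latent back down the same schedule."""
    x = xt
    for t in range(len(alphas) - 1, 0, -1):
        x = ddim_step(x, eps_model(x, t), alphas[t], alphas[t - 1])
    return x

# Assumed schedule: alpha-bar decreases and stops mid-way, so the latent
# still carries the low-frequency content of the input.
ALPHAS = [1.0, 0.9, 0.7, 0.5]
latent = ddim_invert(0.8, ALPHAS, toy_eps_model)
restored = ddim_denoise(latent, ALPHAS, toy_eps_model)
```

With a constant noise prediction the round trip is exact up to floating-point error. With a real network the inversion is only approximate, which is why stopping at an intermediate noise level helps keep the result faithful to the input.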

Controllable Denoising

To control the consistency of geometry and long-range texture during denoising, the authors use ControlNet to introduce the depth map rendered from the coarse model as a geometric prior, and inject the attention features of the reference image for texture transfer.

In addition, to perform classifier-free guidance and improve image quality, the paper uses CLIP to encode the reference image as an image prompt that guides the denoising network.
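Classifier-free guidance combines an unconditional and a conditional noise prediction at every denoising step. The sketch below shows only the standard combination rule on plain lists; the function name and the guidance scale are illustrative assumptions, and in this setting the conditional prediction would come from the network run with the image prompt.

```python
def classifier_free_guidance(eps_uncond, eps_cond, scale):
    """Push the prediction away from the unconditional one.

    scale = 0 ignores the prompt, scale = 1 returns the conditional
    prediction, and larger values follow the prompt more strongly.
    """
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]

# Toy noise predictions for two latent elements (made-up values).
guided = classifier_free_guidance([0.0, 1.0], [1.0, 1.0], 7.5)
```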

Obtain Occlusion Mask

To obtain the occlusion mask Mn for a novel view Vn with rendered image In and depth map Dn, given the repainted reference view Vr with image Ir and depth map Dr, the authors first back-project the 2D pixels of Vr into a 3D point cloud Pr using the depth Dr, and then render Pr from the novel view Vn to obtain the depth map Dn'.

The authors treat the regions where the two novel-view depth maps (Dn and Dn') have different depth values as the occluded regions in the occlusion mask.
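The depth-comparison step can be sketched in a few functions. This is a minimal illustration under stated assumptions, not the authors' code: it uses a pinhole camera with hypothetical intrinsics `f`, `cx`, `cy`, a relative pose `(R, t)` from the reference view to the novel view, nearest-pixel splatting, and an arbitrary tolerance `tol`.

```python
import math

def backproject(depth, f, cx, cy):
    """Lift every pixel (u, v) with depth z to a 3D point in camera space."""
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            points.append(((u - cx) * z / f, (v - cy) * z / f, z))
    return points

def render_depth(points, R, t, f, cx, cy, height, width):
    """Z-buffer the point cloud into a depth map seen from pose (R, t)."""
    out = [[math.inf] * width for _ in range(height)]
    for p in points:
        x, y, z = (sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))
        if z <= 0:
            continue  # behind the camera
        u, v = round(f * x / z + cx), round(f * y / z + cy)
        if 0 <= u < width and 0 <= v < height:
            out[v][u] = min(out[v][u], z)  # keep the nearest surface
    return out

def occlusion_mask(d_n, d_n_prime, tol=1e-3):
    """True where the two novel-view depth maps disagree.

    Pixels that no reference point lands on stay at infinity in
    d_n_prime and are therefore marked as occluded.
    """
    return [[abs(a - b) > tol for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(d_n, d_n_prime)]

IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
d_r = [[2.0, 2.0], [2.0, 4.0]]                     # toy reference depth Dr
p_r = backproject(d_r, f=2.0, cx=0.0, cy=0.0)      # point cloud Pr
d_n_prime = render_depth(p_r, IDENTITY, [0.0, 0.0, 0.0], 2.0, 0.0, 0.0, 2, 2)
same_view_mask = occlusion_mask(d_r, d_n_prime)    # no view change: nothing occluded
```

In practice `d_n` would be the depth rendered from the coarse mesh at the novel view, and the mask would mark the regions the reference view could not see.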

Progressively Repainting both Occlusions and Overlaps

To ensure that the overlapping regions of adjacent images in the sequence are aligned at the pixel level, the authors use a progressive local repainting strategy: it generates harmonious, consistent neighboring regions while keeping the overlapping regions unchanged, and proceeds view by view from the reference view around the full 360°.
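Keeping the overlapping regions unchanged while repainting only the newly visible ones amounts to a masked composite of two images. The helper below is a simplified sketch with hypothetical names, operating on nested lists of pixel values; it does not reproduce how the authors blend inside the diffusion process.

```python
def composite(rendered, repainted, repaint_mask):
    """Take repainted pixels where the mask is True, keep the rest.

    rendered: image rendered from the current model at the novel view.
    repainted: image produced by the diffusion model for the same view.
    repaint_mask: True for pixels that were occluded in earlier views.
    """
    return [[new if m else old for old, new, m in zip(row_old, row_new, row_m)]
            for row_old, row_new, row_m in zip(rendered, repainted, repaint_mask)]

# Toy 1x3 grayscale row: only the last pixel was occluded before.
result = composite([[0.1, 0.2, 0.3]], [[0.9, 0.8, 0.7]], [[False, False, True]])
```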

However, as shown in Figure 3, the authors found that the overlapping regions also need to be refined: a region that was previously seen at an oblique angle occupies more pixels when viewed head-on, so more high-frequency detail has to be added.

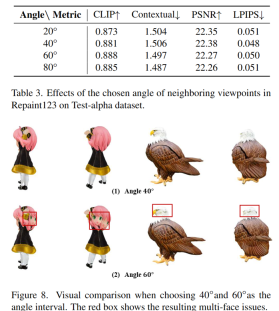

To choose a refinement strength that preserves fidelity while improving quality, the authors draw on the projection theorem and the idea of image super-resolution to propose a simple and direct visibility-aware repainting strategy for refining the overlapping regions. The refinement strength is set to 1 - cos θ* (where θ* is the maximum angle between all previous camera views and the normal vector of the viewed surface), so that the overlapping regions are repainted adaptively.

Figure 3: Relationship between camera angle and refinement strength
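As a worked example of the formula, the snippet below evaluates 1 - cos θ* for a few angles. It takes θ* as already determined according to the definition in the text; the function name, the use of degrees, and the clamp to the range [0, 1] are assumptions made for this sketch.

```python
import math

def refinement_strength(theta_star_deg):
    """Refinement strength 1 - cos(theta*), clamped to [0, 1].

    theta_star_deg: the angle theta* in degrees between the earlier
    camera view and the surface normal, as defined in the text.
    """
    strength = 1.0 - math.cos(math.radians(theta_star_deg))
    return min(1.0, max(0.0, strength))

# A surface already seen head-on needs no refinement; the more obliquely
# it was seen before, the more strongly it is repainted.
strengths = [refinement_strength(a) for a in (0, 30, 60, 90)]
```

For θ* = 60° the strength is about 0.5, so roughly half of the denoising schedule would be re-run on that region if the strength is interpreted as the fraction of noise added back.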

Fast and high-quality 3D reconstruction:

As shown in Figure 4, the authors adopt a two-stage method. They first use the Gaussian Splatting representation to quickly generate reasonable geometry and a rough texture. Then, with the multi-view consistent, high-quality image sequence generated above, they perform fast 3D texture reconstruction using a simple MSE loss.

Figure 4: Repaint123 two-stage single-view 3D generation framework
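Why a plain MSE loss is enough once the target images are consistent can be seen in a toy optimization. The sketch below is a strong simplification: it treats the texture as a flat list of values compared directly against a target, whereas the real pipeline compares differentiable renderings of the mesh with the repainted images. The function names, learning rate, and step count are illustrative assumptions.

```python
def mse(a, b):
    """Mean squared error between two equally long lists of pixel values."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)

def refine_texture(texture, target, lr=1.0, steps=30):
    """Gradient descent on the MSE between texture and target."""
    tex = list(texture)
    n = len(tex)
    for _ in range(steps):
        # d/dx_i of mean((x - y)^2) is 2 * (x_i - y_i) / n
        grads = [2.0 * (x - y) / n for x, y in zip(tex, target)]
        tex = [x - lr * g for x, g in zip(tex, grads)]
    return tex

target = [0.2, 0.4, 0.6, 0.8]          # stand-in for repainted pixel values
refined = refine_texture([0.0] * 4, target)
final_loss = mse(refined, target)
```

Because every target agrees on what each surface point should look like, the loss has a single clear minimum and a short optimization suffices, in contrast to the noisier gradients of SDS.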

Experimental results

The authors compared Repaint123 with multiple single-view 3D generation methods and achieved state-of-the-art results in consistency, quality, and speed on the RealFusion15 and Test-alpha datasets.

Single view 3D generation visualization comparison

Single view 3D generation quantitative comparison

Ablation experiment

The authors also conducted ablation experiments on the effectiveness of each module used in the paper and on the increment of view rotation.

The above is the detailed content of New technology Repaint123: Efficiently generate high-quality single-view 3D in just 2 minutes!. For more information, please follow other related articles on the PHP Chinese website!

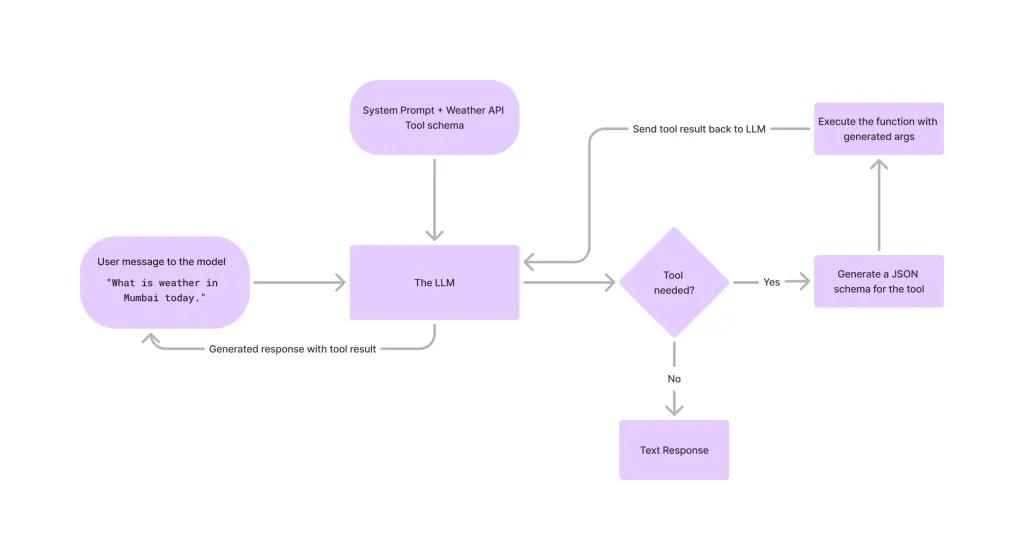

Tool Calling in LLMsApr 14, 2025 am 11:28 AM

Tool Calling in LLMsApr 14, 2025 am 11:28 AMLarge language models (LLMs) have surged in popularity, with the tool-calling feature dramatically expanding their capabilities beyond simple text generation. Now, LLMs can handle complex automation tasks such as dynamic UI creation and autonomous a

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AM

How ADHD Games, Health Tools & AI Chatbots Are Transforming Global HealthApr 14, 2025 am 11:27 AMCan a video game ease anxiety, build focus, or support a child with ADHD? As healthcare challenges surge globally — especially among youth — innovators are turning to an unlikely tool: video games. Now one of the world’s largest entertainment indus

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM

UN Input On AI: Winners, Losers, And OpportunitiesApr 14, 2025 am 11:25 AM“History has shown that while technological progress drives economic growth, it does not on its own ensure equitable income distribution or promote inclusive human development,” writes Rebeca Grynspan, Secretary-General of UNCTAD, in the preamble.

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AM

Learning Negotiation Skills Via Generative AIApr 14, 2025 am 11:23 AMEasy-peasy, use generative AI as your negotiation tutor and sparring partner. Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AM

TED Reveals From OpenAI, Google, Meta Heads To Court, Selfie With MyselfApr 14, 2025 am 11:22 AMThe TED2025 Conference, held in Vancouver, wrapped its 36th edition yesterday, April 11. It featured 80 speakers from more than 60 countries, including Sam Altman, Eric Schmidt, and Palmer Luckey. TED’s theme, “humanity reimagined,” was tailor made

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AM

Joseph Stiglitz Warns Of The Looming Inequality Amid AI Monopoly PowerApr 14, 2025 am 11:21 AMJoseph Stiglitz is renowned economist and recipient of the Nobel Prize in Economics in 2001. Stiglitz posits that AI can worsen existing inequalities and consolidated power in the hands of a few dominant corporations, ultimately undermining economic

What is Graph Database?Apr 14, 2025 am 11:19 AM

What is Graph Database?Apr 14, 2025 am 11:19 AMGraph Databases: Revolutionizing Data Management Through Relationships As data expands and its characteristics evolve across various fields, graph databases are emerging as transformative solutions for managing interconnected data. Unlike traditional

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AM

LLM Routing: Strategies, Techniques, and Python ImplementationApr 14, 2025 am 11:14 AMLarge Language Model (LLM) Routing: Optimizing Performance Through Intelligent Task Distribution The rapidly evolving landscape of LLMs presents a diverse range of models, each with unique strengths and weaknesses. Some excel at creative content gen

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Dreamweaver Mac version

Visual web development tools

SublimeText3 English version

Recommended: Win version, supports code prompts!

Notepad++7.3.1

Easy-to-use and free code editor

Atom editor mac version download

The most popular open source editor

SAP NetWeaver Server Adapter for Eclipse

Integrate Eclipse with SAP NetWeaver application server.