Time series multi-task integrated large-scale model based on Adapter and GPT

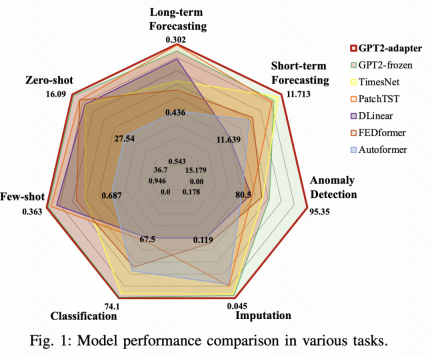

Today I would like to discuss recent work on applying large models to time series. Researchers at Alibaba DAMO Academy propose a general, adapter-based framework for time series analysis. The paper reports remarkable results on 7 time series tasks: long-term forecasting, short-term forecasting, zero-shot forecasting, few-shot forecasting, anomaly detection, time series classification, and time series imputation.

Paper title: One Fits All: Universal Time Series Analysis by Pretrained LM and Specially Designed Adaptors

Download link: https://arxiv.org/pdf/2311.14782v1.pdf

1. Background

In time series forecasting, one difficulty in building large models is the lack of training data at the scale available in NLP or CV. This paper proposes a solution: take a large model pre-trained in NLP or CV and adapt it to time series with adapter techniques, so that a single model can address a variety of time series problems.

Adapters are widely used in NLP and CV. In recent large-model applications in particular, they are often used for lightweight fine-tuning. An adapter is a small network inserted into selected modules of the large model. The large model's parameters are then frozen and only the adapter's parameters are updated, which keeps fine-tuning lightweight.
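To make the idea of adapter fine-tuning concrete, here is a minimal NumPy sketch. It is not the paper's code: the single frozen matrix `W_frozen`, the ReLU bottleneck, the zero-initialised up-projection, the random data, and the hand-written gradient step are all assumptions chosen for illustration. The point is only that training changes the adapter weights while the pre-trained weights stay fixed.

```python
import numpy as np

rng = np.random.default_rng(0)
d_model, d_bottleneck = 16, 4

# Stand-in for one layer of the pre-trained model (hypothetical, never updated).
W_frozen = rng.normal(size=(d_model, d_model)) / np.sqrt(d_model)
W_frozen_before = W_frozen.copy()

# Adapter parameters: the only weights that training is allowed to change.
W_down = rng.normal(size=(d_model, d_bottleneck)) * 0.1
W_up = np.zeros((d_bottleneck, d_model))  # zero init: the adapter starts as a no-op

# Toy inputs and regression targets (random, for illustration only).
x = rng.normal(size=(32, d_model))
target = rng.normal(size=(32, d_model))

def forward(inputs):
    h = inputs @ W_frozen        # output of the frozen layer
    a = h @ W_down               # down-projection into the bottleneck
    z = np.maximum(a, 0.0)       # ReLU
    y = h + z @ W_up             # up-projection plus residual connection
    return y, h, a, z

def mse(y):
    return float(np.mean((y - target) ** 2))

initial_loss = mse(forward(x)[0])

lr = 0.1
for _ in range(200):
    y, h, a, z = forward(x)
    grad_y = 2.0 * (y - target) / y.size      # d(MSE)/dy
    grad_W_up = z.T @ grad_y
    grad_a = (grad_y @ W_up.T) * (a > 0)      # back through the ReLU
    grad_W_down = h.T @ grad_a
    # Gradient step on the adapter only; W_frozen is deliberately left untouched.
    W_up -= lr * grad_W_up
    W_down -= lr * grad_W_down

final_loss = mse(forward(x)[0])
print(initial_loss, final_loss)
```

In a real setup the frozen part would be a full pre-trained network and the updates would come from an autodiff framework, but the division of roles is the same.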

Next, I will describe how this work from Alibaba DAMO Academy uses adapters together with pre-trained NLP and CV models to build a unified time series model.

2. Overall structure

The model proposed in the paper is built on a pre-trained language model with frozen parameters, combined with 4 types of adapters. The overall model structure is shown in the figure below.

[Figure: overall model structure]

First, the input time series is normalized with RevIN: the mean is subtracted from each series and the result is divided by its standard deviation. Next, following PatchTST, the series is split into multiple segments (patches) with a sliding window, and an embedding is generated for each segment. The processed series is then fed into a language model pre-trained on NLP data. Throughout training, the original parameters of the language model stay fixed, and only the parameters of the 4 newly added adapter types are updated.
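The two preprocessing steps can be sketched roughly as follows. This is a simplified illustration, not the RevIN or PatchTST reference code: the function names `instance_normalize`, `denormalize` and `make_patches` are made up here, the learnable affine parameters of RevIN are omitted, and the patch length and stride are arbitrary.

```python
import numpy as np

def instance_normalize(x, eps=1e-5):
    """Normalize each channel of x (shape: length x channels) to zero mean and unit std."""
    mean = x.mean(axis=0, keepdims=True)
    std = np.sqrt(x.var(axis=0, keepdims=True) + eps)
    return (x - mean) / std, mean, std

def denormalize(x_norm, mean, std):
    """Undo the normalization, as done on the model output in reversible normalization."""
    return x_norm * std + mean

def make_patches(series, patch_len, stride):
    """Slice a 1-D series into sliding windows of shape (num_patches, patch_len)."""
    num_patches = (len(series) - patch_len) // stride + 1
    return np.stack(
        [series[i * stride : i * stride + patch_len] for i in range(num_patches)]
    )

# Toy input: 16 time steps, 2 channels.
series = np.arange(32, dtype=float).reshape(16, 2)
normed, mean, std = instance_normalize(series)
patches = make_patches(normed[:, 0], patch_len=8, stride=4)
print(patches.shape)  # (3, 8)
```

Each row of `patches` would then be projected to the language model's embedding size before being passed in as a token.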

3. Adapter design

The paper introduces four types of adapters that can be plugged into different positions of large NLP and CV models to adapt them to time series. The four are the temporal adapter, the channel adapter, the frequency adapter, and the anomaly adapter.

Temporal adapter: The temporal adapter is an MLP network that fuses information along the time dimension. The paper adopts a bottleneck structure that first maps the high-dimensional information (along the time dimension or the spatial dimension) to a low-dimensional space and then maps it back to the high-dimensional space. The purpose is to reduce the risk of overfitting when extracting temporal relationships.

Channel adapter: The channel adapter has a structure similar to the temporal adapter. The difference is that it operates along the spatial (channel) dimension and is used to extract the relationships between the variables of a multivariate series. It also uses a bottleneck structure.
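The difference between the two adapters is mainly the axis they mix over, which a short sketch can show. The tensor layout `(channels, patches, d_model)`, the residual connection, the ReLU, and the bottleneck widths below are assumptions for illustration; the paper's exact placement and shapes may differ.

```python
import numpy as np

def bottleneck(x, w_down, w_up):
    """Residual bottleneck MLP over the last axis: wide -> narrow -> wide."""
    return x + np.maximum(x @ w_down, 0.0) @ w_up

def adapt_along(x, axis, w_down, w_up):
    """Apply the bottleneck along `axis` by moving that axis to the end and back."""
    moved = np.moveaxis(x, axis, -1)
    return np.moveaxis(bottleneck(moved, w_down, w_up), -1, axis)

rng = np.random.default_rng(0)
n_channels, n_patches, d_model = 7, 12, 16
x = rng.normal(size=(n_channels, n_patches, d_model))

# Temporal adapter: mixes information across the patch (time) axis.
t_down = rng.normal(size=(n_patches, 4)) * 0.1
t_up = rng.normal(size=(4, n_patches)) * 0.1

# Channel adapter: mixes information across the variable (channel) axis.
c_down = rng.normal(size=(n_channels, 3)) * 0.1
c_up = rng.normal(size=(3, n_channels)) * 0.1

out = adapt_along(x, 1, t_down, t_up)    # temporal mixing
out = adapt_along(out, 0, c_down, c_up)  # channel mixing
print(out.shape)  # (7, 12, 16)
```

Because the bottleneck is narrower than the axis it mixes (4 versus 12 patches, 3 versus 7 channels), each adapter adds few parameters, which matches the stated aim of limiting overfitting.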

Frequency adapter: The frequency adapter extracts time series information in the frequency domain. The time series is mapped to the frequency domain, an MLP is applied there, and the result is mapped back to the time domain, which extracts global information in the frequency domain.
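A rough sketch of this transform, mix, inverse-transform pattern is given below. It replaces the MLP with a single complex linear map for brevity, and the function name `frequency_adapter` and the fixed low-pass weights are illustrative assumptions; in the actual model the weights would be learned.

```python
import numpy as np

def frequency_adapter(x, weight):
    """Map x to the frequency domain, apply a linear map there, and map back."""
    spectrum = np.fft.rfft(x)               # real series -> complex half-spectrum
    mixed = spectrum @ weight               # linear layer acting on frequency bins
    return np.fft.irfft(mixed, n=len(x))    # back to a real series of the same length

length = 32
n_freq = length // 2 + 1                    # number of bins returned by rfft
t = np.arange(length)
slow = np.sin(2 * np.pi * 2 * t / length)
fast = 0.5 * np.sin(2 * np.pi * 10 * t / length)
x = slow + fast

# Identity weights leave the series unchanged.
identity = np.eye(n_freq, dtype=complex)
# Diagonal weights that keep only bins 0..4 act as a low-pass filter.
low_pass = np.diag((np.arange(n_freq) <= 4).astype(complex))

same = frequency_adapter(x, identity)
smooth = frequency_adapter(x, low_pass)
```

Since every frequency bin depends on all time steps, even a small map in this domain changes the series globally, which is the sense in which the adapter captures global information.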

Anomaly adapter: This part implements a new time series anomaly detection method based on the attention score matrix. For normal sequences the attention score matrix shows periodically repeating patterns, while for abnormal sequences it does not. The paper therefore uses a Gaussian kernel as the anomaly adapter, and the KL divergence computed between the attention output and the kernel is used for time series anomaly detection.
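The comparison between an attention matrix and a Gaussian kernel can be illustrated with the sketch below. It is only one plausible reading of the description above: the distance-based kernel, the row-wise KL direction, the bandwidth `sigma`, and the random attention matrix are all assumptions, and the paper's actual formulation and decision rule may differ.

```python
import numpy as np

def gaussian_kernel_prior(n, sigma):
    """Row-normalized Gaussian kernel over the distance |i - j| between positions."""
    idx = np.arange(n)
    dist = np.abs(idx[:, None] - idx[None, :])
    kernel = np.exp(-(dist ** 2) / (2.0 * sigma ** 2))
    return kernel / kernel.sum(axis=1, keepdims=True)

def softmax(scores):
    """Row-wise softmax, shifted for numerical stability."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    e = np.exp(shifted)
    return e / e.sum(axis=1, keepdims=True)

def row_kl(p, q, eps=1e-12):
    """KL(p_i || q_i) for each row i of two row-stochastic matrices."""
    return np.sum(p * (np.log(p + eps) - np.log(q + eps)), axis=1)

n = 8
prior = gaussian_kernel_prior(n, sigma=1.0)

# Stand-in for an attention score matrix (random here, purely illustrative).
rng = np.random.default_rng(0)
attention = softmax(rng.normal(size=(n, n)))

# One divergence value per time step; how these map to anomaly decisions
# (thresholding, direction of the comparison) is left open in this sketch.
scores = row_kl(attention, prior)
print(scores.shape)  # (8,)
```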

In addition, different datasets are affected by each adapter to different degrees. The paper therefore uses a gating network to apply the adapters selectively.
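One simple way to picture such gating is a learnable scalar per adapter that is squashed to the range (0, 1) and scales that adapter's contribution. The sketch below assumes this form; the sigmoid gate, the additive combination, and the toy lambda adapters are hypothetical and not taken from the paper.

```python
import numpy as np

def sigmoid(v):
    return 1.0 / (1.0 + np.exp(-v))

def gated_adapters(x, adapters, gate_logits):
    """Add each adapter's output to x, scaled by its own gate value in (0, 1)."""
    out = x.copy()
    for adapter, logit in zip(adapters, gate_logits):
        out = out + sigmoid(logit) * adapter(x)
    return out

x = np.ones(4)
# Two toy "adapters" standing in for, e.g., the temporal and channel adapters.
adapters = [lambda v: 2.0 * v, lambda v: -v]

# A large positive logit switches an adapter on, a large negative one switches it off.
mostly_first = gated_adapters(x, adapters, gate_logits=[20.0, -20.0])
all_off = gated_adapters(x, adapters, gate_logits=[-20.0, -20.0])
```

With the gate logits trained alongside the adapter weights, a dataset that does not benefit from a given adapter can drive its gate toward zero.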

4. Experimental results

The paper compares results on 7 time series tasks. According to the paper, the proposed unified time series model outperforms various SOTA models on each task. Taking the long-term forecasting task as an example, the unified model based on GPT2 with adapters performs best.

[Figure: long-term forecasting results]
