Take a trip to the future: the first multi-view prediction + planning autonomous driving world model arrives

Recently, the concept of the world model has triggered a wave of enthusiasm, and the field of autonomous driving could hardly just watch the "fire" from afar. A team from the Institute of Automation, Chinese Academy of Sciences, has proposed Drive-WM, the first multi-view world model, which aims to enhance the safety of end-to-end autonomous driving planning.

Website: https://drive-wm.github.io

Paper URL: https://arxiv.org/abs/2311.17918

The first multi-view prediction and planning autonomous driving world model

At the CVPR 2023 autonomous driving workshop, Tesla and Wayve, two major technology companies, showed off their latest technology, and a new concept called the "generative world model" became popular in the field of autonomous driving. Wayve even released GAIA-1, a generative AI model, demonstrating its stunning video scene generation capabilities. Recently, researchers from the Institute of Automation, Chinese Academy of Sciences, have also proposed a new autonomous driving world model, Drive-WM, which is the first multi-view predictive world model and integrates seamlessly with current mainstream end-to-end autonomous driving planners.

Drive-WM leverages the powerful generative capabilities of diffusion models to generate realistic video scenes.

Imagine that you are driving, and your on-board system predicts how the scene will develop based on your driving habits and the road conditions, then generates corresponding visual feedback to guide the choice of trajectory. Combining this ability to foresee the future with a planner can greatly improve the safety of autonomous driving!

Forecasting and planning based on multi-view world models.

Combining the world model with end-to-end autonomous driving improves driving safety

Drive-WM combines the world model with end-to-end planning for the first time, opening a new chapter in the development of end-to-end autonomous driving. At each time step, the planner can use the world model to predict possible future scenarios and then evaluate them comprehensively with an image-based reward function.

By selecting the best-estimated option and extending the search into a planning tree, the planner can achieve more effective and safer planning.
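To make the predict-then-evaluate loop concrete, here is a minimal Python sketch under heavy assumptions. `plan_with_world_model`, `toy_world_model` and `toy_reward` are hypothetical names; the toy world model only moves a (lateral offset, speed) pair, whereas Drive-WM predicts multi-view images and scores them with an image-based reward. The search exhaustively expands every candidate action to a fixed depth, which is a simplification and not the paper's actual tree-expansion strategy.

```python
from typing import Callable, List, Sequence, Tuple

# Toy stand-ins (assumptions): in Drive-WM the "state" would be predicted
# multi-view frames and the reward would be computed from those images.
State = Tuple[float, float]   # (lateral_offset_m, speed_mps)
Action = Tuple[float, float]  # (lateral_change_m, speed_change_mps)


def plan_with_world_model(
    state: State,
    actions: Sequence[Action],
    world_model: Callable[[State, Action], State],
    reward: Callable[[State], float],
    depth: int,
) -> Tuple[float, List[Action]]:
    """Depth-limited tree search: imagine each action with the world model,
    score the imagined state, recurse, and keep the best cumulative plan."""
    if depth == 0:
        return 0.0, []
    best_value, best_plan = float("-inf"), []
    for action in actions:
        imagined = world_model(state, action)  # predict the future
        future_value, future_plan = plan_with_world_model(
            imagined, actions, world_model, reward, depth - 1
        )
        total = reward(imagined) + future_value  # evaluate the imagined branch
        if total > best_value:
            best_value, best_plan = total, [action] + future_plan
    return best_value, best_plan


def toy_world_model(state: State, action: Action) -> State:
    return (state[0] + action[0], state[1] + action[1])


def toy_reward(state: State) -> float:
    # Prefer staying near the lane center and near 10 m/s.
    return -abs(state[0]) - abs(state[1] - 10.0) / 10.0


candidates = [(-0.5, 0.0), (0.0, 0.0), (0.5, 0.0)]
value, plan = plan_with_world_model((1.0, 10.0), candidates, toy_world_model, toy_reward, depth=2)
print(value, plan)  # -0.5 [(-0.5, 0.0), (-0.5, 0.0)]
```

Exhaustive expansion grows as (number of actions)^depth, so a practical planner would prune the tree or sample fewer candidates per step.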

Drive-WM explores two applications of world models in end-to-end planning:

1. Using world models to improve robustness in out-of-distribution (OOD) scenarios. Through comparative experiments, the authors found that current end-to-end planners perform poorly when facing OOD situations.

The authors give the following picture: when the initial position is perturbed with a slight lateral offset, current end-to-end planners struggle to output a reasonable planned route.

The end-to-end planner has difficulty outputting a reasonable planned route when facing an OOD situation.

Drive-WM’s powerful generation capability offers a new way to tackle the OOD problem. The authors use generated videos to fine-tune the planner so that it learns from OOD data and performs better when it faces such scenarios.

2. Showing how introducing future-scenario evaluation strengthens end-to-end planning.
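For the first application (OOD robustness), the fine-tuning data starts from trajectories that begin off the nominal path. The Python sketch below builds such recovery trajectories, assuming ego-frame (x forward, y lateral) waypoints and a simple linear blend back onto the nominal path; `recovery_trajectory` is a hypothetical helper, not the authors' code. In the pipeline described above, trajectories like these would condition the world model to render the corresponding OOD videos, a step that is not shown here.

```python
from typing import Dict, List, Tuple

Waypoint = Tuple[float, float]  # (x forward, y lateral) in meters, ego frame (assumed)


def recovery_trajectory(nominal: List[Waypoint], offset: float, recover_steps: int) -> List[Waypoint]:
    """Shift the start of a nominal path sideways by `offset` meters and blend
    linearly back onto it over `recover_steps` waypoints (hypothetical helper)."""
    if recover_steps < 1:
        raise ValueError("recover_steps must be at least 1")
    shifted = []
    for i, (x, y) in enumerate(nominal):
        weight = max(0.0, 1.0 - i / recover_steps)  # 1.0 at the start, 0.0 once recovered
        shifted.append((x, y + offset * weight))
    return shifted


nominal = [(float(i), 0.0) for i in range(6)]  # straight lane-center path
# One perturbed start per lateral offset; each trajectory would condition the
# world model to render an OOD clip (that generation step is not shown).
augmented: Dict[float, List[Waypoint]] = {
    d: recovery_trajectory(nominal, d, recover_steps=4) for d in (-1.0, -0.5, 0.5, 1.0)
}
print([y for _, y in augmented[1.0]])  # [1.0, 0.75, 0.5, 0.25, 0.0, 0.0]
```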

How to build a multi-view video generation model

Spatio-temporal consistency in multi-view video generation has always been a challenging problem. Drive-WM extends its generative model to video by introducing temporal encoding layers, and achieves multi-view video generation through view-decomposition modeling. Generating the views in this decomposed way greatly improves consistency across views.
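One way to read "view decomposition" is as a factorization of the joint distribution over all cameras into reference views generated first and stitched views generated afterwards, conditioned on their neighbors, roughly p(x) ≈ p(x_ref) · p(x_stitch | x_ref). The Python sketch below only computes such a generation schedule for a nuScenes-style six-camera ring; the alternating choice of reference views and the name `decomposed_schedule` are assumptions for illustration, not the authors' implementation.

```python
from typing import Dict, List

# nuScenes-style ring of six surround cameras (the ring ordering is assumed).
CAMERAS = ["CAM_FRONT", "CAM_FRONT_RIGHT", "CAM_BACK_RIGHT",
           "CAM_BACK", "CAM_BACK_LEFT", "CAM_FRONT_LEFT"]


def decomposed_schedule(cameras: List[str]) -> List[Dict[str, List[str]]]:
    """Two-stage order: even-indexed 'reference' views are generated jointly
    first; each odd-indexed 'stitched' view is then generated conditioned on
    its two already-generated neighbors in the camera ring."""
    n = len(cameras)
    if n % 2:
        raise ValueError("expects an even number of cameras arranged in a ring")
    reference = {cameras[i]: [] for i in range(0, n, 2)}
    stitched = {
        cameras[i]: [cameras[i - 1], cameras[(i + 1) % n]] for i in range(1, n, 2)
    }
    return [reference, stitched]


schedule = decomposed_schedule(CAMERAS)
for stage, views in enumerate(schedule):
    print(stage, views)
```

Because every stitched view sits between two reference views, each one sees both overlapping neighbors, which is the intuition behind the improved cross-view consistency.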

Drive-WM overall model design

High-quality video generation and controllability

Drive-WM achieves high-quality multi-view video generation with excellent controllability. It can steer the generated multi-view videos through text, scene layout, and motion information, which also opens up new possibilities for future neural simulators.

For example, use text to change weather and lighting:

For example, pedestrian generation and foreground editing:

Using speed and direction control:

Generating rare events, such as making a U-turn at an intersection or driving onto the grass at the roadside:
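The speed and direction control above implies turning a motion command into something the generator can be conditioned on. A common, simple choice is a short sequence of future ego waypoints; the Python sketch below integrates a constant speed and yaw rate with forward Euler steps. Whether Drive-WM encodes motion exactly this way is not stated here, so treat `rollout_waypoints` and its parameters as hypothetical.

```python
import math
from typing import List, Tuple


def rollout_waypoints(speed_mps: float, yaw_rate_rps: float, dt: float, steps: int) -> List[Tuple[float, float]]:
    """Integrate constant speed and yaw rate into ego-frame (x, y) waypoints
    with forward Euler steps; x points forward, y to the left (assumed)."""
    x = y = heading = 0.0
    waypoints = []
    for _ in range(steps):
        x += speed_mps * math.cos(heading) * dt
        y += speed_mps * math.sin(heading) * dt
        heading += yaw_rate_rps * dt  # positive yaw rate turns left
        waypoints.append((x, y))
    return waypoints


straight = rollout_waypoints(speed_mps=10.0, yaw_rate_rps=0.0, dt=0.5, steps=4)
left_turn = rollout_waypoints(speed_mps=5.0, yaw_rate_rps=0.3, dt=0.5, steps=4)
print(straight)  # [(5.0, 0.0), (10.0, 0.0), (15.0, 0.0), (20.0, 0.0)]
print(left_turn)
```

Forward Euler is crude but adequate for short horizons; a finer `dt` or an exact arc model would reduce drift on sharp turns.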

Conclusion

Drive-WM not only demonstrates powerful multi-view video generation capabilities, but also reveals the huge potential of combining world models with end-to-end driving models. We believe that, in the future, world models can help build safer, more stable, and more reliable end-to-end autonomous driving systems.

The above is the detailed content of Take a trip to the future, the first multi-view prediction + planning autonomous driving world model arrives. For more information, please follow other related articles on the PHP Chinese website!

在 CARLA自动驾驶模拟器中添加真实智体行为Apr 08, 2023 pm 02:11 PM

在 CARLA自动驾驶模拟器中添加真实智体行为Apr 08, 2023 pm 02:11 PMarXiv论文“Insertion of real agents behaviors in CARLA autonomous driving simulator“,22年6月,西班牙。由于需要快速prototyping和广泛测试,仿真在自动驾驶中的作用变得越来越重要。基于物理的模拟具有多种优势和益处,成本合理,同时消除了prototyping、驾驶员和弱势道路使用者(VRU)的风险。然而,主要有两个局限性。首先,众所周知的现实差距是指现实和模拟之间的差异,阻碍模拟自主驾驶体验去实现有效的现实世界

特斯拉自动驾驶算法和模型解读Apr 11, 2023 pm 12:04 PM

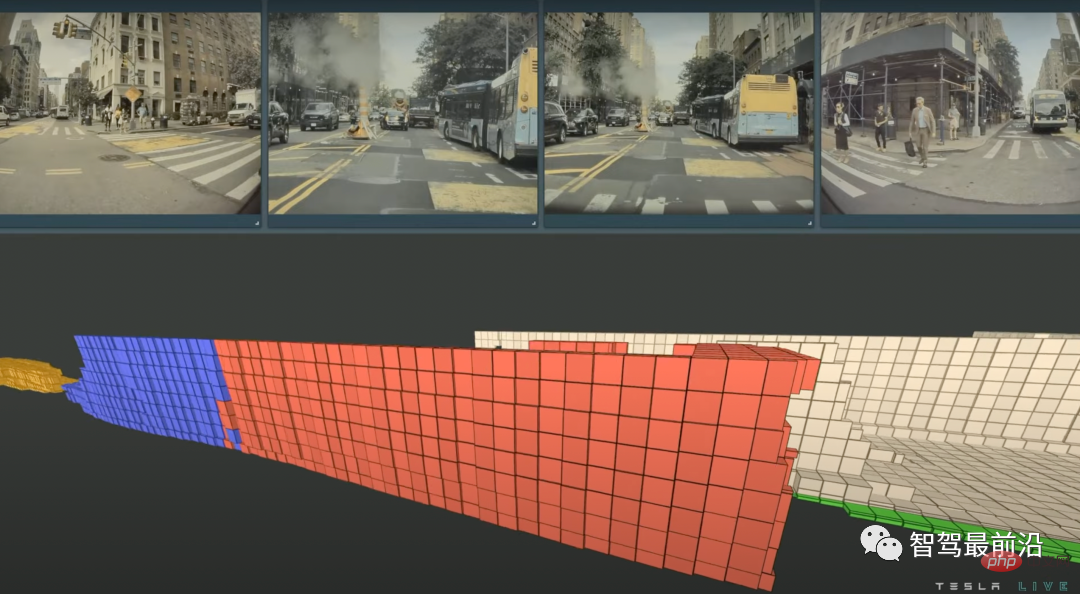

特斯拉自动驾驶算法和模型解读Apr 11, 2023 pm 12:04 PM特斯拉是一个典型的AI公司,过去一年训练了75000个神经网络,意味着每8分钟就要出一个新的模型,共有281个模型用到了特斯拉的车上。接下来我们分几个方面来解读特斯拉FSD的算法和模型进展。01 感知 Occupancy Network特斯拉今年在感知方面的一个重点技术是Occupancy Network (占据网络)。研究机器人技术的同学肯定对occupancy grid不会陌生,occupancy表示空间中每个3D体素(voxel)是否被占据,可以是0/1二元表示,也可以是[0, 1]之间的

一文通览自动驾驶三大主流芯片架构Apr 12, 2023 pm 12:07 PM

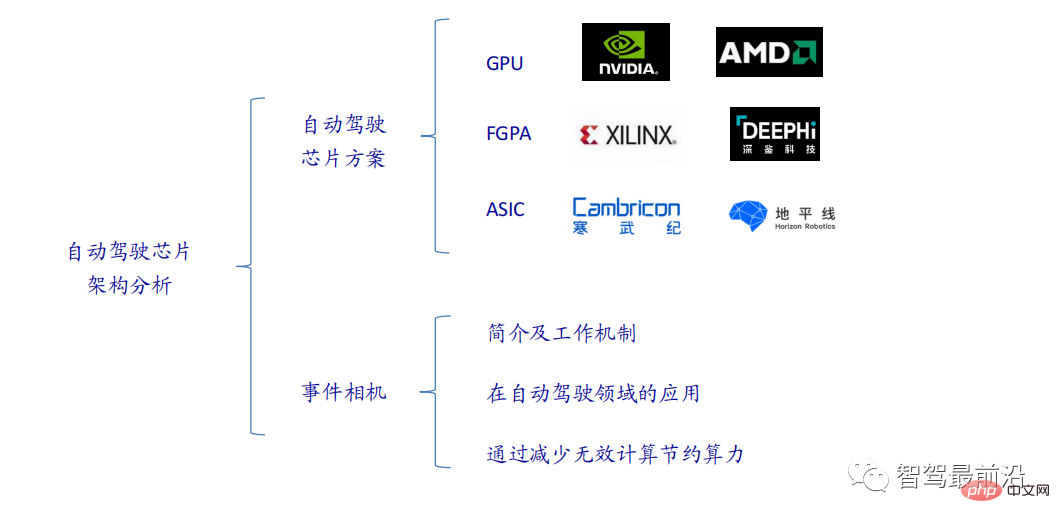

一文通览自动驾驶三大主流芯片架构Apr 12, 2023 pm 12:07 PM当前主流的AI芯片主要分为三类,GPU、FPGA、ASIC。GPU、FPGA均是前期较为成熟的芯片架构,属于通用型芯片。ASIC属于为AI特定场景定制的芯片。行业内已经确认CPU不适用于AI计算,但是在AI应用领域也是必不可少。 GPU方案GPU与CPU的架构对比CPU遵循的是冯·诺依曼架构,其核心是存储程序/数据、串行顺序执行。因此CPU的架构中需要大量的空间去放置存储单元(Cache)和控制单元(Control),相比之下计算单元(ALU)只占据了很小的一部分,所以CPU在进行大规模并行计算

自动驾驶汽车激光雷达如何做到与GPS时间同步?Mar 31, 2023 pm 10:40 PM

自动驾驶汽车激光雷达如何做到与GPS时间同步?Mar 31, 2023 pm 10:40 PMgPTP定义的五条报文中,Sync和Follow_UP为一组报文,周期发送,主要用来测量时钟偏差。 01 同步方案激光雷达与GPS时间同步主要有三种方案,即PPS+GPRMC、PTP、gPTPPPS+GPRMCGNSS输出两条信息,一条是时间周期为1s的同步脉冲信号PPS,脉冲宽度5ms~100ms;一条是通过标准串口输出GPRMC标准的时间同步报文。同步脉冲前沿时刻与GPRMC报文的发送在同一时刻,误差为ns级别,误差可以忽略。GPRMC是一条包含UTC时间(精确到秒),经纬度定位数据的标准格

特斯拉自动驾驶硬件 4.0 实物拆解:增加雷达,提供更多摄像头Apr 08, 2023 pm 12:11 PM

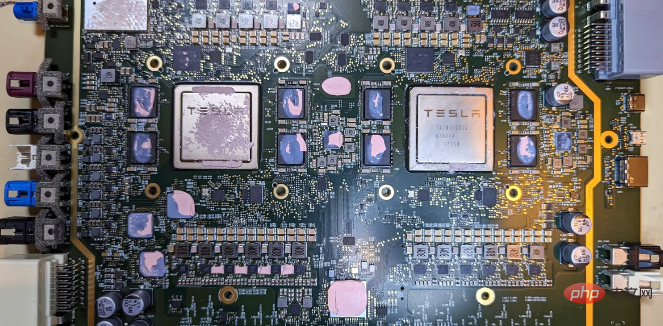

特斯拉自动驾驶硬件 4.0 实物拆解:增加雷达,提供更多摄像头Apr 08, 2023 pm 12:11 PM2 月 16 日消息,特斯拉的新自动驾驶计算机,即硬件 4.0(HW4)已经泄露,该公司似乎已经在制造一些带有新系统的汽车。我们已经知道,特斯拉准备升级其自动驾驶硬件已有一段时间了。特斯拉此前向联邦通信委员会申请在其车辆上增加一个新的雷达,并称计划在 1 月份开始销售,新的雷达将意味着特斯拉计划更新其 Autopilot 和 FSD 的传感器套件。硬件变化对特斯拉车主来说是一种压力,因为该汽车制造商一直承诺,其自 2016 年以来制造的所有车辆都具备通过软件更新实现自动驾驶所需的所有硬件。事实证

端到端自动驾驶中轨迹引导的控制预测:一个简单有力的基线方法TCPApr 10, 2023 am 09:01 AM

端到端自动驾驶中轨迹引导的控制预测:一个简单有力的基线方法TCPApr 10, 2023 am 09:01 AMarXiv论文“Trajectory-guided Control Prediction for End-to-end Autonomous Driving: A Simple yet Strong Baseline“, 2022年6月,上海AI实验室和上海交大。当前的端到端自主驾驶方法要么基于规划轨迹运行控制器,要么直接执行控制预测,这跨越了两个研究领域。鉴于二者之间潜在的互利,本文主动探索两个的结合,称为TCP (Trajectory-guided Control Prediction)。具

一文聊聊SLAM技术在自动驾驶的应用Apr 09, 2023 pm 01:11 PM

一文聊聊SLAM技术在自动驾驶的应用Apr 09, 2023 pm 01:11 PM定位在自动驾驶中占据着不可替代的地位,而且未来有着可期的发展。目前自动驾驶中的定位都是依赖RTK配合高精地图,这给自动驾驶的落地增加了不少成本与难度。试想一下人类开车,并非需要知道自己的全局高精定位及周围的详细环境,有一条全局导航路径并配合车辆在该路径上的位置,也就足够了,而这里牵涉到的,便是SLAM领域的关键技术。什么是SLAMSLAM (Simultaneous Localization and Mapping),也称为CML (Concurrent Mapping and Localiza

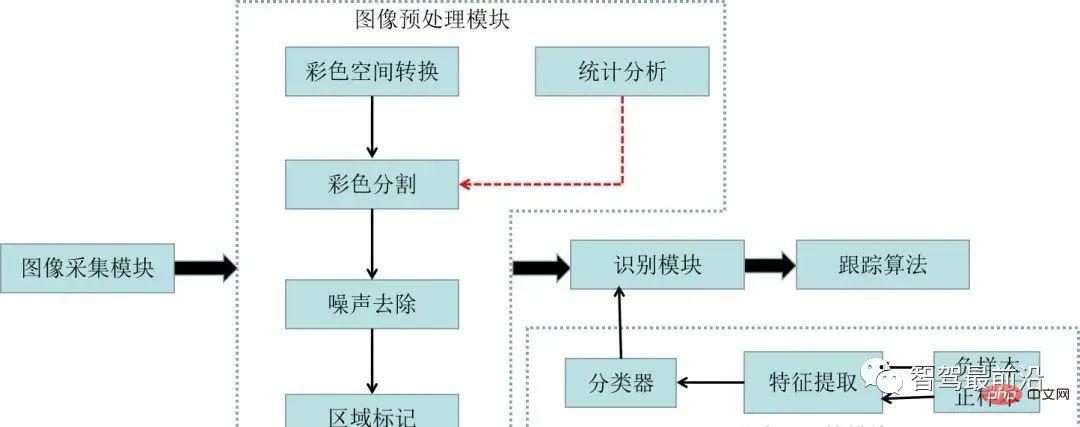

一文聊聊自动驾驶中交通标志识别系统Apr 12, 2023 pm 12:34 PM

一文聊聊自动驾驶中交通标志识别系统Apr 12, 2023 pm 12:34 PM什么是交通标志识别系统?汽车安全系统的交通标志识别系统,英文翻译为:Traffic Sign Recognition,简称TSR,是利用前置摄像头结合模式,可以识别常见的交通标志 《 限速、停车、掉头等)。这一功能会提醒驾驶员注意前面的交通标志,以便驾驶员遵守这些标志。TSR 功能降低了驾驶员不遵守停车标志等交通法规的可能,避免了违法左转或者无意的其他交通违法行为,从而提高了安全性。这些系统需要灵活的软件平台来增强探测算法,根据不同地区的交通标志来进行调整。交通标志识别原理交通标志识别又称为TS

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

Zend Studio 13.0.1

Powerful PHP integrated development environment

SublimeText3 Chinese version

Chinese version, very easy to use

Safe Exam Browser

Safe Exam Browser is a secure browser environment for taking online exams securely. This software turns any computer into a secure workstation. It controls access to any utility and prevents students from using unauthorized resources.