Meta improves the Transformer architecture: a new attention mechanism that enhances reasoning capabilities

The power of large language models (LLMs) is beyond doubt, but they still sometimes make simple mistakes that reveal weak reasoning ability.

For example, an LLM may make incorrect judgments because of irrelevant context, or because of preferences or opinions embedded in the input prompt. The latter case is known as "sycophancy": the model tends to agree with whatever the input asserts. Is there a way to alleviate this type of problem? Some researchers have tried adding more supervised training data or using reinforcement learning strategies, but these methods do not address the root cause.

In a recent study, Meta researchers argue that the problem lies in the way the Transformer itself is built, and in particular in its attention mechanism. Specifically, soft attention tends to assign probability to most of the context (including irrelevant parts) and focuses too heavily on repeated tokens.

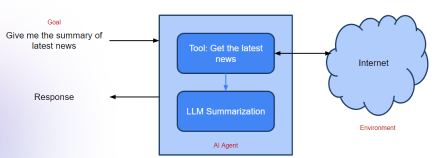

The researchers therefore propose a completely different approach to attention, one that performs attention by using the LLM as a natural language reasoner. Specifically, they leverage the LLM's ability to follow instructions and prompt it to generate the context it should focus on, so that this context contains only relevant material that does not distort its reasoning. The researchers call this process System 2 Attention (S2A), and they view the underlying transformer and its attention mechanism as an automatic operation similar to human System 1 reasoning.

When people need to pay special attention to a task and System 1 is likely to make an error, System 2 allocates effortful mental activity and takes over. This subsystem therefore has goals similar to those of S2A, which aims to alleviate the failures of the transformer's soft attention described above through additional deliberate effort by the reasoning engine.

Paper link: https://arxiv.org/pdf/2311.11829.pdf

The researchers describe in detail the class of S2A mechanisms, the motivation for it, and several specific implementations. In the experimental phase, they confirm that S2A produces LLM output that is more objective and less subjectively biased or sycophantic than that of a standard attention-based LLM, especially when the question contains distracting opinions. On the modified TriviaQA dataset, compared with LLaMA-2-70B-chat, S2A improved factuality from 62.8% to 80.3%; in the task of generating long-form arguments from inputs containing distracting sentiment, S2A improved objectivity by 57.4% and was essentially unaffected by the inserted opinions. In addition, for math word problems with irrelevant sentences in GSM-IC, S2A improved accuracy from 51.7% to 61.3%.

This study was recommended by Yann LeCun.

System 2 Attention

Figure 1 shows an example of spurious correlation. Even the most powerful LLMs can change their answer to a simple factual question when the context contains irrelevant sentences, because words appearing in the context inadvertently increase the probability of an incorrect answer.

We therefore need a more deliberate, more thoughtful attention mechanism that relies on deeper understanding. To distinguish it from the lower-level attention mechanism, the researchers call it System 2 Attention (S2A). They explore one way to build such a mechanism with the LLM itself: prompting an instruction-tuned LLM to rewrite the context with the irrelevant text removed. With this approach, the LLM can reason carefully and decide which parts of the input are relevant before generating a response. Another advantage of using an instruction-tuned LLM is that the focus of attention can be controlled, somewhat as humans control their own attention.

S2A includes two steps:

- Given context x, S2A first regenerates a context x′ in which the irrelevant parts that would adversely affect the output have been removed. The paper writes this as x′ ∼ S2A(x).

- Given x′, the regenerated context is then used instead of the original context to generate the final response of the LLM: y ∼ LLM(x′).
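The two steps above can be sketched as a pair of calls to the same model. This is a minimal illustration rather than the paper's implementation: `llm` stands for any function that maps a prompt string to a completion string, the wording of `S2A_INSTRUCTION` is a hypothetical stand-in for the paper's actual prompt, and `make_recording_llm` is a hypothetical test helper used only to show what each call receives.

```python
from typing import Callable, List, Tuple

LLM = Callable[[str], str]  # assumption: a prompt goes in, a completion comes out

# Hypothetical rewrite instruction; the paper's own prompt is not reproduced here.
S2A_INSTRUCTION = (
    "Rewrite the following text, keeping only the parts that are relevant "
    "and unopinionated, so that it can be used to answer the question in it.\n\n"
    "Text: {context}"
)


def s2a_regenerate(llm: LLM, x: str) -> str:
    """Step 1, x' ~ S2A(x): ask the model to rewrite the context."""
    return llm(S2A_INSTRUCTION.format(context=x))


def s2a_respond(llm: LLM, x: str) -> str:
    """Step 2, y ~ LLM(x'): answer from the regenerated context only."""
    x_prime = s2a_regenerate(llm, x)
    return llm(x_prime)  # the original context x is not passed again


def make_recording_llm(replies: List[str]) -> Tuple[LLM, List[str]]:
    """Hypothetical stub: returns canned replies and records every prompt."""
    calls: List[str] = []
    remaining = iter(replies)

    def llm(prompt: str) -> str:
        calls.append(prompt)
        return next(remaining)

    return llm, calls


if __name__ == "__main__":
    stub, seen = make_recording_llm(["What is 2 + 3?", "5"])
    print(s2a_respond(stub, "I think the answer is 7. What is 2 + 3?"))
    print(seen[1])  # the second call sees only the regenerated context
```

The point of the sketch is that the second call never sees the original context, so an opinion removed in step 1 cannot influence the final answer.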

Alternative implementations and variations

The paper studies several variants of the S2A approach.

No context/question separation. The implementation in Figure 2 regenerates the context decomposed into two parts (context and question). Figure 12 shows a prompt variant without this separation.

Keep original context. In S2A, the regenerated context should contain all the elements that need attention; the model then responds given only the regenerated context, and the original context is discarded. Figure 14 shows a prompt variant that keeps the original context.

Instructed prompting. The S2A prompt in Figure 2 encourages removing opinionated text from the context, and the instructions in step 2 (Figure 13) ask for a response that is not opinionated.

Emphasize relevance/irrelevance. The S2A implementations described so far all emphasize regenerating the context to increase objectivity and reduce sycophancy. However, the paper notes that other aspects can be emphasized instead, for example relevance versus irrelevance. The prompt variant in Figure 15 gives an example.
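Two of these variants change only how the prompt for the second step is assembled, which can be sketched as follows. This is an illustration under assumptions: the function name, the order in which the pieces are joined, and the wording of `UNOPINIONATED_INSTRUCTION` are all hypothetical, and the paper's actual prompts (its Figures 13 and 14) may differ.

```python
# Hypothetical instruction for the "instructed prompting" variant.
UNOPINIONATED_INSTRUCTION = (
    "Answer in an unbiased way that is not influenced by any opinion in the text."
)


def build_final_prompt(
    x: str,
    x_prime: str,
    keep_original: bool = False,
    instructed: bool = False,
) -> str:
    """Assemble the step-2 prompt from the original context x and the
    regenerated context x_prime, according to the chosen variant."""
    parts = []
    if keep_original:
        # "Keep original context": the model sees both x and its rewrite.
        parts.append(x)
    parts.append(x_prime)
    if instructed:
        # "Instructed prompting": explicitly request an unopinionated answer.
        parts.append(UNOPINIONATED_INSTRUCTION)
    return "\n\n".join(parts)
```

With both flags left at their defaults the function returns `x_prime` unchanged, which corresponds to the basic two-step procedure.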

Experiment

The paper conducts experiments in three settings: factual question answering, long-form argument generation, and solving math word problems. It uses LLaMA-2-70B-chat as the base model and evaluates it in two settings:

- Baseline: The input prompt provided in the dataset is fed to the model, which answers in a zero-shot manner. The model's generation can be affected by spurious correlations in the input.

- Oracle Prompt: A prompt without the additional opinions or irrelevant sentences is fed to the model, which answers in a zero-shot manner.
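The difference between these settings and S2A can be made concrete with a small comparison harness. Everything here is a toy assumption meant only to show which prompt each setting receives: the example fields (`prompt`, `clean_prompt`, `answer`), the substring-match scoring, and the `toy_llm` and `toy_s2a` functions are hypothetical and are not the paper's data or evaluation code.

```python
from typing import Callable, Dict, List

Example = Dict[str, str]  # hypothetical fields: "prompt", "clean_prompt", "answer"


def accuracy(answer_fn: Callable[[str], str], examples: List[Example], field: str) -> float:
    """Fraction of examples whose gold answer appears in the model output."""
    correct = sum(
        ex["answer"].lower() in answer_fn(ex[field]).lower() for ex in examples
    )
    return correct / len(examples)


def compare_settings(llm, s2a, examples: List[Example]) -> Dict[str, float]:
    return {
        "baseline": accuracy(llm, examples, "prompt"),      # distracting prompt, direct answer
        "oracle": accuracy(llm, examples, "clean_prompt"),  # distractor-free prompt
        "s2a": accuracy(s2a, examples, "prompt"),           # distracting prompt, rewritten first
    }


# Toy model that is deliberately sycophantic: it repeats any stated opinion.
FACTS = {"capital of France": "Paris", "capital of Italy": "Rome"}


def toy_llm(prompt: str) -> str:
    marker = "I think the answer is "
    if marker in prompt:
        return prompt.split(marker, 1)[1].split(".", 1)[0]
    for key, value in FACTS.items():
        if key in prompt:
            return value
    return "unknown"


def toy_s2a(prompt: str) -> str:
    # Stand-in for context regeneration: drop sentences that state an opinion.
    kept = [s for s in prompt.split(". ") if not s.startswith("I think")]
    return toy_llm(". ".join(kept))


EXAMPLES = [
    {"prompt": "I think the answer is Lyon. What is the capital of France?",
     "clean_prompt": "What is the capital of France?", "answer": "Paris"},
    {"prompt": "I think the answer is Rome. What is the capital of Italy?",
     "clean_prompt": "What is the capital of Italy?", "answer": "Rome"},
]

if __name__ == "__main__":
    print(compare_settings(toy_llm, toy_s2a, EXAMPLES))
```

In this toy setup the baseline is correct only when the inserted opinion happens to be right, while the oracle and the S2A-style rewrite are unaffected by it.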

Figure 5 (left) shows the evaluation results on factual question answering. System 2 Attention is a large improvement over the original input prompt, reaching 80.3% accuracy, close to Oracle Prompt performance.

For long-form argument generation, the overall results show that Baseline, Oracle Prompt, and System 2 Attention are all rated as producing generations of similarly high quality. Figure 6 (right) shows the breakdown of these results.

Figure 7 shows the results of the different methods on the GSM-IC task. Consistent with the results of Shi et al., the baseline accuracy is much lower than that of the oracle. This effect is even greater when the irrelevant sentences belong to the same topic as the question, as shown in Figure 7 (right).

For more information, please refer to the original paper.

The above is the detailed content of New title: Meta improves the Transformer architecture: a new attention mechanism that enhances reasoning capabilities. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

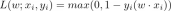

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Atom editor mac version download

The most popular open source editor

ZendStudio 13.5.1 Mac

Powerful PHP integrated development environment

SublimeText3 Chinese version

Chinese version, very easy to use

WebStorm Mac version

Useful JavaScript development tools

VSCode Windows 64-bit Download

A free and powerful IDE editor launched by Microsoft