Technology peripherals

Technology peripherals AI

AI Rewritten title: Exploring the application areas of semi-supervised learning and its related scenarios

Rewritten title: Exploring the application areas of semi-supervised learning and its related scenariosRewritten title: Exploring the application areas of semi-supervised learning and its related scenarios

Labs Introduction

With the development of the Internet, enterprises can obtain more and more data. This data helps companies better understand users, known as customer profiles, and can improve user experience. However, there may be a large amount of unlabeled data in these data. If all data is manually labeled, there will be two problems. First, manual labeling is time-consuming and inefficient. As the amount of data increases, more people will need to be hired and it will take longer, and the cost will be higher. Secondly, as the size of users increases, it is difficult to keep up with the growth of data through manual labeling

Part 01, What is semi-supervised learning

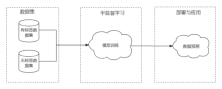

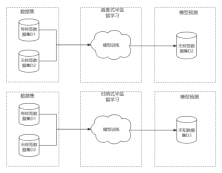

Semi-supervised learning refers to training a model using both labeled and unlabeled data. Semi-supervised learning usually constructs an attribute space based on labeled data, and then extracts effective information from unlabeled data to fill (or reconstruct) the attribute space. Therefore, the initial training set of semi-supervised learning is usually divided into labeled data set D1 and unlabeled data set D2, and then the semi-supervised learning model is trained through basic steps such as preprocessing and feature extraction, and then the trained model is used for Production environment to provide services to users.

Part 02. Assumptions of semi-supervised learning

In order to achieve effective label data supplementation with labeled data "useful" information in the data, making some assumptions about data segmentation and other aspects. The basic assumption of semi-supervised learning is that p(x) contains the information of p(y|x), that is, the unlabeled data should contain information that is useful for label prediction and is different from the labeled data or is difficult to obtain from the labeled data. information extracted from the data. In addition, there are some assumptions that serve the algorithm. For example, the similarity hypothesis (smoothness hypothesis) means that in the attribute space constructed by data samples, close or similar samples have the same label; the low-density separation hypothesis means that there is a decision boundary that can distinguish different labels where there are few data samples. The data.

The main purpose of the above assumption is to show that labeled data and unlabeled data come from the same data distribution.

Part 03, Semi-supervised learning algorithm classification

There are many semi-supervised learning algorithms, which can be roughly divided into Transductive learning and Inductive learning (Inductive model) , the difference between the two lies in the selection of the test data set used for model evaluation. Direct push semi-supervised learning means that the data set that needs to predict the label is the unlabeled data set used for training. The purpose of learning is to further improve the accuracy of the prediction results. Inductive learning predicts labels for completely unknown data sets.

In addition, the steps of common semi-supervised learning algorithms are: the first step is to train the model on labeled data, and then use this model Pseudo-label the unlabeled data, then combine the pseudo-labels and labeled data into a new training set, train a new model on this training set, and finally use this model to label the prediction data set.

Part 04. Summary

The biggest problem with semi-supervised learning is that in many cases, the performance of the model depends on labeled data set, and the quality requirements for labeled data sets are relatively high. Even the prediction accuracy of semi-supervised learning models is not much different from the results of supervised models based on labeled data sets. On the contrary, semi-supervised models are in order to effectively extract the features in unlabeled data. Effective information will consume more resources. Therefore, the development direction of semi-supervised learning is to improve the robustness of the algorithm and the effectiveness of data extraction.

Currently in the field of semi-supervised learning, PU-Learning (positive and negative sample learning) is a popular algorithm. This type of algorithm is mainly applied to data sets with only positive samples and unlabeled data. Its advantage is that in certain scenarios, we can obtain reliable positive sample data sets relatively easily, and the amount of data is relatively large. For example, in spam detection, we can easily obtain a large amount of normal email data

The above is the detailed content of Rewritten title: Exploring the application areas of semi-supervised learning and its related scenarios. For more information, please follow other related articles on the PHP Chinese website!

How to Build Your Personal AI Assistant with Huggingface SmolLMApr 18, 2025 am 11:52 AM

How to Build Your Personal AI Assistant with Huggingface SmolLMApr 18, 2025 am 11:52 AMHarness the Power of On-Device AI: Building a Personal Chatbot CLI In the recent past, the concept of a personal AI assistant seemed like science fiction. Imagine Alex, a tech enthusiast, dreaming of a smart, local AI companion—one that doesn't rely

AI For Mental Health Gets Attentively Analyzed Via Exciting New Initiative At Stanford UniversityApr 18, 2025 am 11:49 AM

AI For Mental Health Gets Attentively Analyzed Via Exciting New Initiative At Stanford UniversityApr 18, 2025 am 11:49 AMTheir inaugural launch of AI4MH took place on April 15, 2025, and luminary Dr. Tom Insel, M.D., famed psychiatrist and neuroscientist, served as the kick-off speaker. Dr. Insel is renowned for his outstanding work in mental health research and techno

The 2025 WNBA Draft Class Enters A League Growing And Fighting Online HarassmentApr 18, 2025 am 11:44 AM

The 2025 WNBA Draft Class Enters A League Growing And Fighting Online HarassmentApr 18, 2025 am 11:44 AM"We want to ensure that the WNBA remains a space where everyone, players, fans and corporate partners, feel safe, valued and empowered," Engelbert stated, addressing what has become one of women's sports' most damaging challenges. The anno

Comprehensive Guide to Python Built-in Data Structures - Analytics VidhyaApr 18, 2025 am 11:43 AM

Comprehensive Guide to Python Built-in Data Structures - Analytics VidhyaApr 18, 2025 am 11:43 AMIntroduction Python excels as a programming language, particularly in data science and generative AI. Efficient data manipulation (storage, management, and access) is crucial when dealing with large datasets. We've previously covered numbers and st

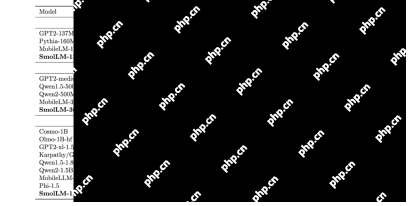

First Impressions From OpenAI's New Models Compared To AlternativesApr 18, 2025 am 11:41 AM

First Impressions From OpenAI's New Models Compared To AlternativesApr 18, 2025 am 11:41 AMBefore diving in, an important caveat: AI performance is non-deterministic and highly use-case specific. In simpler terms, Your Mileage May Vary. Don't take this (or any other) article as the final word—instead, test these models on your own scenario

AI Portfolio | How to Build a Portfolio for an AI Career?Apr 18, 2025 am 11:40 AM

AI Portfolio | How to Build a Portfolio for an AI Career?Apr 18, 2025 am 11:40 AMBuilding a Standout AI/ML Portfolio: A Guide for Beginners and Professionals Creating a compelling portfolio is crucial for securing roles in artificial intelligence (AI) and machine learning (ML). This guide provides advice for building a portfolio

What Agentic AI Could Mean For Security OperationsApr 18, 2025 am 11:36 AM

What Agentic AI Could Mean For Security OperationsApr 18, 2025 am 11:36 AMThe result? Burnout, inefficiency, and a widening gap between detection and action. None of this should come as a shock to anyone who works in cybersecurity. The promise of agentic AI has emerged as a potential turning point, though. This new class

Google Versus OpenAI: The AI Fight For StudentsApr 18, 2025 am 11:31 AM

Google Versus OpenAI: The AI Fight For StudentsApr 18, 2025 am 11:31 AMImmediate Impact versus Long-Term Partnership? Two weeks ago OpenAI stepped forward with a powerful short-term offer, granting U.S. and Canadian college students free access to ChatGPT Plus through the end of May 2025. This tool includes GPT‑4o, an a

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Dreamweaver Mac version

Visual web development tools

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

SublimeText3 Chinese version

Chinese version, very easy to use

WebStorm Mac version

Useful JavaScript development tools

MinGW - Minimalist GNU for Windows

This project is in the process of being migrated to osdn.net/projects/mingw, you can continue to follow us there. MinGW: A native Windows port of the GNU Compiler Collection (GCC), freely distributable import libraries and header files for building native Windows applications; includes extensions to the MSVC runtime to support C99 functionality. All MinGW software can run on 64-bit Windows platforms.