NeRF and the past and present of autonomous driving, a summary of nearly 10 papers!

Since Neural Radiance Fields (NeRF) was proposed in 2020, the number of related papers has grown rapidly. NeRF has not only become an important branch of 3D reconstruction, but has also moved to the research frontier as an important tool for autonomous driving.

NeRF has risen quickly over the past two years, mainly because it skips the steps of the traditional CV reconstruction pipeline: feature point extraction and matching, epipolar geometry and triangulation, PnP plus Bundle Adjustment, and so on. It even skips mesh reconstruction, texturing, and ray tracing. Instead, it learns a radiance field directly from 2D input images and then renders images from that radiance field that approximate real photos. In other words, an implicit 3D model based on a neural network is fitted to the 2D images taken from given viewpoints, which gives it the ability to synthesize novel views.

The development of NeRF is also closely tied to autonomous driving, specifically in real-scene reconstruction and autonomous driving simulators. NeRF is good at rendering photo-realistic images, so street scenes modeled with NeRF can provide highly realistic training data for autonomous driving. NeRF maps can also be edited to combine buildings, vehicles, and pedestrians into corner cases that are difficult to capture in reality, which can then be used to test perception, planning, and obstacle avoidance algorithms. NeRF is therefore both a branch of 3D reconstruction and a modeling tool, and it has become a very useful skill for researchers working on reconstruction or autonomous driving.

Today I will sort out the work related to NeRF and autonomous driving. Roughly ten papers will take you through the past and present of NeRF and autonomous driving.

1. The beginning of NeRF

NeRF: Representing Scenes as Neural Radiance Fields for View Synthesis (ECCV 2020).

This first paper proposes the NeRF method, which optimizes an underlying continuous volumetric scene function from a sparse set of input views and achieved state-of-the-art results for novel view synthesis of complex scenes at the time. The algorithm represents the scene with a fully connected (non-convolutional) deep network. The input is a single continuous 5D coordinate (spatial position (x, y, z) and viewing direction (θ, φ)), and the output is the volume density and the view-dependent emitted radiance at that position.

NeRF is supervised by posed 2D images. It applies no convolution to the images. Instead, it passes positionally encoded coordinates through the network and, with image color as supervision, learns a set of implicit parameters that represent a complex 3D scene. With this implicit representation, the scene can be rendered from any viewpoint.
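The two ingredients described above, positional encoding of the input coordinates and compositing densities and colors along a ray, can be sketched in a few lines of NumPy. This is only an illustrative sketch: the function names, the default of 10 frequency bands, and the use of plain arrays in place of a trained network are assumptions made for the example, not the authors' code.

```python
import numpy as np

def positional_encoding(x, num_freqs=10):
    """Map each coordinate p to sin(2^k * pi * p) and cos(2^k * pi * p), k < num_freqs."""
    x = np.asarray(x, dtype=np.float64)
    freqs = (2.0 ** np.arange(num_freqs)) * np.pi        # (num_freqs,)
    angles = x[..., None] * freqs                         # (..., dim, num_freqs)
    enc = np.concatenate([np.sin(angles), np.cos(angles)], axis=-1)
    return enc.reshape(*x.shape[:-1], -1)                 # (..., dim * 2 * num_freqs)

def composite_ray(sigmas, colors, deltas):
    """Discrete volume rendering along one ray.

    sigmas: (S,) densities, colors: (S, 3) RGB, deltas: (S,) segment lengths.
    In a real NeRF, sigmas and colors would come from the MLP (assumed here).
    """
    sigmas = np.asarray(sigmas, dtype=np.float64)
    colors = np.asarray(colors, dtype=np.float64)
    deltas = np.asarray(deltas, dtype=np.float64)
    alphas = 1.0 - np.exp(-sigmas * deltas)               # opacity of each segment
    # Transmittance: fraction of light that reaches sample i unoccluded.
    trans = np.concatenate([[1.0], np.cumprod(1.0 - alphas)[:-1]])
    weights = trans * alphas
    rgb = (weights[:, None] * colors).sum(axis=0)
    return rgb, weights
```

Training then amounts to comparing the composited color with the pixel color of the posed image and backpropagating through the network that produced the densities and colors.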

2. Mip-NeRF 360

Mip-NeRF 360: Unbounded Anti-Aliased Neural Radiance Fields (CVPR 2022), a study of unbounded outdoor scenes.

Paper link: https://arxiv.org/pdf/2111.12077.pdf

Although Neural Radiance Fields (NeRF) have demonstrated good view synthesis results on objects and small bounded regions of space, they struggle in "unbounded" scenes, where the camera may point in any direction and content may exist at any distance. In this setting, existing NeRF-like models often produce blurry or low-resolution renderings (because the detail and scale of nearby and distant objects are imbalanced), are slow to train, and may exhibit artifacts because reconstructing a large scene from a small set of images is inherently ambiguous. This paper proposes an extension of mip-NeRF, a NeRF variant that addresses sampling and aliasing, which uses a nonlinear scene parameterization, online distillation, and a new distortion-based regularizer to overcome the challenges of unbounded scenes. It reduces mean squared error by 57% compared to mip-NeRF and can generate realistic synthesized views and detailed depth maps for highly complex, unbounded real-world scenes.
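To make the nonlinear scene parameterization more concrete, here is a minimal sketch of a contraction that leaves points inside the unit ball unchanged and squeezes everything farther away into a ball of radius 2. It is written for single points only (the paper applies the idea to Gaussians rather than points), and the function name and the `eps` guard are assumptions made for this example.

```python
import numpy as np

def contract(x, eps=1e-9):
    """Map unbounded 3D points into a ball of radius 2.

    Points with norm <= 1 are unchanged; a point at distance d > 1 is moved
    to distance 2 - 1/d along the same direction.
    """
    x = np.asarray(x, dtype=np.float64)
    norm = np.linalg.norm(x, axis=-1, keepdims=True)
    safe = np.maximum(norm, eps)              # avoid division by zero at the origin
    contracted = (2.0 - 1.0 / safe) * (x / safe)
    return np.where(norm <= 1.0, x, contracted)
```

For example, a point 4 units away along the x axis lands at distance 1.75, and arbitrarily distant content approaches, but never reaches, distance 2, so a bounded model can still allocate capacity to the far background.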

3. Instant-NGP

A hybrid scene representation that combines explicit voxels with implicit features (SIGGRAPH 2022): Instant Neural Graphics Primitives with a Multiresolution Hash Encoding

Link: https://nvlabs.github.io/instant-ngp

Let us first look at the similarities and differences between Instant-NGP and NeRF:

- Both are based on volume rendering;

- Unlike NeRF's MLP, NGP uses a sparse parameterized voxel grid as the scene representation;

- Both are optimized by gradient descent, and NGP optimizes the scene grid and an MLP at the same time (the MLP serves as the decoder).

The overall framework is therefore still the same. The most important difference is that NGP chooses a parameterized voxel grid as the scene representation: through learning, the parameters stored in the voxels come to describe the density of the scene. The biggest problem with an MLP is that it is slow. Reconstructing a scene with high quality often requires a relatively large network, and passing every sample point through that network takes a lot of time. Interpolating within a grid is much faster. However, a grid that represents a scene with high precision needs very dense voxels, which leads to extremely high memory usage. Since much of a scene is empty space, NVIDIA proposed a sparse structure to represent the scene.
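The idea of looking up interpolated features in a sparse, hashed grid at several resolutions can be sketched as follows. This is a simplified illustration, not NVIDIA's implementation: the function names, the default `base_res` and `growth` values, and the choice to hash every level (instead of indexing coarse levels directly) are assumptions for the example. The three multipliers are the ones given in the Instant-NGP paper for its spatial hash.

```python
import numpy as np

# Per-axis multipliers of the spatial hash (first one is 1 by design).
PRIMES = np.array([1, 2654435761, 805459861], dtype=np.uint64)

def hash_index(cell, table_size):
    """XOR the per-axis products and wrap into the table; cell is (..., 3) non-negative ints."""
    cell = np.asarray(cell).astype(np.uint64)
    h = cell * PRIMES                                     # wraps modulo 2**64
    idx = h[..., 0] ^ h[..., 1] ^ h[..., 2]
    return (idx % np.uint64(table_size)).astype(np.int64)

def encode(points, tables, base_res=16, growth=1.5):
    """Concatenate trilinearly interpolated features from every resolution level.

    points: (N, 3) in [0, 1); tables: list of (T, F) learnable feature arrays.
    """
    points = np.asarray(points, dtype=np.float64)
    feats = []
    for level, table in enumerate(tables):
        res = int(base_res * growth ** level)             # grid resolution at this level
        scaled = points * res
        lo = np.floor(scaled).astype(np.int64)            # lower corner of the cell
        frac = scaled - lo                                # position inside the cell
        level_feat = np.zeros((points.shape[0], table.shape[1]))
        for corner in range(8):
            offset = np.array([(corner >> axis) & 1 for axis in range(3)])
            # Trilinear weight of this corner; the 8 weights sum to 1.
            w = np.prod(np.where(offset == 1, frac, 1.0 - frac), axis=-1)
            idx = hash_index(lo + offset, table.shape[0])
            level_feat += w[:, None] * table[idx]
        feats.append(level_feat)
    return np.concatenate(feats, axis=-1)
```

The concatenated feature vector would then be fed to a small decoder MLP, which is why each sample is far cheaper to evaluate than in a large coordinate MLP, while memory stays bounded by the table size rather than the grid resolution.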

4. F2-NeRF

F2-NeRF: Fast Neural Radiance Field Training with Free Camera Trajectories

Paper link: https://totoro97.github.io/projects/f2-nerf/

This paper proposes a new grid-based NeRF, called F2-NeRF (Fast-Free-NeRF), for novel view synthesis. It accepts arbitrary input camera trajectories and needs only a few minutes of training. Existing fast grid-based NeRF training frameworks, such as Instant-NGP, Plenoxels, DVGO, and TensoRF, are mainly designed for bounded scenes and rely on space warping to handle unbounded scenes. The two widely used space warping methods target only forward-facing trajectories or 360° object-centric trajectories and cannot handle arbitrary trajectories. The paper studies in depth how space warping handles unbounded scenes and then proposes a new method, perspective warping, that makes arbitrary trajectories tractable within the grid-based NeRF framework. Extensive experiments show that F2-NeRF can use the same perspective warping to render high-quality images on two standard datasets and on a new free-trajectory dataset collected by the authors.

5. MobileNeRF

Real-time rendering on mobile devices by exporting NeRF to a mesh (CVPR 2023).

MobileNeRF: Exploiting the Polygon Rasterization Pipeline for Efficient Neural Field Rendering on Mobile Architectures.

Paper link: https://arxiv.org/pdf/2208.00277.pdf

Neural Radiance Fields (NeRF) have demonstrated an impressive ability to synthesize images of 3D scenes from novel views. However, they rely on specialized volumetric rendering algorithms based on ray marching, which do not match the capabilities of widely deployed graphics hardware. This paper introduces a new NeRF representation based on textured polygons that can synthesize new images efficiently through the standard rendering pipeline. The NeRF is represented as a set of polygons whose textures store binary opacities and feature vectors. Rendering the polygons conventionally with a z-buffer produces an image with a feature vector at every pixel, and a small view-dependent MLP running in the fragment shader interprets those features to produce the final pixel color. This approach lets NeRF render with the traditional polygon rasterization pipeline, which provides massive pixel-level parallelism and achieves interactive frame rates on a wide range of computing platforms, including mobile phones.

6. Co-SLAM

Real-time visual localization and NeRF mapping, accepted at CVPR 2023.

Co-SLAM: Joint Coordinate and Sparse Parametric Encodings for Neural Real-Time SLAM

Paper link: https://arxiv.org/pdf/2304.14377.pdf

Co-SLAM is a real-time RGB-D SLAM system that uses neural implicit representations for camera tracking and high-fidelity surface reconstruction. Co-SLAM represents the scene as a multi-resolution hash grid to exploit its fast convergence and its ability to represent local features. In addition, to incorporate a surface-consistency prior, Co-SLAM uses one-blob encoding, which is shown to complete the scene effectively in unobserved areas. This joint encoding combines speed, high-fidelity reconstruction, and the surface-consistency prior. Thanks to its ray sampling strategy, Co-SLAM can also perform global bundle adjustment over all keyframes.

7. Neuralangelo

A state-of-the-art NeRF surface reconstruction method at the time of writing (CVPR 2023).

8. MARS

The first open-source NeRF simulation tool for autonomous driving.

Paper link: https://arxiv.org/pdf/2307.15058.pdf

Self-driving cars can drive smoothly under ordinary circumstances, and it is widely believed that realistic sensor simulation will play a key role in resolving the remaining corner cases. To this end, MARS proposes an autonomous driving simulator based on neural radiance fields. Compared with existing work, MARS has three distinctive features: (1) Instance awareness. The simulator models foreground instances and the background environment with separate networks, so that the static (e.g., size and appearance) and dynamic (e.g., trajectory) properties of instances can be controlled separately. (2) Modularity. The simulator allows flexible switching between different modern NeRF-related backbones, sampling strategies, input modalities, and so on. The hope is that this modular design will promote academic progress and industrial deployment of NeRF-based autonomous driving simulation. (3) Realism. With the best module selection, the simulator achieves state-of-the-art photorealistic results.

The most important point is: open source!

9. UniOcc

NeRF meets 3D occupancy networks (AD2023 Challenge).

UniOcc: Unifying Vision-Centric 3D Occupancy Prediction with Geometric and Semantic Rendering.

Paper link: https://arxiv.org/abs/2306.09117

UniOcc is a vision-centric 3D occupancy prediction method. Traditional occupancy prediction methods mainly use 3D occupancy labels to optimize the features projected into 3D space. However, generating these labels is complex and expensive, relies on 3D semantic annotations, and is limited by voxel resolution, so it cannot provide fine-grained spatial semantics. To address this, the paper proposes a unified occupancy (UniOcc) prediction method that explicitly imposes spatial geometric constraints and supplements fine-grained semantic supervision through volume ray rendering. This approach significantly improves model performance and shows potential for reducing manual annotation costs. Given how laborious 3D occupancy labeling is, the authors further introduce a Depth-aware Teacher Student (DTS) framework that uses unlabeled data to improve prediction accuracy. The solution achieved 51.27% mIoU on the official leaderboard with a single model, ranking third in the challenge.

10. UniSim

Produced by Waabi, and a high-quality piece of work.

UniSim: A Neural Closed-Loop Sensor Simulator

Paper link: https://arxiv.org/pdf/2308.01898.pdf

An important obstacle to the widespread adoption of autonomous driving is that safety is still insufficient. The real world is too complex, especially given its long-tail effect. Corner-case scenarios are critical to safe driving; they are diverse but rarely encountered. Testing an autonomous driving system in these scenarios is very hard, because they seldom occur and are expensive and dangerous to reproduce in the real world.

To address this challenge, both industry and academia have turned their attention to developing simulation systems. At first, simulation systems mainly simulated the motion of other vehicles and pedestrians and tested the accuracy of the planning module. In recent years, the research focus has gradually shifted to sensor-level simulation, that is, generating raw data such as LiDAR point clouds and camera images, in order to test autonomous driving systems end to end, from perception and prediction to planning.

Unlike previous work, UniSim is the first to achieve all of the following at once:

- High realism: it can accurately simulate the real world (images and LiDAR), reducing the domain gap;

- Closed-loop simulation: it can generate rare, dangerous scenarios to test the autonomous vehicle and lets the vehicle interact freely with the environment;

- Scalability: it can easily be extended to more scenes, and data needs to be collected only once to reconstruct a scene and run simulated tests.

UniSim first reconstructs the autonomous driving scene in the digital world from the collected data, including cars, pedestrians, roads, buildings, and traffic signs. It then controls the reconstructed scene for simulation in order to generate rare, safety-critical scenarios.

Closed-loop simulation

UniSim can perform closed-loop simulation testing. First, by controlling the behavior of other vehicles, UniSim creates a dangerous and rare scenario, for example a car suddenly approaching head-on in the current lane. UniSim then simulates the corresponding sensor data, the autonomous driving system runs on that data and outputs a planned path, and, based on that plan, the autonomous vehicle moves to its next position and the scene is updated (the positions of the autonomous vehicle and of the other vehicles). The cycle then repeats: simulate, run the autonomous driving system, update the state of the virtual world, and so on. Through this closed-loop test, the autonomous driving system and the simulation environment interact, producing scenarios completely different from the originally recorded data.
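The simulate, plan, update cycle described above can be illustrated with a deliberately tiny one-dimensional toy. Everything in it is hypothetical: `WorldState`, `render_sensors`, `plan`, and `run_closed_loop` are made-up names, the "sensor" simply returns the gap to a scripted actor instead of rendering camera or LiDAR data, and the "driving system" is a simple braking rule. It only shows how the loop is wired together, not how UniSim is implemented.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class WorldState:
    ego_x: float      # ego position along the lane (m)
    ego_v: float      # ego speed (m/s)
    actor_x: float    # position of a scripted actor ahead (m)
    actor_v: float    # actor speed (m/s); negative would mean oncoming

def render_sensors(state):
    """Hypothetical stand-in for neural sensor rendering: observe the gap ahead."""
    return {"gap": state.actor_x - state.ego_x}

def plan(observation, speed, dt, max_brake=6.0, safe_gap=10.0):
    """Hypothetical stand-in for the driving stack: brake when the gap is small."""
    if observation["gap"] < safe_gap + 2.0 * speed:
        return max(0.0, speed - max_brake * dt)
    return speed

def run_closed_loop(state, steps=300, dt=0.1):
    """Repeat: simulate sensors, run the planner, update the virtual world."""
    history = [state]
    for _ in range(steps):
        obs = render_sensors(state)                  # 1. generate sensor data
        new_v = plan(obs, state.ego_v, dt)           # 2. run the driving system
        state = replace(                             # 3. update the scene
            state,
            ego_x=state.ego_x + new_v * dt,
            ego_v=new_v,
            actor_x=state.actor_x + state.actor_v * dt,
        )
        history.append(state)
    return history
```

Calling `run_closed_loop(WorldState(ego_x=0.0, ego_v=10.0, actor_x=60.0, actor_v=0.0))` plays out a stalled-vehicle scenario in which the ego vehicle's own decisions change what it observes on the next step, which is the defining property of a closed loop as opposed to replaying a fixed log.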

The above is the detailed content of NeRF and the past and present of autonomous driving, a summary of nearly 10 papers!. For more information, please follow other related articles on the PHP Chinese website!

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AM

What is Graph of Thought in Prompt EngineeringApr 13, 2025 am 11:53 AMIntroduction In prompt engineering, “Graph of Thought” refers to a novel approach that uses graph theory to structure and guide AI’s reasoning process. Unlike traditional methods, which often involve linear s

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AM

Optimize Your Organisation's Email Marketing with GenAI AgentsApr 13, 2025 am 11:44 AMIntroduction Congratulations! You run a successful business. Through your web pages, social media campaigns, webinars, conferences, free resources, and other sources, you collect 5000 email IDs daily. The next obvious step is

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AM

Real-Time App Performance Monitoring with Apache PinotApr 13, 2025 am 11:40 AMIntroduction In today’s fast-paced software development environment, ensuring optimal application performance is crucial. Monitoring real-time metrics such as response times, error rates, and resource utilization can help main

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM

ChatGPT Hits 1 Billion Users? 'Doubled In Just Weeks' Says OpenAI CEOApr 13, 2025 am 11:23 AM“How many users do you have?” he prodded. “I think the last time we said was 500 million weekly actives, and it is growing very rapidly,” replied Altman. “You told me that it like doubled in just a few weeks,” Anderson continued. “I said that priv

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AM

Pixtral-12B: Mistral AI's First Multimodal Model - Analytics VidhyaApr 13, 2025 am 11:20 AMIntroduction Mistral has released its very first multimodal model, namely the Pixtral-12B-2409. This model is built upon Mistral’s 12 Billion parameter, Nemo 12B. What sets this model apart? It can now take both images and tex

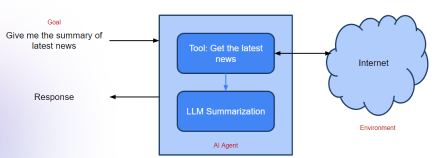

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AM

Agentic Frameworks for Generative AI Applications - Analytics VidhyaApr 13, 2025 am 11:13 AMImagine having an AI-powered assistant that not only responds to your queries but also autonomously gathers information, executes tasks, and even handles multiple types of data—text, images, and code. Sounds futuristic? In this a

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AM

Applications of Generative AI in the Financial SectorApr 13, 2025 am 11:12 AMIntroduction The finance industry is the cornerstone of any country’s development, as it drives economic growth by facilitating efficient transactions and credit availability. The ease with which transactions occur and credit

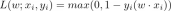

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AM

Guide to Online Learning and Passive-Aggressive AlgorithmsApr 13, 2025 am 11:09 AMIntroduction Data is being generated at an unprecedented rate from sources such as social media, financial transactions, and e-commerce platforms. Handling this continuous stream of information is a challenge, but it offers an

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

SublimeText3 Chinese version

Chinese version, very easy to use

mPDF

mPDF is a PHP library that can generate PDF files from UTF-8 encoded HTML. The original author, Ian Back, wrote mPDF to output PDF files "on the fly" from his website and handle different languages. It is slower than original scripts like HTML2FPDF and produces larger files when using Unicode fonts, but supports CSS styles etc. and has a lot of enhancements. Supports almost all languages, including RTL (Arabic and Hebrew) and CJK (Chinese, Japanese and Korean). Supports nested block-level elements (such as P, DIV),

DVWA

Damn Vulnerable Web App (DVWA) is a PHP/MySQL web application that is very vulnerable. Its main goals are to be an aid for security professionals to test their skills and tools in a legal environment, to help web developers better understand the process of securing web applications, and to help teachers/students teach/learn in a classroom environment Web application security. The goal of DVWA is to practice some of the most common web vulnerabilities through a simple and straightforward interface, with varying degrees of difficulty. Please note that this software

Dreamweaver Mac version

Visual web development tools

SecLists

SecLists is the ultimate security tester's companion. It is a collection of various types of lists that are frequently used during security assessments, all in one place. SecLists helps make security testing more efficient and productive by conveniently providing all the lists a security tester might need. List types include usernames, passwords, URLs, fuzzing payloads, sensitive data patterns, web shells, and more. The tester can simply pull this repository onto a new test machine and he will have access to every type of list he needs.